- The paper presents semantic loss, which penalizes the probability mass a network assigns to predictions that violate Boolean logic constraints, computed via weighted model counting.

- It adds neuro-symbolic entropy regularization, which encourages confident (low-entropy) predictions among the outputs that satisfy the logical constraints.

- Experimental results on entity-relation extraction, path prediction, and game level generation showcase improved robustness and validity.

Semantic Loss Functions for Neuro-Symbolic Structured Prediction

Introduction

The paper addresses structured output prediction by integrating symbolic reasoning into neural networks through semantic loss. The approach combines deep learning with symbolic constraints, steering the network toward predictions that satisfy predefined logical structure. Because the semantic loss depends only on the network's output probabilities, it can be used with a range of neural architectures, including generative models such as GANs.

Semantic Loss and Its Implementation

Semantic loss quantifies how well a neural network's outputs satisfy a logical constraint expressed in Boolean logic. The core idea is to penalize the probability mass the network assigns to invalid predictions, steering it toward outputs that comply with the constraint. Formally, the semantic loss is the negative logarithm of the weighted model count (WMC) of the constraint, that is, of the probability that an output sampled from the network's distribution satisfies it. Knowledge compilation turns the constraint into a circuit on which this quantity and its gradients can be computed efficiently.
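To make the definition concrete, here is a minimal sketch in Python, assuming the network outputs one independent Bernoulli probability p_i per Boolean variable. Under that assumption the semantic loss is the negative log of the sum, over all assignments that satisfy the constraint, of the product of p_i for true variables and (1 − p_i) for false ones. The sketch computes that weighted model count by enumerating all 2^n assignments, which is only feasible for a handful of variables; it stands in for the compiled circuits the paper relies on. The function names and the exactly-one example constraint are illustrative, not taken from the paper.

```python
import itertools
import math
from typing import Callable, Sequence

# A constraint is any predicate over a 0/1 assignment of the output variables.
Constraint = Callable[[Sequence[int]], bool]


def weighted_model_count(probs: Sequence[float], constraint: Constraint) -> float:
    """Probability that independent Bernoulli(probs[i]) outputs satisfy `constraint`.

    Enumerates all 2**n assignments, so it is only usable for small n.
    """
    total = 0.0
    for x in itertools.product((0, 1), repeat=len(probs)):
        if constraint(x):
            # Weight of one assignment: p_i if x_i is true, else 1 - p_i.
            weight = 1.0
            for xi, p in zip(x, probs):
                weight *= p if xi else 1.0 - p
            total += weight
    return total


def semantic_loss(probs: Sequence[float], constraint: Constraint) -> float:
    """Negative log of the weighted model count of the constraint."""
    return -math.log(weighted_model_count(probs, constraint))


def exactly_one(x: Sequence[int]) -> bool:
    """Example constraint: exactly one output variable is true (a one-hot label)."""
    return sum(x) == 1


if __name__ == "__main__":
    print(semantic_loss([0.8, 0.1, 0.1], exactly_one))  # about 0.380
    print(semantic_loss([0.5, 0.5, 0.5], exactly_one))  # about 0.981
```

For the exactly-one constraint with outputs [0.8, 0.1, 0.1], the count is 0.8·0.9·0.9 + 2·(0.2·0.1·0.9) = 0.684, so the loss is about 0.380; uniform outputs raise it to about 0.981, and a perfectly one-hot output gives 0. In a training loop the same quantity would be computed inside an autodiff framework so that its gradients reach the network.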

Neuro-Symbolic Entropy Regularization

Neuro-symbolic entropy regularization complements the semantic loss. The semantic loss pushes probability mass onto outputs that satisfy the constraint, while the entropy regularizer minimizes the entropy of the network's distribution restricted to those valid outputs, encouraging it to commit to a single valid prediction rather than spreading mass across many. Together, the two terms improve classification accuracy and robustness.
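The entropy term can be sketched the same way. Assuming, as before, independent Bernoulli outputs, the distribution restricted to valid outputs assigns each satisfying assignment x the probability p(x) / WMC, and the regularizer is the entropy of that conditional distribution. This brute-force version only illustrates the quantity; the names `constrained_entropy` and `regularized_objective` and the weights `lam_sl` and `lam_ent` are hypothetical, and the paper's approach relies on compiled circuits rather than enumeration.

```python
import itertools
import math
from typing import Callable, List, Sequence

Constraint = Callable[[Sequence[int]], bool]


def satisfying_weights(probs: Sequence[float], constraint: Constraint) -> List[float]:
    """Probability of every assignment that satisfies the constraint (brute force)."""
    weights = []
    for x in itertools.product((0, 1), repeat=len(probs)):
        if constraint(x):
            w = 1.0
            for xi, p in zip(x, probs):
                w *= p if xi else 1.0 - p
            weights.append(w)
    return weights


def constrained_entropy(probs: Sequence[float], constraint: Constraint) -> float:
    """Entropy of the output distribution conditioned on the constraint holding."""
    weights = satisfying_weights(probs, constraint)
    z = sum(weights)  # weighted model count: probability that the constraint holds
    if z == 0.0:
        raise ValueError("constraint has zero probability under these outputs")
    # Zero-probability assignments contribute nothing (0 * log 0 is taken as 0).
    return -sum((w / z) * math.log(w / z) for w in weights if w > 0.0)


def exactly_one(x: Sequence[int]) -> bool:
    """Example constraint: exactly one output variable is true."""
    return sum(x) == 1


def regularized_objective(task_loss: float, probs: Sequence[float],
                          constraint: Constraint,
                          lam_sl: float = 0.1, lam_ent: float = 0.1) -> float:
    """Hypothetical combined objective: task loss + weighted semantic loss + weighted entropy."""
    wmc = sum(satisfying_weights(probs, constraint))
    return task_loss - lam_sl * math.log(wmc) + lam_ent * constrained_entropy(probs, constraint)


if __name__ == "__main__":
    print(constrained_entropy([0.5, 0.5, 0.5], exactly_one))  # log 3, about 1.099
    print(constrained_entropy([0.8, 0.1, 0.1], exactly_one))  # about 0.243
```

With uniform outputs the three valid one-hot assignments are equally likely, so the entropy is log 3 ≈ 1.099; with outputs [0.8, 0.1, 0.1] it drops to about 0.243, reflecting a confident choice among valid outputs.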

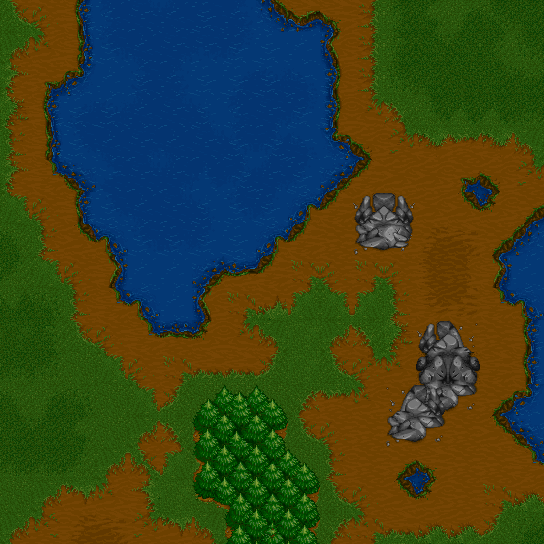

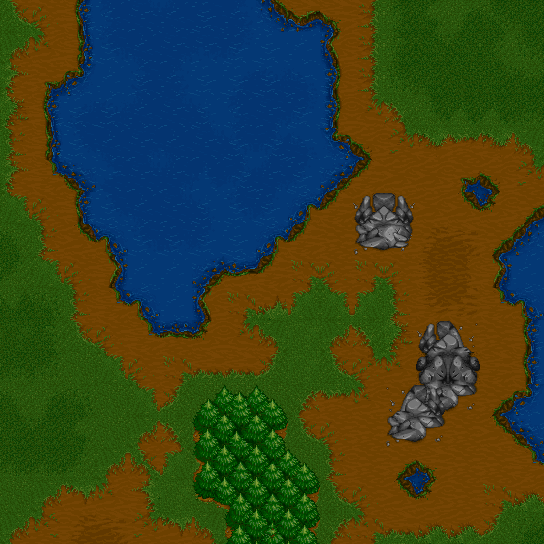

Figure 1: Warcraft dataset. Each input (left) is a 12 × 12 grid corresponding to a Warcraft II terrain map; the output is a matrix (middle) indicating the shortest path from the top-left to the bottom-right corner (right).

Applications and Experimental Evaluation

The paper demonstrates the effectiveness of the proposed methods across various tasks, including:

- Entity-Relation Extraction: In semi-supervised settings, adding semantic loss for constraints that encode the relational ontology (for example, which entity types a relation may connect) improves performance significantly. Neuro-symbolic entropy regularization improves it further by encouraging low-entropy predictions among the valid outputs.

- Predicting Simple Paths: In structured tasks such as shortest-path prediction and preference learning, models trained with semantic loss produce more predictions that satisfy the structural constraints, for example outputs that form valid simple paths.

- Generative Models - Constrained Adversarial Networks (CANs):

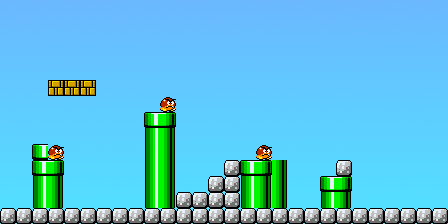

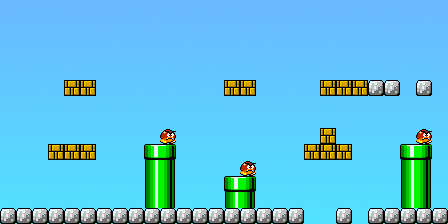

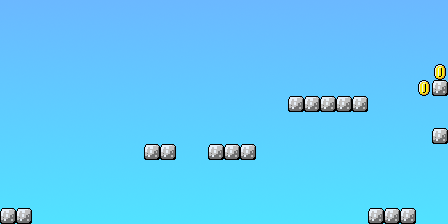

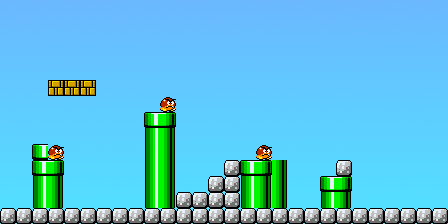

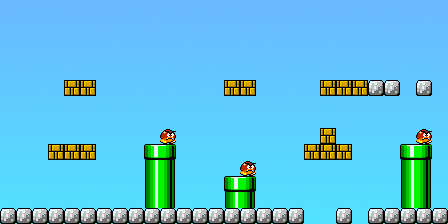

- Super Mario Bros (SMB) Level Generation: CANs outperform traditional GANs by incorporating logical constraints into the generation process, leading to higher validity and playability of generated game levels.

- Molecule Generation: CANs effectively generate chemically valid molecules by enforcing domain-specific constraints, with improved diversity and higher chemical property scores.

Figure 2: Examples of SMB levels generated by GAN and CAN. Improvement in validity and playability when using CANs.
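The simple-path constraint from the Warcraft task can be expressed in the same style. The sketch below uses a hypothetical checker, assuming 4-connected moves and requiring the selected cells to induce a path from the top-left to the bottom-right corner; the dataset's exact move set may differ. It scores a 3 × 3 grid by enumerating all 2^9 = 512 cell masks, which would be hopeless on the 12 × 12 Warcraft grids (2^144 masks), and that is why a tractable method such as knowledge compilation is needed at that scale. The names `is_simple_path` and `path_semantic_loss` are illustrative.

```python
import itertools
import math
from typing import List, Sequence, Set, Tuple

Cell = Tuple[int, int]


def is_simple_path(mask: Sequence[int], n: int) -> bool:
    """Hypothetical constraint: the selected cells of an n x n grid (row-major 0/1 mask)
    induce a simple path from (0, 0) to (n - 1, n - 1) under 4-connectivity."""
    selected: Set[Cell] = {divmod(i, n) for i, v in enumerate(mask) if v}
    start, goal = (0, 0), (n - 1, n - 1)
    if start not in selected or goal not in selected:
        return False

    def neighbours(cell: Cell) -> List[Cell]:
        r, c = cell
        around = [(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)]
        return [d for d in around if d in selected]

    # Endpoints must have exactly one selected neighbour, every other cell exactly two.
    for cell in selected:
        if len(neighbours(cell)) != (1 if cell in (start, goal) else 2):
            return False

    # The degree check alone admits a path plus disjoint cycles,
    # so also require every selected cell to be reachable from the start.
    seen, stack = {start}, [start]
    while stack:
        for nxt in neighbours(stack.pop()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen == selected


def path_semantic_loss(probs: Sequence[float], n: int) -> float:
    """Semantic loss of the simple-path constraint, by brute-force enumeration."""
    wmc = 0.0
    for x in itertools.product((0, 1), repeat=n * n):
        if is_simple_path(x, n):
            w = 1.0
            for xi, p in zip(x, probs):
                w *= p if xi else 1.0 - p
            wmc += w
    return -math.log(wmc)


if __name__ == "__main__":
    top_then_right = {0, 1, 2, 5, 8}  # cells (0,0), (0,1), (0,2), (1,2), (2,2)
    confident = [0.9 if i in top_then_right else 0.1 for i in range(9)]
    print(path_semantic_loss(confident, 3))   # low: most mass is on a valid path
    print(path_semantic_loss([0.5] * 9, 3))   # higher: mass spread over invalid masks
```

An output that concentrates probability on one valid path (here the top row followed by the right column) receives a much lower loss than uniform outputs, because most of its probability mass already falls on valid paths.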

Implications and Future Directions

This research offers a general framework for integrating symbolic constraints into neural networks, improving performance in structured domains. The paper suggests future work on extending neuro-symbolic approaches to broader applications and on improving computational efficiency for large, complex constraints.

Conclusion

The combination of semantic loss and neuro-symbolic entropy regularization is a significant step toward integrating symbolic reasoning with neural networks. By encouraging outputs to adhere to logical structure, these methods substantially improve the performance of both predictive and generative models, pointing to broader applications across AI domains.