- The paper presents a novel framework that leverages LLMs to automatically generate and optimize governing equations from observed data.

- It combines self-improvement and evolutionary strategies to iteratively refine candidate equations using symbolic math tools and optimization methods.

- The approach reduces reliance on complex algorithms and prior domain knowledge, achieving promising accuracy on nonlinear PDEs and ODEs.

LLM4ED: LLMs for Automatic Equation Discovery

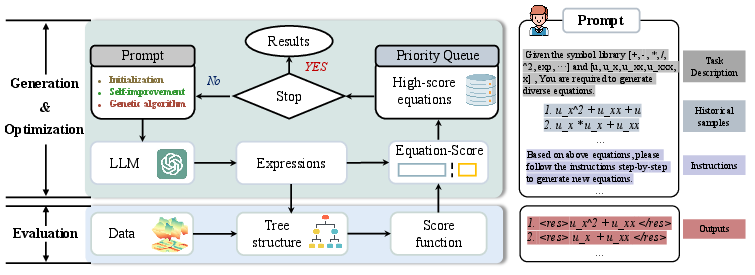

This paper presents a framework that uses LLMs to automatically discover governing equations from observed data. Symbolic mathematics methods have traditionally dominated this domain, but they often involve complex algorithmic design and rely on prior domain knowledge. The new approach uses LLMs to generate and optimize mathematical equations through natural language prompts, bypassing the need for intricate algorithm implementations.

Proposed Framework

The core idea of the LLM4ED framework is to guide LLMs with prompts through two main tasks: generating and optimizing equation candidates. The framework consists of the following stages:

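- Equation Generation: LLMs generate a diverse set of equations in string format from a defined symbol library and problem description.

- Optimization: LLMs refine the highest-scoring candidates through self-improvement (local modifications) and evolutionary search (crossover and mutation).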

Methodology

Initialization

Equations are generated via LLMs using predefined symbol libraries. This initial generation forms a starting population for evaluation and optimization.
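To make the initialization step concrete, here is a minimal sketch of how a generation prompt might be assembled from a symbol library and a problem description. The function names `build_init_prompt` and `parse_response`, the prompt wording, and the one-equation-per-line reply format are all assumptions made for illustration; the paper's actual prompts may differ.

```python
def build_init_prompt(symbols: list[str], description: str, n_equations: int = 10) -> str:
    """Assemble a generation prompt (illustrative wording, not the paper's)."""
    library = ", ".join(symbols)
    return (
        f"Task: {description}\n"
        f"Allowed symbols and operators: {library}\n"
        f"Propose {n_equations} distinct candidate right-hand sides, one per line, "
        "as plain strings such as 'u*u_x + u_xx'.\n"
        "Leave out numeric coefficients; they are fitted afterwards."
    )


def parse_response(text: str) -> list[str]:
    """Split a raw LLM reply into unique, non-empty candidate strings."""
    candidates = []
    for line in text.splitlines():
        candidate = line.strip()
        if candidate and candidate not in candidates:
            candidates.append(candidate)
    return candidates


prompt = build_init_prompt(
    ["u", "u_x", "u_xx", "+", "*"], "Find u_t = f(u, u_x, u_xx)", n_equations=5
)
# A hand-written reply standing in for a real LLM call.
population = parse_response("u*u_x\n\nu_xx\nu*u_x\n")
```

Deduplicating the reply keeps the starting population diverse, which matters because the later optimization steps only recombine what is already there.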

Evaluation

Equations are parsed using symbolic math tools (e.g., SymPy) to convert string formats into expression trees. The constants within these expressions are then determined through optimization methods: sparse regression for PDEs and BFGS for ODEs. Each equation is rated with a scoring function that combines accuracy of fit and expression complexity.
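The evaluation step for a PDE candidate can be sketched as follows. This is a simplified illustration under stated assumptions, not the paper's implementation: the sparse regression is a basic sequentially thresholded least squares, the score is `R^2 - penalty * complexity` with a complexity count based on `sympy.count_ops`, and the names `sparse_fit`, `evaluate_candidate`, `threshold`, and `penalty` are hypothetical. The data is synthetic and noiseless. The BFGS route for ODEs is not shown.

```python
import numpy as np
import sympy as sp


def sparse_fit(theta, target, threshold=0.01, iterations=5):
    """Sequentially thresholded least squares: zero small coefficients, then refit."""
    coeffs = np.linalg.lstsq(theta, target, rcond=None)[0]
    for _ in range(iterations):
        keep = np.abs(coeffs) >= threshold
        coeffs[~keep] = 0.0
        if not keep.any():
            break
        coeffs[keep] = np.linalg.lstsq(theta[:, keep], target, rcond=None)[0]
    return coeffs


def evaluate_candidate(rhs, data, target, penalty=0.01):
    """Parse `rhs`, fit one coefficient per additive term, and score the fit."""
    names = list(data)
    symbols = [sp.Symbol(name) for name in names]
    # sympify evaluates the string, so only pass strings you trust.
    expr = sp.expand(sp.sympify(rhs, locals=dict(zip(names, symbols))))
    terms = sp.Add.make_args(expr)
    columns = []
    for term in terms:
        fn = sp.lambdify(symbols, term, "numpy")
        values = fn(*(data[name] for name in names))
        columns.append(np.broadcast_to(np.asarray(values, dtype=float), target.shape))
    theta = np.column_stack(columns)
    coeffs = sparse_fit(theta, target)
    residual = target - theta @ coeffs
    r2 = 1.0 - residual.var() / target.var()
    # Only terms that survive thresholding count toward complexity.
    complexity = sum(
        int(sp.count_ops(t)) + 1 for t, c in zip(terms, coeffs) if c != 0.0
    )
    return dict(zip(map(str, terms), coeffs)), r2 - penalty * complexity


# Synthetic Burgers-like data: u_t = -u*u_x + 0.1*u_xx, sampled at random points.
rng = np.random.default_rng(0)
u, u_x, u_xx = rng.normal(size=(3, 200))
u_t = -u * u_x + 0.1 * u_xx
coeffs, score = evaluate_candidate(
    "u*u_x + u_xx + u**3", {"u": u, "u_x": u_x, "u_xx": u_xx}, u_t
)
```

In this example the candidate contains a spurious `u**3` term. Its fitted coefficient falls below the threshold and is set to zero, so it adds nothing to the complexity penalty.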

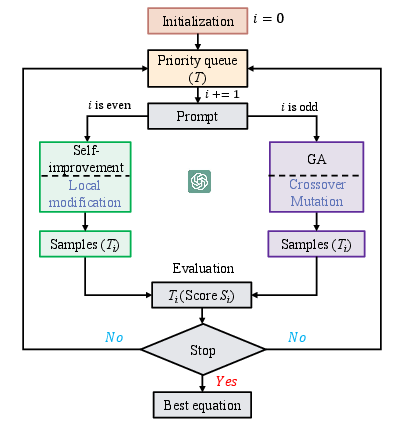

Optimization Strategies

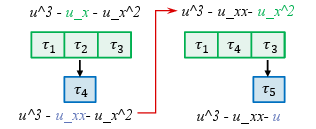

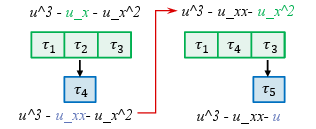

Self-improvement: the framework feeds previous high-scoring equations back to the LLM, which crafts refined versions through local modifications that remove redundant terms and introduce new variations.

Figure 3: Self-improvement process executed by LLMs.
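A self-improvement prompt of this kind could be built roughly as shown here. The function name `build_self_improvement_prompt`, the `top_k` parameter, and the instruction wording are assumptions for illustration only; the equations and scores are made-up placeholders, not results from the paper.

```python
def build_self_improvement_prompt(scored: list[tuple[str, float]], top_k: int = 3) -> str:
    """Show the LLM its best candidates so far and ask for local refinements."""
    best = sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]
    history = "\n".join(f"{equation}  (score: {score:.3f})" for equation, score in best)
    return (
        "These candidate equations scored highest so far (higher is better):\n"
        f"{history}\n"
        "Propose improved variants, one per line. Make small local edits: "
        "drop redundant terms or swap in one new term from the symbol library."
    )


# Placeholder history; real scores would come from the evaluation step.
history = [("u*u_x + u_xx", 0.97), ("u**2", 0.50), ("sin(u)", 0.20), ("u*u_xxx", 0.10)]
improvement_prompt = build_self_improvement_prompt(history, top_k=2)
```

Including the scores in the prompt gives the LLM a signal about which structures are worth keeping, which is what distinguishes this step from fresh generation.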

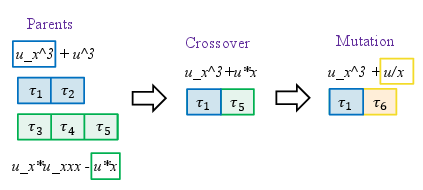

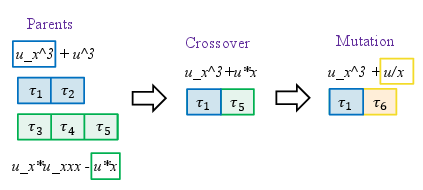

Evolutionary search: LLMs conduct global searches by applying genetic operators such as crossover and mutation to a population of high-quality equations.

Figure 4: Crossover and mutation executed by LLMs.
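The evolutionary step can be pictured as a loop in which the LLM plays the role of the genetic operators. The sketch below is hypothetical: `evolve`, `score_fn`, `llm`, and the prompt text are illustrative names and wording, the selection scheme (fixed-size population, parents drawn from the top-ranked candidates) is an assumption, and the LLM and scoring function are replaced by stubs so the code runs on its own.

```python
import random
from typing import Callable


def evolve(
    population: list[str],
    score_fn: Callable[[str], float],
    llm: Callable[[str], str],
    generations: int = 3,
    n_parents: int = 2,
    seed: int = 0,
) -> list[tuple[str, float]]:
    """Ask the LLM for offspring of top candidates and keep the best scorers."""
    rng = random.Random(seed)
    scored = {equation: score_fn(equation) for equation in population}
    for _ in range(generations):
        ranked = sorted(scored, key=scored.get, reverse=True)
        parent_a, parent_b = rng.sample(ranked[: max(n_parents, 2)], 2)
        prompt = (
            "Crossover: exchange terms between the two parent equations.\n"
            "Mutation: then change one term in each child.\n"
            f"Parent 1: {parent_a}\nParent 2: {parent_b}\n"
            "Return the children, one per line."
        )
        for child in llm(prompt).splitlines():
            child = child.strip()
            if child and child not in scored:
                scored[child] = score_fn(child)
        # Keep the population size fixed by dropping the lowest scores.
        survivors = sorted(scored, key=scored.get, reverse=True)[: len(population)]
        scored = {equation: scored[equation] for equation in survivors}
    return sorted(scored.items(), key=lambda item: item[1], reverse=True)


# Stubs standing in for a real LLM call and the evaluation step.
fake_scores = {"u*u_x": 0.5, "u_xx": 0.4, "u": 0.1, "u*u_x + u_xx": 0.9}
result = evolve(
    ["u*u_x", "u_xx", "u"],
    score_fn=lambda equation: fake_scores.get(equation, 0.0),
    llm=lambda prompt: "u*u_x + u_xx\nu**3",
)
```

Passing the LLM and the scorer in as callables keeps the loop independent of any particular model API or fitting method.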

Experimental Results

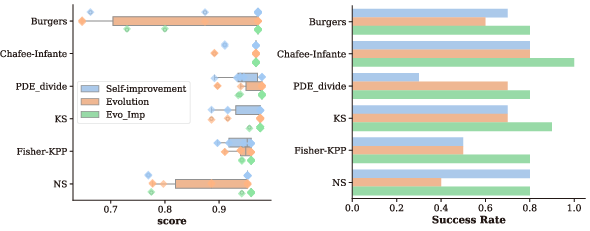

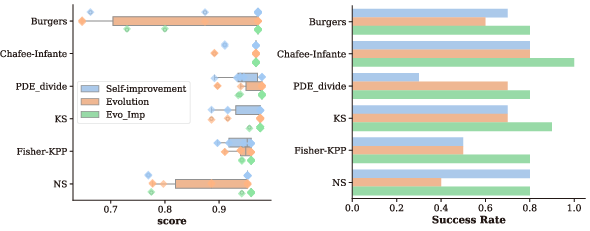

The framework was evaluated on nonlinear dynamical systems described by PDEs and ODEs. The results indicate strong performance with low coefficient errors across multiple cases, including the Burgers', Chafee-Infante, and Kuramoto-Sivashinsky equations. Comparisons with state-of-the-art methods showed that LLM4ED provides comparable or superior performance in terms of stability and usability.

Figure 5: Discovered results under different optimization methods.

Practical Implications and Future Work

The framework lowers the learning curve and operational complexity associated with equation discovery. By automating the generation and optimization of equations, it makes these techniques accessible to users who are not specialists in symbolic regression. Future iterations could integrate more sophisticated prompts to narrow the search space further and apply streamlined evaluation criteria to more complex systems with sparse or noisy data.

Conclusion

LLM4ED shows the potential of combining the generative and reasoning capabilities of LLMs for automated equation discovery. The paper concludes that LLMs have promising application prospects in scientific knowledge discovery and encourages further exploration and refinement of this AI-driven methodology.