Timeline-based Sentence Decomposition with In-Context Learning for Temporal Fact Extraction

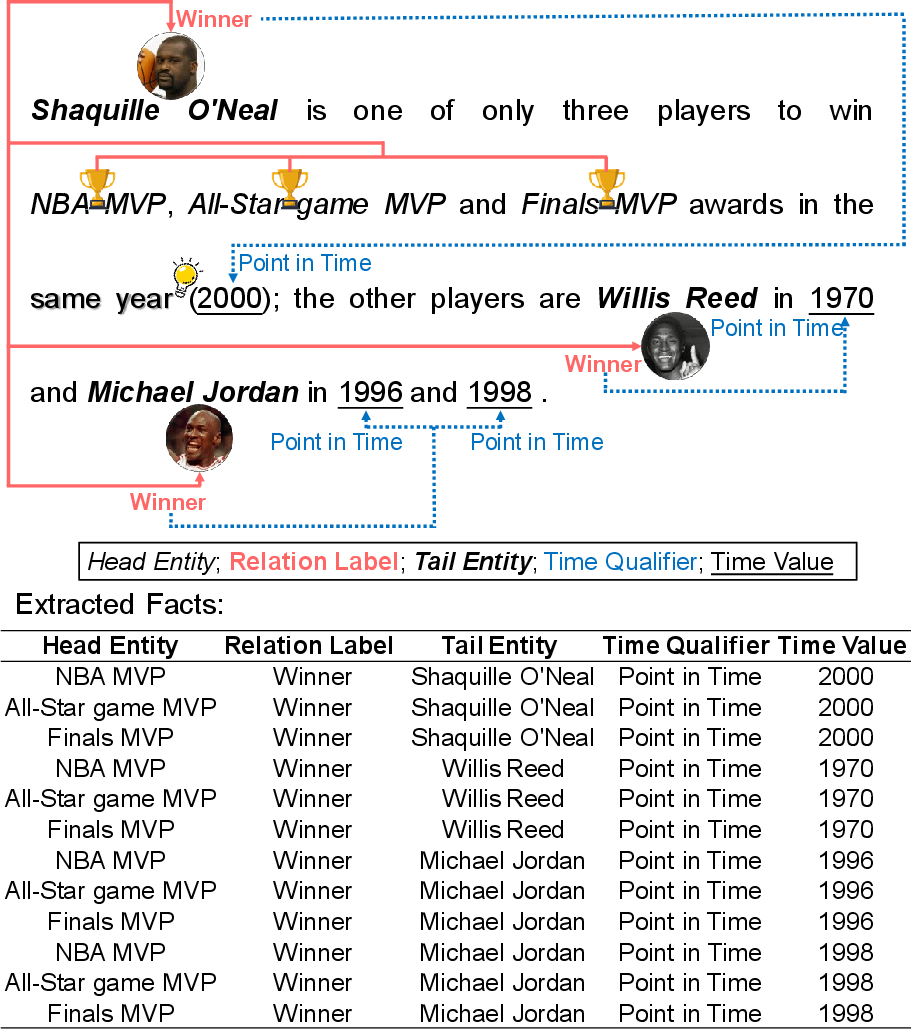

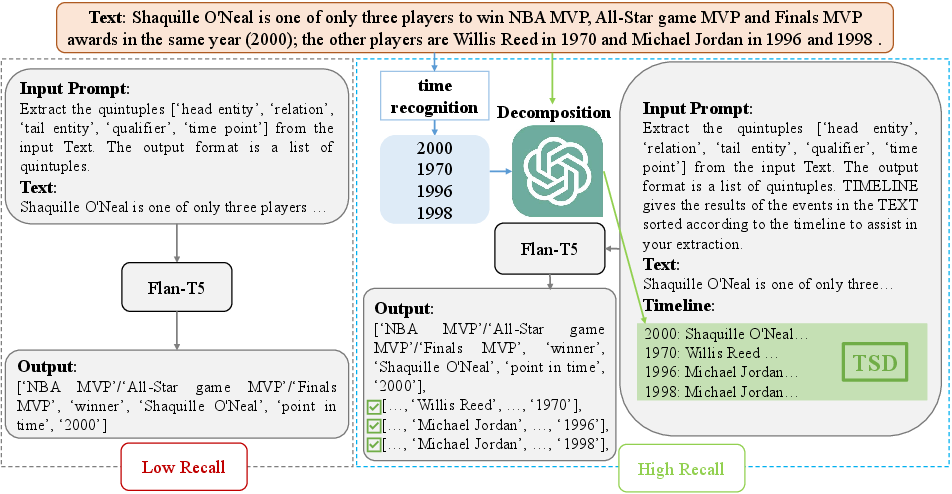

Abstract: Fact extraction is pivotal for constructing knowledge graphs. Recently, the growing demand for temporal facts in downstream tasks has given rise to the task of temporal fact extraction. In this paper, we specifically address the extraction of temporal facts from natural language text. Previous studies fail to handle the challenge of establishing time-to-fact correspondences in complex sentences. To overcome this hurdle, we propose a timeline-based sentence decomposition strategy that uses large language models (LLMs) with in-context learning, ensuring a fine-grained understanding of the timeline associated with each fact. In addition, we evaluate LLMs on direct temporal fact extraction and obtain unsatisfactory results. To address this, we introduce TSDRE, a method that incorporates the decomposition capabilities of LLMs into the traditional fine-tuning of smaller pre-trained language models (PLMs). To support the evaluation, we construct ComplexTRED, a complex temporal fact extraction dataset. Our experiments show that TSDRE achieves state-of-the-art results on both the HyperRED-Temporal and ComplexTRED datasets.
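The abstract describes timeline-based decomposition only at a high level. As a rough illustration, the sketch below shows one way an in-context-learning prompt for this kind of decomposition might be assembled and the LLM's answer parsed into time-anchored simple sentences. It is not the TSDRE implementation: the instruction wording, the `Sentence:`/`Decomposition:` prompt format, the `Demo`, `build_prompt`, `parse_decomposition` and `decompose` helpers, and `stub_llm` (a placeholder standing in for a real LLM call) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

# Hypothetical instruction text; the paper's actual prompt wording is not given here.
INSTRUCTION = (
    "Decompose the sentence into simple sentences so that each one "
    "carries a single time expression. List them in timeline order."
)


@dataclass(frozen=True)
class Demo:
    """One in-context demonstration: a complex sentence and its decomposition."""
    sentence: str
    decomposition: Tuple[str, ...]


def build_prompt(demos: Sequence[Demo], sentence: str) -> str:
    """Assemble a few-shot prompt: instruction, worked demos, then the query."""
    blocks = [INSTRUCTION]
    for demo in demos:
        bullets = "\n".join(f"- {s}" for s in demo.decomposition)
        blocks.append(f"Sentence: {demo.sentence}\nDecomposition:\n{bullets}")
    # The query ends with an open "Decomposition:" slot for the LLM to fill.
    blocks.append(f"Sentence: {sentence}\nDecomposition:")
    return "\n\n".join(blocks)


def parse_decomposition(output: str) -> List[str]:
    """Keep only '- ' bullet lines from the LLM output, without the marker."""
    return [
        line.strip()[2:].strip()
        for line in output.splitlines()
        if line.strip().startswith("- ")
    ]


def decompose(sentence: str, demos: Sequence[Demo], llm: Callable[[str], str]) -> List[str]:
    """Decompose one sentence with any text-in/text-out LLM callable."""
    return parse_decomposition(llm(build_prompt(demos, sentence)))


# Hypothetical stand-in for a real LLM call; it ignores the prompt.
def stub_llm(prompt: str) -> str:
    return (
        "- Merkel was Minister for the Environment from 1994 to 1998.\n"
        "- Merkel was Chancellor of Germany from 2005 to 2021."
    )


DEMOS = [
    Demo(
        sentence="Obama served as a U.S. senator from 2005 to 2008 "
        "and as president from 2009 to 2017.",
        decomposition=(
            "Obama served as a U.S. senator from 2005 to 2008.",
            "Obama served as president from 2009 to 2017.",
        ),
    )
]

if __name__ == "__main__":
    query = (
        "Merkel was Minister for the Environment from 1994 to 1998 "
        "and Chancellor of Germany from 2005 to 2021."
    )
    for sub_sentence in decompose(query, DEMOS, stub_llm):
        print(sub_sentence)
```

Each returned sub-sentence pairs one fact with one time span, which is the time-to-fact correspondence the abstract targets. How TSDRE then combines these decompositions with the fine-tuned PLM is not specified in this excerpt, so that stage is omitted here.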
