Know in AdVance: Linear-Complexity Forecasting of Ad Campaign Performance with Evolving User Interest

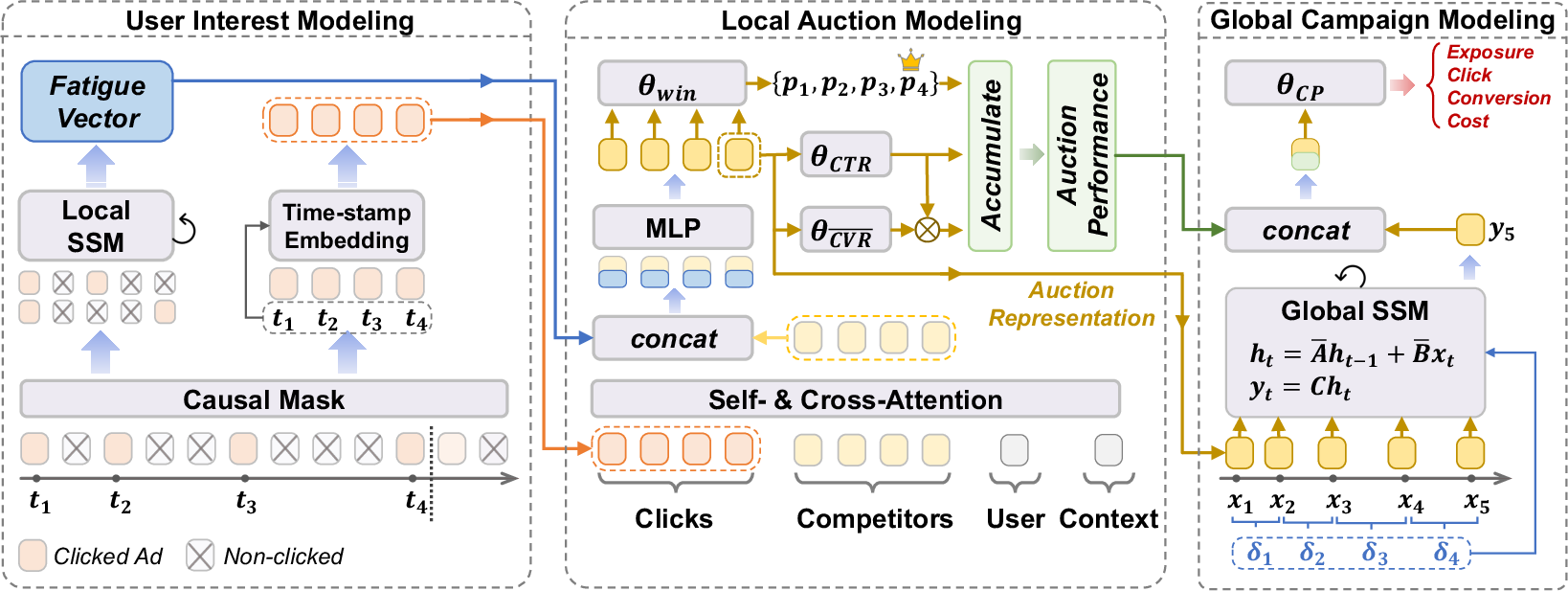

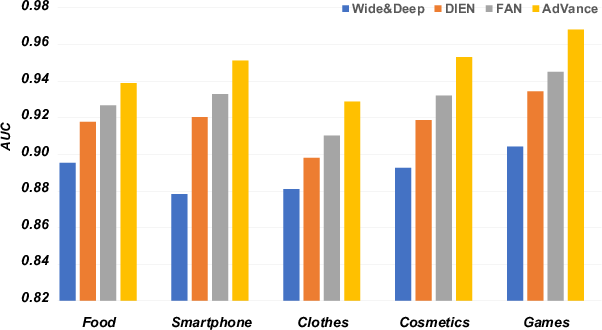

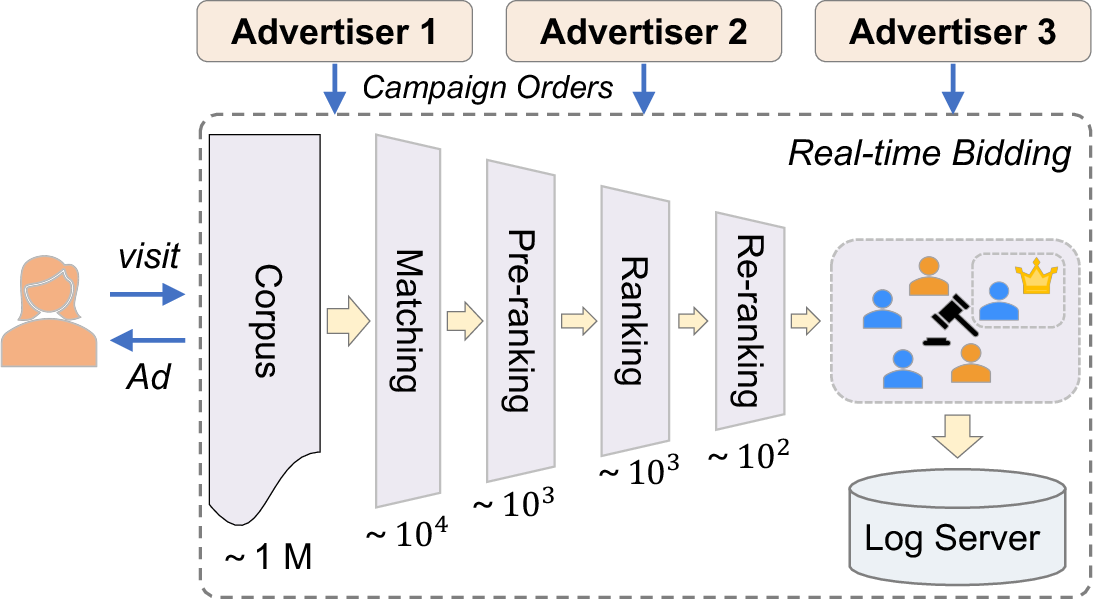

Abstract: Real-time Bidding (RTB) advertisers wish to *know in advance* the expected cost and yield of ad campaigns to avoid trial-and-error expenses. However, Campaign Performance Forecasting (CPF), a sequence modeling task involving tens of thousands of ad auctions, poses challenges of evolving user interest, auction representation, and long context, making coarse-grained and static-modeling methods sub-optimal. We propose *AdVance*, a time-aware framework that integrates local auction-level and global campaign-level modeling. User preference and fatigue are disentangled using a time-positioned sequence of clicked items and a concise vector of all displayed items. Cross-attention, conditioned on the fatigue vector, captures the dynamics of user interest toward each candidate ad. Bidders in an auction compete with each other, forming a complete graph similar to the one implied by the self-attention mechanism. Hence, we employ a Transformer Encoder to compress each auction into an embedding by solving auxiliary tasks. These sequential embeddings are then summarized by a conditional state space model (SSM) that captures long-range dependencies while maintaining global linear complexity. To account for the irregular time intervals between auctions, we make the SSM's parameters dependent on the current auction embedding and the time interval. We further condition the SSM's global predictions on the accumulation of local results. Extensive evaluations and ablation studies demonstrate AdVance's superiority over state-of-the-art methods. AdVance has been deployed on the Tencent Advertising platform, and A/B tests show a remarkable 4.5% uplift in Average Revenue per User (ARPU).
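To make the idea of an SSM whose parameters depend on the current auction embedding and the time interval more concrete, the following is a minimal NumPy sketch of a diagonal, selective state space recurrence. It is an illustration under stated assumptions, not the AdVance implementation: the function name `time_aware_ssm_scan`, the parameter names (`A`, `W_B`, `W_C`, `w_delta`), and the specific choice of scaling a softplus step size by the time gap are all hypothetical. The sketch only shows how one pass over T auction embeddings costs O(T) and how a longer gap between auctions decays the carried state more strongly.

```python
import numpy as np


def softplus(z):
    # Numerically stable log(1 + exp(z)); keeps the step size positive.
    return np.logaddexp(0.0, z)


def time_aware_ssm_scan(x, dt, A, W_B, W_C, w_delta):
    """Sequential scan of a diagonal SSM with input- and time-dependent steps.

    x:       (T, d) auction embeddings (assumed to come from an upstream encoder)
    dt:      (T,)   non-negative time gaps since the previous auction
    A:       (n,)   negative diagonal state matrix (hypothetical parameter)
    W_B:     (n, d) input projection (hypothetical parameter)
    W_C:     (m, n) output projection (hypothetical parameter)
    w_delta: (d,)   projection producing the input-dependent step size
    """
    T = x.shape[0]
    h = np.zeros(A.shape[0])
    ys = np.empty((T, W_C.shape[0]))
    for t in range(T):
        # Assumption: the step size grows with both the embedding and the gap.
        delta = softplus(x[t] @ w_delta) * dt[t]
        # Zero-order-hold style decay of the old state: exp(delta * A) in (0, 1].
        a_bar = np.exp(delta * A)
        # Simplified (Euler-style) input discretization: delta * B x_t.
        h = a_bar * h + delta * (W_B @ x[t])
        ys[t] = W_C @ h
    return ys, h


rng = np.random.default_rng(0)
x = rng.normal(size=(5, 4))
dt = np.array([1.0, 0.5, 2.0, 0.0, 1.0])
A = -np.abs(rng.normal(size=3)) - 0.1
W_B = rng.normal(size=(3, 4))
W_C = rng.normal(size=(2, 3))
w_delta = rng.normal(size=4)
ys, h = time_aware_ssm_scan(x, dt, A, W_B, W_C, w_delta)
```

In this sketch a zero time gap leaves the state untouched, so two auctions arriving at the same instant produce the same readout, while a large gap pushes `a_bar` toward zero and lets the new auction dominate. The loop is written sequentially for clarity; because the recurrence is linear in `h`, it could in principle be evaluated with a parallel prefix scan instead.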

