- The paper reports a universal microstructure in DNNs: weights follow log-normal distribution patterns that the authors liken to arbitrage equilibrium in financial markets.

- The paper uses empirical analysis of BERT and Llama-2 architectures to demonstrate consistent statistical patterns across network layers.

- The paper suggests that these equilibrium-inspired patterns can guide the design of more robust, interpretable, and efficient deep learning models.

Arbitrage Equilibrium and the Emergence of Universal Microstructure in Deep Neural Networks

This paper applies the concept of arbitrage equilibrium to deep neural networks (DNNs) and relates it to the emergence of a universal microstructure. The authors examine the statistical characteristics of layer weights across different architectures and hypothesize that a consistent structural pattern emerges, akin to arbitrage equilibria in financial markets.

Methodology

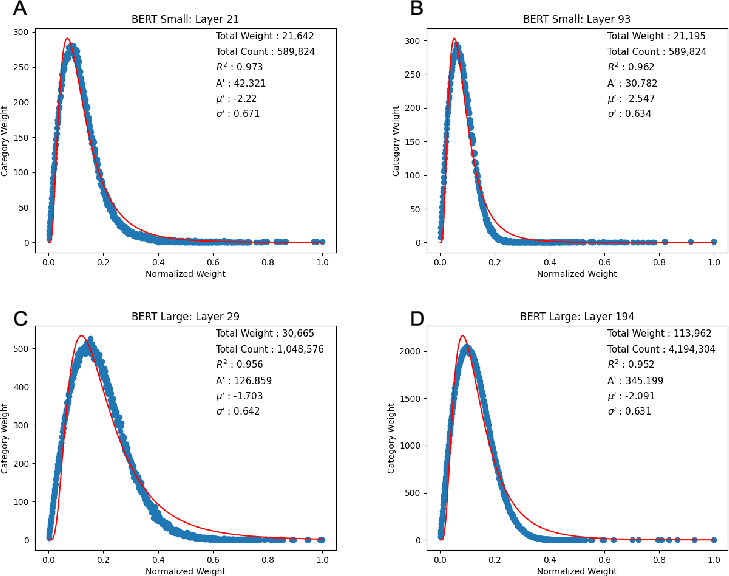

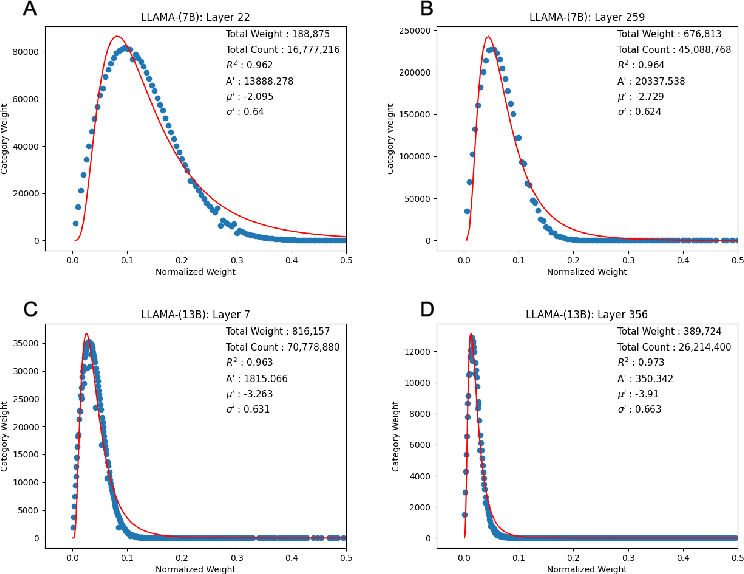

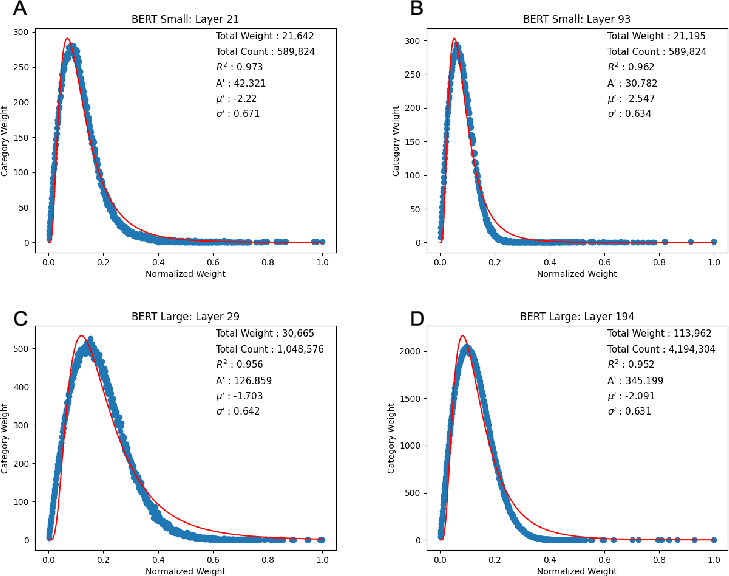

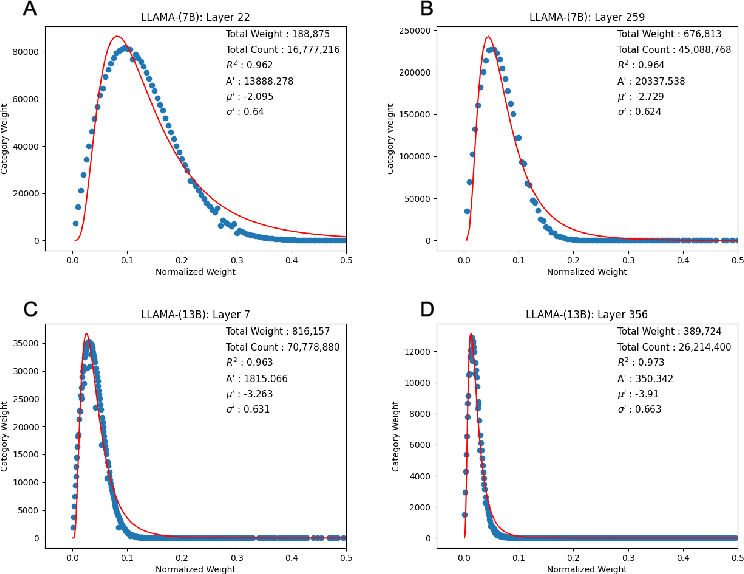

The study analyzes the distribution of weights in several networks: BERT (Small and Large) and Llama-2 (7B and 13B). The central hypothesis is that these weights follow a log-normal distribution and that the pattern is consistent across networks. The authors support this with empirical data from the layers of each network, looking for patterns that suggest a form of statistical equilibrium.
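As a rough illustration of what testing this hypothesis on a single layer could look like, the sketch below fits a log-normal to weight magnitudes by taking the mean and standard deviation of log|w|. It is not the authors' procedure. The function name `fit_lognormal`, the use of absolute values, the zero cutoff `eps`, and the synthetic weight matrix are all assumptions made for illustration.

```python
import numpy as np

def fit_lognormal(weights, eps=1e-12):
    """Estimate (mu, sigma) of a log-normal fitted to |weights|.

    Assumption: the quantity of interest is the weight magnitude, so
    signs are discarded and exact zeros are dropped before taking logs.
    """
    magnitudes = np.abs(np.asarray(weights, dtype=np.float64)).ravel()
    magnitudes = magnitudes[magnitudes > eps]  # log is undefined at zero
    log_mag = np.log(magnitudes)
    # For a log-normal variable, log|w| is normal with mean mu and std sigma.
    return float(log_mag.mean()), float(log_mag.std(ddof=1))

# Synthetic stand-in for one layer's weight matrix (not real BERT or Llama-2 weights).
rng = np.random.default_rng(0)
signs = rng.choice([-1.0, 1.0], size=(256, 256))
layer = signs * rng.lognormal(mean=-3.0, sigma=0.5, size=(256, 256))

mu_hat, sigma_hat = fit_lognormal(layer)
print(f"mu = {mu_hat:.3f}, sigma = {sigma_hat:.3f}")
```

With real models, `layer` would be replaced by a weight tensor converted to a NumPy array. Which tensors to include, and whether to pool them per layer or per network, are choices this summary does not specify.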

Figure 1: A depiction of the log-normal distribution characteristics in BERT-Small and BERT-Large networks, highlighting the arbitrage equilibrium concept.

Results

The authors present a layer-by-layer exploration of the networks and report evidence for a universal microstructure within DNNs. They observe consistent, replicable statistical patterns in the weight distributions across layers of different neural architectures. These patterns resemble financial market equilibria, suggesting that arbitrage principles may extend to computational systems, particularly to the formation and stabilization of neural network structures.
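One hedged way to quantify this kind of consistency is to fit a log-normal to each layer and measure how far the empirical distribution lies from the fit. The sketch below uses a Kolmogorov-Smirnov distance on log|w|. The summary does not say which statistic the authors use, so the metric, the helper `lognormal_ks`, and the synthetic layers (including a Gaussian control) are illustrative assumptions.

```python
import math
import numpy as np

def lognormal_ks(weights, eps=1e-12):
    """Return (mu, sigma, ks) for a log-normal fitted to |weights|.

    ks is the Kolmogorov-Smirnov distance between the empirical CDF of
    log|w| and the normal CDF with the fitted mean and std.
    """
    mags = np.abs(np.asarray(weights, dtype=np.float64)).ravel()
    logs = np.sort(np.log(mags[mags > eps]))
    mu, sigma = logs.mean(), logs.std(ddof=1)
    # Normal CDF evaluated at each sorted log-magnitude.
    z = (logs - mu) / (sigma * math.sqrt(2.0))
    cdf = 0.5 * (1.0 + np.array([math.erf(v) for v in z]))
    n = logs.size
    upper = np.arange(1, n + 1) / n - cdf  # ECDF just after each point
    lower = cdf - np.arange(0, n) / n      # ECDF just before each point
    ks = float(max(upper.max(), lower.max()))
    return float(mu), float(sigma), ks

# Synthetic layers (assumed for illustration, not taken from any real model).
rng = np.random.default_rng(1)
layers = {
    "layer_0": rng.lognormal(-3.0, 0.5, 20000),
    "layer_1": rng.lognormal(-2.5, 0.7, 20000),
    "gaussian_control": rng.normal(0.0, 0.05, 20000),
}
results = {name: lognormal_ks(w) for name, w in layers.items()}
for name, (mu, sigma, ks) in results.items():
    print(f"{name}: mu={mu:.3f} sigma={sigma:.3f} ks={ks:.4f}")
```

A small distance on every layer would be consistent with the reported pattern, while the Gaussian control shows what a clear departure looks like. Because the parameters are estimated from the same data, the distance is best read as a descriptive score rather than a formal test.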

Figure 2: Comparative analysis of weight distributions in Llama-2 7B versus 13B networks, showcasing the emergence of a unified microstructure.

Discussion

The findings imply that neural networks, much like financial systems, self-organize toward an equilibrium state that minimizes systemic inefficiencies. The uniform microstructure across architectures suggests that DNNs may operate under intrinsic constraints that drive them toward optimality, akin to arbitrage conditions. These insights could inform future neural network design by favoring architectures with stable and predictable microstructural properties.

The concept of arbitrage equilibrium applied in this context opens new avenues for theoretical research and practical applications, such as designing networks with enhanced interpretability and robustness by leveraging these identified microstructures.

Conclusion

The paper connects concepts from financial systems and neural network architectures, opening the door to new interdisciplinary methodologies. The consistency of weight distribution patterns across diverse networks suggests that DNNs adhere to a form of equilibrium that minimizes inefficiencies, much as arbitrage does in financial systems. Future research might quantify these patterns further and explore how they can be used to improve model performance and generalization. This work provides a foundation for developing DNNs guided by principles traditionally applied in finance.