- The paper introduces a novel AI weather forecasting model that employs cross-level attention to improve vertical atmospheric interactions.

- It trains a 3D Swin U-Net transformer on ERA5 reanalysis data, achieving competitive RMSE improvements over the IFS HRES baseline.

- The study demonstrates high-resolution forecasting with reduced computational costs, setting a benchmark for efficient operational meteorology.

ArchesWeather: An Efficient AI Weather Forecasting Model at 1.5° Resolution

Introduction

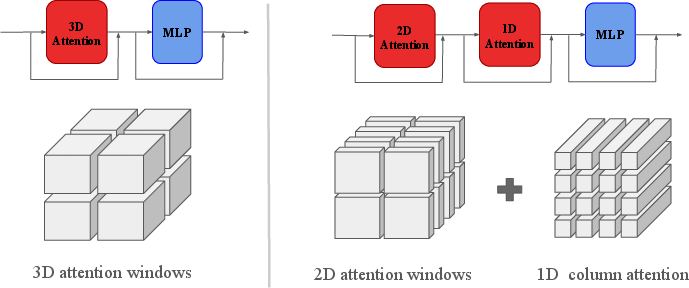

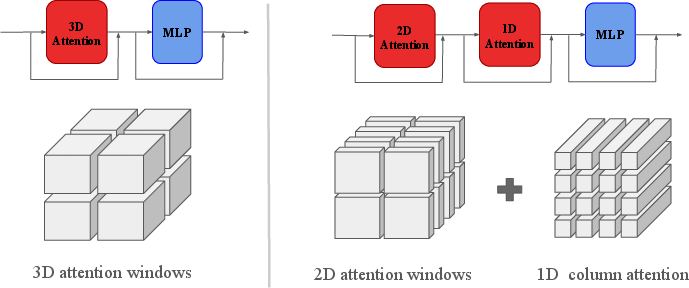

The paper "ArchesWeather: An efficient AI weather forecasting model at 1.5° resolution" (2405.14527) introduces an efficient AI weather forecasting model built on recent neural architectures. It starts from the physics-informed inductive priors that guide the design of neural networks for atmospheric data. Existing models like Pangu-Weather employ 3D local attention, yet they are outperformed by systems that use non-local attention mechanisms. This motivates ArchesWeather, a 1.5° resolution transformer that combines 2D attention with column-wise interactions to improve forecast accuracy.

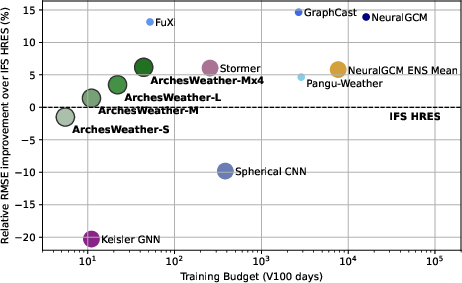

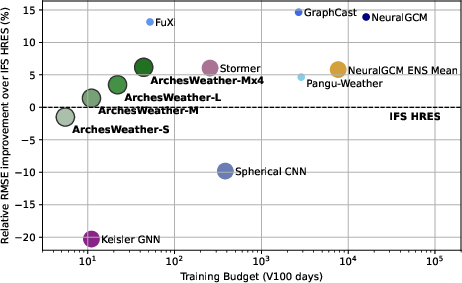

Figure 1: Relative RMSE improvement over the IFS HRES as a function of training computational budget.

Methodological Advances

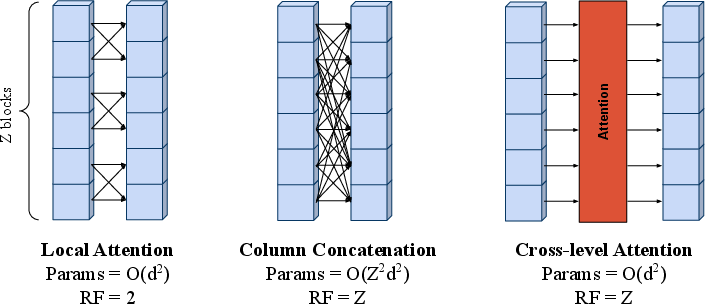

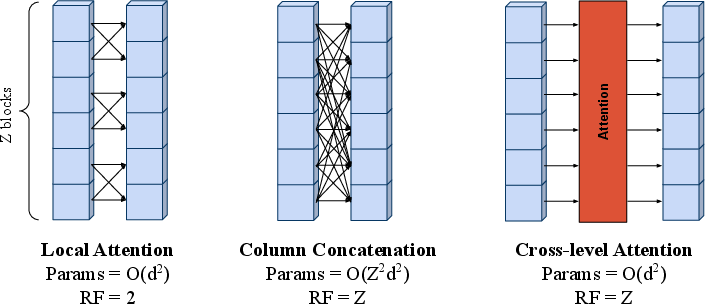

The cornerstone of ArchesWeather is Cross-Level Attention (CLA), which departs from the purely local interaction paradigm. Traditional local attention is grounded in atmospheric physics, where interactions are predominantly local, but it is computationally limiting. CLA lets features interact along each vertical column, expanding the receptive field vertically without a large increase in parameter count. The alternative, enlarging the attention windows, raises computational cost disproportionately, which justifies the choice of CLA for this model.

With CLA, ArchesWeather accounts for the interactions between all atmospheric levels within a column, which improves computational efficiency and coherence across vertical layers.
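The column-wise interaction described above can be illustrated with a minimal NumPy sketch. This is not the paper's implementation: it assumes a single attention head, no normalization or residual connections, and hypothetical names (`column_attention`, `wq`, `wk`, `wv`) and toy tensor sizes. It shows only the vertical part, in which every (latitude, longitude) column attends over its own levels; the horizontal 2D attention that ArchesWeather combines it with is omitted.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def column_attention(x, wq, wk, wv):
    """Single-head self-attention along the vertical (level) axis only.

    x has shape (levels, lat, lon, channels). Each (lat, lon) column
    attends over its own levels; columns never exchange information here.
    """
    z, h, w, c = x.shape
    # Treat every horizontal position as an independent sequence of levels.
    cols = x.transpose(1, 2, 0, 3).reshape(h * w, z, c)
    q, k, v = cols @ wq, cols @ wk, cols @ wv
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(c)  # (columns, levels, levels)
    out = softmax(scores, axis=-1) @ v              # (columns, levels, channels)
    return out.reshape(h, w, z, c).transpose(2, 0, 1, 3)

# Toy sizes chosen for illustration, not taken from the paper.
rng = np.random.default_rng(0)
levels, lat, lon, channels = 8, 4, 6, 16
x = rng.normal(size=(levels, lat, lon, channels))
wq, wk, wv = (rng.normal(size=(channels, channels)) / np.sqrt(channels)
              for _ in range(3))
y = column_attention(x, wq, wk, wv)
print(y.shape)  # (8, 4, 6, 16)
```

The attention matrix for each column has size levels × levels, so the cost of this step grows with the number of levels rather than with the size of a 3D window, which is the intuition behind preferring it to larger windows.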

Figure 2: Comparison of attention schemes used in Pangu-Weather (left) versus CLA (right).

Dataset and Training

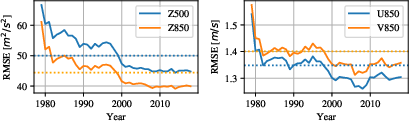

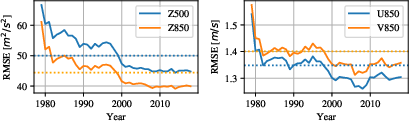

The model is trained on the ERA5 dataset, regridded to a standard resolution, using a selected set of variables across multiple atmospheric levels. Performance is measured with latitude-weighted RMSE and relative RMSE improvement, which evaluate the model against the Integrated Forecasting System High-Resolution (IFS HRES) baseline. The model's architecture, a 3D Swin U-Net transformer, is fine-tuned on recent ERA5 samples (post-2000) to mitigate distribution shifts caused by changes in data quality over time.
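The two metrics named above can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's evaluation code: it uses the common cosine-latitude weighting normalized to mean 1, defines relative improvement as (baseline − model) / baseline so that positive values favor the model, and runs on synthetic fields on a hypothetical 121 × 240 grid (the size of a 1.5° global grid that includes both poles). All function names are illustrative.

```python
import numpy as np

def latitude_weights(lat_deg):
    """Cosine-latitude weights, normalized so that their mean is 1."""
    w = np.cos(np.deg2rad(lat_deg))
    return w / w.mean()

def lat_weighted_rmse(pred, target, lat_deg):
    """RMSE over a (lat, lon) field with cos(latitude) area weighting."""
    w = latitude_weights(lat_deg)[:, None]  # broadcast over longitude
    return float(np.sqrt(np.mean(w * (pred - target) ** 2)))

def relative_rmse_improvement(rmse_model, rmse_baseline):
    """Fractional RMSE reduction; positive when the model beats the baseline."""
    return (rmse_baseline - rmse_model) / rmse_baseline

# Synthetic example: the "model" has smaller errors than the "baseline".
lat = np.linspace(-90.0, 90.0, 121)
rng = np.random.default_rng(0)
target = rng.normal(size=(121, 240))
model_pred = target + 0.5 * rng.normal(size=target.shape)
baseline_pred = target + 1.0 * rng.normal(size=target.shape)

rmse_model = lat_weighted_rmse(model_pred, target, lat)
rmse_baseline = lat_weighted_rmse(baseline_pred, target, lat)
print(relative_rmse_improvement(rmse_model, rmse_baseline))
```

The weighting compensates for the fact that grid cells on a regular latitude-longitude grid cover less area near the poles, so unweighted errors would over-count high latitudes.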

Figure 3: Geopotential (left) and wind speed (right) RMSE comparison of models with and without fine-tuning.

Results and Discussion

The ArchesWeather model, particularly in its ensemble configurations (M and L variants), outperforms previous state-of-the-art models on several key atmospheric variables. Notably, it achieves competitive RMSE scores with fewer computational resources, requiring a much smaller training budget than comparable high-resolution models like NeuralGCM and Pangu-Weather. The CLA mechanism in particular improves forecasting skill with a compact parameter count, whereas alternative models rely on many more parameters.

Figure 4: RMSE scores of weather models for lead times up to 10 days.

Conclusion

ArchesWeather sets a benchmark for efficient, high-resolution weather forecast modeling with minimal computational infrastructure. Its core advance, CLA, marks an evolution in atmospheric modeling architectures that balances computational efficiency with robust modeling of multiscale atmospheric interactions. This opens avenues for future research, in particular refining the model for region-specific forecasting accuracy and integrating it with diffusion models for downscaling to higher resolutions. The results and methods presented in this study indicate significant potential for broader applications in climate modeling and operational meteorology.