HippoRAG: Neurobiologically Inspired Long-Term Memory for Large Language Models

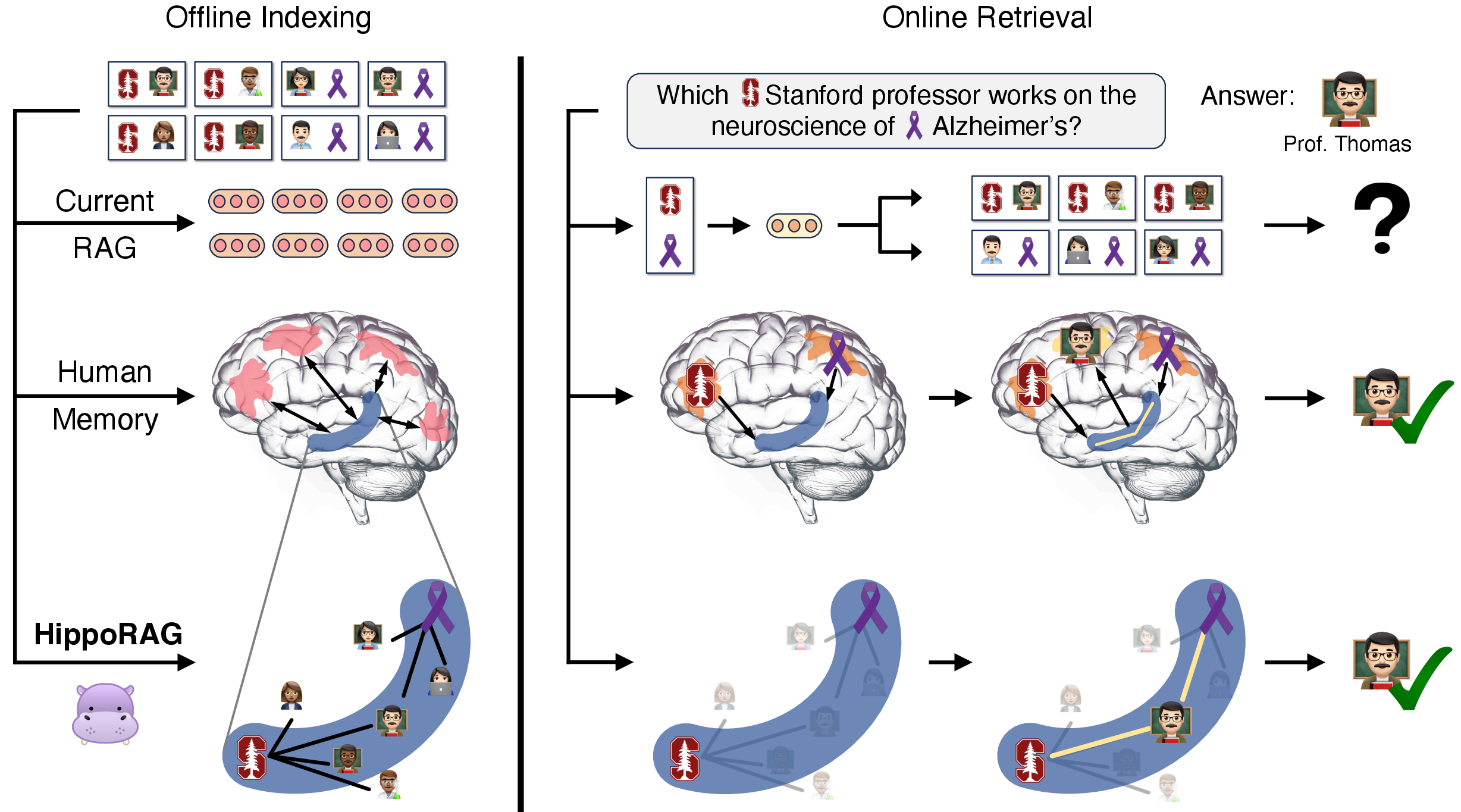

Abstract: In order to thrive in hostile and ever-changing natural environments, mammalian brains evolved to store large amounts of knowledge about the world and continually integrate new information while avoiding catastrophic forgetting. Despite the impressive accomplishments, LLMs, even with retrieval-augmented generation (RAG), still struggle to efficiently and effectively integrate a large amount of new experiences after pre-training. In this work, we introduce HippoRAG, a novel retrieval framework inspired by the hippocampal indexing theory of human long-term memory to enable deeper and more efficient knowledge integration over new experiences. HippoRAG synergistically orchestrates LLMs, knowledge graphs, and the Personalized PageRank algorithm to mimic the different roles of neocortex and hippocampus in human memory. We compare HippoRAG with existing RAG methods on multi-hop question answering and show that our method outperforms the state-of-the-art methods remarkably, by up to 20%. Single-step retrieval with HippoRAG achieves comparable or better performance than iterative retrieval like IRCoT while being 10-30 times cheaper and 6-13 times faster, and integrating HippoRAG into IRCoT brings further substantial gains. Finally, we show that our method can tackle new types of scenarios that are out of reach of existing methods. Code and data are available at https://github.com/OSU-NLP-Group/HippoRAG.

Explain it Like I'm 14

Overview

This paper introduces HippoRAG, a new way to give large language models (LLMs) a better “long-term memory.” It’s inspired by how the human brain stores and connects memories, especially the hippocampus, which helps us link ideas and remember things from small clues. HippoRAG helps AI pull together facts scattered across many documents so it can answer complex questions more accurately, faster, and at lower cost.

Key Questions the Paper Tries to Answer

- How can we help AI systems combine information from different places (like multiple web pages) as easily as humans do?

- Can a brain-inspired method make retrieval-augmented generation (RAG) work better on questions that need multi-step reasoning?

- Can we do this in a single step (quickly) instead of using many slow, back-and-forth steps?

How HippoRAG Works (Simple Explanation)

Think of your brain’s memory like a giant map of connected ideas. When you hear “Stanford” and “Alzheimer’s,” your brain jumps between related ideas to find “a Stanford professor who studies Alzheimer’s.” HippoRAG imitates that.

Here’s the process in two parts:

1) Building the memory map (offline indexing)

- An AI reads lots of short passages and pulls out small facts as triples, like (Thomas Südhof, studies, neuroscience). The subject and object of each triple become nodes (ideas), and the relation becomes an edge (connection) between them, forming a knowledge graph (a big web of linked concepts).

- It also adds “synonym” links so similar names or phrases are treated as connected (like “Stanford U.” and “Stanford University”).

- This creates an “index” similar to the hippocampus in your brain: a web that remembers which things are related.

Analogy: It’s like making index cards for every important noun or phrase and tying them together with strings where there’s a relationship or similarity.
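The indexing step can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the triples are typed in by hand rather than extracted by an LLM, the function name `build_graph` is hypothetical, and plain string similarity from `difflib` with a threshold of 0.6 stands in for whatever similarity measure the authors actually use to detect synonyms.

```python
from collections import defaultdict
from difflib import SequenceMatcher


def build_graph(passage_triples, synonym_threshold=0.6):
    """Build an undirected concept graph from (subject, relation, object) triples.

    passage_triples: dict mapping passage id -> list of triples.
    Returns (edges, node_passages): neighbours per node, and the passages
    each node was extracted from.
    """
    edges = defaultdict(set)
    node_passages = defaultdict(set)
    for pid, triples in passage_triples.items():
        for subj, _relation, obj in triples:
            edges[subj].add(obj)
            edges[obj].add(subj)
            node_passages[subj].add(pid)
            node_passages[obj].add(pid)

    # Synonym links: connect node names that look alike.
    # String similarity is an assumption made for this sketch.
    nodes = sorted(node_passages)
    for i, a in enumerate(nodes):
        for b in nodes[i + 1:]:
            ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
            if ratio >= synonym_threshold:
                edges[a].add(b)
                edges[b].add(a)
    return edges, node_passages


# Hand-written triples standing in for LLM-extracted ones.
passage_triples = {
    "p1": [("Thomas Südhof", "works at", "Stanford University")],
    "p2": [("Thomas Südhof", "studies", "Alzheimer's")],
    "p3": [("Stanford U.", "located in", "California")],
}
edges, node_passages = build_graph(passage_triples)
print(sorted(edges["Stanford U."]))
```

Keeping `node_passages` alongside the edges matters later: it is what lets a score on a node be traced back to the passages that mention it.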

2) Finding answers (online retrieval)

- For a new question, the AI picks out the key names and terms (for example, “Stanford,” “Alzheimer’s”).

- It finds those terms on the graph and runs a graph ranking algorithm called Personalized PageRank (PPR) from them. You can think of PPR as dropping a bit of “attention paint” on the starting nodes and letting it spread along the graph’s connections. The nodes that end up with the most paint are likely the most relevant.

- The system then scores the original passages by how much “attention paint” reaches their nodes and picks the best ones to read for the final answer.
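The retrieval steps above can be sketched as a small power-iteration version of Personalized PageRank followed by passage scoring. Everything here is an assumption for illustration: the toy graph, the placeholder names "Researcher B" and "Researcher C", the damping value of 0.5, and the choice to score a passage by summing the scores of its nodes. It is not the authors' implementation.

```python
def personalized_pagerank(edges, seeds, damping=0.5, iterations=50):
    """Power-iteration PPR on an undirected graph {node: set_of_neighbours}.

    Every neighbour must also appear as a key of `edges`.
    """
    nodes = list(edges)
    # The "paint" is dropped only on the seed nodes taken from the question.
    restart = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    score = dict(restart)
    for _ in range(iterations):
        nxt = {n: (1.0 - damping) * restart[n] for n in nodes}
        for n in nodes:
            if not edges[n]:
                continue  # isolated node: nothing to spread
            share = damping * score[n] / len(edges[n])
            for m in edges[n]:
                nxt[m] += share
        score = nxt
    return score


def rank_passages(node_scores, passage_nodes):
    """Score each passage by the total score of the nodes it mentions."""
    totals = {
        pid: sum(node_scores.get(n, 0.0) for n in nodes)
        for pid, nodes in passage_nodes.items()
    }
    return sorted(totals, key=totals.get, reverse=True)


edges = {
    "Stanford": {"Thomas Südhof", "Researcher B"},
    "Alzheimer's": {"Thomas Südhof", "Researcher C"},
    "Thomas Südhof": {"Stanford", "Alzheimer's"},
    "Researcher B": {"Stanford", "Physics"},
    "Researcher C": {"Alzheimer's", "Harvard"},
    "Physics": {"Researcher B"},
    "Harvard": {"Researcher C"},
}
passage_nodes = {
    "p1": {"Thomas Südhof", "Stanford"},
    "p2": {"Thomas Südhof", "Alzheimer's"},
    "p3": {"Researcher B", "Stanford"},
    "p4": {"Researcher B", "Physics"},
    "p5": {"Researcher C", "Alzheimer's"},
    "p6": {"Researcher C", "Harvard"},
}

scores = personalized_pagerank(edges, seeds={"Stanford", "Alzheimer's"})
ranked = rank_passages(scores, passage_nodes)
print(ranked[:2])
```

In this toy graph no single passage mentions both Stanford and Alzheimer's, yet the two passages about Thomas Südhof rise to the top because his node receives paint from both starting points.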

A helpful tweak: “Node specificity.” Rare, more specific terms get a little extra weight (like how unusual words can be more helpful than very common ones).
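One plausible way to picture node specificity is to weight each starting node by the inverse of the number of passages it appears in, much like inverse document frequency in classic search. The sketch below assumes that reading; the function name `specificity_weighted_seeds` and the passage ids are hypothetical.

```python
def specificity_weighted_seeds(query_nodes, node_passages):
    """Give rarer query nodes a larger share of the starting weight.

    node_passages: dict mapping node -> set of passage ids mentioning it.
    Returns weights that sum to 1 (or an empty dict if nothing matches).
    """
    raw = {
        n: 1.0 / len(node_passages[n])
        for n in query_nodes
        if node_passages.get(n)
    }
    total = sum(raw.values())
    if total == 0:
        return {}
    return {n: w / total for n, w in raw.items()}


# "Stanford" shows up in four passages, "Alzheimer's" in only two.
node_passages = {
    "Stanford": {"p1", "p3", "p7", "p8"},
    "Alzheimer's": {"p2", "p5"},
}
weights = specificity_weighted_seeds(["Stanford", "Alzheimer's"], node_passages)
print(weights)
```

These weights would replace the equal split of starting paint among the query nodes, so the rarer term pulls the search harder.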

Why this is powerful: Instead of retrieving documents one hop at a time (retrieve → think → retrieve again…), HippoRAG can do “multi-hop” reasoning in a single step by following the web of connections on the graph.

What They Found (Main Results)

The authors tested HippoRAG on three multi-hop question-answering benchmarks:

- MuSiQue

- 2WikiMultiHopQA

- HotpotQA (generally considered easier for multi-hop)

Key findings:

- HippoRAG found the right supporting passages more often than other popular methods (like BM25, Contriever, GTR, ColBERTv2) on the harder datasets (MuSiQue and 2Wiki). On 2Wiki, it improved retrieval by up to about 20 percentage points.

- It answered more questions correctly when used with the same reader model (higher exact match and F1 scores).

- It’s much more efficient: single-step HippoRAG was about 6–13 times faster and 10–30 times cheaper than an iterative method called IRCoT, while getting similar or better results.

- When combined with IRCoT, it got even better—showing the two approaches complement each other.
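For readers unfamiliar with the metrics named above, here is a minimal sketch of how retrieval recall, exact match, and F1 are commonly computed in question-answering evaluations. The normalization rules follow a widespread convention (lowercasing, dropping punctuation and English articles) and are an assumption; the paper's own evaluation script may differ in details.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, drop ASCII punctuation and English articles, squeeze spaces."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, gold):
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(gold))


def f1_score(prediction, gold):
    """Token-level F1 between a predicted answer and the gold answer."""
    pred_tokens = normalize(prediction).split()
    gold_tokens = normalize(gold).split()
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)


def recall_at_k(retrieved, gold_passages, k):
    """Fraction of gold supporting passages found in the top-k retrieved."""
    return len(set(retrieved[:k]) & set(gold_passages)) / len(gold_passages)


print(exact_match("The Thomas Südhof.", "thomas südhof"))
print(f1_score("Professor Thomas Südhof", "Thomas Südhof"))
print(recall_at_k(["p1", "p3", "p2"], ["p1", "p2"], k=2))
```

Exact match is strict, so a nearly right answer scores zero, while F1 gives partial credit for overlapping words. Recall at k measures the retriever alone, before any reader model is involved.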

Why this matters:

- Many real tasks (like scientific reviews, legal summaries, and medical decisions) need integrating information across multiple documents. HippoRAG is good at this, especially when there isn’t a single passage that mentions everything at once.

Why It Matters (Implications and Impact)

- Better long-term memory for AI: Instead of retraining the whole model when new information appears, you can just add new nodes and edges to the knowledge graph. That’s simpler, safer, and cheaper.

- Stronger multi-hop reasoning: HippoRAG can do multi-hop retrieval in one shot, which is faster and more scalable for real-world use.

- Handles “path-finding” questions: These are questions like “Which Stanford professor studies Alzheimer’s?” where you must connect two different groups (Stanford professors and Alzheimer’s researchers). Current systems often fail unless a single document states both facts together. HippoRAG’s “web of associations” finds these links more reliably.
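The "just add new nodes and edges" idea can be illustrated with a tiny incremental update. This sketch assumes a graph stored as plain Python dictionaries and a hypothetical helper `add_passage`; it shows only that new facts attach to existing nodes without rebuilding anything else.

```python
from collections import defaultdict


def add_passage(edges, node_passages, passage_id, triples):
    """Merge the triples of one new passage into an existing graph in place."""
    for subj, _relation, obj in triples:
        edges[subj].add(obj)
        edges[obj].add(subj)
        node_passages[subj].add(passage_id)
        node_passages[obj].add(passage_id)


edges = defaultdict(set)
node_passages = defaultdict(set)

# Initial knowledge.
add_passage(edges, node_passages, "p1",
            [("Thomas Südhof", "works at", "Stanford")])

# New information arrives later; the existing node gains a new connection.
add_passage(edges, node_passages, "p2",
            [("Thomas Südhof", "studies", "Alzheimer's")])

print(sorted(edges["Thomas Südhof"]))
```

After the second call, "Stanford" and "Alzheimer's" are two hops apart through a shared node, which is the kind of link a path-finding question relies on.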

A Few Limitations and Next Steps

- The system uses off-the-shelf parts (like existing LLMs and retrieval tools). With specific training or fine-tuning, it could work even better.

- Building the graph depends on correctly extracting entities and relations; improving this step would reduce errors.

- The team still needs to test how well HippoRAG scales to very large, real-world collections over time.

Bottom Line

HippoRAG takes a smart idea from neuroscience—how the hippocampus links memories—and brings it to AI. By creating a graph of concepts and using a focused graph search, it helps AI find and combine the right pieces of information quickly and accurately. This makes the AI better at answering tough, multi-step questions and points toward a practical way to give LLMs a more human-like, continually updating memory.
