Human4DiT: 360-degree Human Video Generation with 4D Diffusion Transformer

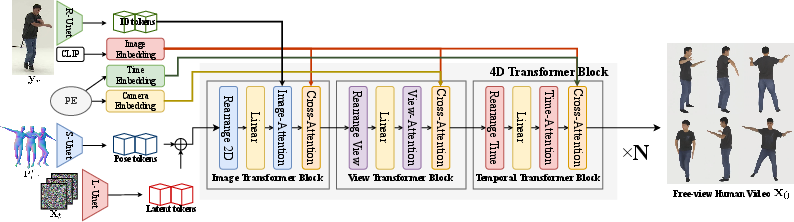

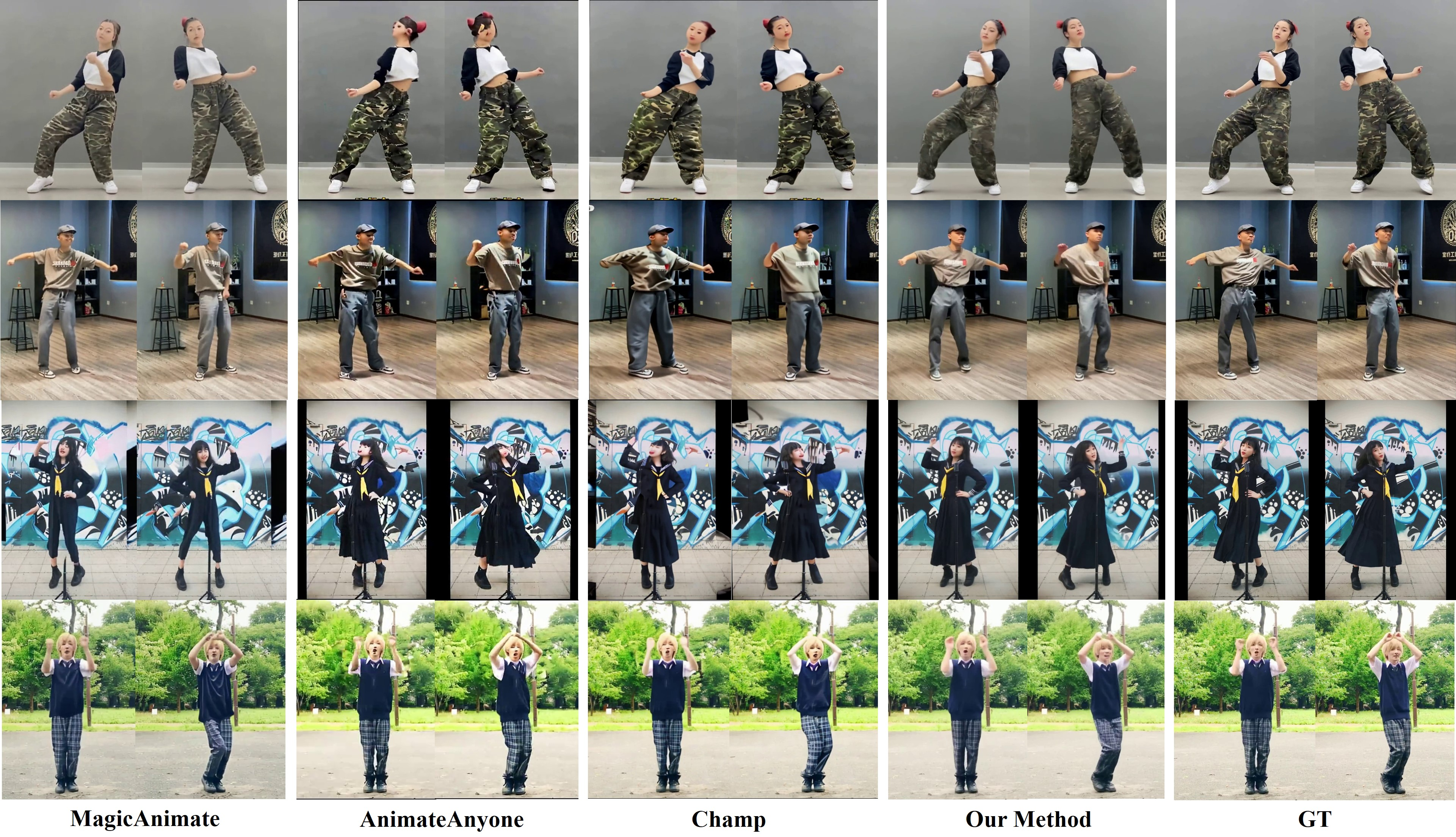

Abstract: We present a novel approach for generating high-quality, spatio-temporally coherent 360-degree human videos from a single image. Our framework combines the strengths of diffusion transformers, which capture global correlations across viewpoints and time, with those of CNNs, which provide accurate condition injection. The core is a hierarchical 4D transformer architecture that factorizes self-attention across views, time steps, and spatial dimensions, enabling efficient modeling of the 4D space. Precise conditioning is achieved by injecting human identity, camera parameters, and temporal signals into the respective transformers. To train this model, we collect a multi-dimensional dataset spanning images, videos, multi-view data, and limited 4D footage, and design a tailored multi-dimensional training strategy. Our approach overcomes the limitations of previous methods based on generative adversarial networks or vanilla diffusion models, which struggle with complex motions, viewpoint changes, and generalization. Through extensive experiments, we demonstrate our method's ability to synthesize realistic, coherent 360-degree human motion videos, paving the way for advanced multimedia applications in areas such as virtual reality and animation.
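To make the idea of factorized self-attention concrete, the following is a minimal sketch, not the authors' implementation. It assumes tokens are indexed by (view, time, patch), uses a toy single-head attention with identity projections, and applies attention along one axis at a time. The function names (`self_attention`, `attend_along`, `factorized_4d_block`, `attention_pairs`) and the order of the three axes are illustrative assumptions; the abstract does not specify them.

```python
import math
from itertools import product


def self_attention(tokens):
    """Toy single-head self-attention with identity Q/K/V projections (assumption)."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        m = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - m) for s in scores]
        z = sum(weights)
        out.append([sum(w * k[i] for w, k in zip(weights, tokens)) / z for i in range(d)])
    return out


def attend_along(x, axis):
    """Run self-attention over tokens that differ only in the given axis.

    x maps (view, time, patch) -> feature vector; axis is 0, 1, or 2.
    """
    groups = {}
    for key in x:
        rest = key[:axis] + key[axis + 1:]
        groups.setdefault(rest, []).append(key)
    y = {}
    for keys in groups.values():
        keys.sort()
        for key, vec in zip(keys, self_attention([x[k] for k in keys])):
            y[key] = vec
    return y


def factorized_4d_block(x):
    """Attend across views, then time, then space (order is an assumption)."""
    for axis in (0, 1, 2):
        x = attend_along(x, axis)
    return x


def attention_pairs(V, T, S):
    """Count query-key pairs for full attention vs. the factorized variant."""
    n = V * T * S
    return {"full": n * n, "factorized": n * (V + T + S)}


# Hypothetical sizes: 4 views, 8 time steps, 16 spatial patches per frame.
x = {(v, t, s): [float(v), float(t), float(s)]
     for v, t, s in product(range(4), range(8), range(16))}
y = factorized_4d_block(x)
print(len(y))                     # 512 tokens, same layout as the input
print(attention_pairs(4, 8, 16))  # {'full': 262144, 'factorized': 14336}
```

With these hypothetical sizes, full attention over all 512 tokens scores 262,144 query-key pairs, while the factorized variant scores 14,336. This is one way to read the abstract's claim that factorization enables efficient modeling of the 4D space.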

