- The paper introduces MockLLM, a framework that enhances candidate-job matching by simulating realistic mock interviews using LLMs.

- It employs a two-sided handshake protocol in which interviewer and candidate evaluate each other using the dialogue history together with resumes and job descriptions.

- The framework’s reflection memory mechanism refines prompts for future interactions, leading to improved precision, recall, and F1 scores in recruitment assessments.

MockLLM: A Multi-Agent Behavior Collaboration Framework for Online Job Seeking and Recruiting

Introduction

The paper introduces MockLLM, a framework designed to enhance online job seeking and recruiting processes through the use of LLMs as role-playing interviewers and candidates. MockLLM divides the person-job matching process into two modules: mock interview generation and two-sided evaluation in a handshake protocol. This method augments traditional person-job fitting by leveraging LLMs to simulate interview situations, providing additional data points for evaluating candidates.

Framework Overview

MockLLM comprises three main components: the two modules named above and a reflection memory mechanism.

- Mock Interview Generation: This module employs LLMs to generate mock interviews through role-playing as both interviewers and candidates. The multi-turn conversational interactions serve as a supplementary data source, augmenting traditional evaluations based solely on resumes and job descriptions.

Figure 1: The framework overview of MockLLM, highlighting the interaction flow among its modules.

- Two-Sided Evaluation in Handshake Protocol: Both parties in the interview evaluate each other using dialogue history, resumes, and job descriptions. The handshake protocol ensures mutual agreement before declaring a match, supporting a more robust person-job fit.

- Reflection Memory Generation: Successfully matched cases are stored to refine future interactions, enabling continuous prompt optimization.

Implementation Details

Implementation of MockLLM involves the following key stages:

- Role Initialization: LLMs are prompted to play roles of interviewers and candidates. Functions for interview question generation and response formulation are driven by module-specific prompts.

```
interviewer = f_role(job_description)
candidate = g_role(resume)
```

- Mock Interview Process: Conducted through standardized multi-turn interactions ensuring coherence and relevance to candidate resumes and job descriptions.

```
question = f_ques(interview_history, resume, job_description, question_prompt)
answer = g_resp(updated_history, resume, job_description, answer_prompt)
```
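The multi-turn process can be sketched as a simple loop. This is a minimal illustration, not the paper's implementation: the `LLM` callable interface, the `render` helper, the prompt wording, and the `num_turns` default are all assumptions made for the example.

```python
from typing import Callable, List, Tuple

# Hypothetical interface: any callable that maps a prompt string to a reply string.
LLM = Callable[[str], str]
Turn = Tuple[str, str]  # (speaker, text)


def render(history: List[Turn]) -> str:
    """Flatten the dialogue history into plain text for inclusion in a prompt."""
    return "\n".join(f"{speaker}: {text}" for speaker, text in history)


def run_mock_interview(
    interviewer_llm: LLM,
    candidate_llm: LLM,
    resume: str,
    job_description: str,
    num_turns: int = 3,  # assumed default, not taken from the paper
) -> List[Turn]:
    """Alternate interviewer and candidate turns and return the full history."""
    history: List[Turn] = []
    for _ in range(num_turns):
        # Interviewer turn: conditioned on the history so far plus both documents.
        question = interviewer_llm(
            f"Job description:\n{job_description}\n\nResume:\n{resume}\n\n"
            f"Interview so far:\n{render(history)}\n\nAsk the next question."
        )
        history.append(("interviewer", question))
        # Candidate turn: sees the updated history, including the new question.
        answer = candidate_llm(
            f"Resume:\n{resume}\n\nJob description:\n{job_description}\n\n"
            f"Interview so far:\n{render(history)}\n\n"
            "Answer the latest question as the candidate."
        )
        history.append(("candidate", answer))
    return history
```

Passing the rendered history into every prompt is what keeps each question and answer tied to earlier turns; a real system would swap the callables for actual model calls.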

- Evaluation Mechanism: Each side's evaluation combines evidence from the descriptive content (resume and job description) and the interview dialogue history.

```
score_interviewer = f_eval(resume, job_description, interview_dialogue)
score_candidate = g_eval(resume, job_description, interview_dialogue)
```
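One way to turn the two scores into a handshake decision is to require both sides to accept. The sketch below assumes scores in the range 0 to 1 and uses hypothetical per-side thresholds; the summary does not state how the paper converts scores into accept or reject decisions.

```python
from dataclasses import dataclass


@dataclass
class HandshakeResult:
    interviewer_accepts: bool
    candidate_accepts: bool

    @property
    def matched(self) -> bool:
        # A match is declared only when both sides agree.
        return self.interviewer_accepts and self.candidate_accepts


def handshake(
    score_interviewer: float,
    score_candidate: float,
    interviewer_threshold: float = 0.5,  # hypothetical cutoff
    candidate_threshold: float = 0.5,    # hypothetical cutoff
) -> HandshakeResult:
    """Compare each side's score against its own threshold."""
    return HandshakeResult(
        interviewer_accepts=score_interviewer >= interviewer_threshold,
        candidate_accepts=score_candidate >= candidate_threshold,
    )
```

With these defaults, `handshake(0.8, 0.3).matched` is `False`: the interviewer accepts but the candidate does not, so no match is declared.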

- Reflection Memory: Successfully matched interviews are stored and used to modify the prompts for subsequent interview rounds.

```
prompt_mod = f_mod(existing_memory, new_data)
```
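The summary does not specify how `f_mod` rewrites a prompt. As one possible reading, the sketch below stores matched dialogues and appends the most recent ones to a base prompt as examples. The class name, the `max_examples` cap, and the prompt wording are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReflectionMemory:
    max_examples: int = 2  # hypothetical cap on examples added to a prompt
    cases: List[str] = field(default_factory=list)

    def add_if_matched(self, dialogue: str, matched: bool) -> None:
        """Store a dialogue only when the handshake produced a match."""
        if matched:
            self.cases.append(dialogue)

    def modify_prompt(self, base_prompt: str) -> str:
        """Append the most recent matched dialogues to the base prompt."""
        start = max(0, len(self.cases) - self.max_examples)
        recent = self.cases[start:]
        if not recent:
            return base_prompt
        examples = "\n\n".join(
            f"Example {i}:\n{case}" for i, case in enumerate(recent, start=1)
        )
        return f"{base_prompt}\n\nInterviews that led to a match:\n{examples}"
```

An empty memory leaves the prompt unchanged, so the first interview rounds run on the base prompt alone.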

Extensive experiments indicate that MockLLM improves precision, recall, and F1 scores in both traditional assessments, based on resumes and job descriptions, and mock interview-based assessments.
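For reference, the three reported metrics have standard definitions for a binary match decision. The sketch below computes them from predicted and ground-truth match labels; the function name and the toy labels are illustrative and are not taken from the paper's data.

```python
from typing import Sequence, Tuple


def precision_recall_f1(
    predicted: Sequence[bool], actual: Sequence[bool]
) -> Tuple[float, float, float]:
    """Compute precision, recall, and F1 for binary match predictions."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    tp = sum(1 for p, a in zip(predicted, actual) if p and a)
    fp = sum(1 for p, a in zip(predicted, actual) if p and not a)
    fn = sum(1 for p, a in zip(predicted, actual) if not p and a)
    # Fall back to 0.0 when a denominator is zero.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return precision, recall, f1


# Toy labels: one true positive, one false positive, one false negative.
print(precision_recall_f1([True, True, False, False], [True, False, True, False]))
# prints (0.5, 0.5, 0.5)
```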

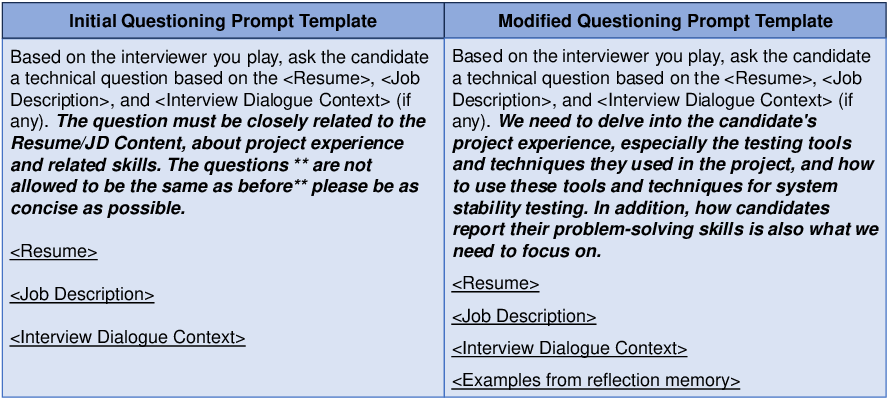

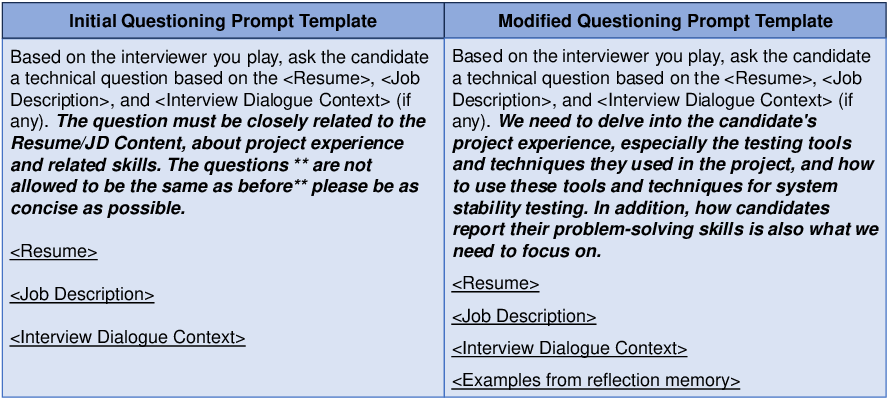

Figure 2: Comparison of results before and after modification of the questioning prompt, showing increased specificity after the reflection-based adjustment.

The evaluation combines automatic metrics with human evaluation. Both indicate that MockLLM generates coherent, relevant, and diverse interview content, and that these higher-quality mock interviews lead to more accurate person-job matches.

Conclusion

MockLLM advances online recruitment methodology by applying LLMs to simulate interviews. By using mock interviews as additional evidence for person-job evaluation, the framework aims to improve the efficiency and accuracy of candidate selection. Future research could explore adapting MockLLM to different cultural or language-specific contexts, broadening its applicability to global recruitment.