AI-based data assimilation: Learning the functional of analysis estimation

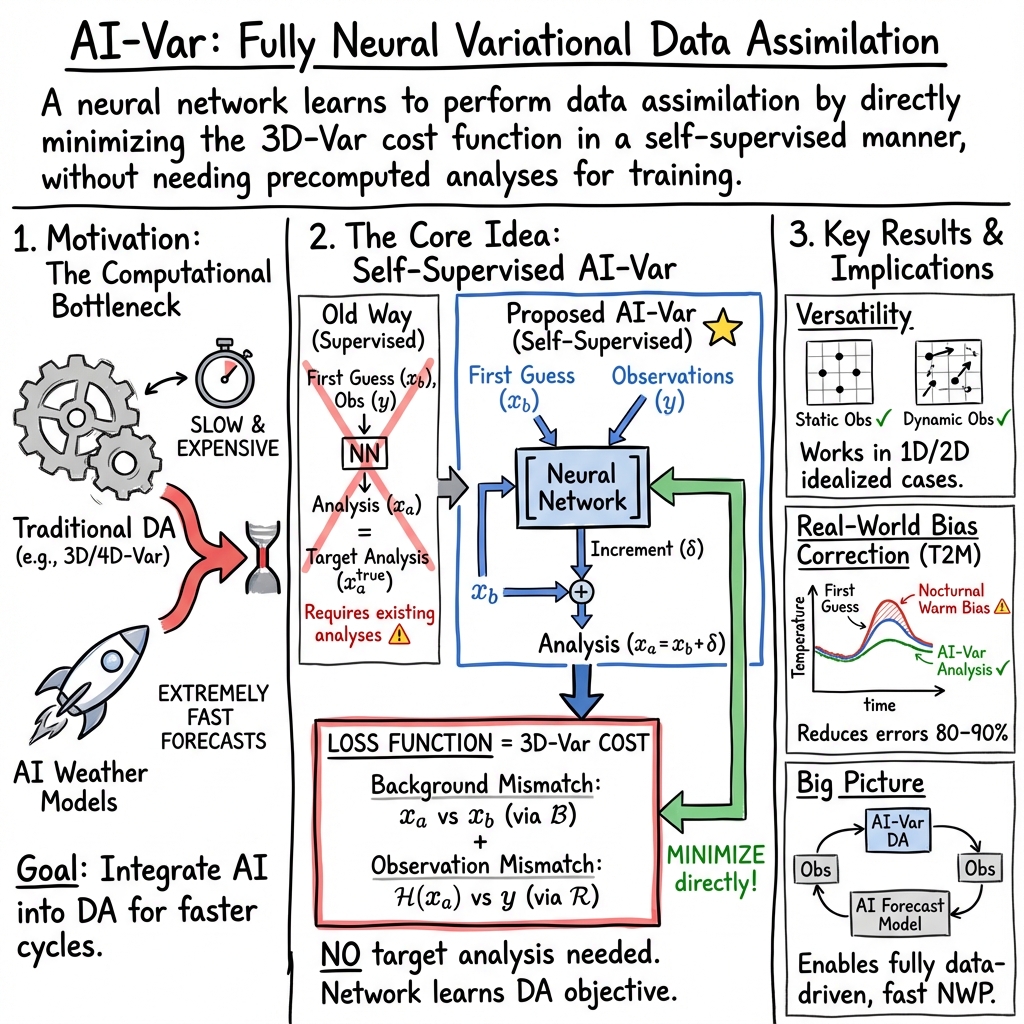

Abstract: The integration of observational data into numerical models, known as data assimilation (DA), is fundamental to Numerical Weather Prediction (NWP), which has seen remarkable success over the past 60 years (Bauer et al., 2015). Traditional DA methods, such as variational techniques and ensemble Kalman filters, are pillars of current NWP because they incorporate diverse observational data. However, the emergence of AI presents new opportunities for further improvement. AI-based approaches can emulate the complex computations of traditional NWP models at reduced computational cost, offering the potential to speed up and improve analyses and forecasts dramatically (e.g. Pathak et al., 2022; Bi et al., 2023; Lam et al., 2023; Bouallegue et al., 2023). AI also plays a growing role in optimization (e.g. Fan et al., 2024), which opens possibilities beyond model emulation. In this paper, we introduce a novel AI-based variational DA approach designed to replace classical DA methods by leveraging deep learning techniques. Unlike previous hybrid approaches, our method integrates the DA process directly into a neural network, utilizing the variational DA framework. This AI-based system, termed AI-Var, employs a neural network trained to minimize the variational cost function, enabling it to perform DA without relying on pre-existing analysis datasets. We present a proof-of-concept implementation of this approach, demonstrating its feasibility through a series of idealized and real-world test cases. Our results indicate that the AI-Var system can efficiently assimilate observations and produce accurate initial conditions for NWP, highlighting its potential to carry out the DA process in weather forecasting. This advancement paves the way for fully data-driven NWP systems, offering a significant leap forward in computational efficiency.

Explain it Like I'm 14

What is this paper about?

This paper is about a new way to blend weather observations (like temperatures measured at stations or by planes) with computer model predictions to get the best possible picture of the atmosphere right now. This blending process is called data assimilation. The authors show how a neural network (a type of AI) can learn to do data assimilation on its own, without needing to copy past solutions from a traditional system.

What questions are the researchers asking?

- Can a neural network learn to do the “analysis” step of weather forecasting (the blending of observations with model predictions)?

- Can it do this without being trained on a big pile of past “correct answers” (past analyses), and instead learn just from the rules of how good analyses are normally judged?

- Will it work in simple test cases and in a small real-world example?

How did they try to answer these questions?

Key ideas in simple terms

- First guess (also called “background”): Think of this as a draft map of the weather—what the model predicts before you add new observations.

- Observations: Real measurements—like readings from weather stations or aircraft.

- Analysis: The improved map you get after combining the first guess with the observations in a smart way.

- Observation operator: A tool that takes the model’s map and tells you what a sensor would measure at a certain place (for example, “what is the model temperature at this station?”).

- 3D-Var cost function: A formula that scores how good an analysis is. It penalizes (a) being too far from the first guess and (b) not matching the observations, with weights that describe how trustworthy each source is. You want to minimize this score.

- Background errors (matrix B): Describe the typical mistakes in the first guess and how those mistakes are linked in space (this controls how far the correction from one thermometer reading spreads to nearby points).

- Observation errors (matrix R): Describe how noisy the measurements are.
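For readers who want to see the formula behind these ideas, here is the 3D-Var cost function in standard textbook notation (the paper's own symbols may differ). Here x is the candidate analysis, x_b the first guess, y the observations, H the observation operator, and B and R the two error matrices from the list above. The second line is the well-known closed-form minimizer for the special case where H is linear; it is included only as a reference point.

```latex
J(\mathbf{x}) = \tfrac{1}{2}\,(\mathbf{x}-\mathbf{x}_b)^{\top}\mathbf{B}^{-1}(\mathbf{x}-\mathbf{x}_b)
              + \tfrac{1}{2}\,\bigl(\mathbf{y}-H(\mathbf{x})\bigr)^{\top}\mathbf{R}^{-1}\bigl(\mathbf{y}-H(\mathbf{x})\bigr)

% Linear observation operator H(x) = \mathbf{H}\mathbf{x}: the minimizer of J is
\mathbf{x}_a = \mathbf{x}_b + \mathbf{B}\mathbf{H}^{\top}\bigl(\mathbf{H}\mathbf{B}\mathbf{H}^{\top}+\mathbf{R}\bigr)^{-1}(\mathbf{y}-\mathbf{H}\mathbf{x}_b)
```

The first term is the "don't stray too far from the first guess" penalty and the second is the "match the observations" penalty. A small B (trusted first guess) or a small R (trusted observations) makes the corresponding penalty larger.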

The twist: “Self-supervised” training

Most AI methods for data assimilation copy from existing solutions (they need past analyses as “correct answers” to learn from). The authors do something different:

- They train the neural network to directly minimize the same 3D-Var cost function that traditional systems minimize.

- That means the network needs only the first guess and the observations (plus their “trust” weights), not the final target answers. It learns by practicing with the same rule book that traditional systems use, rather than imitating past outputs.

Analogy: Instead of showing a student many solved math problems to copy, you teach them the rules for scoring a solution and let them practice until their score is best.
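The self-supervised idea can be tried out in a few lines. The sketch below is not the authors' code: the paper uses a feed-forward network in PyTorch, while this toy swaps in a single linear layer `W` trained with plain NumPy gradient descent, so the gradient can be written out by hand. The grid size, station positions, Gaussian-shaped B with width 1, and R = 0.1·I are all made-up assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy setup (all values are illustrative assumptions, not from the paper)
n = 16                                   # number of grid points
obs_idx = np.array([2, 6, 10, 14])       # fixed "station" positions
m = obs_idx.size
H = np.zeros((m, n))
H[np.arange(m), obs_idx] = 1.0           # linear observation operator: pick grid values

grid = np.arange(n)
B = np.exp(-0.5 * (grid[:, None] - grid[None, :]) ** 2)   # Gaussian B, width 1
R = 0.1 * np.eye(m)                                       # uncorrelated obs errors
B_inv, R_inv = np.linalg.inv(B), np.linalg.inv(R)

# Synthetic training samples: only x_b and y are used for training.
# x_true exists solely to generate plausible observations.
N = 500
x_b = rng.normal(size=(N, n))
x_true = x_b + rng.multivariate_normal(np.zeros(n), B, size=N)
y = x_true @ H.T + rng.normal(scale=np.sqrt(0.1), size=(N, m))
d = y - x_b @ H.T                        # innovations y - H x_b, shape (N, m)

def mean_cost(W):
    """Average 3D-Var cost of the analyses x_a = x_b + W d."""
    inc = d @ W.T                        # x_a - x_b, shape (N, n)
    res = d - inc @ H.T                  # y - H x_a, shape (N, m)
    jb = 0.5 * np.einsum("ni,ij,nj->n", inc, B_inv, inc)
    jo = 0.5 * np.einsum("ni,ij,nj->n", res, R_inv, res)
    return float(np.mean(jb + jo))

def mean_grad(W):
    """Gradient of mean_cost with respect to W (derived by hand)."""
    inc = d @ W.T
    res = d - inc @ H.T
    return (B_inv @ inc.T @ d - H.T @ R_inv @ res.T @ d) / N

# Safe step size: 1 / (largest curvature of the quadratic loss)
A = d.T @ d / N
S = B_inv + H.T @ R_inv @ H
lr = 1.0 / (np.linalg.eigvalsh(A)[-1] * np.linalg.eigvalsh(S)[-1])

W = np.zeros((n, m))                     # start with "no correction"
for _ in range(3000):
    W -= lr * mean_grad(W)               # the loss IS the 3D-Var cost

# Reference: closed-form gain for a linear H (never shown to the model)
K = B @ H.T @ np.linalg.inv(H @ B @ H.T + R)
cost_background = mean_cost(np.zeros((n, m)))
cost_trained = mean_cost(W)
cost_optimal = mean_cost(K)
print(cost_background, cost_trained, cost_optimal)
```

Because the observation operator here is linear, the best possible answer is known in closed form, which makes it easy to check that training on the cost alone moves `W` toward that answer. A real network with nonlinear layers would be trained the same way, just with automatic differentiation instead of a hand-written gradient.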

What did they build and test?

- A simple feed‑forward neural network (layers of connected “neurons”) implemented in PyTorch.

- Input:

  - The first-guess field (the draft weather map).

  - The observations (and, when needed, their locations).

- Loss (what the network tries to minimize while training): the 3D‑Var cost function (match the observations well, don’t stray too far from the first guess, with sensible weights).

- Two observation layouts:

  - Static: same station locations every time (like fixed weather stations).

  - Dynamic: changing locations (like data from planes), which is harder. They used tricks so the network doesn’t care about the order of observations (permutation invariance).

- Test cases:

  1. Simple 1D fields (like sine waves and parabolas on a line).

  2. Simple 2D fields (wavy patterns on a grid).

  3. A real-world case: 2-meter air temperature over part of Germany, using a reanalysis map as first guess and real station observations. They also provided surface height and time of day to help the model.
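The summary does not say which tricks were used to get permutation invariance for the dynamic layout. One common option, shown here purely as an assumption, is to pass every (location, value) pair through the same small function and then average the results, so the order of the observations cannot matter. The weights `W1` and `b1` and the function name `encode_observations` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
W1 = rng.normal(size=(2, 8))   # hypothetical shared weights for every observation
b1 = rng.normal(size=8)

def encode_observations(locations, values):
    """Order-independent summary of a set of observations."""
    pairs = np.stack([locations, values], axis=1)   # shape (n_obs, 2)
    features = np.tanh(pairs @ W1 + b1)             # same map applied to each pair
    return features.mean(axis=0)                    # averaging ignores the order

# Three observations: position on a unit line, departure from first guess (K)
locs = np.array([0.10, 0.40, 0.75])
vals = np.array([1.2, -0.4, 0.7])

perm = np.array([2, 0, 1])                          # shuffle the observations
enc_original = encode_observations(locs, vals)
enc_shuffled = encode_observations(locs[perm], vals[perm])
print(np.allclose(enc_original, enc_shuffled))
```

Averaging (rather than stacking the observations into one long input vector) also lets the number of observations change from sample to sample, which fits the aircraft-style case.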

They varied:

- Number of observations (few vs many).

- How far observation influence spreads (the “smoothing width” in B).

- How many training samples (practice examples) the network saw.
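To get a feel for what the smoothing width does, the sketch below computes the correction caused by a single observation for two different widths. It assumes a Gaussian-shaped B on a 1D grid and uses the standard closed-form gain for a linear observation operator; the grid size, error sizes, and function names are illustrative choices, not values from the paper.

```python
import numpy as np

def gaussian_B(n, width, sigma_b=1.0):
    """Background covariance with Gaussian-shaped spatial correlations."""
    idx = np.arange(n)
    dist = idx[:, None] - idx[None, :]
    return sigma_b**2 * np.exp(-0.5 * (dist / width) ** 2)

def single_obs_increment(n, obs_index, innovation, width, sigma_o=0.5):
    """Analysis correction produced by one observation at obs_index."""
    B = gaussian_B(n, width)
    H = np.zeros((1, n))
    H[0, obs_index] = 1.0                        # observe one grid point
    R = np.array([[sigma_o**2]])
    gain = B @ H.T @ np.linalg.inv(H @ B @ H.T + R)
    return gain @ np.array([innovation])         # shape (n,)

# Same observation (1 K warmer than the first guess), two smoothing widths
narrow = single_obs_increment(41, 20, 1.0, width=2.0)
wide = single_obs_increment(41, 20, 1.0, width=8.0)

# Count grid points that receive a noticeable correction
print((narrow > 0.08).sum(), (wide > 0.08).sum())
```

Both corrections have the same size at the observation itself, but the wide setting spreads it over many more grid points. That is the blurring effect: a very wide B lets one station overwrite detail far away from where it actually measured.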

What did they find, and why does it matter?

- It works: The AI (called AI‑Var) learned to assimilate observations by minimizing the 3D‑Var cost function and produced improved maps (analyses) without needing past analyses as training targets.

- Static observation locations are easier: When stations don’t move, the network learns faster and reaches lower errors.

- More observations help: With more measurements, the analyses get closer to the true fields.

- The smoothing width matters: If you smooth too much (very wide influence), you can blur important details; too little smoothing can make the field noisy. There’s a sweet spot.

- More training data helps, especially for moving (dynamic) observations: Dynamic layouts are harder; the network benefits a lot from seeing many examples. With a lot of data, there’s also a risk of overfitting (getting too specialized on the training set), so you have to watch validation performance carefully.

- Real-world test is promising: In the German 2-meter temperature case, both training and validation loss kept going down, suggesting the method was learning useful adjustments toward observations, with no sign of overfitting yet.
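The advice to watch validation performance is often put into practice with early stopping: keep training while the validation loss improves, and stop once it has failed to improve for a set number of epochs. The summary does not say whether the authors did this, so the helper below is only a generic sketch; the function name, the `patience` setting, and the loss values are made up.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch); stop_epoch is None if never triggered."""
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch      # new best: remember it
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch                 # no improvement for too long
    return best_epoch, None

# Validation loss that falls, then rises again (a typical overfitting pattern)
overfitting_run = [1.0, 0.8, 0.7, 0.72, 0.75, 0.9, 1.1]
print(early_stopping_epoch(overfitting_run))         # (2, 5)

# Validation loss that keeps falling, as reported for the real-world case
still_improving = [1.0, 0.9, 0.8]
print(early_stopping_epoch(still_improving))         # (2, None)
```

In the first run the best model is the one from epoch 2, and training would stop at epoch 5. In the second run nothing triggers, which matches the "no sign of overfitting yet" situation.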

Why it matters:

- Speed and cost: AI‑Var can be much faster than running a full traditional data assimilation solver each time. This matters because modern AI weather models can produce a forecast in seconds, so data assimilation is becoming the slow step.

- Independence from past analyses: Not needing a big, high-quality archive of past analyses to train on is a big plus, especially in new regions or for new variables.

What could this change in the future?

- Faster full‑AI weather systems: If both the forecast model and the data assimilation are AI‑based, we could get high‑quality forecasts extremely quickly and cheaply.

- Flexible use of new data sources: The approach can handle changing observation networks (like planes), which are increasingly important.

- Less reliance on massive, expensive traditional systems: This could lower barriers for national weather services, researchers, and companies to build capable forecasting systems.

Practical next steps and limits to keep in mind:

- Better handling of first guesses: In real operations, the first guess is a short forecast started from the previous analysis; the authors note future work on iteratively improving this.

- Sharper error models (B and R): Smarter, flow‑dependent error descriptions would likely improve results.

- Scaling up: Applying this to full 3D atmosphere variables, satellites, and many observation types will be more complex, but this study shows a clear path.

Takeaway

The authors built an AI that learns to do data assimilation by following the same rule book (the 3D‑Var cost function) that traditional systems use—without needing past “correct” analyses as examples. In simple tests and a real temperature case, it works well, especially with fixed observation sites and enough data. This is a promising step toward fast, fully data‑driven weather forecasting.
