- The paper demonstrates that synthetic data robustly benchmarks and optimizes automated resonance parameter evaluation.

- It introduces novel error metrics and hyperparameter tuning to improve accuracy in cross-section and strength function fits.

- Results show reliable performance even with incomplete data, emphasizing the need for precise spin group assignments.

Validating Automated Resonance Evaluation with Synthetic Data

The paper "Validating Automated Resonance Evaluation with Synthetic Data" discusses the development of a framework to automate and validate parts of the nuclear data evaluation process. It emphasizes the utilization of synthetic data to enhance the reproducibility, precision, and standardization of fitting procedures, particularly in resonance parameter estimation. This summary explores the key methodologies, results, and implications of the study.

Framework and Methodology

Synthetic Data Utilization

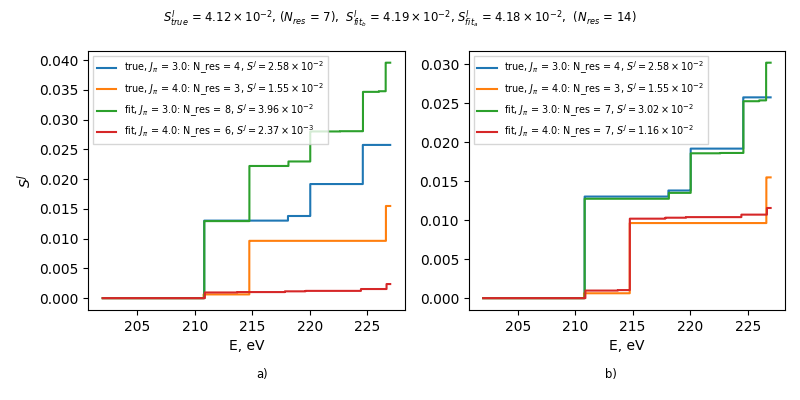

The framework presented in the paper harnesses high-fidelity synthetic data to perform supervised learning, with access to the true solution for benchmarking and optimization of automated routines (Figure 1). This synthetic data mimics real experimental conditions and is generated by adding noise to simulation processes, thereby producing datasets that are statistically indistinguishable from actual observations.

Figure 1: Utilization of synthetic data for assessment and optimization of routines under test and controlled computational experiments.

The process incorporates generating synthetic experimental observables across specified energy ranges, with simulation parameters informed by actual experiments. By introducing metrics that quantify fitting precision in both resonance and cross-section spaces, the framework facilitates rigorous testing and optimization of resonance identification algorithms.

Error Metric Development

The paper introduces an error metric to quantify the quality of resonance data fitting, aiming to optimize hyperparameters involved in the automated evaluation process. This includes metrics based on cross-section space and parameter space, such as:

Results Summary

Hyperparameter Optimization

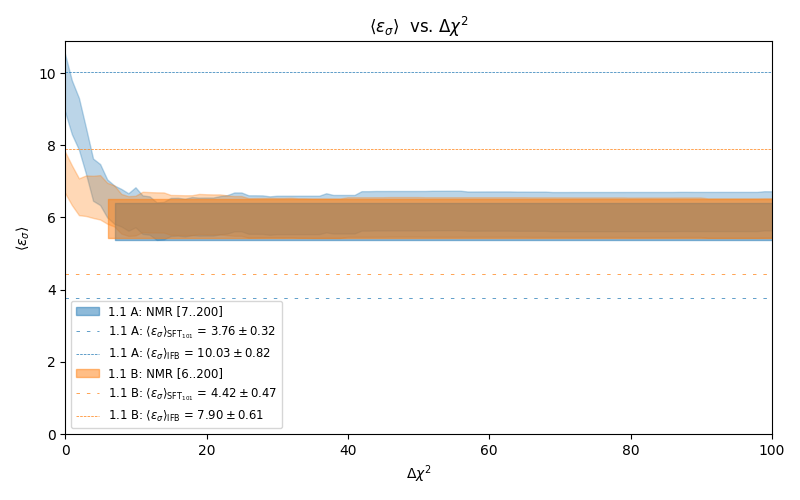

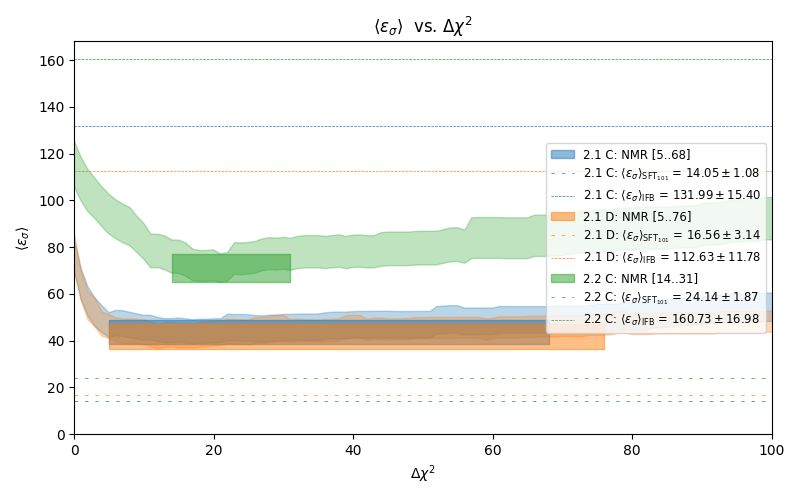

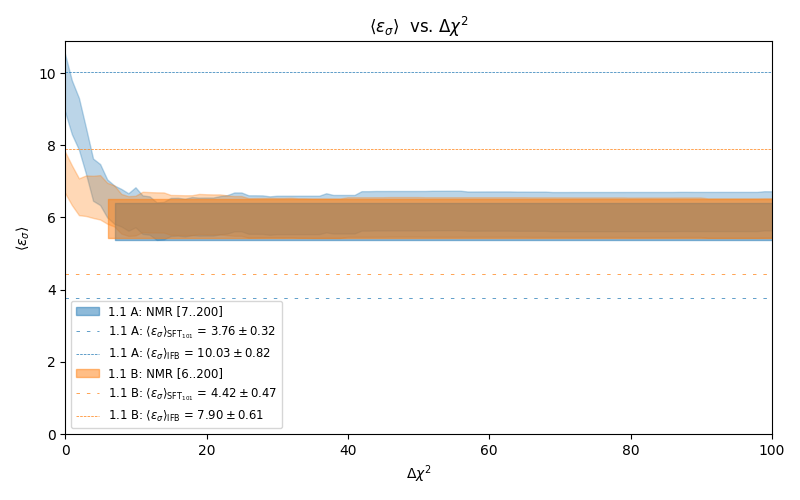

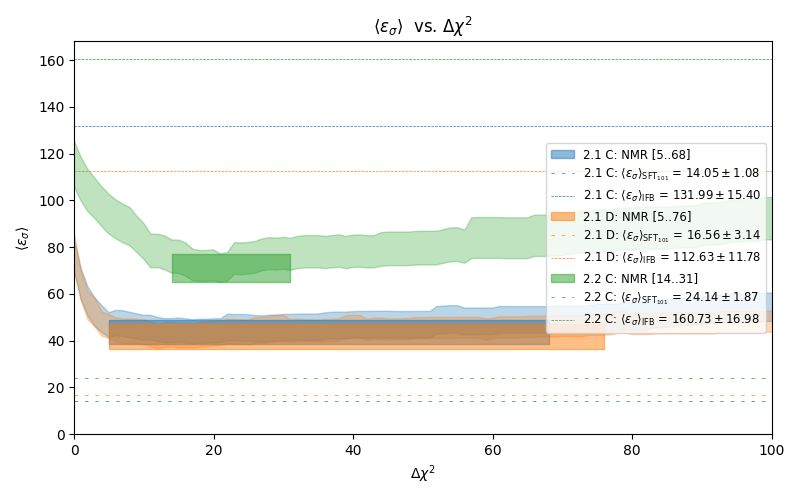

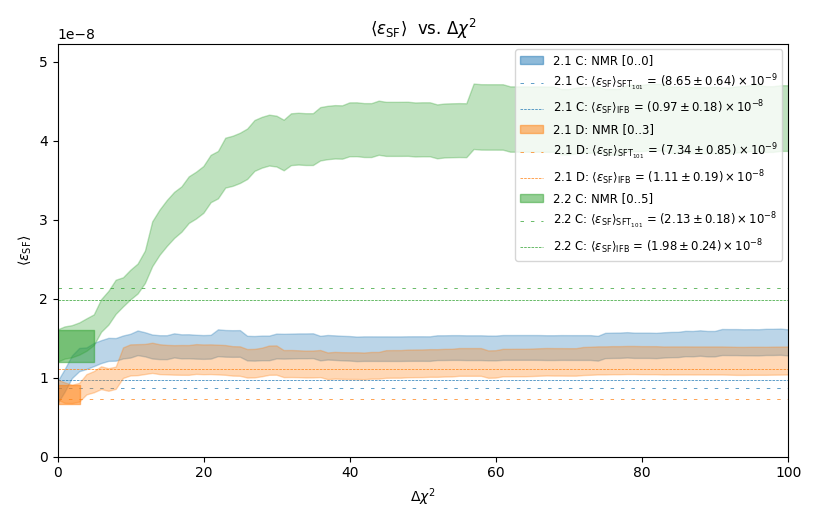

The hyperparameter optimization focuses on maximizing performance metrics like the average error in cross-section space ⟨εσ⟩. The process identifies the optimal Δχ2 threshold value as a critical hyperparameter, adjusting the balance between model complexity and fit accuracy (Figures 7 and 9).

Figure 3: Hyperparameter Delta\chi2 selection based on the error metric ⟨εσ⟩.

The study assesses the automated resonance identification subroutine's performance under various scenarios:

- Correct Data Settings: Optimization shows improved error metrics across test datasets, validating the chosen hyperparameter values.

- Incomplete Data Covariance Matrix: Introducing misreported covariance data affects performance, highlighting the robustness of the hyperparameter-tuned model compared to baseline results.

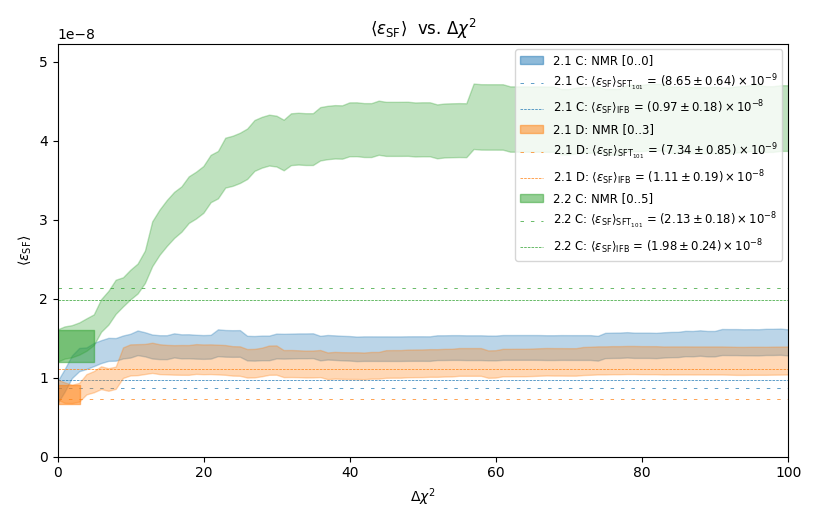

- Multiple Spin Groups: Demonstrates the complexity and need for accurate spin group assignment to enhance fitting precision (Figures 9 and 11).

Figure 4: Comparison of ARIS performance calculated as the average error metric ⟨εσ⟩ with a full data covariance matrix versus incomplete covariance data.

Figure 5: Comparison of ARIS performance using the estimated averaged error metric value $\langle \varepsilon_{\text{PSF} \rangle$.

Implications and Future Research

The paper underscores synthetic data's vital role in nuclear data evaluation, particularly in reducing subjectivity and enhancing reproducibility. The methodologies provide a pathway for systematic optimization of resonance identification routines, pivotal in nuclear reactor design and national security applications. Future research could explore more sophisticated spin group selection techniques and broader applications of synthetic data for comprehensive uncertainty quantification and experimental design optimization.

In conclusion, the findings illuminate pathways to enhance nuclear data evaluation processes using advanced machine learning methods and synthetic data, fostering improvements in reactor safety, design precision, and evaluator training. These advancements are crucial for addressing emerging challenges in nuclear data science and technology.