- The paper introduces an LLM-integrated approach that dynamically generates test inputs based on coverage feedback for Verilog designs.

- It uses structured prompt engineering and restructured coverage reports to steer the LLM toward code branches that have not yet been covered.

- Results show that the method reaches code coverage faster than random testing on designs of simple to medium complexity.

VerilogReader: LLM-Aided Hardware Test Generation

Introduction

"VerilogReader: LLM-Aided Hardware Test Generation" investigates the integration of large language models (LLMs) into Coverage Directed Test Generation (CDG). LLMs, known for their comprehension and reasoning capabilities, act as Verilog readers: they interpret the code logic and generate test stimuli aimed at code branches that have not yet been exercised. The research diverges from previous work by focusing on improving code coverage, which is essential for hardware testing, and by using LLMs for both code comprehension and dynamic test generation. The paper shows how LLMs can systematically improve on random testing in hardware verification.

Approach

Framework for LLM Integration

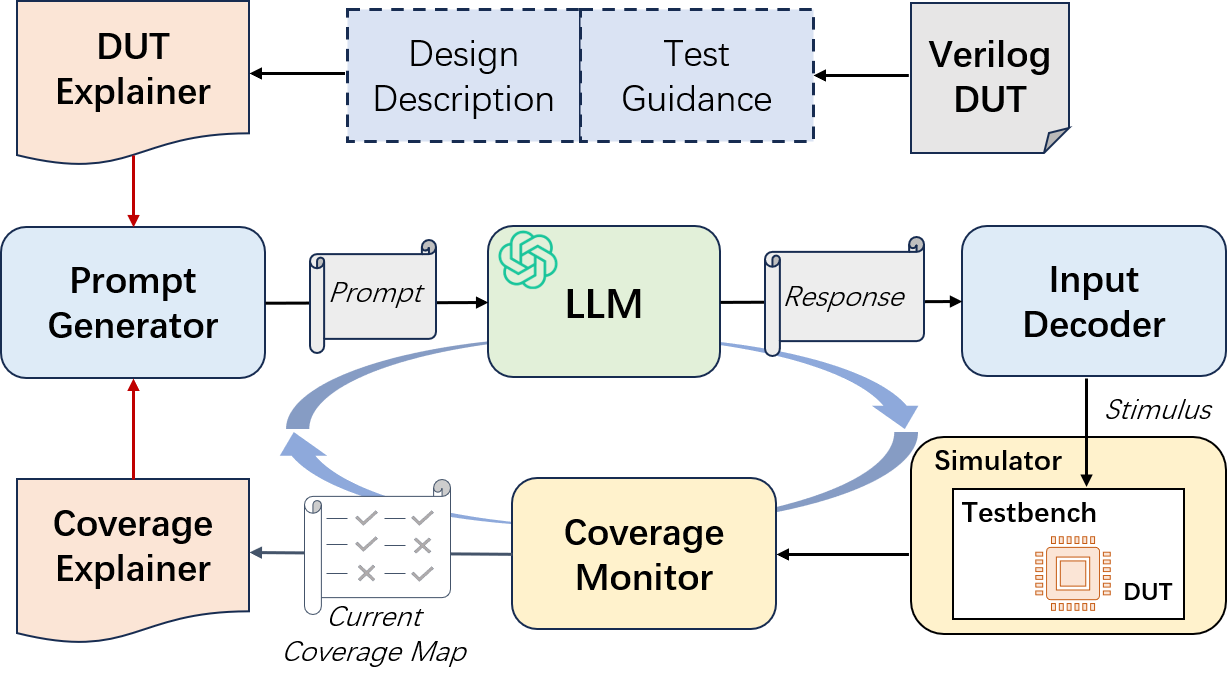

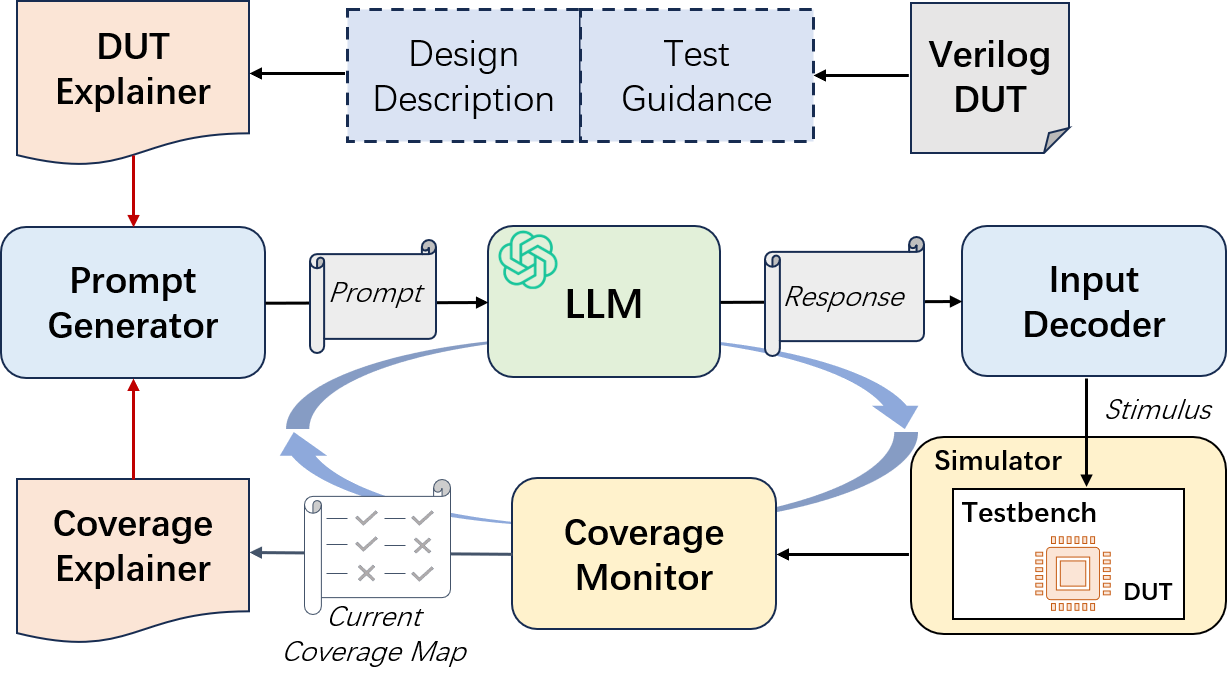

The foundation of this research is the incorporation of an LLM into the CDG process, as illustrated in the LLM-Aided Hardware Test Generation Workflow (Figure 1). In each iteration, the LLM outputs multi-cycle inputs in JSON format, which the Input Decoder translates into hardware stimuli. The Coverage Monitor then feeds code coverage data back to the LLM, which uses it to generate further stimuli.

Figure 1: LLM-Aided Hardware Test Generation Workflow.
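The Input Decoder step can be illustrated with a minimal sketch. The summary does not specify the JSON schema, so the layout used here (a list with one object per clock cycle, mapping input names to integer values) is an assumption, as are the function name `decode_stimuli`, the `port_widths` table, and the choice to hold an unspecified input at its previous value.

```python
import json


def decode_stimuli(llm_output: str, port_widths: dict[str, int]) -> list[dict[str, int]]:
    """Turn a JSON list of per-cycle assignments into fully specified input vectors.

    Assumed schema (not taken from the paper): one JSON object per clock cycle,
    mapping an input port name to an integer value.
    """
    cycles = json.loads(llm_output)
    if not isinstance(cycles, list):
        raise ValueError("expected a JSON list with one object per clock cycle")

    decoded = []
    # Assumption: every input starts at 0 and keeps its last value when omitted.
    previous = {name: 0 for name in port_widths}
    for cycle in cycles:
        unknown = set(cycle) - set(port_widths)
        if unknown:
            raise ValueError(f"unknown input ports: {sorted(unknown)}")
        current = dict(previous)
        for name, value in cycle.items():
            # Mask the value so it fits the declared port width.
            current[name] = int(value) & ((1 << port_widths[name]) - 1)
        decoded.append(current)
        previous = current
    return decoded


# Hypothetical DUT with a 1-bit reset, a 1-bit enable, and an 8-bit data input.
widths = {"rst": 1, "en": 1, "data": 8}
stimuli = decode_stimuli('[{"rst": 1}, {"rst": 0, "data": 300}, {"en": 1}]', widths)
```

Here `stimuli` holds three input vectors, one per cycle. The out-of-range value 300 is masked to 8 bits (44), and `data` keeps that value in the third cycle because the JSON does not reassign it. Rejecting unknown port names is one way to catch malformed LLM output before it reaches the simulator.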

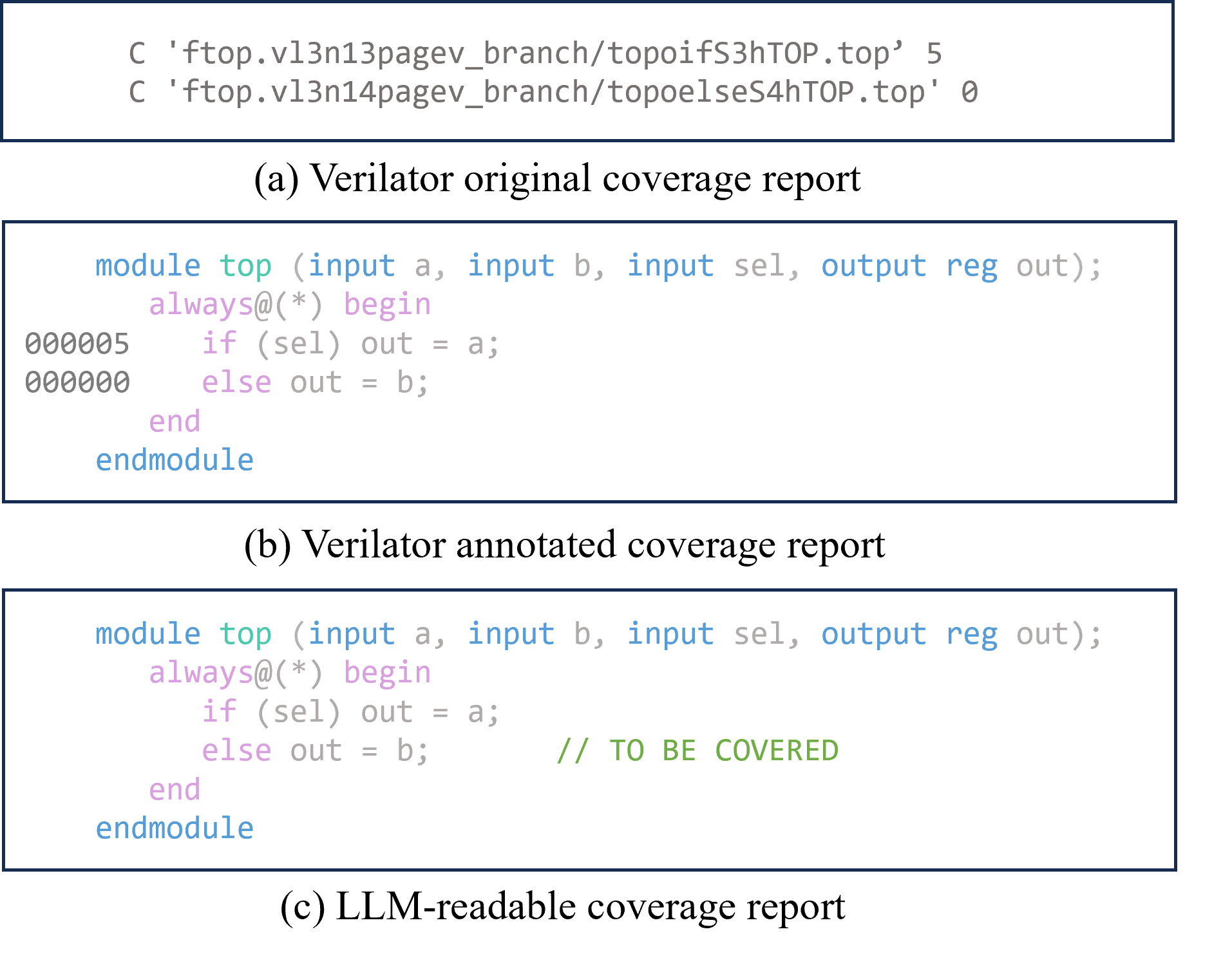

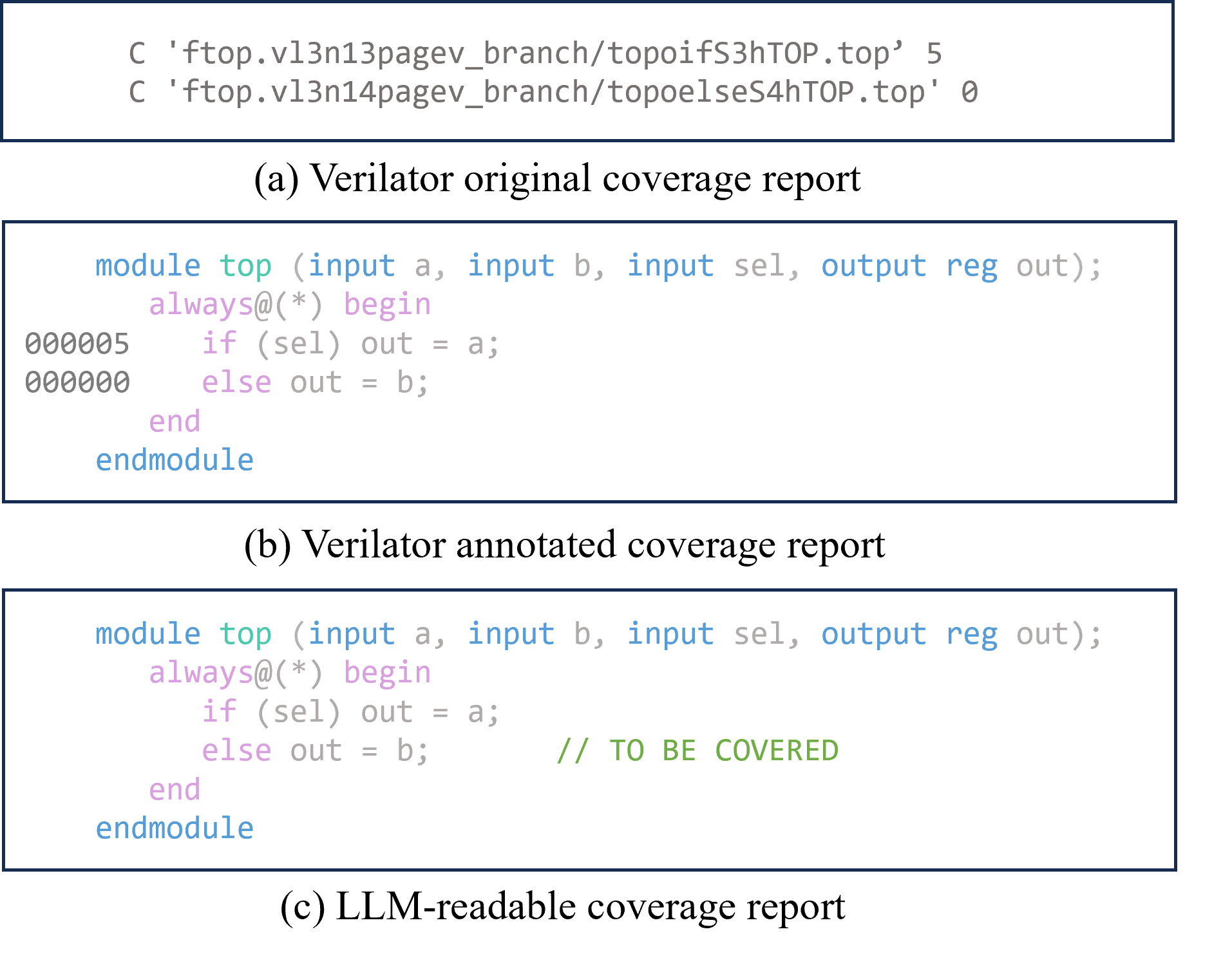

To support LLM integration, the Verilog code of the design under test (DUT) and the coverage reports are restructured into more LLM-readable formats by the DUT Explainer and Coverage Explainer modules. The Coverage Explainer translates complex simulator coverage reports into a format that is easier for an LLM to interpret: it marks each line not yet covered with a ‘TO BE COVERED’ flag, so the LLM can process the report more efficiently (Figure 2).

Figure 2: Comparison of three coverage report formats.
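The flagging idea behind the Coverage Explainer can be sketched as follows. The input representation is an assumption: the source as a list of lines plus a hypothetical `hit_counts` mapping from 1-based line number to execution count, with non-executable lines absent from the mapping. Real simulator reports differ by tool, and the exact output layout used in the paper is not reproduced here.

```python
def explain_coverage(source_lines: list[str], hit_counts: dict[int, int]) -> str:
    """Return the source with a 'TO BE COVERED' marker on every line with zero hits.

    hit_counts is a hypothetical mapping from 1-based line number to execution
    count; lines missing from it are treated as non-executable and left alone.
    """
    annotated = []
    for lineno, text in enumerate(source_lines, start=1):
        if hit_counts.get(lineno) == 0:
            # Appended as a Verilog comment so the annotated code stays readable.
            annotated.append(f"{text}  // TO BE COVERED")
        else:
            annotated.append(text)
    return "\n".join(annotated)


dut = [
    "always @(posedge clk) begin",
    "  if (rst) q <= 0;",
    "  else if (en) q <= d;",
    "end",
]
report = explain_coverage(dut, {2: 5, 3: 0})
print(report)
```

In this example only the `else if (en)` line receives the marker, because it is the only executable line with a hit count of zero.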

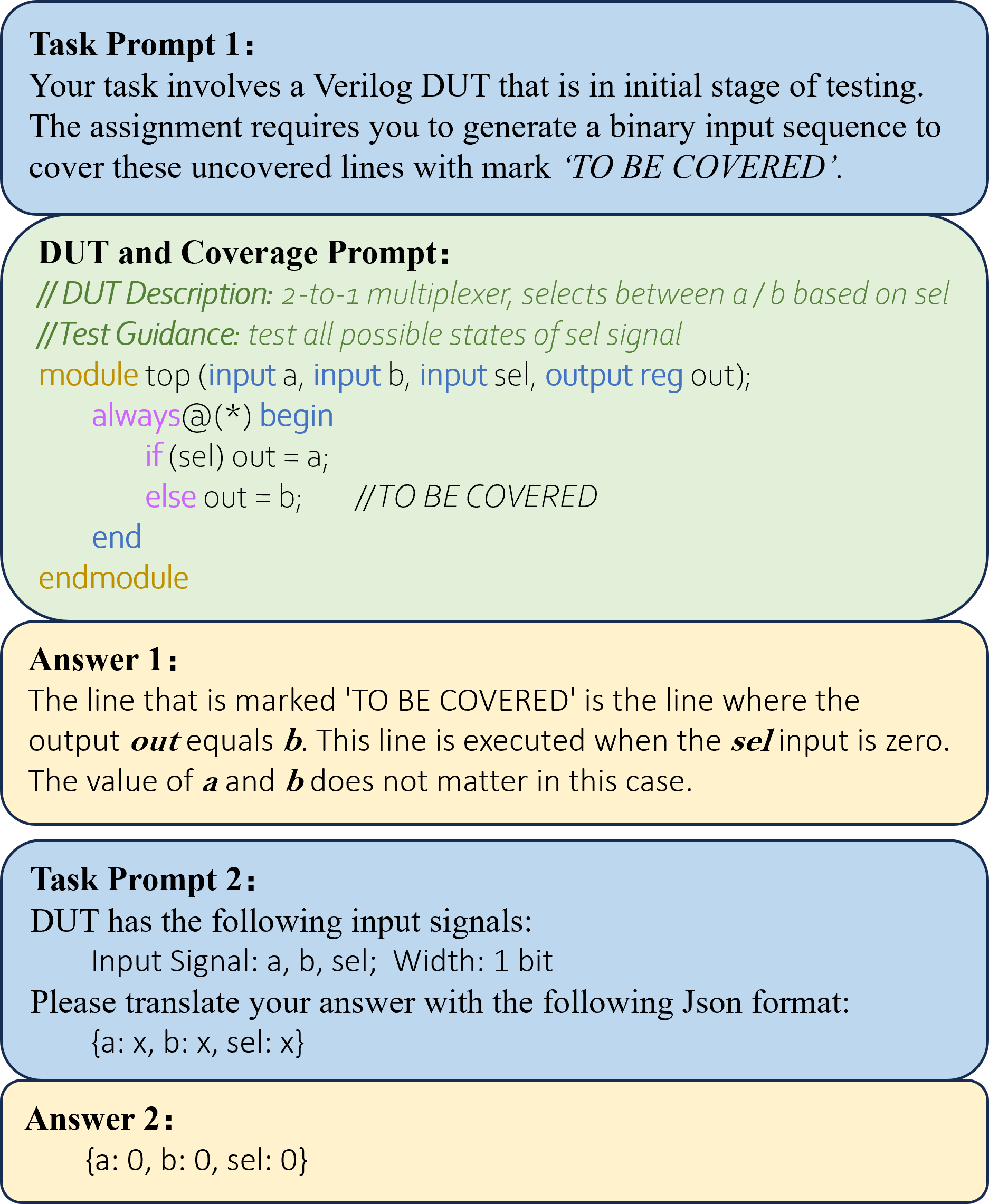

Prompt Engineering

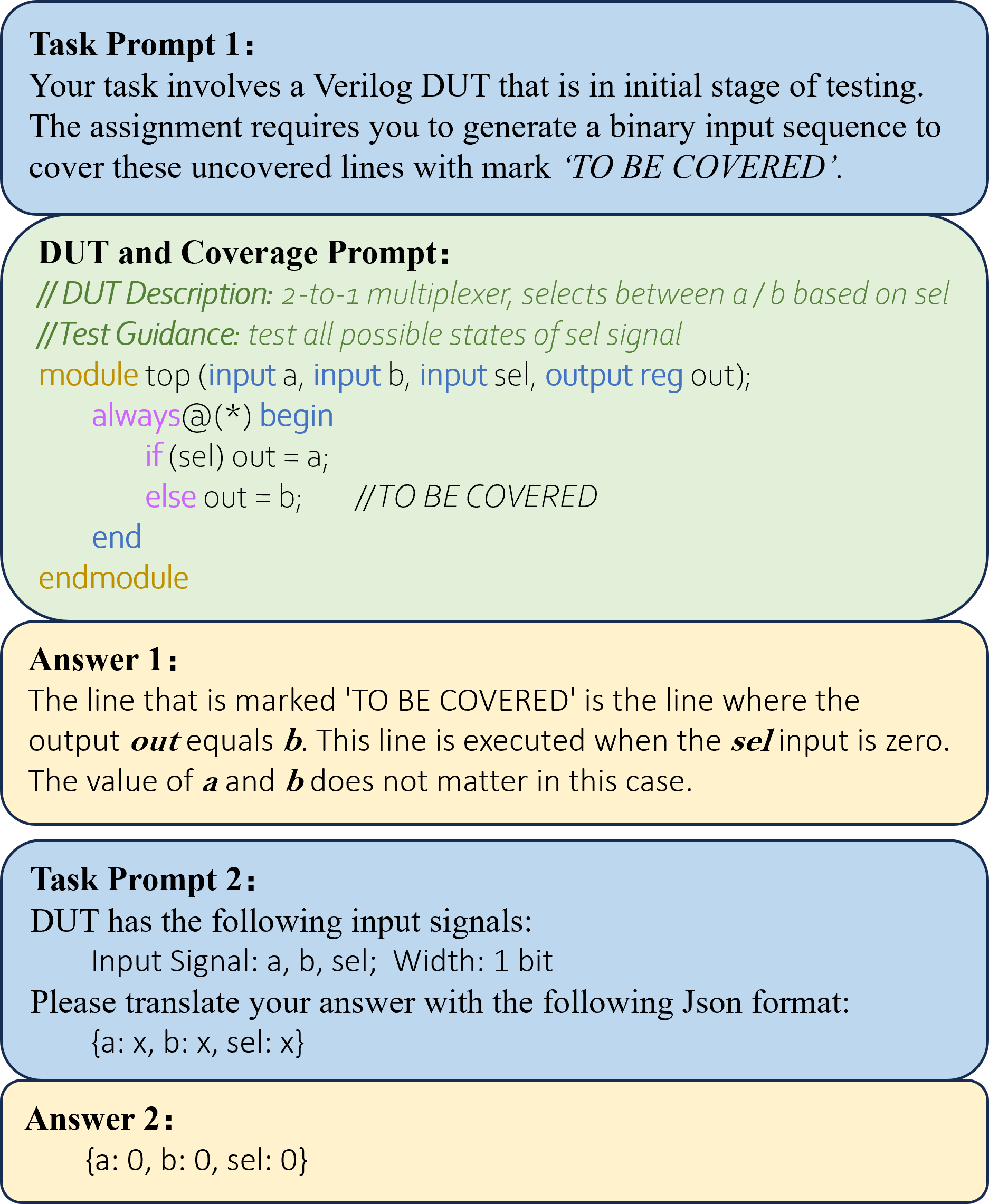

The Prompt Generator splits test generation into two rounds of queries to the LLM, which structures the LLM's reasoning about the Verilog code and the remaining coverage gaps. The prompts also include enrichment from the DUT Explainer, derived from the hardware's design description or from additional guided test instructions, so that the LLM receives clear, structured data to evaluate (Figure 3).

Figure 3: Example of prompts and LLM answers.
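A two-round query can be sketched as below. The split used here (a first round that asks for natural-language reasoning about the uncovered lines, then a second round that asks for the JSON inputs) is an assumption about how the rounds divide, and the prompt wording is illustrative only. `ask_llm` is a hypothetical callable standing in for whatever LLM client is used.

```python
from typing import Callable


def two_round_query(
    ask_llm: Callable[[str], str],
    dut_description: str,
    coverage_report: str,
) -> str:
    """Run a reasoning round followed by a formatting round and return the final answer."""
    # Round 1: ask for reasoning about how to reach the flagged lines.
    round_one = (
        "You are given a Verilog design and its coverage report.\n"
        f"Design description:\n{dut_description}\n\n"
        f"Coverage report:\n{coverage_report}\n\n"
        "Explain which input sequences would reach the lines marked TO BE COVERED."
    )
    reasoning = ask_llm(round_one)

    # Round 2: ask for machine-readable inputs based on that reasoning.
    round_two = (
        f"Based on this analysis:\n{reasoning}\n\n"
        "Output the multi-cycle inputs as a JSON list with one object per clock cycle."
    )
    return ask_llm(round_two)


# Stand-in for a real LLM client, used only to show the call sequence.
def echo_llm(prompt: str) -> str:
    return '[{"en": 1}]' if "JSON" in prompt else "Set en high to reach the else branch."


answer = two_round_query(echo_llm, "An 8-bit register with enable.", "else if (en) ... // TO BE COVERED")
```

Separating the rounds keeps the free-form reasoning out of the final answer, so the second reply can be handed to a JSON parser directly.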

Evaluation

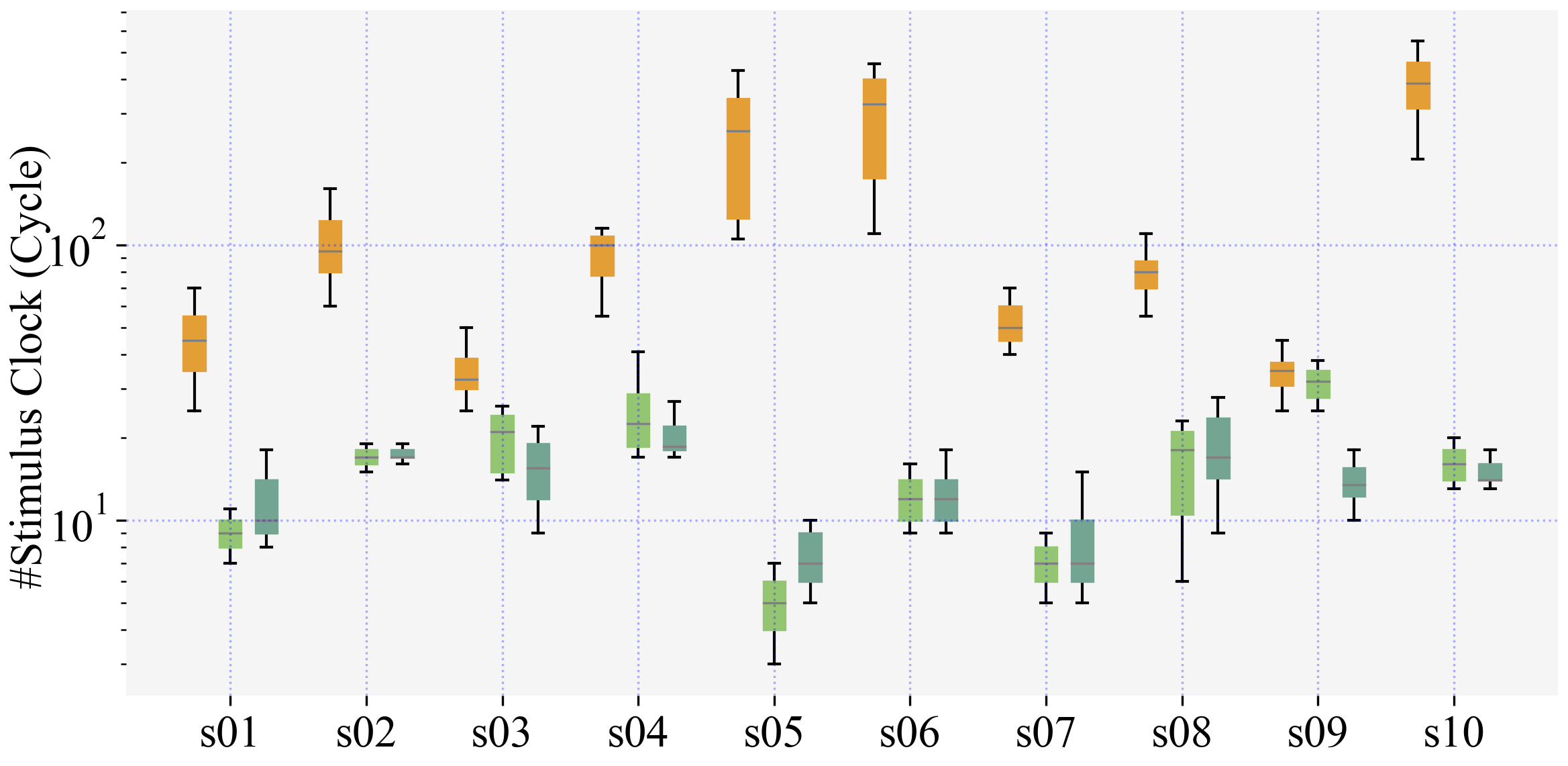

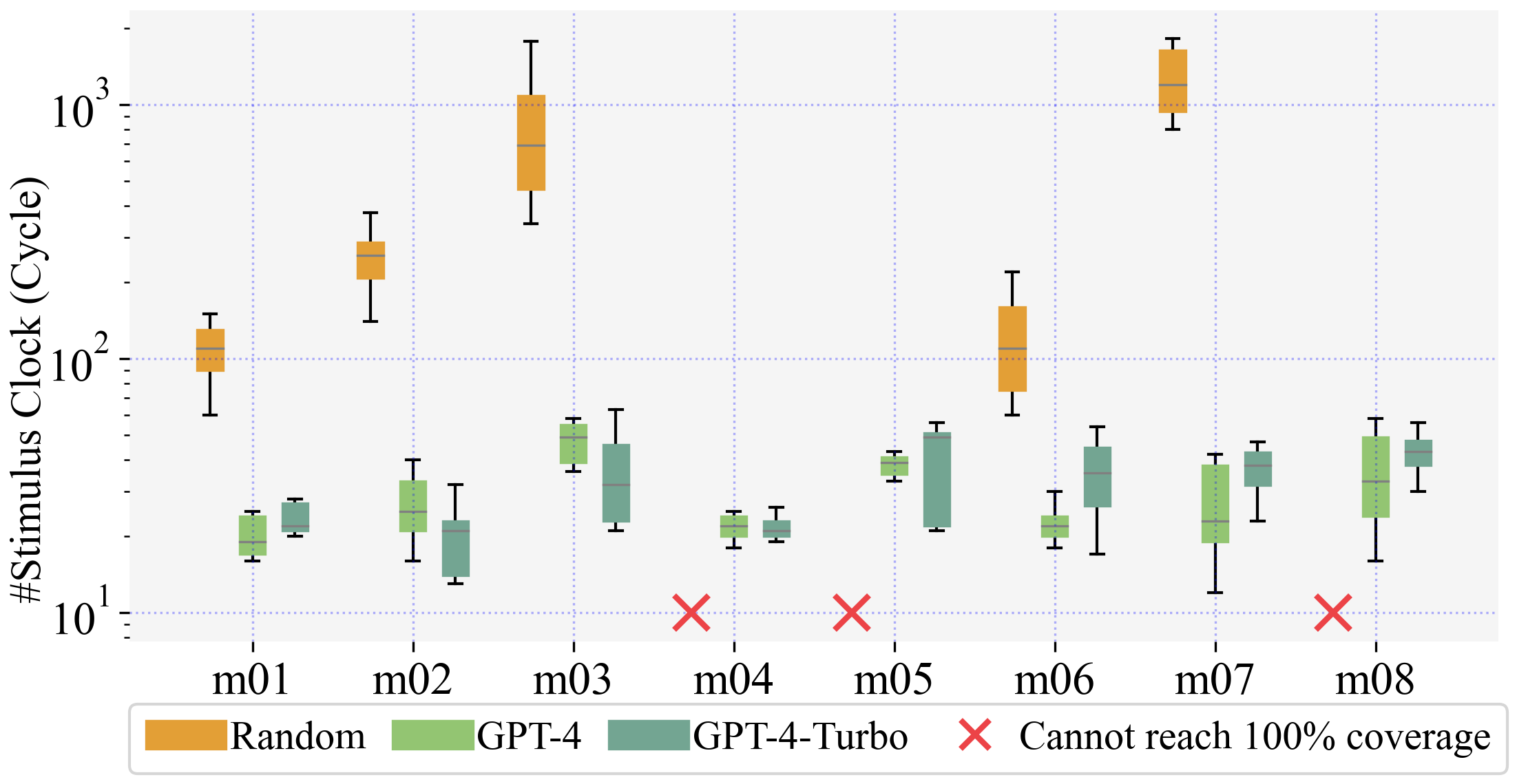

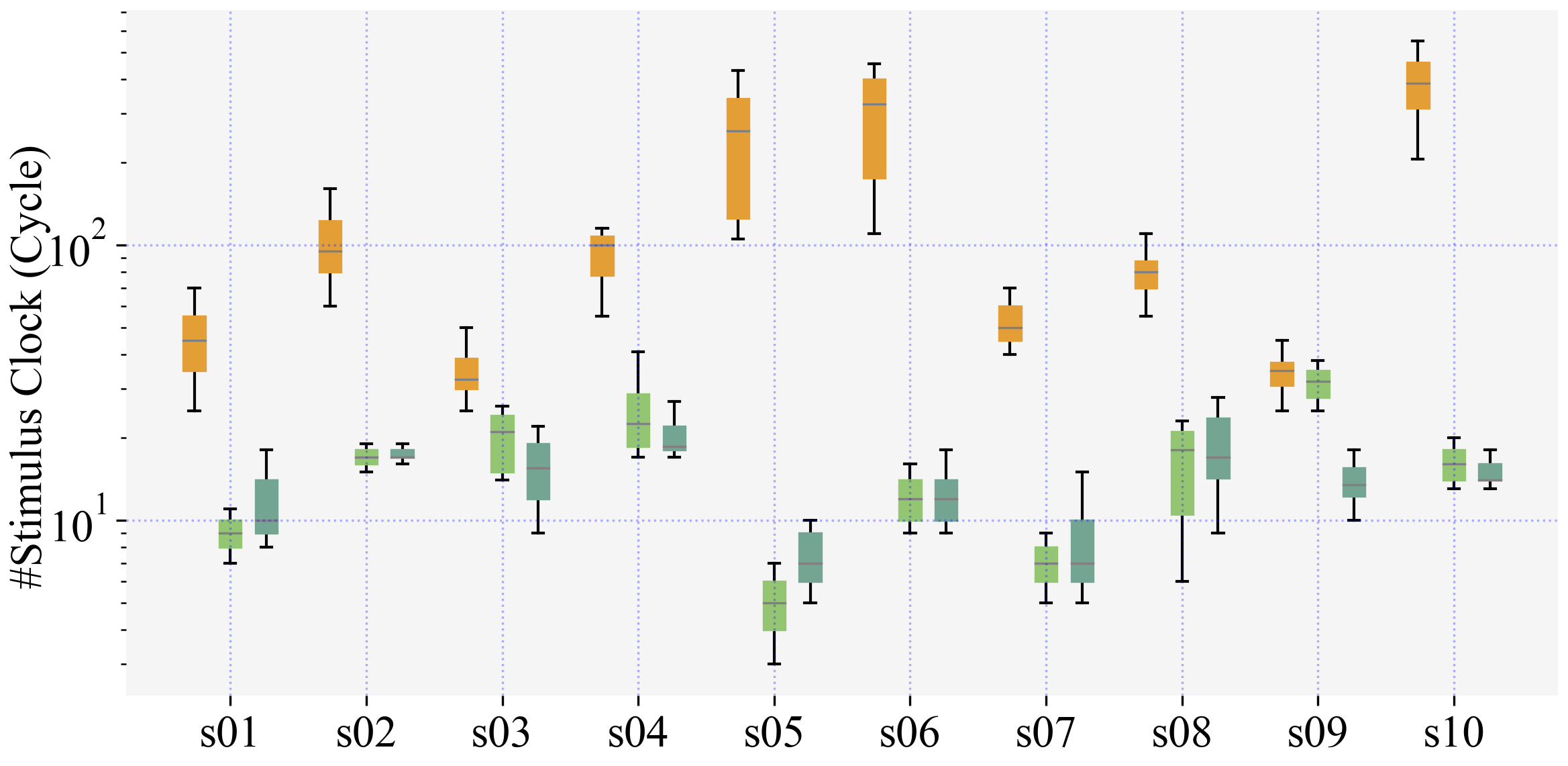

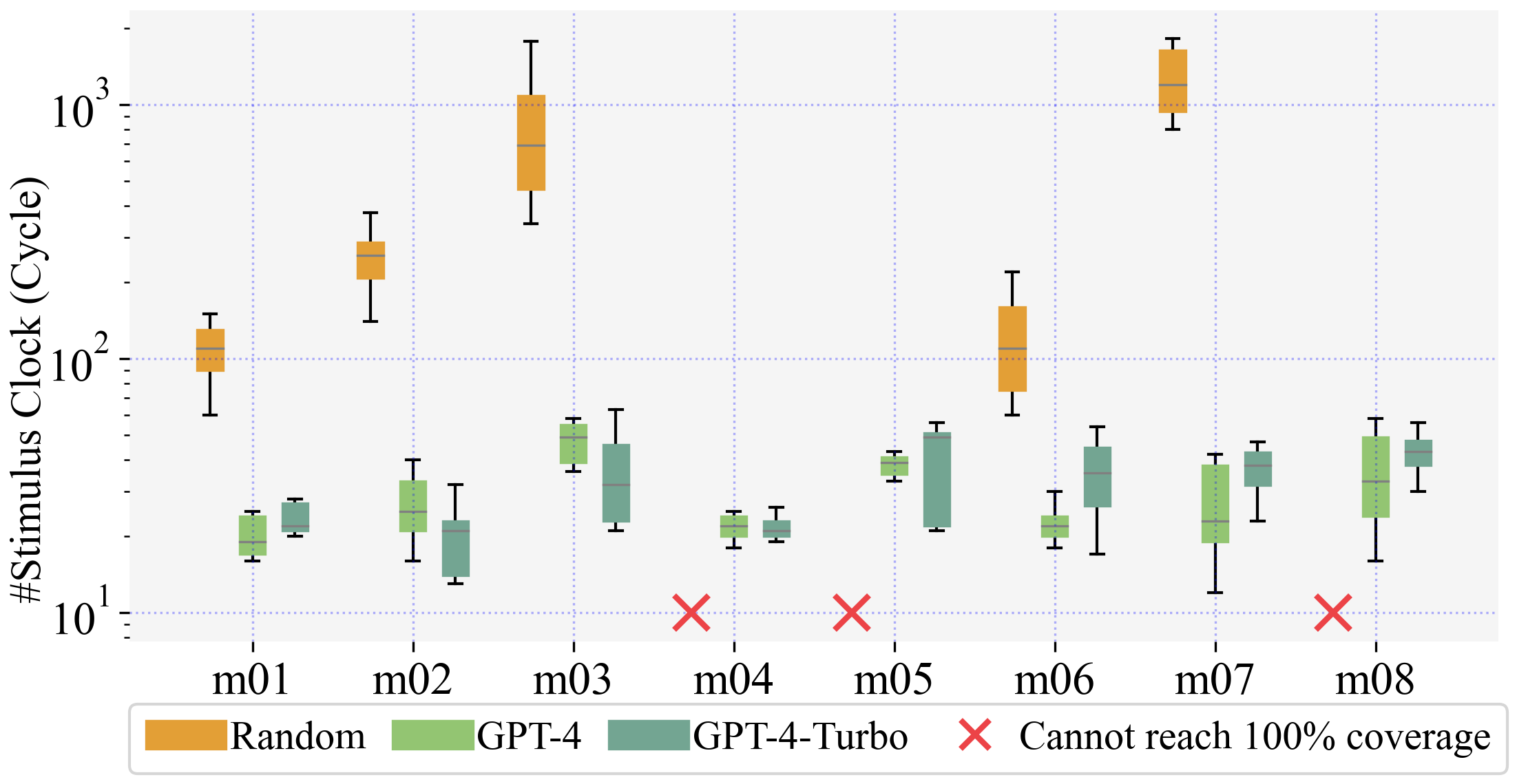

The benchmark suite consisted of 24 Verilog designs categorized by complexity: simple combinational circuits, medium sequential circuits, and complex large-scale FSMs. The LLM-aided approach outperformed random testing, reaching code coverage notably faster on simple- and medium-level DUTs (Figure 4).

Figure 4: Comparison of LLM-aided test generation and random testing.

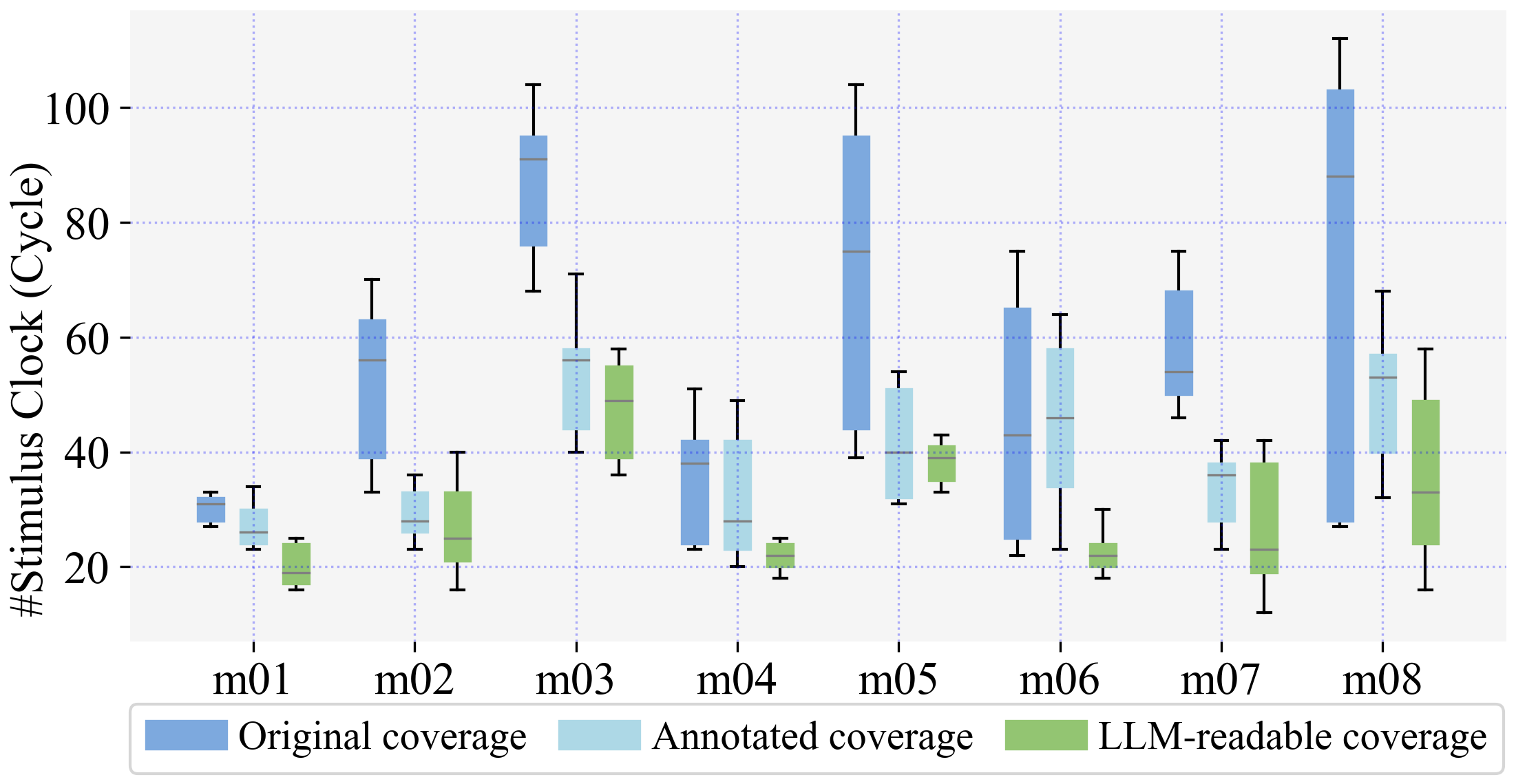

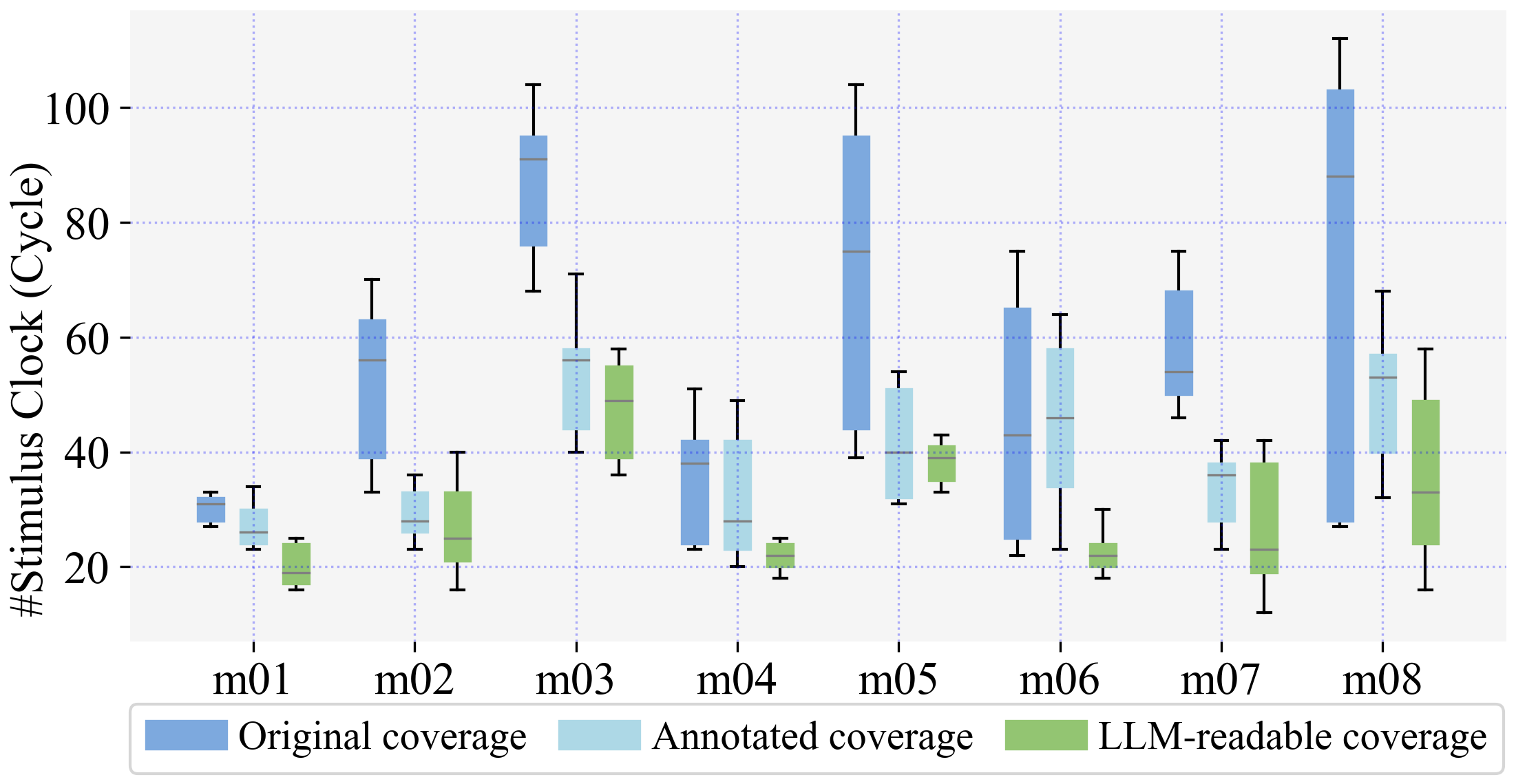

The LLM-readable coverage report format made input generation more efficient across designs: coverage was achieved with fewer stimuli (Figure 5).

Figure 5: Comparison of coverage explanations.

Discussion

Current LLMs are competent at test generation for smaller, less complex designs but struggle with larger ones, often because of the input length limitations inherent to LLM architectures. Enhancing the DUT Explainer with higher-level abstractions of the design and the intended tests could significantly improve LLM performance. Future research might combine LLMs with graph-based structural interpreters, such as graph neural networks (GNNs), to analyze complex designs more effectively.

Conclusion

This research uses LLMs as Verilog readers for hardware verification, targeting the technical challenge of automatic test generation. The findings indicate that LLMs can reduce manual effort by achieving full code coverage on simpler DUTs. Integrating additional AI models for structured data processing is a promising direction for making LLMs more useful in industrial hardware verification.