- The paper introduces a dual representation using particles and 3D Gaussians to integrate physical dynamics with real-time visual corrections.

- It employs Position-Based Dynamics and RGBD-based initialization to predict and adjust the physical state for accurate tracking.

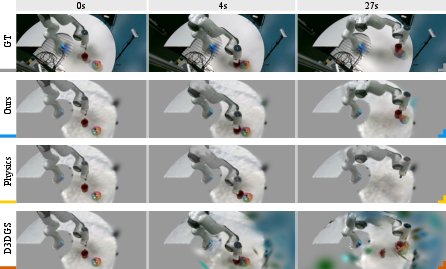

- Experiments in dynamic scenarios demonstrate the framework's ability to maintain robust tracking and accurate rendering under physical constraints.

"Physically Embodied Gaussian Splatting: A Realtime Correctable World Model for Robotics"

Introduction

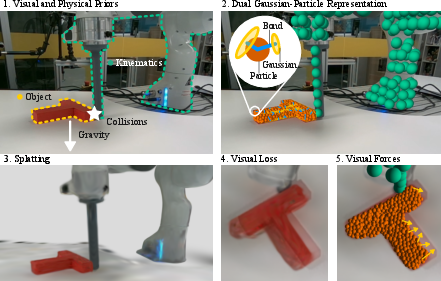

The paper "Physically Embodied Gaussian Splatting: A Realtime Correctable World Model for Robotics" proposes a dual representation that combines Gaussian splatting with particle-based physics simulation to model the physical world. The approach supports robotic perception, planning, and control by integrating predictive simulation with visual feedback corrections in real time. Particles provide the geometric representation and drive physics-based prediction, while 3D Gaussians render the visual state from any viewpoint. This addresses a limitation of prior models, which lack physical constraints.

Methodology

Dual Representation Framework

The proposed framework is built on a "Gaussian-Particle" system. Particles carry the geometry of the scene, and a physics engine based on Position-Based Dynamics (PBD) predicts their future states. 3D Gaussians attached to these particles render the visual state, so images can be generated from arbitrary viewpoints. Because the model can render its own prediction, the predicted image can be compared with the actual camera image, and the discrepancy is turned into corrective "visual forces" that adjust particle positions in real time while still honoring the physical constraints.
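The predict-then-correct idea above can be sketched with a minimal PBD step. This is an illustrative sketch, not the paper's implementation: the function names `visual_force` and `pbd_step`, the spring-like form of the visual force, the distance constraint, and all parameter values are assumptions chosen to keep the example small.

```python
import numpy as np

def visual_force(particle_pos, target_pos, stiffness=50.0):
    """ASSUMPTION: model the visual force as a spring that pulls each particle
    toward a visually corrected target position (hypothetical form)."""
    return stiffness * (target_pos - particle_pos)

def pbd_step(x, v, inv_mass, edges, rest_len, ext_force, dt=0.01, iters=10):
    """One Position-Based Dynamics step with distance constraints.

    x, v: (N, 3) positions and velocities; inv_mass: (N,), 0 pins a particle.
    edges: (M, 2) particle index pairs; rest_len: (M,) rest distances.
    ext_force: (N, 3) external forces (here, the assumed visual force).
    """
    # 1. Predict positions by integrating the external forces.
    v_pred = v + dt * inv_mass[:, None] * ext_force
    p = x + dt * v_pred

    # 2. Iteratively project the predicted positions onto the constraints.
    for _ in range(iters):
        for (i, j), d0 in zip(edges, rest_len):
            delta = p[i] - p[j]
            dist = np.linalg.norm(delta)
            w = inv_mass[i] + inv_mass[j]
            if dist < 1e-9 or w == 0.0:
                continue
            corr = (dist - d0) / (dist * w) * delta
            p[i] -= inv_mass[i] * corr
            p[j] += inv_mass[j] * corr

    # 3. Recover velocities from the change in position.
    v_new = (p - x) / dt
    return p, v_new

# Two particles joined by a unit-length constraint; particle 0 is pinned.
x = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
v = np.zeros_like(x)
inv_mass = np.array([0.0, 1.0])
edges = np.array([[0, 1]])
rest_len = np.array([1.0])
# Hypothetical visually corrected targets: particle 1 should move up in y.
target = np.array([[0.0, 0.0, 0.0], [1.0, 0.5, 0.0]])
x_new, v_new = pbd_step(x, v, inv_mass, edges, rest_len, visual_force(x, target))
```

In this toy setup the free particle moves toward its visual target, but the constraint projection keeps it at the rest distance from the pinned particle, which is the sense in which the correction honors the physical constraints.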

Figure 1: Image from a real-world experiment showing the available physical priors (1), the dual Gaussian-Particle representation (2), the predicted visual state of the world (3), and the corrective "visual" forces (5) being applied as a result of a visual discrepancy between the rendered state and the image from the camera (4).

Initialization and Correction

Initialization uses RGBD data and instance maps to derive the initial state of the particles and Gaussians. Particles are initialized from depth data, while Gaussians are optimized to match color and segmentation information. During correction, the Gaussian parameters are adjusted against live camera images, and the resulting visual discrepancy is fed back as corrective forces, keeping the model synchronized with the actual physical environment.
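Initializing particles from depth data can be sketched as a standard pinhole back-projection, optionally restricted to one object through the instance map. This is a sketch under assumptions: the function name `backproject_depth`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the convention that zero depth marks an invalid pixel are illustrative and not taken from the paper.

```python
import numpy as np

def backproject_depth(depth, fx, fy, cx, cy, instance_map=None, instance_id=None):
    """Lift a depth image (H, W) to camera-frame 3D points of shape (P, 3).

    ASSUMPTIONS: pinhole camera model; depth == 0 marks invalid pixels;
    instance_map holds one integer object id per pixel.
    """
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))  # pixel column and row indices
    valid = depth > 0
    if instance_map is not None and instance_id is not None:
        valid &= instance_map == instance_id  # keep only the requested object
    z = depth[valid]
    x = (u[valid] - cx) * z / fx
    y = (v[valid] - cy) * z / fy
    return np.stack([x, y, z], axis=1)

# Tiny 2x2 example with unit intrinsics and two object instances.
depth = np.ones((2, 2))
instances = np.array([[1, 1], [2, 2]])
all_points = backproject_depth(depth, fx=1.0, fy=1.0, cx=0.0, cy=0.0)
object_two = backproject_depth(depth, 1.0, 1.0, 0.0, 0.0, instances, instance_id=2)
```

Each returned point could seed one particle for the selected object; how densely the paper samples particles from these points is not stated in this summary.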

Figure 2: The initialization procedure (left) and results from real-world data (right).

Experiments

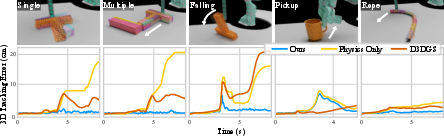

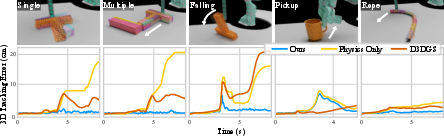

The framework's effectiveness is validated through a series of 2D and 3D tracking experiments, assessing its real-time capability and fidelity in dynamic environments. Experiments include scenarios such as object manipulation and deformation, challenging the system with various dynamic conditions like pushing, falling, picking up, and interacting with deformable objects.

Figure 3: Tracking error on a set of points attached to moving objects on synthetic scenes showing different dynamic conditions that include pushing, falling, picking up, and deformable objects.
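A tracking error of this kind can be computed as the mean Euclidean distance between predicted and ground-truth point positions at each frame. The sketch below assumes that definition and a `(frames, points, 3)` array layout; the exact metric used in the paper is not specified in this summary, and `mean_tracking_error` is a hypothetical name.

```python
import numpy as np

def mean_tracking_error(pred, gt):
    """Per-frame mean Euclidean distance between tracked and true points.

    pred, gt: arrays of shape (T, K, 3) for T frames and K tracked points.
    Returns an array of shape (T,). ASSUMED metric definition.
    """
    return np.linalg.norm(pred - gt, axis=-1).mean(axis=-1)

# Two frames, three points; frame 1 is offset by a 3-4-5 triangle.
gt = np.zeros((2, 3, 3))
pred = gt.copy()
pred[1] += np.array([3.0, 4.0, 0.0])
errors = mean_tracking_error(pred, gt)
```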

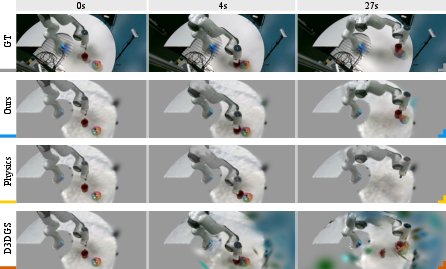

The reported metrics are competitive in both simulated and real-world settings, showing that the dual representation maintains accurate tracking and rendering under diverse conditions. The system remains synchronized with the real world over extended periods, though it is limited in rapidly changing scenes, where the actual state diverges quickly from the prediction.

Discussion and Implications

This research integrates physical and visual world modeling for robotics. The dual Gaussian-Particle framework provides a correctable, real-time model that fuses predictive physical dynamics with immediate visual feedback: the physics keeps the estimate consistent with physical constraints, while the visual feedback keeps it from drifting away from the observed scene.

Figure 4: A scene's predicted visual state with different methods. The robot interacts with two objects for over a minute. Our method is capable of synchronizing with the real state using a combination of physical prediction and visual correction. Physical prediction alone can estimate the state for a short period but will eventually desynchronize.

The implications for robotics are significant, as this method offers a path toward more autonomous, adaptive systems capable of complex interactions and accurate environmental modeling. The method could be further refined by incorporating more sophisticated initialization techniques and extending the model to wider application domains.

Conclusion

The paper presents an integrated approach to modeling the physical world in robotics, offering real-time corrections through a dual representation of Gaussian splatting and particle dynamics. Future work should address scalability to broader environments and make the feedback loop more robust in highly dynamic and unpredictable scenarios, which would strengthen the framework's applicability in real-world robotics.