- The paper introduces a fully procedural, open-source system that synthesizes diverse, photorealistic indoor scenes with extensive asset variability.

- The paper details a scalable, simulated annealing-based solver and a symbolic constraint API that enforce spatial, semantic, and ergonomic rules for optimal scene composition.

- The paper reports empirical gains from training on the generated data for two vision tasks (shadow removal and occlusion boundary detection) and supports export to embodied AI simulators.

Infinigen Indoors: Photorealistic Indoor Scene Generation via Procedural Synthesis

Overview and Motivation

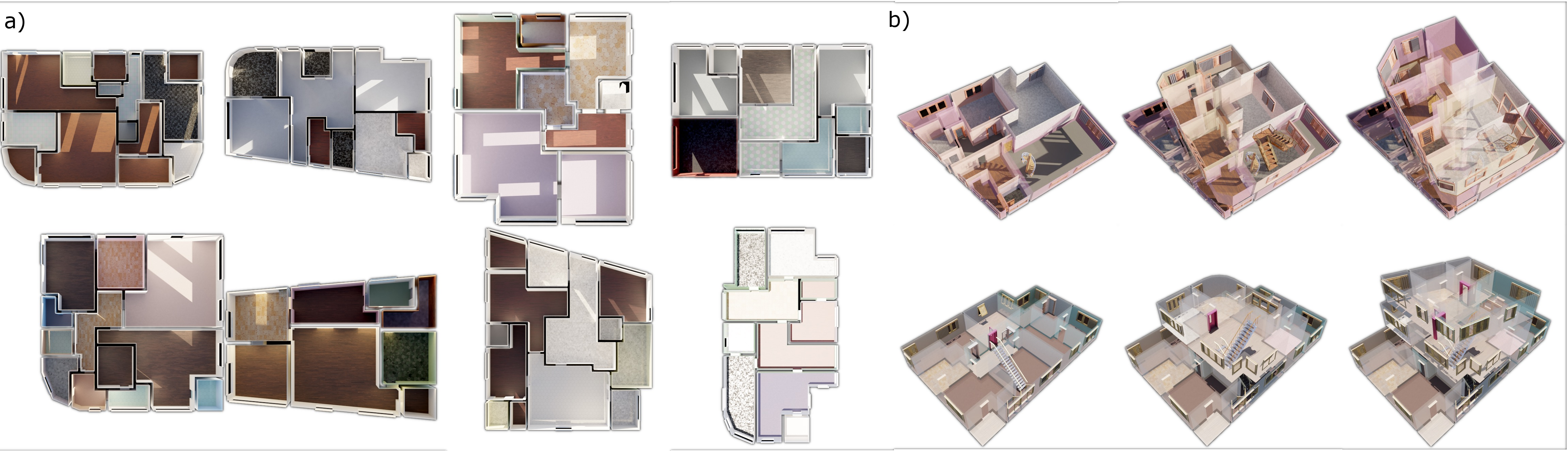

The paper "Infinigen Indoors: Photorealistic Indoor Scenes using Procedural Generation" (2406.11824) introduces a fully procedural, open-source system for generating photorealistic 3D indoor scenes. Built atop Blender and expanding from the original Infinigen system, which focused on outdoor and natural scenes, Infinigen Indoors fills a substantial gap in synthetic 3D data resources for indoor environments. The system procedurally generates a vast array of indoor assets (furniture, appliances, decorations, architectural elements) as well as entire multi-room, multi-story building layouts. Critically, it supports specification and enforcement of semantic, geometric, and ergonomic constraints via a domain-specific API and a scalable arrangement solver, and it enables seamless export to leading embodied AI simulators.

The motivation is clear: large-scale, diverse, procedurally generated, and richly annotated indoor datasets are essential for advancing embodied AI, robotics, and computer vision, particularly in scenarios where real-world data collection is impractical or where labeling 3D ground-truth is challenging.

Procedural Asset and Scene Generation

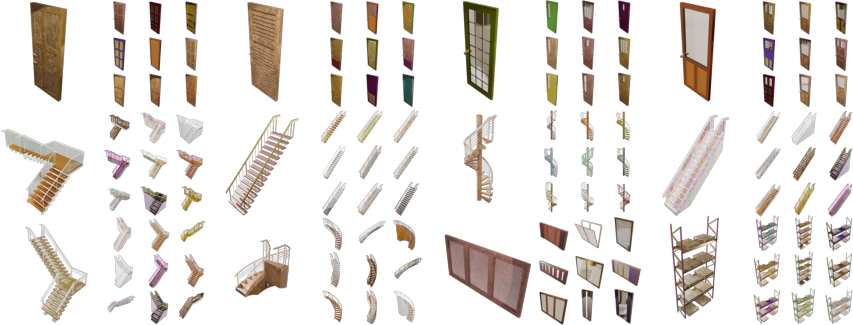

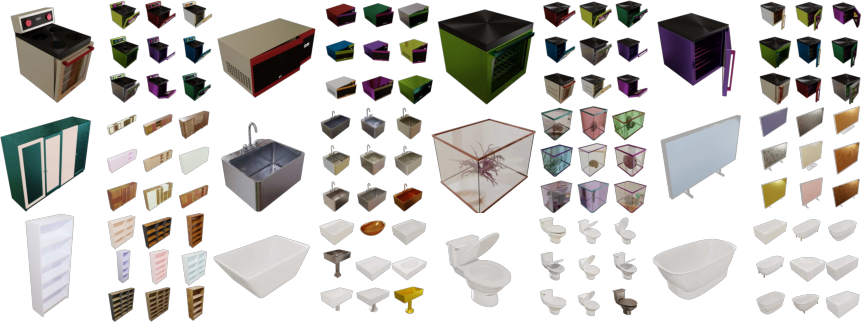

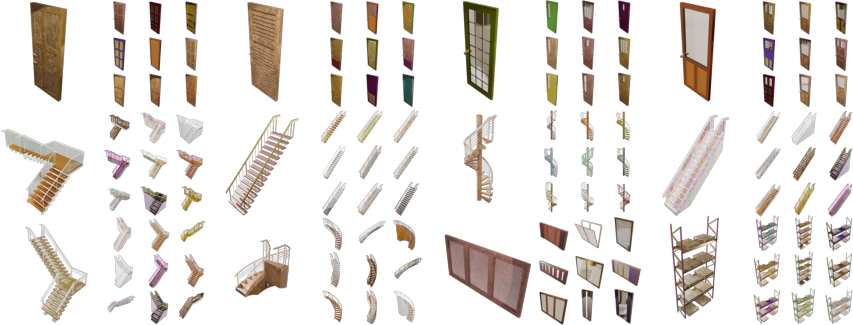

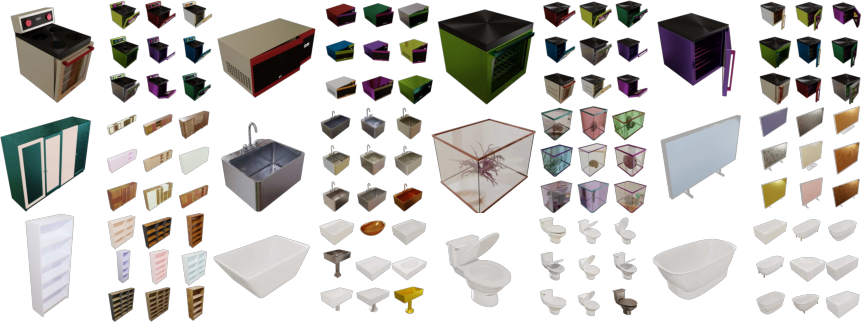

Infinigen Indoors introduces a comprehensive library of 79 procedural 3D asset generators, spanning major categories such as appliances, architectural fixtures, furniture, decorations, and small objects. Each asset generator exposes dozens to hundreds of parameters for stochastic variation and user customization, supporting mesh manipulations, procedural shaders, cloth and soft-body simulation, and fully procedural material creation.
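As a rough illustration of what "parameters for stochastic variation" means in practice, the sketch below samples one parameter set per seed. The `TableParams` fields, the value ranges, and `sample_table_params` are hypothetical names chosen for this example; they are not taken from the Infinigen codebase.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TableParams:
    """Hypothetical parameter set for a procedural table (illustrative only)."""
    width: float      # metres
    depth: float      # metres
    height: float     # metres
    leg_count: int
    leg_style: str


def sample_table_params(seed: int) -> TableParams:
    """Draw one parameter set; the same seed always yields the same table."""
    rng = random.Random(seed)  # local RNG keeps sampling reproducible per asset
    return TableParams(
        width=rng.uniform(0.8, 2.0),
        depth=rng.uniform(0.6, 1.0),
        height=rng.uniform(0.70, 0.80),
        leg_count=rng.choice([3, 4, 6]),
        leg_style=rng.choice(["straight", "tapered", "trestle"]),
    )


# Five different tables from five seeds; a real generator would turn each
# parameter set into geometry and materials.
tables = [sample_table_params(seed) for seed in range(5)]
```

Seeding each asset separately is one simple way to get both random variation and exact reproducibility; a user override would replace individual fields after sampling.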

For materials, 30 distinct generators with over 120 parameters collectively cover most of the visual diversity seen in real-world interiors. These material generators are reported to cover 78% of the OpenSurfaces material taxonomy, a significant improvement over earlier procedural systems.

Figure 1: Procedurally generated doors, staircases, and windows, demonstrating intra-class diversity in geometry and structure.

Figure 2: Procedural sample diversity for appliances, living room seating, and bathroom fixtures, all generated parametrically.

Figure 3: Generative tableware and utensils, supporting realistic training data for tasks such as manipulation and object detection.

All assets are built without reliance on any static or third-party library; every facet—geometry, texture, physical properties—is computed algorithmically. This enables both infinite diversity and strict control over the asset production process, supporting domain randomization and bias mitigation strategies in data-centric AI workflows.

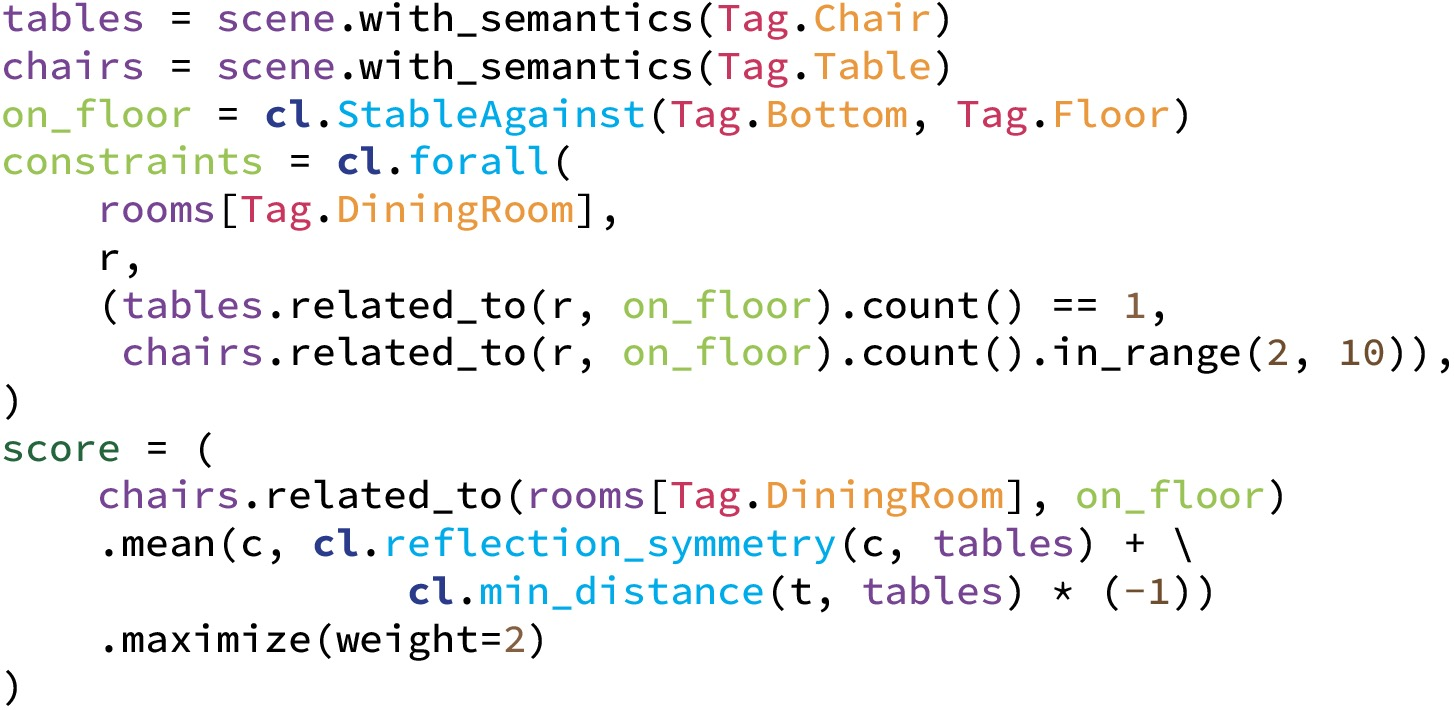

Expressive Constraint-Based Arrangement API

A central innovation is the Constraint Specification API—a high-level, symbolic interface allowing precise declaration of hard and soft constraints on scene composition, grounded in spatial, semantic, ergonomic, and accessibility logic. This system uses composable geometric and relational operators (distance, symmetry, stability, count, area, accessibility, alignment) over object classes and spatial relationships to compute constraint satisfaction.

For example, constraints can encode:

A major contribution is the capacity for these constraints to be instantiated over arbitrary semantic classes (e.g., "all furniture", "all doors in the kitchen") rather than hand-crafting per-instance rules, and for users to customize objectives and constraints in practical, tractable Python code.
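To make the idea of class-level constraints concrete, here is a minimal sketch of hard and soft constraints written over semantic tags rather than individual objects. Every name in it (`Obj`, `select`, `furniture_clear_of_doors`, `chairs_near_tables`, the clearance and reach values) is an assumption made for illustration and does not reproduce the actual Infinigen Indoors API.

```python
import math
from dataclasses import dataclass


@dataclass
class Obj:
    name: str
    tags: frozenset   # semantic classes this object belongs to
    x: float
    y: float


def select(scene, tag):
    """All objects carrying a semantic tag, e.g. every 'chair' in the scene."""
    return [o for o in scene if tag in o.tags]


def distance(a, b):
    return math.hypot(a.x - b.x, a.y - b.y)


def furniture_clear_of_doors(scene, clearance=0.8):
    """Hard constraint: every piece of furniture keeps `clearance` from every door."""
    return all(
        distance(f, d) >= clearance
        for f in select(scene, "furniture")
        for d in select(scene, "door")
    )


def chairs_near_tables(scene, reach=1.0):
    """Soft constraint: cost grows with each chair's excess distance to its nearest table."""
    tables = select(scene, "table")
    cost = 0.0
    for chair in select(scene, "chair"):
        nearest = min((distance(chair, t) for t in tables), default=math.inf)
        cost += max(0.0, nearest - reach)
    return cost


scene = [
    Obj("door_0", frozenset({"door"}), 0.0, 0.0),
    Obj("table_0", frozenset({"furniture", "table"}), 3.0, 0.0),
    Obj("chair_0", frozenset({"furniture", "chair"}), 3.0, 1.5),
]
valid = furniture_clear_of_doors(scene)   # hard constraint holds
penalty = chairs_near_tables(scene)       # chair is 0.5 m beyond reach
```

Because the rules are phrased over tags, adding a second chair or door to `scene` requires no change to the constraint code.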

Scalable Arrangement Solver

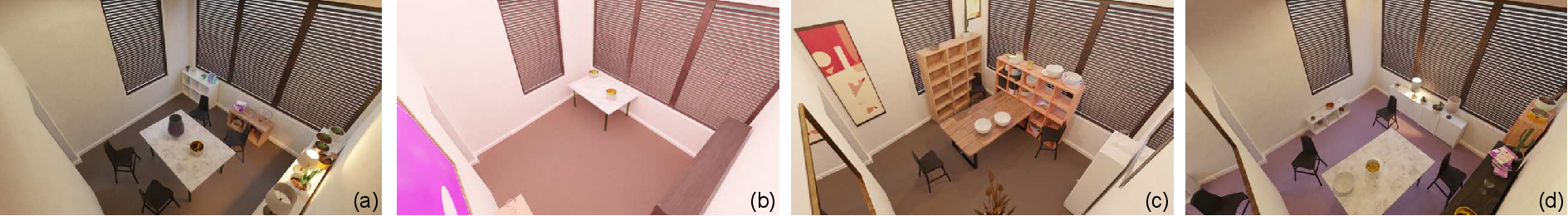

Optimizing scene compositions subject to large numbers of composable constraints is computationally challenging due to the enormous discrete-and-continuous state spaces. Infinigen Indoors addresses this with a staged, simulated annealing-based solver, incorporating both discrete (object addition/removal, pose reassignment) and continuous (translation, rotation, parameter resampling) move types. Scene composition is hierarchically optimized, starting with large structure and progressing to finer-scale placement, respecting inter-object dependencies.
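The interplay of discrete and continuous moves under simulated annealing can be sketched on a toy problem: placing objects along a single wall. This is a single-stage, one-dimensional illustration under assumed settings (the cost function, move set, and cooling schedule are all invented here), not the staged solver described in the paper.

```python
import math
import random


def cost(positions, target_count=4, min_gap=1.0):
    """Toy objective: want `target_count` objects, none closer than `min_gap`."""
    count_penalty = abs(len(positions) - target_count)
    ordered = sorted(positions)
    overlap_penalty = sum(
        max(0.0, min_gap - (b - a)) for a, b in zip(ordered, ordered[1:])
    )
    return count_penalty + overlap_penalty


def propose(positions, rng, wall_length=10.0):
    """Return a modified copy using one discrete or continuous move."""
    new = list(positions)
    move = rng.choice(["add", "remove", "translate"])
    if move == "add":                          # discrete move
        new.append(rng.uniform(0.0, wall_length))
    elif move == "remove" and new:             # discrete move
        new.pop(rng.randrange(len(new)))
    elif move == "translate" and new:          # continuous move
        i = rng.randrange(len(new))
        new[i] = min(wall_length, max(0.0, new[i] + rng.gauss(0.0, 0.5)))
    return new


def anneal(steps=5000, t_start=1.0, t_end=0.01, seed=0):
    rng = random.Random(seed)
    state, state_cost = [], cost([])
    best, best_cost = state, state_cost
    for step in range(steps):
        # Geometric cooling from t_start down to t_end.
        temperature = t_start * (t_end / t_start) ** (step / (steps - 1))
        candidate = propose(state, rng)
        candidate_cost = cost(candidate)
        delta = candidate_cost - state_cost
        # Metropolis rule: always accept improvements, sometimes accept regressions.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            state, state_cost = candidate, candidate_cost
            if state_cost < best_cost:
                best, best_cost = state, state_cost
    return best, best_cost


best_layout, best_layout_cost = anneal()
```

A staged variant would run this loop several times, first over large objects and then over smaller ones with the earlier placements held fixed.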

Key optimizations for solver scalability and speed include plane hashing, BVH caching for collision queries, evaluation caching, and move filtering. Ablation results report approximately a 3x solution speedup owing to these engineering advances.
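Of the optimizations listed, evaluation caching is the easiest to illustrate in isolation. The sketch below caches pairwise penalty terms and, after a move, recomputes only the terms that involve the moved object. The class name, the spacing penalty, and the cache layout are assumptions for this example and say nothing about how the paper's solver is actually implemented.

```python
import math


class CachedPairwiseCost:
    """Caches pairwise terms; a move invalidates only terms touching the moved object."""

    def __init__(self, positions, min_gap=1.0):
        self.positions = dict(positions)  # name -> (x, y)
        self.min_gap = min_gap
        self.cache = {}                   # frozenset({a, b}) -> penalty
        self.evaluations = 0              # number of terms actually computed

    def _term(self, a, b):
        key = frozenset((a, b))
        if key not in self.cache:
            self.evaluations += 1
            d = math.dist(self.positions[a], self.positions[b])
            self.cache[key] = max(0.0, self.min_gap - d)
        return self.cache[key]

    def total(self):
        names = sorted(self.positions)
        return sum(
            self._term(a, b) for i, a in enumerate(names) for b in names[i + 1:]
        )

    def move(self, name, new_pos):
        self.positions[name] = new_pos
        # Drop only the cached terms that involve the moved object.
        self.cache = {k: v for k, v in self.cache.items() if name not in k}


layout = CachedPairwiseCost({"a": (0, 0), "b": (0.5, 0), "c": (5, 5), "d": (9, 9)})
before = layout.total()        # computes all 6 pairs
layout.move("a", (3, 0))
after = layout.total()         # recomputes only the 3 pairs involving "a"
```

With n objects, a single move costs n - 1 term evaluations instead of n(n - 1)/2, which is the kind of saving that matters when the solver proposes many thousands of moves.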

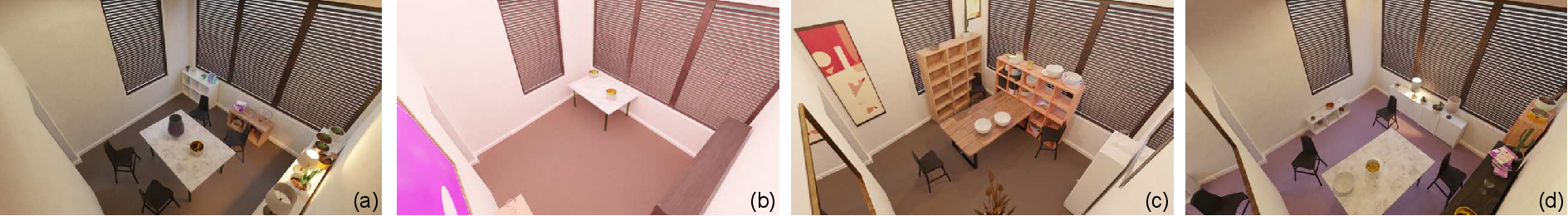

Figure 5: Qualitative ablation—impact of solver iterations and constraint enforcement (collision checking, symmetry) on scene realism and plausibility.

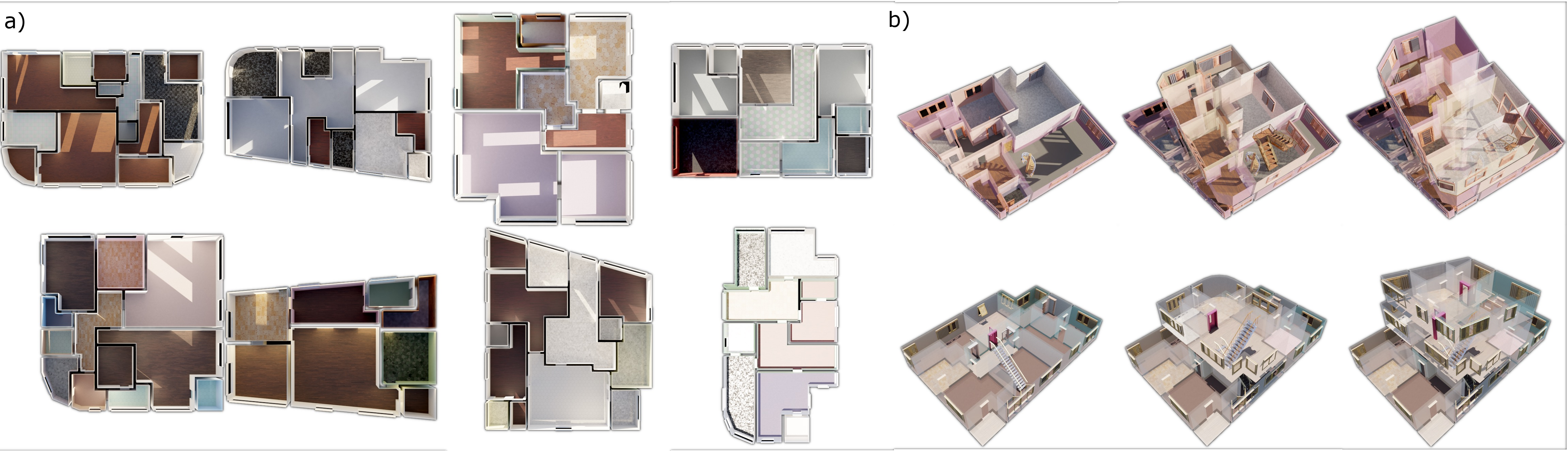

Procedural Floorplan Synthesis

Beyond object placement, Infinigen Indoors procedurally generates multi-room, multi-level architectural layouts. Floorplans are sampled via a probabilistic context-free grammar over room types and connectivity, then optimized using similar constraint-driven techniques to maximize metrics such as accessibility, adjacency, aspect ratio, convexity, and room functionality.
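Sampling from a probabilistic context-free grammar can be sketched as follows. The grammar, its probabilities, and the room names are invented for illustration; the sketch produces only a list of room types and leaves out the connectivity graph and the subsequent constraint-driven optimization.

```python
import random

# Hypothetical grammar: each nonterminal maps to weighted productions.
GRAMMAR = {
    "House": [(1.0, ["Entrance", "LivingArea", "PrivateArea"])],
    "Entrance": [(1.0, ["hallway"])],
    "LivingArea": [
        (0.6, ["living_room", "kitchen"]),
        (0.4, ["living_room", "kitchen", "dining_room"]),
    ],
    "PrivateArea": [
        (0.5, ["Bedroom"]),
        (0.5, ["Bedroom", "PrivateArea"]),   # recursion allows several bedrooms
    ],
    "Bedroom": [
        (0.7, ["bedroom"]),
        (0.3, ["bedroom", "bathroom"]),
    ],
}


def expand(symbol, rng, depth=0, max_depth=6):
    """Recursively expand a symbol into a flat list of terminal room types."""
    if symbol not in GRAMMAR:
        return [symbol]  # terminal: a concrete room type
    productions = GRAMMAR[symbol]
    if depth >= max_depth:
        # Guard against unbounded recursion: take the shortest production.
        chosen = min((rhs for _, rhs in productions), key=len)
    else:
        weights = [w for w, _ in productions]
        chosen = rng.choices([rhs for _, rhs in productions], weights=weights)[0]
    rooms = []
    for child in chosen:
        rooms.extend(expand(child, rng, depth + 1, max_depth))
    return rooms


rooms = expand("House", random.Random(0))
```

Each call with a different seed yields a different room program, which a later optimization stage would turn into actual room shapes and adjacencies.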

Figure 6: Diverse procedurally generated floorplans illustrating architectural and topological variability, including multi-story layouts with accurate staircase/topological relationships.

Downstream Applications and Empirical Evaluation

The practical utility of Infinigen Indoors is validated through two downstream experiments and a perceptual study:

1. Shadow Removal:

By rendering paired images with and without object shadows, the system can supply rare, high-quality training data for low-level vision tasks such as shadow removal. Quantitative results reveal that including Infinigen Indoors synthetic data increases PSNR/mAP for zero-shot generalization to novel datasets compared to real-data-only training.

2. Occlusion Boundary Detection:

A U-Net trained solely on Infinigen Indoors images outperforms models trained on leading real and synthetic datasets for occlusion boundary estimation in stylized architectural CGI scenes, achieving higher ODS, OIS, and mAP, underlining the generalizability of the generated data for dense prediction tasks.

3. Perceptual Study:

Crowdsourced human studies rate Infinigen Indoors scenes as more realistic and less error-prone than prior methods, with statistically significant improvements in both overall and layout-specific realism.
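The metrics named in these evaluations can be made concrete with a short sketch. PSNR follows its standard definition; ODS and OIS follow the usual boundary-detection convention of pooling match counts at one dataset-wide threshold versus at each image's own best threshold. The toy numbers and the assumption that per-threshold match counts are already available are mine; real benchmarks first match predicted and ground-truth boundary pixels within a distance tolerance.

```python
import math


def psnr(prediction, target, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(prediction) != len(target):
        raise ValueError("images must have the same number of pixels")
    mse = sum((p - t) ** 2 for p, t in zip(prediction, target)) / len(target)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def f_measure(tp, n_pred, n_gt):
    """Harmonic mean of precision and recall from raw counts."""
    precision = tp / n_pred if n_pred else 0.0
    recall = tp / n_gt if n_gt else 0.0
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def ods_ois(counts):
    """counts[image][threshold] = (true_positives, predicted_positives, gt_positives)."""
    n_thresholds = len(counts[0])
    # ODS: one threshold shared by the whole dataset.
    ods = max(
        f_measure(*[sum(img[t][k] for img in counts) for k in range(3)])
        for t in range(n_thresholds)
    )
    # OIS: each image picks its own best threshold, then counts are pooled.
    best = [max(img, key=lambda c: f_measure(*c)) for img in counts]
    ois = f_measure(*[sum(c[k] for c in best) for k in range(3)])
    return ods, ois


# Toy data: a shadowed image against its shadow-free counterpart.
shadow_db = psnr([0.2, 0.2, 0.8, 0.8], [0.8, 0.8, 0.8, 0.8])

# Toy data: two images, two thresholds each.
toy_counts = [
    [(8, 10, 10), (5, 5, 10)],
    [(4, 10, 10), (6, 8, 10)],
]
ods, ois = ods_ois(toy_counts)
```

OIS is never lower than ODS on the same data, since letting each image choose its own threshold can only help.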

Figure 7: Exported procedural scenes in Unreal Engine 5 and Omniverse Isaac Sim, confirming compatibility with standard embodied AI simulation pipelines.

Export and Integration for Embodied AI

Recognizing the need for simulator integration, Infinigen Indoors supports one-click export to leading real-time simulation environments via USD and standard mesh formats, with automated UV-mapping, texture baking, and articulation metadata production. This seamless workflow enables rapid deployment of procedurally generated environments in robotics, embodied agents, and mixed-reality research.

Implications and Future Directions

The introduction of a fully procedural, constraint-driven, photorealistic indoor scene generator addresses persistent bottlenecks in embodied AI and 3D vision: the lack of diverse, customizable, and annotation-rich synthetic data. Immediate implications include:

- Accelerated Research: Facilitating rapid data generation for pretraining and evaluation.

- Robotics: Enabling diverse, randomized training for sim2real transfer and robust task planning.

- Data-Centric AI: Allowing researchers to tailor data generation to task-specific biases and requirements.

Theoretically, the separation of constraint specification from solver implementation, along with symbolic, class-based constraint logic, paves the way for future exploration of learned constraint priors, SAT-solver integration, and hybrid symbolic–data-driven arrangement systems. Procedurally generated, photorealistic data at this scale opens opportunities in generative simulation-based training for foundation models, few-shot generalization research, and embodied, autonomous systems.

Given the open-sourcing of both generators and constraint language, the system is positioned for broad community adoption and extensibility. Future work could explore integration with LLM-based scene description, interactive constraint learning, and procedural generation coupled with real-time physics and interactive behaviors.

Conclusion

Infinigen Indoors demonstrates that fully procedural, photorealistic, and semantically controllable 3D indoor scene generation is feasible at large scale, and that such systems can supply superior training data for multiple vision and robotics tasks. The combination of expansive asset variability, symbolic constraint specification, scalable arrangement solving, and practical export pipelines positions Infinigen Indoors as a foundation for the next generation of embodied AI and simulation-based research.
