- The paper introduces correction formulas to compute accurate word probabilities from subwords, addressing miscalculations in language models.

- It distinguishes between end-of-word and beginning-of-word tokenizers by providing specific methods to handle each scenario.

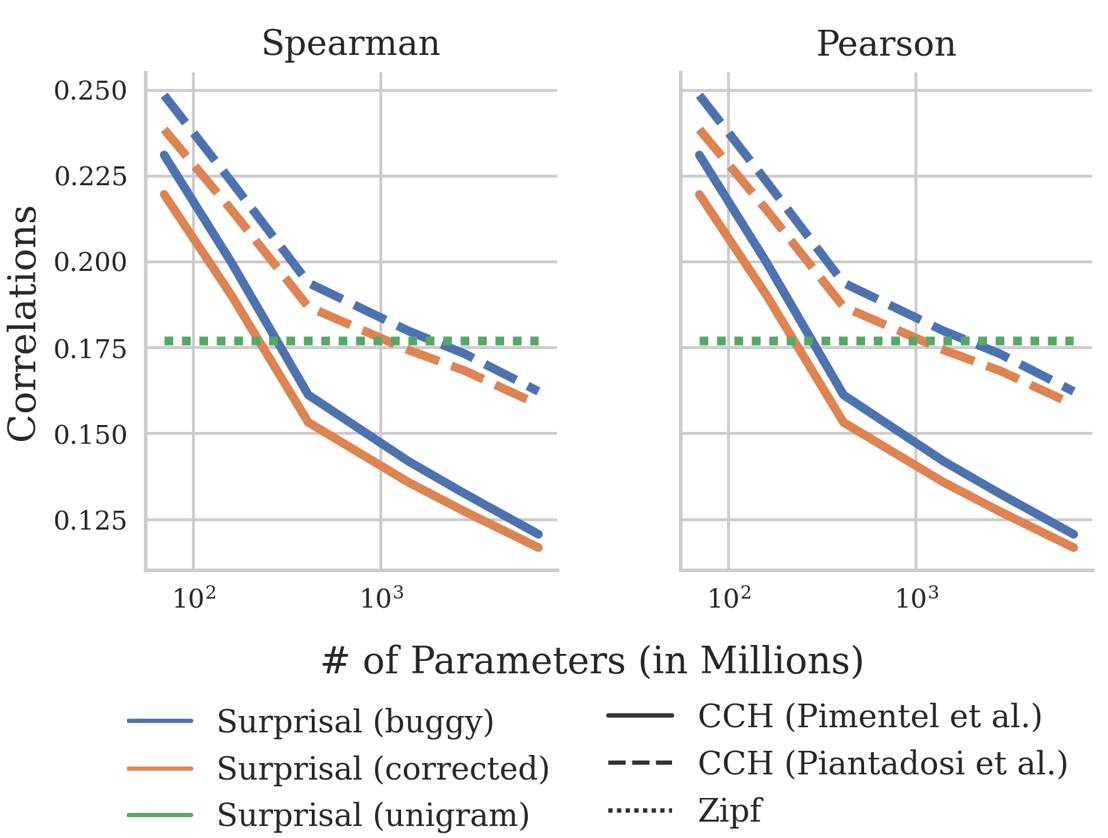

- Empirical results demonstrate improved surprisal prediction and offer new insights into lexical efficiency and sentence comprehension.

How to Compute the Probability of a Word

Introduction

This paper addresses the problem of accurately computing the probability of words when using LMs that operate over subwords. It particularly highlights issues in probability estimation methods in recent linguistic studies, due to complexities introduced by different tokenization schemes (notably beginning-of-word marking tokenizers like those used in GPT models). The research derives mathematical formulations to correct these widespread miscalculations, offering empirical evidence on the effects of these corrections on established studies in sentence comprehension and lexical optimization.

Tokenizer Strategies

The paper distinguishes between two types of tokenizers: end-of-word (eow) and beginning-of-word (bow) marking tokenizers.

- Eow-Marking Tokenizers: Here, subwords indicating the ends of words enable the mapping of subword sequences to words efficiently. The paper confirms that for such tokenizers, it's straightforward to compute the conditional probability of a word in context through a chain rule application.

- Bow-Marking Tokenizers: The complexity arises here because subwords indicating the beginning of words require attention to subsequent subwords to ensure the end of a word, introducing potential bugs. The research provides correction formulas to ensure accurate probability computations.

Subword to Word Probability Conversion

Central to the methodology, the research describes how to convert subword probabilities to word probabilities. It introduces the necessity of marginalizing over potential subword sequences that correspond to the same word due to varying tokenization approaches.

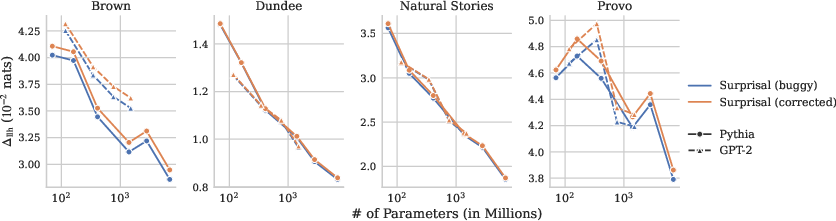

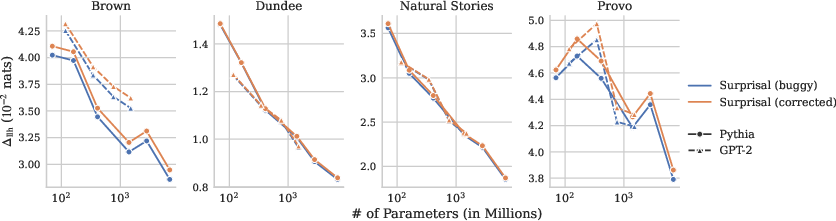

Figure 1: Comparison between regressors with and without surprisal as a predictor using both buggy and correct methods for surprisal estimation across LM sizes.

Implications in Psycholinguistics and Lexical Efficiency

The empirical studies reveal the significant effect of these corrections on previous psycholinguistics research:

Conclusion

The paper contributes significantly to computational linguistics by rectifying methodological oversights in LM analysis. Its implications reverberate in how models are evaluated for language understanding and word usage efficiency, underscoring the importance of methodological precision in NLP research. Going forward, applying these corrections is vital for enhancing empirical analysis reliability in related fields.