- The paper introduces a novel approach using Neural ODEs and robust control to improve the interpretability of LLM state transitions.

- The paper develops a unified optimization framework that balances prediction error against control effort to keep the latent dynamics stable.

- The paper's experiments show that controlled models yield more stable loss curves and predictions that align more closely with the true outputs.

Unveiling LLM Mechanisms Through Neural ODEs and Control Theory

Introduction

This paper introduces a novel methodological approach that combines Neural Ordinary Differential Equations (Neural ODEs) with robust control theory to make LLMs more interpretable. The primary aims are to clarify the complex pathways between an LLM's inputs and outputs and to steer those outputs toward specified performance goals. The paper proposes projecting LLM data into a lower-dimensional latent space whose evolution is modeled by a Neural ODE, yielding a dynamic model that captures how the data changes continuously. Robust control is then used to fine-tune the model's outputs for consistent quality and reliability.

Theoretical Framework

Ordinary Differential Equations (ODEs) in LLMs

In this framework, ODEs describe the progression from inputs to outputs within an LLM as a continuous-time trajectory, which makes it possible to examine closely how data is transformed as it passes through the model. The paper presents this framework as a principled route to making state transitions more interpretable. By tracking both how inputs evolve and how coherent the resulting outputs are, the ODE view offers insight into complex model mechanisms, which is particularly useful for understanding language dynamics and the dependencies built up across LLM layers.
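A Neural ODE treats the latent state z(t) as the solution of dz/dt = f_θ(z, t) and obtains the output state by numerically integrating f_θ from t0 to t1. The minimal sketch below, in plain Python, uses a fixed-step fourth-order Runge-Kutta solver and a hypothetical two-dimensional vector field (`vector_field`, with made-up weights `W` and `BIAS`) in place of a trained network. Keeping every intermediate state is what makes the state transitions inspectable. Practical Neural ODE code usually relies on an adaptive solver (for example `odeint` in `torchdiffeq`) and learns θ by backpropagation or the adjoint method.

```python
import math

# Hypothetical toy parameters standing in for a trained network f_theta.
W = [[-0.5, 0.2],
     [-0.3, -0.4]]
BIAS = [0.1, -0.05]


def vector_field(z, t):
    """Return dz/dt = tanh(W z + BIAS) at latent state z (time-invariant here)."""
    return [math.tanh(sum(W[i][j] * z[j] for j in range(len(z))) + BIAS[i])
            for i in range(len(z))]


def rk4_step(f, z, t, dt):
    """Advance z by one classical fourth-order Runge-Kutta step of size dt."""
    k1 = f(z, t)
    k2 = f([zi + 0.5 * dt * k for zi, k in zip(z, k1)], t + 0.5 * dt)
    k3 = f([zi + 0.5 * dt * k for zi, k in zip(z, k2)], t + 0.5 * dt)
    k4 = f([zi + dt * k for zi, k in zip(z, k3)], t + dt)
    return [zi + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for zi, a, b, c, d in zip(z, k1, k2, k3, k4)]


def integrate(f, z0, t0, t1, steps):
    """Integrate dz/dt = f(z, t) from t0 to t1 and return every intermediate state."""
    dt = (t1 - t0) / steps
    z, t = list(z0), t0
    trajectory = [list(z)]
    for _ in range(steps):
        z = rk4_step(f, z, t, dt)
        t += dt
        trajectory.append(z)
    return trajectory


# Evolve an assumed encoded input state from t=0 to t=1.
trajectory = integrate(vector_field, z0=[1.0, -1.0], t0=0.0, t1=1.0, steps=20)
print(len(trajectory))  # 21: the initial state plus one state per step
print(trajectory[-1])   # final latent state, which a decoder would map to an output
```

A fixed step keeps the sketch short and deterministic; an adaptive solver would instead choose step sizes from an error tolerance.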

Robust Control for Stability and Reliability

Robust control theory works alongside Neural ODEs to maintain LLM performance in the face of uncertainty and input variability. It provides a systematic way to stabilize model outputs while adapting to fluctuations in the data or the model. By designing a control function that dynamically adjusts the model parameters, the paper seeks to ensure that outputs meet reliability standards, which is crucial for high-stakes applications such as autonomous systems and medical diagnostics.
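To illustrate how a control input can keep latent dynamics stable under uncertainty, the sketch below adds a control term to an unstable toy latent system that is also subject to a bounded disturbance. It assumes the control enters additively, dz/dt = f(z) + d(t) + u, in line with the latent-state adjustments described in the Methodology, and it uses a simple proportional state-feedback law u = -K(z - z_ref) rather than a full robust-control synthesis such as H∞ design. The functions `drift` and `disturbance`, the gain, and the reference state are all hypothetical.

```python
import math


def drift(z):
    """Hypothetical uncontrolled latent dynamics: a slowly unstable linear drift."""
    return [0.3 * z[0] + 0.1 * z[1], -0.1 * z[0] + 0.2 * z[1]]


def disturbance(t):
    """Hypothetical bounded disturbance standing in for input or model uncertainty."""
    return [0.2 * math.sin(5.0 * t), 0.2 * math.cos(3.0 * t)]


def feedback_control(z, z_ref, gain):
    """Proportional state feedback u = -K (z - z_ref) with a scalar gain K."""
    return [-gain * (zi - ri) for zi, ri in zip(z, z_ref)]


def simulate(z0, z_ref, gain, t1=5.0, steps=500):
    """Forward-Euler simulation of dz/dt = f(z) + d(t) + u(z).

    Returns the final state and the accumulated control effort, i.e. the
    integral of ||u||^2 dt approximated by a Riemann sum.
    """
    dt = t1 / steps
    z, t, effort = list(z0), 0.0, 0.0
    for _ in range(steps):
        u = feedback_control(z, z_ref, gain)
        f, d = drift(z), disturbance(t)
        z = [zi + dt * (fi + di + ui) for zi, fi, di, ui in zip(z, f, d, u)]
        effort += dt * sum(ui * ui for ui in u)
        t += dt
    return z, effort


def distance(a, b):
    """Euclidean distance between two latent states."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


z_ref = [0.0, 0.0]  # assumed target latent state
z_open, _ = simulate([1.0, 1.0], z_ref, gain=0.0)      # no control
z_ctrl, effort = simulate([1.0, 1.0], z_ref, gain=2.0)  # with feedback control
print(distance(z_open, z_ref), distance(z_ctrl, z_ref), effort)
```

With `gain=0.0` the state drifts away from the reference because the toy drift matrix has eigenvalues with positive real part (0.25). With `gain=2.0` the closed loop is stable and the state stays near the reference despite the disturbance, at the cost of the control effort that `simulate` also returns.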

Unified Optimization Framework

The paper introduces a unified optimization framework that balances model fidelity against control effort. It minimizes a loss function that combines prediction error, regularization terms, and a control-effort penalty, so that model outputs stay aligned with their expected targets without relying on excessive control. The paper argues that this formulation lends itself to continuous learning and adaptation, providing a robust foundation for deploying AI at scale.
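One way to write such an objective is L = (1/N) Σ (ŷ_i - y_i)² + λ‖θ‖² + ρ ∫ ‖u(t)‖² dt, where the three terms are prediction error, parameter regularization, and control effort. The exact loss the paper uses is not reproduced here, so the sketch below is an assumption. Its default weights borrow the values in the Figure 1 caption (`wd=1e-05`, `rho=0.001`) on the further assumption that `wd` is the regularization weight and `rho` weights the control effort. All function names are hypothetical.

```python
def mse(predictions, targets):
    """Mean squared prediction error over paired scalar outputs."""
    if len(predictions) != len(targets) or not predictions:
        raise ValueError("predictions and targets must be non-empty and of equal length")
    return sum((p - y) ** 2 for p, y in zip(predictions, targets)) / len(predictions)


def l2_penalty(params):
    """Sum of squared parameter values (weight-decay style regularization)."""
    return sum(w * w for w in params)


def control_effort(controls, dt):
    """Riemann-sum approximation of the integral of ||u(t)||^2 over time.

    `controls` is a list of control vectors sampled every `dt` time units.
    """
    return dt * sum(sum(ui * ui for ui in u) for u in controls)


def unified_loss(predictions, targets, params, controls, dt,
                 weight_decay=1e-5, rho=1e-3):
    """Prediction error + weight_decay * ||theta||^2 + rho * control effort."""
    return (mse(predictions, targets)
            + weight_decay * l2_penalty(params)
            + rho * control_effort(controls, dt))


total = unified_loss(
    predictions=[1.0, 2.0], targets=[1.5, 2.0],
    params=[1.0, -2.0],
    controls=[[0.5, 0.0], [0.0, 0.5]], dt=0.1,
)
print(total)  # approximately 0.1251 = 0.125 + 5e-5 + 5e-5
```

Raising `rho` makes the optimizer prefer smaller control inputs at the expense of fidelity; lowering it lets the control work harder to track the targets.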

Methodology

The methodology describes two distinct but integrated frameworks built on Neural ODEs: one without control and one with control. The first encodes text data into a latent space and evolves that latent state iteratively with a continuous-time model. The second adds robust control mechanisms that adjust the latent states dynamically to keep model performance consistent and accurate. Each framework is specified as a step-by-step algorithm intended to capture the dynamics of LLM processing and make it more transparent.
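The two frameworks can be sketched as a single encode, evolve, decode pipeline in which control is switched off or on. Everything in this sketch is a hypothetical stand-in: `encode` maps text to two hand-made features instead of LLM embeddings, `dynamics` replaces a trained vector field, `decode` is a fixed linear readout, and the controlled variant assumes a proportional feedback term that steers the latent state toward the encoding of a reference output, as might be available when training on paired data.

```python
import math


def encode(text):
    """Hypothetical toy encoder: map text to a 2-D latent vector of simple features."""
    n = max(len(text), 1)
    vowels = sum(ch in "aeiou" for ch in text.lower())
    words = len(text.split())
    return [vowels / n, words / n]


def dynamics(z):
    """Hypothetical latent vector field (a trained network in a real Neural ODE)."""
    return [math.tanh(0.5 * z[0] - 0.2 * z[1]), math.tanh(0.3 * z[0] + 0.1 * z[1])]


def evolve(z0, z_target=None, gain=0.0, t1=1.0, steps=100):
    """Euler-integrate the latent state; add feedback toward z_target if given."""
    dt = t1 / steps
    z = list(z0)
    for _ in range(steps):
        dz = dynamics(z)
        if z_target is not None:
            dz = [d - gain * (zi - ti) for d, zi, ti in zip(dz, z, z_target)]
        z = [zi + dt * d for zi, d in zip(z, dz)]
    return z


def decode(z):
    """Hypothetical linear readout from a latent state to a scalar output."""
    return 2.0 * z[0] - 1.0 * z[1]


source = "the model answer"       # hypothetical input text
reference = "a corrected answer"  # hypothetical reference output
z_ref = encode(reference)
y_ref = decode(z_ref)

# Framework 1: Neural ODE without control.
y_open = decode(evolve(encode(source)))
# Framework 2: Neural ODE with feedback control toward the reference latent state.
y_ctrl = decode(evolve(encode(source), z_target=z_ref, gain=10.0))

err_open = abs(y_open - y_ref)
err_ctrl = abs(y_ctrl - y_ref)
print(err_open, err_ctrl)
```

In the controlled run the decoded output lands closer to the reference. The small remaining offset comes from the drift term, which a proportional law can shrink (roughly in proportion to 1/gain) but not remove.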

Experiments

Experimental Setup

Using the "aligner/aligner-20K" dataset, the experiments compare Neural ODE architectures with and without control. The dataset's alignment tasks provide a suitable testbed for studying how textual data is transformed, allowing a focused examination of the control strategies.

Results

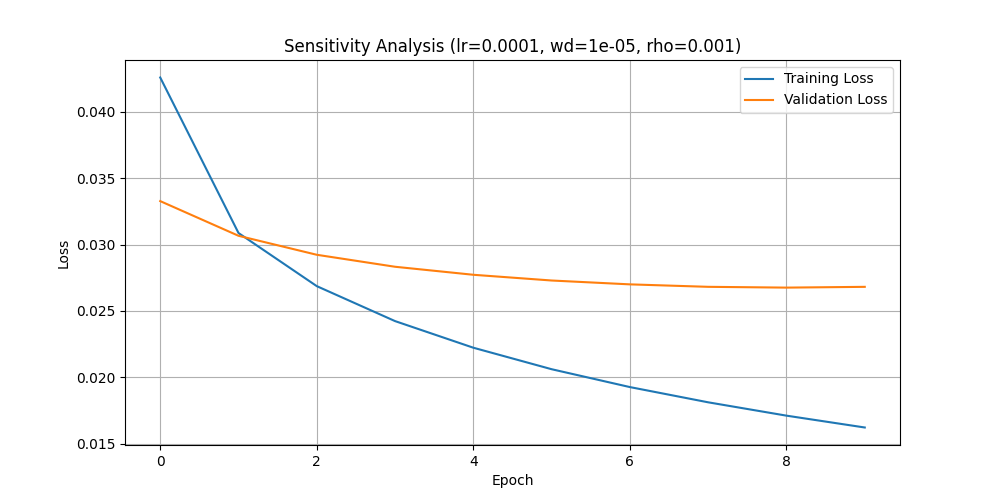

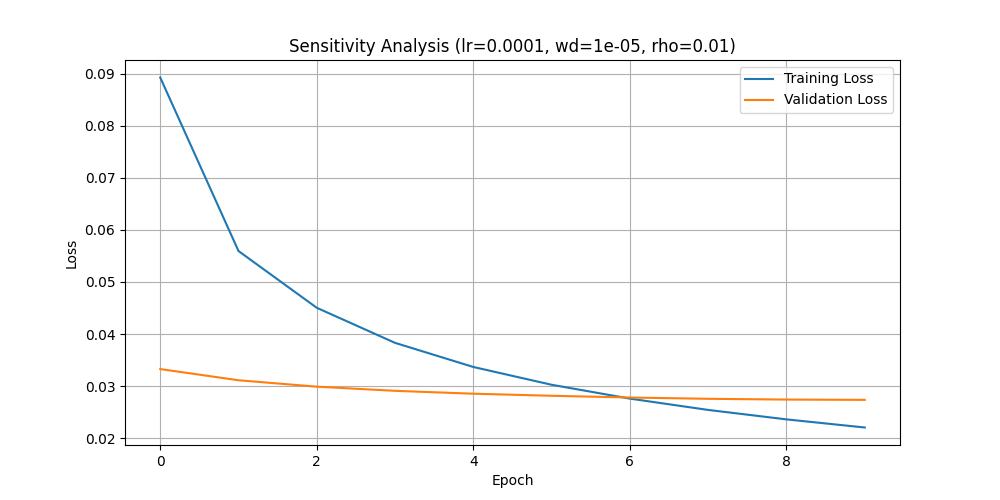

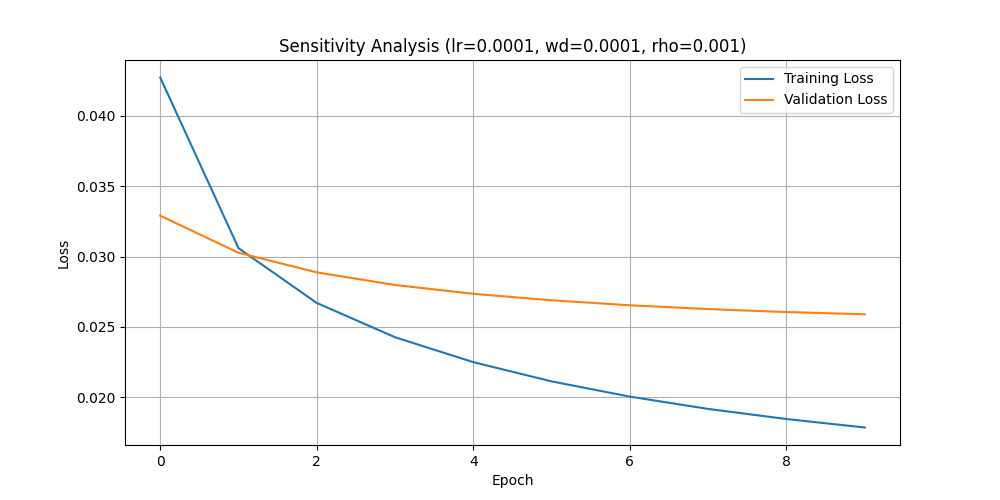

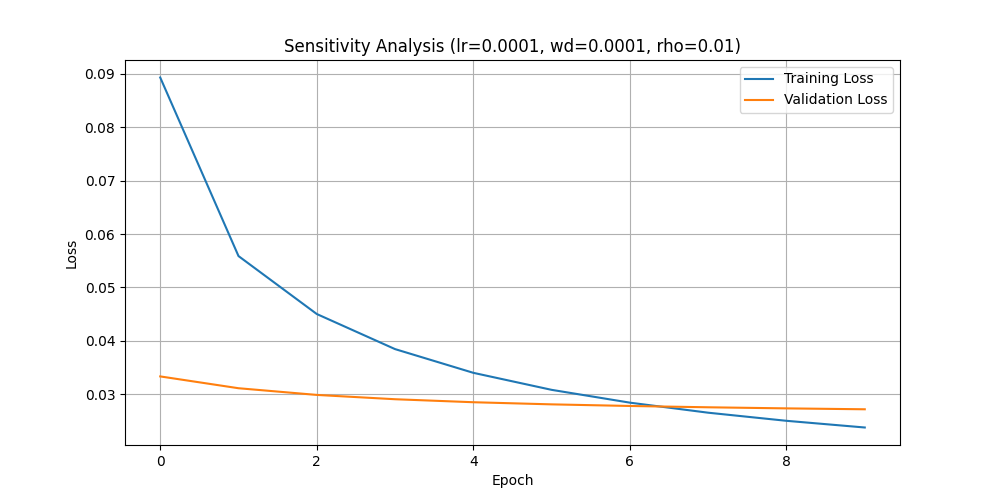

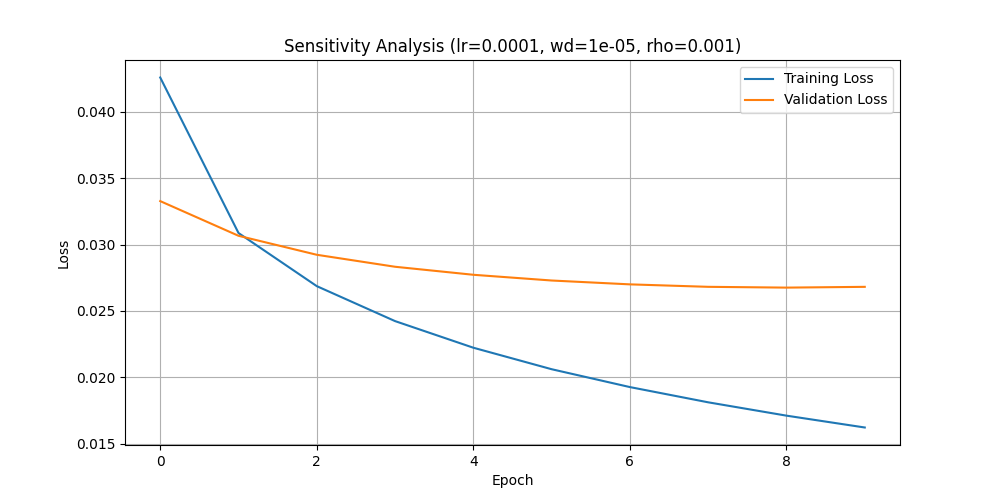

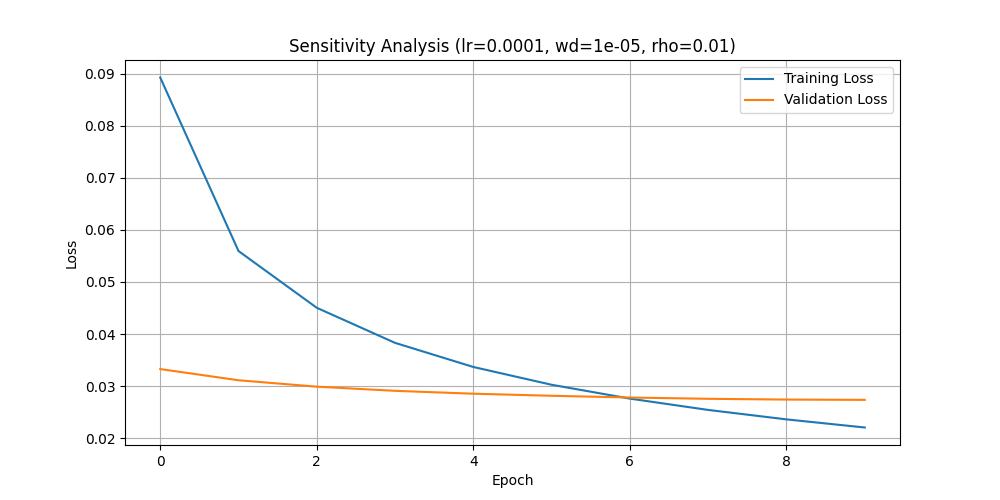

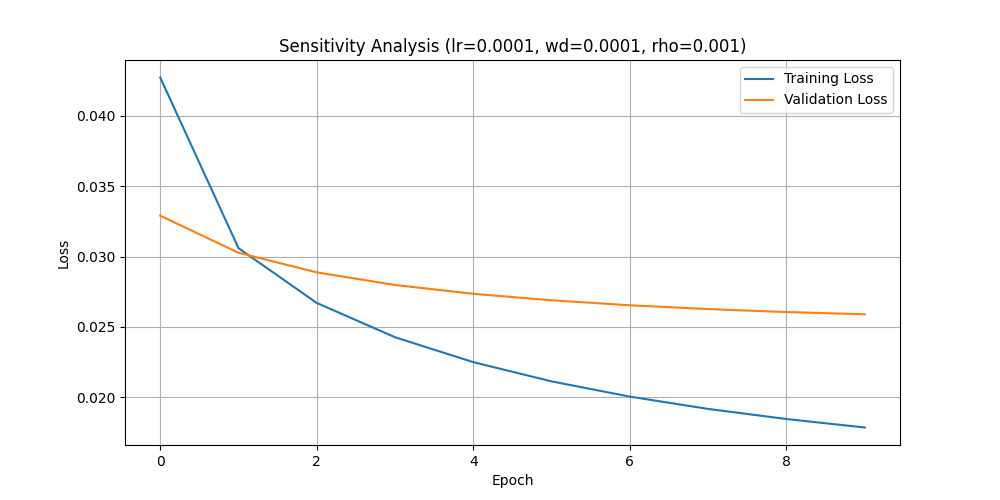

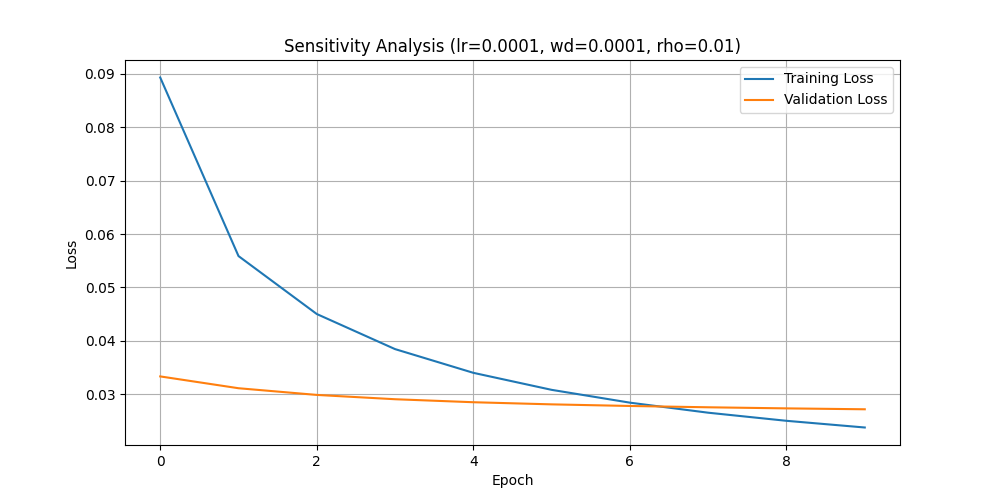

Loss Analysis

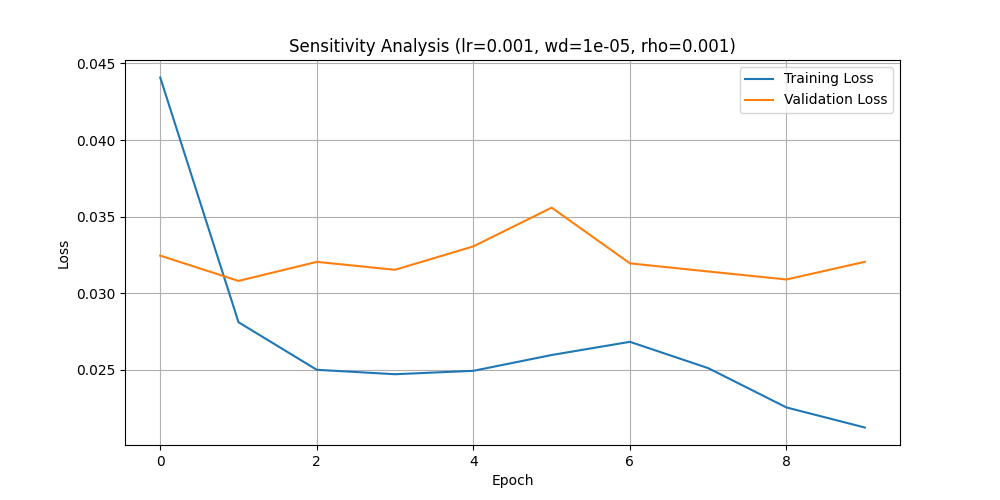

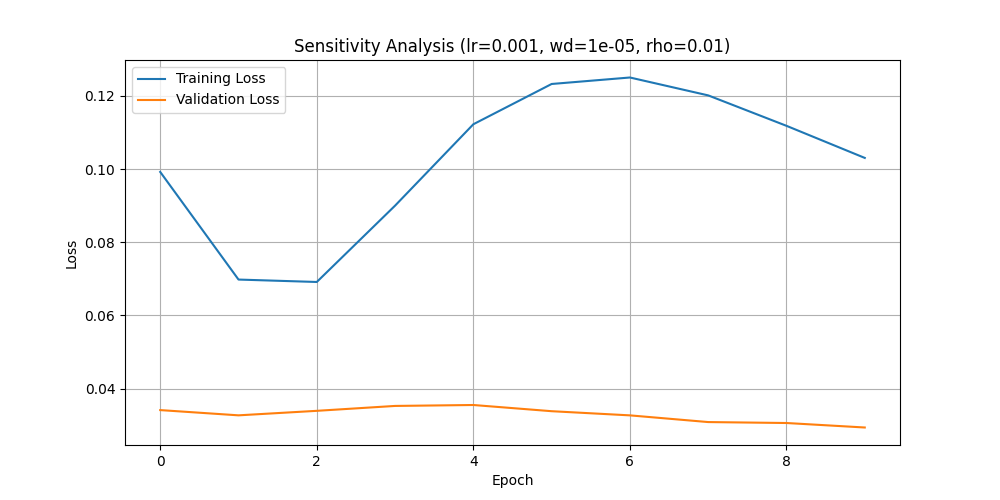

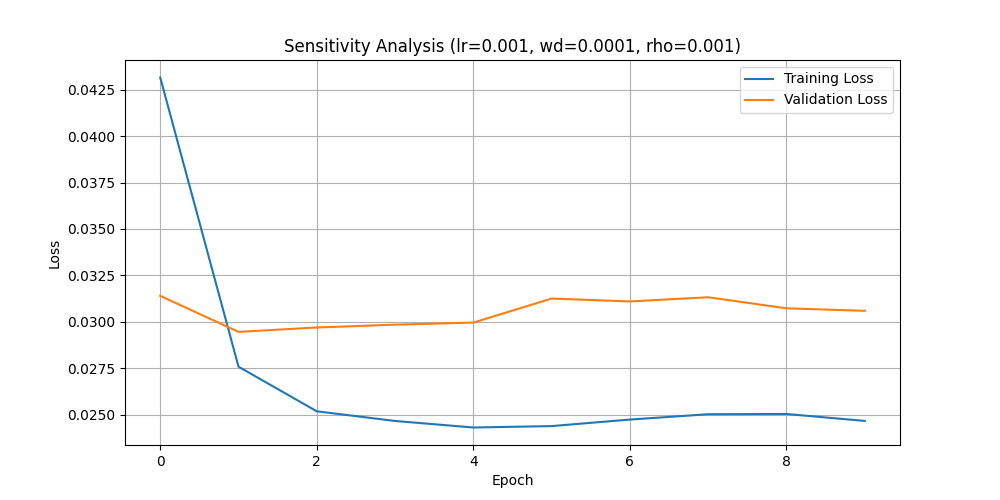

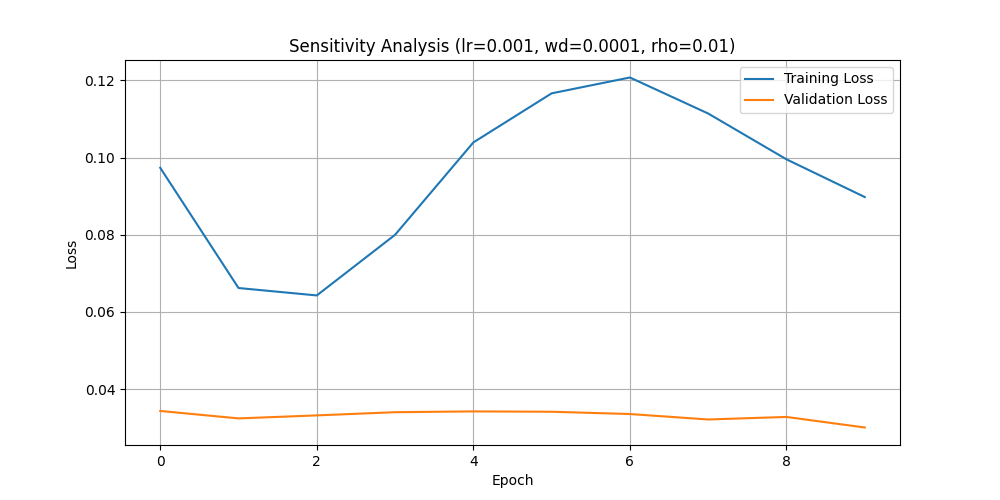

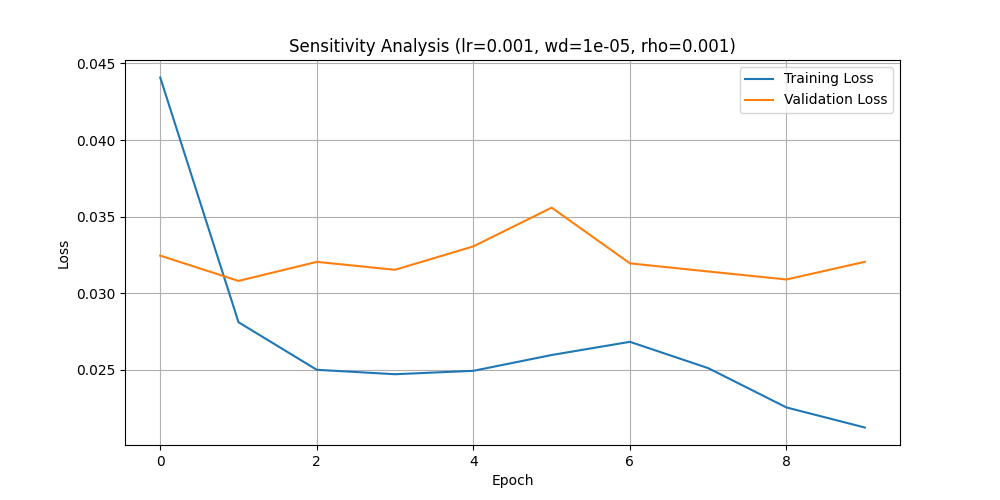

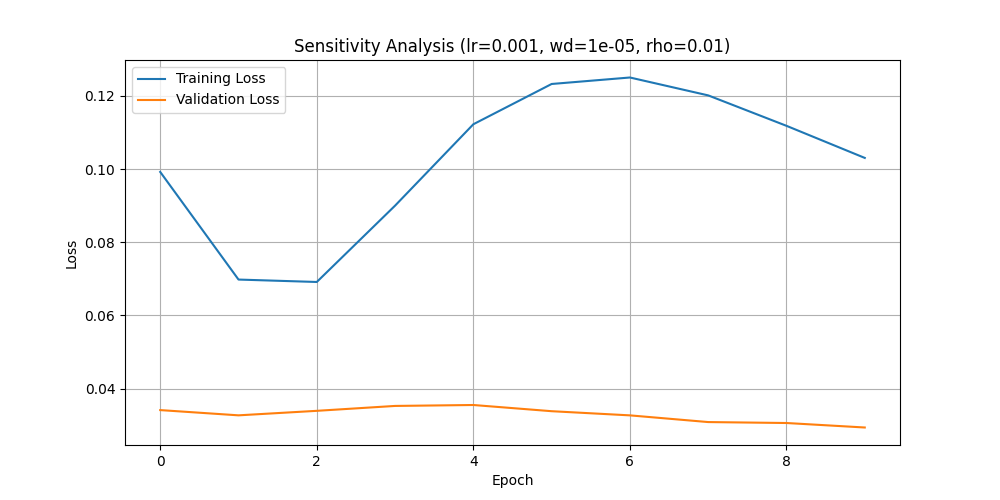

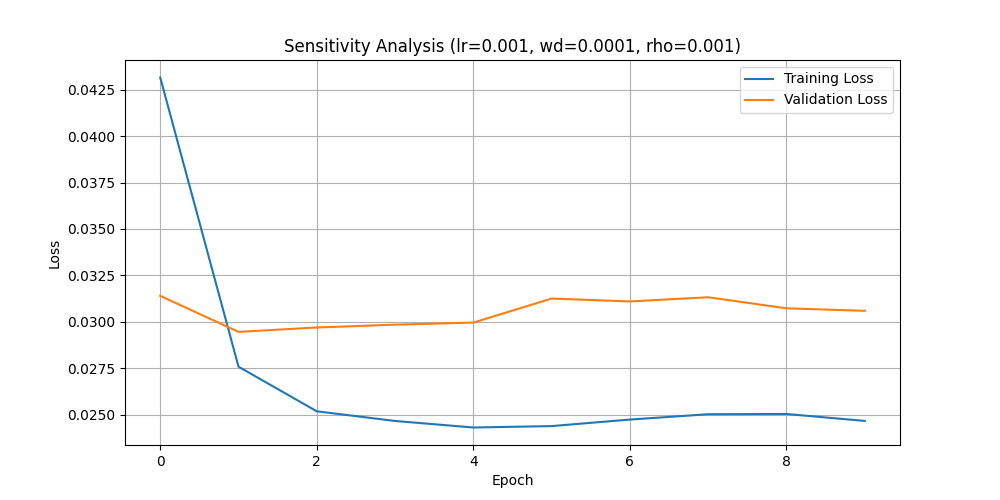

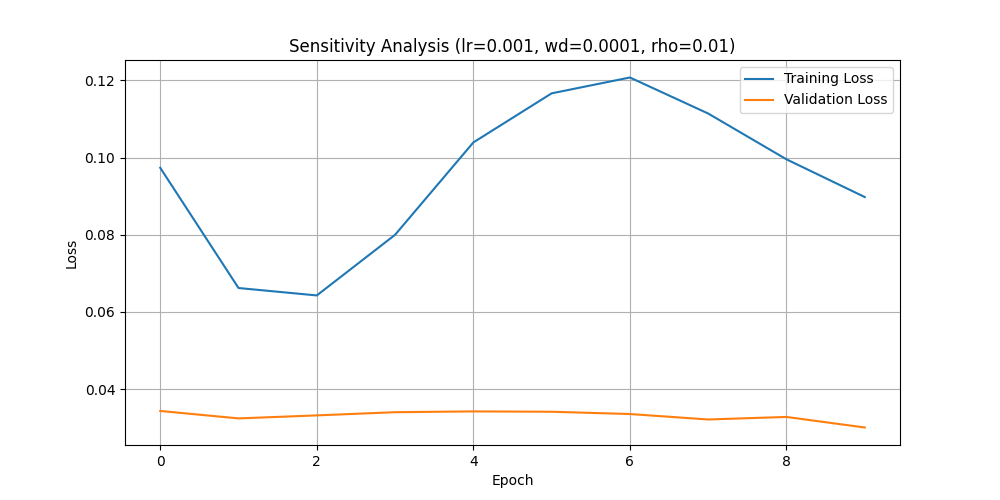

Integrating control mechanisms substantially improved model performance. Whereas models without control showed fluctuating losses and less structured representations, models with control achieved consistent, stable losses on both the training and validation sets (Figure 1).

Prediction Accuracy

Models with control achieved higher prediction accuracy against the true targets, as shown by the tighter alignment between predicted and actual values, which supports the effectiveness of the robust control strategy.

Latent Space Dynamics

Analysis of the latent space showed that controlled models organized the data in a more structured and interpretable way than uncontrolled models, suggesting a clearer picture of how data progresses through the model's latent space.

Figure 1: Sensitivity Analysis (lr=0.001, wd=1e-05, rho=0.001)

Discussion

The findings illustrate how incorporating control mechanisms in Neural ODEs enhances both interpretability and robustness in LLMs. The resulting structured and coherent latent spaces, coupled with reduced training-loss variability, support the practical application of these models in AI systems that require transparency. This research helps bridge theoretical advances in AI and their practical deployment in ethically sensitive fields.

Conclusion

By combining Neural ODEs with robust control, the paper advances the interpretability and reliability of LLMs and lays a foundation for their use in real-world, high-stakes applications. The approach addresses a central concern in AI deployment: balancing model complexity against output reliability. The results offer useful insights for further research on control frameworks and their effects on the dynamics of AI models.