- The paper introduces a novel framework that combines distributionally robust and adversarially robust optimization for logistic regression.

- It intersects a Wasserstein ball centered on the empirical distribution with one centered on an auxiliary distribution, which mitigates overfitting and improves out-of-sample performance.

- Empirical evaluations on UCI and MNIST/EMNIST datasets show lower out-of-sample error under attack than ERM and classical ARO.

Distributionally and Adversarially Robust Logistic Regression via Intersecting Wasserstein Balls

Introduction

The paper proposes an approach to robust logistic regression that addresses both distributional uncertainty and adversarial attacks by using intersecting Wasserstein balls. Empirical risk minimization (ERM) does not withstand adversarial attacks, which motivates methods that are robust to such perturbations. Adversarially robust optimization (ARO) provides this defense by training against worst-case perturbations of each training point, but it is prone to severe overfitting. The authors therefore combine ARO with distributionally robust optimization (DRO) and use auxiliary datasets to refine the decision boundary of logistic regression. The resulting method improves out-of-sample performance by balancing robustness against overfitting.
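To make the ARO loss concrete, here is a minimal sketch assuming perturbations bounded in the ℓ∞ norm by a radius `alpha` (an assumption: the summary does not fix the threat model). Because the logistic loss log(1 + exp(-m)) decreases in the margin m = y * w.x, the worst-case perturbation simply lowers the margin by `alpha * ||w||_1`, the ℓ1 norm being the dual of ℓ∞. The function names are hypothetical; the brute-force check enumerates the corners of the ℓ∞ box, where a linear margin attains its minimum.

```python
import itertools

import numpy as np


def logistic_loss(margin):
    # log(1 + exp(-margin)), computed in a numerically stable way.
    return np.logaddexp(0.0, -margin)


def adversarial_logistic_loss(w, x, y, alpha):
    # Worst case over ||delta||_inf <= alpha: the attacker shrinks the
    # margin y * w.x by alpha * ||w||_1 (the dual norm of l_inf).
    margin = y * np.dot(w, x) - alpha * np.linalg.norm(w, 1)
    return logistic_loss(margin)


def brute_force_adversarial_loss(w, x, y, alpha):
    # Sanity check: a linear margin is minimized at a vertex of the l_inf box,
    # so enumerating all 2^d sign patterns recovers the worst-case loss.
    worst = -np.inf
    for signs in itertools.product([-1.0, 1.0], repeat=len(x)):
        delta = alpha * np.array(signs)
        worst = max(worst, logistic_loss(y * np.dot(w, x + delta)))
    return worst


w = np.array([1.5, -2.0, 0.5])
x = np.array([0.2, -0.1, 0.4])
y = 1.0
for alpha in [0.0, 0.1, 0.3]:
    print(alpha,
          adversarial_logistic_loss(w, x, y, alpha),
          brute_force_adversarial_loss(w, x, y, alpha))
```

For this toy point the clean margin is 0.7 and `||w||_1 = 4`, so an attack with `alpha = 0.3` already makes the margin negative (0.7 - 0.3 * 4 = -0.5).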

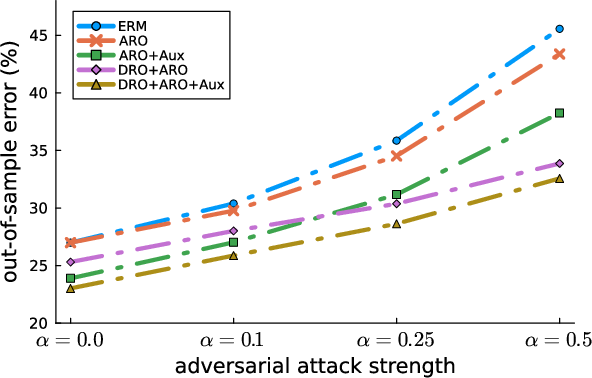

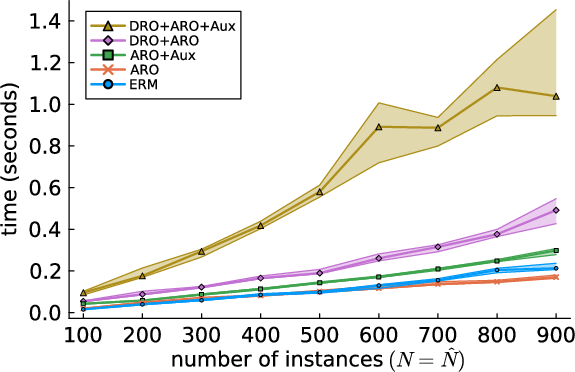

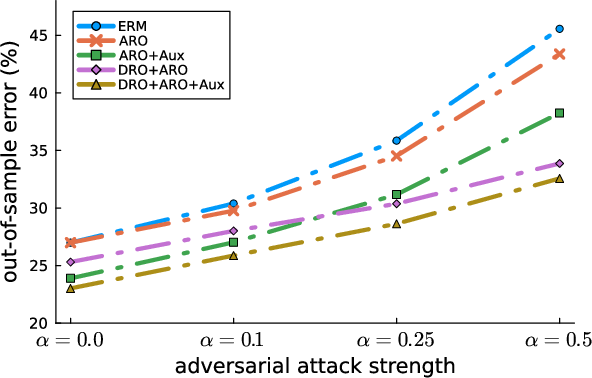

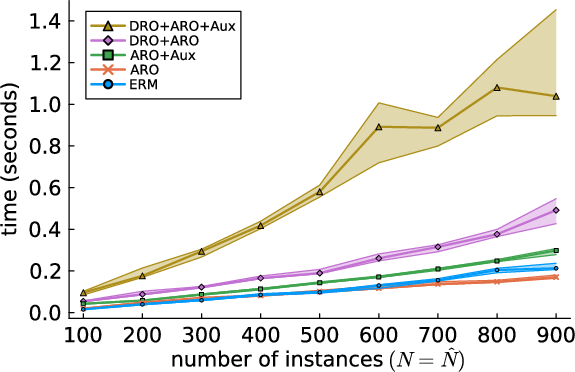

Figure 1: Out-of-sample errors under varying attack strengths (left) and runtimes under varying numbers of empirical and auxiliary instances (right) in experiments on artificial data.

Methodology

Wasserstein DRO Framework:

The proposed approach formulates a distributionally robust counterpart of ARO. Rather than relying only on the usual Wasserstein ball centered on the empirical distribution, it intersects that ball with a second ball centered on an auxiliary distribution. Since the intersection is a subset of each ball, the resulting ambiguity set is less conservative than the empirical ball alone, while still hedging against shifts between the empirical and the true data distribution. The auxiliary data may be synthetic or out-of-domain; in either case, it refines the ambiguity set over which the worst-case adversarial loss is taken. The approach builds on the tractability of the Wasserstein distance, which is widely used for robustness in machine learning.
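One way to write the resulting problem is shown below, in our own notation rather than the paper's: w are the classifier weights (intercept omitted for brevity), P̂_N is the empirical distribution of the N training points, P̂_aux is the auxiliary distribution, W is the Wasserstein distance, ε and ε̂ are the two ball radii, and α is the attack budget.

```latex
\min_{w}\;
\sup_{\mathbb{Q} \in \mathbb{B}_{\varepsilon}(\hat{\mathbb{P}}_N)\,\cap\,\mathbb{B}_{\hat{\varepsilon}}(\hat{\mathbb{P}}_{\mathrm{aux}})}
\mathbb{E}_{(x,y)\sim\mathbb{Q}}\!\left[\,\sup_{\|\delta\| \le \alpha} \log\!\left(1 + e^{-y\,w^{\top}(x+\delta)}\right)\right],
\qquad
\mathbb{B}_{r}(\mathbb{P}) = \{\mathbb{Q} : W(\mathbb{Q},\mathbb{P}) \le r\}.
```

Dropping the auxiliary ball gives a single-ball DRO counterpart of ARO, and additionally setting ε = 0 leaves only the empirical distribution, which recovers ARO itself. By the triangle inequality, the intersection can be nonempty only if ε + ε̂ ≥ W(P̂_N, P̂_aux), so the two radii cannot both be made arbitrarily small.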

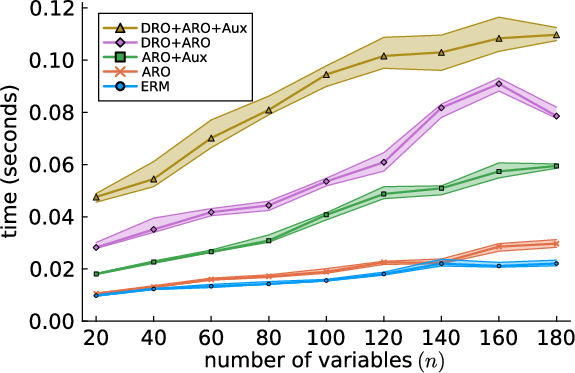

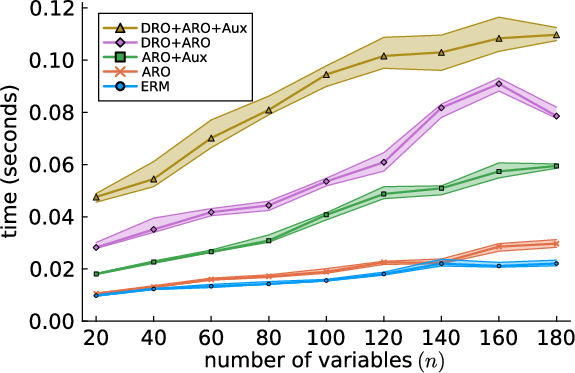

Figure 2: Runtimes under a varying number of features in the artificially generated empirical and auxiliary datasets.

Algorithmic Solution:

The authors reformulate the robust logistic regression problem as a convex optimization problem. To handle the intersection of ambiguity sets, they introduce an efficient approximation algorithm based on dual formulations. The resulting solution balances computational efficiency, adversarial robustness, and out-of-sample error.
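The intersection reformulation is not spelled out here, so the sketch below illustrates only a simpler special case under stated assumptions: a single type-1 Wasserstein ball of radius `eps` around the empirical distribution, a transport cost that moves features in the ℓ∞ norm but never flips labels, and ℓ∞ attacks of radius `alpha`. For Lipschitz losses such as the attacked logistic loss, this single-ball problem is known to reduce to the empirical adversarial loss plus `eps * ||w||_1`, which is convex in `w`. The helpers `objective` and `fit` are hypothetical names, and plain subgradient descent stands in for a real solver; this is not the authors' approximation algorithm.

```python
import numpy as np


def sigmoid(z):
    # Numerically stable logistic sigmoid.
    return 0.5 * (1.0 + np.tanh(0.5 * z))


def objective(w, X, y, alpha, eps):
    # Mean worst-case (l_inf-attacked) logistic loss plus eps * ||w||_1.
    w_norm = np.linalg.norm(w, 1)
    margins = y * (X @ w) - alpha * w_norm
    return np.mean(np.logaddexp(0.0, -margins)) + eps * w_norm


def fit(X, y, alpha, eps, lr=0.1, n_iter=2000):
    # Subgradient descent: the objective is convex but nonsmooth where w_j = 0.
    w = np.zeros(X.shape[1])
    for _ in range(n_iter):
        s = np.sign(w)                               # subgradient of ||w||_1
        margins = y * (X @ w) - alpha * np.abs(w).sum()
        coef = -sigmoid(-margins)                    # d/dm of log(1 + exp(-m))
        grad_margin = y[:, None] * X - alpha * s     # d m_i / d w
        grad = (coef[:, None] * grad_margin).mean(axis=0) + eps * s
        w -= lr * grad
    return w


# Hypothetical synthetic data with a known linear decision rule.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
w_true = np.array([2.0, -1.0, 0.0, 0.0, 0.5])
y = np.sign(X @ w_true + 0.1 * rng.normal(size=200))

w_hat = fit(X, y, alpha=0.05, eps=0.01)
print(objective(w_hat, X, y, 0.05, 0.01), np.mean(np.sign(X @ w_hat) == y))
```

Setting `eps = 0` recovers plain ARO training, and setting both `alpha = 0` and `eps = 0` recovers ERM, so the same sketch can serve as the ERM and ARO baselines in comparisons.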

Experimental Results

Experiments on UCI datasets and on MNIST/EMNIST validate the practical efficacy of the proposed method. The method consistently achieves lower out-of-sample error than both classical ARO and ERM. The Wasserstein DRO approach, particularly when enriched with auxiliary data, improves robustness without compromising performance on unperturbed data.
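Figure 1 (left) reports out-of-sample error as the attack strength grows. For a linear classifier such as logistic regression, this quantity can be computed exactly without running an attack, as the minimal sketch below shows. It assumes ℓ∞ attacks of radius `alpha` (an assumption, since the summary does not name the threat model); `adversarial_error` and the toy data are hypothetical, not the authors' evaluation code.

```python
import numpy as np


def adversarial_error(w, b, X, y, alpha):
    """Fraction of points misclassified under a worst-case l_inf attack of radius alpha.

    For a linear classifier sign(w.x + b), the attacker lowers each margin
    y * (w.x + b) by exactly alpha * ||w||_1. Points whose attacked margin is
    <= 0 are counted as errors (treating ties as errors is an assumption).
    """
    margins = y * (X @ w + b) - alpha * np.linalg.norm(w, 1)
    return float(np.mean(margins <= 0))


# Hypothetical toy test set: the clean margins are 1, 2, 1, 3.
X = np.array([[1.0, 0.0], [2.0, 0.0], [-1.0, 0.0], [-3.0, 0.0]])
y = np.array([1.0, 1.0, -1.0, -1.0])
w = np.array([1.0, 0.0])
for alpha in [0.0, 1.0, 2.5, 3.0]:
    print(alpha, adversarial_error(w, 0.0, X, y, alpha))
```

On this toy set the error climbs from 0 to 0.5, 0.75 and 1.0 as `alpha` increases, tracing the kind of error-versus-attack-strength curve plotted in Figure 1.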

Implications

This research advances robust statistics and machine learning at a time when adversarial examples are becoming increasingly sophisticated. Intersecting multiple Wasserstein balls offers a new way to quantify uncertainty and highlights the value of auxiliary datasets for training robust models.

Conclusion

Intersecting Wasserstein balls offers an effective mechanism for improving both the adversarial and the distributional robustness of logistic regression. By addressing adversarial perturbations and distributional shifts together, the proposed framework balances robustness against overfitting and yields scalable solutions suitable for a range of applications. Future research could extend the approach to other loss functions and address its computational challenges in high-dimensional settings.