- The paper introduces CRAFTBooster, which leverages camera-radar fusion to improve tracking accuracy in autonomous driving.

- It employs three main modules (Inner-modality Matching, Cross-modality Check, and Multi-modality Fusion) to compensate for occlusion in camera data and weak reflectance in radar data.

- Experimental results show IDF1 improvements of roughly 5-6% on the K-Radar dataset and 1-2% on CRUW3D when CRAFTBooster is added to existing online trackers.

Boosting Online 3D Multi-Object Tracking through Camera-Radar Cross Check

Introduction

The paper "Boosting Online 3D Multi-Object Tracking through Camera-Radar Cross Check" addresses the difficulty of fusing sensor modalities effectively for 3D Multiple Object Tracking (MOT) in autonomous driving. It introduces a framework called Camera-Radar Associated Fusion Tracking Booster (CRAFTBooster), which exploits the complementary strengths of cameras and radars to improve tracking accuracy.

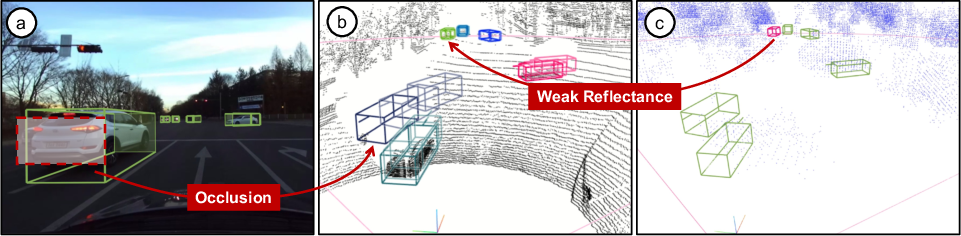

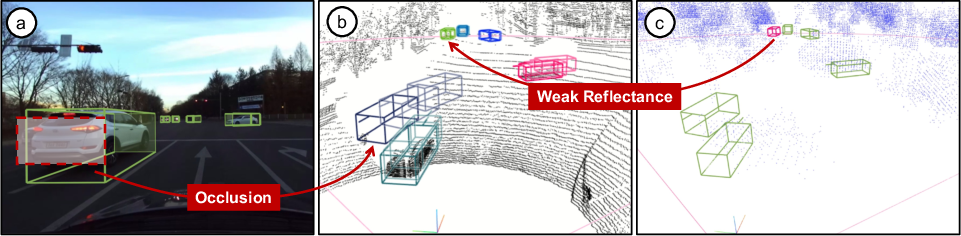

The core of the approach is fusing data from sensor modalities with different viewpoints. Cameras capture a perspective view, while radars provide a bird's-eye view (BEV). Combining the two improves detection and tracking because each sensor compensates for the other's weakness: occlusion in camera data and weak reflectance in radar data.

Figure 1: The image-based detections frequently confront challenges related to occlusion, whereas the radar-based detections face complications due to weak reflectance.

Methodology

CRAFTBooster Architecture

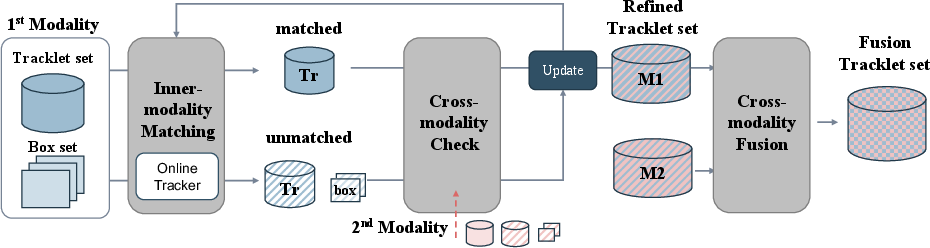

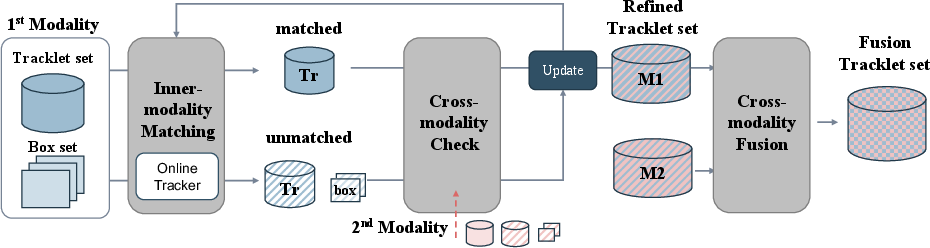

The CRAFTBooster framework comprises three key components: the Inner-modality Matching Module, the Cross-modality Check Module, and the Multi-modality Fusion Module. Together they perform sensor fusion at the tracking stage, operating on detection results rather than raw sensor data. The first two modules work as follows:

- Inner-modality Matching Module: Runs 3D MOT independently within each modality, using reliable 3D object detection results from the camera and radar data respectively. It outputs matched tracklets, along with the unmatched tracklets and unmatched detections that the cross-check stage relies on.

- Cross-modality Check Module: Uses information from the other modality to fill in missing data for unmatched tracklets. It also checks unmatched detections against the other modality to confirm whether they are reliable. This refines each modality's tracklet set before fusion.
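To make the Inner-modality Matching output concrete, here is a minimal sketch of association within a single modality. It shows how one frame splits into matched pairs, unmatched tracklets and unmatched detections. This sketch assumes a greedy nearest-neighbour match on 3D box-center distance with a hypothetical 2 m gate. Trackers such as ByteTrack typically use Hungarian assignment on richer costs, and the paper's exact cost and thresholds are not reproduced here. The names `Box3D`, `center_distance` and `inner_modality_match` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Box3D:
    # Hypothetical minimal box: only the 3D center in metres. A real tracker
    # state would also carry size, yaw and velocity.
    x: float
    y: float
    z: float


def center_distance(a: Box3D, b: Box3D) -> float:
    return math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))


def inner_modality_match(tracklets, detections, max_dist=2.0):
    """Greedy nearest-neighbour association within ONE modality (a sketch).

    Returns (matches, unmatched_tracklets, unmatched_detections), where
    matches holds (tracklet_index, detection_index) pairs.
    """
    candidates = []
    for ti, t in enumerate(tracklets):
        for di, d in enumerate(detections):
            dist = center_distance(t, d)
            if dist <= max_dist:  # gate out implausible pairs
                candidates.append((dist, ti, di))
    candidates.sort()  # closest pairs are considered first

    used_t, used_d, matches = set(), set(), []
    for _, ti, di in candidates:
        if ti not in used_t and di not in used_d:  # one-to-one assignment
            matches.append((ti, di))
            used_t.add(ti)
            used_d.add(di)

    unmatched_t = [i for i in range(len(tracklets)) if i not in used_t]
    unmatched_d = [i for i in range(len(detections)) if i not in used_d]
    return matches, unmatched_t, unmatched_d


tracks = [Box3D(0.0, 0.0, 0.0), Box3D(10.0, 0.0, 0.0)]
dets = [Box3D(0.5, 0.0, 0.0), Box3D(30.0, 0.0, 0.0)]
print(inner_modality_match(tracks, dets))  # → ([(0, 0)], [1], [1])
```

Running the same function once on camera data and once on radar data gives each modality its own leftovers. Those leftovers are exactly what the cross-check step consumes.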

Figure 2: CRAFTBooster's architecture, including Inner-modality Matching Module, Cross-modality Check Module, and Multi-modality Fusion Module.
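The Cross-modality Check Module described above can be illustrated with a simplified sketch. It works on BEV positions and treats the camera as the modality being refined, with radar detections as the cross-check source. The rules here are assumptions for illustration only:

- An unmatched tracklet is filled with the nearest other-modality detection inside a gate.
- An unmatched detection counts as confirmed only if the other modality also detected something nearby.

The paper's actual criteria may differ. The function names and the 2 m gate are hypothetical.

```python
import math
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]  # hypothetical BEV position (x, y) in metres


def nearest_within(p: Point, candidates: List[Point], gate: float) -> Optional[int]:
    """Index of the closest candidate no farther than `gate`, or None."""
    best, best_d = None, gate
    for i, c in enumerate(candidates):
        d = math.dist(p, c)
        if d <= best_d:
            best, best_d = i, d
    return best


def cross_modality_check(unmatched_tracks: Dict[int, Point],
                         unmatched_dets: List[Point],
                         other_dets: List[Point],
                         gate: float = 2.0):
    """Refine one modality's leftovers using the other modality (a sketch).

    - Unmatched tracklets (e.g. occluded camera tracks) are filled with the
      nearest other-modality detection, if one lies within the gate.
    - Unmatched detections are confirmed only if the other modality also
      has a detection nearby; otherwise they stay unconfirmed.
    """
    filled = {}
    for track_id, pos in unmatched_tracks.items():
        j = nearest_within(pos, other_dets, gate)
        if j is not None:
            filled[track_id] = other_dets[j]

    confirmed, unconfirmed = [], []
    for det in unmatched_dets:
        if nearest_within(det, other_dets, gate) is not None:
            confirmed.append(det)
        else:
            unconfirmed.append(det)
    return filled, confirmed, unconfirmed


filled, confirmed, unconfirmed = cross_modality_check(
    unmatched_tracks={7: (10.0, 2.0)},          # camera track lost to occlusion
    unmatched_dets=[(25.0, -1.0), (40.0, 5.0)],  # new camera detections
    other_dets=[(10.5, 2.2), (25.3, -0.8)],      # radar detections
)
print(filled, confirmed, unconfirmed)
# → {7: (10.5, 2.2)} [(25.0, -1.0)] [(40.0, 5.0)]
```

For brevity, the sketch lets a single radar detection serve several tracklets or detections. A fuller version would enforce one-to-one use and apply the same check in the radar-to-camera direction.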

Multi-modality Fusion Module

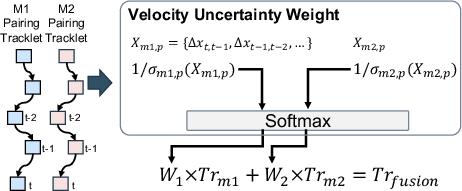

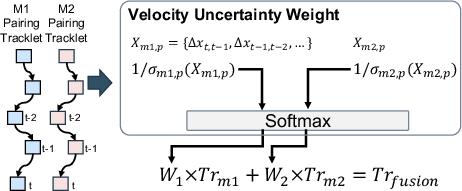

This module pairs tracklets across the two modalities and fuses each pair according to uncertainty measures to produce improved tracking results. The fusion uses homoscedastic uncertainty weighting to balance each modality's contribution, so that the estimate from the less uncertain modality carries more weight in the fused tracklet.
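One common way to weight two estimates by their uncertainty is inverse-variance (precision) weighting. For each state dimension it computes x_fused = (x_c/σ_c² + x_r/σ_r²) / (1/σ_c² + 1/σ_r²), where c and r denote camera and radar. The sketch below applies that rule to an already-paired camera and radar tracklet. "Homoscedastic" is modelled here as a fixed per-modality, per-dimension variance that does not change from sample to sample. This is an assumption: the paper's exact weighting formula and variance values are not reproduced. The variances in the usage example are hypothetical, chosen so that radar is more certain about velocity.

```python
from typing import List, Sequence, Tuple


def fuse_states(cam_state: Sequence[float], radar_state: Sequence[float],
                cam_var: Sequence[float], radar_var: Sequence[float]
                ) -> Tuple[List[float], List[float]]:
    """Precision-weighted fusion of two paired tracklet states (a sketch).

    Each variance is a fixed confidence for that modality and state
    dimension (homoscedastic: identical for every sample). Lower variance
    means a larger weight. Returns (fused_state, fused_variance).
    """
    if not (len(cam_state) == len(radar_state) == len(cam_var) == len(radar_var)):
        raise ValueError("state and variance vectors must have equal length")
    fused, fused_var = [], []
    for xc, xr, vc, vr in zip(cam_state, radar_state, cam_var, radar_var):
        wc, wr = 1.0 / vc, 1.0 / vr          # precision = inverse variance
        fused.append((wc * xc + wr * xr) / (wc + wr))
        fused_var.append(1.0 / (wc + wr))    # never larger than either input
    return fused, fused_var


# Hypothetical state layout [x, y, vx, vy] for one camera/radar tracklet pair.
cam = [10.0, 2.0, 4.0, 0.0]
radar = [10.4, 2.2, 5.0, 0.0]
state, var = fuse_states(cam, radar,
                         cam_var=[0.5, 0.5, 4.0, 4.0],
                         radar_var=[0.5, 0.5, 1.0, 1.0])
print(state)  # approximately [10.2, 2.1, 4.8, 0.0]
```

With equal position variances the fused position is a plain average. With radar's velocity variance four times smaller, the fused vx lands much closer to the radar value (4.8 rather than 4.5).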

Figure 3: Tracklets Fusion with Homoscedastic Uncertainty, emphasizing velocity uncertainty weighting.

Experimental Results

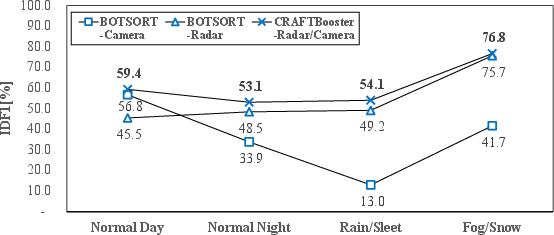

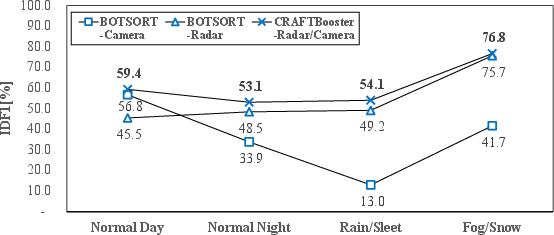

The experiments apply CRAFTBooster to the K-Radar and CRUW3D datasets. They report higher IDF1 scores across a range of weather conditions, which supports the robustness of the fusion strategy.

By integrating CRAFTBooster into ByteTrack and BOTSORT trackers, the experiments demonstrate consistent gains of approximately 5-6% IDF1 on K-Radar and 1-2% on the CRUW3D benchmark.
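The gains above are reported in IDF1, the identity-aware F1 score from Ristani et al. (2016). It is computed as 2·IDTP / (2·IDTP + IDFP + IDFN), where:

- IDTP counts identity true positives.
- IDFP counts identity false positives.
- IDFN counts identity false negatives.

All three are taken after a global one-to-one matching between ground-truth and predicted trajectories. The small helper below simply evaluates that formula. The counts in the example are hypothetical and do not come from the paper.

```python
def idf1(idtp: int, idfp: int, idfn: int) -> float:
    """IDF1 = 2*IDTP / (2*IDTP + IDFP + IDFN); 0.0 when there is nothing to score."""
    denom = 2 * idtp + idfp + idfn
    return 2 * idtp / denom if denom > 0 else 0.0


# Hypothetical counts for illustration only.
print(idf1(idtp=80, idfp=20, idfn=20))  # → 0.8
```

Because IDF1 penalizes both missed and spurious identity assignments, it rewards trackers that keep the same ID on an object over time. That is the property the camera-radar cross check targets.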

Figure 4: Comparison of IDF1% under various weather conditions on K-Radar dataset.

Limitations and Future Directions

While CRAFTBooster achieves substantial improvements, it also increases the number of false positives (FPs). Reducing FPs through deeper analysis of the raw sensor data is a promising avenue for future research.

Integrating additional sensor modalities such as LiDAR, and further exploring unsupervised learning techniques for sensor fusion, could lead to more resilient and accurate 3D MOT systems.

Conclusion

The paper shows how camera-radar fusion at the tracking stage can improve online 3D multi-object tracking. CRAFTBooster is practical because it can be added to existing online trackers such as ByteTrack and BOTSORT. Its cross-check and uncertainty-weighted fusion make tracking more robust under challenging environmental conditions such as adverse weather, which is directly relevant to real-world autonomous driving.