- The paper reviews state-of-the-art reconstruction methods, comparing NeRF-based and Gaussian Splatting techniques in robotic minimally invasive surgery (RMIS) settings.

- It shows that NeRF-based approaches deliver high-quality reconstructions, but their computational demands make real-time use during surgery difficult.

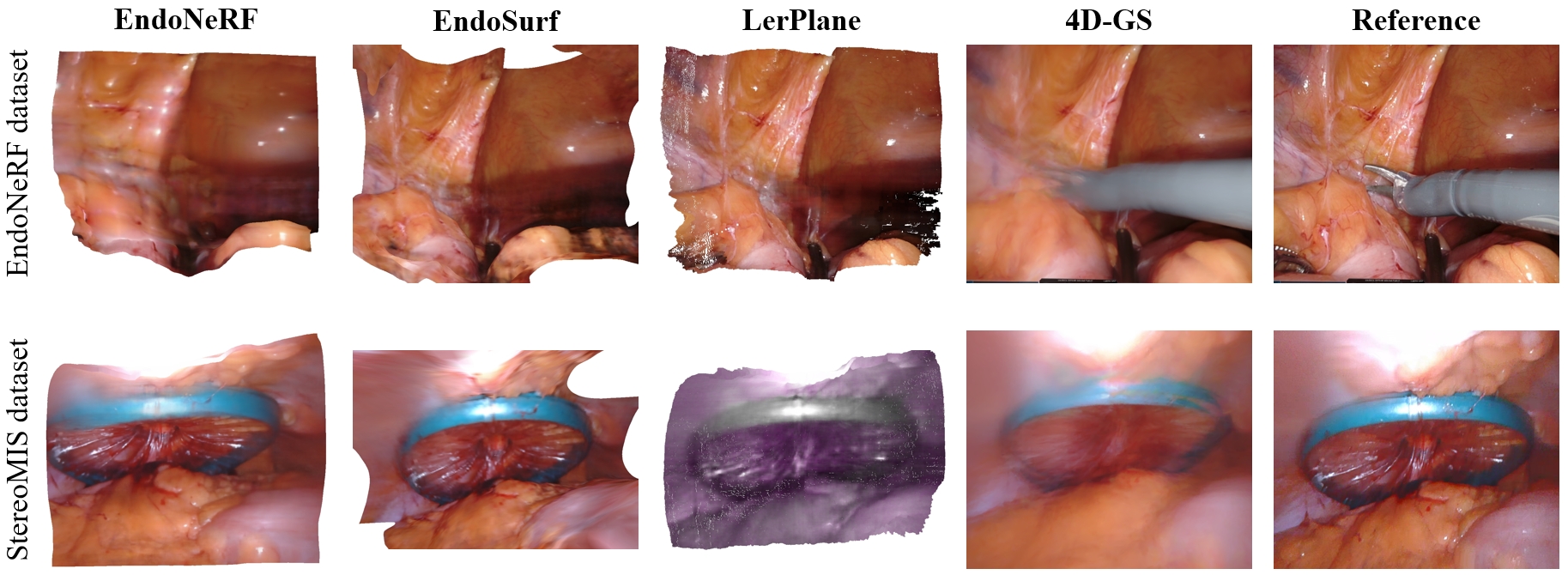

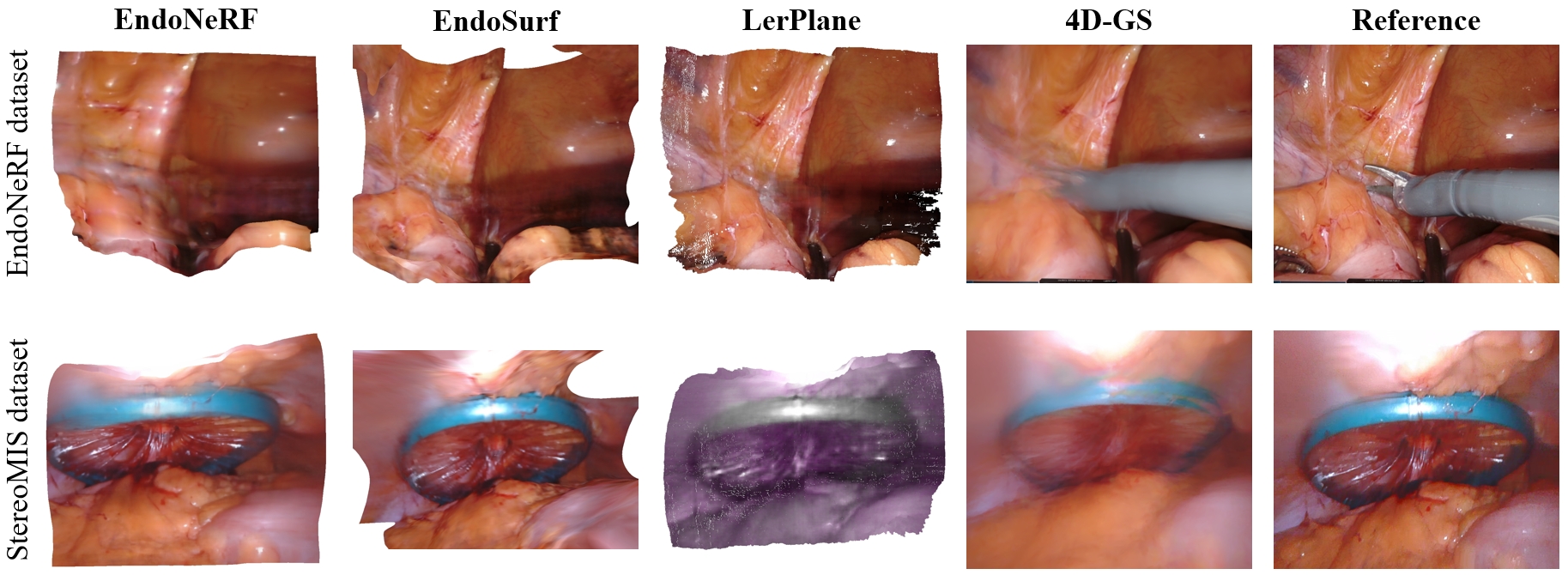

- The study reveals that Gaussian Splatting methods achieve faster inference but face challenges with color fidelity and dynamic tissue deformations.

Introduction

3D reconstruction is vital for applications such as augmented reality and surgical navigation, but robotic minimally invasive surgery (RMIS) makes it unusually challenging. In this paper, Xu et al. (arXiv 2408.04426) examine state-of-the-art (SOTA) techniques for reconstructing surgical scenes from endoscopic video, focusing on methods that handle dynamic scenes and occlusions caused by surgical tools. They evaluate both implicit NeRF-based techniques and explicit Gaussian Splatting-based methods, reproducing the models on several datasets to compare reconstruction quality, training time, inference speed, and resource consumption. The goal is to support real-time, high-quality reconstruction in surgical environments.

Methodologies

NeRF-based Techniques

NeRF (Neural Radiance Field) techniques map a 3D point and a viewing direction to a color and a volume density, then render images by accumulating these values along camera rays (volume rendering). EndoNeRF extends NeRF in two ways. It uses tool-guided ray sampling, which skips pixels occluded by surgical instruments, and it uses a D-NeRF-style deformation field for dynamic scenes. The result is effective reconstruction of deforming tissue, but at a significant computational cost [wang2022endonerf]. EndoSurf targets smooth surface reconstruction by representing geometry with a signed distance function (SDF), which gives better-defined scene geometry. It combines separate neural fields for deformation, the SDF, and radiance [zha2023endosurf].
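The volume-rendering step that NeRF-style methods build on can be sketched as a discrete quadrature along one ray. The function name `composite_ray`, the array shapes, and the large final interval are illustrative assumptions. Real implementations batch many rays and predict the densities and colors with a neural network rather than taking them as inputs.

```python
import numpy as np

def composite_ray(sigmas, colors, t_vals):
    """Discrete volume rendering along a single ray (illustrative sketch).

    sigmas: (N,) volume densities at the sample points
    colors: (N, 3) RGB values at the sample points
    t_vals: (N,) sorted sample depths along the ray
    Returns the composited RGB color and the per-sample weights.
    """
    # Distance between adjacent samples; the last interval is treated as very large
    deltas = np.append(np.diff(t_vals), 1e10)
    # Opacity of each segment: alpha_i = 1 - exp(-sigma_i * delta_i)
    alphas = 1.0 - np.exp(-sigmas * deltas)
    # Transmittance T_i = prod_{j<i} (1 - alpha_j), the chance the ray reaches sample i
    trans = np.cumprod(np.concatenate([[1.0], 1.0 - alphas[:-1]]))
    weights = trans * alphas
    # Expected color: sum_i T_i * alpha_i * c_i
    rgb = (weights[:, None] * colors).sum(axis=0)
    return rgb, weights
```

A sample with very high density absorbs almost all of the ray, so its color dominates the result. This per-ray loop over many samples is the main reason NeRF-based methods are expensive to render.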

Gaussian Splatting-based Techniques

3D Gaussian Splatting methods represent a scene explicitly as a set of 3D Gaussians. Rendering projects each Gaussian onto the 2D image plane and blends the resulting splats, which is much simpler than NeRF's per-ray volume rendering. This lower computational complexity enables real-time rendering. LerPlane, a fast explicit-representation method also included in the comparison, speeds up learning by decomposing the 4D (space-time) scene into 2D feature planes. It trains quickly with efficient memory use, although it struggles on less deformable datasets. 4D-GS extends Gaussian Splatting to dynamic scenes. It uses a voxel-based representation with feature interpolation to learn how the Gaussians deform over time [wu20234dgaussians].
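The splatting step described above can be sketched for a single pixel. The sketch assumes the Gaussians have already been projected to 2D, giving screen-space means and 2×2 covariances, and sorted front to back. It omits view-dependent color. The function name `splat_pixel` and the constants (the 0.99 opacity clamp, the 1/255 skip threshold, and the 1e-4 early-stop transmittance) are assumed values for illustration, not taken from the reviewed paper.

```python
import numpy as np

def splat_pixel(pixel, means2d, covs2d, opacities, colors):
    """Color of one pixel from projected, depth-sorted 2D Gaussians (sketch).

    pixel: (2,) pixel coordinate
    means2d: (K, 2) projected Gaussian centers, ordered front to back
    covs2d: (K, 2, 2) projected 2D covariance matrices
    opacities: (K,) learned opacities in [0, 1]
    colors: (K, 3) RGB per Gaussian (view-dependent color omitted)
    """
    rgb = np.zeros(3)
    transmittance = 1.0
    for mu, cov, o, c in zip(means2d, covs2d, opacities, colors):
        d = pixel - mu
        # Gaussian falloff evaluated in screen space
        power = -0.5 * d @ np.linalg.inv(cov) @ d
        alpha = min(0.99, o * np.exp(power))  # assumed clamp for numerical stability
        if alpha < 1.0 / 255.0:
            continue  # skip negligible contributions (assumed threshold)
        # Front-to-back alpha compositing
        rgb += transmittance * alpha * c
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:
            break  # pixel is effectively opaque; stop early
    return rgb
```

Each pixel only blends the few Gaussians that cover it, with no per-ray network queries. This is why splatting renders far faster than NeRF-style volume rendering.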

Figure 1: Visualization results of four models on two datasets. EndoNeRF, EndoSurf, and LerPlane give good 3D results on the first dataset. However, LerPlane cannot restore the original colors on the StereoMIS dataset, and 4D-GS performs poorly on both endoscopic datasets.

Experimental Setup

The researchers evaluated the models on the EndoNeRF, StereoMIS, and C3VD datasets using PSNR, SSIM, and LPIPS. They also measured practical costs such as GPU usage and inference time. The differences in results across datasets highlight the need for distinct strategies for deformable versus less dynamic scenes. EndoSurf consistently produced high-quality reconstructions of deformable tissue, owing to its surface-oriented approach.
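Of the three image-quality metrics, PSNR is simple enough to sketch directly. The function below assumes images scaled to [0, `max_val`]. The optional `mask` parameter is a hypothetical addition for excluding pixels such as surgical tools; it is not a statement about how the reviewed paper computes its scores.

```python
import numpy as np

def psnr(pred, target, max_val=1.0, mask=None):
    """Peak signal-to-noise ratio in dB between two images in [0, max_val]."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    sq_err = (pred - target) ** 2
    if mask is not None:
        # Hypothetical option: keep only pixels where mask is true (e.g. tissue, not tools)
        sq_err = sq_err[np.asarray(mask, dtype=bool)]
    mse = sq_err.mean()
    if mse == 0:
        return float("inf")  # identical images
    # PSNR = 10 * log10(MAX^2 / MSE)
    return float(10.0 * np.log10(max_val ** 2 / mse))
```

A uniform error of 0.1 on a [0, 1] image gives an MSE of 0.01 and therefore 20 dB. SSIM and LPIPS are usually computed with existing libraries, for example scikit-image's `structural_similarity` and the `lpips` package.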

Results and Implications

The findings show that 4D-GS, although capable of real-time rendering, underperformed on the surgical datasets. EndoNeRF and EndoSurf excelled in reconstruction quality but required substantial computational resources, which limits their real-time applicability. LerPlane offered competitive quality with fast training and efficient memory use. This makes it promising for real-time integration, particularly in dynamic surgical environments where the reconstruction must keep pace with rapid changes.

The paper identifies key challenges for future research. Chief among them are bridging the domain gap between natural and surgical scenes and adapting Gaussian Splatting to handle surgical occlusions and tissue deformation. Progress on these fronts could improve the precision and workflow efficiency of RMIS, which motivates further work on reconstruction methods tailored to surgery.

Conclusion

In summary, the paper reviews current technologies for surgical scene reconstruction. It outlines paths toward practical implementations that meet RMIS requirements, chiefly real-time operation and high fidelity. Its comparative evaluation clarifies the strengths and weaknesses of current models. It also points future work in robotic surgery toward foundation models and robust scene reconstruction techniques that can improve surgical safety and accuracy.