- The paper characterizes Dockerfile flakiness through a nine-month analysis of 8,132 Dockerfiles, establishing a comprehensive taxonomy of root causes.

- It introduces FlakiDock, an LLM-driven, feedback-enhanced repair system that achieves a 73.55% repair accuracy by combining static and dynamic code analysis.

- Empirical results demonstrate substantial performance improvements over existing baselines, exposing the limitations of traditional static and rule-based repair tools.

Dockerfile Flakiness: Characterization and Repair

Introduction

Dockerfiles are integral to reproducible builds in CI/CD pipelines, yet their non-deterministic failures, termed "flakiness", have received little systematic scrutiny. The paper "Dockerfile Flakiness: Characterization and Repair" (arXiv:2408.05379) undertakes a longitudinal empirical analysis of Dockerfile flakiness, delivers the first comprehensive taxonomy of root causes, and proposes FlakiDock, an LLM-driven, retrieval-augmented automated repair system. The study's nine-month evaluation of 8,132 Dockerfiles reveals that 798 of them (9.81%) exhibit flaky rebuilds absent any code change. FlakiDock repairs flaky Dockerfiles with 73.55% accuracy, outperforming the evaluated baselines (Parfum and direct GPT-4 prompting) by a wide margin.

Taxonomy and Root Causes of Dockerfile Flakiness

The authors performed 32 rebuilds across 8,132 high-quality Dockerfiles, systematically mining and filtering non-deterministic build outcomes spanning nine months. After removing failures attributed to infrastructure, Docker version skew, and project-specific errors, 798 Dockerfiles (9.81%) demonstrated genuine flakiness. These builds were clustered, labeled, and analyzed (using LLM-driven and manual workflows) to yield a hierarchical taxonomy capturing the landscape of root causes.
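The notion of flakiness used here can be illustrated with a minimal Python sketch. It assumes a simplified definition: a Dockerfile counts as flaky when repeated builds of the unchanged file produce both passing and failing outcomes. The names `is_flaky` and `flakiness_rate` are hypothetical, and the sketch omits the authors' filtering of infrastructure, Docker-version, and project-specific failures.

```python
from typing import Dict, Sequence


def is_flaky(outcomes: Sequence[bool]) -> bool:
    """Return True if an unchanged Dockerfile both passed and failed.

    Simplifying assumption: each entry is the pass/fail result of one
    rebuild; a mix of True and False is treated as flakiness.
    """
    return any(outcomes) and not all(outcomes)


def flakiness_rate(histories: Dict[str, Sequence[bool]]) -> float:
    """Fraction of Dockerfiles whose rebuild history is flaky."""
    if not histories:
        return 0.0
    flaky = sum(is_flaky(history) for history in histories.values())
    return flaky / len(histories)


# Toy rebuild histories (illustrative data, not from the study).
histories = {
    "always_passes": [True, True, True, True],
    "starts_failing": [True, True, False, False],
    "always_fails": [False, False, False],
}
rate = flakiness_rate(histories)
```

Applied to the figures reported above, the same ratio is 798 / 8,132, or roughly 9.81%.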

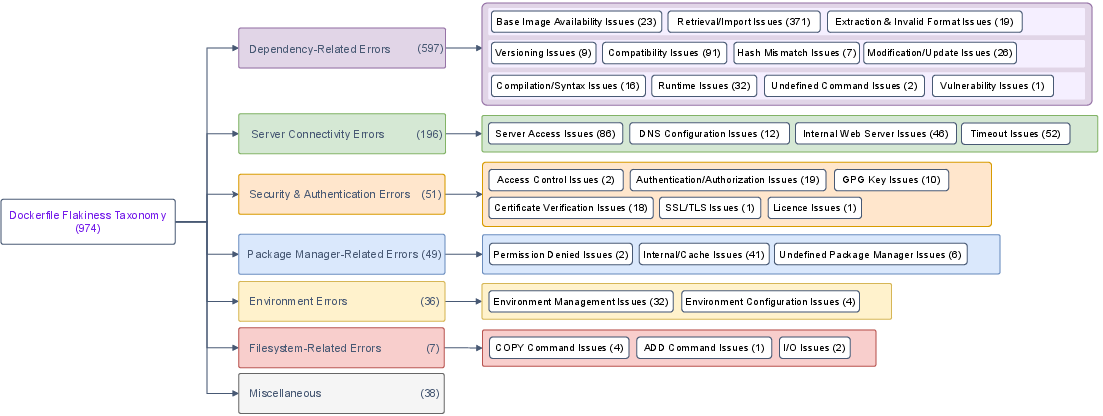

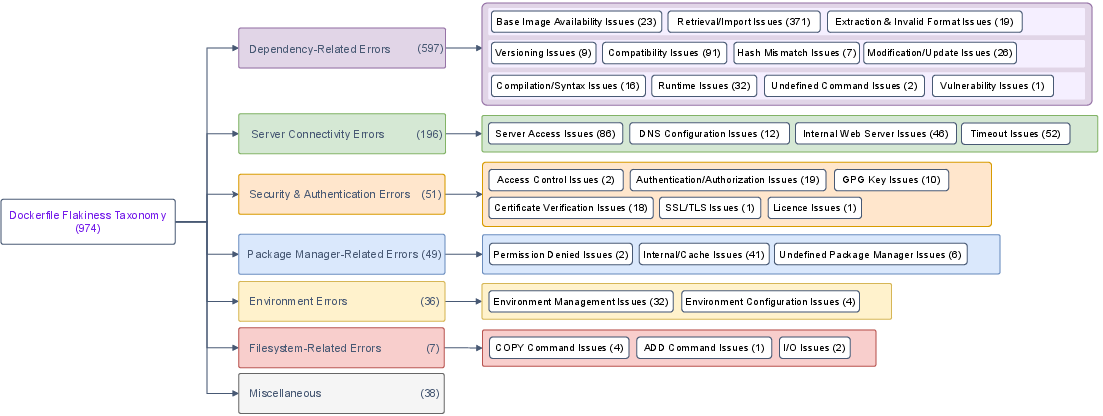

Figure 1: The taxonomy captures fine-grained and orthogonal categories of flakiness drivers in Dockerfiles, dominated by transient or structural dependency errors and server connectivity faults.

The paper identifies core categories:

- Dependency-Related Errors (DEP, 61.29%): Encompasses failures in dependency retrieval, installation (versioning, compatibility, hash mismatches), and post-install custom scripts.

- Server Connectivity Errors (CON, 20.12%): Encompasses HTTP(S) downtimes, DNS misconfigurations, and API surface drift.

- Security/Authentication Errors (SEC, 5.24%): Covers breaking changes in authentication protocols, key deprecations, and new license constraints.

- Package Manager Errors (PMG, 5.0%): Manifest as failures due to registry removals, cache corruption, or internal configuration instability.

- Environment/Filesystem/Other: Remaining failures (cumulative ≈8.2%) were attributed to breaking changes in base image environments (e.g., PEP 668), filesystem structure changes, or unclassifiable build output.

This granularity exposes that static analysis rules and linter-centric tools (e.g., Hadolint, Binnacle) do not capture the temporal, dynamic, and external system interactions that render Dockerfiles non-deterministic at build-time.

FlakiDock: LLM-based Automated Flakiness Repair

Recognizing the inadequacy of rule-based tools for repairing dynamic, time-dependent flakiness, the authors introduce FlakiDock, a hybrid system combining LLMs, retrieval-augmented generation, dynamic execution traces, and iterative repair validation.

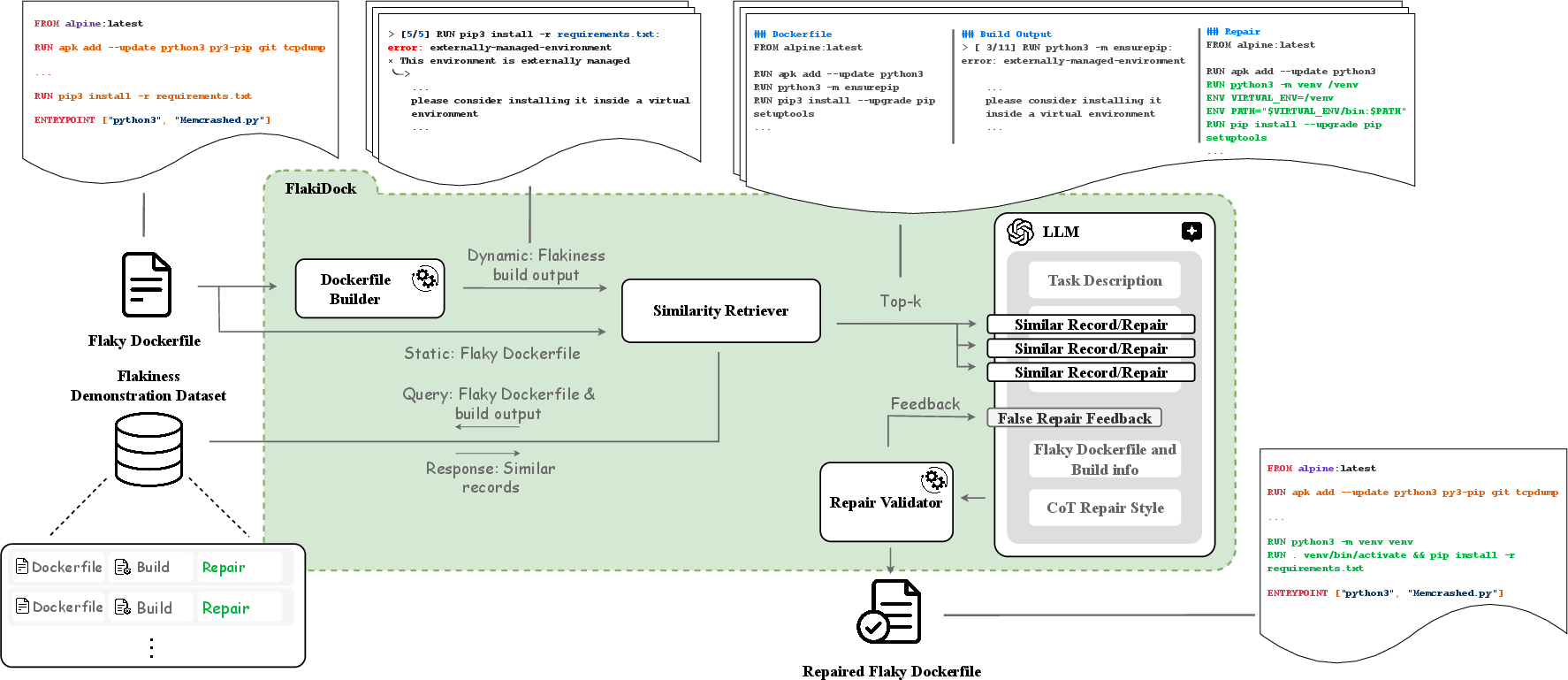

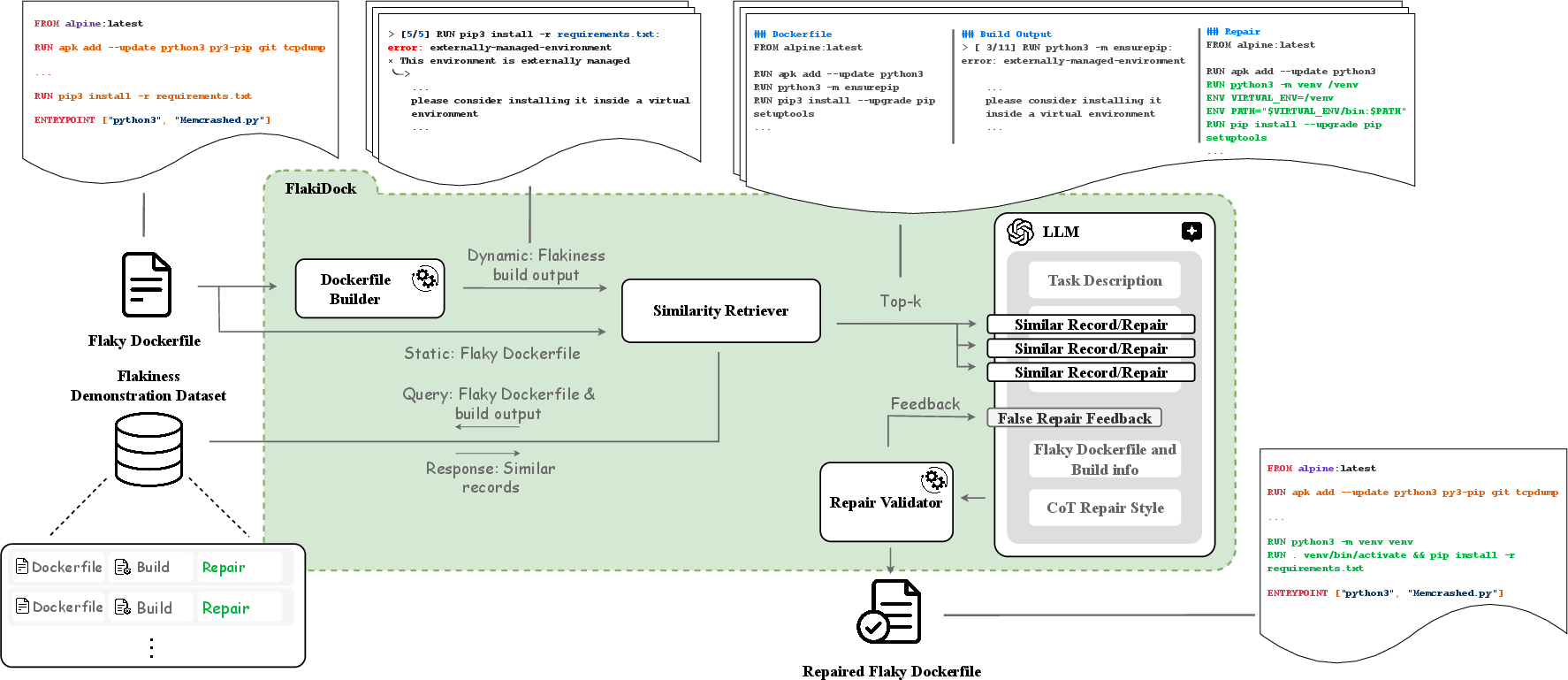

Figure 2: FlakiDock pipeline: dynamic build output extraction, dual-context similarity retrieval, LLM patch generation, and iterative feedback-driven repair validation.

The approach is characterized by:

- Demonstration Dataset Construction: Manual repair and repeated validation of 100 diverse flaky Dockerfiles, labeled per taxonomy, ensure breadth and real-world representativeness.

- Dual-Context Similarity Retrieval: Given a new flaky Dockerfile and build log, FlakiDock retrieves top matches from the demonstration set using combined static (Dockerfile code tokens) and dynamic (failure trace) embeddings.

- RAG-Enhanced LLM Prompting: The LLM (GPT-4, temperature=0 for determinism) receives the failing Dockerfile, the failing log, relevant categorized repair exemplars, and optionally, negative feedback from prior failed iterations.

- Iterative Feedback Validation: Generated repairs are applied and rebuilt (with n>1 iterations to confirm non-flakiness). On persistent failure, build artifacts and failed patches are supplied to the LLM until convergence or a timeout threshold.
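The dual-context retrieval step can be sketched as follows. This is a hedged illustration, not the authors' implementation: it assumes the static and dynamic embeddings are already computed as plain vectors, and it combines the two cosine similarities with a weight `alpha`, which is an assumption of this sketch. `Demo`, `cosine`, and `retrieve` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Demo:
    """One repaired example from a demonstration set (hypothetical shape)."""
    name: str
    static_vec: List[float]   # embedding of the Dockerfile text
    dynamic_vec: List[float]  # embedding of the failing build log


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_static: Sequence[float], query_dynamic: Sequence[float],
             demos: Sequence[Demo], k: int = 3, alpha: float = 0.5) -> List[Demo]:
    """Return the k demos with the highest combined similarity.

    The weighted sum below is an assumed way of merging the two contexts.
    """
    scored = [
        (alpha * cosine(query_static, d.static_vec)
         + (1.0 - alpha) * cosine(query_dynamic, d.dynamic_vec), d)
        for d in demos
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


# Toy two-dimensional embeddings, for illustration only.
demos = [
    Demo("pin_dependency", [1.0, 0.0], [1.0, 0.0]),
    Demo("fix_dns", [0.0, 1.0], [0.0, 1.0]),
    Demo("mixed", [1.0, 1.0], [1.0, 1.0]),
]
top = retrieve([1.0, 0.0], [1.0, 0.0], demos, k=2)
```

In this toy setup the query matches `pin_dependency` exactly and `mixed` partially, so those two are returned in that order. The retrieved examples would then be placed in the LLM prompt as repair exemplars.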

Distinctively, repair is not limited to superficial line mutations: environmental initialization, dependency pinning, authentication flow updates, and even adoption of new build paradigms are all within scope.
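The iterative feedback validation described in the list above can be sketched as a simple loop. This is a minimal sketch under stated assumptions: `propose_patch` stands in for the LLM call and `build` for a Docker build; both are hypothetical callables supplied by the caller, and the round limit and number of confirmation builds are illustrative parameters rather than values from the paper.

```python
from typing import Callable, List, Optional, Tuple

# (candidate Dockerfile, build log of its failure)
FailedAttempt = Tuple[str, str]


def repair_with_feedback(
    dockerfile: str,
    build_log: str,
    propose_patch: Callable[[str, str, List[FailedAttempt]], str],
    build: Callable[[str], Tuple[bool, str]],
    max_rounds: int = 3,
    confirm_builds: int = 2,
) -> Optional[str]:
    """Ask for patches until one builds repeatedly, or give up.

    Failed candidates and their logs are passed back to `propose_patch`
    as negative feedback on the next round.
    """
    failed: List[FailedAttempt] = []
    for _round in range(max_rounds):
        candidate = propose_patch(dockerfile, build_log, failed)
        ok, log = True, ""
        # Rebuild several times so a lucky single pass is not accepted.
        for _attempt in range(confirm_builds):
            ok, log = build(candidate)
            if not ok:
                break
        if ok:
            return candidate
        failed.append((candidate, log))
    return None
```

A caller would wrap its own LLM client and build runner in the two callables; returning `None` signals that no candidate survived validation within the round limit.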

Evaluation and Comparative Analysis

A head-to-head evaluation against Parfum and direct GPT-4 prompting baselines shows that FlakiDock repairs substantially more flaky Dockerfiles than either.

Key results:

- 73.55% repair accuracy using FlakiDock (with feedback loop) across 344 persistent flaky cases.

- 12,581% relative improvement over Parfum (0.58% accuracy), which is ineffective for dynamic errors.

- 94.63% relative improvement over the strongest GPT-4 baseline (37.79% accuracy with Dockerfile+build output); GPT-4 given only the Dockerfile reaches 6.10%.

- Feedback iteration is essential: the presence of an iterative refinement loop boosts FlakiDock's robustness, especially on security and package manager failures.
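The improvement percentages above read as relative gains over each baseline's accuracy, which the following short Python check reproduces from the reported accuracies. The helper name `relative_improvement` is introduced here for illustration.

```python
def relative_improvement(new: float, baseline: float) -> float:
    """Relative gain of `new` over `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (new - baseline) / baseline * 100.0


flakidock = 73.55  # repair accuracy reported for FlakiDock with feedback

over_parfum = relative_improvement(flakidock, 0.58)        # about 12,581%
over_gpt4_log = relative_improvement(flakidock, 37.79)     # about 94.63%
over_gpt4_plain = relative_improvement(flakidock, 6.10)    # about 1,106%
```

The 94.63% figure therefore corresponds to the GPT-4 baseline that sees both the Dockerfile and the build output, not the Dockerfile-only variant.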

The following table summarizes FlakiDock's performance w.r.t. core error categories:

| Category | FlakiDock (feedback) | GPT-4 (content+log) | Parfum |
| --- | --- | --- | --- |
| Dependencies (DEP) | 77.14% | 37.50% | 0% |
| Connectivity (CON) | 44.44% | 44.44% | 11.11% |
| Security (SEC) | 37.50% | 12.50% | 0% |
| Package Manager (PMG) | 56.25% | 37.50% | 6.25% |
| Environment (ENV) | 81.82% | 59.09% | 0% |

No method was found to repair filesystem-related flakiness automatically, suggesting inherent limitations of current LLMs and code repair solutions given noisy or insufficient logs and the absence of human intuition for outlier scenarios.

Practical and Theoretical Implications

Practical Implications

- Flakiness in Dockerfiles is pervasive and under-recognized; current static linters and rule-based repair tools offer almost no coverage for dynamic or temporal failures seen in CI/CD deployments.

- LLM-based approaches, if provided with dual-context and dynamic repair feedback, can resolve a significant proportion of real-world build failures autonomously, improving build reliability and developer efficiency.

- Feedback-driven generation is essential for practical repair rates.

Theoretical Implications

- Dockerfile flakiness has characteristics both analogous and orthogonal to traditional test flakiness, notably in its coupling to external, mutable systems (OS packages, API endpoints, registry crawlers).

- The demonstrated superiority of retrieval-augmented and feedback-informed LLMs in this domain challenges the dominance of rule-based and even recent AST-enrichment techniques for config repair.

- The coupling of static analysis, dynamic log mining, and dual-modality retrieval defines an emerging best practice for data-centric program repair in mutable IT and DevOps environments.

Looking Ahead

The data (Flake4Dock), taxonomy, and FlakiDock implementation are made public, facilitating further research. The authors propose continued extension of FlakiDock to tackle even more complex multi-stage build scenarios, integration with project-specific CI/CD policies, and expansion across diverse operating systems to account for ecosystem-specific manifestation of flakiness.

Conclusion

This study comprehensively characterizes and addresses Dockerfile flakiness, highlighting a high prevalence and the inadequacy of extant static and rule-based tooling for real-world repair. FlakiDock, through LLM-guided, dual-context, and feedback-driven generation, achieves high repair accuracy and substantially outperforms all existing baselines. The taxonomy and methodology define a foundation for future research in CI/CD reliability and dynamic configuration repair.

References: For full experimental details, taxonomy, data, and implementation, see the paper (arXiv:2408.05379).