- The paper introduces HLSPilot, a novel framework leveraging LLMs to translate C/C++ code into efficient HLS designs for FPGA acceleration.

- It employs a program-tree-based task pipelining strategy that decomposes complex algorithms to maximize parallel hardware execution.

- Experimental evaluations show that HLSPilot-generated designs rival manually optimized HLS solutions in runtime performance.

HLSPilot: LLM-based High-Level Synthesis

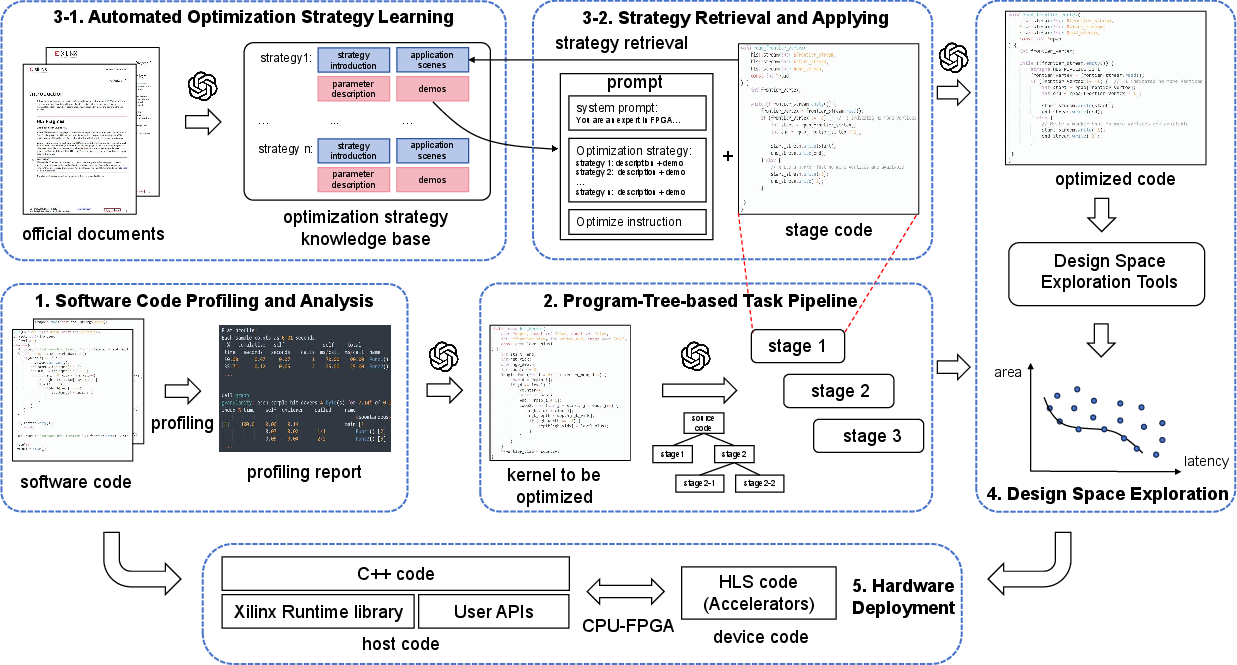

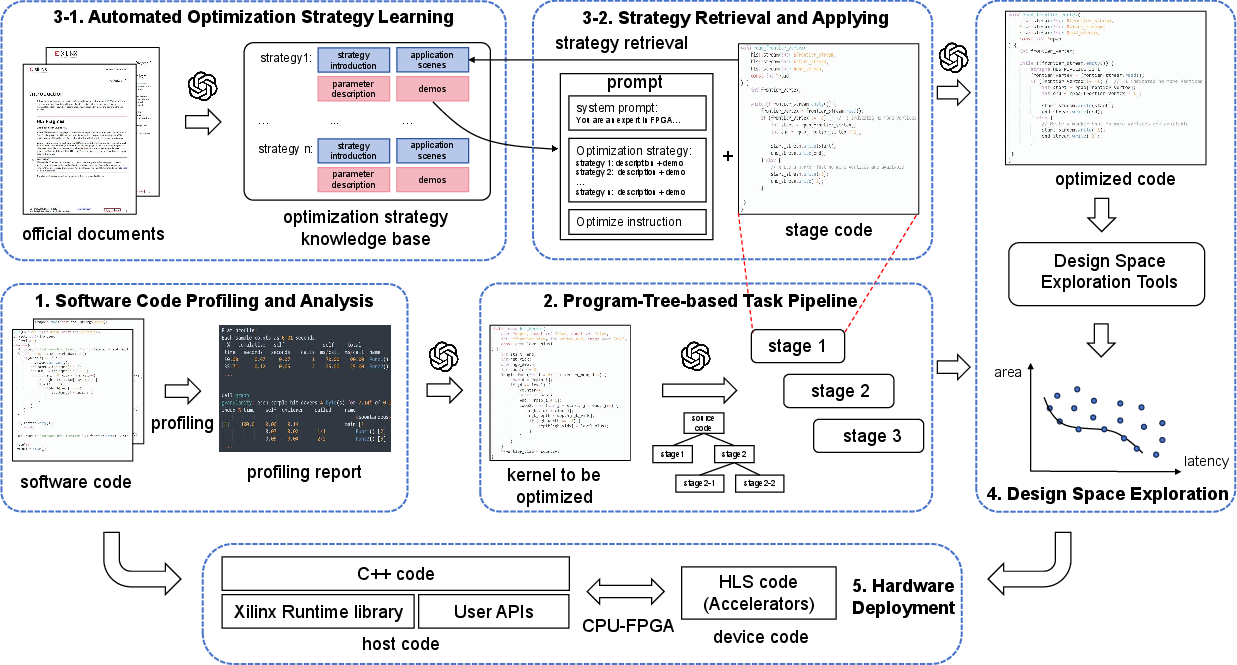

The paper "HLSPilot: LLM-based High-Level Synthesis" presents a methodology that uses large language models (LLMs) to bridge the semantic gap between software programming and hardware design. The framework, HLSPilot, transforms sequential C/C++ code into optimized High-Level Synthesis (HLS) code for deployment on hybrid CPU-FPGA architectures, streamlining the acceleration of high-level applications.

Framework Overview

HLSPilot integrates several components to automate hardware acceleration. It first profiles the C/C++ code to identify performance bottlenecks, then applies LLMs to restructure the program so that it can be executed efficiently in hardware through HLS.

Figure 1: HLSPilot framework.

The framework uses a design space exploration (DSE) tool to fine-tune pragma parameters, a step that is essential for achieving high performance in FPGA designs. HLSPilot is architected to fully automate the transition from software code to hardware acceleration, lowering the barrier for software engineers to engage in hardware development.
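To make the idea of tuning pragma parameters concrete, the following is a minimal sketch of an exhaustive design space search. It is not the DSE tool used by HLSPilot: the `DesignPoint` and `Estimate` types, the `estimate` cost model, and all constants (the 10-cycle overhead, 3 DSPs per lane, the candidate factor list) are hypothetical assumptions chosen only for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hypothetical design point: one value per tunable pragma parameter.
struct DesignPoint {
    int unroll_factor;     // would map to an unroll pragma factor
    int partition_factor;  // would map to an array partition factor
};

// Hypothetical estimate produced by the toy cost model below.
struct Estimate {
    double latency_cycles;
    int dsp_used;
};

// Toy analytical model (an assumption, not a real HLS estimator):
// parallelism is limited by the smaller of the two factors, and each
// unrolled lane is assumed to cost 3 DSP blocks.
Estimate estimate(const DesignPoint& p, int trip_count) {
    int parallel = std::min(p.unroll_factor, p.partition_factor);
    double latency = static_cast<double>(trip_count) / parallel + 10.0;
    int dsp = 3 * p.unroll_factor;
    return {latency, dsp};
}

// Exhaustively search the candidate factors and keep the lowest-latency
// point that fits the DSP budget. Ties keep the earlier (smaller) point.
DesignPoint explore(int trip_count, int dsp_budget) {
    assert(dsp_budget >= 3);  // the baseline point {1, 1} must be feasible
    const std::vector<int> factors = {1, 2, 4, 8, 16};
    DesignPoint best{1, 1};
    double best_latency = estimate(best, trip_count).latency_cycles;
    for (int u : factors) {
        for (int p : factors) {
            DesignPoint cand{u, p};
            Estimate e = estimate(cand, trip_count);
            if (e.dsp_used <= dsp_budget && e.latency_cycles < best_latency) {
                best = cand;
                best_latency = e.latency_cycles;
            }
        }
    }
    return best;
}
```

Under this toy model, a budget of 24 DSPs and a trip count of 1024 selects an unroll factor of 8 with a matching partition factor. A real DSE flow would replace `estimate` with synthesis reports or a calibrated model, and would typically prune the space rather than enumerate it.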

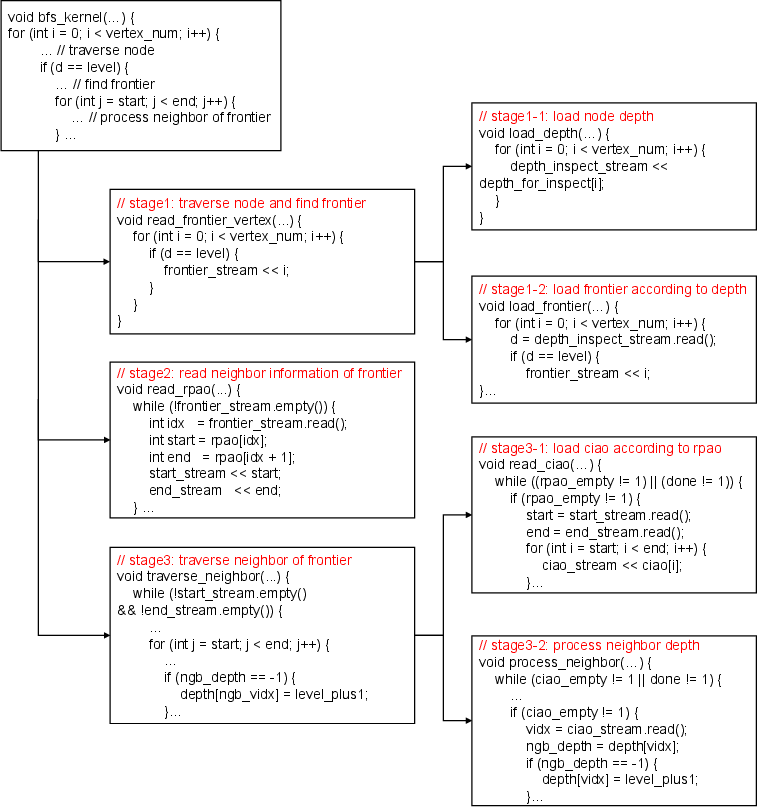

Program-Tree-Based Task Pipelining

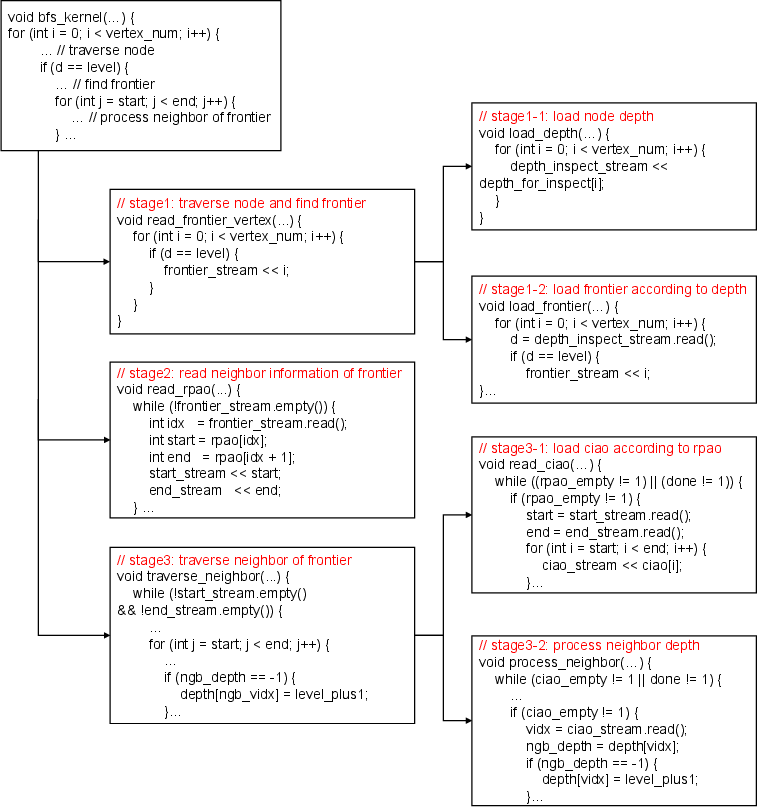

A core component of HLSPilot is its program-tree-based strategy, which decomposes complex algorithms into manageable tasks. This decomposition is crucial for pipelining tasks in a manner that maximizes parallel processing capabilities and minimizes resource contention.

Figure 2: An example of program tree construction. The LLM divides BFS with a nested loop into multiple dependent tasks for pipelined execution.

The LLM is employed to iteratively split compute kernels into smaller tasks using a set of predefined decomposition strategies, which allows efficient parallel task execution, ultimately reducing execution time on FPGA platforms.
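As an illustration of what such a decomposition might look like, the sketch below splits one BFS level into three dependent tasks connected by FIFOs. It is an assumed decomposition, not the one produced by HLSPilot: the task names, the CSR graph layout, and the use of `std::queue` as a software stand-in for a hardware stream are all choices made for this example. In an HLS flow the three tasks would typically run concurrently as a pipeline; here they run one after another so the code stays runnable with a standard compiler.

```cpp
#include <cassert>
#include <queue>
#include <vector>

// Graph in CSR form (assumed layout): the neighbors of vertex v are
// col_idx[row_ptr[v]] .. col_idx[row_ptr[v + 1] - 1].
struct Graph {
    std::vector<int> row_ptr;
    std::vector<int> col_idx;
};

using Fifo = std::queue<int>;  // software stand-in for a hardware FIFO

// Task 1: stream out the vertices that belong to the current frontier.
void read_frontier(const std::vector<int>& depth, int level, Fifo& frontier) {
    for (int v = 0; v < static_cast<int>(depth.size()); ++v)
        if (depth[v] == level) frontier.push(v);
}

// Task 2: for each frontier vertex, stream out its neighbors.
void fetch_neighbors(const Graph& g, Fifo& frontier, Fifo& neighbors) {
    while (!frontier.empty()) {
        int v = frontier.front();
        frontier.pop();
        for (int e = g.row_ptr[v]; e < g.row_ptr[v + 1]; ++e)
            neighbors.push(g.col_idx[e]);
    }
}

// Task 3: assign a depth to neighbors that have not been visited yet.
// Returns the number of newly visited vertices.
int update_depth(Fifo& neighbors, std::vector<int>& depth, int level) {
    int updated = 0;
    while (!neighbors.empty()) {
        int n = neighbors.front();
        neighbors.pop();
        if (depth[n] == -1) {
            depth[n] = level + 1;
            ++updated;
        }
    }
    return updated;
}

// Level-synchronous BFS built from the three tasks above.
// Unreachable vertices keep depth -1.
std::vector<int> bfs(const Graph& g, int root) {
    std::vector<int> depth(g.row_ptr.size() - 1, -1);
    assert(root >= 0 && root < static_cast<int>(depth.size()));
    depth[root] = 0;
    for (int level = 0;; ++level) {
        Fifo frontier, neighbors;
        read_frontier(depth, level, frontier);
        fetch_neighbors(g, frontier, neighbors);
        if (update_depth(neighbors, depth, level) == 0) break;
    }
    return depth;
}
```

Each task touches only its input and output FIFO plus one data structure, which is the property that lets a hardware pipeline overlap them: while one vertex's neighbors are being fetched, the depth of an earlier neighbor can already be updated.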

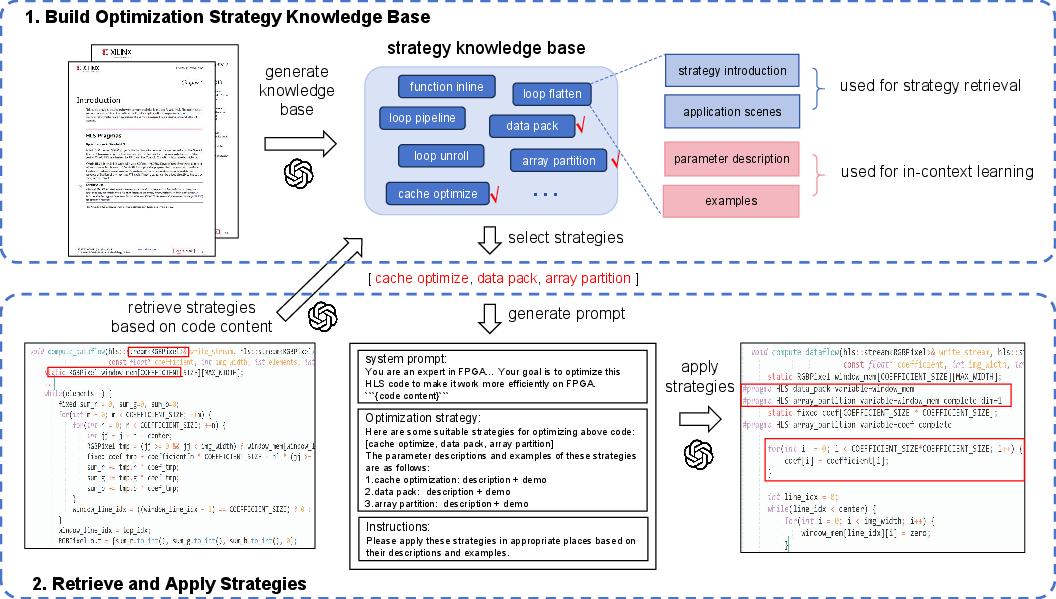

Automatic HLS Optimization

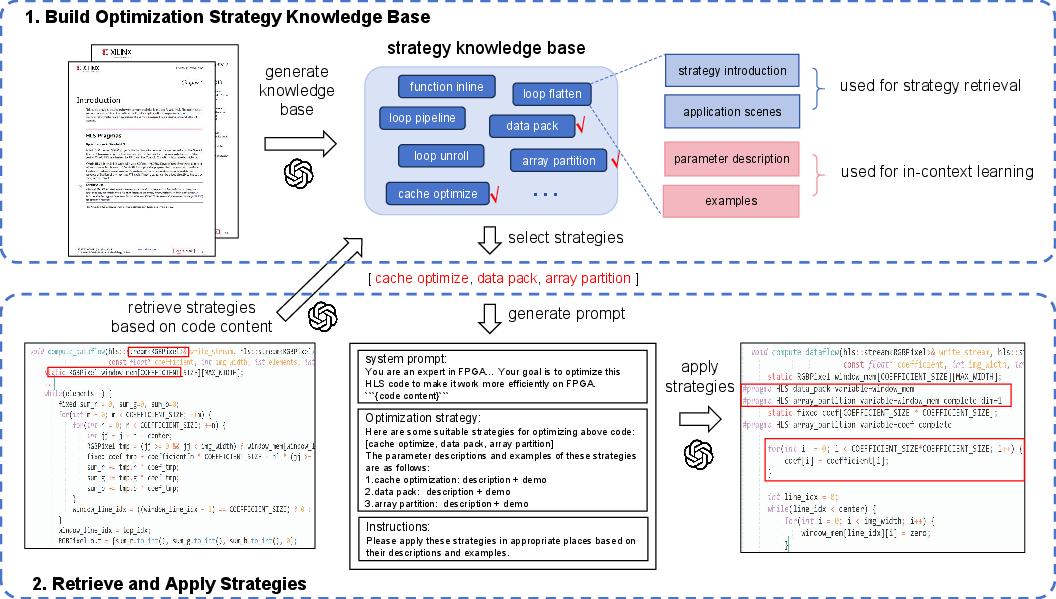

The framework employs a strategy similar to retrieval-augmented generation (RAG) to automatically learn and apply HLS optimization techniques. It extracts structured descriptions of optimization strategies from vendor documentation and matches the extracted strategies to the specific code patterns encountered in each task.

Figure 3: Automatic Optimization Strategies Learning and Application.

This automatic application of optimization strategies reduces the manual effort typically required in the HLS development process, allowing for efficient pragma usage and generating HLS designs that are competitive with human-optimized versions.
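For readers unfamiliar with HLS pragmas, the following sketch shows the kind of annotated code such a flow might emit for a simple dot product. The kernel, the constant `kN`, the local buffers, and the chosen factors are assumptions for illustration and are not taken from the paper. The pragma spellings follow commonly documented Vitis HLS forms and may differ between tool versions; a standard C++ compiler ignores them (possibly with a warning), so the function can still be checked in software.

```cpp
#include <cassert>

constexpr int kN = 64;  // assumed fixed problem size

// Dot product annotated with illustrative HLS pragmas.
int dot(const int a[kN], const int b[kN]) {
    int buf_a[kN];
    int buf_b[kN];
    // Split each local buffer into 4 banks so that 4 elements can be
    // read in the same cycle (factor chosen to match the unroll below).
#pragma HLS array_partition variable=buf_a cyclic factor=4 dim=1
#pragma HLS array_partition variable=buf_b cyclic factor=4 dim=1

    // Copy inputs into on-chip buffers, one element pair per cycle.
    for (int i = 0; i < kN; ++i) {
#pragma HLS pipeline II=1
        buf_a[i] = a[i];
        buf_b[i] = b[i];
    }

    // Multiply-accumulate with 4 lanes per iteration.
    int acc = 0;
    for (int i = 0; i < kN; ++i) {
#pragma HLS unroll factor=4
        acc += buf_a[i] * buf_b[i];
    }
    return acc;
}
```

The unroll factor and the partition factor are deliberately equal: unrolling beyond the number of memory banks would leave the extra lanes waiting for data. Parameters like these are what a subsequent design space exploration step would tune.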

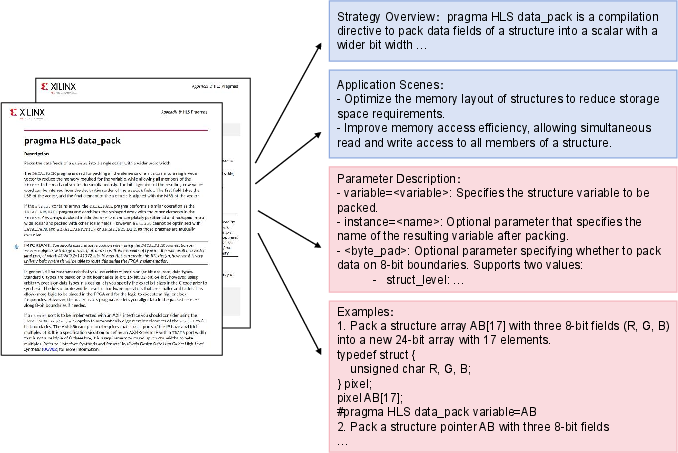

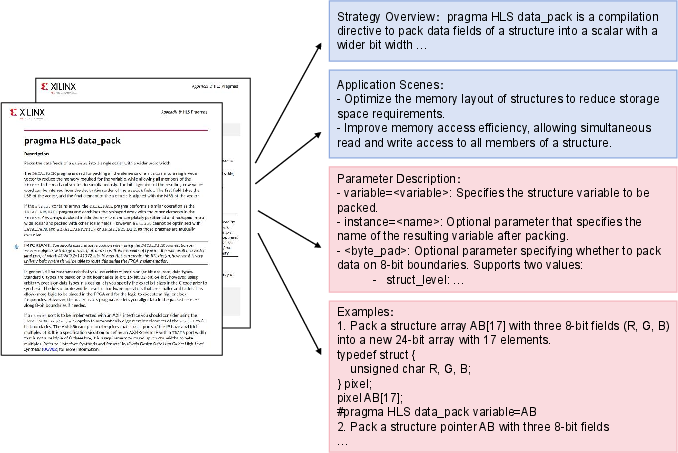

Figure 4: Structured information extracted by HLSPilot. The optimization strategy from documents is summarized into four parts: (1) strategy overview and (2) applicable scenarios for strategy retrieval; (3) parameter description and (4) examples for generating optimization prompt.
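The four-part record described in Figure 4 can be sketched as a small data structure with a retrieval step and a prompt-building step. This is a simplified illustration under stated assumptions: the `Strategy` type, the keyword-overlap `score`, and the prompt wording are hypothetical, and the actual framework relies on an LLM rather than substring matching to decide which strategy applies.

```cpp
#include <cassert>
#include <string>
#include <vector>

// One optimization strategy, mirroring the four parts in Figure 4.
struct Strategy {
    std::string overview;                // (1) strategy overview
    std::vector<std::string> scenarios;  // (2) applicable scenarios, as keywords here
    std::string parameters;              // (3) parameter description
    std::string example;                 // (4) usage example
};

// Hypothetical retrieval score: the number of scenario keywords that
// occur in the task description.
int score(const Strategy& s, const std::string& task) {
    int hits = 0;
    for (const auto& kw : s.scenarios)
        if (task.find(kw) != std::string::npos) ++hits;
    return hits;
}

// Returns the index of the best-matching strategy, or -1 if none matches.
int retrieve(const std::vector<Strategy>& kb, const std::string& task) {
    int best = -1;
    int best_score = 0;
    for (int i = 0; i < static_cast<int>(kb.size()); ++i) {
        int sc = score(kb[i], task);
        if (sc > best_score) {
            best = i;
            best_score = sc;
        }
    }
    return best;
}

// Builds an optimization prompt from parts (3) and (4). Using the overview
// as a title line is an assumption of this sketch.
std::string build_prompt(const Strategy& s, const std::string& code) {
    return "Strategy: " + s.overview + "\n" +
           "Parameters: " + s.parameters + "\n" +
           "Example:\n" + s.example + "\n" +
           "Apply the strategy to this code:\n" + code;
}
```

Separating the retrieval fields from the prompt fields keeps the matching step cheap, since only the short overview and scenario text needs to be compared against each task, while the longer parameter descriptions and examples are loaded only for the strategy that is actually applied.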

Experimental Evaluation

Experiments with HLSPilot on a series of benchmarks show that it can generate designs that rival or surpass manually optimized FPGA designs. HLSPilot-generated designs also run significantly faster than their unoptimized counterparts, which highlights the efficacy of LLMs in hardware optimization.

Conclusion

The HLSPilot framework represents a substantial advance in integrating LLMs into the high-level synthesis process. By automating the translation of software code into optimized hardware designs, HLSPilot makes hardware design workflows more efficient and gives software engineers a practical route to FPGA acceleration. The promising results indicate room for further development and refinement, and lay a foundation for future research on LLM-assisted hardware design.