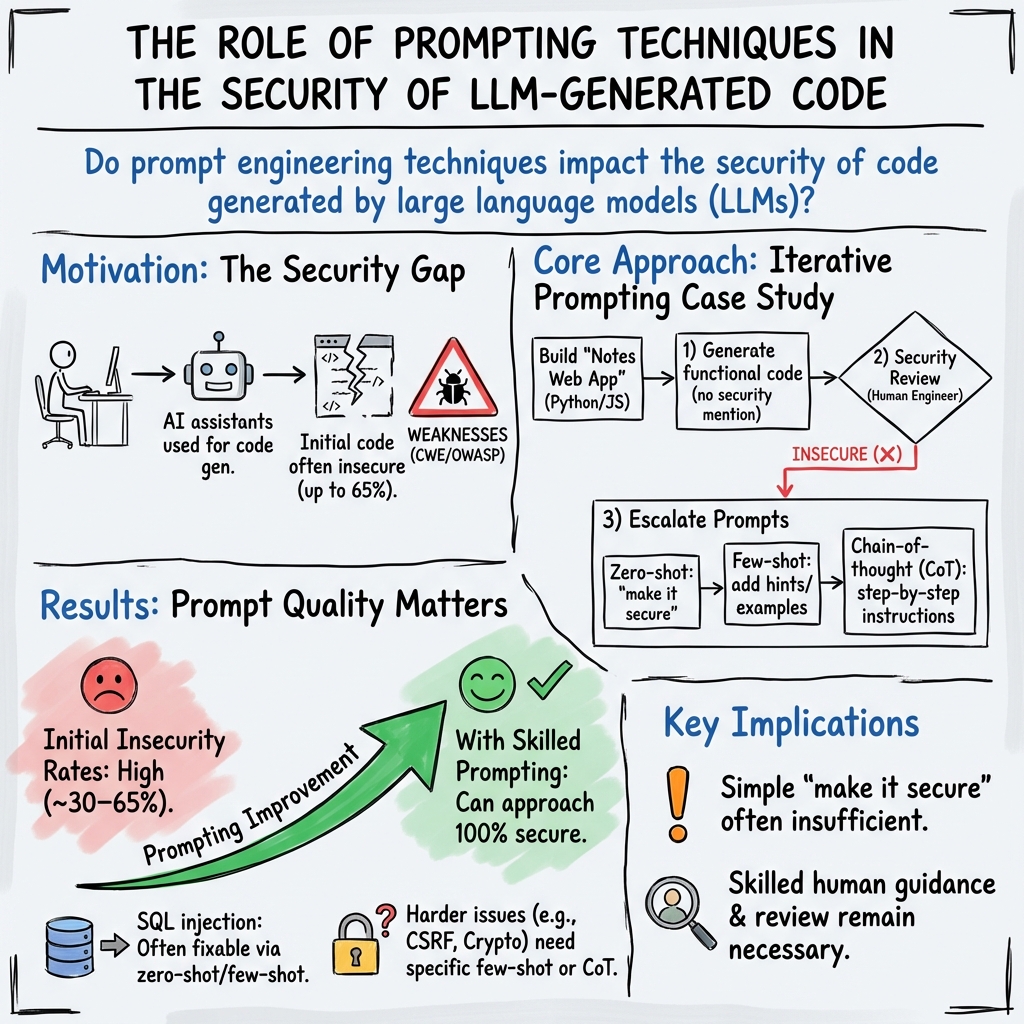

- The paper demonstrates that up to 65% of initially generated LLM code is insecure when assessed against the MITRE CWE catalogue.

- The study employs zero-shot, few-shot, and chain-of-thought prompt engineering to assess security improvements in multiple programming languages.

- The findings emphasize that expert guidance and precise prompts are crucial for transforming insecure code into secure, reliable software artifacts.

Summary of "You still have to study" -- On the Security of LLM generated code

The paper "You still have to study" -- On the Security of LLM generated code (2408.07106) analyzes the security of code generated by LLMs. The authors, Stefan Götz and Andreas Schaad, examine where AI-assisted code generation meets common security vulnerabilities. By investigating the output of several prominent AI code generation tools, including ChatGPT, Copilot, CodeLlama, and CodeWhisperer, the research assesses how far these models can generate secure code and how prompt quality influences code security.

Analysis of AI Code Assistants

The paper highlights the pervasive use of AI assistants in software development, noting that LLMs are increasingly used even by novice programmers for routine tasks. However, the code these LLMs generate often fails to meet established security standards. Using the MITRE CWE catalogue as the benchmark for evaluating code security, the study finds that as much as 65% of initially generated code is insecure. Nevertheless, with extensive guidance from security experts through enhanced prompting techniques, the generated code can become almost entirely secure.

Experiments in Prompt Engineering

Central to the research is the application of different prompt engineering techniques to evaluate their impact on code security. The authors employ zero-shot, few-shot, and chain-of-thought prompting across multiple languages, including Python and JavaScript. The findings show that while some LLMs have a latent ability to apply secure coding practices, how well they do so depends heavily on the specificity and clarity of the prompt. For instance, some models initially generated code with no protection against SQL injection or path traversal, but improved drastically when given targeted or iterative prompts emphasizing security measures.
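To make the three prompting styles concrete, here is a minimal sketch of how each prompt might be assembled. The task text, the worked example, and the function names are hypothetical illustrations; they are not the prompts used in the paper.

```python
from typing import List, Tuple

# Hypothetical task, used only for illustration.
TASK = "Write a Python function that looks up a user by name in a SQLite database."

# Hypothetical worked example for few-shot prompting: a (task, solution) pair.
EXAMPLES: List[Tuple[str, str]] = [
    (
        "Delete an order by id in SQLite.",
        'cur.execute("DELETE FROM orders WHERE id = ?", (order_id,))',
    ),
]


def zero_shot(task: str) -> str:
    """Zero-shot: the bare task, with no examples and no security hints."""
    return task


def few_shot(task: str, examples: List[Tuple[str, str]]) -> str:
    """Few-shot: prepend worked (task, solution) pairs before the real task."""
    parts = [f"Task: {t}\nSolution:\n{s}" for t, s in examples]
    parts.append(f"Task: {task}\nSolution:")
    return "\n\n".join(parts)


def chain_of_thought(task: str) -> str:
    """Chain-of-thought: ask the model to reason about threats before coding."""
    return (
        f"{task}\n"
        "Before writing code, list the ways untrusted input reaches the database, "
        "explain how each could be abused, and then write code that prevents them."
    )


if __name__ == "__main__":
    for prompt in (zero_shot(TASK), few_shot(TASK, EXAMPLES), chain_of_thought(TASK)):
        print(prompt, end="\n---\n")
```

The only difference between the variants is how much context and security-specific direction the model receives, which is the variable the study examines.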

Implications for Code Security

The research underscores that software developers using AI code assistants need a sound understanding of security principles. A key implication is that merely instructing an AI model to produce "secure" code is insufficient; the model needs guidance through specific security-related prompts. This insight matters for both academia and industry, signaling the need for deliberate prompt engineering and active developer involvement to ensure security is prioritized in AI-generated code artifacts.
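As an illustration of the security knowledge a developer needs in order to review generated code, the sketch below contrasts a SQL injection flaw (CWE-89) with its parameterized fix and adds a simple guard against path traversal (CWE-22). The function names and the table schema are assumptions made for this example and do not come from the paper.

```python
import sqlite3
from pathlib import Path


def find_user_unsafe(conn: sqlite3.Connection, name: str):
    # Vulnerable (CWE-89): user input is interpolated into the SQL text.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str):
    # Parameterized query: the driver passes `name` strictly as data.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


def resolve_inside(base_dir: Path, user_path: str) -> Path:
    # Guard against path traversal (CWE-22): resolve the path first,
    # then verify that the result is still inside the base directory.
    base = base_dir.resolve()
    target = (base / user_path).resolve()
    if target != base and base not in target.parents:
        raise ValueError("path escapes the base directory")
    return target


# Hypothetical in-memory database used only for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"

if __name__ == "__main__":
    print(find_user_unsafe(conn, payload))  # the injected condition matches every row
    print(find_user_safe(conn, payload))    # no user has this literal name
```

A prompt that names these specific weaknesses gives the model something concrete to address, which a generic request for "secure" code does not.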

Future Directions

The authors suggest several future research avenues, including the exploration of AI-assisted code generation in languages beyond Python and JavaScript, such as Rust and C#. They also propose user studies with computer science students to evaluate the educational impact of prompting methodologies. Additionally, integrating static analysis tools with automated feedback loops is a promising way to improve the security of LLM-generated code.
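One way such a static-analysis feedback loop could look is sketched below. This is an assumption-laden toy, not the authors' design: `find_issues` is a deliberately simple stand-in for a real analyzer, and `fake_model` is a hypothetical placeholder for an LLM call.

```python
import ast
from typing import Callable, List

# Hypothetical model outputs used to simulate two rounds of generation.
INSECURE = '''cur.execute(f"SELECT * FROM users WHERE name = '{name}'")'''
SECURE = '''cur.execute("SELECT * FROM users WHERE name = ?", (name,))'''


def find_issues(source: str) -> List[str]:
    """Toy static check: flag .execute(...) calls whose first argument is an
    f-string or a string built with an operator such as + or %."""
    issues = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
            and isinstance(node.args[0], (ast.JoinedStr, ast.BinOp))
        ):
            issues.append(
                f"line {node.lineno}: SQL built from a dynamic string (possible CWE-89)"
            )
    return issues


def refine(generate: Callable[[str], str], task: str, max_rounds: int = 3) -> str:
    """Regenerate code until the checker is clean or the round budget runs out.
    The last attempt is returned even if findings remain."""
    code = generate(task)
    for _ in range(max_rounds):
        issues = find_issues(code)
        if not issues:
            break
        prompt = task + "\nFix these findings:\n" + "\n".join(issues)
        code = generate(prompt)
    return code


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for an LLM: it only fixes the code when told what is wrong.
    return SECURE if "Fix these findings" in prompt else INSECURE


if __name__ == "__main__":
    print(refine(fake_model, "Look up a user by name."))
```

In practice the toy checker would be replaced by an established analyzer, and a human reviewer would still need to confirm that the reported findings and the resulting fixes are correct.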

Conclusion

The paper concludes that while LLMs have enhanced code generation, their ability to generate secure code depends on adept prompt engineering and the direct involvement of experts in secure coding practices. Developers must continue to engage critically with AI models to mitigate security risks. The paper's title captures this point: relying on AI does not absolve developers of the responsibility for ensuring code security.