- The paper presents the integration of LLMs into the claim verification pipeline, highlighting enhanced evidence retrieval and labeling techniques.

- Methodologies such as Retrieval-Augmented Generation, hierarchical prompting, and transfer learning are evaluated for improved accuracy and explainability.

- The survey identifies open challenges including handling irrelevant context, multilingual application gaps, and inherent knowledge conflicts in LLMs.

Claim Verification in the Age of LLMs

Abstract

This survey investigates claim verification systems, highlighting the evolution from traditional NLP-based methodologies to the current incorporation of LLMs. The study reviews key components of the claim verification pipeline and examines recent LLM advancements in evidence retrieval, prompt creation, transfer learning, and generation strategies. It identifies open challenges in handling irrelevant context, multilingual application gaps, and knowledge conflicts inherent in LLMs. Additionally, the survey catalogs critical datasets and shared tasks essential for benchmarking claim verification systems.

Introduction

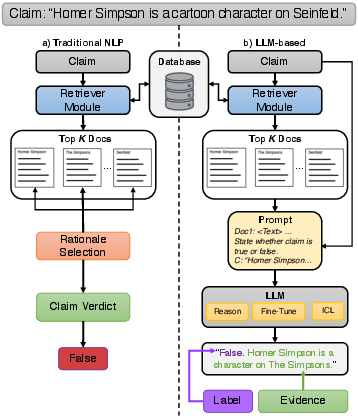

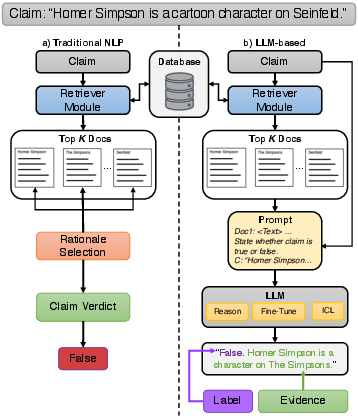

The proliferation of false information on social media and the internet underscores the need for effective claim verification systems. Existing NLP models are increasingly supplemented or replaced by LLMs through techniques like Retrieval-Augmented Generation (RAG), promising enhanced accuracy and the ability to handle massive datasets (Figure 1).

Figure 1: Comparison of traditional NLP-based and LLM-based claim verification systems.

Integrating LLMs can reduce error propagation and improve the explainability of claim verification systems, though hallucinations and susceptibility to outdated information remain problems. The survey by Dmonte et al. delineates the components of the verification pipeline and reviews recent LLM applications within it.

Claim Verification Pipeline

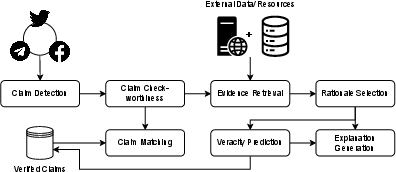

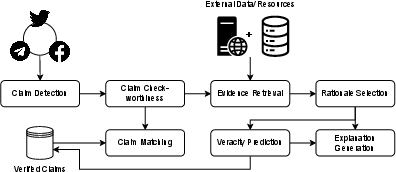

A typical claim verification pipeline includes several stages: claim detection, check-worthy claim identification, claim matching, document retrieval, rationale selection, veracity prediction, and explanation generation (Figure 2).

Figure 2: The archetypal claim verification pipeline and its main components.

Each stage contributes to the final verdict. Together, the stages distinguish factual assertions from opinions, identify previously verified claims, retrieve relevant evidence, assess veracity, and generate explanations. Progress on these tasks depends on suitable evaluation metrics and on a rapidly expanding set of datasets.
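The stage ordering described above can be sketched as a chain of functions over a shared state. This is only an illustration of the control flow: the names `STAGES`, `run_pipeline`, and `passthrough` are hypothetical, and each real stage would be a trained model or an LLM call rather than a placeholder.

```python
from typing import Any, Callable, Dict, List, Tuple

# Stage names in the order the text lists them (identifiers are illustrative).
STAGES: List[str] = [
    "claim_detection",
    "check_worthy_claim_identification",
    "claim_matching",
    "document_retrieval",
    "rationale_selection",
    "veracity_prediction",
    "explanation_generation",
]

State = Dict[str, Any]
Stage = Callable[[State], State]


def run_pipeline(text: str, stages: List[Tuple[str, Stage]]) -> State:
    """Pass one state dict through every stage in order and record the order."""
    state: State = {"input": text, "trace": []}
    for name, stage in stages:
        state = stage(state)
        state["trace"].append(name)
    return state


def passthrough(state: State) -> State:
    """Placeholder stage: a real system would add predictions to the state."""
    return state


placeholder_stages = [(name, passthrough) for name in STAGES]
result = run_pipeline("The Eiffel Tower is in Paris.", placeholder_stages)
print(result["trace"])
```

Because every stage consumes the output of the one before it, a mistake made early (for example, retrieving the wrong documents) carries through to the verdict, which is the error propagation the introduction refers to.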

LLM Approaches

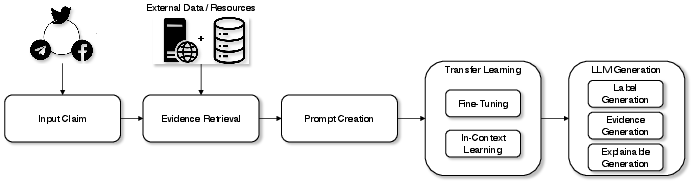

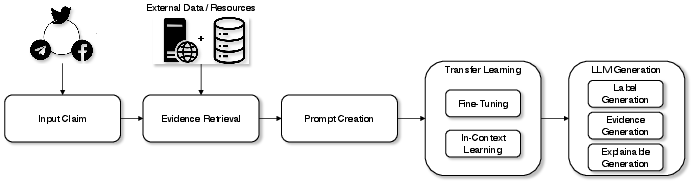

The incorporation of LLMs in claim verification enhances retrieval and generation capabilities, enabling precise veracity assessments via improved evidence analysis and robust prompt strategies (Figure 3).

Figure 3: Claim verification pipeline using LLMs. Instead of a traditional automatic claim verification model, this pipeline builds a prompt from the retrieved evidence and the input claim and passes it to the LLM, which generates a label, sentence-level evidence, and/or an explanation of its response.
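The prompt-construction step in Figure 3 might look roughly like the sketch below. The function name `build_verification_prompt`, the prompt wording, and the three-way label set are assumptions made for illustration; the survey does not prescribe a specific template.

```python
from typing import List

# Assumed three-way label set; real systems may use a different scheme.
LABELS = ("SUPPORTS", "REFUTES", "NOT ENOUGH INFO")


def build_verification_prompt(claim: str, evidence: List[str]) -> str:
    """Combine retrieved evidence and the input claim into a single prompt."""
    numbered = "\n".join(
        f"[{i}] {passage}" for i, passage in enumerate(evidence, start=1)
    )
    return (
        "Verify the claim using only the evidence below.\n\n"
        f"Evidence:\n{numbered}\n\n"
        f"Claim: {claim}\n\n"
        f"Answer with one label from: {', '.join(LABELS)}. "
        "Cite the evidence numbers you relied on and explain briefly."
    )


prompt = build_verification_prompt(
    "Marie Curie was born in Warsaw.",
    ["Marie Curie was born in Warsaw in 1867."],
)
print(prompt)
```

Numbering the evidence passages lets the model point back to specific sentences, which corresponds to the "sentence-level evidence" output in the figure.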

Evidence Retrieval Strategies

LLMs excel in complex, multi-hop claim verification tasks by employing RAG models that mitigate hallucinations and optimize evidence selection. Iterative strategies and sub-claim decomposition further refine retrieval effectiveness by addressing semantic gaps and enhancing document ranking processes.
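A toy sketch of sub-claim decomposition followed by per-sub-claim retrieval is given below. It makes strong simplifying assumptions: the retriever ranks by word overlap rather than using a dense or learned ranker, and the decomposition function is passed in by the caller (in practice it would typically be an LLM call). All function names are hypothetical.

```python
import re
from typing import Callable, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: List[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(
        corpus, key=lambda doc: len(query_tokens & tokenize(doc)), reverse=True
    )
    return ranked[:k]


def retrieve_for_claim(
    claim: str,
    corpus: List[str],
    decompose: Callable[[str], List[str]],
    k: int = 1,
) -> List[str]:
    """Retrieve evidence for each sub-claim and merge without duplicates."""
    evidence: List[str] = []
    for sub_claim in decompose(claim):
        for doc in retrieve(sub_claim, corpus, k):
            if doc not in evidence:
                evidence.append(doc)
    return evidence


corpus = [
    "Marie Curie was born in Warsaw in 1867.",
    "Marie Curie won Nobel Prizes in physics and chemistry.",
    "The Eiffel Tower is in Paris.",
]
claim = "Marie Curie was born in Warsaw and won two Nobel Prizes"
# Stand-in decomposition: split on " and " (an LLM would do this in practice).
evidence = retrieve_for_claim(claim, corpus, lambda c: c.split(" and "))
print(evidence)
```

Querying with each sub-claim separately is what lets a two-hop claim pull in one document per hop, instead of relying on a single query to match both.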

Prompt Creation Strategies

Hierarchical and self-sufficient prompting techniques improve LLM performance by giving practitioners a configurable way to adjust the model's reasoning steps at inference time. Combined with feedback-informed refinement, these strategies make the generated outputs more adaptable and relevant.

Transfer Learning Strategies

Fine-tuning and in-context learning represent significant advancements, leveraging rich external datasets to improve the alignment and accuracy of LLMs. Multi-stage approaches utilizing zero-shot and few-shot settings demonstrate the models’ versatility across varied domains.
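The difference between zero-shot and few-shot in-context learning comes down to whether labeled demonstrations are placed in the prompt. The sketch below assumes a simple "Claim / Label" template and a three-way label set; `build_icl_prompt` and the template wording are illustrative, not taken from the survey.

```python
from typing import List, Tuple

Demo = Tuple[str, str]  # (claim, label) pair used as a demonstration


def build_icl_prompt(claim: str, demos: List[Demo]) -> str:
    """Build a zero-shot prompt (no demos) or a few-shot prompt (with demos)."""
    parts = ["Classify the statement as SUPPORTS, REFUTES, or NOT ENOUGH INFO."]
    for demo_claim, demo_label in demos:
        parts.append(f"Claim: {demo_claim}\nLabel: {demo_label}")
    # The final block is left open so the model completes the label.
    parts.append(f"Claim: {claim}\nLabel:")
    return "\n\n".join(parts)


zero_shot = build_icl_prompt("Water boils at 100 degrees Celsius at sea level.", [])
few_shot = build_icl_prompt(
    "Water boils at 100 degrees Celsius at sea level.",
    [
        ("The Eiffel Tower is in Paris.", "SUPPORTS"),
        ("The Eiffel Tower is in Berlin.", "REFUTES"),
    ],
)
print(few_shot)
```

Fine-tuning, by contrast, changes the model weights using labeled data, so it needs a training loop rather than a prompt template.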

LLM Generation Strategies

Techniques such as minimal evidence selection and the use of specialized transformers improve the accuracy of label and evidence generation. Complemented by chain-of-thought (CoT) reasoning, which strengthens explanation quality, these advances position LLMs at the forefront of claim verification systems.

Evaluation and Benchmarking

Well-curated datasets and robust metrics form the backbone of claim verification model evaluation (Table 1). Shared tasks such as FEVER and CLEF CheckThat!, together with cross-domain evaluation, support comprehensive benchmarking, which is essential for validating model performance and factual accuracy.
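As one concrete example of a metric, the sketch below computes macro-averaged F1 over veracity labels in plain Python. Macro-F1 is chosen here as an assumption because it is widely used for multi-class label prediction; the summary above does not state which metrics the survey's Table 1 lists.

```python
from typing import List


def macro_f1(gold: List[str], pred: List[str]) -> float:
    """Average the per-label F1 scores, weighting every label equally."""
    if not gold or len(gold) != len(pred):
        raise ValueError("gold and pred must be non-empty and of equal length")
    labels = sorted(set(gold) | set(pred))
    scores = []
    for label in labels:
        tp = sum(g == label and p == label for g, p in zip(gold, pred))
        fp = sum(g != label and p == label for g, p in zip(gold, pred))
        fn = sum(g == label and p != label for g, p in zip(gold, pred))
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the label never occurs.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


gold = ["SUPPORTS", "SUPPORTS", "REFUTES", "NOT ENOUGH INFO"]
pred = ["SUPPORTS", "REFUTES", "REFUTES", "NOT ENOUGH INFO"]
print(round(macro_f1(gold, pred), 4))  # 0.7778
```

Macro averaging treats rare labels the same as frequent ones, which matters when a dataset has few refuted or unverifiable claims.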

Open Challenges

Addressing irrelevant context and knowledge conflicts is pivotal for improving LLM robustness. Multilingual capability also needs attention, since models remain largely constrained to English datasets. Continued research in these areas is vital for advancing claim verification methodologies.

Conclusion

This survey highlights the transformative impact of LLMs in claim verification processes, presenting comprehensive insights into current methodologies and datasets. As LLM technologies evolve, they promise notable improvements in handling misinformation, ensuring veracity assessments are accurate and grounded. This survey lays a foundation for future exploration, aiming to enhance LLM efficiency and applicability across diverse claim verification scenarios.