- The paper introduces LLaMA-Omni, which integrates a pretrained speech encoder, speech adaptor, LLM, and a non-autoregressive streaming decoder for simultaneous text and speech responses.

- It leverages Whisper-large-v3 and Llama-3.1-8B-Instruct to achieve minimal latencies (as low as 226ms) and high alignment accuracy between audio and text outputs.

- The study demonstrates practical applications for assistive, real-time interaction systems through its innovative two-stage training strategy and robust architecture.

LLaMA-Omni: Seamless Speech Interaction with LLMs

Introduction

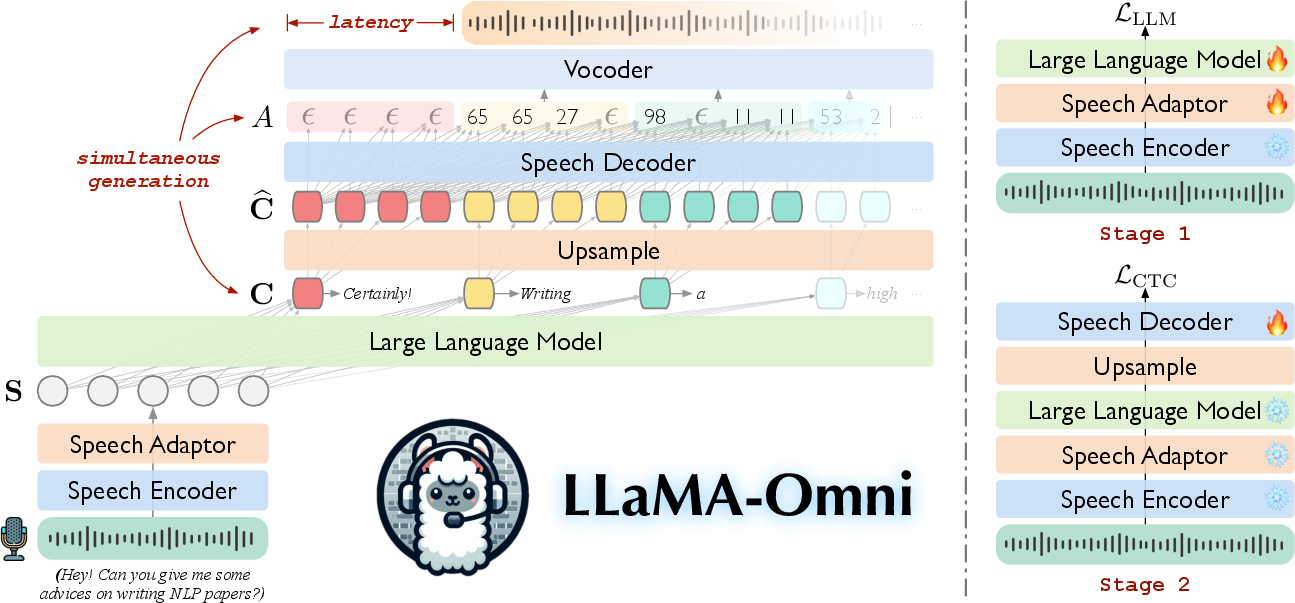

The paper introduces LLaMA-Omni, a novel model architecture designed to facilitate low-latency and high-quality speech interaction with LLMs. Unlike traditional LLMs that primarily support text-based interactions, LLaMA-Omni aims to address the challenge of seamless speech communication by integrating components that eliminate the need for intermediate text transcription. The architecture includes a speech encoder, a speech adaptor, a LLM, and a streaming speech decoder, enabling it to simultaneously generate text and speech responses from speech instructions with minimal latency. This advancement holds significant promise for enhancing user interactions with LLMs, especially in scenarios where speech is a more natural or necessary medium of communication.

Figure 1: LLaMA-Omni can simultaneously generate text and speech responses based on the speech instruction, with extremely low response latency.

Model Architecture

LLaMA-Omni is built upon a series of components: a pretrained speech encoder, a speech adaptor, a LLM, and a streaming speech decoder. The speech encoder utilizes Whisper-large-v3 to capture meaningful speech representations, translating raw audio instructions into a sequence of features without modifying the pretrained parameters. Subsequently, a trainable speech adaptor compresses and projects these features into the LLM's embedding space, effectively bridging the gap between audio inputs and textual comprehension.

The LLM employed is Llama-3.1-8B-Instruct, capable of generating coherent text responses directly from the speech-derived embeddings. The architecture's distinctiveness is underlined by its non-autoregressive (NAR) streaming decoder which, utilizing a Transformer architecture paired with connectionist temporal classification (CTC), concurrently generates speech units with text prefixes, maintaining an efficient translation of thought into speech and text.

Figure 2: Left: Model architecture of LLaMA-Omni. Right: Illustration of the two-stage training strategy for LLaMA-Omni.

Training and Data

The model's efficacy is further bolstered by a meticulously curated dataset, InstructS2S-200K, derived by rewriting existing text-based instructions into formats optimal for spoken interaction. This dataset utilized advanced generative models to produce speech instructions and synthesized responses, ensuring that LLaMA-Omni is trained on data that mimic real-world speech scenarios. Training is executed in two phases: the initial stage focuses on aligning the model with text responses, followed by fine-tuning where the model learns to map its internal state to phonetic units for speech synthesis.

Experimental Evaluation

Experimental results highlight LLaMA-Omni's superiority over existing models like SpeechGPT and audiolinguistic models such as Audiopalm. LLaMA-Omni not only excels in content fidelity and stylistic appropriateness but also achieves exceptionally low response latencies, as minimal as 226ms, and high alignment accuracy between text and speech outputs. This performance ensures that the model can efficiently and effectively handle speech-based instruction without the latency drawbacks of sequential processing approaches.

Implications and Future Work

LLaMA-Omni's architecture offers a promising path towards broader, more naturalistic interactions with LLMs through speech. Its ability to concurrently generate text and speech opens new avenues for application in assistive technologies, real-time translation, and interactive voice response systems. Future research may focus on expanding the model's capabilities to further improve the expressiveness of generated speech and to support even more dynamic interaction scenarios, potentially exploring multimodal enhancements that integrate visual or gestural data alongside auditory inputs.

Conclusion

LLaMA-Omni represents a significant step forward in the integration of speech into interactive LLMs. By leveraging the latest advancements in model architecture and training, it achieves a harmonious blend of low-latency response and high-quality interaction, setting a new standard for speech-enabled LLMs. Its efficiency in training and deployment further suggests that such models could be rapidly integrated into existing systems, advancing the field of applied linguistic AI.