- The paper introduces Phy124, a novel framework that integrates diffusion-based 3D Gaussian generation with MPM-driven dynamics to produce realistic 4D content in about 39.5 seconds.

- The method employs a two-stage process that first creates explicit 3D representations and then applies physical simulations to ensure dynamics that adhere to natural physical laws.

- Experimental results show Phy124's superior fidelity and temporal consistency, highlighting its potential for application in gaming, VR, and animation.

Phy124: Fast Physics-Driven 4D Content Generation from a Single Image

Introduction

The paper "Phy124: Fast Physics-Driven 4D Content Generation from a Single Image" presents an approach to generating dynamic 3D objects, referred to as 4D content, from a single static image. It targets two shortcomings of existing methods, which typically rely heavily on diffusion models: the generated motion often violates physical laws, and generation is slow. Phy124 integrates physical simulation directly into the 4D generation process, so that the generated content follows natural physical laws while inference time is reduced.

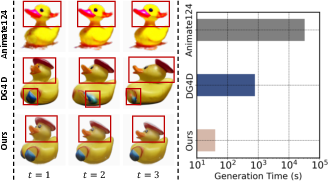

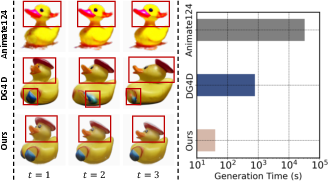

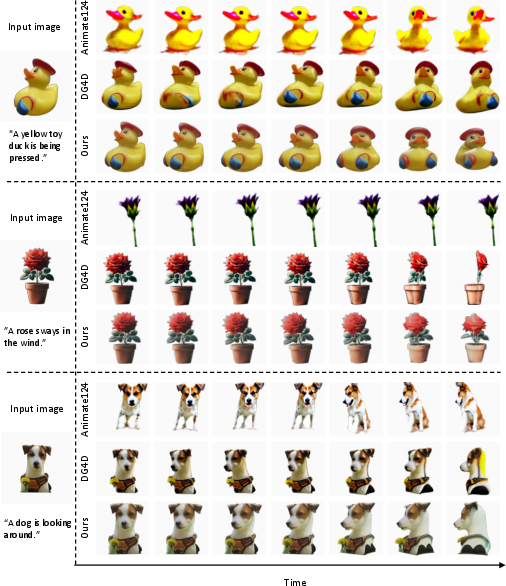

Figure 1: The figure shows a duck being pressed. Animate124 struggles to produce effective motion and often results in abnormal appearances in the 4D content; for instance, the duck in the figure has two beaks. On the other hand, DG4D produces motion that does not adhere to physical laws, such as the abnormal deformations observed in the duck's head and blue wing. In contrast, our approach can generate 4D content with greater physical accuracy. Moreover, our method reduces the generation time to an average of just 39.5 seconds.

Methodology

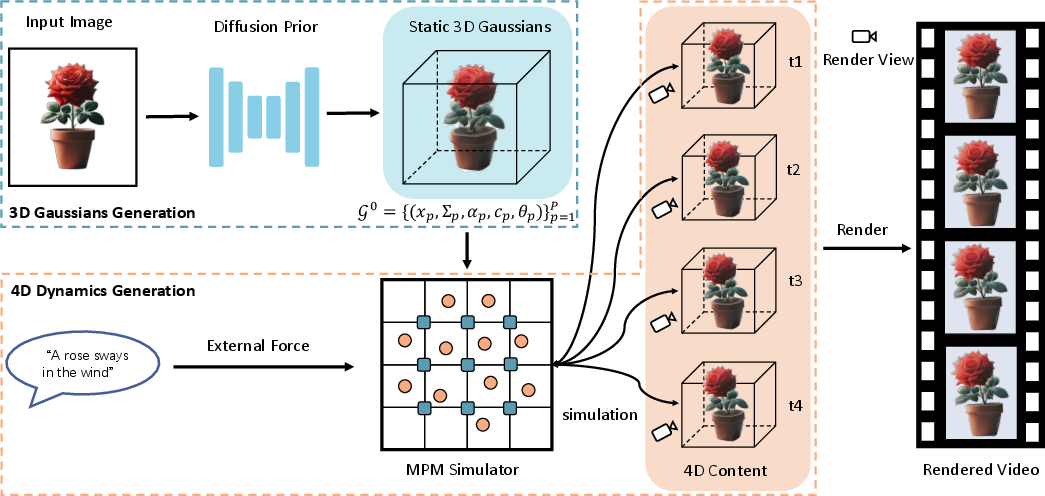

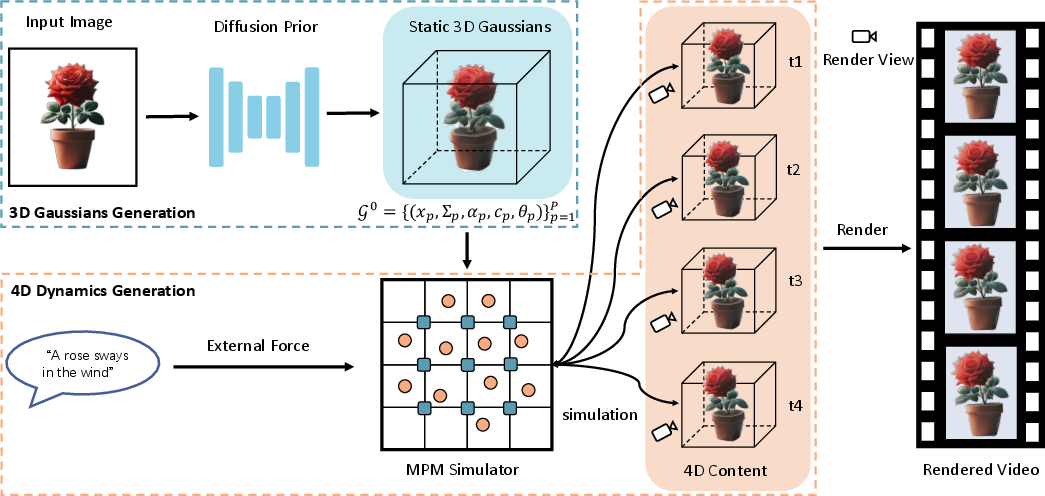

The Phy124 framework consists of two main stages: 3D Gaussians Generation and 4D Dynamics Generation. In the first stage, a static set of 3D Gaussians is generated from a single input image with the help of image diffusion models. The second stage applies the Material Point Method (MPM) to introduce dynamics to this static 3D object, producing 4D content.

Figure 2: Framework of Phy124. In the 3D Gaussians Generation stage, static 3D Gaussians are generated from an input image under the guidance of a diffusion model. In the 4D Dynamics Generation stage, each 3D Gaussian kernel is treated as a particle within a continuum and assigned physical properties (e.g., density and mass). MPM is then used to introduce dynamics to the static 3D Gaussians. Users can steer the MPM simulator toward a desired outcome by adjusting the external forces.
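The two-stage structure described above can be sketched as a small driver function. This is only an illustrative skeleton, not the authors' code: `image_to_4d`, `toy_generator`, and `toy_step` are hypothetical names, the "Gaussians" are reduced to a list of kernel centres, and the toy step simply shifts every centre by the force vector instead of running a real simulator.

```python
from typing import Callable, List, Tuple

Vec3 = Tuple[float, float, float]
Gaussians = List[Vec3]  # toy stand-in: only the kernel centres are tracked


def image_to_4d(
    image: object,
    generate_gaussians: Callable[[object], Gaussians],
    step: Callable[[Gaussians, Vec3], Gaussians],
    external_force: Vec3,
    n_frames: int,
) -> List[Gaussians]:
    """Stage 1 builds a static state; stage 2 advances it frame by frame."""
    state = generate_gaussians(image)       # stage 1: static 3D Gaussians
    frames = [state]
    for _ in range(n_frames - 1):           # stage 2: simulated dynamics
        state = step(state, external_force)
        frames.append(state)
    return frames


# Hypothetical stand-ins for the diffusion-guided generator and the simulator.
def toy_generator(image: object) -> Gaussians:
    return [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]


def toy_step(state: Gaussians, force: Vec3) -> Gaussians:
    return [(x + force[0], y + force[1], z + force[2]) for x, y, z in state]


frames = image_to_4d(None, toy_generator, toy_step, (0.0, -0.1, 0.0), n_frames=14)
print(len(frames))  # one state per time step
```

Passing the generator and the simulator step as arguments keeps the two stages decoupled, which mirrors the description that the first stage only has to deliver an explicit 3D representation for the second stage to animate.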

3D Gaussians Generation

This phase employs diffusion models to generate isotropic Gaussian kernels, which act as explicit representations of 3D objects. These explicit representations facilitate subsequent dynamic simulations by allowing the application of physical properties to the Gaussian kernels, interpreted as particles in space.
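As a minimal sketch of what "interpreting a kernel as a particle" could look like, the snippet below attaches a volume and a mass to each kernel. The class and function names are hypothetical, and the volume formula (the ellipsoid spanned by the per-axis scales) is an assumption made for illustration; the source does not state how volume or mass is derived.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class GaussianKernel:
    mean: Vec3       # kernel centre
    scale: Vec3      # per-axis extent; equal entries for an isotropic kernel
    opacity: float


@dataclass
class Particle:
    position: Vec3
    velocity: Vec3
    volume: float
    mass: float


def kernel_to_particle(kernel: GaussianKernel, density: float) -> Particle:
    """Treat one kernel as a continuum particle with mass = density * volume."""
    sx, sy, sz = kernel.scale
    # Assumption: approximate the kernel's volume by an ellipsoid with
    # semi-axes equal to its scales.
    volume = 4.0 / 3.0 * math.pi * sx * sy * sz
    return Particle(
        position=kernel.mean,
        velocity=(0.0, 0.0, 0.0),   # the generated object starts at rest
        volume=volume,
        mass=density * volume,
    )


particle = kernel_to_particle(
    GaussianKernel(mean=(0.0, 0.5, 0.0), scale=(1.0, 1.0, 1.0), opacity=0.9),
    density=3.0,
)
```

Because the representation is explicit, the conversion is a per-kernel operation and needs no extra optimization step before simulation.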

4D Dynamics Generation

In this stage, each Gaussian kernel is treated as an MPM particle and assigned physical properties such as mass and velocity. The particles' motion is then computed by simulation, so the resulting dynamics are governed by physical laws rather than predicted by a generative model. This is the main departure from diffusion-based 4D pipelines: motion comes from a simulator, not from a video diffusion model.
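To make the particle and grid interplay of MPM concrete, here is a deliberately reduced sketch of one time step in one dimension: particle-to-grid transfer, a grid velocity update, and grid-to-particle transfer. It is not the simulator used in the paper. Internal stress (the constitutive model) is omitted, so only a uniform external acceleration acts, and the function names, the linear shape functions, and the PIC-style transfer are assumptions chosen for brevity.

```python
import math
from typing import List, Tuple


def linear_weights(x: float, dx: float) -> Tuple[Tuple[int, float], Tuple[int, float]]:
    """Two neighbouring grid nodes and their hat-function weights (sum to 1)."""
    i = int(math.floor(x / dx))
    frac = x / dx - i
    return (i, 1.0 - frac), (i + 1, frac)


def mpm_step(
    xs: List[float], vs: List[float], ms: List[float],
    accel: float, dt: float, dx: float, n_nodes: int,
) -> Tuple[List[float], List[float]]:
    """One explicit step; particles must lie in [0, (n_nodes - 1) * dx)."""
    grid_m = [0.0] * n_nodes
    grid_mv = [0.0] * n_nodes

    # Particle-to-grid: scatter mass and momentum to neighbouring nodes.
    for x, v, m in zip(xs, vs, ms):
        for i, w in linear_weights(x, dx):
            grid_m[i] += w * m
            grid_mv[i] += w * m * v

    # Grid update: momentum -> velocity, then apply the external acceleration.
    # (A full MPM step would also add internal stress forces here.)
    grid_v = [0.0] * n_nodes
    for i in range(n_nodes):
        if grid_m[i] > 0.0:
            grid_v[i] = grid_mv[i] / grid_m[i] + dt * accel

    # Grid-to-particle: gather updated velocities and advect the particles.
    new_vs = [sum(w * grid_v[i] for i, w in linear_weights(x, dx)) for x in xs]
    new_xs = [x + dt * v for x, v in zip(xs, new_vs)]
    return new_xs, new_vs


xs0, vs0, ms0 = [0.25, 0.31, 0.62], [1.0, 1.0, 1.0], [1.0, 2.0, 3.0]
xs1, vs1 = mpm_step(xs0, vs0, ms0, accel=-9.8, dt=0.01, dx=0.1, n_nodes=16)
```

With all particles sharing one initial velocity, every particle ends the step with that velocity plus `dt * accel`, which is a quick sanity check that the two transfers are consistent with each other.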

Experimental Results

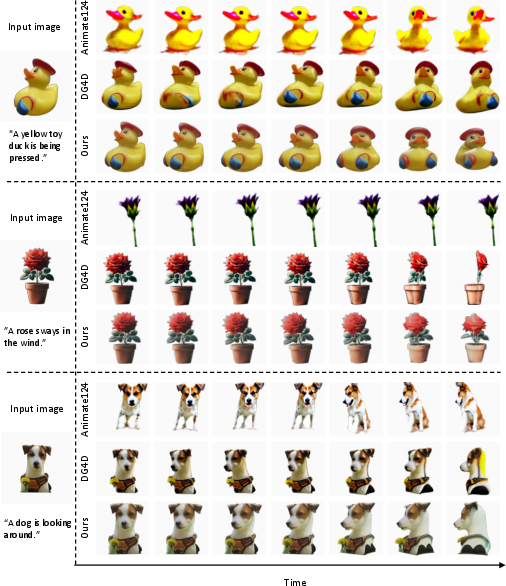

Phy124 is evaluated against leading image-to-4D approaches, namely Animate124 and DreamGaussian4D (DG4D). Both the qualitative and the quantitative comparisons indicate that Phy124 generates 4D content with higher fidelity, better physical accuracy, and stronger temporal consistency than these baselines.

Figure 3: Qualitative comparison with the baseline methods for image-to-4D generation. The description below the input image outlines the 4D content the user aims to generate. For each method, 14 frames of 4D content are generated, and every second frame is selected for display, showing a total of seven frames. Additionally, to compare geometric and temporal consistency across multiple views, the rendering perspective will change with each time step.
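The frame selection described in the caption is simple arithmetic: taking every second frame out of 14 leaves seven. The sketch below also pairs each shown frame with a camera angle that changes with the time step. The function name, the choice to start from the first frame, and the 30-degree azimuth increment are assumptions for illustration, since the caption does not specify them.

```python
from typing import List, Tuple


def select_display_frames(
    n_frames: int = 14, stride: int = 2, azimuth_step_deg: float = 30.0
) -> List[Tuple[int, float]]:
    """Return (frame_index, camera_azimuth_in_degrees) for each shown frame."""
    return [
        (t, (t * azimuth_step_deg) % 360.0)   # the view rotates with time step t
        for t in range(0, n_frames, stride)
    ]


shown = select_display_frames()
print(len(shown))  # number of displayed frames
```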

Practical Implications and Future Directions

Integrating physical simulation into 4D content generation is relevant to fields such as gaming, VR, and animation, where realistic dynamics matter. Because motion is simulated rather than generated by a diffusion model, generation is also fast, averaging about 39.5 seconds per result, which improves practical applicability. Future work may explore finer-grained control through user-defined parameters, increasing controllability while preserving physical plausibility.

Figure 4: 4D content generated by applying different external forces. f denotes the external forces applied along the x, y, and z directions.
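As a rough illustration of how the force vector f steers the result, the sketch below integrates a single free particle under a constant force and compares two choices of f. This is a toy stand-in for the simulator: the function name `displace`, the semi-implicit Euler integrator, and the numeric values are all assumptions.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def displace(position: Vec3, mass: float, force: Vec3, dt: float, n_steps: int) -> Vec3:
    """Integrate one free particle, starting at rest, under a constant force."""
    x = list(position)
    v = [0.0, 0.0, 0.0]
    for _ in range(n_steps):
        for axis in range(3):
            v[axis] += dt * force[axis] / mass   # velocity update: a = f / m
            x[axis] += dt * v[axis]              # position update with new velocity
    return (x[0], x[1], x[2])


start = (0.0, 0.0, 0.0)
pushed_sideways = displace(start, mass=1.0, force=(1.0, 0.0, 0.0), dt=0.1, n_steps=10)
pressed_down = displace(start, mass=1.0, force=(0.0, -1.0, 0.0), dt=0.1, n_steps=10)
```

The same starting state ends up displaced along different axes depending only on f, which is the kind of user control the figure illustrates.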

Conclusion

Phy124 represents a methodological advance in 4D content generation: it combines diffusion-based 3D Gaussian generation with physics-based simulation, and it outperforms the compared baselines in both efficiency and fidelity. For the dynamics, the approach replaces diffusion models with simulation, which yields physically coherent motion and allows rapid, controllable generation. Future work may focus on improving the flexibility and perceptual quality of the generated content through more advanced simulation control or improved Gaussian representations.

Figure 5: 4D content generated using different 3D generation methods.