- The paper presents a transformer-based model that redefines self-attention using query prototypes for effective knowledge graph reasoning.

- It incorporates structure-aware modules and linearized attention to significantly reduce computational complexity and enhance scalability.

- Experimental results on benchmarks like FB15k-237 and WN18RR demonstrate superior performance in both transductive and inductive reasoning tasks.

In the paper titled "KnowFormer: Revisiting Transformers for Knowledge Graph Reasoning" (2409.12865), the authors propose a transformer-based method for knowledge graph reasoning. The task matters because real-world knowledge graphs (KGs) are inherently incomplete, so missing facts must be inferred from the facts that are present. KnowFormer aims to overcome the limitations of path-based methods and to use the capabilities of transformers to improve reasoning performance in both transductive and inductive settings.

Motivation

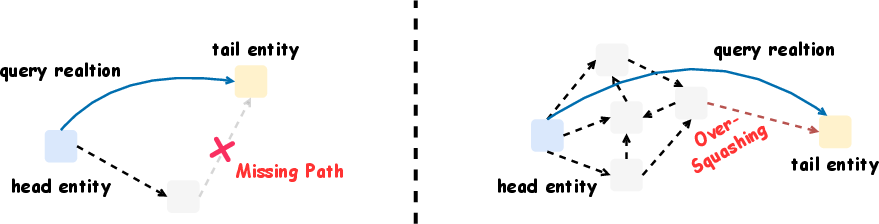

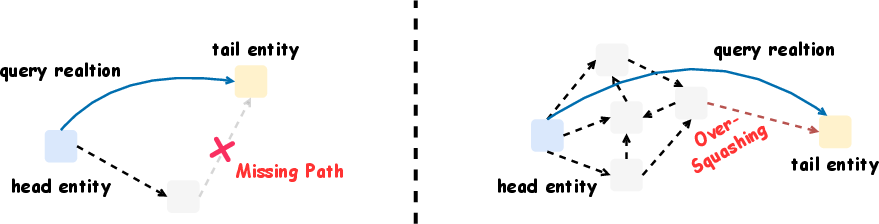

Path-based methods show promising performance in knowledge graph reasoning, but they inherit constraints from the message-passing neural networks they are built on. These constraints include missing paths and information over-squashing, both of which limit the effectiveness of these approaches (Figure 1).

Figure 1: Path-based methods could be limited by the missing paths.

Proposed Method

The KnowFormer model introduces a transformer-based approach focusing on attention mechanisms to capture interactions between entities. Unlike methods relying on textual descriptions, it emphasizes the structural information within KGs.

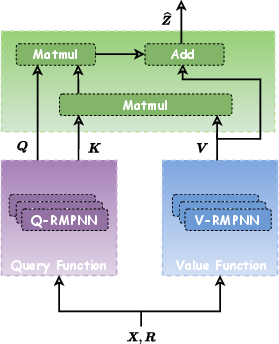

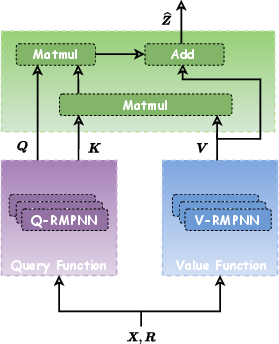

- Attention Mechanism: KnowFormer redefines the self-attention mechanism from the perspective of query prototypes for KGs using path-based information. This redefinition allows for effective modeling of interactions among entity pairs.

- Structure-Aware Modules: To embed structural aspects into the attention calculation, KnowFormer employs structure-aware modules. These are designed to enhance the model's ability to incorporate relational data through query, key, and value computations.

- Efficient Attention Computation: The method is optimized for scalability by using a linear approximation to compute attention scores, reducing computational complexity from quadratic to linear in terms of the number of entities.

Figure 2: Overview of the proposed attention mechanism, which takes entity features $\boldsymbol{X}$ as input.
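The reduction from quadratic to linear cost mentioned in the "Efficient Attention Computation" item can be illustrated with a generic kernelized attention. The sketch below is not KnowFormer's actual kernel or its structure-aware query, key, and value functions; it assumes a common positive feature map (`elu(x) + 1`) and random inputs, and the names `feature_map`, `quadratic_attention`, and `linear_attention` are hypothetical. It only shows why reordering the matrix products avoids building the n-by-n score matrix while producing the same output.

```python
import numpy as np


def feature_map(x):
    # Assumed positive feature map, elu(x) + 1; a stand-in, not the paper's kernel.
    return np.where(x > 0, x + 1.0, np.exp(x))


def quadratic_attention(q, k, v):
    # Builds the full (n, n) score matrix: O(n^2 * d) time, O(n^2) memory.
    phi_q, phi_k = feature_map(q), feature_map(k)
    scores = phi_q @ phi_k.T
    weights = scores / scores.sum(axis=1, keepdims=True)
    return weights @ v


def linear_attention(q, k, v):
    # Same result, but keys and values are aggregated first: O(n * d^2) time.
    phi_q, phi_k = feature_map(q), feature_map(k)
    kv = phi_k.T @ v              # (d, d_v) summary of all key/value pairs
    k_sum = phi_k.sum(axis=0)     # (d,) normalizer summary
    numerator = phi_q @ kv        # (n, d_v)
    denominator = phi_q @ k_sum   # (n,)
    return numerator / denominator[:, None]


rng = np.random.default_rng(0)
n, d = 6, 4  # n entities, d feature dimensions (toy sizes)
q, k, v = (rng.normal(size=(n, d)) for _ in range(3))

out_quadratic = quadratic_attention(q, k, v)
out_linear = linear_attention(q, k, v)
print(np.allclose(out_quadratic, out_linear))
```

Because the feature map is strictly positive, the normalizer is never zero, and the two functions agree up to floating-point error. The linear variant scales with the number of entities n rather than n squared, which is the property the summary attributes to KnowFormer's attention.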

Implementation and Results

Computational Complexity

The proposed model is designed to keep computation tractable on large KGs. Time complexity is reduced by combining instance-based similarity measures with a scalable attention computation, giving complexity that is linear in the number of facts and entities.

KnowFormer was evaluated across multiple benchmarks, demonstrating superior performance over existing methods in both transductive and inductive reasoning tasks.

- Transductive Tasks: On datasets like FB15k-237, NELL-995, and YAGO3-10, KnowFormer outperformed prominent baseline models in terms of MRR and Hits@N metrics (Table 1).

- Inductive Tasks: The model exhibited robust generalization to unseen entities, surpassing baseline methods across various versions of the test datasets, underscoring its proficiency in handling inductive reasoning tasks (Table 2).
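For readers unfamiliar with the metrics named above, the following minimal sketch shows how MRR (mean reciprocal rank) and Hits@N are usually computed from the rank of the correct entity for each test query. The ranks are made-up illustration values, not results from the paper, and the function names `mean_reciprocal_rank` and `hits_at_n` are hypothetical.

```python
def mean_reciprocal_rank(ranks):
    # Average of 1/rank over all test queries; higher is better, maximum 1.0.
    return sum(1.0 / r for r in ranks) / len(ranks)


def hits_at_n(ranks, n):
    # Fraction of test queries whose correct entity is ranked in the top n.
    return sum(1 for r in ranks if r <= n) / len(ranks)


# Illustrative 1-based ranks of the correct entity for four queries (not real data).
ranks = [1, 2, 4, 20]

mrr = mean_reciprocal_rank(ranks)
hits1 = hits_at_n(ranks, 1)
hits3 = hits_at_n(ranks, 3)
hits10 = hits_at_n(ranks, 10)
print(f"MRR={mrr:.3f} Hits@1={hits1:.2f} Hits@3={hits3:.2f} Hits@10={hits10:.2f}")
```

MRR rewards placing the correct entity near the top of the ranking, while Hits@N only checks whether it falls within the top N candidates, so the two are typically reported together.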

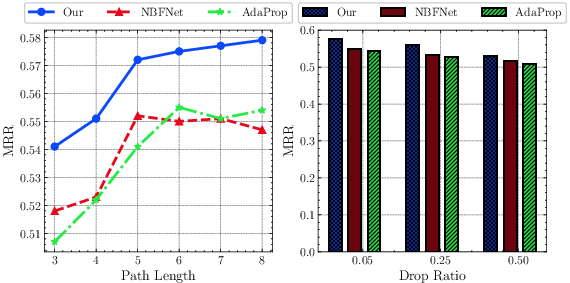

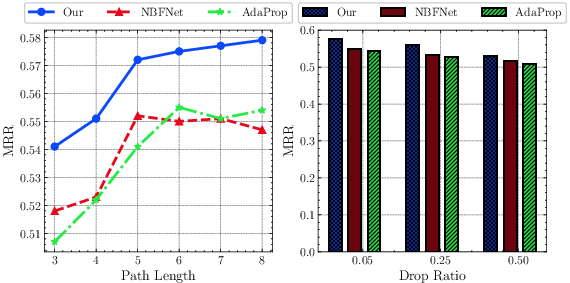

Figure 3: Experimental results on WN18RR. Evaluation of performance under different lengths of reasoning paths and missing facts.

Theoretical Implications and Future Work

Expressivity and Scalability

KnowFormer's reformulation of attention as a smooth kernel improves both the expressivity and the scalability of transformer architectures applied to KGs. The scalable attention mechanism allows KnowFormer to perform well even on large datasets and longer reasoning paths, mitigating issues such as information over-squashing.

Future Directions

The exploration into enhancing transformer models for KGs opens up further avenues of research. Future work might focus on integrating domain-specific knowledge into the attention mechanism or extending the framework to capture more complex relational structures within heterogeneous data sources.

Conclusion

KnowFormer presents a significant step forward in applying transformer models to knowledge graph reasoning. By focusing on entity interactions rather than relying solely on textual embeddings, it provides a scalable and expressive solution to the challenge of inferring missing knowledge in KGs. Its architecture and empirical results suggest broader applications in fields that rely on robust knowledge inference tools.