- The paper introduces the JVID model that combines latent image and video diffusion for superior frame quality and temporal coherence.

- It employs a novel timestep sampling strategy that alternates between image and video denoising models to optimize both quality and consistency.

- Experimental results on UCF-101 demonstrate enhanced performance with improved FID, FVD, and IS scores compared to baseline methods.

JVID: Joint Video-Image Diffusion for Visual-Quality and Temporal-Consistency in Video Generation

Introduction

The paper "JVID: Joint Video-Image Diffusion for Visual-Quality and Temporal-Consistency in Video Generation" introduces the Joint Video-Image Diffusion (JVID) model, targeting the enhancement of video generation in terms of visual quality and temporal consistency. This approach addresses the challenges in video generation posed by high dimensionality and complex spatio-temporal dynamics. It leverages two diffusion models: a Latent Image Diffusion Model (LIDM) and a Latent Video Diffusion Model (LVDM), trained respectively on images and video data, and strategically combines them during the reverse diffusion process.

Methodology

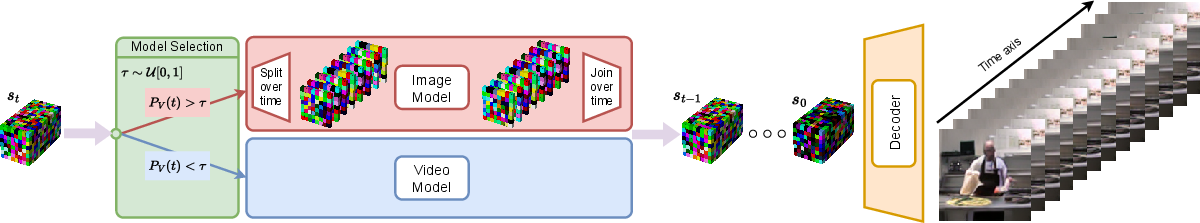

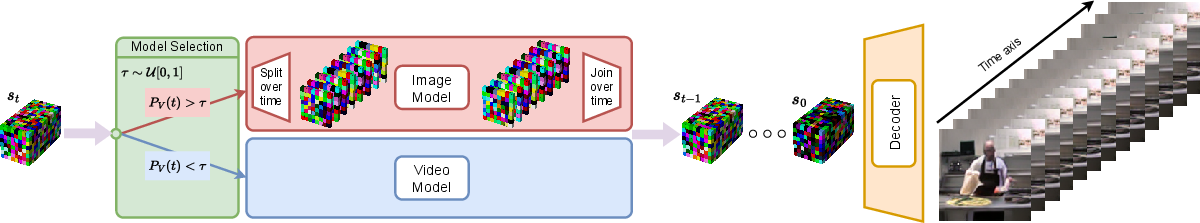

The JVID model integrates LIDM and LVDM via a novel sampling strategy. The LIDM is responsible for refining image quality, while the LVDM maintains temporal consistency across frames. The key innovation lies in mixing these models during the denoising process of the diffusion models, where each timestep involves selecting one model to contribute to the denoising operation, thus merging their capabilities effectively.

Figure 1: Our proposed mixture of denoising model sampling approach. At each step, we select one model, which is used to denoise our noise sample st. When the sample is fully denoised, it is decoded with the VAE decoder to reconstruct the generated video frames.

The paper outlines a method in which noise samples are progressively denoised through different stages, using either the LIDM or LVDM to achieve an optimal balance between image quality and temporal coherence. Another contribution is modeling video and image distributions in a latent space, aligning with methods like latent diffusion models, significantly reducing computational overhead during both training and inference.

Experimental Setup

The experiments are conducted on the UCF-101 dataset, selected for its comprehensive action classes captured in diverse conditions. By encoding video samples using a pre-trained VAE from Stable Diffusion, JVID capitalizes on reduced computational costs and increased efficiency in handling high-dimensional video data. The models are rigorously evaluated based on Fréchet Inception Distance (FID), Fréchet Video Distance (FVD), and Inception Score (IS) across varying resolutions, confirming improvements afforded by the JVID framework.

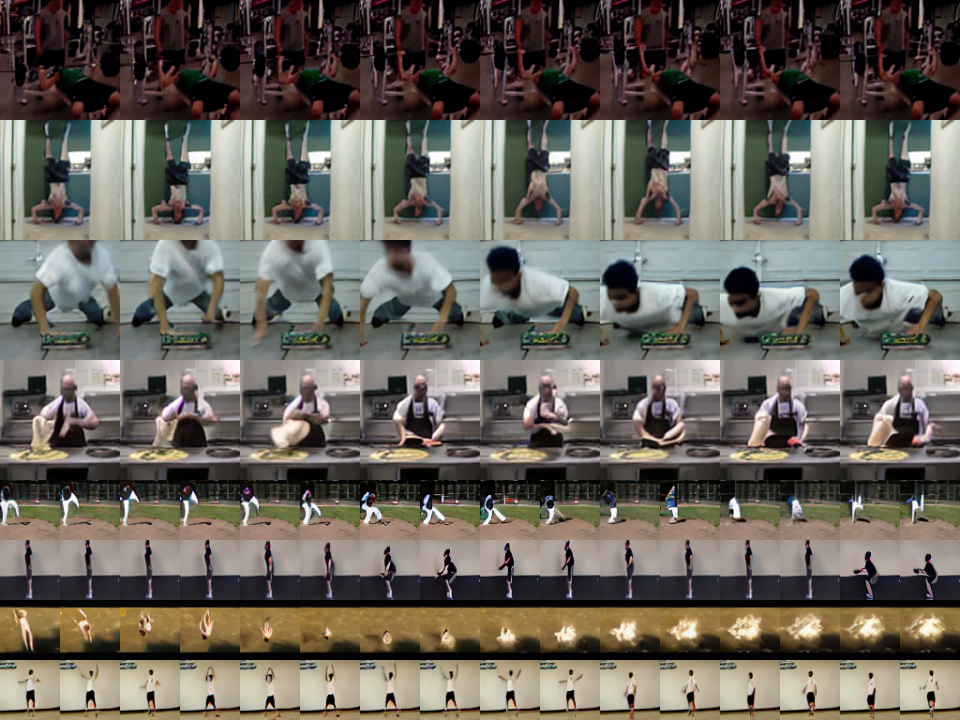

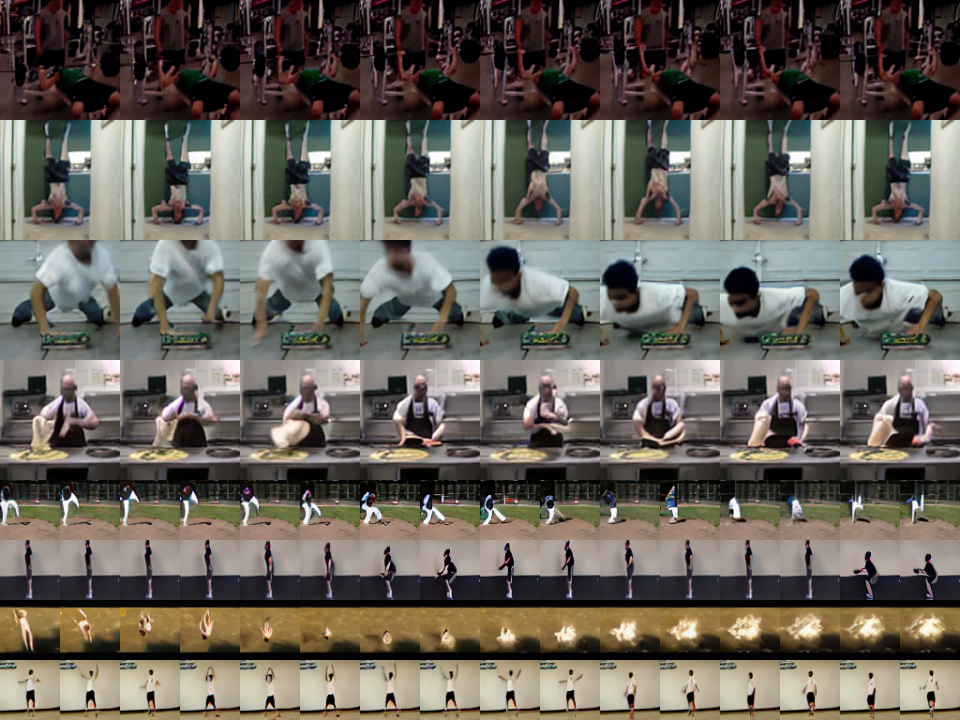

Figure 2: Video samples generated with our JVID model, combining both an image and a video diffusion model during sampling, to produce high quality and temporally coherent videos. Rows 1-4 are generated at 128 × 128, while rows 5-8 are 64 × 64.

Results and Discussion

The results demonstrate that JVID achieves significant visual and temporal improvements in video generation compared to traditional methods. JVID's incorporation of two independently trained models harmonizes the typically conflicting requirements of frame quality and temporal smoothness. Numerical assessments highlight that the JVID model outperforms baseline approaches, though further scaling could improve its competitive stance against state-of-the-art models pre-trained on larger datasets.

This research suggests an efficient solution for synthesizing videos that maintain aesthetic quality across frames, providing a groundwork for further study in conditional and large-scale deployments. Future research could explore its application in domains requiring stringent video quality standards, such as autonomous driving simulations or augmented reality.

Conclusion

The JVID model presents a promising advancement in video generation by integrating image and video diffusion processes, achieving superior temporal coherence and visual quality. This work provides a strategic approach to enhance video synthesis efficiency, inviting future explorations to further optimize and scale the methodology for broader AI applications.