- The paper demonstrates that WeatherFormer narrows the gap between AI-based methods and physical predictors using a novel space-time transformer architecture.

- The methodology integrates space-time factorized blocks and Fourier transformations to reduce computational complexity while enhancing prediction accuracy.

- Experiments on WeatherBench show improved RMSE and ACC over the compared baselines, and the model's lower computational cost suggests significant energy savings alongside improved forecast reliability.

Introduction

The paper introduces WeatherFormer, a transformer-based framework designed to enhance global numerical weather prediction (NWP) using a space-time factorized architecture. Traditional NWP systems rely on solving complex partial differential equations, which are computationally expensive and lead to significant carbon emissions. WeatherFormer seeks to narrow the performance gap between AI-based methods and physical predictors by modeling the dynamics of the atmosphere through a combination of space-time factorized transformer blocks and the Position-aware Adaptive Fourier Neural Operator (PAFNO).

Methodology

Framework Overview

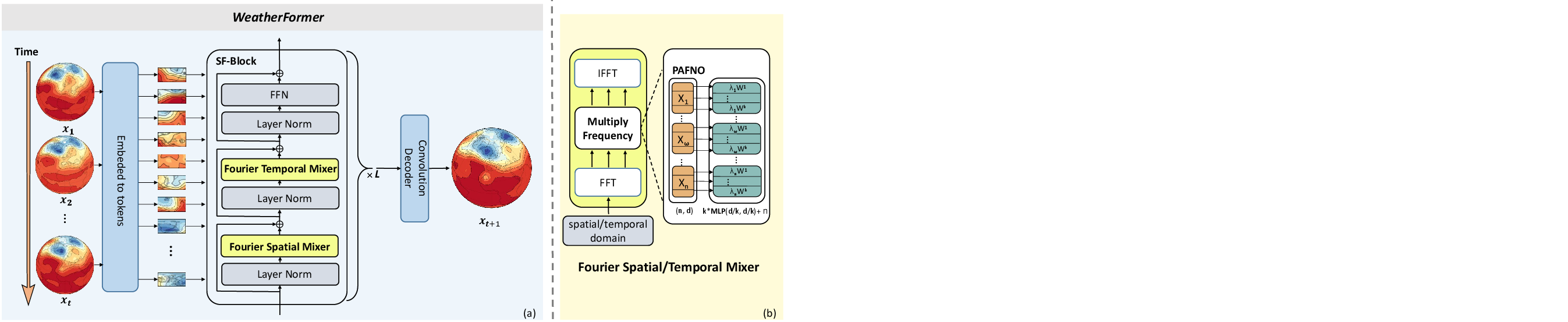

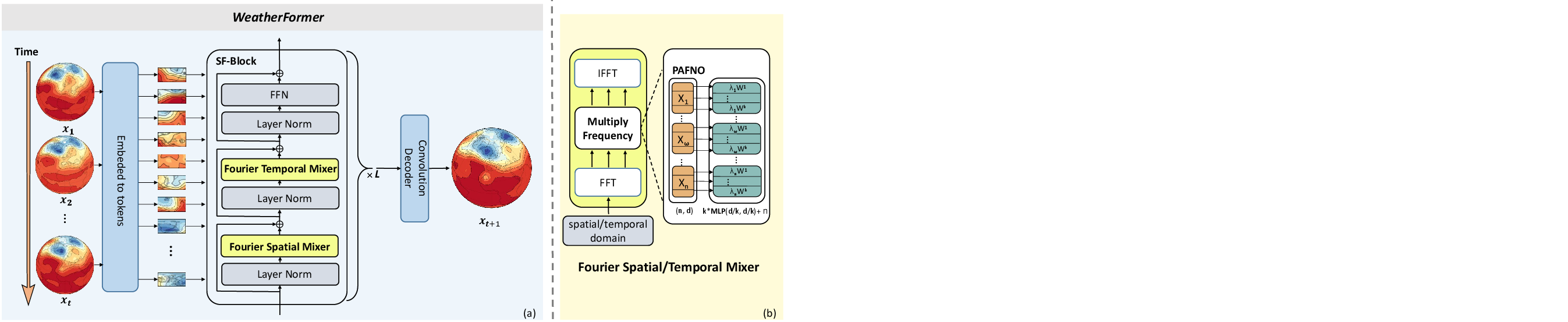

WeatherFormer leverages the spatio-temporal relationships in weather data to improve prediction accuracy. The framework uses a sequence of weather states as input and outputs the future states over multiple time steps. It divides input data into tokens that are processed by a series of SF-Blocks containing both spatial and temporal mixers (Figure 1). These mixers employ a Fourier-based approach to handle high-dimensional data efficiently.

Figure 1: Overview of WeatherFormer, illustrating its architecture leveraging space-time factorized transformer blocks and PAFNO for efficient weather state prediction.
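The tokenization step can be illustrated with a minimal sketch. It assumes each weather state is a 2-D latitude-longitude grid that is cut into non-overlapping rectangular patches, one token per patch. The function name `patchify` and the patch sizes are hypothetical and chosen for illustration; the summary does not specify how WeatherFormer forms its tokens.

```python
def patchify(grid, patch_h, patch_w):
    """Split a 2-D grid (list of rows) into non-overlapping patch tokens.

    Tokens are returned in row-major patch order; each token is the
    flattened list of values inside one patch.
    Assumption: non-overlapping patches, not confirmed by the source.
    """
    height, width = len(grid), len(grid[0])
    if height % patch_h or width % patch_w:
        raise ValueError("grid size must be divisible by patch size")
    tokens = []
    for top in range(0, height, patch_h):
        for left in range(0, width, patch_w):
            tokens.append([
                grid[r][c]
                for r in range(top, top + patch_h)
                for c in range(left, left + patch_w)
            ])
    return tokens


# A toy 4 x 4 "weather state" with values 0..15.
state = [[4 * r + c for c in range(4)] for r in range(4)]
tokens = patchify(state, 2, 2)
```

A sequence of input states would be tokenized one state at a time, giving a token array indexed by time step and spatial position, which is the layout the space-time blocks operate on.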

Factorized Space-Time Block

The SF-Block addresses the challenge of processing spatio-temporal data by handling spatial and temporal relationships separately, so that each mixer operates along one axis at a time rather than over all space-time tokens jointly. This factorization reduces computational complexity. Within the block, Fourier transformations are used to mix token information, enabling the model to predict future weather states from a sequence of historical states.
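The following sketch shows the general idea of space-time factorization under stated assumptions. Tokens are stored as `x[t][n]` (time step `t`, spatial position `n`), the spatial mixer runs before the temporal mixer, and the cost functions count pairwise interactions as an attention-style mixer would. The ordering, the function names, and the pairwise cost model are illustrative assumptions; the Fourier-based mixers described above would scale differently.

```python
def factorized_mix(x, spatial_mixer, temporal_mixer):
    """Apply a spatial mixer per time step, then a temporal mixer per position.

    x is a list of T rows, each holding N scalar tokens.
    Both mixers take a list and return a list of the same length.
    """
    # Spatial mixing: each time step is processed independently.
    x = [spatial_mixer(row) for row in x]
    n_time, n_space = len(x), len(x[0])
    # Temporal mixing: each spatial position is processed independently.
    cols = [temporal_mixer([x[t][n] for t in range(n_time)])
            for n in range(n_space)]
    # Transpose back to [time][space] layout.
    return [[cols[n][t] for n in range(n_space)] for t in range(n_time)]


def joint_pairs(n_time, n_space):
    """Pairwise interactions if all space-time tokens are mixed jointly."""
    return (n_time * n_space) ** 2


def factorized_pairs(n_time, n_space):
    """Pairwise interactions when space and time are mixed separately."""
    return n_time * n_space ** 2 + n_space * n_time ** 2


def mean_mixer(values):
    """Toy mixer: replace every token with the mean of its axis."""
    m = sum(values) / len(values)
    return [m] * len(values)


mixed = factorized_mix([[1.0, 3.0], [5.0, 7.0]], mean_mixer, mean_mixer)
```

With illustrative sizes of 4 time steps and 2048 spatial tokens, `joint_pairs` gives 67,108,864 interactions while `factorized_pairs` gives 16,809,984, roughly a fourfold reduction under this cost model.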

Position-aware Adaptive Fourier Neural Operator (PAFNO)

PAFNO enhances the standard Fourier Neural Operator by introducing position-aware coefficients, allowing the model to utilize spatial information effectively. This approach ensures that the model accounts for spatial dependencies critical to accurate weather predictions, offering improvements over existing data-driven NWP methods.
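The summary does not give the exact form of the position-aware coefficients, so the sketch below is only an illustration of the general pattern and not the paper's formulation. It mixes a 1-D sequence of scalar tokens in the frequency domain with one weight per frequency, as a Fourier-operator layer would, and then applies a hypothetical per-position gain `pos_gain` to make the output depend on where each token sits. The names `fourier_mix`, `freq_weights`, and `pos_gain` are assumptions introduced here.

```python
import cmath


def dft(x):
    """Naive discrete Fourier transform of a sequence."""
    n = len(x)
    return [sum(x[k] * cmath.exp(-2j * cmath.pi * f * k / n) for k in range(n))
            for f in range(n)]


def idft(spectrum):
    """Naive inverse discrete Fourier transform."""
    n = len(spectrum)
    return [sum(spectrum[f] * cmath.exp(2j * cmath.pi * f * k / n)
                for f in range(n)) / n
            for k in range(n)]


def fourier_mix(tokens, freq_weights, pos_gain):
    """Frequency-domain token mixing followed by a per-position gain.

    freq_weights: one weight per frequency, shared by all positions.
    pos_gain: one real gain per position (hypothetical position-aware term).
    Only the real part of the inverse transform is kept.
    """
    spectrum = dft(tokens)
    mixed = [w * s for w, s in zip(freq_weights, spectrum)]
    spatial = idft(mixed)
    return [g * y.real for g, y in zip(pos_gain, spatial)]


tokens = [1.0, 2.0, 3.0, 4.0]
# Identity weights and unit gains should reproduce the input.
identity = fourier_mix(tokens, [1, 1, 1, 1], [1.0, 1.0, 1.0, 1.0])
# Keeping only the zero-frequency term yields the mean everywhere;
# the position gain then doubles the last position.
smoothed = fourier_mix(tokens, [1, 0, 0, 0], [1.0, 1.0, 1.0, 2.0])
```

Without the per-position term, a pure frequency-domain multiplication treats every location identically, which is the limitation the position-aware coefficients are described as addressing.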

Experiments and Results

WeatherFormer was evaluated on the WeatherBench dataset, where it is reported to outperform both traditional physics-based baselines and contemporary deep learning approaches. Its accuracy comes closer to that of advanced physical models while maintaining a lower computational footprint.

Root mean square error (RMSE) and anomaly correlation coefficient (ACC) were used to benchmark WeatherFormer against existing methods on key meteorological variables: Z500 (geopotential at 500 hPa), T850 (temperature at 850 hPa), and T2M (2 m temperature). Noise augmentation strategies also mitigated error accumulation over long forecast horizons.
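For reference, the two metrics can be sketched as follows. The sketch assumes the latitude-weighted convention commonly used with WeatherBench, where each grid row is weighted by the cosine of its latitude, and uses a simplified ACC that subtracts only a climatology field. Function names and the toy data are illustrative; the exact evaluation protocol of the paper is not stated in this summary.

```python
import math


def lat_weights(lats_deg):
    """Cosine-of-latitude weights, normalized to have mean 1."""
    cosines = [math.cos(math.radians(lat)) for lat in lats_deg]
    mean_cos = sum(cosines) / len(cosines)
    return [c / mean_cos for c in cosines]


def weighted_rmse(forecast, truth, lats_deg):
    """Latitude-weighted RMSE over a [lat][lon] grid (lower is better)."""
    w = lat_weights(lats_deg)
    n_lon = len(forecast[0])
    total = sum(w[i] * (forecast[i][j] - truth[i][j]) ** 2
                for i in range(len(w)) for j in range(n_lon))
    return math.sqrt(total / (len(w) * n_lon))


def weighted_acc(forecast, truth, climatology, lats_deg):
    """Latitude-weighted anomaly correlation (higher is better, max 1)."""
    w = lat_weights(lats_deg)
    num = den_f = den_t = 0.0
    for i, wi in enumerate(w):
        for f, t, c in zip(forecast[i], truth[i], climatology[i]):
            fa, ta = f - c, t - c  # anomalies relative to climatology
            num += wi * fa * ta
            den_f += wi * fa * fa
            den_t += wi * ta * ta
    return num / math.sqrt(den_f * den_t)


lats = [-45.0, 0.0, 45.0]
truth = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
clim = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
biased = [[v + 2.0 for v in row] for row in truth]   # constant +2 error
flipped = [[-v for v in row] for row in truth]       # anomalies reversed

rmse_biased = weighted_rmse(biased, truth, lats)
acc_perfect = weighted_acc(truth, truth, clim, lats)
acc_flipped = weighted_acc(flipped, truth, clim, lats)
```

Because the weights average to 1, a uniform error of 2 yields an RMSE of 2, a perfect forecast yields an ACC of 1, and a forecast with reversed anomalies yields an ACC of -1.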

Conclusion

WeatherFormer presents a robust approach to data-driven weather prediction, showcasing potential for significant energy savings compared to traditional NWP methods while achieving competitive accuracy levels. The integration of space-time transformers and adaptive Fourier neural operators positions WeatherFormer as a promising alternative for future weather prediction systems, particularly as the field continues to advance towards more sustainable and efficient methodologies. Future work may focus on further refining these techniques and exploring additional applications in Earth system science and beyond.