- The paper presents a novel method combining rigid-body physics simulation with advanced image-to-video generation.

- It employs a structured framework with perception, dynamics simulation, and rendering modules to ensure physical and visual realism.

- Quantitative metrics (Image-FID and Motion-FID) and a user study both indicate improvements over previous state-of-the-art models.

"PhysGen: Rigid-Body Physics-Grounded Image-to-Video Generation" Essay

Introduction

The paper "PhysGen: Rigid-Body Physics-Grounded Image-to-Video Generation" presents a novel approach to image-to-video (I2V) generation by integrating model-based physical simulation with data-driven video generation techniques. The framework, PhysGen, addresses the known challenges in generating videos that are both physically plausible and photo-realistic, introducing a method that simulates dynamics grounded in classical mechanics while ensuring aesthetic continuity and realism. This approach promises to extend the application range of I2V models to include realistic, interactive video animations from static images, enhancing user interaction possibilities.

Method Overview

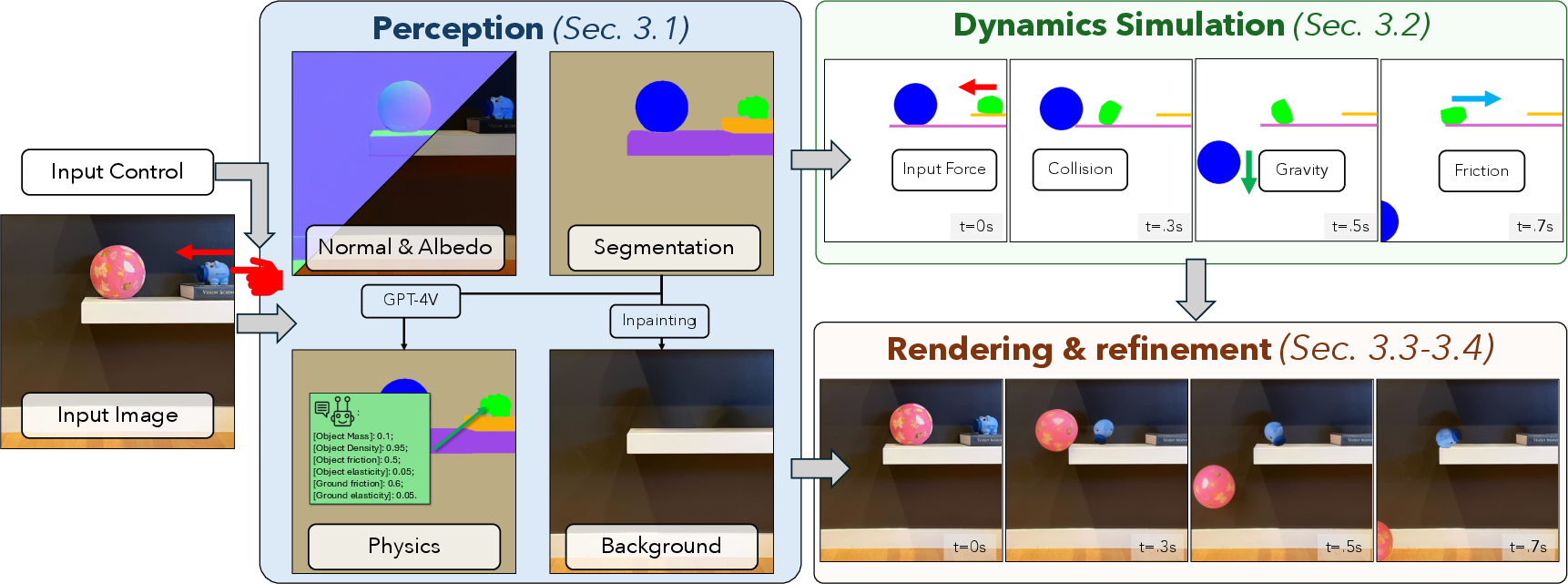

PhysGen operates through three core components: perception, dynamics simulation, and rendering.

- Perception Module

- Dynamics Simulation Module

- The dynamics simulation uses a 2D rigid-body physics approach in which Newton's laws govern object motion based on estimated physical properties. The simulation accounts for user-specified initial conditions (forces and torques), gravity, friction, and collisions. It runs in image space, which allows direct coupling with the image data.

- Rendering Module

- The rendering module relights and refines the video frames so that they respect the simulated dynamics. Relighting adjusts shading as objects change position, and a generative diffusion model then refines the frames to correct remaining visual discrepancies and keep the video visually plausible.
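To make the dynamics simulation module more concrete, here is a minimal sketch of an image-space 2D rigid-body step. It is not the paper's implementation: the `Body` class, the `step` and `simulate` functions, the disc-shaped object, the flat ground line, and all parameter values (gravity in pixels per second squared, restitution, friction) are assumptions chosen for illustration. It uses semi-implicit Euler integration of Newton's laws with a user-specified initial force and torque, gravity, and a simple impulse-style collision with friction.

```python
import math
from dataclasses import dataclass


@dataclass
class Body:
    """Hypothetical rigid disc in image coordinates (y grows downward)."""
    x: float
    y: float
    angle: float
    vx: float
    vy: float
    omega: float
    mass: float
    inertia: float
    radius: float


def step(body, dt, force=(0.0, 0.0), torque=0.0,
         gravity=980.0, ground_y=400.0, restitution=0.5, friction=0.3):
    """Advance the body by one time step (semi-implicit Euler)."""
    # Newton's second law: update velocities first, then positions.
    body.vx += (force[0] / body.mass) * dt
    body.vy += (force[1] / body.mass + gravity) * dt
    body.omega += (torque / body.inertia) * dt
    body.x += body.vx * dt
    body.y += body.vy * dt
    body.angle += body.omega * dt

    # Collision with a flat ground line at y = ground_y (an assumption).
    if body.y + body.radius > ground_y:
        body.y = ground_y - body.radius  # push the disc out of the ground
        if body.vy > 0.0:
            impact_speed = body.vy
            body.vy = -restitution * impact_speed  # bounce
            # Coulomb-style friction: the tangential speed change is
            # bounded by friction times the normal speed change.
            dv = friction * (1.0 + restitution) * impact_speed
            if abs(body.vx) <= dv:
                body.vx = 0.0
            else:
                body.vx -= math.copysign(dv, body.vx)


def simulate(body, steps, dt, force=(0.0, 0.0), torque=0.0, push_steps=1):
    """Apply the user force/torque for the first `push_steps` steps,
    then let gravity, collision, and friction act. Returns (x, y, angle)
    for every step."""
    trajectory = []
    for i in range(steps):
        if i < push_steps:
            step(body, dt, force=force, torque=torque)
        else:
            step(body, dt)
        trajectory.append((body.x, body.y, body.angle))
    return trajectory
```

Calling `simulate` with a body placed at a pixel location and a short initial push yields a per-frame trajectory of positions and angles, which is the kind of motion signal a downstream rendering stage could consume.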

Evaluation and Results

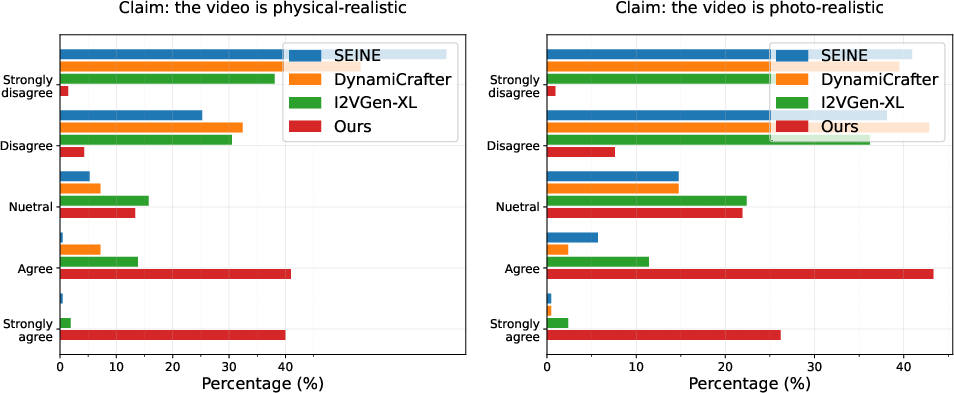

The evaluation entailed both qualitative and quantitative methods, demonstrating PhysGen's capabilities over existing state-of-the-art I2V models such as SEINE and DynamiCrafter. A user study highlighted the superior physical- and photo-realism scores of PhysGen's outputs compared to these prior models, emphasizing its ability to generate realistic and consistent videos even without textual prompts.

Figure 2: Human evaluation score distribution. The distribution of scores shows our method largely outperforms other I2V generative models in both physical-realism and photo-realism. Our average rating is close to "agree" for both claims.

On the quantitative metrics Image-FID and Motion-FID, where lower is better, PhysGen achieves lower scores than its counterparts, supporting its improved visual fidelity and temporal coherence. Furthermore, the generative refinement step enhances visual congruity, as demonstrated in detailed motion-capture scenarios.
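For reference, FID-style metrics are built on the Fréchet distance between two Gaussians fitted to feature embeddings of real and generated samples. The formula below is the standard definition; which feature extractor is used for Image-FID and for Motion-FID is not specified in this summary, so the symbols are generic: $\mu_r, \Sigma_r$ are the mean and covariance of the reference features and $\mu_g, \Sigma_g$ those of the generated features.

$$
d^2 = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\left( \Sigma_r \Sigma_g \right)^{1/2} \right)
$$

The distance is zero when the two fitted distributions coincide and grows as their means or covariances diverge, which is why a lower score indicates generated content that is statistically closer to the reference.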

Implementation and Future Directions

In terms of computational demands, PhysGen requires about 3 minutes per image-to-video generation using standard GPU hardware, showcasing practical feasibility for interactive applications. PhysGen's ability to simulate intricate interactions within varied complex scenes suggests potential for applications in animation, interactive media, and education.

Future work on PhysGen could extend its physics simulation to non-rigid body dynamics and incorporate more comprehensive 3D scene understanding. Expanding the model's contextual vocabulary using multi-modal stochastic depth networks could help it handle more diverse inputs with context-aware adjustments.

Conclusion

PhysGen offers a unique blend of physics-grounded dynamics with data-driven aesthetic generation, setting a new benchmark in the field of image-to-video synthesis. By adeptly combining theoretical insights from classical mechanics with cutting-edge AI modelling, this framework paves the way for more interactive and realistic video generation applications, promising to broaden the horizon of possibilities in digital content creation and manipulation.