Harnessing Frozen Unimodal Encoders for Flexible Multimodal Alignment

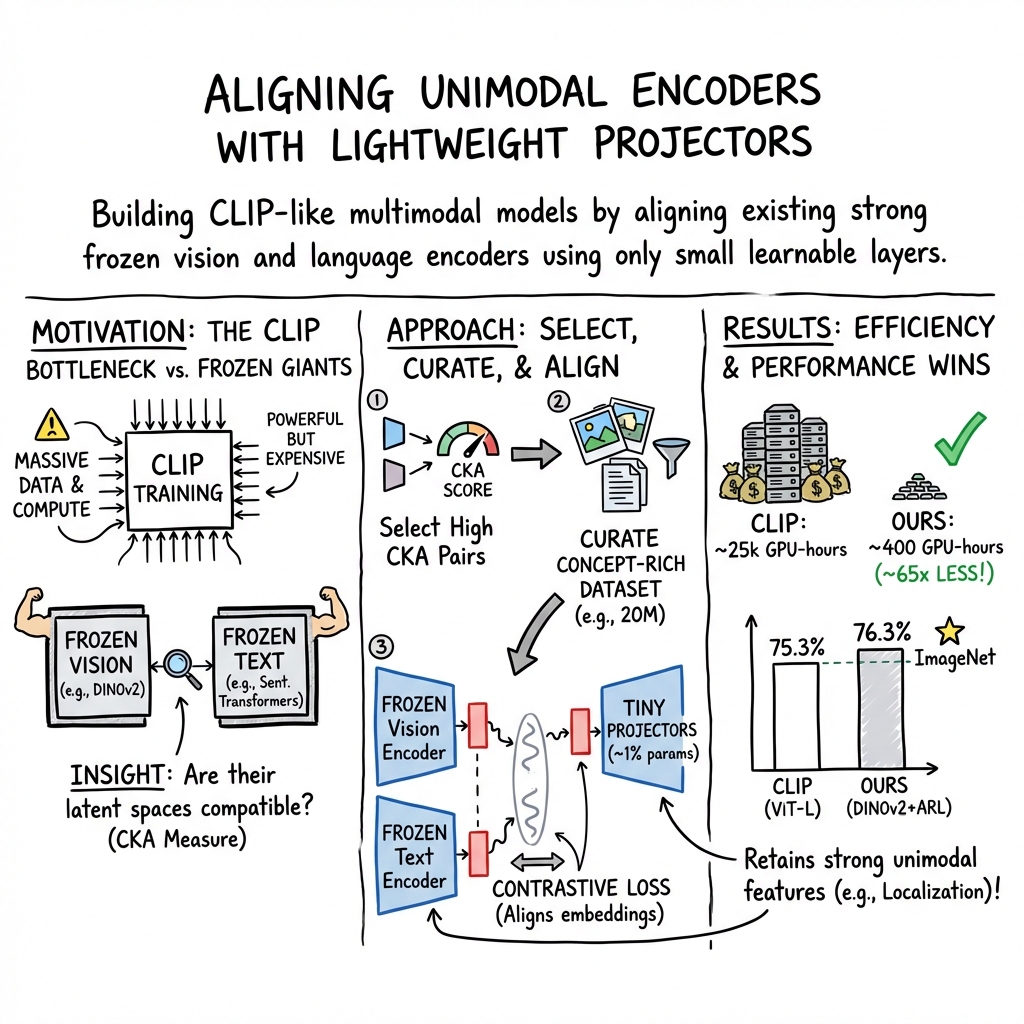

Abstract: Recent contrastive multimodal vision-language models like CLIP have demonstrated robust open-world semantic understanding, becoming the standard image backbones for vision-language applications. However, recent findings suggest high semantic similarity between well-trained unimodal encoders, which raises a key question: Is there a plausible way to connect unimodal backbones for vision-language tasks? To this end, we propose a novel framework that aligns vision and language using frozen unimodal encoders. It involves selecting semantically similar encoders in the latent space, curating a concept-rich dataset of image-caption pairs, and training simple MLP projectors. We evaluated our approach on 12 zero-shot classification datasets and 2 image-text retrieval datasets. Our best model, utilizing DINOv2 and the All-Roberta-Large text encoder, achieves 76% accuracy on ImageNet with a 20-fold reduction in data and a 65-fold reduction in compute requirements compared to multimodal alignment where models are trained from scratch. The proposed framework enhances the accessibility of multimodal model development while enabling flexible adaptation across diverse scenarios. Code and curated datasets are available at github.com/mayug/freeze-align.

Explain it Like I'm 14

Overview

This paper asks a simple question: can we build strong vision–language models (models that understand images and text together) by reusing already strong single-purpose models, one for images and one for text, and just adding a small “adapter” between them? The authors show that the answer is yes. They connect powerful image and text encoders using small projection layers and reach CLIP-like performance while using far less data and compute.

Key Questions

The paper focuses on three easy-to-understand goals:

- Can we pick pairs of image and text models that are naturally similar, so they’re easy to connect?

- Can we train only small add-on layers (projectors) to make the two models “speak the same language” without retraining the big models?

- Can this simple setup match or beat popular systems like CLIP on tasks such as recognizing image categories without task-specific training examples (zero-shot classification) and finding matching images/captions (retrieval)?

How They Did It (Methods, in simple terms)

Think of this like connecting two great devices with a plug adapter:

- You have a great camera (image encoder, like DINOv2) and a great microphone (text encoder, like All-RoBERTa-Large). Each is strong at its own job but they don’t plug into each other.

- Instead of rebuilding the devices, the authors design small “plug adapters” (projection layers) to match their outputs so the two can work together.

There are three main steps:

- Picking compatible models using CKA: CKA (Centered Kernel Alignment) is a way to check how similarly two models arrange ideas in their “thinking space.” Imagine two friends sorting the same pile of LEGO bricks by shape and color. If their sorting looks similar, it’s easier to translate between their systems. CKA measures that similarity. The authors show that models with higher CKA are easier to align.

- Curating concept-rich training data: They collect a small but diverse set of image–caption pairs covering many concepts (animals, vehicles, foods, etc.). Instead of using everything from the internet, they carefully pick examples likely to be well-matched (image and caption agree) and cover many topics. They build “concept prototypes” (average representations of images for each concept) and then choose captions that are close to those prototypes. This gives them a dense, balanced dataset.

- Training lightweight projectors: They freeze the big image and text models (no retraining) and only train tiny MLP layers (the adapters) to pull matching image–caption pairs closer and push non-matching pairs apart (this is called contrastive learning; think “make the right pairs stick together and wrong pairs separate”). They use both “global” information (a summary token) and “local” information (patch/tokens) from the image and text, so the adapter learns broad meanings and fine details.
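The first and third steps above can be sketched in a few lines of plain Python. This is only an illustrative sketch, not the authors' code. It assumes the linear variant of CKA and a CLIP-style symmetric contrastive loss with a fixed temperature of 0.07; the summary does not say which CKA kernel or loss settings the paper uses. All function names here are hypothetical, and a real implementation would use NumPy or PyTorch on actual encoder outputs rather than nested lists.

```python
import math

def center_columns(m):
    """Subtract each column's mean so every feature has zero mean."""
    n = len(m)
    means = [sum(col) / n for col in zip(*m)]
    return [[v - mu for v, mu in zip(row, means)] for row in m]

def sq_frobenius_of_cross_product(a, b):
    """Squared Frobenius norm of a^T b, for row-major matrices with equal row counts."""
    total = 0.0
    for col_a in zip(*a):
        for col_b in zip(*b):
            dot = sum(p * q for p, q in zip(col_a, col_b))
            total += dot * dot
    return total

def linear_cka(x, y):
    """Linear CKA between two feature matrices computed on the same n examples.

    Assumes neither matrix is constant across examples (otherwise the
    denominator is zero).
    """
    x, y = center_columns(x), center_columns(y)
    numerator = sq_frobenius_of_cross_product(x, y)
    denominator = math.sqrt(
        sq_frobenius_of_cross_product(x, x) * sq_frobenius_of_cross_product(y, y)
    )
    return numerator / denominator

def l2_normalize(v):
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]

def cross_entropy_on_diagonal(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    loss = 0.0
    for i, row in enumerate(logits):
        peak = max(row)  # subtract the max for numerical stability
        log_sum_exp = peak + math.log(sum(math.exp(v - peak) for v in row))
        loss += log_sum_exp - row[i]
    return loss / len(logits)

def symmetric_contrastive_loss(image_emb, text_emb, temperature=0.07):
    """CLIP-style loss on already-projected embeddings; pair i matches pair i.

    The temperature value is an assumption, not a setting from the paper.
    """
    img = [l2_normalize(v) for v in image_emb]
    txt = [l2_normalize(v) for v in text_emb]
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txt] for i in img]
    logits_t = [list(col) for col in zip(*logits)]  # text-to-image direction
    return 0.5 * (cross_entropy_on_diagonal(logits) + cross_entropy_on_diagonal(logits_t))
```

Linear CKA returns a value between 0 and 1, and it does not change when one set of features is rotated or uniformly rescaled, which is why it suits comparing encoders whose output spaces have different sizes and orientations. The loss is small when each image embedding is closest to its own caption embedding and large when the pairs are mismatched.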

Main Findings and Why They Matter

Here are the standout results and insights:

- Easy alignment follows high CKA: In both toy tests and real models, the higher the CKA between an image encoder and a text encoder, the easier it was to align them using a simple projector. Translation: pick encoder pairs that “think similarly,” and you won’t need heavy training.

- Strong zero-shot classification with much less data and compute: Their best model (DINOv2 + All-RoBERTa-Large) gets about 76% zero-shot accuracy on ImageNet. That’s on par with or better than popular CLIP setups—but trained on about 20 million examples instead of 400 million, and with about 65× less compute. Only around 1% of total parameters are trained (just the adapters).

- Better retrieval and localization in many cases: On image–text retrieval (finding the right caption for an image or vice versa), the new models match or beat strong CLIP baselines. For zero-shot semantic segmentation (finding object regions in an image without extra training), the model improves significantly over CLIP—thanks to DINOv2’s strong “where things are” features.

- Multilingual performance without multilingual training: By plugging in a multilingual text encoder, their system trained only on English still works well in other languages (like German, French, Japanese, Russian). It often beats models trained on multilingual data, showing the flexibility of the approach.

- Long-text retrieval advantage: Many CLIP models cap text input at 77 tokens, which is short. By using a long-context text encoder, their model keeps improving as captions get longer (200–300 tokens), which helps with detailed datasets like DCI (Densely Captioned Images).

Overall: The approach is flexible, powerful, and efficient.
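To make the zero-shot classification result above more concrete, here is a minimal sketch of how a prediction is typically made once image and text embeddings live in one shared space. It is not taken from the paper's code. The three-dimensional vectors are hand-written toy values standing in for projected encoder outputs, and the idea of embedding one prompt per class name (for example "a photo of a dog") is a common CLIP convention assumed here. The function names are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(p * q for p, q in zip(a, b))
    norm_a = math.sqrt(sum(p * p for p in a))
    norm_b = math.sqrt(sum(q * q for q in b))
    return dot / (norm_a * norm_b)

def zero_shot_classify(image_embedding, class_text_embeddings):
    """Return the class whose text embedding is most similar to the image embedding."""
    return max(
        class_text_embeddings,
        key=lambda name: cosine(image_embedding, class_text_embeddings[name]),
    )

# Toy stand-ins for projected text embeddings of one prompt per class.
toy_class_embeddings = {
    "dog": [1.0, 0.0, 0.0],
    "cat": [0.0, 1.0, 0.0],
    "car": [0.0, 0.0, 1.0],
}
# Toy stand-in for the projected embedding of one image.
toy_image_embedding = [0.9, 0.1, 0.0]

print(zero_shot_classify(toy_image_embedding, toy_class_embeddings))  # prints: dog
```

No classifier is trained for the new categories: adding a class only requires embedding one more piece of text, which is what makes the setup "zero-shot."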

Implications and Impact

- Accessibility: Since you only train tiny adapters instead of big models, you need far fewer resources. This makes it possible for smaller labs and schools to build strong vision–language systems.

- Flexibility: You can “swap” text encoders for specific needs—multilingual tasks, long documents, or domain-specific language—without touching the vision encoder. You can also pick a vision encoder specialized for things like localization or medical images.

- Lower environmental cost: Using much less compute and data reduces the environmental impact of training big AI models.

- Future directions: The paper suggests adding finer-grained training (e.g., patch-level alignment losses) could further boost localization and detail understanding. The simple adapter strategy could be extended to more modalities (audio, 3D, brain signals) by picking compatible encoders and plugging them together.

In short, this work shows you don’t need to rebuild massive models to get strong multimodal performance. You can connect the right existing parts with smart, small adapters and still reach results competitive with CLIP-style models trained from scratch.
