- The paper introduces a group Shapley value method to quantitatively decompose parameter contributions in structural economic models.

- The method characterizes the group Shapley value as the solution to a constrained weighted least squares problem and produces interpretable importance tables for counterfactual simulations.

- Applications to capital misallocation and globalization show which parameter groups drive the simulated outcomes and illustrate the method's practical use.

Group Shapley Value and Counterfactual Simulations in a Structural Model

Introduction

This paper introduces the group Shapley value, a tool for assessing the importance of different components of a structural economic model in counterfactual simulations. It extends the traditional Shapley value, a solution concept from cooperative game theory that is widely used for explainability in AI and econometrics. The group Shapley value decomposes a change in a model outcome into contributions from groups of parameters, which gives a quantitative reading of otherwise complex model results.

Methodology

The proposed framework groups the model's parameters and assesses each group's contribution to the model outcome. The decomposition keeps the additive property of Shapley values, so the results can be reported in importance tables that read much like regression tables. The authors characterize the group Shapley value as the solution to a constrained weighted least squares problem, a formulation that remains usable when not all input data is available.
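The constrained weighted least squares characterization can be illustrated with a minimal sketch. It computes a group Shapley decomposition twice: by direct enumeration and by weighted least squares with the standard Shapley kernel weights and an adding-up constraint. The toy `outcome` function, the parameter values, and the grouping are hypothetical and not taken from the paper; the weights are the textbook Shapley kernel, which may differ in presentation from the authors' formulation.

```python
from itertools import combinations
from math import comb, factorial

import numpy as np

# Hypothetical toy model: four parameters arranged in three groups.
benchmark = np.array([1.0, 2.0, 0.5, 3.0])
counterfactual = np.array([1.5, 1.0, 0.8, 2.0])
groups = [[0], [1, 2], [3]]  # group index -> parameter indices
K = len(groups)


def outcome(theta):
    """Toy model outcome with an interaction between parameters."""
    return theta[0] * theta[1] + theta[2] * theta[3]


def value(coalition):
    """Outcome when the groups in `coalition` are switched to counterfactual values."""
    theta = benchmark.copy()
    for g in coalition:
        theta[groups[g]] = counterfactual[groups[g]]
    return outcome(theta)


def shapley_exact(v, k):
    """Group Shapley values by enumerating all coalitions."""
    phi = np.zeros(k)
    for j in range(k):
        others = [i for i in range(k) if i != j]
        for r in range(k):
            weight = factorial(r) * factorial(k - r - 1) / factorial(k)
            for s in combinations(others, r):
                s = frozenset(s)
                phi[j] += weight * (v(s | {j}) - v(s))
    return phi


def shapley_wls(v, k):
    """Same values from constrained weighted least squares (requires k >= 2)."""
    base = v(frozenset())
    rows, ys, ws = [], [], []
    for r in range(1, k):  # all nonempty proper coalitions
        for s in combinations(range(k), r):
            row = np.zeros(k)
            row[list(s)] = 1.0
            rows.append(row)
            ys.append(v(frozenset(s)) - base)
            ws.append((k - 1) / (comb(k, r) * r * (k - r)))  # Shapley kernel weight
    A, y, W = np.array(rows), np.array(ys), np.diag(ws)
    total = v(frozenset(range(k))) - base
    # KKT system for: min (y - A phi)' W (y - A phi)  s.t.  sum(phi) = total
    kkt = np.zeros((k + 1, k + 1))
    kkt[:k, :k] = 2 * A.T @ W @ A
    kkt[:k, k] = 1.0
    kkt[k, :k] = 1.0
    rhs = np.append(2 * A.T @ W @ y, total)
    return np.linalg.solve(kkt, rhs)[:k]


phi_exact = shapley_exact(value, K)
phi_wls = shapley_wls(value, K)
print(phi_exact, phi_wls)
```

In this sketch the two vectors agree, and the contributions add up to the total change `outcome(counterfactual) - outcome(benchmark)`, which is the additive property the importance tables rely on.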

The paper illustrates the approach with counterfactual analysis in a structural model, the Roy model. By contrasting benchmark and counterfactual parameter settings, the decomposition attributes the resulting shift in an outcome, such as income inequality, to each parameter group.
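The benchmark versus counterfactual contrast can be written with the standard Shapley formula applied to groups. The notation here is an assumption made for illustration and is not taken from the paper: there are K parameter groups indexed by N = {1, ..., K}, and v(S) denotes the model outcome when the groups in S take their counterfactual values while all other groups stay at their benchmark values.

```latex
\phi_k \;=\; \sum_{S \subseteq N \setminus \{k\}}
  \frac{|S|!\,(K-|S|-1)!}{K!}\,
  \bigl[\, v(S \cup \{k\}) - v(S) \,\bigr],
\qquad
\sum_{k=1}^{K} \phi_k \;=\; v(N) - v(\emptyset).
```

With two groups, for example, the first group's contribution is the average of its effect when switched first and when switched second: \phi_1 = \tfrac{1}{2}[v(\{1\}) - v(\emptyset)] + \tfrac{1}{2}[v(\{1,2\}) - v(\{2\})]. The second identity says the contributions sum to the total change between the benchmark and the full counterfactual.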

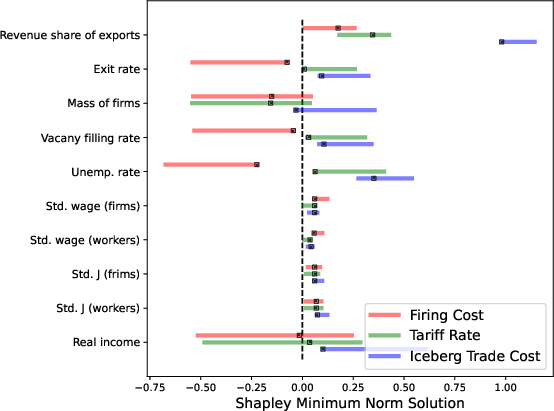

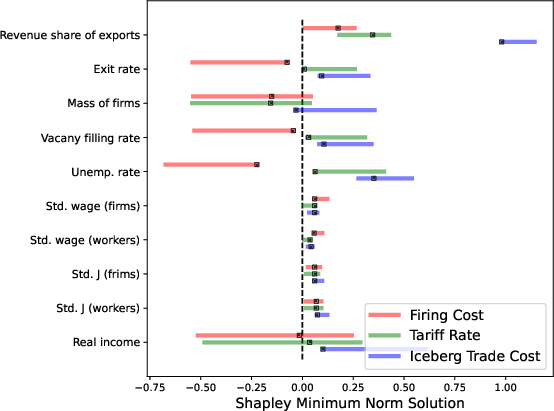

Figure 1: Shapley Minimum Norm Solution with Shapley Bounds.

Applications

Explainable AI

The paper emphasizes the utility of Shapley values in AI model interpretation, underscoring their role in attributing model predictions to input features. Beyond AI, the group Shapley value can be applied in other domains to evaluate how groups of parameters affect the outcomes of complex models.

Capital Misallocation and Globalization

Two key applications are explored: disentangling the sources of capital misallocation and evaluating the impact of globalization using firm-level data. By revisiting studies such as David and Venkateswaran (2019), the authors show that the group Shapley value yields a complete decomposition that is consistent with observed data and avoids artifacts of traditional approaches that rely on ceteris paribus comparisons.

Results

The Shapley decomposition reveals substantial contributions from parameters related to productivity variance and capital distortion in capital misallocation models. In globalization scenarios, the reduction in iceberg trade costs emerges as a crucial factor influencing real income, while firing costs and tariff reductions impact firm dynamics.

Shapley bounds and minimum norm solutions address scenarios where not all input data is available, providing robust decomposition estimates that align well with empirical findings. This flexibility underscores the method's robustness in real-world applications, where exhaustive simulations may be infeasible.
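To give a feel for the incomplete-input case, the sketch below shows one generic reading of a "minimum norm" attribution. When only some coalitions have been simulated, the linear system linking group contributions to observed outcome changes is underdetermined, and the smallest-norm solution is one way to pick a single answer. This is an assumption-laden illustration: it is unweighted, it does not compute Shapley bounds, and it should not be read as the paper's estimator. The function name and the numbers are hypothetical.

```python
import numpy as np


def min_norm_attribution(observed, k):
    """Minimum-norm least squares attribution from partially observed coalitions.

    observed: dict mapping frozenset of group indices -> simulated outcome.
              It must contain the empty coalition (the benchmark).
    k:        number of parameter groups.
    """
    base = observed[frozenset()]
    rows, ys = [], []
    for coalition, val in observed.items():
        if not coalition:
            continue  # the benchmark only fixes the baseline
        row = np.zeros(k)
        row[list(coalition)] = 1.0
        rows.append(row)
        ys.append(val - base)
    A, y = np.array(rows), np.array(ys)
    # For an underdetermined system, lstsq returns the solution of smallest 2-norm.
    phi, *_ = np.linalg.lstsq(A, y, rcond=None)
    return phi


# Hypothetical data: only the benchmark, one single-group switch,
# and the full counterfactual were simulated.
observed = {
    frozenset(): 10.0,
    frozenset({0}): 13.0,
    frozenset({0, 1, 2}): 16.0,
}
phi = min_norm_attribution(observed, k=3)
print(phi)
```

Here group 0 is pinned down by its own simulation, while the remaining change is split evenly between groups 1 and 2 because nothing in the observed runs distinguishes them.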

Implications and Future Work

The introduction of the group Shapley value sets a foundation for a structured and theoretically sound approach to interpreting counterfactual scenarios in economic models. This method, grounded in rigorous axiomatic foundations, facilitates the integration of economic theory with empirical analysis, opening avenues for "interpretable structural economics."

Potential future research directions include extending the method to accommodate multiple parameter sets beyond binary comparisons and addressing computational challenges in large-scale implementations. Such developments would enhance the method's applicability and efficiency in increasingly complex models, maintaining its relevance in both theoretical and applied contexts.

Conclusion

The group Shapley value offers a valuable tool for interpreting the impact of parameter changes in structural models. Its alignment with cooperative game theory principles ensures fair and consistent contribution assessments, translating theoretical concepts into practical insights. This paper's approach provides a promising framework for advancing understanding in economics and beyond, bridging the gap between model complexity and interpretability.