- The paper introduces Adaptive Prompting, a dynamic framework that adjusts prompt structures in real time to enhance reasoning performance.

- It shows that iterative validation and error correction improve accuracy on benchmarks such as GSM8K and MultiArith, bringing smaller models close to GPT-4 accuracy.

- The framework enables smaller language models to perform complex reasoning tasks efficiently, reducing reliance on sheer computational power.

Think Beyond Size: Adaptive Prompting for More Effective Reasoning

This essay explores the paper "Think Beyond Size: Adaptive Prompting for More Effective Reasoning" (2410.08130), which introduces a dynamic, iterative prompting framework aimed at enhancing the reasoning capabilities of LLMs. The framework, termed Adaptive Prompting, combines real-time adjustment of prompt structures with validation mechanisms so that smaller models can perform competitively without relying on scale.

Context and Motivation

LLMs such as GPT-3 and GPT-4 have shown remarkable proficiency in natural language processing tasks owing to their sheer size, often comprising hundreds of billions of parameters. These models are proficient few-shot learners and have achieved state-of-the-art results on tasks that demand complex reasoning, such as multi-step logical deduction. Chain-of-Thought (CoT) prompting exemplifies current methods that decompose a task into manageable reasoning steps. However, these methods rely on static templates that do not adjust to task complexity or to errors encountered during reasoning.

The exploration of Adaptive Prompting arises from the need to optimize LLMs' interaction with tasks through advanced prompting techniques rather than unbounded scaling, which incurs substantial computational and energy costs without proportional performance enhancement.

Methodology

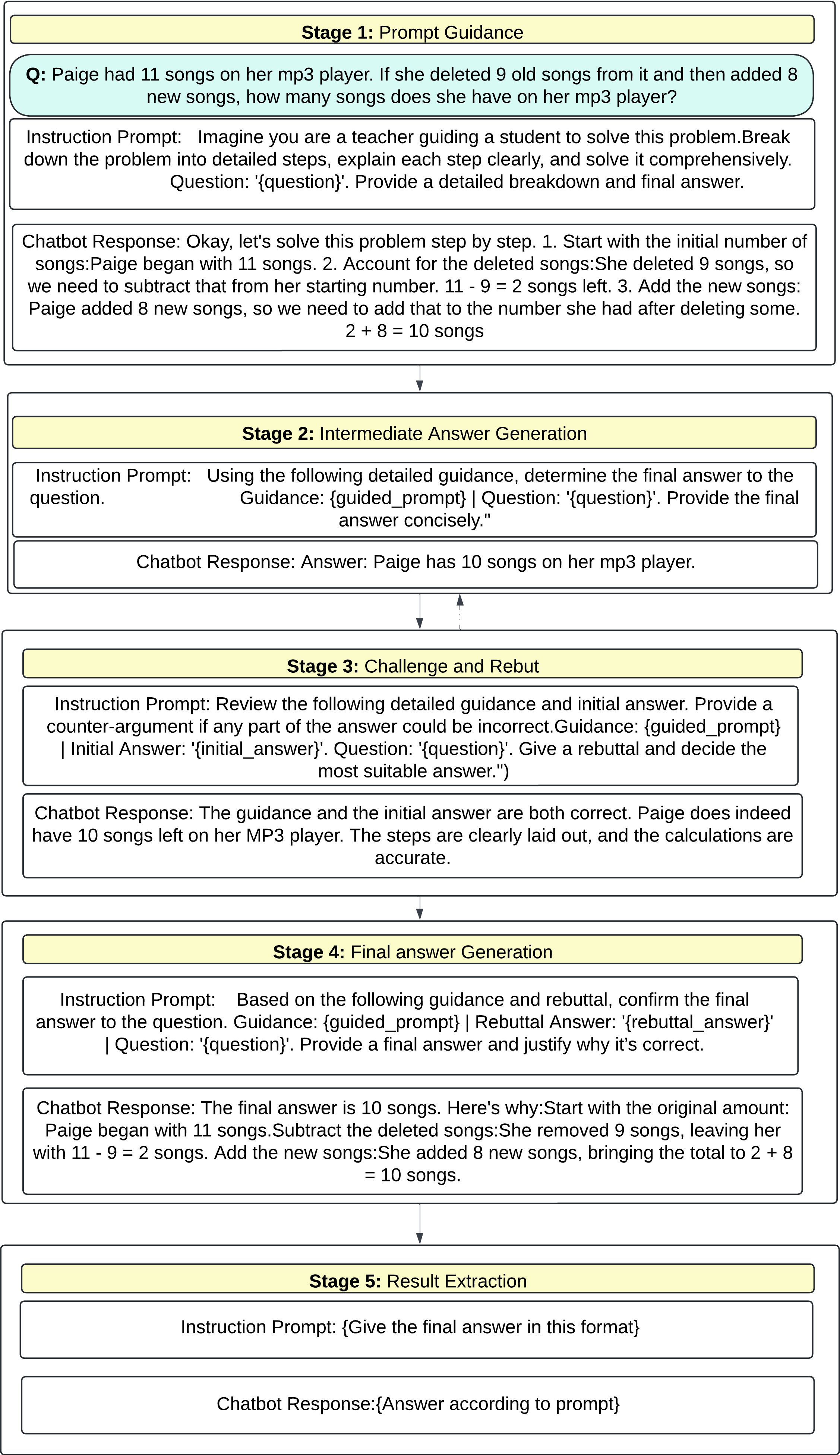

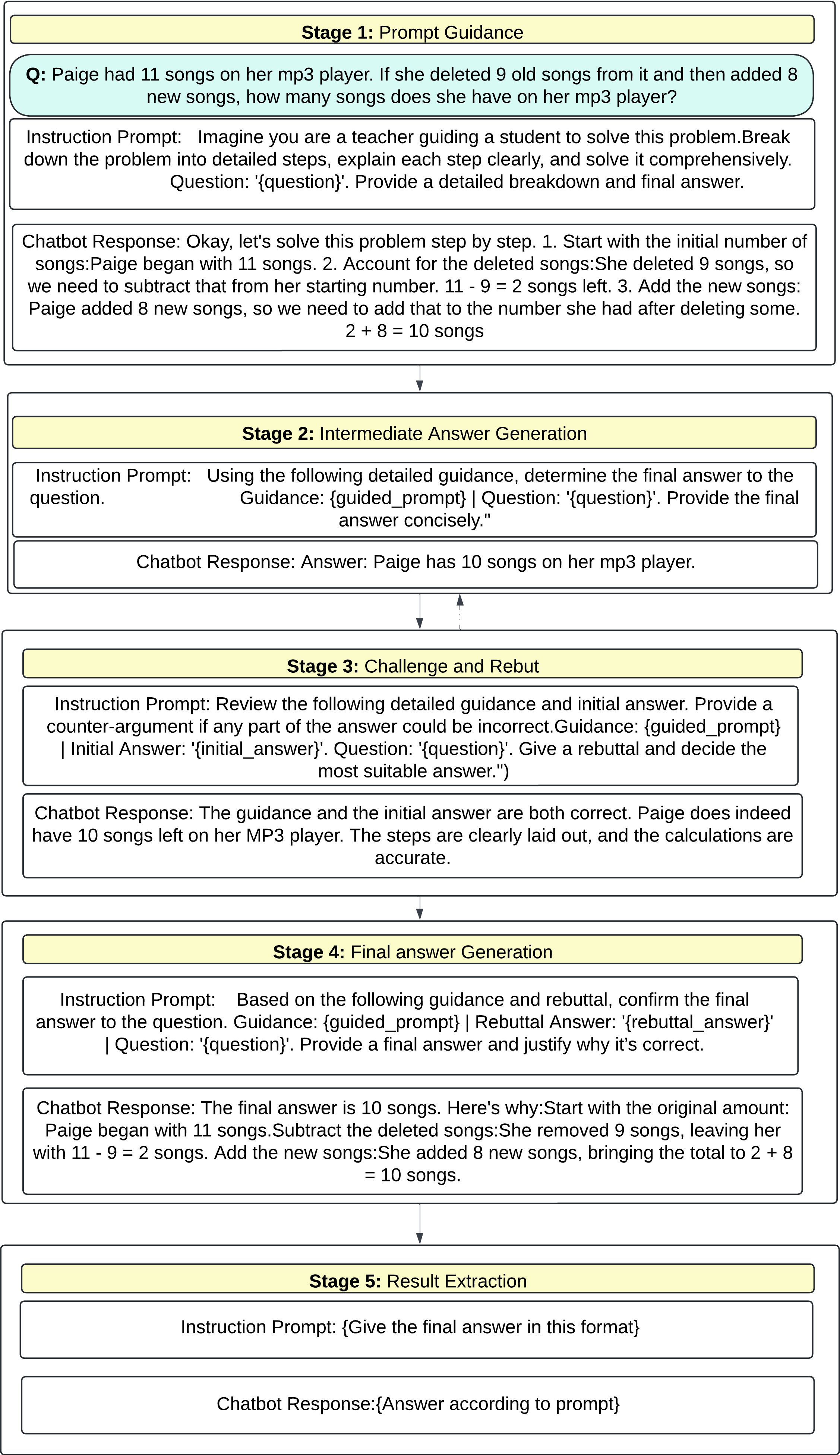

Adaptive Prompting is presented as a multifaceted framework that dynamically modifies prompt structures, tailoring them to the complexity and nature of the task at hand. The framework emphasizes iterative reasoning and incorporates intermediate validation and error-correction mechanisms that static prompting methods lack.

Figure 1: An illustrative flow of the Adaptive Prompting Framework.

Dynamic Prompt Adjustment: In Adaptive Prompting, prompt generation is not static but evolves based on task dynamics and model responses. This is in stark contrast to static methods where prompts are predetermined and do not adapt.
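The idea of choosing a prompt structure from the task and from earlier model behavior can be sketched as follows. This is a minimal illustration, not the paper's implementation: the template strings, the `estimate_complexity` heuristic (counting numeric quantities), and the thresholds are all assumptions made for the example.

```python
import re

# Hypothetical templates; the paper's actual prompt wording is not reproduced here.
TEMPLATES = {
    "direct": "Answer the question.\nQ: {question}\nA:",
    "stepwise": (
        "Solve the problem step by step, then state the final answer.\n"
        "Q: {question}\nA:"
    ),
    "stepwise_checked": (
        "Solve the problem step by step. After each step, check the arithmetic "
        "before continuing. Then state the final answer.\nQ: {question}\nA:"
    ),
}


def estimate_complexity(question: str) -> int:
    """Crude, assumed proxy for difficulty: the number of numeric quantities."""
    return len(re.findall(r"\d+(?:\.\d+)?", question))


def build_prompt(question: str, previous_error: bool = False) -> str:
    """Pick a more structured template for harder questions or after a failed attempt."""
    quantities = estimate_complexity(question)
    if previous_error or quantities >= 4:
        key = "stepwise_checked"
    elif quantities >= 2:
        key = "stepwise"
    else:
        key = "direct"
    return TEMPLATES[key].format(question=question)
```

A static method would correspond to always using one entry of `TEMPLATES`; here the choice depends on the question and on whether an earlier attempt failed.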

Iterative Validation and Error Correction: The framework incorporates mechanisms that validate intermediate results, employing a form of self-reflective reasoning akin to human cognitive processes. By iterating on problem-solving steps, Adaptive Prompting identifies and rectifies errors before finalizing an output.
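A validate-then-retry loop of this kind might look like the sketch below. It is an assumption-laden illustration rather than the authors' code: `generate` stands in for any text-generation function, the validator only rechecks arithmetic steps written as a single binary operation (`a op b = c`), and the feedback wording and `max_rounds` limit are invented for the example.

```python
import re
from typing import Callable, List

# Matches single binary steps such as "3 + 5 = 8"; chained expressions are not handled.
STEP = re.compile(
    r"(\d+(?:\.\d+)?)\s*([+\-*/])\s*(\d+(?:\.\d+)?)\s*=\s*(\d+(?:\.\d+)?)"
)


def find_arithmetic_errors(reasoning: str) -> List[str]:
    """Recompute every 'a op b = c' step and report the ones that do not hold."""
    errors = []
    for a, op, b, claimed in STEP.findall(reasoning):
        x, y, c = float(a), float(b), float(claimed)
        if op == "+":
            actual = x + y
        elif op == "-":
            actual = x - y
        elif op == "*":
            actual = x * y
        elif y == 0:
            errors.append(f"{a} / {b} divides by zero")
            continue
        else:
            actual = x / y
        if abs(actual - c) > 1e-6:
            errors.append(f"{a} {op} {b} = {claimed} is wrong; it should be {actual:g}")
    return errors


def solve_with_validation(
    question: str, generate: Callable[[str], str], max_rounds: int = 3
) -> str:
    """Generate, validate intermediate steps, and re-prompt with feedback on errors."""
    prompt = f"Solve step by step.\nQ: {question}\nA:"
    answer = ""
    for _ in range(max_rounds):
        answer = generate(prompt)
        errors = find_arithmetic_errors(answer)
        if not errors:
            return answer  # all checked steps hold, so accept this attempt
        prompt = (
            f"Solve step by step.\nQ: {question}\n"
            f"A previous attempt contained these errors: {'; '.join(errors)}\n"
            "Correct them and solve again.\nA:"
        )
    return answer  # best effort after max_rounds attempts
```

The validator here is deliberately narrow. A fuller system could swap in any check that returns a list of problems, including a second model call that critiques the reasoning.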

Performance on Reasoning Benchmarks: The paper evaluates Adaptive Prompting against a suite of reasoning benchmarks, such as GSM8K and MultiArith, showcasing enhanced performance over static methods, including substantial gains in arithmetic and commonsense reasoning tasks.

Results and Evaluation

The experimental results underline the effectiveness of Adaptive Prompting in significantly bolstering the reasoning performance of smaller models. Notably, the framework reaches accuracy competitive with models like GPT-4 on several benchmarks, with a reported 99.44% on the MultiArith dataset and 98.72% on GSM8K, and it surpasses static CoT methods in robustness and consistency.
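For readers unfamiliar with how accuracy is typically scored on arithmetic benchmarks of this kind, the sketch below shows one common approach: take the last number in the model's output as its answer and compare it with the reference. This is an assumed scoring scheme for illustration; the paper's own evaluation script is not described here, and the function names are hypothetical.

```python
import re
from typing import List, Optional


def extract_final_number(text: str) -> Optional[float]:
    """Treat the last number in the output as the model's final answer (an assumption)."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", text.replace(",", ""))
    return float(matches[-1]) if matches else None


def accuracy(predictions: List[str], gold: List[float]) -> float:
    """Percentage of outputs whose extracted final number equals the reference answer."""
    if not gold or len(predictions) != len(gold):
        raise ValueError("predictions and gold must be non-empty and the same length")
    correct = 0
    for output, reference in zip(predictions, gold):
        value = extract_final_number(output)
        if value is not None and abs(value - reference) < 1e-6:
            correct += 1
    return 100.0 * correct / len(gold)
```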

Adaptive Prompting enables smaller LLMs to approach the reasoning performance of larger, more computationally intensive models, achieving high accuracy on both arithmetic and commonsense reasoning datasets, as illustrated by a marked improvement in the Gemma 9B model's performance.

Implications and Future Directions

The implications of Adaptive Prompting are profound, suggesting a paradigm shift from reliance on sheer computational power towards smarter, more adaptive task interactions. The framework democratizes access to high-performing AI systems by allowing smaller, more efficient models to perform complex reasoning tasks effectively.

Future work needs to validate that performance stays consistent across diverse domains, with the goal of robust, adaptable systems that do not require task-specific calibration. Further research should also examine how to integrate Adaptive Prompting into human-AI collaborative environments, which could benefit sectors such as education and healthcare where complex reasoning tasks intersect with human decision-making.

Conclusion

The paper makes a significant contribution towards efficient, scalable AI by optimizing reasoning processes beyond brute-force scaling of LLMs. Adaptive Prompting represents a pivotal step in developing models that reason adaptively, thereby aligning model performance more closely with real-world application needs while maintaining computational efficiency.