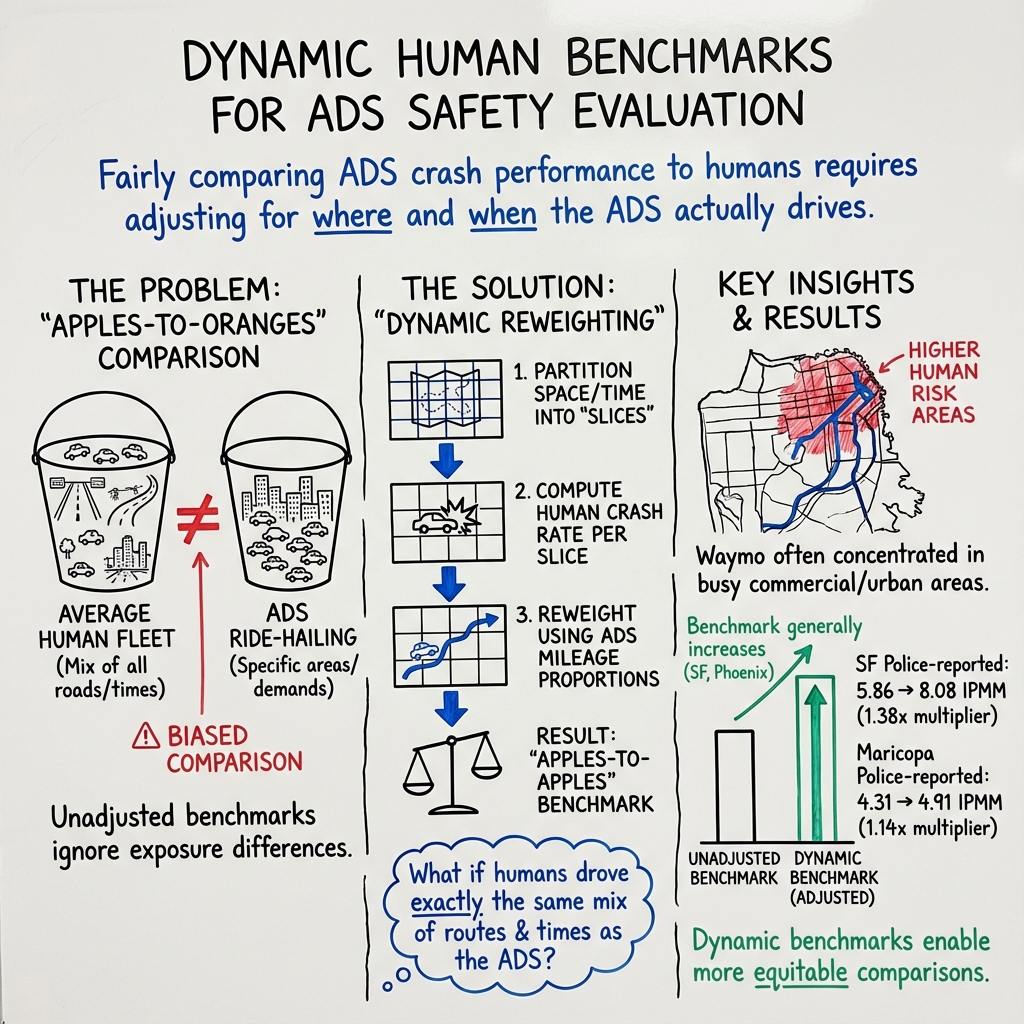

- The paper introduces a dynamic benchmarking approach that adjusts crash rates based on spatial and temporal variations, enabling more accurate ADS performance comparisons.

- It utilizes extensive datasets, including over 20 million miles of Waymo ride-hailing data and crash records from ADOT, SWITRS, and HPMS, to support its analysis.

- The study finds that spatial adjustment shifts benchmark crash rates by anywhere from 7% lower to 47% higher, depending on county and severity level, so an unadjusted benchmark can misstate the human baseline against which ADS safety is judged.

Purpose and Scope

The paper "Dynamic Benchmarks: Spatial and Temporal Alignment for ADS Performance Evaluation" (2410.08903) aims to refine how the performance of Automated Driving Systems (ADS) is evaluated, focusing on the SAE level 4+ systems that Waymo deploys in ride-hailing services in several regions of the United States. The authors introduce a dynamic benchmarking approach that adjusts human crash rates for spatial and temporal differences between ADS and human driving. This adjustment accounts for divergent driving patterns, rather than relying solely on the coarse geographic benchmarks traditionally used in ADS performance evaluations.

Methodology

Data Collection and Sources

The study combines police-reported crash data, vehicle miles traveled (VMT) data, and over 20 million miles from Waymo's ride-hailing operations. The crash data come from the Arizona Department of Transportation (ADOT) for Maricopa County and the Statewide Integrated Traffic Records System (SWITRS) for Los Angeles and San Francisco counties. VMT data are derived from the Highway Performance Monitoring System (HPMS) managed by the Federal Highway Administration.

Dynamic Benchmark Calculation

The dynamic benchmark methodology adjusts human crash rates according to the spatial and temporal distribution of ADS driving. Spatial adjustments use the S2 cell framework, a geospatial indexing system that partitions geographic areas into discrete cells of approximately 1.27 square km. Temporal adjustments use time of day, because the ADS tends to operate disproportionately during higher-risk periods, such as late afternoons and evenings, particularly in San Francisco.
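To make the temporal side concrete, the sketch below computes the share of ADS mileage that falls in each time-of-day bin. It is only an illustration: the one-hour bin width, the function name `mileage_share_by_hour`, and the trip records are assumptions, not details taken from the paper. It also attributes each trip's full mileage to the hour in which the trip starts, which is a simplification.

```python
from collections import defaultdict
from datetime import datetime


def mileage_share_by_hour(trips):
    """Return the fraction of total miles driven in each hour-of-day bin.

    `trips` is an iterable of (start_time, miles) pairs. Each trip's miles
    are attributed entirely to the hour in which the trip starts
    (an assumption made to keep the sketch short).
    """
    miles_by_hour = defaultdict(float)
    for start_time, miles in trips:
        miles_by_hour[start_time.hour] += miles

    total_miles = sum(miles_by_hour.values())
    if total_miles <= 0:
        raise ValueError("total mileage must be positive")

    return {hour: miles / total_miles
            for hour, miles in sorted(miles_by_hour.items())}


# Hypothetical trip records, invented for illustration only.
trips = [
    (datetime(2024, 1, 5, 8, 15), 10.0),
    (datetime(2024, 1, 5, 17, 40), 25.0),
    (datetime(2024, 1, 5, 17, 55), 5.0),
    (datetime(2024, 1, 5, 22, 5), 10.0),
]
shares = mileage_share_by_hour(trips)
for hour, share in shares.items():
    print(f"{hour:02d}:00  {share:.0%}")
```

Shares of this kind can serve as the weights in a time-of-day benchmark. A spatial version would key the same accumulation on a cell identifier instead of the hour.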

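The dynamic benchmark is computed as a weighted average of segment-specific human crash rates, where a segment is a spatial cell or a time-of-day bin. Each segment's human crash rate (crashes divided by human driving exposure in that segment) is weighted by the share of ADS mileage driven there. The result approximates the crash rate human drivers would have if their driving were distributed across segments the way the ADS's driving is.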
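The weighted average can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the `Segment` fields, the function names, the per-million-miles rate unit, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One spatial cell or time-of-day bin (field names are illustrative)."""
    human_crashes: float       # crashes recorded for human drivers
    human_vmt_millions: float  # human vehicle miles traveled, in millions
    ads_miles: float           # ADS miles driven in this segment


def unadjusted_benchmark(segments):
    """Region-wide human crash rate: total crashes over total human VMT."""
    total_crashes = sum(s.human_crashes for s in segments)
    total_vmt = sum(s.human_vmt_millions for s in segments)
    return total_crashes / total_vmt


def dynamic_benchmark(segments):
    """Human crash rate per segment, weighted by the ADS mileage share."""
    total_ads_miles = sum(s.ads_miles for s in segments)
    if total_ads_miles <= 0:
        raise ValueError("ADS mileage must be positive")

    rate = 0.0
    for s in segments:
        if s.ads_miles == 0:
            continue  # segments without ADS driving carry no weight
        if s.human_vmt_millions <= 0:
            raise ValueError("segment with ADS miles lacks human exposure")
        weight = s.ads_miles / total_ads_miles
        rate += weight * (s.human_crashes / s.human_vmt_millions)
    return rate


# Hypothetical numbers: the ADS drives mostly in the riskier segment.
segments = [
    Segment(human_crashes=40, human_vmt_millions=10, ads_miles=3000),
    Segment(human_crashes=20, human_vmt_millions=20, ads_miles=1000),
]
baseline = unadjusted_benchmark(segments)  # 60 / 30 = 2.0
adjusted = dynamic_benchmark(segments)     # 0.75 * 4.0 + 0.25 * 1.0 = 3.25
print(f"unadjusted: {baseline:.2f}, dynamic: {adjusted:.2f}")
```

In this made-up example the dynamic benchmark is higher than the unadjusted one because most ADS miles fall in the segment where human drivers crash more often. When the ADS concentrates in lower-risk segments, the adjustment moves the other way.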

Results

The effect of spatial adjustment varied across severity levels and counties. Relative to unadjusted benchmarks, spatially adjusted crash rates were 10% to 47% higher in San Francisco, 12% to 20% higher in Maricopa, and from 7% lower to 34% higher in Los Angeles. Time-of-day adjustment in San Francisco had a more modest effect, ranging from 2% lower to 16% higher than unadjusted benchmarks. These shifts underscore the need for such calibration when aligning ADS safety assessments with human benchmarks.

Discussion

The dynamic benchmark framework offers a more nuanced view of ADS performance by accounting for confounding variables in crash data. The study identifies spatial and temporal variation as key factors influencing crash risk and recommends exploring other dimensions, such as weather, day of week, and seasonal effects, which could improve benchmark accuracy but are constrained by current data limitations. The paper suggests continued refinement of the methodology to cover a broader range of circumstances that influence crash risk.

Implications and Future Directions

This research has practical implications for making comparisons between ADS and human driving safety more equitable. The dynamic benchmark methodology improves on conventional benchmarks by matching the human baseline to where and when the ADS actually drives. Future studies may benefit from more granular data to refine these benchmarks and to incorporate additional factors, giving a more complete picture of ADS performance.

Conclusion

The study emphasizes the importance of dynamic benchmarks for evaluating ADS performance, since they reduce the bias introduced by spatial and temporal differences between ADS and human driving. These adjustments support more reliable comparisons, which matter both for assessing ADS deployments in real-world conditions and for understanding their safety impact. As ADS technology advances, such methodologies are likely to play an important role in safety evaluation and in building public trust in autonomous driving.