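- The paper establishes rigorous sparsity bounds for the Strong Lottery Ticket Hypothesis (SLTH) via the Random Fixed-Size Subset Sum (RFSS) problem, quantifying how large a random network must be to contain a sparse subnetwork that approximates a target network.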

- It uses probabilistic tools, including the second-moment method and local limit theorems, to refine the classical SLTH proofs.

- The findings offer a theoretical basis for model compression, guiding efficient neural network design for resource-constrained applications.

On the Sparsity of the Strong Lottery Ticket Hypothesis

Introduction

The paper "On the Sparsity of the Strong Lottery Ticket Hypothesis" addresses a key limitation in the theory behind the Strong Lottery Ticket Hypothesis (SLTH). The hypothesis states that a sufficiently large, randomly initialized neural network N can be pruned, without any training, to reveal a sparse subnetwork, or "winning ticket," that approximates any target neural network Nt. Previous SLTH results gave no guarantees on the size of these pruned subnetworks. The authors address this by proving tight bounds on their sparsity using the Random Fixed-Size Subset Sum (RFSS) problem, an extension and refinement of the Random Subset Sum (RSS) problem.
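For reference, the difference between the two problems can be written as follows. This is a sketch in our own notation, assuming n i.i.d. random samples X_1, …, X_n, a precision ε, a subset size k, and targets in [-1, 1]; the paper's exact assumptions on the sample distribution and target range may differ. In both cases the statement is required to hold with high probability over the draw of the samples.

```latex
% [n] denotes the index set \{1, \dots, n\}
\begin{align*}
\text{RSS:}\quad  & \forall z \in [-1,1]\ \ \exists S \subseteq [n] :
  \Bigl|\, z - \textstyle\sum_{i \in S} X_i \Bigr| \le \varepsilon, \\
\text{RFSS:}\quad & \forall z \in [-1,1]\ \ \exists S \subseteq [n],\ |S| = k :
  \Bigl|\, z - \textstyle\sum_{i \in S} X_i \Bigr| \le \varepsilon.
\end{align*}
```

The only change is the constraint |S| = k. Roughly speaking, in SLTH constructions each chosen subset corresponds to random weights that the winning ticket keeps, so fixing its size is what allows the number of retained weights, and hence the sparsity, to be controlled.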

Strong Lottery Ticket Hypothesis and Sparsity

The SLTH posits that a randomly initialized network contains a sparse subnetwork that, without any training, approximates a target network Nt and therefore matches its performance. However, earlier proofs only established that such subnetworks exist, without controlling their sparsity. This gap matters in practice, since the sparsity of a winning ticket determines how much memory and computation it actually saves. The paper determines precisely how large N must be to ensure that a highly sparse subnetwork can approximate Nt.
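To make "sparsity" concrete: pruning amounts to multiplying the random weights element-wise by a binary mask, and the density γ of the resulting subnetwork (the quantity that appears in Figure 1) is the fraction of weights the mask keeps, with sparsity equal to 1 − γ. The minimal sketch below assumes a single dense layer initialized from Uniform[-1, 1] and a hand-picked, hypothetical mask; the function names are illustrative and not taken from the paper.

```python
import random

def random_layer(rows, cols, rng):
    # Weights drawn i.i.d. from Uniform[-1, 1] (an illustrative initialization).
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

def apply_mask(weights, mask):
    # Pruning = element-wise product with a binary mask; no weight is trained.
    return [[w * m for w, m in zip(w_row, m_row)] for w_row, m_row in zip(weights, mask)]

def density(mask):
    # Density gamma = kept weights / total weights; sparsity = 1 - gamma.
    total = sum(len(row) for row in mask)
    kept = sum(sum(row) for row in mask)
    return kept / total

rng = random.Random(0)
W = random_layer(4, 5, rng)
# Hypothetical mask that keeps only the first weight of each row (4 of 20 weights).
M = [[1 if j == 0 else 0 for j in range(5)] for _ in range(4)]
W_pruned = apply_mask(W, M)
print(density(M))  # 0.2
```

The SLTH question is then how large the random network must be so that some mask of a prescribed density γ yields a subnetwork close to Nt.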

The key step is a set of bounds for the RFSS problem, which, unlike the RSS problem, requires the approximating subsets to have a fixed size. Applied to the classical SLTH settings of dense and equivariant networks, these bounds yield winning tickets whose sparsity is controlled explicitly, so they are sparse enough to be computationally efficient while still approximating the target network.

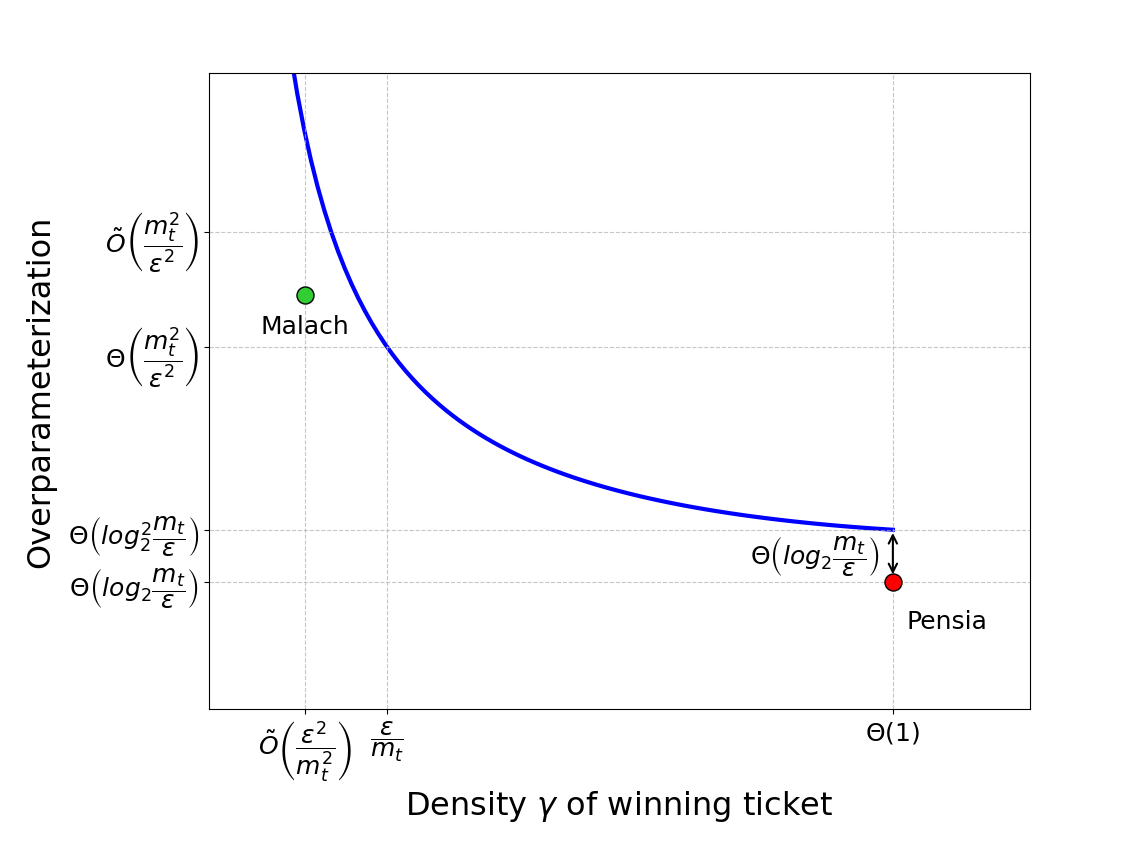

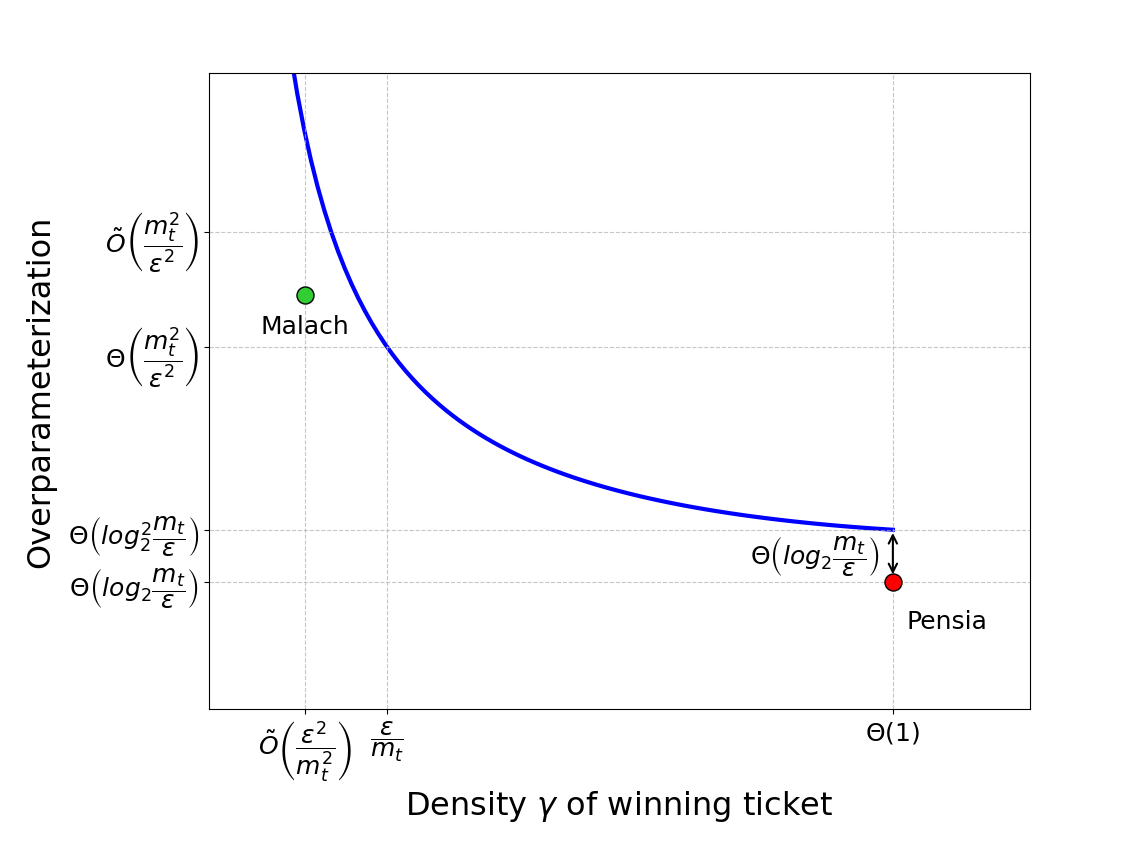

Figure 1: A qualitative plot showing the relationship between the density γ of a winning ticket and the overparameterization required by the paper's SLTH theorem.

Technical Approach

The authors extend the classical proofs with a new technical tool, the RFSS problem, which fixes the size of the subsets used to approximate target values. They identify conditions, relating the number of random samples, the subset size, and the approximation precision, under which a random sample can, with high probability, approximate every target value in a given range using subsets of a fixed size. This improves on the RSS problem by making the sparsity of the construction explicit.
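The fixed-size condition can be checked numerically for tiny instances. The brute-force sketch below is ours, not the paper's method: it assumes Uniform[-1, 1] samples, targets in [-1, 1], and approximates the supremum over targets with a finite grid. It enumerates every k-subset, so it is only feasible for small n. All function names are hypothetical.

```python
import bisect
import itertools
import random

def fixed_size_sums(samples, k):
    # Sums of exactly k samples: the RFSS constraint (RSS would allow any subset size).
    return sorted(sum(c) for c in itertools.combinations(samples, k))

def best_error(sorted_sums, z):
    # The sum closest to z is one of the two neighbours of z's insertion point.
    i = bisect.bisect_left(sorted_sums, z)
    return min(abs(z - s) for s in sorted_sums[max(i - 1, 0): i + 1])

def worst_case_error(samples, k, num_targets=201, lo=-1.0, hi=1.0):
    # Grid approximation of sup_{z in [lo, hi]} min_{|S| = k} |z - sum_{i in S} X_i|.
    sums = fixed_size_sums(samples, k)
    targets = (lo + (hi - lo) * t / (num_targets - 1) for t in range(num_targets))
    return max(best_error(sums, z) for z in targets)

def rfss_holds(samples, k, eps, **grid):
    # True if every grid target has a k-subset whose sum lies within eps of it.
    return worst_case_error(samples, k, **grid) <= eps

rng = random.Random(1)
for n in (8, 12, 16):
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    print(n, round(worst_case_error(xs, k=3), 4))
```

Sorting the subset sums once and using binary search keeps each target query logarithmic in the number of subsets. The exponential cost of enumerating the subsets is exactly why the paper's guarantees are proved analytically rather than by search.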

Technically, the analysis draws on probability and approximation theory, in particular on bounds for the probability density functions of sums of random variables. The proofs build on the second-moment method and a refined application of local limit theorems, sharpening the techniques used in prior work.
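As a reminder of how the second-moment method works: if X counts the k-subsets whose sum lands within ε of a target z, then the Cauchy-Schwarz inequality gives P(X > 0) ≥ E[X]² / E[X²]. In a proof, both moments are bounded analytically. The sketch below, which is only an illustration under our own assumptions (Uniform[-1, 1] samples, hypothetical function names and parameters), estimates them by Monte Carlo instead and compares the resulting lower bound with the observed hit rate.

```python
import itertools
import random

def count_good_subsets(samples, k, z, eps):
    # X = number of k-subsets whose sum is within eps of the target z.
    return sum(1 for c in itertools.combinations(samples, k) if abs(sum(c) - z) <= eps)

def second_moment_estimate(n, k, z, eps, trials, seed=0):
    rng = random.Random(seed)
    xs = []
    for _ in range(trials):
        samples = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        xs.append(count_good_subsets(samples, k, z, eps))
    mean = sum(xs) / trials                      # estimate of E[X]
    second = sum(x * x for x in xs) / trials     # estimate of E[X^2]
    p_hit = sum(1 for x in xs if x > 0) / trials # estimate of P(X > 0)
    # Cauchy-Schwarz: E[X]^2 = E[X * 1{X > 0}]^2 <= E[X^2] * P(X > 0).
    bound = mean * mean / second if second > 0 else 0.0
    return mean, second, bound, p_hit

mean, second, bound, p_hit = second_moment_estimate(n=10, k=3, z=0.3, eps=0.05, trials=200)
print(f"E[X]~{mean:.2f}  E[X^2]~{second:.2f}  bound~{bound:.3f}  P(X>0)~{p_hit:.3f}")
```

The bound is useful when E[X²] is not much larger than E[X]², that is, when the counts of good subsets do not fluctuate wildly. Controlling the correlations between overlapping subsets that drive E[X²] is where the technical work lies.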

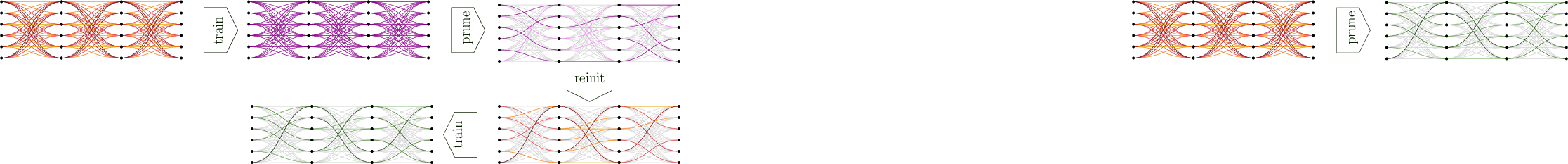

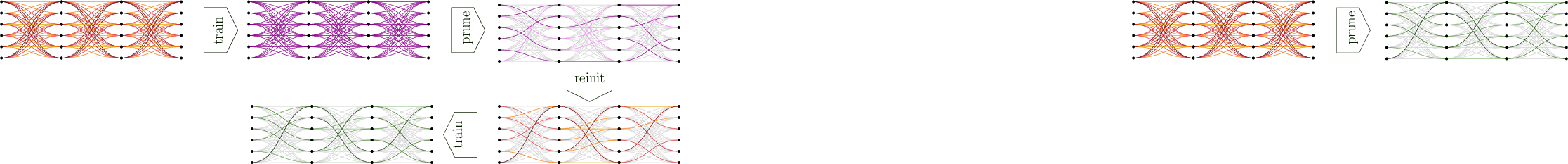

Figure 2: The procedure for identifying lottery tickets under the original Lottery Ticket Hypothesis (LTH), which iteratively trains, prunes, and resets the weights; the LTH is the precursor of the SLTH.
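The loop in Figure 2 can be sketched in a few lines. This is a toy illustration under our own assumptions: a linear regression model stands in for a deep network, and the data, learning rate, pruning fraction, and function names are all hypothetical. Each round trains the current subnetwork, prunes the smallest-magnitude surviving weights, and resets the survivors to their initial values. Note that, unlike the SLTH setting, this procedure does train the weights.

```python
import random

def predict(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def train(w_init, mask, data, lr=0.1, steps=100):
    # Gradient descent on mean-squared error; pruned weights are held at zero.
    w = [wi * mi for wi, mi in zip(w_init, mask)]
    n = len(data)
    for _ in range(steps):
        grad = [0.0] * len(w)
        for x, y in data:
            r = predict(w, x) - y
            for j, xj in enumerate(x):
                grad[j] += r * xj / n
        w = [(wj - lr * gj) * mj for wj, gj, mj in zip(w, grad, mask)]
    return w

def iterative_magnitude_pruning(data, dim, rounds=2, prune_frac=0.5, seed=0):
    rng = random.Random(seed)
    w0 = [rng.gauss(0.0, 0.1) for _ in range(dim)]  # initialization survivors reset to
    mask = [1.0] * dim
    for _ in range(rounds):
        w = train(w0, mask, data)                    # 1. train the current subnetwork
        alive = [j for j in range(dim) if mask[j]]
        alive.sort(key=lambda j: abs(w[j]))          # 2. rank survivors by magnitude
        for j in alive[: int(prune_frac * len(alive))]:
            mask[j] = 0.0                            #    and prune the smallest ones
        # 3. reset: the next round retrains from w0 restricted to the new mask
    return mask, train(w0, mask, data)

rng = random.Random(42)
w_true = [2.0, 0.0, 0.0, -1.5, 0.0, 0.0, 0.0, 0.0]  # hypothetical sparse target
data = []
for _ in range(60):
    x = [rng.gauss(0.0, 1.0) for _ in range(8)]
    data.append((x, predict(w_true, x)))

mask, w_final = iterative_magnitude_pruning(data, dim=8, rounds=2)
print(mask)
print([round(v, 3) for v in w_final])
```

Halving the surviving weights in each of two rounds leaves 2 of the 8 weights. Resetting to w0, rather than keeping the trained values, is what distinguishes this procedure from plain prune-and-fine-tune.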

Results and Implications

The work has several implications. First, it gives practitioners a concrete way to determine how large a randomly initialized network must be to guarantee that it contains a winning ticket of a given sparsity. This bears directly on model compression and efficiency, which are vital for deploying neural networks in resource-constrained environments.

Second, the bounds for the RFSS problem are a general result in approximation theory and could prove useful beyond neural networks, for example in error-correcting codes or sparse representations in signal processing.

Limitations and Future Work

Despite these theoretical advances, reliably finding such subnetworks in practice remains an open problem. Empirical evidence supports their existence, but algorithms for discovering them efficiently still need refinement. Future work will focus on developing or improving algorithms that can efficiently uncover these subnetworks in large randomly initialized networks. Progress here would bridge the gap between theoretical possibility and practical application, making the SLTH a more viable tool in applied AI.

Conclusion

This research makes significant progress in understanding the structural properties of neural networks under the SLTH. By establishing bounds on subnetwork sparsity through the RFSS problem, it not only addresses a longstanding limitation in the SLTH literature but also lays a foundation for future work on practical applications of these subnetworks.