WorldSimBench: Towards Video Generation Models as World Simulators

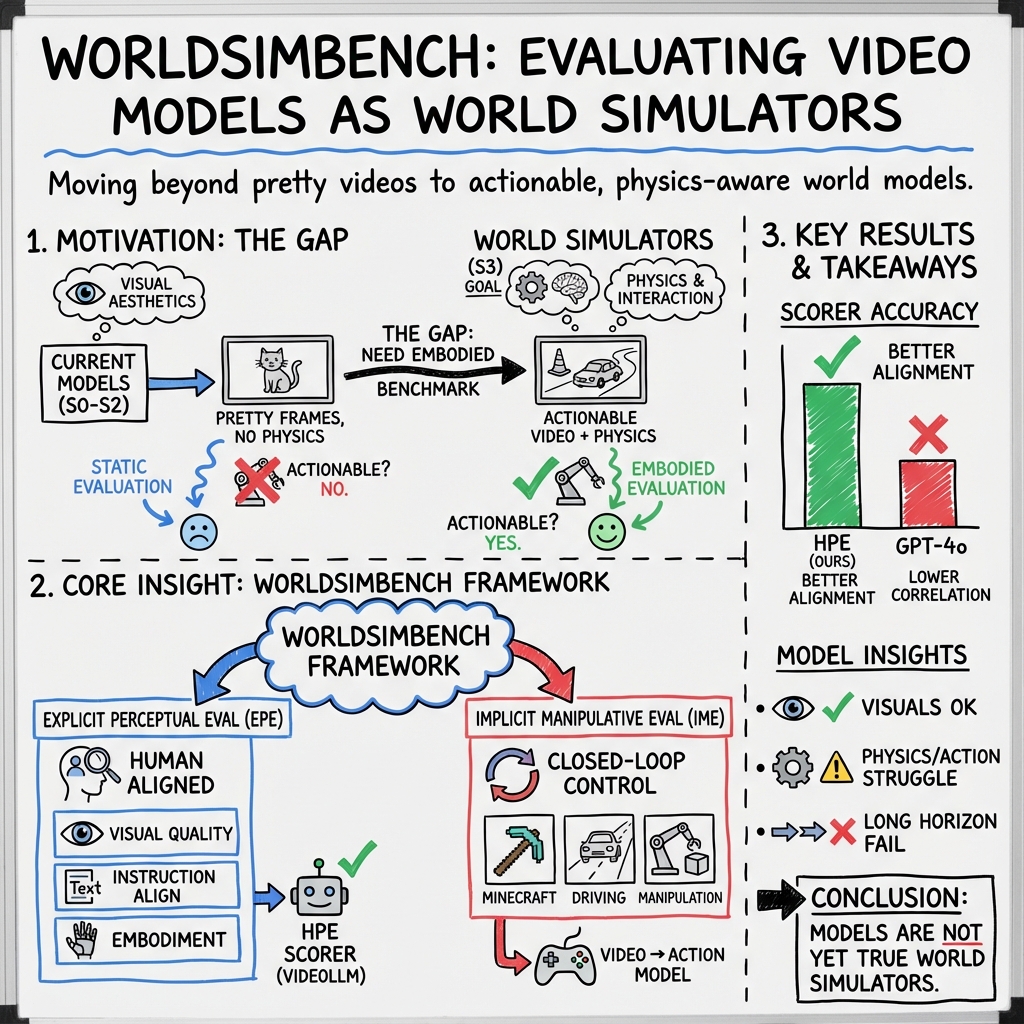

Abstract: Recent advancements in predictive models have demonstrated exceptional capabilities in predicting the future state of objects and scenes. However, the lack of categorization based on inherent characteristics continues to hinder the progress of predictive model development. Additionally, existing benchmarks are unable to effectively evaluate higher-capability, highly embodied predictive models from an embodied perspective. In this work, we classify the functionalities of predictive models into a hierarchy and take the first step in evaluating World Simulators by proposing a dual evaluation framework called WorldSimBench. WorldSimBench includes Explicit Perceptual Evaluation and Implicit Manipulative Evaluation, encompassing human preference assessments from the visual perspective and action-level evaluations in embodied tasks, covering three representative embodied scenarios: Open-Ended Embodied Environment, Autonomous Driving, and Robot Manipulation. In the Explicit Perceptual Evaluation, we introduce the HF-Embodied Dataset, a video assessment dataset based on fine-grained human feedback, which we use to train a Human Preference Evaluator that aligns with human perception and explicitly assesses the visual fidelity of World Simulators. In the Implicit Manipulative Evaluation, we assess the video-action consistency of World Simulators by evaluating whether the generated situation-aware video can be accurately translated into the correct control signals in dynamic environments. Our comprehensive evaluation offers key insights that can drive further innovation in video generation models, positioning World Simulators as a pivotal advancement toward embodied artificial intelligence.

- Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Frozen in time: A joint video and image encoder for end-to-end retrieval. In Proceedings of the IEEE/CVF international conference on computer vision, pp. 1728–1738, 2021.

- Video pretraining (vpt): Learning to act by watching unlabeled online videos. Advances in Neural Information Processing Systems, 35:24639–24654, 2022.

- Zero-shot robotic manipulation with pretrained image-editing diffusion models. arXiv preprint arXiv:2310.10639, 2023.

- Instructpix2pix: Learning to follow image editing instructions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 18392–18402, 2023.

- nuscenes: A multimodal dataset for autonomous driving. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pp. 11621–11631, 2020.

- Egoplan-bench: Benchmarking egocentric embodied planning with multimodal large language models. arXiv preprint arXiv:2312.06722, 2023.

- Rh20t-p: A primitive-level robotic dataset towards composable generalization agents. arXiv preprint arXiv:2403.19622, 2024.

- Vicuna: An open-source chatbot impressing gpt-4 with 90%* chatgpt quality. See https://vicuna.lmsys.org (accessed 14 April 2023), 2023.

- Carla: An open urban driving simulator. In Conference on robot learning, pp. 1–16. PMLR, 2017.

- Palm-e: An embodied multimodal language model. arXiv preprint arXiv:2303.03378, 2023.

- Video language planning. arXiv preprint arXiv:2310.10625, 2023.

- Learning universal policies via text-guided video generation. Advances in Neural Information Processing Systems, 36, 2024.

- Bridge data: Boosting generalization of robotic skills with cross-domain datasets. arXiv preprint arXiv:2109.13396, 2021.

- Rh20t: A robotic dataset for learning diverse skills in one-shot. In RSS 2023 Workshop on Learning for Task and Motion Planning, 2023.

- Guiding instruction-based image editing via multimodal large language models. arXiv preprint arXiv:2309.17102, 2023.

- Vista: A generalizable driving world model with high fidelity and versatile controllability. arXiv preprint arXiv:2405.17398, 2024.

- The "something something" video database for learning and evaluating visual common sense. In Proceedings of the IEEE international conference on computer vision, pp. 5842–5850, 2017.

- Ego4d: Around the world in 3,000 hours of egocentric video. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 18995–19012, 2022.

- Animatediff: Animate your personalized text-to-image diffusion models without specific tuning. arXiv preprint arXiv:2307.04725, 2023.

- Minerl: A large-scale dataset of minecraft demonstrations. arXiv preprint arXiv:1907.13440, 2019.

- World models. arXiv preprint arXiv:1803.10122, 2018.

- Mmworld: Towards multi-discipline multi-faceted world model evaluation in videos. arXiv preprint arXiv:2406.08407, 2024.

- Lora: Low-rank adaptation of large language models, 2021.

- Vbench: Comprehensive benchmark suite for video generative models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 21807–21818, 2024.

- Planning with diffusion for flexible behavior synthesis. arXiv preprint arXiv:2205.09991, 2022.

- Open-sora-plan, April 2024. URL https://doi.org/10.5281/zenodo.10948109.

- Lego: Learning egocentric action frame generation via visual instruction tuning. arXiv preprint arXiv:2312.03849, 2023.

- Manipllm: Embodied multimodal large language model for object-centric robotic manipulation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 18061–18070, 2024.

- Steve-1: A generative model for text-to-behavior in minecraft. Advances in Neural Information Processing Systems, 36, 2024.

- Visual instruction tuning. In NeurIPS, 2023a.

- Agentbench: Evaluating llms as agents. arXiv preprint arXiv:2308.03688, 2023b.

- Visualagentbench: Towards large multimodal models as visual foundation agents. arXiv preprint arXiv:2408.06327, 2024a.

- Evalcrafter: Benchmarking and evaluating large video generation models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 22139–22149, 2024b.

- From gpt-4 to gemini and beyond: Assessing the landscape of mllms on generalizability, trustworthiness and causality through four modalities, 2024.

- Calvin: A benchmark for language-conditioned policy learning for long-horizon robot manipulation tasks. IEEE Robotics and Automation Letters, 7(3):7327–7334, 2022.

- OpenAI. Gpt-4o. https://openai.com/index/hello-gpt-4o/, 2024.

- Scalable diffusion models with transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 4195–4205, 2023.

- Mp5: A multi-modal open-ended embodied system in minecraft via active perception. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 16307–16316. IEEE, 2024.

- Language models are unsupervised multitask learners. OpenAI blog, 1(8):9, 2019.

- Lmdrive: Closed-loop end-to-end driving with large language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 15120–15130, 2024.

- Assessment of multimodal large language models in alignment with human values, 2024.

- Gemini: a family of highly capable multimodal models. arXiv preprint arXiv:2312.11805, 2023.

- Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971, 2023.

- Voyager: An open-ended embodied agent with large language models. arXiv preprint arXiv:2305.16291, 2023a.

- Modelscope text-to-video technical report. arXiv preprint arXiv:2308.06571, 2023b.

- Lavie: High-quality video generation with cascaded latent diffusion models. arXiv preprint arXiv:2309.15103, 2023c.

- Describe, explain, plan and select: Interactive planning with large language models enables open-world multi-task agents. arXiv preprint arXiv:2302.01560, 2023d.

- Dynamicrafter: Animating open-domain images with video diffusion priors. arXiv preprint arXiv:2310.12190, 2023.

- Easyanimate: A high-performance long video generation method based on transformer architecture. arXiv preprint arXiv:2405.18991, 2024.

- Learning interactive real-world simulators. arXiv preprint arXiv:2310.06114, 2023.

- LAMM: language-assisted multi-modal instruction-tuning dataset, framework, and benchmark. In NeurIPS, 2023.

- Flash-vstream: Memory-based real-time understanding for long video streams, 2024a.

- Ad-h: Autonomous driving with hierarchical agents. arXiv preprint arXiv:2406.03474, 2024b.

- Open-sora: Democratizing efficient video production for all, March 2024. URL https://github.com/hpcaitech/Open-Sora.

- Minedreamer: Learning to follow instructions via chain-of-imagination for simulated-world control. arXiv preprint arXiv:2403.12037, 2024.

Explain it Like I'm 14

Overview

This paper introduces WorldSimBench, a new way to test video-generating AI models that try to “imagine” what will happen next in the world and produce videos that can guide real actions. The goal is to move toward “World Simulators” — video models that don’t just look good, but also follow physics, make sense in 3D, and can be turned into correct actions for tasks like driving, playing Minecraft, or controlling a robot arm.

Key Questions and Objectives

The researchers focus on two simple questions:

- How can we organize different “prediction” models by what they output (text, images, videos, or videos you can act on), and how “embodied” they are (how well they connect to the physical world)?

- How can we fairly test high-level video models (World Simulators) from both a human perspective (does it look right?) and an action perspective (does it help complete tasks correctly)?

Methods and Approach (Explained Simply)

Think of WorldSimBench as a two-part report card for future-guessing video AIs:

- The “looks-right” test (Explicit Perceptual Evaluation):

  - The team built a dataset called HF-Embodied with 35,701 short videos, each scored by humans on many details like:

    - Visual quality (does the video look clean, are backgrounds and moving objects consistent?)

    - Condition consistency (does the video follow the instruction given?)

    - Embodiment (does motion follow physics, is there depth, are interactions realistic, is it safe in driving?)

  - They then trained a “Human Preference Evaluator,” a video understanding model tuned to score videos like a human would. This lets them automatically judge new videos in a human-like way.

- The “does-it-work” test (Implicit Manipulative Evaluation):

  - The model generates a “future video” based on an instruction and current observation.

  - A video-to-action system translates that future video into control signals (like steering, moving a mouse, or robot arm commands) in a simulator.

  - If the actions achieve the goal (like collecting wood in Minecraft, following a route in driving, or opening a drawer with a robot), the video model did well, because its imagined future led to correct actions.
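The closed loop behind the “does-it-work” test can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: `run_closed_loop`, `EpisodeResult`, and the four callables passed in are hypothetical names, and the toy environment at the end stands in for a real simulator such as MineRL, CARLA, or CALVIN.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple

# Hypothetical placeholder types; a real setup would use simulator-specific ones.
Observation = Any
Action = Any
Video = List[Any]  # a generated clip, represented here as a list of frames


@dataclass
class EpisodeResult:
    success: bool
    steps: int


def run_closed_loop(
    generate_video: Callable[[str, Observation], Video],
    video_to_action: Callable[[Video, Observation], List[Action]],
    env_reset: Callable[[], Observation],
    env_step: Callable[[Action], Tuple[Observation, bool, bool]],
    instruction: str,
    max_steps: int = 100,
) -> EpisodeResult:
    """Imagine a future video, act on it, observe the result, and repeat."""
    obs = env_reset()
    steps = 0
    while steps < max_steps:
        future = generate_video(instruction, obs)   # the model under test
        actions = video_to_action(future, obs)      # e.g. an IDM or goal-based policy
        if not actions:
            break  # no executable actions could be extracted from the video
        for action in actions:
            obs, success, done = env_step(action)   # (observation, success, done)
            steps += 1
            if success:
                return EpisodeResult(True, steps)
            if done or steps >= max_steps:
                return EpisodeResult(False, steps)
    return EpisodeResult(False, steps)


def toy_demo() -> EpisodeResult:
    """Toy 1-D world: the agent must reach position 3."""
    state = {"pos": 0}

    def reset() -> int:
        state["pos"] = 0
        return state["pos"]

    def step(action: int) -> Tuple[int, bool, bool]:
        state["pos"] += action
        return state["pos"], state["pos"] >= 3, False

    def generate(instruction: str, obs: int) -> List[int]:
        return [obs + 1, obs + 2]  # the "video" is two predicted future positions

    def to_actions(video: List[int], obs: int) -> List[int]:
        frames = [obs] + video
        return [b - a for a, b in zip(frames, frames[1:])]  # frame-to-frame deltas

    return run_closed_loop(generate, to_actions, reset, step, "reach position 3")
```

In this sketch the task outcome depends on both the generator and the video-to-action module, which is why the benchmark treats success as implicit evidence about the quality of the generated video.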

They tested three kinds of tasks (three “embodied scenarios”):

- Open-Ended Embodied Environment (Minecraft): survival-style tasks like moving, collecting items, chopping trees.

- Autonomous Driving (CARLA): following routes safely in a car simulator.

- Robot Manipulation (CALVIN): using a robot arm to do things like pulling a handle or pressing a button.

They also propose a simple hierarchy of prediction models:

- S0: Predicts text (plans or instructions).

- S1: Predicts images (single pictures of future goals).

- S2: Predicts videos (moving scenes) mainly judged by looks.

- S3: Predicts actionable videos — videos that are realistic enough to be converted into correct actions. These are “World Simulators.”
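The hierarchy above can be written down as a small ordered enumeration. This is only an illustrative sketch: the `Stage` enum and the `classify` helper are hypothetical names, and the boolean `actionable` flag is a simplification of the paper's qualitative criterion for S3.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Hierarchy of predictive models, ordered by degree of embodiment."""
    S0 = 0  # predicts text (plans or instructions)
    S1 = 1  # predicts images (future goal pictures)
    S2 = 2  # predicts videos judged mainly by looks
    S3 = 3  # predicts actionable videos ("World Simulators")


def classify(output_modality: str, actionable: bool = False) -> Stage:
    """Map an output modality to a stage; `actionable` only matters for video."""
    modality = output_modality.lower()
    if modality == "text":
        return Stage.S0
    if modality == "image":
        return Stage.S1
    if modality == "video":
        return Stage.S3 if actionable else Stage.S2
    raise ValueError(f"unknown output modality: {output_modality!r}")
```

Because `IntEnum` members are ordered, comparisons such as `Stage.S3 > Stage.S2` express that one stage sits higher in the hierarchy than another.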

Main Findings and Why They Matter

- The Human Preference Evaluator (their trained scoring model) matched human judgments better than GPT-4o on their tests. This means their “looks-right” test is strong and reliable.

- Most current video generation models struggle with physics and 3D consistency:

  - In Minecraft, many models failed to show believable physical interactions (like blocks breaking correctly) or realistic movement.

  - In driving, some videos looked okay but often lacked proper depth and realistic elements (like pedestrians and cars), hurting safety and route following.

  - In robot tasks, models typically showed neat static scenes but didn’t align well with the instructions, leading to aimless motions.

- When they tried converting the generated videos into actions, performance varied a lot:

  - Models that made better, stable trajectories did better on driving and simpler robot tasks.

  - Models conditioned on the first frame (to be “situation-aware”) sometimes struggled to keep physics and 3D right over time.

- Overall, today’s video generation models are not yet true World Simulators. They need to improve on physics, instruction-following, and 3D realism to reliably guide actions.
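Agreement between an automatic evaluator and human raters is commonly quantified with the Pearson linear correlation coefficient (PLCC), which the glossary lists as a statistic employed in the paper. The sketch below shows the computation on made-up scores; the function name `plcc` and the example ratings are assumptions for illustration, not data from the paper.

```python
import math
from typing import Sequence


def plcc(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson linear correlation coefficient between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("PLCC is undefined when one sequence is constant")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical 1-5 ratings for six videos (illustrative values only).
human_scores = [5, 4, 2, 1, 3, 4]
evaluator_scores = [4, 4, 2, 1, 3, 5]
agreement = plcc(human_scores, evaluator_scores)
```

A value near 1 means the evaluator ranks and spaces videos much as the human raters do; a value near 0 means its scores carry little linear information about human judgments.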

Implications and Impact

WorldSimBench gives researchers and developers a clear, practical way to test whether video-generating AIs are ready to guide real actions in the world. This matters because:

- Better “World Simulators” could help robots work safely and precisely, assist in self-driving systems, and power smarter game agents.

- The HF-Embodied dataset and the Human Preference Evaluator provide tools to align video models with human judgments and physical reality.

- By combining the “looks-right” and “does-it-work” tests, the benchmark pushes the field toward models that are not just pretty but truly useful.

In short, this work lays the groundwork for building video AIs that understand the world well enough to plan and act — a key step toward embodied artificial intelligence.

Knowledge Gaps

Knowledge Gaps, Limitations, and Open Questions

Below is a concise list of concrete gaps and unresolved questions identified in the paper that future researchers can act on:

- Scenario coverage is narrow: evaluation is limited to three settings (Open-Ended Embodied Environment in Minecraft, Autonomous Driving in CARLA, Robot Manipulation in CALVIN); no tests on other embodied domains (e.g., indoor navigation, deformable object manipulation, aerial/underwater robotics, multi-agent collaboration, AR/VR/egocentric daily living).

- Lack of real-world validation: all closed-loop evaluations are in simulators; no sim-to-real or real-robot/driving trials to test transfer, safety, and brittleness under real sensors and actuators.

- Ambiguous “actionable video” criterion: stage is defined qualitatively; no formal operational test or threshold that determines when a video is “actionable” (e.g., success under standardized video-to-action policies, minimum physical-consistency metrics).

- Evaluator dependence on a single model: the Human Preference Evaluator (HPE) is built by fine-tuning a single Video-LLM (Flash-VStream); no comparison to alternative evaluators (pairwise ranking, BT/PL models, learned reward models), no ensemble or calibration methods to improve reliability.

- Insufficient evaluator validation: the HPE is compared mostly to GPT-4o; missing inter-annotator agreement (e.g., Cohen’s kappa), test–retest reliability, calibration curves, cross-lab replicability, and robustness to distribution shift.

- Risk of evaluator overfitting and Goodhart’s law: HPE is trained on videos generated by the same family of models used for evaluation; no safeguards against gaming (e.g., adversarial/exploit tests, evaluator red-teaming, adversarially generated “score-hacking” videos).

- Limited scale and balance of HF-Embodied dataset: 35,701 tuples across three scenarios and ~20 dimensions may be underpowered per-dimension/per-scenario; no release of per-dimension counts, class balance, or difficulty distribution; unclear coverage of rare/edge cases.

- Subjective dimension definitions: constructs like “Perspectivity,” “Embodied Interaction,” “Trajectory,” and “Scenario Alignment” are not validated for construct validity or orthogonality; no evidence they comprehensively span physical/embodied fidelity.

- Mixed scoring scales hinder comparability: Minecraft is scored on a binary 1–2 scale while others use 1–5; sensitivity and resolution of scores across scenarios are not harmonized; no analysis of scale effects on conclusions.

- Prompt generation bias and coverage: prompts are LLM-expanded and manually filtered; no formal coverage metrics, diversity audits, or balance constraints; no robustness to re-prompting, paraphrases, or instruction ambiguity.

- Sparse sampling for explicit evaluation: only 5 prompts per dimension × 5 videos per prompt; no confidence intervals or statistical power analysis; evaluation variability due to generator stochasticity not quantified.

- Physical law adherence not directly measured: reliance on human judgments for “physics plausibility”; absent explicit physics/geometry tests (e.g., depth/motion consistency, Newtonian plausibility checks, kinematic feasibility, 3D reconstruction consistency).

- Conflation in closed-loop evaluation: performance mixes generator quality and the capacity/limitations of the video-to-action policy; no ablations to isolate generator contribution (e.g., using an oracle planner, ground-truth future videos, or standardized “policy-as-a-service” baselines).

- Missing oracle and baseline controls: no comparison to policies fed ground-truth futures/goals or to hand-crafted goal renderings; no “no-generator” or “random-generator” controls to bound performance.

- Latency and real-time feasibility unmeasured: no reporting of end-to-end latency (video generation + policy inference), throughput, hardware requirements, or control instability caused by lag—critical for closed-loop deployment.

- Horizon length and memory not analyzed: no systematic study of video length, refresh cadence, planning horizon, or memory mechanisms on task performance and evaluator scores.

- First-frame conditioning paradox left unexplained: first-frame conditioned models underperform in Minecraft, but root causes (dataset bias, 3D coherence, temporal conditioning, training objectives) are not dissected.

- Distribution shifts untested: no stress-testing for OOD scenes, lighting/weather, long-tail assets, sensor noise, occlusions, or adversarial perturbations; robustness and safety under shift remain open.

- Limited sensing modalities: evaluation assumes text and RGB (plus first frame in some cases); no tests with depth, LiDAR, proprioception, audio, or multi-sensor fusion needed in many embodied settings.

- Safety is scenario-limited: “Safety” is only quantified for driving; no safety metrics for manipulation (e.g., force limits, collision risk, damage potential) or Minecraft (e.g., risk-aware exploration).

- Task complexity and compositionality: long-horizon, multi-step, compositional, and counterfactual tasks are not emphasized; no benchmarks for causal reasoning or “what-if” predictive control.

- Reproducibility and fairness of fine-tuning: all generators and policies are fine-tuned on scenario-specific datasets; it’s unclear if training setups are uniform across models; no ablations on training data size/quality to ensure apples-to-apples comparison.

- Potential data leakage: overlap between internet videos used for generator fine-tuning and evaluator training is not audited; leakage could inflate evaluator alignment and performance estimates.

- Evaluation granularity for feedback: while annotators provide reasons, the HPE outputs only scalar scores; no automatic reason generation, error localization, or diagnosers to guide model improvement.

- Generalization beyond three scenarios: authors note applicability “beyond robots,” but no pathway or tools are provided to extend dimensions, prompts, and simulators to other domains (e.g., healthcare, household egocentric tasks, industrial assembly).

- Standardization of sub-task decomposition: closed-loop pipelines rely on unspecified task decomposition; no standard protocol or tool for consistent decomposition across scenarios and models.

- Benchmark governance and anti-overfitting policies: no procedures for hidden test sets, periodic refresh, or blinded evaluation servers to mitigate overfitting to fixed prompts/dimensions/evaluator.

- Ethical and legal aspects: no discussion on annotator demographics/bias, consent and licensing for internet-sourced videos, or the ethical risks of using generative content to drive physical systems.

- Open question on training World Simulators with feedback: while evaluation is established, the paper does not explore how to use HPE scores or closed-loop outcomes as learning signals (e.g., RL/reward modeling) to iteratively improve models.

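- Unclear mapping of scenario labels: the scenarios are referred to by cryptic codes (80C2AE for Open-Ended Embodied Environment, C280B5 for Autonomous Driving, C2AE80 for Robot Manipulation), which may impede adoption; no explicit taxonomy or rationale for these codes or their extensibility to new categories.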
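One of the gaps listed above is that scores from 5 prompts × 5 videos are reported without confidence intervals. A percentile bootstrap is one simple way to attach an interval to such a small sample. The sketch below is an assumption about how this could be done, not something the paper provides; `bootstrap_ci` and the example scores are hypothetical.

```python
import random
from typing import Sequence, Tuple


def bootstrap_ci(
    scores: Sequence[float],
    n_resamples: int = 2000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float, float]:
    """Return (mean, lower, upper) using a percentile bootstrap of the mean."""
    if not scores:
        raise ValueError("scores must be non-empty")
    rng = random.Random(seed)  # fixed seed so the interval is reproducible
    n = len(scores)
    # Resample with replacement and record the mean of each resample.
    means = sorted(sum(rng.choices(scores, k=n)) / n for _ in range(n_resamples))
    lo_idx = max(0, int((alpha / 2) * n_resamples))
    hi_idx = min(n_resamples - 1, int((1 - alpha / 2) * n_resamples) - 1)
    return sum(scores) / n, means[lo_idx], means[hi_idx]


# Hypothetical evaluator scores for one dimension: 5 prompts x 5 videos each.
example_scores = [1, 2, 3, 4, 5] * 5
mean, lower, upper = bootstrap_ci(example_scores)
```

With only 25 samples the interval is typically wide, which makes the point of the gap concrete: differences between models that fall inside each other's intervals should not be over-interpreted.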

Glossary

- Aesthetics (AE): An evaluation dimension assessing the overall visual appeal and quality of generated videos. "Aesthetics (AE)"

- Actionable video: A generated video that can be reliably translated into executable control actions for an agent. "actionable videos (i.e., the videos that can be translated into actions)"

- Background Consistency (BC): An evaluation dimension measuring whether the background remains coherent and stable across frames. "Background Consistency (BC)"

- CALVIN: A benchmark and simulator for language-conditioned robot manipulation tasks. "We employ CALVIN (Mees et al., 2022) as the robot manipulation simulator"

- CARLA: An open-source urban driving simulator used for autonomous driving research and evaluation. "We conduct standard closed-loop evaluations using the CARLA (Dosovitskiy et al., 2017) simulator"

- Closed-loop evaluation: An evaluation setup where model outputs influence subsequent inputs through real-time interaction, enabling continuous feedback. "closed-loop task evaluation"

- Degrees of Freedom (DOF): The number of independent parameters that define the configuration of a mechanical system, such as a robot arm. "7-DOF (degrees of freedom)"

- Diffusion transformer: A generative modeling approach combining diffusion processes with transformer architectures to produce high-quality sequences. "With the advancement of diffusion transformer (Peebles and Xie, 2023)"

- Embodied Interaction (EI): An evaluation dimension judging the plausibility and physical realism of interactions with objects in generated videos. "Embodied Interaction (EI)"

- Embodied intelligence: AI systems that perceive, act, and learn within physical or simulated environments, emphasizing interaction with the world. "especially in embodied intelligence"

- Embodied scenarios: Task settings that require physical reasoning or interaction, used to evaluate embodied capabilities. "three representative embodied scenarios: Open-Ended Embodied Environment, Autonomous Driving, and Robot Manipulation"

- Explicit Perceptual Evaluation: A human-aligned assessment focusing on perceptual factors like visual fidelity, condition adherence, and embodiment. "Explicit Perceptual Evaluation"

- Foreground Consistency (FC): An evaluation dimension assessing the coherence and stability of foreground elements over time. "Foreground Consistency (FC)"

- Goal-based policy: A control policy that selects actions by conditioning on a target goal state inferred from inputs (e.g., video). "a pre-trained IDM or a goal-based policy"

- HF-Embodied Dataset: A human-annotated video assessment dataset with fine-grained feedback across multiple embodied dimensions. "HF-Embodied Dataset"

- Human Preference Evaluator: A video scoring model trained to align with human judgments for multi-dimensional evaluation. "Human Preference Evaluator"

- Implicit Manipulative Evaluation: An assessment that measures how well generated videos can be translated into control signals for task performance. "Implicit Manipulative Evaluation"

- Instruction Alignment (IA): An evaluation dimension measuring how well the generated content follows the input instruction. "Instruction Alignment (IA)"

- Inverse Dynamics Model (IDM): A model that predicts the action causing a transition between states, used to convert video predictions into actions. "IDM"

- Key Element (KE): An evaluation dimension for the rendering quality and consistency of crucial entities (e.g., pedestrians, vehicles). "Key Element (KE)"

- LoRA: Low-Rank Adaptation; a parameter-efficient fine-tuning technique that adds trainable low-rank matrices to pre-trained models. "Only LoRA (Hu et al., 2021) parameters are trained."

- MineRL: A Minecraft-based simulation environment used for reinforcement learning and embodied agent research. "We employ MineRL as the Minecraft simulator"

- Pearson linear correlation coefficient (PLCC): A statistic quantifying the strength of linear correlation between two variables. "we employ Pearson linear correlation coefficient (PLCC)"

- Perspectivity (PV): An evaluation dimension assessing the sense of 3D depth and perspective in generated videos. "Perspectivity (PV)"

- Safety (SF): An evaluation dimension assessing whether actions comply with safety rules (e.g., traffic laws). "Safety (SF)"

- Scenario Alignment (SA): An evaluation dimension measuring how well a video matches the specified scenario in the instruction. "Scenario Alignment (SA)"

- Task Instruction Prompt List: A curated set of prompts per scenario and dimension used to systematically generate and evaluate videos. "Task Instruction Prompt List"

- Trajectory (TJ): An evaluation dimension gauging the rationality and physical plausibility of motion paths in videos. "Trajectory (TJ)"

- Video-to-action models: Models that map predicted or goal videos to executable control signals for agents. "video-to-action models"

- VideoLLM: A multimodal LLM specialized for video understanding and reasoning. "a VideoLLM"

- World Simulator: A predictive model that generates physically consistent, actionable videos aligned with executable actions. "World Simulators"

- WorldSimBench: A dual-framework benchmark (perceptual and manipulative) designed to evaluate video generation models as world simulators. "WorldSimBench"
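To make the LoRA entry above concrete: LoRA keeps a pre-trained weight matrix W0 frozen and learns a low-rank update, so the effective weight is W0 + (alpha / r) · B · A, where B is d_out × r, A is r × d_in, and r is the rank. The pure-Python sketch below only illustrates that arithmetic on tiny matrices; the function names are hypothetical and it is not how the Human Preference Evaluator is actually trained.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of a (m x k) and b (k x n)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_weight(w0: Matrix, b: Matrix, a: Matrix, alpha: float) -> Matrix:
    """Effective weight W0 + (alpha / r) * B @ A, with r the LoRA rank."""
    r = len(a)                 # A has shape (r x d_in), so its row count is the rank
    delta = matmul(b, a)       # (d_out x r) @ (r x d_in) -> (d_out x d_in)
    scale = alpha / r
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(w0, delta)
    ]


# Tiny example: a 2x2 frozen weight with a rank-1 update.
w0 = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0], [2.0]]             # d_out x r; trainable
a = [[3.0, 4.0]]               # r x d_in; trainable
adapted = lora_weight(w0, b, a, alpha=2.0)
```

Only `a` and `b` would receive gradients during fine-tuning. Initializing `b` to zeros makes the adapted weight equal to `w0` at the start, so training begins from the pre-trained model's behavior.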

Practical Applications

Practical Applications Derived from WorldSimBench

The paper introduces WorldSimBench, a dual evaluation framework for “World Simulators” (predictive video generation models), along with a hierarchical taxonomy for predictive models (S0–S3), a fine-grained human feedback dataset (HF-Embodied), a Human Preference Evaluator (HPE) trained via LoRA, and a closed-loop video-to-action evaluation in three embodied scenarios (Open-Ended Embodied Environment in Minecraft, Autonomous Driving, and Robot Manipulation). Below are actionable applications organized by deployment horizon.

Immediate Applications

The following applications can be deployed now, primarily in R&D environments, simulation-based testing, and product QA for embodied AI and generative video systems.

- Industry: benchmarking and QA for video generation models

  - Sector: software, robotics, autonomous driving, gaming

  - Application: integrate WorldSimBench as a standardized QA suite to assess visual fidelity, instruction alignment, and embodiment (trajectory, perspectivity, safety) across tasks.

  - Tools/Workflows: use Explicit Perceptual Evaluation with HPE for rapid model scoring; adopt Implicit Manipulative Evaluation in CARLA/CALVIN/MineRL to test “video-to-action” consistency.

  - Assumptions/Dependencies: access to simulation platforms (CARLA, CALVIN, MineRL), LoRA-compatible VideoLLMs, prompt lists, and video-to-action models; acceptance of WorldSimBench metrics in internal QA.

- Robotics R&D: pre-deployment stress testing of manipulation policies

  - Sector: robotics, logistics/warehousing

  - Application: evaluate robot manipulation pipelines by feeding generated future videos into inverse dynamics or goal-based policies in CALVIN to probe actionability and physical consistency.

  - Tools/Workflows: “video-to-action” middleware; task-specific prompt lists; HPE-driven regression tests.

  - Assumptions/Dependencies: high-quality robot manipulation datasets, model finetuning capability, domain gap awareness (sim vs. real), real-time inference constraints.

- Autonomous driving R&D: closed-loop evaluation of generative perception/planning

  - Sector: mobility, automotive

  - Application: use the Autonomous Driving scenario in CARLA with WorldSimBench metrics (RC, IS, DS, collisions, red-light violations, offroad infractions) to audit generative video models for route adherence and rule compliance.

  - Tools/Workflows: continuous integration tests for driving stacks; HPE for video quality/embodiment scoring; scenario prompt bank for diverse traffic conditions.

  - Assumptions/Dependencies: alignment between simulated tasks and real-world policies; model stability under domain shifts; safety governance in lab environments.

- Game AI and agent research

  - Sector: gaming, simulation

  - Application: evaluate Minecraft agents using video-generative goal setting (the Open-Ended Embodied Environment scenario) and goal-based policies to improve exploration, trajectory, and resource collection behavior with measurable metrics (travel distance, dig depth, inventory).

  - Tools/Workflows: MineRL integration; HPE for perception-level scoring; iterative prompt-engineering for embodied tasks.

  - Assumptions/Dependencies: task descriptions and scenario coverage; compatibility with text-to-video and TI2V models; dataset licensing.

- Academic benchmarking and reproducible evaluation

  - Sector: academia

  - Application: adopt WorldSimBench as a common benchmark for embodied generative models; use HF-Embodied Dataset to study preference alignment and multi-dimensional evaluation (aesthetics, instruction alignment, perspective, safety).

  - Tools/Workflows: HPE API for scoring; LoRA finetuning recipes; transparent reporting across S0–S3 hierarchy.

  - Assumptions/Dependencies: community adoption; dataset accessibility and licensing; compute availability for finetuning.

- Model alignment and training improvements for video generation

  - Sector: software/AI tooling

  - Application: use HF-Embodied Dataset to fine-tune generative video models for better adherence to physical rules and instruction consistency (akin to RLHF for video).

  - Tools/Workflows: LoRA finetuning with Flash-VStream or similar VideoLLMs; multi-dimensional reward models derived from HPE.

  - Assumptions/Dependencies: robust generalization across models; ability to scale preference data; alignment stability at inference-time.

- Safety and compliance pre-checks for embodied AI

  - Sector: policy, automotive, robotics

  - Application: internal safety audits using WorldSimBench’s embodied dimensions (e.g., Safety in Autonomous Driving, Embodied Interaction in Robot Manipulation) to identify failure modes before pilot deployments.

  - Tools/Workflows: automated scorecards; fail/pass thresholds; scenario-driven risk analysis.

  - Assumptions/Dependencies: standards are internal (not yet regulatory); limited to simulation; requires multi-stakeholder review.

- Educational use: labs and course modules

  - Sector: education

  - Application: course assignments on embodied AI evaluation, physical-rule adherence, and video-to-action conversion using the three scenarios.

  - Tools/Workflows: prompt lists, HPE scoring demos, closed-loop tasks in CARLA/CALVIN/MineRL.

  - Assumptions/Dependencies: access to compute and simulators; tailored curricula; student-friendly datasets and scripts.
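The autonomous driving item above mentions the metrics RC, IS, and DS without defining them. Assuming they follow the CARLA Leaderboard convention (route completion, infraction score, and driving score, with DS = RC × IS and IS a product of per-infraction penalty factors), the computation can be sketched as below. The penalty values are illustrative defaults in the style of that leaderboard and are an assumption here, as are all function and key names; check the benchmark's own configuration before relying on them.

```python
from typing import Mapping

# Illustrative penalty factors (assumption, not taken from the source text).
DEFAULT_PENALTIES = {
    "collision_pedestrian": 0.50,
    "collision_vehicle": 0.60,
    "collision_static": 0.65,
    "red_light": 0.70,
}


def infraction_score(
    counts: Mapping[str, int],
    penalties: Mapping[str, float] = DEFAULT_PENALTIES,
) -> float:
    """Multiply one penalty factor per infraction; 1.0 means a clean run."""
    score = 1.0
    for kind, n in counts.items():
        if n < 0:
            raise ValueError(f"negative count for {kind!r}")
        score *= penalties[kind] ** n  # raises KeyError for unknown infraction types
    return score


def driving_score(
    route_completion: float,
    counts: Mapping[str, int],
    penalties: Mapping[str, float] = DEFAULT_PENALTIES,
) -> float:
    """Driving score = route completion (0-100) scaled by the infraction score."""
    if not 0.0 <= route_completion <= 100.0:
        raise ValueError("route_completion must be a percentage in [0, 100]")
    return route_completion * infraction_score(counts, penalties)


# Example: 80% of the route completed, with one red-light violation
# and one collision with another vehicle.
example_ds = driving_score(80.0, {"red_light": 1, "collision_vehicle": 1})
```

Because the penalties multiply, a model that completes the whole route but commits several infractions can score lower than one that stops early but drives cleanly, which is the kind of rule-compliance trade-off a CI-style audit would surface.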

Long-Term Applications

These applications require further research, scaling, robust real-time performance, and broader standardization before practical deployment in real-world environments.

- Household and industrial robots powered by world simulators

  - Sector: robotics, consumer devices, manufacturing, logistics

  - Application: robots that plan and act using predictive, actionable videos that adhere to physical rules, across diverse tasks (grasp, open/close, avoid obstacles) without explicit task-specific programming.

  - Tools/Workflows: real-time World Simulator integrated with control stacks; continuous closed-loop evaluation and self-improvement via HPE and “video-to-action” learning.

  - Assumptions/Dependencies: reliable real-time video generation; sim-to-real transfer; robust 3D understanding; safety certification.

- Autonomous driving with generative world models for foresight and risk mitigation

- Sector: mobility, automotive, insurance

- Application: generative foresight modules that anticipate hazards, simulate “what-if” trajectories, and inform planners—audited via WorldSimBench-derived safety metrics.

- Tools/Workflows: on-vehicle world simulator modules; scenario libraries; safety scorecards integrated into driver-assistance pipelines.

- Assumptions/Dependencies: regulatory approval; demonstrated reliability under corner cases; integration with perception/planning stacks; energy-efficient inference.

- Digital twins and operations planning

- Sector: energy, smart manufacturing, warehousing, infrastructure

- Application: physics-aware video simulators for planning workflows (material handling, inspection) with actionability checks; synthetic data generation for training downstream policies.

- Tools/Workflows: WorldSimBench-like evaluation in domain-specific simulators; HF-Embodied-style preference feedback for task realism.

- Assumptions/Dependencies: domain-specific physical rules encoded; scalable synthetic data pipelines; validation against real-world outcomes.

- Healthcare and surgical robotics training

- Sector: healthcare, medical devices

- Application: simulation-rich training and assistance systems that generate physically plausible surgical scenarios and translate predicted videos into actionable guidance.

- Tools/Workflows: secure, anonymized datasets; HPE-like evaluators tuned to medical criteria; closed-loop sim platforms for surgical tasks.

- Assumptions/Dependencies: stringent data/privacy requirements; clinical validation; high-fidelity physics; regulatory pathways.

- Immersive education and VR safety training

- Sector: education, enterprise training

- Application: VR/AR modules with physically consistent, dynamic scenarios that teach safe operation (e.g., lab safety, warehouse procedures) and evaluate action consistency.

- Tools/Workflows: scenario prompt banks; embodied evaluation dimensions ported to VR; performance-based certification metrics.

- Assumptions/Dependencies: generalization of embodied dimensions to VR; user ergonomics; hardware constraints.

- Standardization and certification frameworks for embodied AI

- Sector: policy, standards bodies, procurement

- Application: adopt S0–S3 taxonomy, embodied dimensions, and closed-loop metrics as part of certification, reporting, and procurement standards for embodied AI systems.

- Tools/Workflows: public benchmarks; transparent scorecards; conformance testing suites derived from WorldSimBench.

- Assumptions/Dependencies: multi-stakeholder consensus; legal/regulatory adaptation; reproducibility across vendors.

- Content creation and previsualization with physical plausibility

- Sector: media/entertainment, simulation tools

- Application: video generation for previsualization and creative ideation that respects physical rules (depth, trajectory, object interaction), improving downstream animation and VFX workflows.

- Tools/Workflows: HPE-guided quality assurance; embodied prompt libraries; iterative “generate–evaluate–refine” loops.

- Assumptions/Dependencies: fidelity sufficient for professional use; integration with studio pipelines; licensing of datasets/models.

- General-purpose MLOps for embodied generative models

- Sector: software/AI tooling

- Application: “WorldSim QA” services offering continuous evaluation, regression testing, and preference-alignment training for video generation systems.

- Tools/Workflows: APIs for HPE scoring; scenario management; CI/CD integration with simulators and video-to-action policies.

- Assumptions/Dependencies: ecosystem adoption; cost-effective compute; modularity across vendors and model types.
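
The regression testing mentioned for a "WorldSim QA" service could look roughly like the sketch below. It compares per-dimension scores of a candidate model against a stored baseline and flags any drop larger than a tolerance. The function name `find_regressions`, the tolerance, and all score values are hypothetical, and the scores are assumed to come from an HPE-like evaluator that is not shown here.

```python
from __future__ import annotations


def find_regressions(baseline: dict[str, float],
                     candidate: dict[str, float],
                     tolerance: float = 0.02) -> dict[str, float]:
    """Return {dimension: drop} for every dimension whose candidate score
    fell more than `tolerance` below the baseline score."""
    regressions: dict[str, float] = {}
    for dimension, base_score in baseline.items():
        if dimension not in candidate:
            raise KeyError(f"candidate is missing dimension: {dimension}")
        drop = base_score - candidate[dimension]
        if drop > tolerance:
            regressions[dimension] = drop
    return regressions


# Hypothetical scores for two model versions, assumed to lie in [0, 1].
baseline = {"Safety": 0.90, "Embodied Interaction": 0.80}
candidate = {"Safety": 0.91, "Embodied Interaction": 0.70}

regressions = find_regressions(baseline, candidate)
for dimension, drop in regressions.items():
    print(f"regression in {dimension}: dropped by {drop:.2f}")

# A CI job could use this value as its process exit status.
exit_code = 1 if regressions else 0
```

A fixed tolerance is the simplest choice; a production pipeline would more likely derive it from the observed run-to-run variance of the evaluator.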
