- The paper demonstrates that mouse sensory cortex refines neural representations during overtraining even after behavioral performance plateaus.

- Representational similarity matrices and a multilayer perceptron model reveal late-time feature learning and margin maximization.

- The study bridges neuroscience and machine learning by linking biological adaptation to grokking in artificial neural networks.

Do Mice Grok? Glimpses of Hidden Progress During Overtraining in Sensory Cortex

Introduction

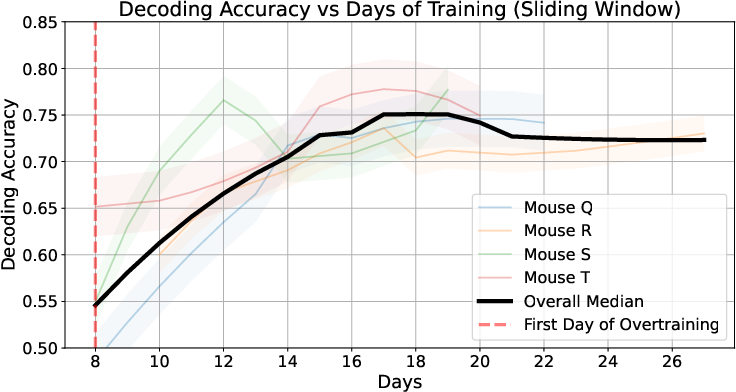

The study investigates how representations in mouse sensory cortex evolve during overtraining. It draws a parallel between representation learning in biological systems and in artificial neural networks, particularly the deep learning phenomenon known as "grokking." The authors present evidence that in mice, as in artificial systems, neural representations continue to evolve after behavioral performance has plateaued. The paper re-analyzes neural data from the posterior piriform cortex (PPC) recorded while mice performed a binary odor discrimination task, looks for hidden learning during the overtraining phase, and introduces a computational model to explain these dynamics.

Methodology and Data Analysis

Experimental Setup

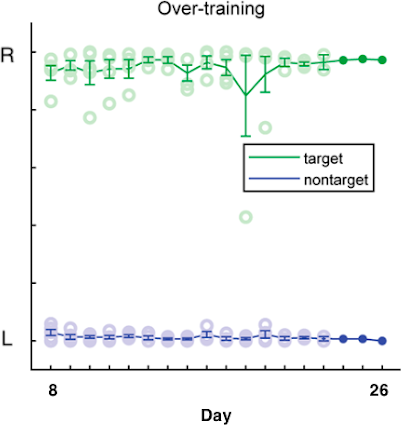

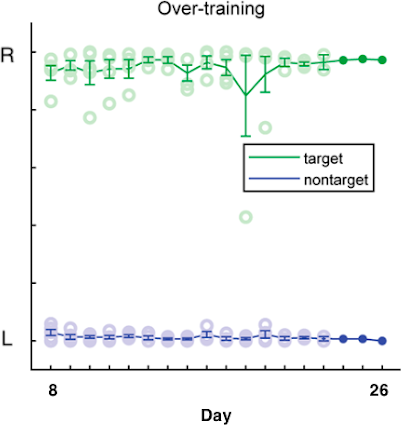

Neural data was collected from the PPC in mice tasked with distinguishing a specific target odor from numerous nontarget odors. The experiment consisted of an initial training period of 8 days, followed by 18 days of overtraining. Behavioral mastery was reached prior to overtraining, allowing for an investigation into representational changes despite stable behavioral performance.

Figure 1: Behavior of mice on a binary discrimination task of odors, where mice indicate their selected choice by licking left or right.

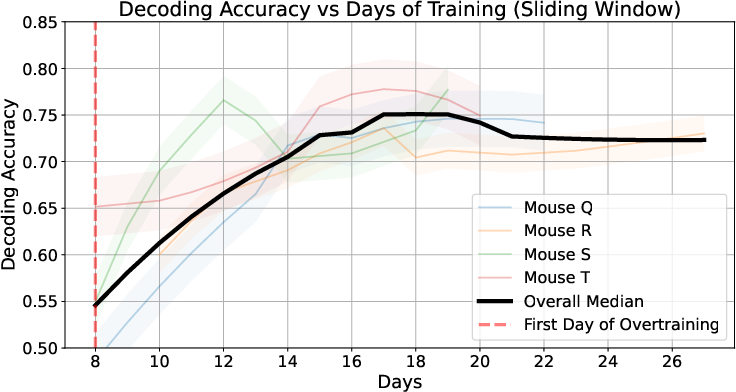

Representational Similarity

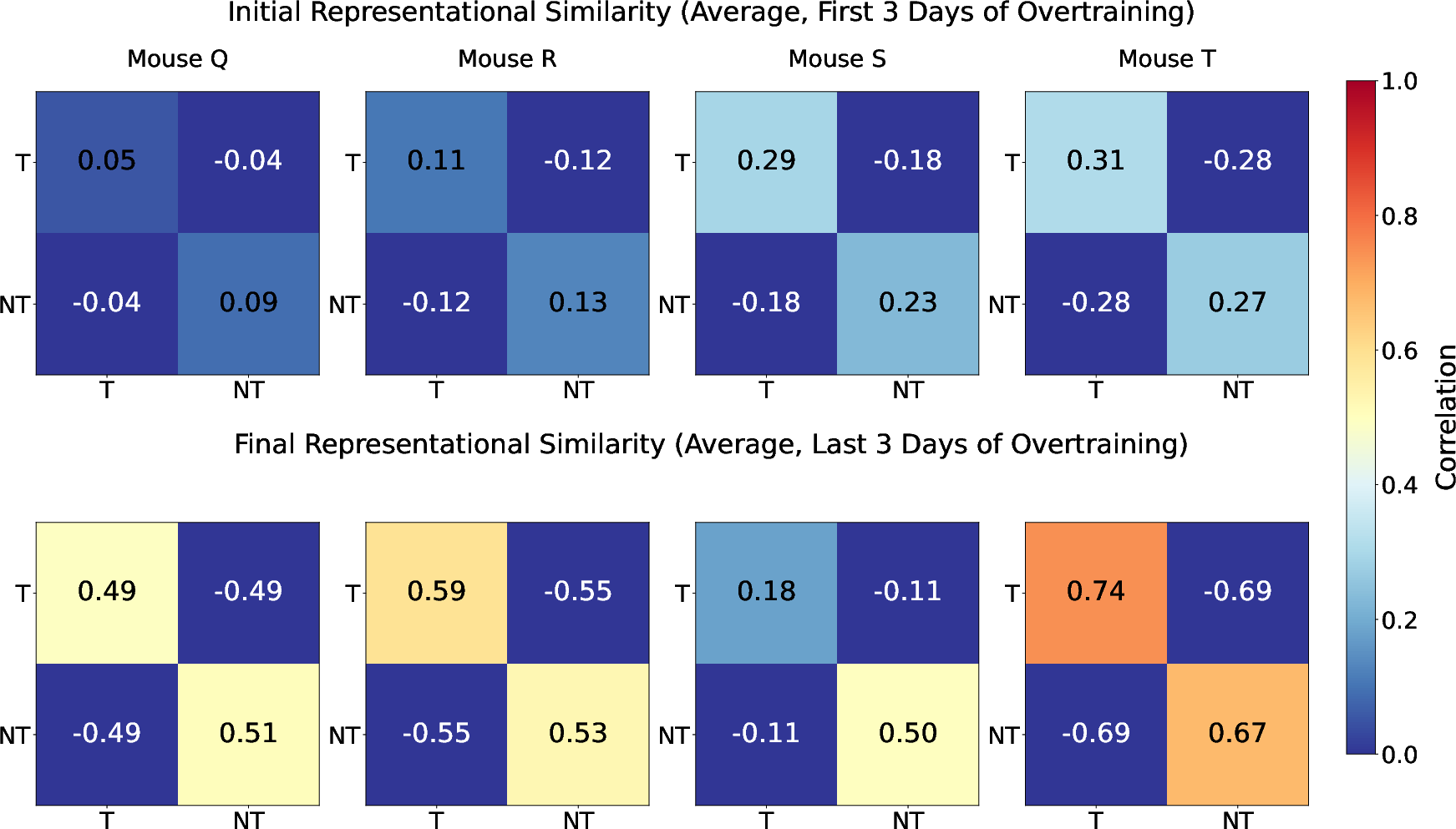

The analysis tracked changes in the neural representation of odors during overtraining. Representational similarity matrices were used to measure the correlation between neural population responses to target and nontarget odors. The results indicated that the representations of these odors continued to separate despite stable behavioral performance, suggesting ongoing feature learning in the neural representations.

Figure 2: Representational similarity matrices plotting average pairwise correlation between target and nontarget population responses from piriform cortex on the first and last days of overtraining.
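The kind of similarity measurement described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' analysis pipeline: the toy response vectors, the function names (`pearson`, `similarity_matrix`, `mean_cross_correlation`), and the choice of Pearson correlation over trials are all hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length response vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def similarity_matrix(responses):
    """Pairwise correlation matrix over a list of population response vectors."""
    return [[pearson(r1, r2) for r2 in responses] for r1 in responses]

def mean_cross_correlation(target, nontarget):
    """Average correlation over all target/nontarget response pairs."""
    pairs = [pearson(t, n) for t in target for n in nontarget]
    return sum(pairs) / len(pairs)

# Hypothetical toy data: each inner list is one trial, each entry one neuron.
early_target = [[1.0, 2.0, 3.0, 4.0], [1.5, 2.5, 3.0, 4.5]]
early_nontarget = [[1.0, 2.5, 2.5, 4.0], [2.0, 2.0, 3.5, 4.0]]
late_target = [[1.0, 2.0, 3.0, 4.0], [1.5, 2.5, 3.0, 4.5]]
late_nontarget = [[4.0, 3.0, 2.5, 1.0], [4.0, 3.5, 2.0, 1.5]]

early = mean_cross_correlation(early_target, early_nontarget)
late = mean_cross_correlation(late_target, late_nontarget)
print(f"early cross-correlation: {early:.3f}, late: {late:.3f}")
```

In this toy setup, a drop in the average target/nontarget correlation between the early and late sessions stands in for the separation of representations described above.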

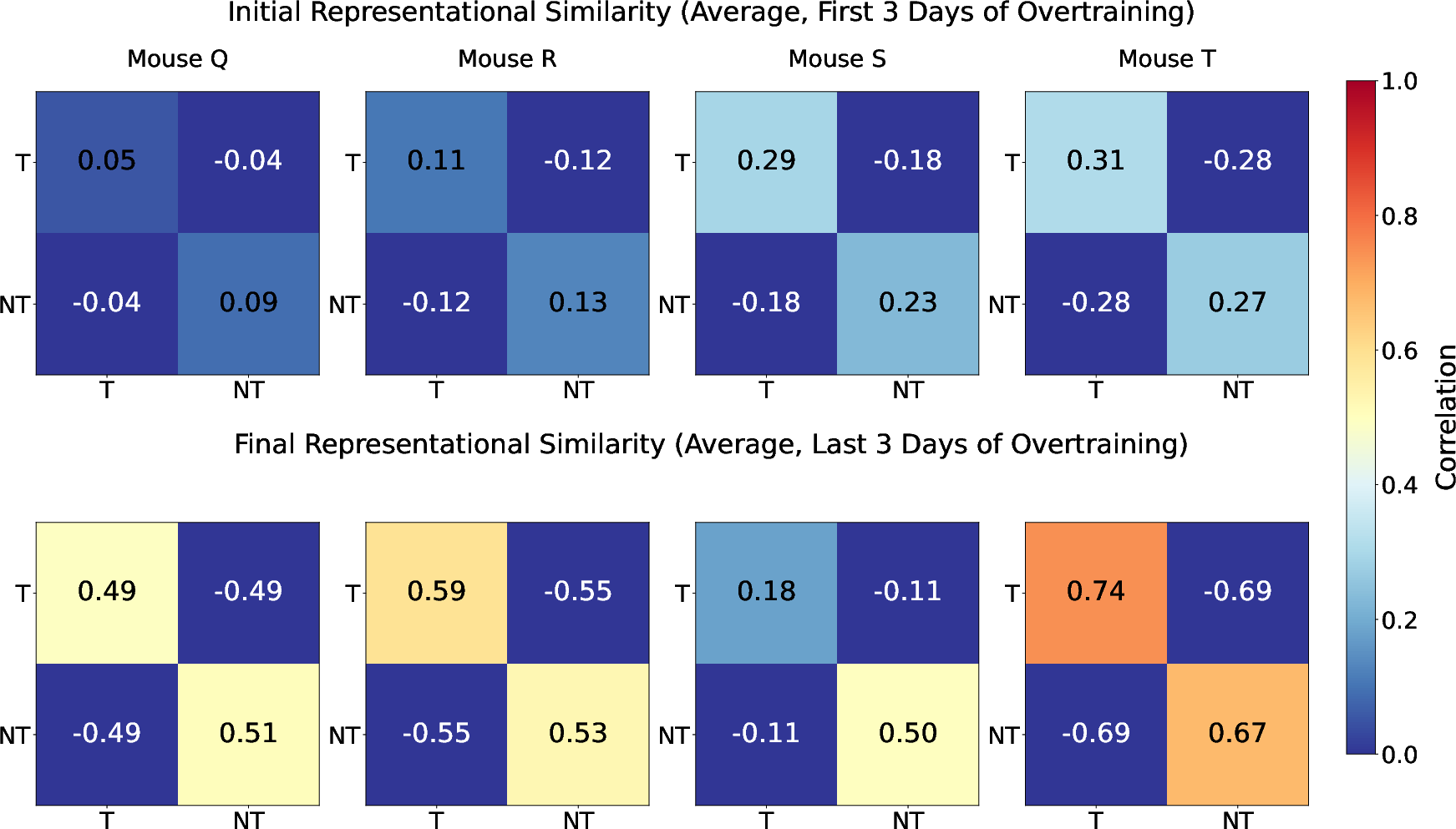

Computational Modeling

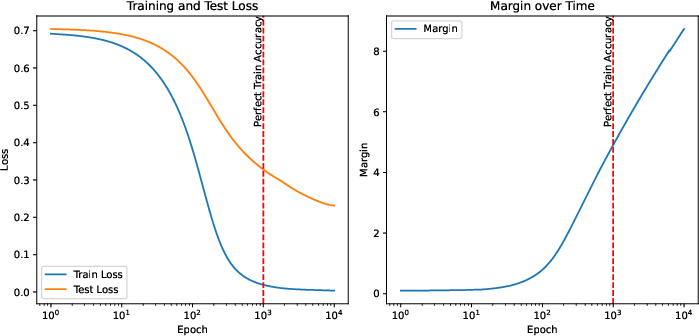

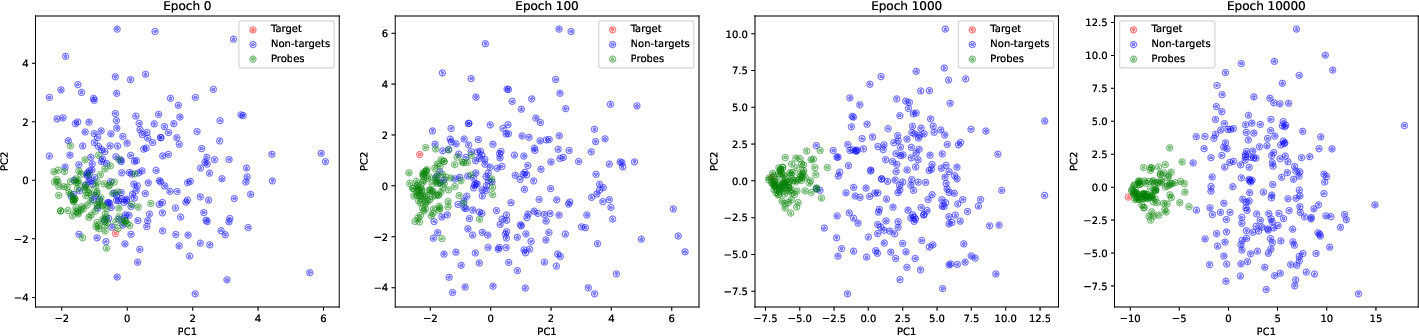

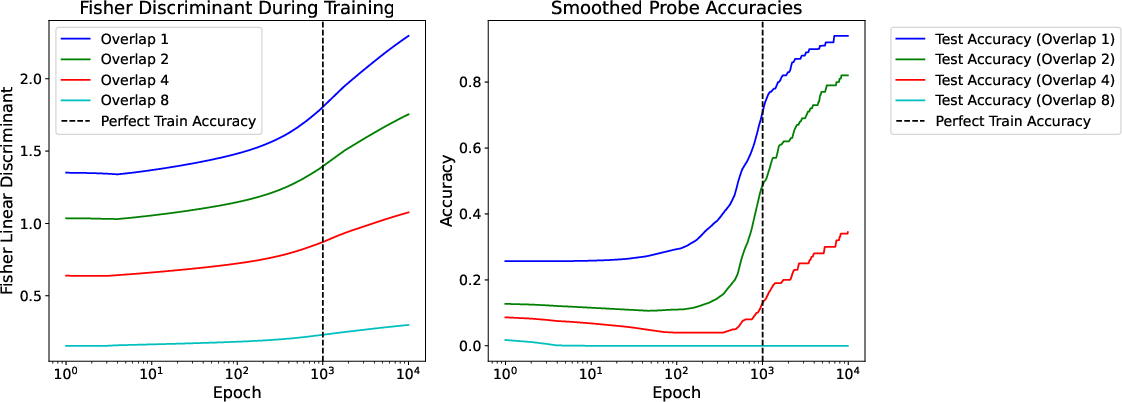

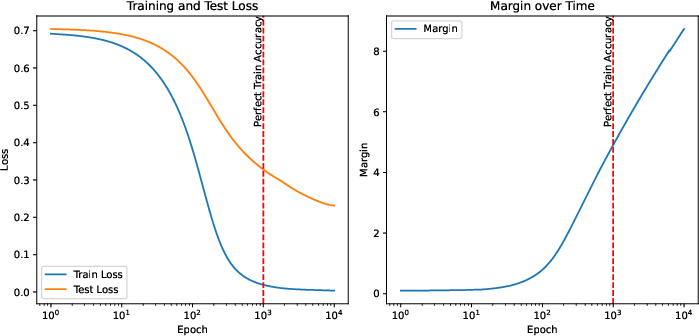

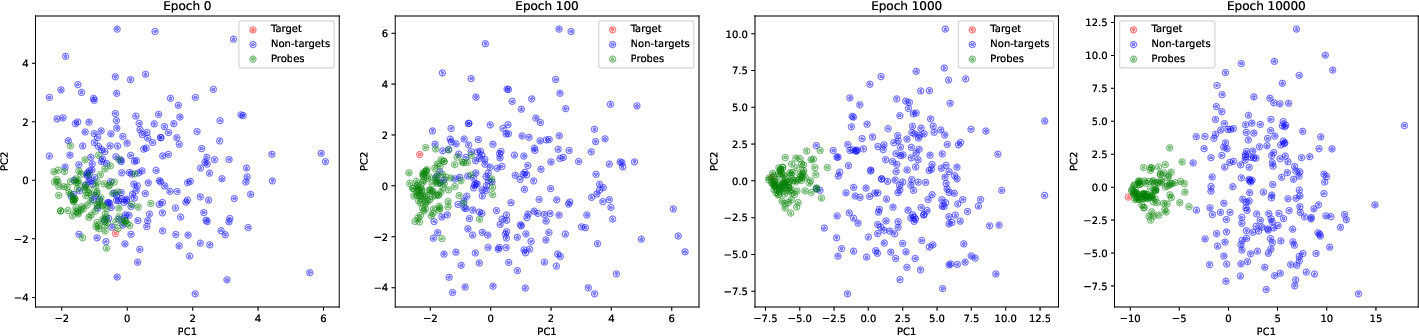

A simple multilayer perceptron (MLP) was built to mimic the learning dynamics observed in mouse PPC. Trained on the same binary classification task, the model's test loss kept improving after its training loss had plateaued. This mirrors the late-time representation learning through margin maximization that is characteristic of grokking.

Figure 3: Recapitulating and interpreting mouse piriform cortex dynamics in a simple model, showing margin development during overtraining.
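To make the idea of margin growth after a performance plateau concrete, here is a minimal sketch. It deliberately swaps in a simpler technique than the paper's MLP: a bias-free linear classifier trained with gradient descent on the logistic loss, using a hypothetical four-point dataset. The data, learning rate, and function names are assumptions chosen for illustration only.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def train(points, labels, lr=0.5, steps=2000):
    """Gradient descent on the mean logistic loss for a bias-free linear model.

    Returns the final weights and a history of
    (training accuracy, normalized minimum margin) after each step.
    """
    w = [0.0, 0.0]
    n = len(points)
    history = []
    for _ in range(steps):
        grad = [0.0, 0.0]
        for x, y in zip(points, labels):
            m = y * (w[0] * x[0] + w[1] * x[1])
            coeff = -y * sigmoid(-m) / n  # derivative of log(1 + exp(-m))
            grad[0] += coeff * x[0]
            grad[1] += coeff * x[1]
        w = [w[0] - lr * grad[0], w[1] - lr * grad[1]]
        margins = [y * (w[0] * x[0] + w[1] * x[1]) for x, y in zip(points, labels)]
        accuracy = sum(m > 0 for m in margins) / n
        norm = math.hypot(w[0], w[1])
        history.append((accuracy, min(margins) / norm))
    return w, history

# Hypothetical linearly separable toy data (labels are +1 / -1).
points = [(3.0, 0.5), (0.5, 1.0), (-3.0, -0.5), (-0.5, -1.0)]
labels = [1, 1, -1, -1]

w, history = train(points, labels)
print("after step 1:   accuracy, margin =", history[0])
print("after training: accuracy, margin =", history[-1])
```

In this sketch training accuracy is already perfect after the first update, yet the normalized margin keeps increasing as training continues. That is the simplest analogue of "hidden progress" after a plateau; it does not reproduce the feature learning of a multilayer network.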

Feature Learning and Overtraining

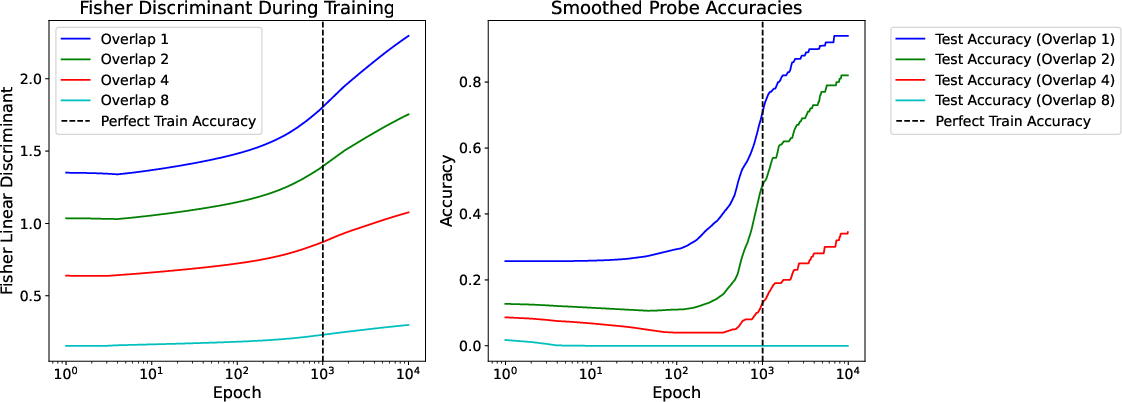

The model showed that difficult-to-classify probe trials, which were not part of the training data, were classified with increasing accuracy during overtraining, a pattern akin to grokking. This points to a late-time learning mechanism that drives margin maximization and supports the hypothesis of continued representational adaptation.

Figure 4: Probe trials are learned in order of increasing overlap with target, tracking the Fisher Discriminant and performance over time.
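The caption above refers to tracking the Fisher Discriminant over time. As a rough illustration of what such a separability measure captures, the sketch below computes a one-dimensional Fisher ratio (squared difference of class means divided by the sum of class variances). This summary does not give the exact form used in the paper, so the formula choice, the function name `fisher_ratio`, and the toy projected responses are all assumptions.

```python
def fisher_ratio(a, b):
    """One-dimensional Fisher ratio: (mean_a - mean_b)^2 / (var_a + var_b)."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    var_a = sum((v - mean_a) ** 2 for v in a) / len(a)
    var_b = sum((v - mean_b) ** 2 for v in b) / len(b)
    return (mean_a - mean_b) ** 2 / (var_a + var_b)

# Hypothetical responses projected onto a single readout axis.
early_target = [0.9, 1.1, 1.0, 1.2]
early_nontarget = [0.6, 0.8, 0.7, 0.9]
late_target = [1.9, 2.1, 2.0, 2.2]
late_nontarget = [0.6, 0.8, 0.7, 0.9]

early_score = fisher_ratio(early_target, early_nontarget)
late_score = fisher_ratio(late_target, late_nontarget)
print(f"early Fisher ratio: {early_score:.2f}, late: {late_score:.2f}")
```

A higher ratio means the two classes are farther apart relative to their spread, so a ratio that rises across sessions would indicate growing separability even if a threshold-based behavioral readout is already at ceiling.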

Biological Insights and Theoretical Implications

The study connects these late-time dynamics in mice to broader theories of sensory cortex function and learning. It suggests that biological learning may rely on principles analogous to those observed in artificial systems, in particular implicit margin maximization, which yields more generalizable and robust representations in sensory cortex.

Furthermore, the theoretical framework outlined provides potential explanations for observed phenomena like the overtraining reversal effect, where overtrained animals adapt more quickly to reversed tasks, underlining the importance of feature learning during extended training.

Conclusion

The paper presents evidence for ongoing representation learning in mouse sensory cortex during overtraining, aligning biological learning with phenomena observed in artificial networks. These results connect neuroscience and machine learning and suggest directions for interdisciplinary research on learning and adaptation in both biological and computational systems. The study also raises the possibility that late-time learning dynamics are shared across learning systems and tasks, which motivates further investigation of the neural mechanisms behind continued learning.