Social Media Algorithms Can Shape Affective Polarization via Exposure to Antidemocratic Attitudes and Partisan Animosity

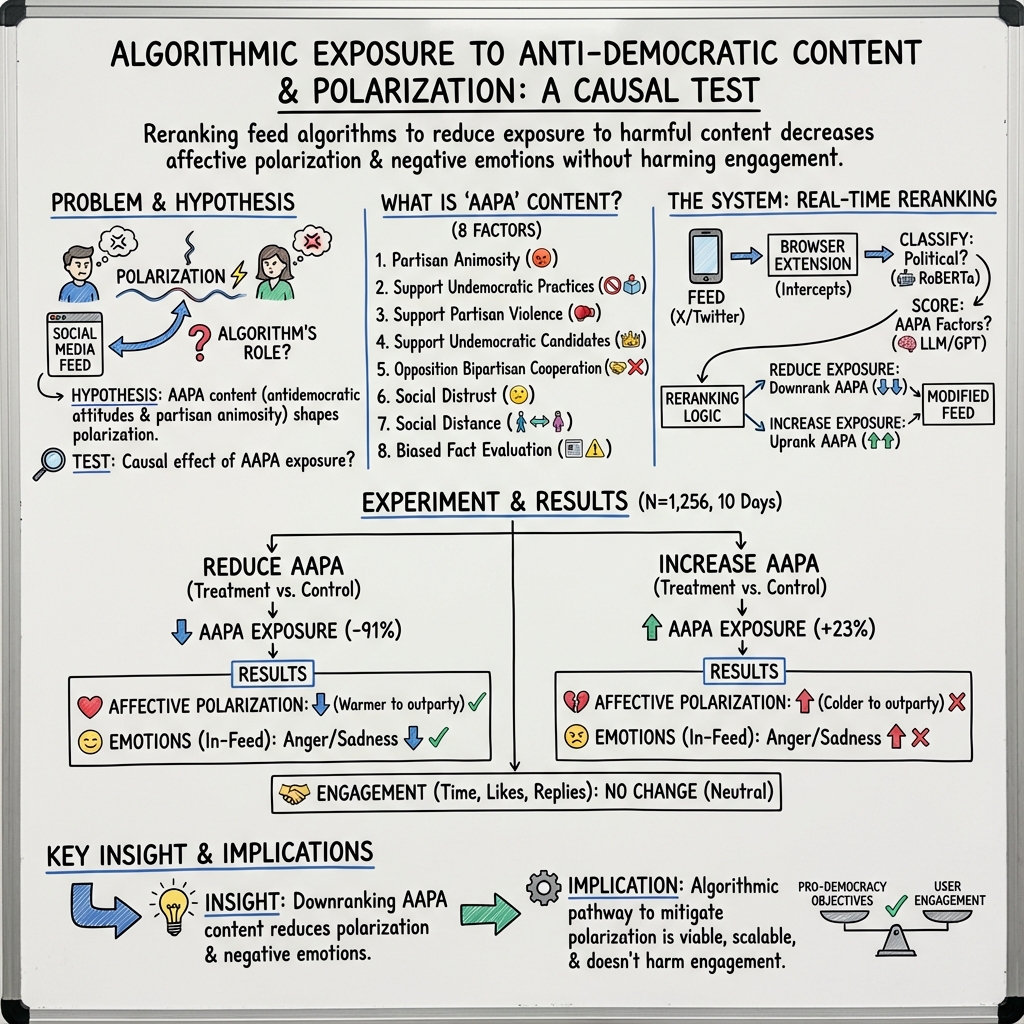

Abstract: There is widespread concern about the negative impacts of social media feed ranking algorithms on political polarization. Leveraging advancements in LLMs, we develop an approach to re-rank feeds in real-time to test the effects of content that is likely to polarize: expressions of antidemocratic attitudes and partisan animosity (AAPA). In a preregistered 10-day field experiment on X/Twitter with 1,256 consented participants, we increase or decrease participants' exposure to AAPA in their algorithmically curated feeds. We observe more positive outparty feelings when AAPA exposure is decreased and more negative outparty feelings when AAPA exposure is increased. Exposure to AAPA content also results in an immediate increase in negative emotions, such as sadness and anger. The interventions do not significantly impact traditional engagement metrics such as re-post and favorite rates. These findings highlight a potential pathway for developing feed algorithms that mitigate affective polarization by addressing content that undermines the shared values required for a healthy democracy.

Explain it Like I'm 14

Overview

This paper looks at how social media feeds (like the “For You” page on X/Twitter) can make people feel more or less hostile toward the other political party. The researchers tested whether changing what the algorithm shows—especially posts that encourage anger, distrust, or undemocratic ideas—can change how warm or cold people feel toward those on the “other team” in politics.

What questions did the researchers ask?

They asked two simple questions:

- If people see fewer posts that encourage antidemocratic attitudes and partisan animosity (AAPA), will they feel kinder toward the other political party?

- If people see more of those posts, will they feel harsher toward the other political party?

“Affective polarization” means how emotionally warm or cold people feel toward the other party, not just whether they disagree on issues.

How did they study it?

Think of your social media feed like a playlist that a DJ (the algorithm) creates for you. The team built a safe tool (a browser extension) that worked like a helpful co-DJ. It slightly re-ordered people’s X/Twitter feeds during a 10-day period.

Here’s what they did, in everyday terms:

- They asked 1,256 adults in the U.S. (who use X and identify as Democrat or Republican) to install the browser extension and consent to the study.

- For the first 3 days, nothing changed (this was the baseline).

- For the next 7 days, participants were randomly assigned to one of two experiments:

  - Reduced Exposure: the extension pushed down posts with AAPA so you’d see fewer of them near the top.

  - Increased Exposure: the extension pulled up AAPA posts so you’d see more of them near the top.

- They used an AI (an LLM, or large language model, acting like a very smart reader) to spot political posts and label which ones showed AAPA content.

- AAPA included eight types of messages that tend to harm democracy and increase hostility. In simple terms:

  - Strong dislike of the other party

  - Support for unfair or undemocratic actions

  - Support for political violence

  - Support for undemocratic candidates

  - Opposition to working together across parties

  - Social distrust (believing people can’t be trusted)

  - Wanting to keep distance from people in the other party

  - Biased judgments about political facts

- While people scrolled, short in-feed surveys popped up occasionally (like quick sliders) to ask how they felt about the other party and whether they felt emotions like anger, sadness, calm, or excitement.

- At the end, participants took a survey outside the platform that measured how warm or cold they felt toward the other party on a 0–100 scale (0 = very cold, 100 = very warm) and how often they felt certain emotions during the week.
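
The re-ordering step described above can be pictured with a minimal sketch. It assumes each post already carries a count of how many of the eight AAPA factors the classifier detected, and it borrows the "4 out of 8 factors" cutoff mentioned in the Knowledge Gaps section. The `Post` type, its field names, and the `rerank` function are hypothetical and are not the study's actual extension code.

```python
from dataclasses import dataclass

AAPA_THRESHOLD = 4  # assumed cutoff: flagged when at least 4 of the 8 factors are present


@dataclass(frozen=True)
class Post:
    post_id: str
    aapa_factors: int  # hypothetical field: number of AAPA factors the classifier detected


def is_aapa(post: Post) -> bool:
    """Treat a post as AAPA when it meets the assumed factor threshold."""
    return post.aapa_factors >= AAPA_THRESHOLD


def rerank(feed: list[Post], mode: str) -> list[Post]:
    """Stable re-ordering: 'reduce' pushes AAPA posts down, 'increase' pulls them up."""
    if mode == "reduce":
        key = is_aapa  # False sorts before True, so non-AAPA posts come first
    elif mode == "increase":
        key = lambda p: not is_aapa(p)
    else:
        raise ValueError(f"unknown mode: {mode}")
    # sorted() is stable, so the original feed order is kept within each group.
    return sorted(feed, key=key)


feed = [Post("a", 5), Post("b", 0), Post("c", 4), Post("d", 1)]
print([p.post_id for p in rerank(feed, "reduce")])    # ['b', 'd', 'a', 'c']
print([p.post_id for p in rerank(feed, "increase")])  # ['a', 'c', 'b', 'd']
```

This sketch only reorders posts that are already in the feed. According to the Knowledge Gaps section, the increased-exposure condition sourced AAPA posts from other participants' feeds, which this sketch does not model.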

What did they find?

The main findings pointed the same way in both experiments:

- When AAPA posts were decreased, people felt warmer toward the opposing party. On average, their warmth went up by about 2 points on the 0–100 “feeling thermometer.”

- When AAPA posts were increased, people felt colder toward the opposing party. Their warmth went down by about 2.5 points.

- These effects also showed up inside the feed in real time: fewer AAPA posts made people report less anger and sadness while scrolling; more AAPA posts made people report more anger and sadness. The changes in emotions were immediate but didn’t last much beyond the feed.

- Engagement (likes, reposts, time spent) didn’t change much. In other words, reducing harmful content didn’t seem to hurt typical engagement metrics.

- Importantly, the effects were bipartisan—similar for both Democrats and Republicans.

These shifts are small but meaningful. The researchers note that the change in warmth is roughly equivalent to reversing or accelerating a few years’ worth of rising partisan dislike.

Why does it matter?

This study shows that:

- Social media algorithms don’t just reflect what we like; they can shape how we feel about other people—especially those who disagree with us politically.

- Platforms could design their feeds to reduce posts that promote hostility and undemocratic ideas without hurting business metrics like engagement.

- That could help cool down political anger, support healthier conversations, and strengthen trust in democratic norms.

In short, tuning the “DJ” of your feed to avoid content that stirs up hatred and distrust can make online spaces—and our society—a bit kinder and more stable.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper, to guide future research.

- Generalizability beyond X/Twitter web: Assess whether effects replicate on mobile apps, other platforms (e.g., Facebook, Instagram, TikTok, YouTube, Reddit), and in non-U.S. contexts and languages.

- Broader user populations: Test impacts among independents, weak partisans, low-politics users, and those with <5% political content in their feeds to understand population-level effects.

- Longer-term persistence: Evaluate whether affective polarization and emotion effects persist, attenuate, or rebound over weeks/months under continuous or intermittent deployment.

- Dose–response and symmetry: Identify the minimal effective dosage and compare symmetric treatment intensities (the study downranked ~85/day vs. upranked ~11/day) to isolate causal scaling effects.

- Placement effects: Quantify how the position of AAPA posts in the feed (top vs. deep) and timing (time-of-day) modulate outcomes.

- Mechanisms and mediators: Test whether changes are driven by perceived social norms, threat/moralization, outrage contagion, or reduced cross-party empathy using validated mediators.

- Factor-specific causality: Run experiments that selectively up/downrank individual AAPA factors to confirm which components (e.g., opposition to bipartisanship, biased fact evaluation) causally drive the observed effects.

- In-party vs. out-party exposure: Disentangle the impacts of injecting attitudinally aligned AAPA versus exposure to out-party AAPA; test cross-cutting designs and mixed exposures.

- Content diversity trade-offs: Measure whether downranking AAPA inadvertently reduces exposure to legitimate political critique, satire, or minority activism and assess viewpoint diversity impacts.

- Classifier robustness and fairness: Audit LLM-based AAPA classification for partisan, demographic, and linguistic biases; validate across modalities (text, images, video, quotes) and sarcasm/nuance; stress-test against adversarial evasion.

- Threshold design: Revisit the “4 out of 8 factors” threshold—compare alternative thresholds, weighted factors, or continuous scores to optimize precision/recall and outcome efficacy.

- Stability across model versions: Test whether outcomes are stable under different LLMs, prompts, and model updates; establish versioning and reproducibility protocols.

- Inventory constraints: In the increased exposure condition, AAPA posts were sourced from other participants’ feeds; examine biases introduced by limited inventory and test full-inventory access or synthetic counterfactuals.

- Engagement measurement completeness: Augment engagement metrics with dwell time, scroll depth, click-throughs, comment sentiment, and retention beyond 10 days to capture business-relevant effects more fully.

- Network spillovers: Measure whether altering one user’s feed affects their posting, replies, and their network’s behavior and attitudes (contagion/spillover), including non-participants.

- Cross-platform spillover: Assess whether treatment effects transfer to users’ behavior and attitudes on other platforms or offline interactions.

- Offline democratic outcomes: Link interventions to civic behaviors (e.g., voting intent, cross-party conversations, institutional trust, willingness to compromise) and misinformation accuracy.

- Emotional health trajectories: Examine whether short-lived increases/decreases in negative emotions accumulate into longer-term mental health effects (stress, well-being).

- User experience and satisfaction: Beyond “noticed changes,” test user satisfaction, perceived relevance, and autonomy; assess opt-in/opt-out preferences and long-run retention.

- Normative governance: Define transparent criteria and oversight for “pro-democracy” ranking—who decides, how values are operationalized, how to prevent censorship or biased suppression.

- Cultural variability of democratic values: Investigate how AAPA definitions and interventions translate to different political systems, cultural norms, and contested democratic principles.

- Interaction with platform algorithms: Study how platform-level ranking objectives (e.g., engagement, ads, personalization) interact with AAPA downranking and whether platforms adapt or counteract such interventions.

- Combination interventions: Compare AAPA downranking with complementary strategies (e.g., perspective-taking prompts, dialogic content uplift, fact-check cues) and test synergistic effects.

- EMA demand characteristics: Quantify any reactivity from in-feed surveys replacing/adjacent to AAPA posts; test alternative measurement timings or unobtrusive behavioral proxies.

- Position randomization integrity: Ensure survey and insertion positions do not systematically alter feed dynamics; run sensitivity analyses on placement rules and frequencies.

- Compliance and usage heterogeneity: Analyze treatment-on-the-treated vs. intent-to-treat under varying usage intensity; assess differential compliance across heavy/light users and session patterns.

- Policy and legal implications: Map regulatory constraints (e.g., political neutrality, transparency mandates) and evaluate feasibility and risks of deployment at scale.

- Replicability across electoral cycles: Replicate in non-election periods and different political salience contexts to test sensitivity to exogenous polarization levels.

- Data access and sustainability: Address the fragility of post-API research by proposing durable data-sharing and auditing frameworks that enable field experiments without platform resistance.

- Cost–benefit analysis: Quantify trade-offs between small polarization benefits and potential impacts on engagement/monetization to inform platform adoption decisions.
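
The threshold-design question in the list above could be explored with a simple sweep over cutoffs. The following is a minimal sketch on made-up data: the factor counts and human labels are hypothetical, and `precision_recall` is an illustrative helper rather than part of the paper's pipeline.

```python
def precision_recall(factor_counts, human_labels, threshold):
    """Precision and recall when a post is flagged at >= `threshold` detected factors."""
    flagged = [count >= threshold for count in factor_counts]
    tp = sum(f and y for f, y in zip(flagged, human_labels))
    fp = sum(f and not y for f, y in zip(flagged, human_labels))
    fn = sum((not f) and y for f, y in zip(flagged, human_labels))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical toy data: detected factor count per post, and whether a human
# annotator judged the post to be AAPA.
factor_counts = [0, 1, 2, 3, 4, 5, 6, 8]
human_labels = [False, False, False, True, True, False, True, True]

for threshold in range(1, 9):
    precision, recall = precision_recall(factor_counts, human_labels, threshold)
    print(f"threshold={threshold} precision={precision:.2f} recall={recall:.2f}")
```

On this toy data a lower cutoff catches more true AAPA posts at the cost of more false flags, which is the trade-off a real audit would need to measure on annotated posts.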

Practical Applications

Immediate Applications

The following applications can be deployed now, leveraging the paper’s validated LLM-based classifier and real-time feed reranking approach, along with its measurement via in-feed (EMA) surveys.

- Platform-level downranking of antidemocratic attitudes and partisan animosity (AAPA) content to mitigate affective polarization (software/social media)

  - Tools/products: AAPA scoring microservice (LLM classifier), ranking penalty module, in-feed EMA instrumentation for affective metrics, A/B test harness for “out-party warmth” KPI.

  - Workflow: Score candidate items for AAPA; apply rank penalty; monitor affective outcomes via in-feed surveys; iterate weights.

  - Assumptions/dependencies: Access to ranking pipeline or client-side interception; classifier robustness to domain shifts; platform willingness to add affective KPIs.

- Brand safety and ad adjacency filters to reduce association with AAPA content (advertising/finance)

  - Tools/products: AAPA adjacency score API for SSPs/DSPs; ad placement filters; inventory hygiene reports to advertisers.

  - Workflow: Pre-bid/post-bid checks exclude AAPA inventory; continuous monitoring of adjacency risk.

  - Assumptions/dependencies: Integration with ad-tech stack; advertiser acceptance of “civility” controls; accurate detection across topical drift.

- Election-season “civility mode” toggles to throttle AAPA exposure (policy/platform operations)

  - Tools/products: Risk-triggered ranking weight scheduler; election-period policy flags; monitoring dashboard for affective polarization metrics.

  - Workflow: Activate reduced-AAPA weights during high-risk windows; track immediate emotion and out-party warmth via EMA; report to trust & safety teams.

  - Assumptions/dependencies: Clear escalation criteria; governance alignment; minimal engagement impact (supported by study but platform-specific).

- Enterprise community moderation and forum health controls (software/community management)

  - Tools/products: Moderator assistant for AAPA detection; auto-flag/downrank modules for internal social platforms (e.g., intranet communities, public forums).

  - Workflow: Pre/post-publication AAPA scoring; flag escalations; rank penalties rather than removals to preserve speech while reducing harm.

  - Assumptions/dependencies: Moderation policy buy-in; false-positive handling; appeals and transparency mechanisms.

- Pre-publication creator and newsroom assistant to reduce AAPA cues in content (media/education)

  - Tools/products: Writing assistant/CMS plugin that highlights AAPA phrases/factors and suggests alternatives; editorial dashboards tracking AAPA prevalence.

  - Workflow: Draft-scoring; language suggestions; newsroom-level targets for reducing AAPA in headlines and social posts.

  - Assumptions/dependencies: Willingness to adopt pro-social language practices; precision sufficient to avoid stifling legitimate critique.

- Consumer-facing “well-being/democracy-friendly feed” browser extension (daily life)

  - Tools/products: Open-source extension using AAPA downranking; user controls for filter strength; privacy-preserving local inference where feasible.

  - Workflow: Intercept web feeds (e.g., X/Twitter For You) and re-order content; optional in-feed micro-surveys to monitor personal affect.

  - Assumptions/dependencies: Web access only (mobile constraints); user consent; platform interface stability.

- Open science field-experiment toolkit for academic studies of ranking interventions (academia)

  - Tools/products: Reranking extension framework; standardized EMA survey modules; data pipelines and preregistration templates.

  - Workflow: Recruit participants; run randomized downrank/uprank experiments; measure affect and emotion in situ; share code and protocols.

  - Assumptions/dependencies: IRB approvals; participant recruitment with sufficient political content; API-less feasibility via client-side interception.

- NGO/election integrity analytics to track AAPA trends and hotspots (civil society)

  - Tools/products: AAPA trend dashboards; geographic/topic breakdowns; alerts for spikes in partisan animosity or undemocratic attitudes.

  - Workflow: Monitor public feeds; report anomalies; inform civic interventions and media literacy programs.

  - Assumptions/dependencies: Data access at scale; ethical data use; collaboration with platforms or scraping policies.

- Public health and digital well-being programs that include “anger/sadness risk” monitoring (healthcare/public health)

  - Tools/products: Platform well-being KPIs reflecting immediate negative emotion; opt-in user-level controls to reduce AAPA exposure.

  - Workflow: Incorporate emotion metrics into product health reviews; deploy low-friction controls that keep engagement largely stable.

  - Assumptions/dependencies: Reliable EMA capture; alignment with health guidelines; guardrails against unintended suppression of legitimate discourse.
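
The "score, apply rank penalty, iterate weights" workflow from the first application above can be sketched as a soft penalty on a ranking score. This is an illustration under stated assumptions: `base_score` stands in for a platform's own relevance score, `aapa_score` is a hypothetical classifier output in [0, 1], and `penalty_weight` is a hypothetical tuning knob. The study itself re-ordered feeds client-side through a browser extension rather than inside a ranking pipeline.

```python
def penalized_ranking(candidates, penalty_weight):
    """Order item ids by base_score minus a weighted AAPA penalty.

    candidates: list of (item_id, base_score, aapa_score) tuples (hypothetical schema).
    """
    def adjusted(candidate):
        _, base_score, aapa_score = candidate
        return base_score - penalty_weight * aapa_score

    # Highest adjusted score first; items are demoted, never removed.
    return [c[0] for c in sorted(candidates, key=adjusted, reverse=True)]


# Hypothetical candidates: p1 is the most "relevant" but has a high AAPA score.
candidates = [("p1", 0.90, 0.8), ("p2", 0.70, 0.0), ("p3", 0.60, 0.1)]

print(penalized_ranking(candidates, penalty_weight=0.0))  # ['p1', 'p2', 'p3']
print(penalized_ranking(candidates, penalty_weight=0.5))  # ['p2', 'p3', 'p1']
```

A penalty weight of zero reproduces the original order, so the weight can be raised gradually while the affective and engagement metrics are monitored.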

Long-Term Applications

The following opportunities will benefit from further research, scaling, platform collaboration, multi-modal coverage, and governance development.

- Cross-platform, mobile-native integration at scale (software)

  - Tools/products: Platform APIs or SDKs for real-time AAPA scoring and ranking penalties; mobile client hooks; standardized affective KPIs.

  - Workflow: Integrate societal objective functions into recommender stacks across apps; monitor longitudinal outcomes.

  - Assumptions/dependencies: Platform cooperation; regulatory incentives (e.g., EU DSA risk mitigation); performance constraints.

- Multimodal AAPA detection covering text, images, video, memes, and audio (AI)

  - Tools/products: Cross-modal models; adversarial robustness; multilingual support; open benchmarks.

  - Workflow: Train/validate with diverse datasets; deploy inference pipelines; continuous domain-adaptation.

  - Assumptions/dependencies: Large labeled datasets; compute; bias audits; resistance to evasion tactics.

- Personalization and fairness-aware societal objective functions with oversight (policy/ethics/AI)

  - Tools/products: Weighted objectives balancing civility, diversity, and user autonomy; appeals and transparency interfaces; governance boards.

  - Workflow: Multi-stakeholder value-setting; fairness constraints; periodic independent audits.

  - Assumptions/dependencies: Consensus on values; rigorous fairness testing; legal frameworks defining acceptable interventions.

- Longitudinal validation linking affective changes to behavior and civic outcomes (academia/public policy)

  - Tools/products: Multi-month/year field trials; standardized measures (e.g., out-party warmth), civic participation indicators.

  - Workflow: Evaluate persistence and real-world impacts; publish best practices; refine model thresholds.

  - Assumptions/dependencies: Access to long-term data; attrition management; confound control in dynamic socio-political contexts.

- Regulatory standards and algorithmic risk audits focused on polarization (policy/regulation)

  - Tools/products: Audit protocols for “polarization risk”; mandated reporting on AAPA prevalence and mitigation; certification schemes.

  - Workflow: Regulators set metrics; platforms disclose and remediate; third-party auditors verify compliance.

  - Assumptions/dependencies: Legal clarity; harmonization across jurisdictions; proportionality to protect free expression.

- Media literacy curricula built around the eight-factor AAPA taxonomy (education)

  - Tools/products: Educator guides, classroom simulations of feed reranking, interactive tools showing how content affects affective polarization.

  - Workflow: Teach recognition of AAPA cues; practice rewriting content; measure student outcomes.

  - Assumptions/dependencies: Curriculum adoption; age-appropriate materials; cultural adaptation.

- Corporate product health dashboards featuring “affective risk” and “democracy-friendly” indicators (industry management)

  - Tools/products: Executive dashboards; OKRs tied to AAPA reduction without harming engagement; weekly risk reviews.

  - Workflow: Embed in product governance; tie incentives to societal metrics; cross-functional accountability.

  - Assumptions/dependencies: Leadership buy-in; reliable measurement; mitigation playbooks.

- Crisis management protocols for platform operations during unrest or elections (policy/platform ops)

  - Tools/products: Emergency switches for AAPA throttling; escalation paths; transparency reporting to watchdogs.

  - Workflow: Activate controls on trigger conditions; audit impact post-event; refine thresholds.

  - Assumptions/dependencies: Clear triggers; minimal unintended suppression; external oversight.

- Localization and cross-cultural adaptation of the AAPA taxonomy (global platforms)

  - Tools/products: Region-specific taxonomies; LLMs tuned to local political context; cultural validation studies.

  - Workflow: Co-develop with local experts; pilot and iterate; maintain global consistency where appropriate.

  - Assumptions/dependencies: Local expertise; multilingual corpora; sensitivity to different democratic norms.

- Extending societal objective functions to other recommendation domains (news/video/search) (software)

  - Tools/products: Plugins for news apps, video platforms, and search ranking; shared metrics and governance.

  - Workflow: Apply AAPA-aware ranking across discovery surfaces; evaluate cross-domain effects.

  - Assumptions/dependencies: Domain-specific relevance trade-offs; user acceptance; interoperability with existing relevance models.
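
The crisis-management and election-period items above both describe switching AAPA throttling on under trigger conditions. One way to picture that is a date-window lookup, sketched below. Everything here is hypothetical: the function name, the example window, and the `baseline` and `elevated` weights are placeholders, and real trigger criteria would need the governance and oversight the list calls for.

```python
from datetime import date


def penalty_weight_for(day, risk_windows, baseline=0.0, elevated=0.5):
    """Return the elevated AAPA penalty weight if `day` falls inside any
    (start, end) window (inclusive), otherwise the baseline weight."""
    for start, end in risk_windows:
        if start <= day <= end:
            return elevated
    return baseline


# Hypothetical high-risk window around an election.
risk_windows = [(date(2030, 10, 20), date(2030, 11, 10))]

print(penalty_weight_for(date(2030, 11, 1), risk_windows))  # 0.5
print(penalty_weight_for(date(2030, 6, 1), risk_windows))   # 0.0
```

Keeping the schedule as plain data makes it easy to log and audit after an event, which fits the "audit impact post-event" step in the workflow.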

Glossary

- Adjusted P-value: A statistical significance measure corrected for multiple comparisons to reduce false positives. "([adjusted p]=0.013)"

- Affective polarization: Emotional hostility toward opposing political parties, often measured by warmth toward the outgroup. "Of particular concern is whether feed algorithms cause affective polarization: hostility toward opposing political parties"

- Algorithmically curated feeds: Social media timelines ordered by automated ranking algorithms rather than purely chronological order. "in their algorithmically curated feeds."

- Antidemocratic attitudes: Beliefs or expressions that undermine democratic norms and processes, often paired with partisan animosity. "antidemocratic attitudes and partisan animosity (AAPA)"

- Attitudinally-aligned: Content matched to a user’s existing attitudes or partisan identity. "upranked attitudinally-aligned AAPA posts into participants' feeds."

- Baseline period: A pre-intervention time window used to measure initial levels of outcomes for comparison. "the first three days serving as a baseline period"

- Biased evaluation of politicized facts: Interpreting politically relevant facts in systematically partisan or biased ways. "biased evaluation of politicized facts"

- Confidence intervals (95% CIs): Ranges computed so that, across repeated samples, about 95% of them would contain the true effect size. "95% CIs: [0.15, 4.06]"

- Cross-partisan exposure: Seeing content from or about opposing political groups, often used in interventions to reduce polarization. "intervene on cross-partisan exposure"

- Demagoguery: Political rhetoric that appeals to emotions and prejudices rather than reasoned debate. "upranks demagoguery."

- Downrank: Reduce the position or visibility of content in a ranked feed. "downranked all AAPA content"

- Ecological momentary assessment (EMA): Real-time, in-situ measurement of experiences or behaviors within natural environments. "modeled on the ecological momentary assessment approach"

- Engagement loop: A self-reinforcing cycle where user interactions lead algorithms to show more similar content. "self-reinforcing engagement loop"

- Field experiment: A study conducted in real-world settings to test causal effects of interventions. "In a preregistered 10-day field experiment on X/Twitter"

- Feeling thermometer: A scale (0–100) used to measure warmth toward groups (e.g., opposing party). "0–100 degrees on a feeling thermometer"

- Heterogeneity in treatment effects: Differences in how the intervention affects various subgroups or contexts. "We did not observe any significant heterogeneity in treatment effects across the preregistered moderators"

- In-feed survey: A survey instrument embedded directly within the social media feed. "In-feed surveys are occasionally shown throughout the baseline and the intervention period."

- LLMs (large language models): AI models trained on vast text corpora to understand and generate language, used here for classification/scoring. "Leveraging advancements in LLMs"

- Latency: The delay between an action and system response, often measured in seconds. "adds a latency of about three seconds"

- Moderators: Variables that may influence the magnitude or direction of the treatment effect. "across the preregistered moderators"

- Opposition to bipartisanship: Resistance to cross-party cooperation and collaboration. "opposition to bipartisanship"

- Outparty warmth: Reported warmth toward members of the opposing political party. "outparty warmth increase of 2.11 degrees"

- Partisan animosity: Negative feelings and hostility directed toward the opposing political party. "partisan animosity"

- Partisan violence: Support for using violence to achieve partisan political goals. "support for partisan violence"

- Post inventory: The platform’s available set of posts that could be shown to users. "the platform's full post inventory"

- Power analyses: Statistical planning to determine required sample sizes to detect expected effects. "based on power analyses performed on pilot studies"

- Preregistered: Documenting study hypotheses, methods, and analyses before data collection to prevent bias. "We preregistered a target of at least 1,100 participants"

- Randomized controlled trial (RCT): An experiment where participants are randomly assigned to treatment or control to infer causality. "Both experiments are randomized controlled trials."

- Reverse-chronological algorithm: Feed ordering that shows the most recent content first without ranking by engagement or relevance. "reverse-chronological algorithm"

- Selective attrition: Differential dropout rates across conditions that can bias results. "no evidence of selective attrition between conditions"

- Social distance: Preferences for reduced interaction or closeness with members of other social or political groups. "social distance"

- Social distrust: Generalized lack of trust in others or institutions, often across political lines. "social distrust"

- Undemocratic candidates: Political figures who oppose or undermine democratic norms and processes. "support for undemocratic candidates"

- Undemocratic practices: Actions or policies that erode democratic rules or institutions. "support for undemocratic practices"

- Uprank: Increase the position or visibility of content in a ranked feed. "upranked a random AAPA post to that position."
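
The confidence-interval and outparty-warmth entries above quote numbers that can be checked against each other with a little arithmetic. The sketch below assumes the two quoted figures (2.11 degrees and [0.15, 4.06]) belong to the same estimate, which their agreement suggests but the glossary does not state, and that the interval is a symmetric normal-approximation interval. The variable names are illustrative.

```python
estimate = 2.11                # quoted outparty warmth increase, in degrees
ci_low, ci_high = 0.15, 4.06   # quoted 95% confidence interval

midpoint = (ci_low + ci_high) / 2      # center of the interval
half_width = (ci_high - ci_low) / 2    # margin of error
implied_se = half_width / 1.96         # assumes a normal-approximation 95% interval
excludes_zero = ci_low > 0 or ci_high < 0

print(f"midpoint={midpoint:.3f}, half-width={half_width:.3f}")
print(f"implied standard error={implied_se:.2f}, excludes zero={excludes_zero}")
```

The midpoint of the interval is about 2.105, consistent with the quoted 2.11-degree estimate, and the interval lies entirely above zero, which is why the effect counts as statistically significant at the 5% level.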
