- The paper introduces an embodied framework utilizing Gaussian memory for progressive 3D occupancy prediction in indoor scenes.

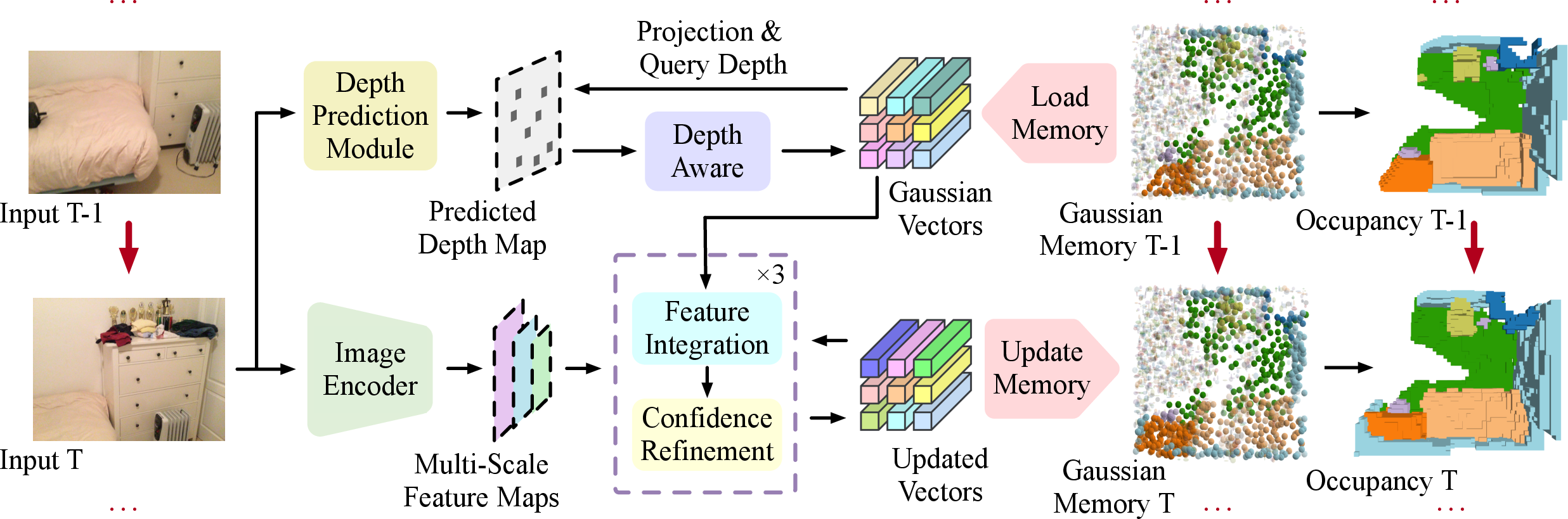

- It integrates monocular RGB features with depth-aware refinement using deformable cross-attention for precise scene updates.

- Experimental results demonstrate superior performance over state-of-the-art methods on the EmbodiedOcc-ScanNet benchmark.

EmbodiedOcc: Embodied 3D Occupancy Prediction for Vision-based Online Scene Understanding

Introduction

The paper "EmbodiedOcc: Embodied 3D Occupancy Prediction for Vision-based Online Scene Understanding" (arXiv:2412.04380) addresses a key challenge in 3D perception for embodied agents: accurately predicting 3D occupancy in indoor scenes from vision-based observations. Traditional methods focus on offline perception from a limited set of views. This work instead introduces EmbodiedOcc, a Gaussian-based framework for online, progressive scene understanding that resembles the way a human explores a scene.

Methodology

Embodied 3D Occupancy Prediction

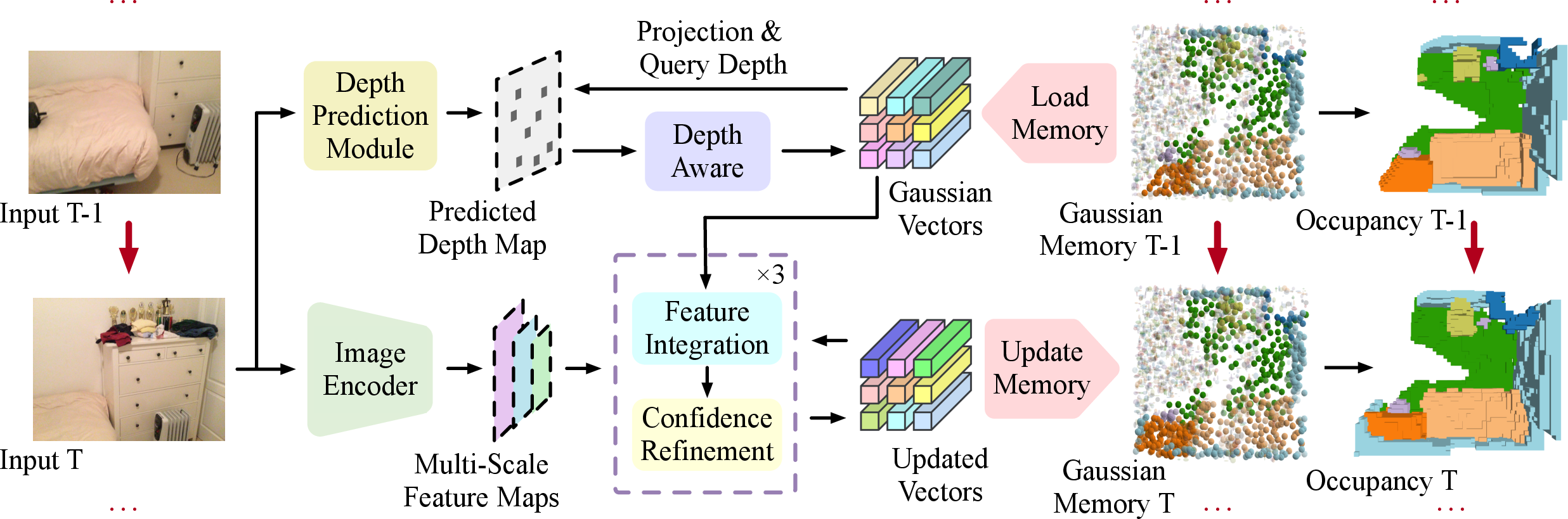

The method initializes the scene with uniform 3D semantic Gaussians and updates them progressively as the agent captures new observations. For each observation, it extracts semantic and structural features from the monocular RGB input and integrates them using deformable cross-attention. This enables real-time updates to the local region within the agent's current field of view.

Figure 1: Framework of EmbodiedOcc for embodied 3D occupancy prediction.

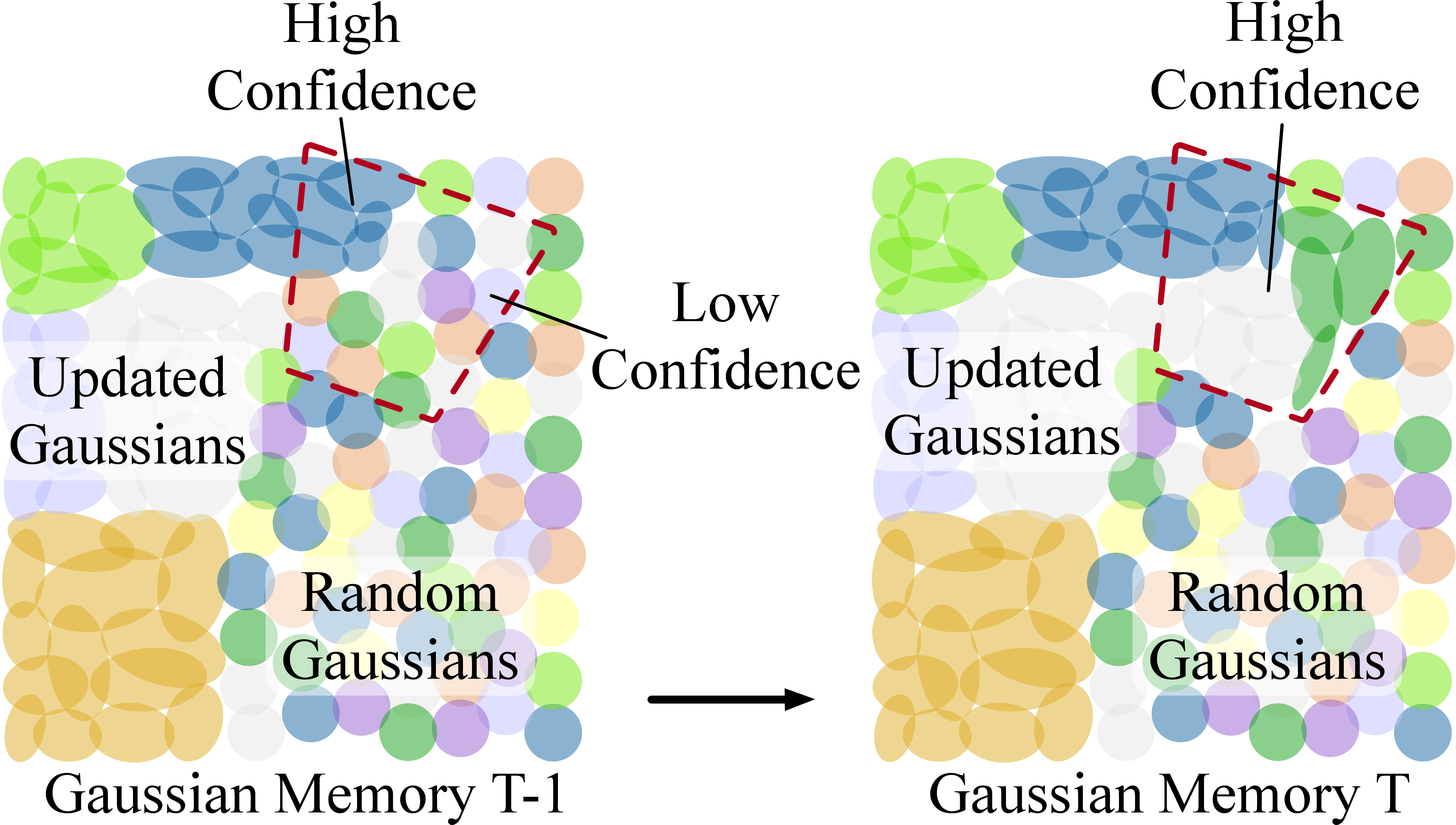

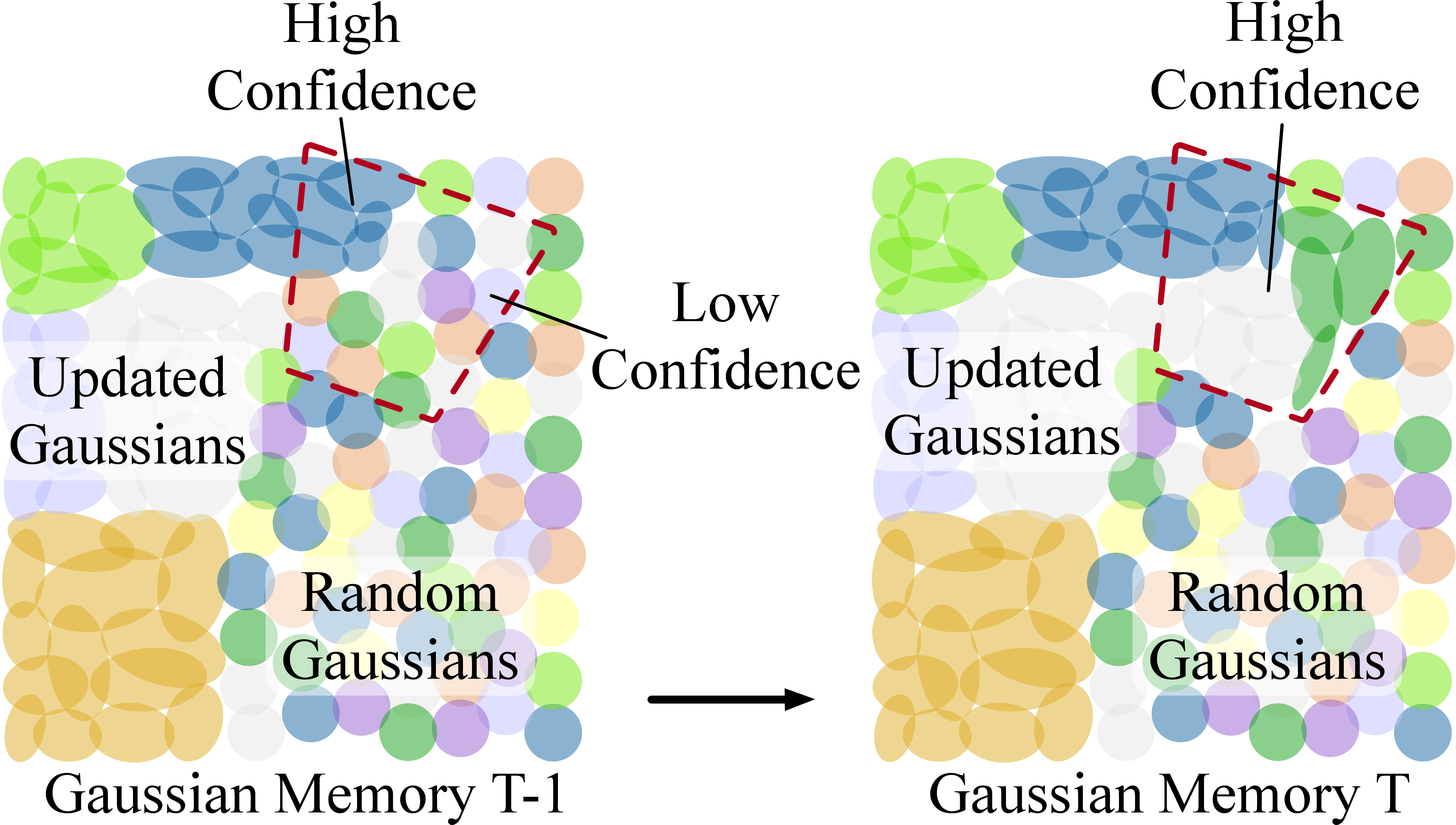
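The initialization and in-view selection described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the function names, the dictionary layout, the default scale and opacity values, and the pinhole camera model with `near` and `far` limits are all assumptions made for the example.

```python
import numpy as np

def init_uniform_gaussians(scene_min, scene_max, counts, num_classes):
    """Place Gaussian means on a regular grid spanning the scene box (hypothetical layout)."""
    axes = [np.linspace(lo, hi, n) for lo, hi, n in zip(scene_min, scene_max, counts)]
    means = np.stack(np.meshgrid(*axes, indexing="ij"), axis=-1).reshape(-1, 3)
    n = means.shape[0]
    return {
        "mean": means,                          # (N, 3) world-space centers
        "scale": np.full((n, 3), 0.1),          # assumed initial extent per axis
        "opacity": np.full(n, 0.1),             # assumed initial opacity
        "logits": np.zeros((n, num_classes)),   # uninformative semantics at the start
    }

def in_view_mask(means_world, world_to_cam, K, width, height, near=0.1, far=5.0):
    """Boolean mask of Gaussians whose centers fall inside the camera frustum."""
    homo = np.concatenate([means_world, np.ones((len(means_world), 1))], axis=1)
    cam = (world_to_cam @ homo.T).T[:, :3]      # world frame -> camera frame
    z = cam[:, 2]
    valid = (z > near) & (z < far)
    safe_z = np.where(valid, z, 1.0)            # avoid dividing by zero for rejected points
    u = K[0, 0] * cam[:, 0] / safe_z + K[0, 2]  # pinhole projection to pixel coordinates
    v = K[1, 1] * cam[:, 1] / safe_z + K[1, 2]
    return valid & (u >= 0) & (u < width) & (v >= 0) & (v < height)
```

In a loop over observations, only the Gaussians selected by `in_view_mask` would be passed to the refinement step, while the rest of the scene keeps its current state.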

Gaussian Memory

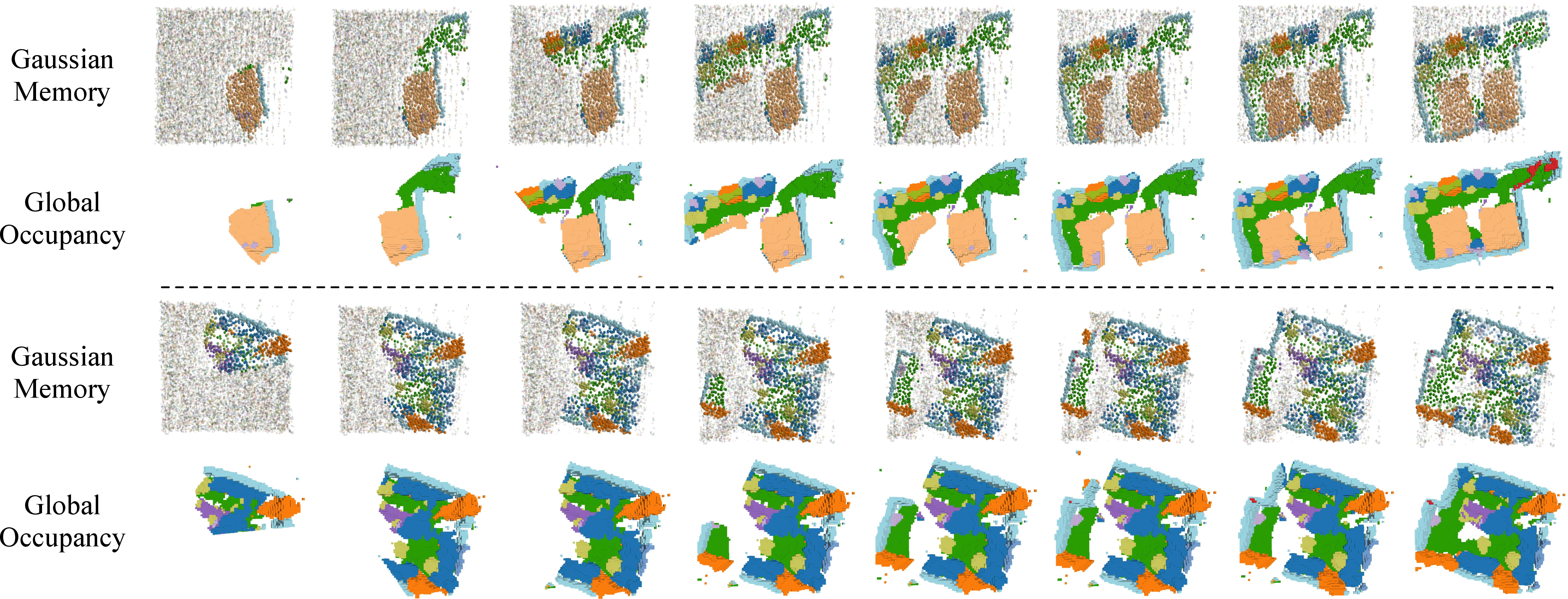

A distinctive feature is a global memory of 3D Gaussians that keeps the scene representation continuous and consistent across updates. Simulating human-like exploration, the method continuously refines this memory as new information is gathered and applies Gaussian-to-voxel splatting to produce the global occupancy map. The result is a single representation of the scene's structure and semantics rather than a collection of independent per-view predictions.

Figure 2: Illustration of the Gaussian memory.

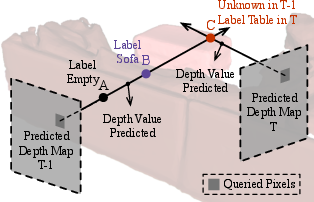
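To make the Gaussian-to-voxel step concrete, here is a simplified sketch of splatting semantic Gaussians onto a voxel grid. It assumes axis-aligned Gaussians (no rotation), a dense evaluation over all voxel and Gaussian pairs, and a hypothetical `empty_threshold` below which a voxel is labeled empty; the paper's actual splatting operator may differ on each of these points.

```python
import numpy as np

def splat_to_voxels(means, scales, opacities, class_probs,
                    grid_min, voxel_size, grid_shape, empty_threshold=0.05):
    """Accumulate Gaussian contributions at voxel centers and pick a label per voxel.

    Label 0 is reserved for "empty"; semantic class c is stored as c + 1.
    """
    idx = np.stack(
        np.meshgrid(*[np.arange(n) for n in grid_shape], indexing="ij"), axis=-1
    ).reshape(-1, 3)
    centers = np.asarray(grid_min) + (idx + 0.5) * voxel_size    # (V, 3)

    diff = centers[:, None, :] - means[None, :, :]               # (V, G, 3)
    mahal = np.sum((diff / scales[None, :, :]) ** 2, axis=-1)    # squared scaled distance
    weights = opacities[None, :] * np.exp(-0.5 * mahal)          # (V, G)

    density = weights.sum(axis=1)                                # occupancy evidence
    scores = weights @ class_probs                               # (V, C) semantic evidence
    labels = scores.argmax(axis=1) + 1
    labels[density < empty_threshold] = 0
    return labels.reshape(grid_shape), density.reshape(grid_shape)
```

The dense (V, G) weight matrix is only practical for small examples. A real system would restrict each Gaussian to the voxels near its center.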

Local Refinement Module

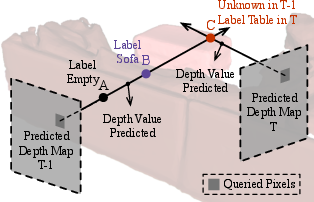

The local refinement module refines the Gaussian representation for the current view. It includes a depth-aware branch that uses depth predictions to guide the Gaussian updates. The depth cue allows more precise adjustment of Gaussian properties and helps resolve depth ambiguity, a common problem in monocular setups.

Figure 3: Motivation of the depth-aware branch.
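One way to see why a depth prediction helps is to compare each Gaussian's depth with the predicted surface depth along the same camera ray. The sketch below computes that residual under assumptions that are not taken from the paper: a pinhole camera, nearest-pixel lookup, and a hypothetical `depth_residual` helper. The actual depth-aware branch is a learned module and is not reproduced here.

```python
import numpy as np

def depth_residual(means_cam, depth_map, K):
    """Signed gap between each Gaussian's depth and the predicted depth at its pixel.

    Negative: the Gaussian lies in front of the predicted surface (likely free space).
    Positive: it lies behind the surface (occluded). NaN: it projects outside the image.
    """
    h, w = depth_map.shape
    z = means_cam[:, 2]
    front = z > 1e-6
    safe_z = np.where(front, z, 1.0)            # avoid dividing by zero behind the camera
    u = np.round(K[0, 0] * means_cam[:, 0] / safe_z + K[0, 2]).astype(int)
    v = np.round(K[1, 1] * means_cam[:, 1] / safe_z + K[1, 2]).astype(int)
    valid = front & (u >= 0) & (u < w) & (v >= 0) & (v < h)

    residual = np.full(len(means_cam), np.nan)
    residual[valid] = z[valid] - depth_map[v[valid], u[valid]]
    return residual, valid
```

A residual near zero marks a Gaussian close to an observed surface, which is the kind of signal an RGB-only feature cannot provide directly.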

In addition, the module fuses image features into the Gaussian vectors through 3D sparse convolution and deformable attention. Applying this refinement over multiple stages is what produces detailed and accurate occupancy predictions.
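The sampling at the heart of deformable attention can be illustrated with a stripped-down, single-head, single-scale version. Each query (here standing in for a Gaussian vector) predicts a few pixel offsets around its reference point and a weight per sample, and the output is the weighted sum of bilinearly sampled image features. The projection matrices `w_offset` and `w_attn` are hypothetical stand-ins for learned layers, and the value and output projections of a full attention layer are omitted.

```python
import numpy as np

def bilinear_sample(feat, xy):
    """Sample feat (H, W, C) at pixel coordinates xy (..., 2) given as (x, y); clamps to the border."""
    h, w, _ = feat.shape
    x = np.clip(xy[..., 0], 0, w - 1)
    y = np.clip(xy[..., 1], 0, h - 1)
    x0 = np.floor(x).astype(int)
    y0 = np.floor(y).astype(int)
    x1 = np.minimum(x0 + 1, w - 1)
    y1 = np.minimum(y0 + 1, h - 1)
    wx = (x - x0)[..., None]
    wy = (y - y0)[..., None]
    top = feat[y0, x0] * (1 - wx) + feat[y0, x1] * wx
    bottom = feat[y1, x0] * (1 - wx) + feat[y1, x1] * wx
    return top * (1 - wy) + bottom * wy

def deformable_sample(queries, ref_xy, feat, w_offset, w_attn):
    """queries: (N, D); ref_xy: (N, 2); w_offset: (D, P*2); w_attn: (D, P) for P sample points."""
    n, p = queries.shape[0], w_attn.shape[1]
    offsets = (queries @ w_offset).reshape(n, p, 2)          # per-query sampling offsets
    logits = queries @ w_attn
    attn = np.exp(logits - logits.max(axis=1, keepdims=True))
    attn /= attn.sum(axis=1, keepdims=True)                  # softmax over the P samples
    sampled = bilinear_sample(feat, ref_xy[:, None, :] + offsets)  # (N, P, C)
    return (attn[..., None] * sampled).sum(axis=1)           # (N, C)
```

For Gaussians, a natural choice of `ref_xy` is the image projection of each Gaussian's center, so every Gaussian gathers features from the pixels around where it appears in the current view.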

Experimental Results

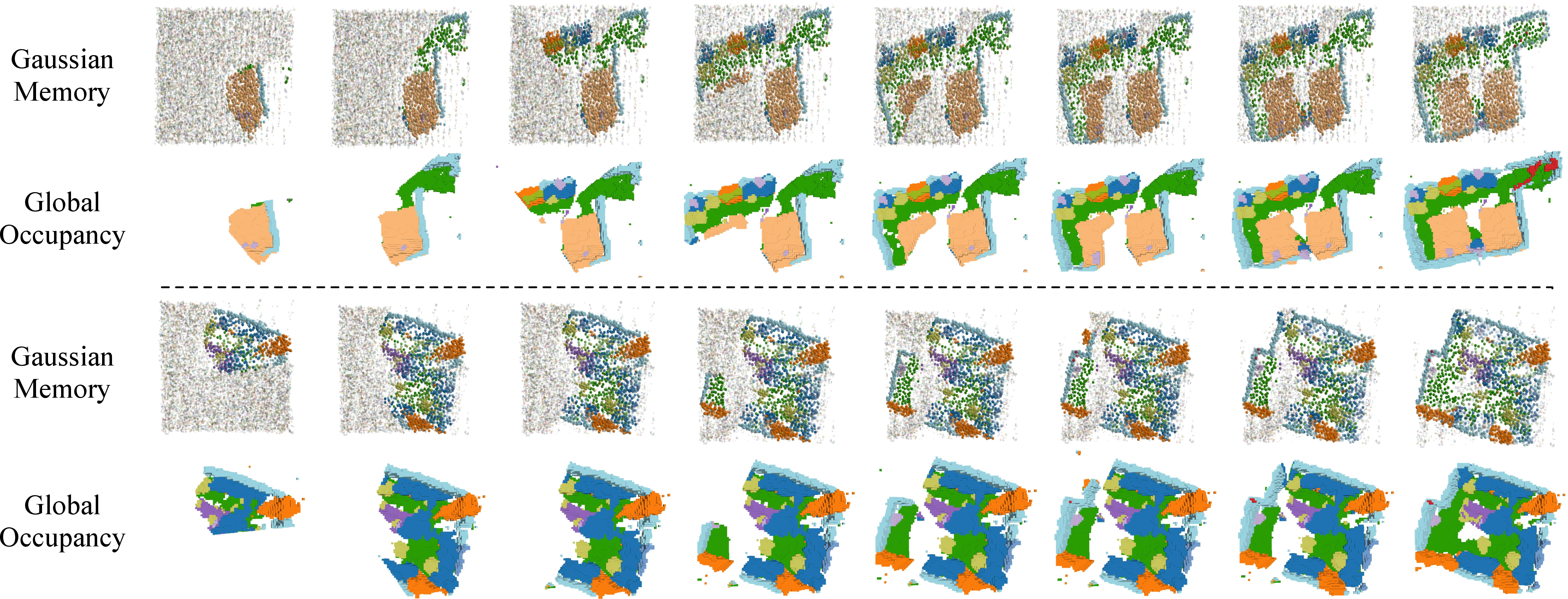

The system was evaluated on the EmbodiedOcc-ScanNet benchmark, where it significantly outperformed state-of-the-art methods on both local and embodied occupancy prediction.

On its own, the local refinement module improves local 3D occupancy prediction from monocular images. Integrated into the full EmbodiedOcc framework, it yields further gains on the embodied prediction task by drawing on the Gaussian memory to accumulate scene information across views.

Figure 4: Visualization of the embodied occupancy prediction.

Analysis and Ablations

Ablation studies isolate the contribution of individual components of the EmbodiedOcc framework, including the depth-aware branch and the Gaussian memory mechanism. The analysis shows how each contributes to the system's overall performance and motivates the model's architectural choices and parameter settings.

The runtime analysis supports the efficiency of the EmbodiedOcc framework and points to image and depth feature extraction as the stages with room for optimization.

Conclusion

EmbodiedOcc contributes a robust framework for online 3D occupancy prediction from monocular inputs to the field of embodied AI. Its building blocks (Gaussian-based representations, depth-aware refinement, and a global memory) together improve an embodied agent's ability to perceive and understand complex indoor environments. Future work may focus on improving computational efficiency and extending the approach to more diverse scene types and scenarios.