- The paper introduces FlowEdit, an inversion-free method that uses an ODE to map the source image distribution directly to the target distribution specified by a text prompt.

- It achieves lower transport costs and better structural fidelity than traditional inversion-based editing, together with lower FID and KID scores.

- Because FlowEdit relies only on pre-trained T2I models, it opens avenues for creative, real-time, and application-specific image editing.

FlowEdit: Inversion-Free Text-Based Editing Using Pre-Trained Flow Models

Introduction

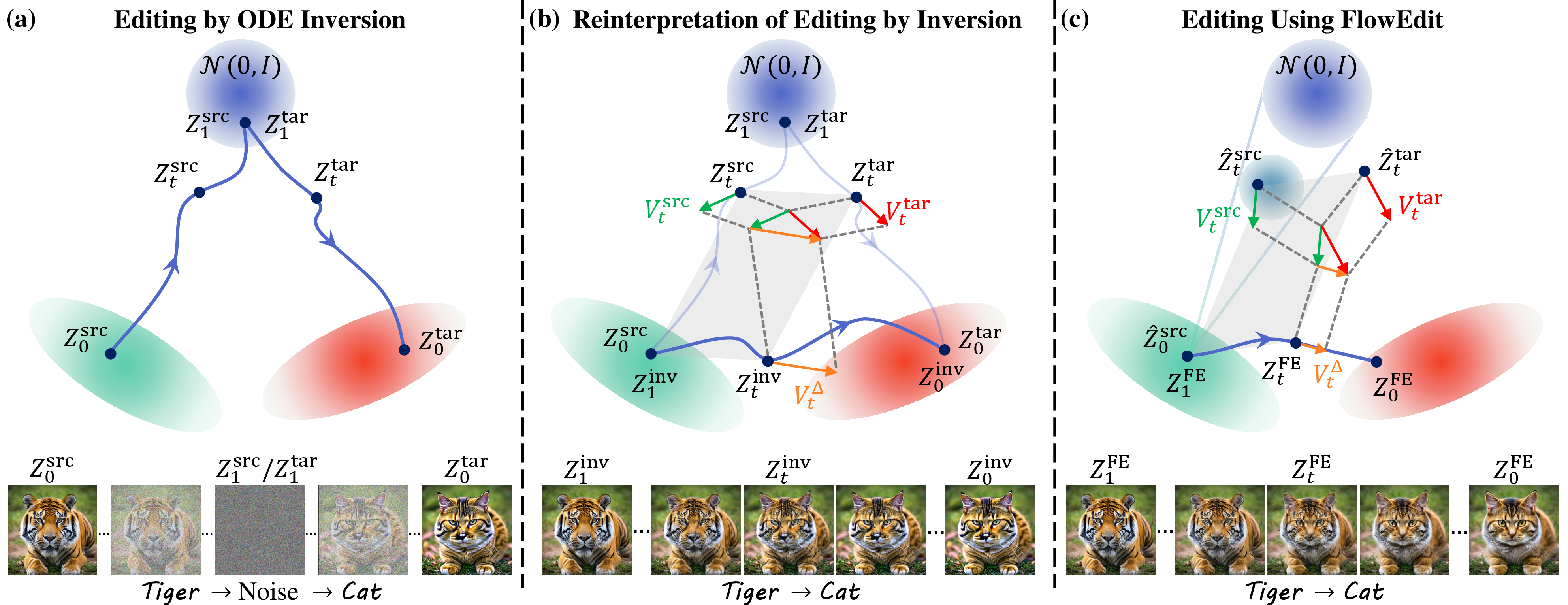

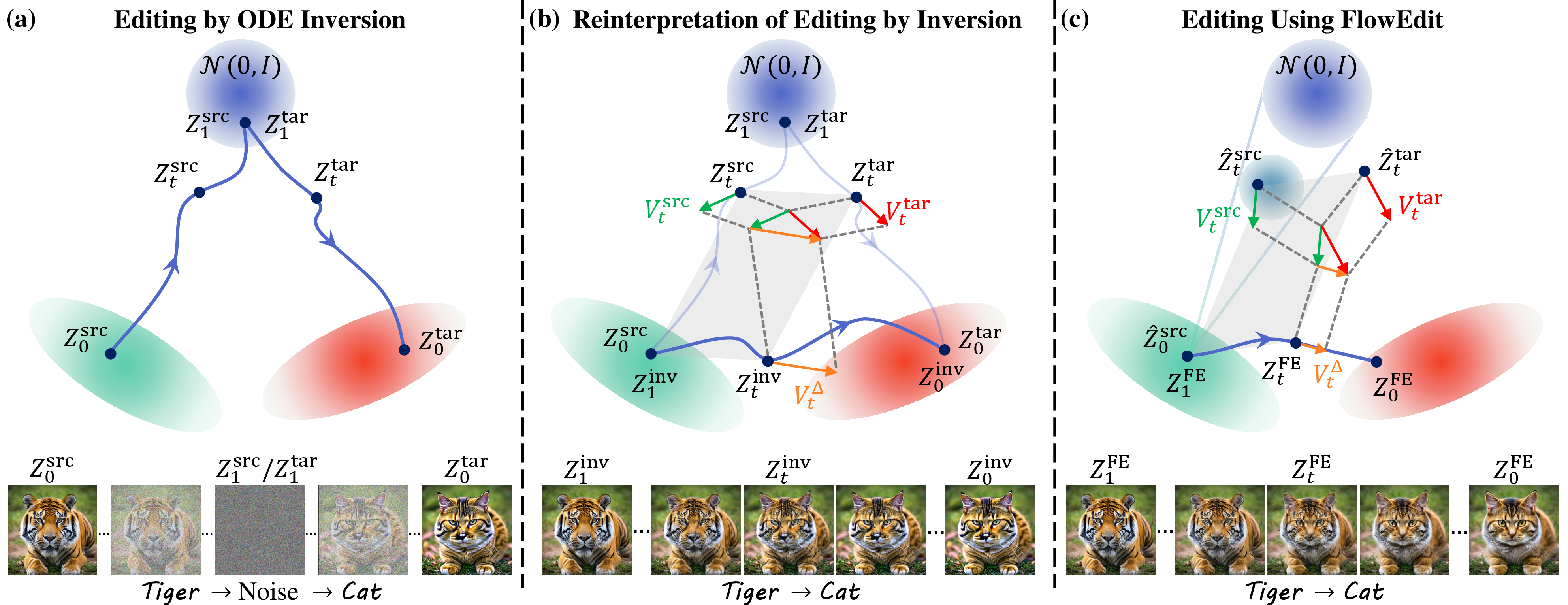

The paper "FlowEdit: Inversion-Free Text-Based Editing Using Pre-Trained Flow Models" introduces a method for editing images with pre-trained text-to-image (T2I) flow models that bypasses traditional image inversion. The method, FlowEdit, constructs an ordinary differential equation (ODE) that maps the source image distribution directly to the target distribution described by a text prompt. By avoiding the inversion bottleneck, FlowEdit lowers the transport cost and better preserves the structure of the source image.
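For reference, here is a minimal statement of the rectified-flow setup that this kind of editing builds on. It assumes the common convention in which t = 0 is a clean image X_0, t = 1 is pure Gaussian noise N, and V is the pre-trained model's velocity prediction conditioned on a prompt c; the symbols are ours, not necessarily the paper's.

```latex
\begin{aligned}
Z_t &= (1-t)\,X_0 + t\,N, && N \sim \mathcal{N}(0, I),\; t \in [0, 1],\\
\frac{dZ_t}{dt} &= V(Z_t, t, c), && \text{integrated from } t = 1 \text{ (noise) to } t = 0 \text{ (image)}.
\end{aligned}
```

Inversion-based editing integrates this ODE from the source image up to t = 1 with the source prompt and then back down with the target prompt. FlowEdit instead integrates a different ODE whose state starts at the source image rather than at a noise map.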

Figure 1: Editing by inversion vs. FlowEdit. (a) Traditional inversion-based editing transports the image through Gaussian noise, whereas (b) FlowEdit follows a direct path.

Methodology

Traditional Inversion-Based Editing: Conventional methods first invert the source image into a noise map and then re-sample from that noise with a modified prompt. Inversion alone often fails to preserve the source image's structure once the prompt changes. Many methods therefore add interventions, such as injecting feature maps recorded during inversion into the sampling process, to improve fidelity. These interventions are tied to a specific architecture and do not transfer easily to other models.
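The two-stage inversion pipeline can be illustrated with a minimal sketch. It assumes a hypothetical toy "T2I model" in which each prompt selects a 2-D Gaussian N(mu, 0.5² I) and the velocity is the exact rectified-flow velocity E[N − X_0 | Z_t = z] for that Gaussian. The names `PROMPTS`, `velocity`, `integrate`, and `edit_by_inversion` are illustrative, and plain Euler integration stands in for whatever solver a real model would use.

```python
import numpy as np

# Hypothetical toy "T2I model": each prompt selects a 2-D Gaussian N(mu, STD^2 I).
PROMPTS = {"cat": np.array([-2.0, 0.0]), "dog": np.array([2.0, 1.0])}
STD = 0.5


def velocity(z, t, prompt):
    """Exact rectified-flow velocity E[N - X0 | Z_t = z] for the prompt's Gaussian."""
    mu = PROMPTS[prompt]
    var_t = (1.0 - t) ** 2 * STD**2 + t**2
    gain = (t - (1.0 - t) * STD**2) / var_t
    return -mu + gain * (z - (1.0 - t) * mu)


def integrate(z, prompt, t_start, t_end, steps):
    """Euler-integrate dZ/dt = V(Z, t, prompt) from t_start to t_end."""
    ts = np.linspace(t_start, t_end, steps + 1)
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        z = z + (t_next - t_cur) * velocity(z, t_cur, prompt)
    return z


def edit_by_inversion(x_src, src_prompt, tar_prompt, steps=1000):
    # 1) Inversion: image (t = 0) -> noise map (t = 1) under the source prompt.
    noise_map = integrate(x_src, src_prompt, 0.0, 1.0, steps)
    # 2) Re-sampling: noise map (t = 1) -> image (t = 0) under the target prompt.
    return integrate(noise_map, tar_prompt, 1.0, 0.0, steps)


x_src = np.array([-1.5, 0.3])
x_edit = edit_by_inversion(x_src, "cat", "dog")
print(x_edit)  # approximately [2.5, 1.3] in this toy
```

In this equal-variance toy, the exact round trip maps x_src to x_src + (mu_dog − mu_cat), so the sketch only shows the mechanics of passing through a noise map. The structure-preservation failures described above arise with real, multimodal image distributions and are not reproduced here.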

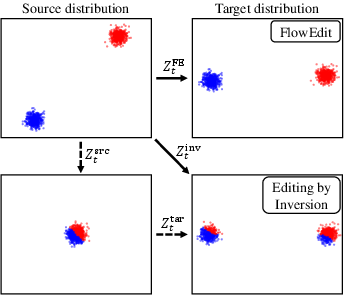

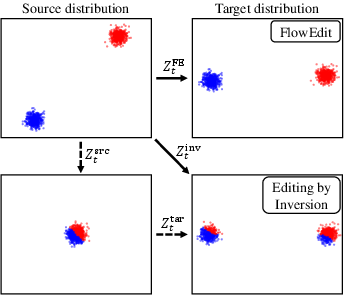

FlowEdit Approach: FlowEdit is both inversion-free and optimization-free. Instead of transporting the image through Gaussian noise, it constructs an ODE that maps directly between the source and target distributions. This substantially lowers the overall transport cost, which is critical for preserving the integrity of the source image in complex edits. FlowEdit achieves this by constructing a low-cost transport map that aligns the modes of the source and target distributions.
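One step of the FlowEdit ODE can be written as a discrete update, as we read it from the paper. The notation below is ours and may differ from the paper's. It assumes a rectified-flow model with velocity V(z, t, c), a decreasing timestep grid t_{n_max} > … > t_0 = 0, and source and target prompts c^src and c^tar.

```latex
\begin{aligned}
Z^{\mathrm{src}}_{t_i} &= (1-t_i)\,X^{\mathrm{src}} + t_i\,N, \qquad N \sim \mathcal{N}(0, I),\\
Z^{\mathrm{tar}}_{t_i} &= Z^{\mathrm{FE}}_{t_i} + Z^{\mathrm{src}}_{t_i} - X^{\mathrm{src}},\\
V^{\Delta}_{t_i} &= V\big(Z^{\mathrm{tar}}_{t_i}, t_i, c^{\mathrm{tar}}\big) - V\big(Z^{\mathrm{src}}_{t_i}, t_i, c^{\mathrm{src}}\big),\\
Z^{\mathrm{FE}}_{t_{i-1}} &= Z^{\mathrm{FE}}_{t_i} + (t_{i-1} - t_i)\,V^{\Delta}_{t_i}.
\end{aligned}
```

The recursion starts from Z^FE_{t_{n_max}} = X^src and returns Z^FE_{t_0} as the edited image. V^Δ may be averaged over several independent draws of N. Because Z^FE begins at the source image and moves only along a velocity difference, the path never passes through a pure-noise state.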

Figure 2: Source-to-target pairings showing that FlowEdit yields a lower transport cost than inversion-based maps.
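Transport cost can be read as the average squared distance between each source sample and the sample it is mapped to, so a lower value means the edit moved images less. The following is a minimal sketch of that empirical estimate. It assumes squared Euclidean distance on arrays paired row by row. The function name `transport_cost` is hypothetical, and the space in which distances are measured (pixels, latents, or features) is left to the caller.

```python
import numpy as np


def transport_cost(x_src, x_edit):
    """Mean squared Euclidean distance between paired source and edited samples.

    x_src, x_edit: array-likes of shape (num_pairs, dim); row k of x_edit
    must be the edit of row k of x_src.
    """
    x_src = np.asarray(x_src, dtype=float)
    x_edit = np.asarray(x_edit, dtype=float)
    if x_src.shape != x_edit.shape:
        raise ValueError("source and edited batches must be paired row by row")
    # Squared distance per pair, then the mean over pairs.
    return float(np.mean(np.sum((x_edit - x_src) ** 2, axis=1)))


cost = transport_cost([[0.0, 0.0], [1.0, 1.0]], [[3.0, 4.0], [1.0, 2.0]])
print(cost)  # 13.0: the pairs move by squared distances 25 and 1
```

When two editing maps produce outputs that match the target distribution equally well, the map with the lower cost is the one that keeps each edit closer to its own source.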

Practical Implementation

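Algorithmic Innovations: FlowEdit iterates over a decreasing sequence of timesteps. At each step, it noises the source image, forms a matching noisy target sample by adding the edit accumulated so far, and moves the edited image along the difference between the velocities that the pre-trained model predicts for the target and source prompts. Because this ODE is defined by the source and target prompts of each edit, FlowEdit can balance preserving the source structure against adhering to the target semantics.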
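The iteration just described can be illustrated with a minimal sketch, following our reading of the paper's update. It runs on a hypothetical toy model in which each prompt selects a 2-D Gaussian and the velocity is the exact rectified-flow velocity for that Gaussian, standing in for a pre-trained T2I model. `PROMPTS`, `velocity`, `flow_edit`, `n_max` (number of steps actually run), and `n_avg` (noise draws averaged per step) are illustrative names, not the authors' code.

```python
import numpy as np

# Hypothetical toy "T2I model": each prompt selects a 2-D Gaussian N(mu, STD^2 I).
PROMPTS = {"cat": np.array([-2.0, 0.0]), "dog": np.array([2.0, 1.0])}
STD = 0.5


def velocity(z, t, prompt):
    """Exact rectified-flow velocity E[N - X0 | Z_t = z] for the prompt's Gaussian."""
    mu = PROMPTS[prompt]
    var_t = (1.0 - t) ** 2 * STD**2 + t**2
    gain = (t - (1.0 - t) * STD**2) / var_t
    return -mu + gain * (z - (1.0 - t) * mu)


def flow_edit(x_src, src_prompt, tar_prompt, T=50, n_max=50, n_avg=1, seed=0):
    """Inversion-free edit: integrate the velocity difference starting from x_src."""
    rng = np.random.default_rng(seed)
    ts = np.linspace(0.0, 1.0, T + 1)  # uniform grid, ts[i] = i / T
    z_fe = x_src.copy()  # the edited state starts at the source image, not at noise
    for i in range(n_max, 0, -1):  # the noisiest T - n_max steps are skipped
        t_cur, t_next = ts[i], ts[i - 1]
        v_delta = np.zeros_like(x_src)
        for _ in range(n_avg):
            noise = rng.standard_normal(x_src.shape)
            z_src = (1.0 - t_cur) * x_src + t_cur * noise  # noisy source sample
            z_tar = z_fe + z_src - x_src  # same noise, offset by the current edit
            v_delta += velocity(z_tar, t_cur, tar_prompt) - velocity(z_src, t_cur, src_prompt)
        z_fe = z_fe + (t_next - t_cur) * v_delta / n_avg  # Euler step toward t = 0
    return z_fe


x_src = np.array([-1.5, 0.3])
print(flow_edit(x_src, "cat", "dog"))  # [2.5, 1.3] up to rounding in this toy
```

In this toy, identical source and target prompts return the source unchanged because the velocity difference vanishes. With n_max = T, the edit translates x_src by mu_dog − mu_cat, and a smaller n_max produces a weaker edit. Because the two Gaussians share a variance, the noise cancels exactly and n_avg has no effect here; with a real model, the velocity difference depends on the noise draw, which is what averaging addresses.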

Hyperparameter Sensitivity: FlowEdit is sensitive to the hyperparameters that govern the number of timesteps and the number of noise samples averaged at each step. The results indicate that even small changes in the ODE step sizes can degrade results, so these parameters require careful calibration.
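To make the timestep hyperparameters concrete, the helper below lists the (t_i, t_{i−1}) pairs an edit would visit on a uniform grid t_i = i / T when it starts at step n_max, skipping the noisiest T − n_max steps. It is a hypothetical illustration, not the paper's scheduler: the uniform grid and the names `T`, `n_max`, and `flowedit_timesteps` are assumptions.

```python
import numpy as np


def flowedit_timesteps(T, n_max):
    """Return (t_cur, t_next) pairs for an edit that starts at step n_max of a
    uniform T-step grid t_i = i / T and runs down to t_0 = 0.

    Steps above n_max (the noisiest ones) are never visited, so the edit
    starts at t_max = n_max / T instead of t = 1.
    """
    if not 1 <= n_max <= T:
        raise ValueError("need 1 <= n_max <= T")
    ts = np.linspace(0.0, 1.0, T + 1)
    return [(ts[i], ts[i - 1]) for i in range(n_max, 0, -1)]


pairs = flowedit_timesteps(T=50, n_max=33)
print(len(pairs), pairs[0][0], pairs[-1][1])  # 33 steps, starting near t = 0.66, ending at t = 0.0
```

Changing T alters the step size t_{i−1} − t_i, while changing n_max alters how much of the trajectory the edit traverses. These are two separate knobs, and either could account for the sensitivity described above.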

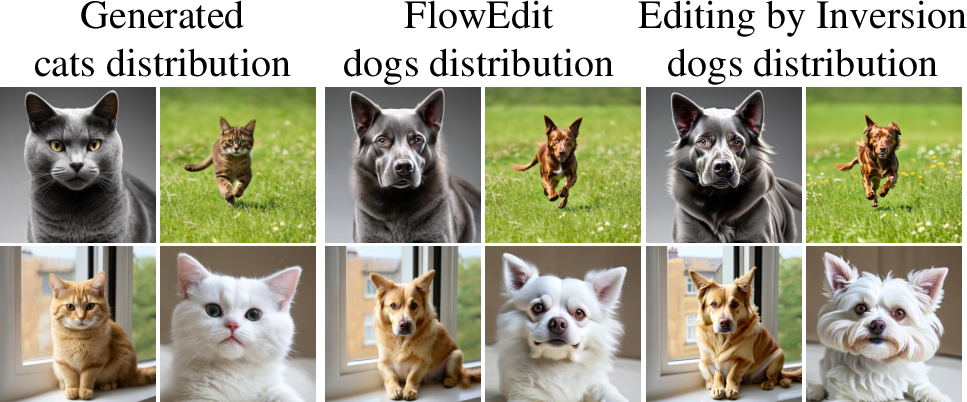

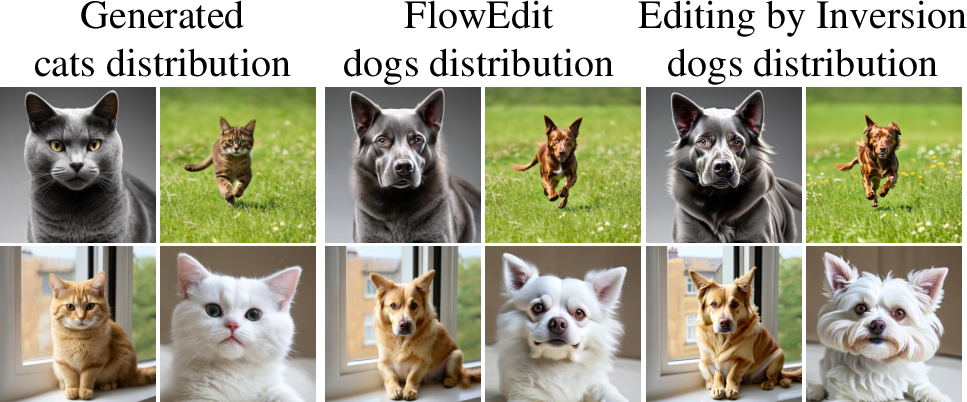

Figure 3: Cats-and-dogs experiment, demonstrating lower transport costs and improved fidelity scores (FID, KID) for FlowEdit.

Results

The empirical evaluation shows that FlowEdit performs well on editing tasks that require fine-grained structural fidelity. On tasks such as converting images of cats into stylistically altered dogs, FlowEdit consistently outperformed inversion-based approaches in both qualitative assessments and FID/KID metrics. FlowEdit's edits preserved more of the original image structure, a benefit attributed to its direct editing path, which does not pass through pure Gaussian noise.
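FID, one of the two metrics cited, is the Fréchet distance between Gaussians fitted to image features of two sets: ‖μ₁ − μ₂‖² + Tr(Σ₁ + Σ₂ − 2(Σ₁Σ₂)^{1/2}). The following is a minimal NumPy sketch of that formula. Standard practice fits the Gaussians to Inception-v3 features, which this sketch does not compute; it assumes the caller supplies feature matrices. The helper names are hypothetical. KID replaces the Gaussian fit with a kernel MMD estimate and is not shown.

```python
import numpy as np


def _sqrtm_psd(a):
    """Square root of a symmetric positive semi-definite matrix via eigh."""
    w, v = np.linalg.eigh(a)
    return (v * np.sqrt(np.clip(w, 0.0, None))) @ v.T


def frechet_distance(mu1, cov1, mu2, cov2):
    """||mu1 - mu2||^2 + Tr(cov1 + cov2 - 2 (cov1 cov2)^{1/2})."""
    mu1, mu2 = np.asarray(mu1, float), np.asarray(mu2, float)
    cov1, cov2 = np.asarray(cov1, float), np.asarray(cov2, float)
    root1 = _sqrtm_psd(cov1)
    # Tr((cov1 cov2)^{1/2}) equals Tr((root1 cov2 root1)^{1/2}), and the latter
    # matrix is symmetric PSD, so eigh applies.
    cross = _sqrtm_psd(root1 @ cov2 @ root1)
    diff = mu1 - mu2
    return float(diff @ diff + np.trace(cov1) + np.trace(cov2) - 2.0 * np.trace(cross))


def fid_from_features(feats1, feats2):
    """FID-style score from two feature matrices of shape (num_images, dim)."""
    f1, f2 = np.asarray(feats1, float), np.asarray(feats2, float)
    return frechet_distance(f1.mean(axis=0), np.cov(f1, rowvar=False),
                            f2.mean(axis=0), np.cov(f2, rowvar=False))


eye = np.eye(2)
print(frechet_distance([0, 0], eye, [3, 0], eye))  # 9.0: only the means differ
```

Because FID compares whole distributions, a lower score means the edited set matches the target statistics more closely. It does not by itself measure how well each edit preserves its own source, which is why transport cost is reported alongside it.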

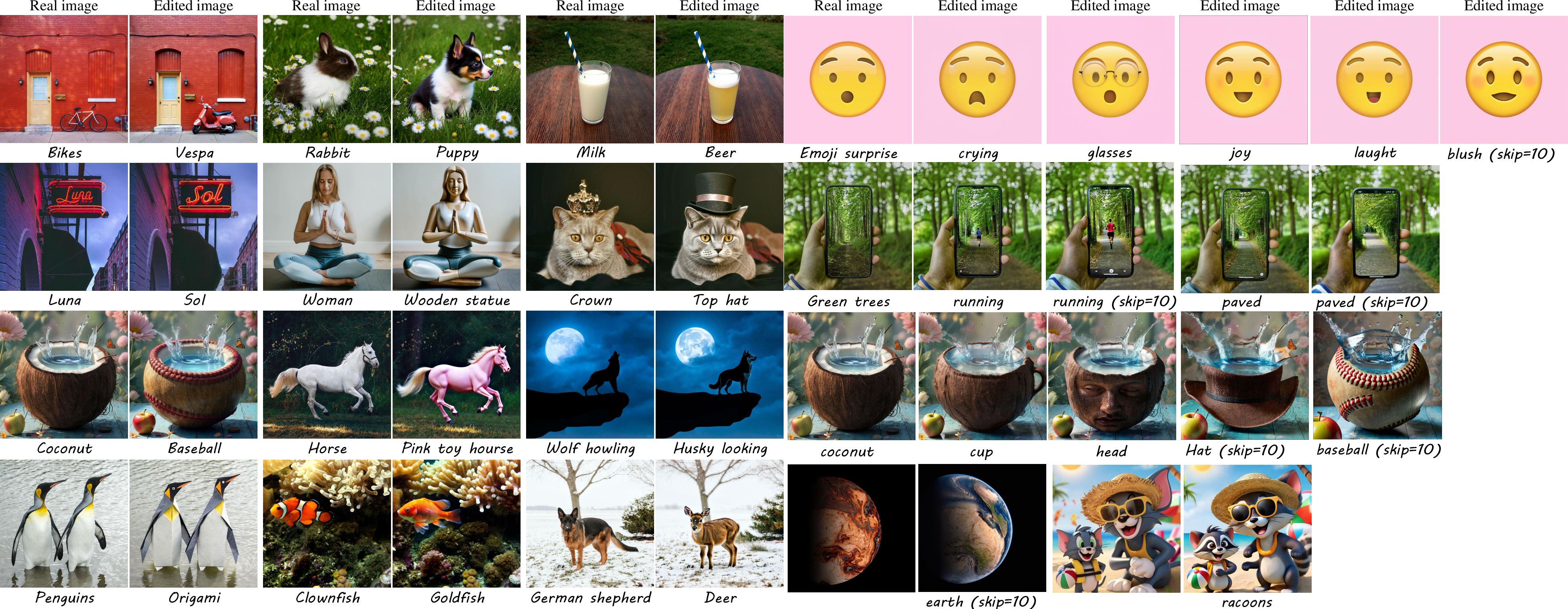

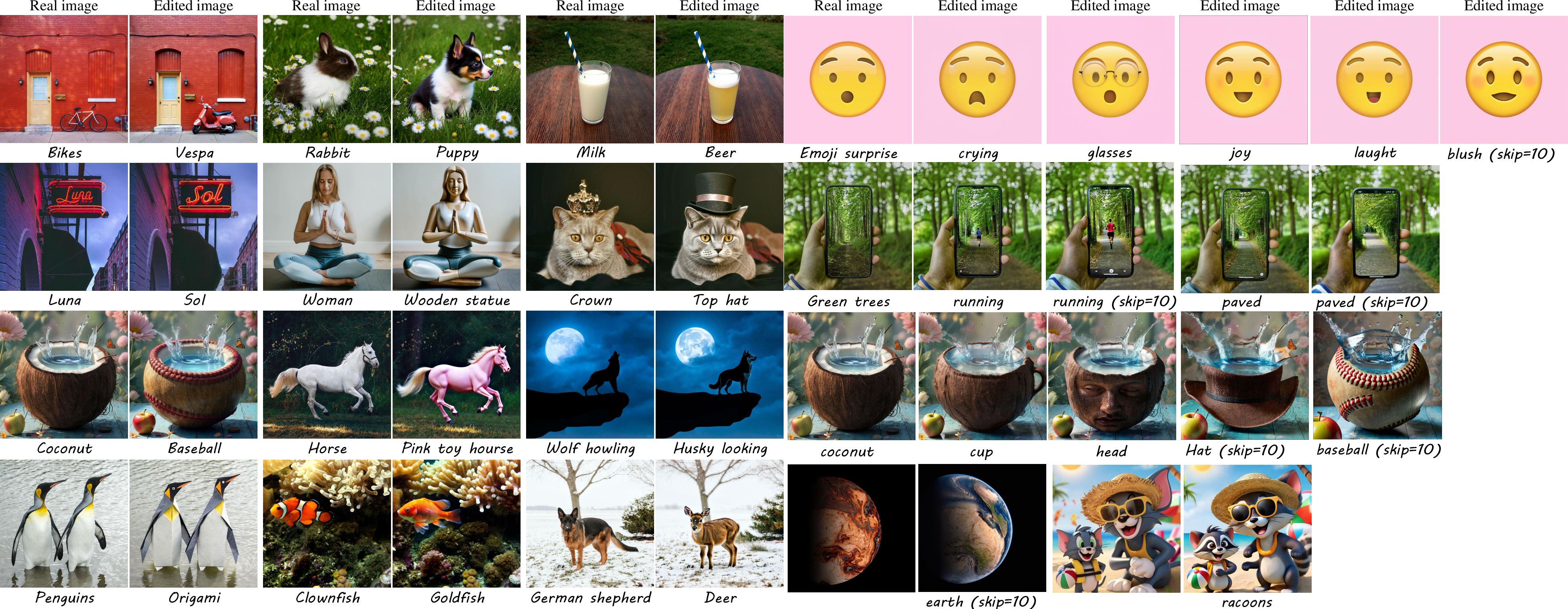

Figure 4: Successful FlowEdit edits with strong structural preservation across diverse editing tasks and datasets.

Conclusion and Future Work

FlowEdit represents an advance in T2I editing, combining independence from the model architecture with improved editing quality. Although it preserves image structure well, limitations remain, particularly for edits that require extensive changes over large image regions. Future research could improve FlowEdit's handling of such global edits and explore applications in video editing and real-time rendering.

The method could have a significant impact in creative industries, where flexible, high-fidelity image editing is essential. Future extensions could further refine the ODE paths and incorporate style elements, enabling richer and more complex edits.