- The paper demonstrates the integration of a human-like AI interviewer using Android ERICA for real-time, multilingual qualitative interviews in an academic setting.

- It leverages advanced speech processing and dialogue management with prosody-based backchannel generation to ensure natural conversational flow.

- The system, evaluated at SIGDIAL 2024 with positive feedback from 69% of participants, offers promising implications for automating qualitative research.

Human-Like Embodied AI Interviewer: Integrating Android ERICA in Academic Settings

Introduction

The development of an AI interviewer capable of conducting nuanced interviews at an international conference is a notable step toward integrating AI into social science research. The paper describes the deployment of a human-like interviewing system embodied in an android, which uses conversational agents to conduct interviews in real time. According to the paper, it is the first system to combine advanced interactional capabilities, such as attentive listening and user-fluency adaptation, with a post-interview data processing and analysis framework, yielding a fully automated end-to-end interviewing solution. The deployment at SIGDIAL 2024, involving 42 participants, demonstrated the system's capability to perform comparably to human interviewers in generating engaging and meaningful dialogues.

Systems and Architecture

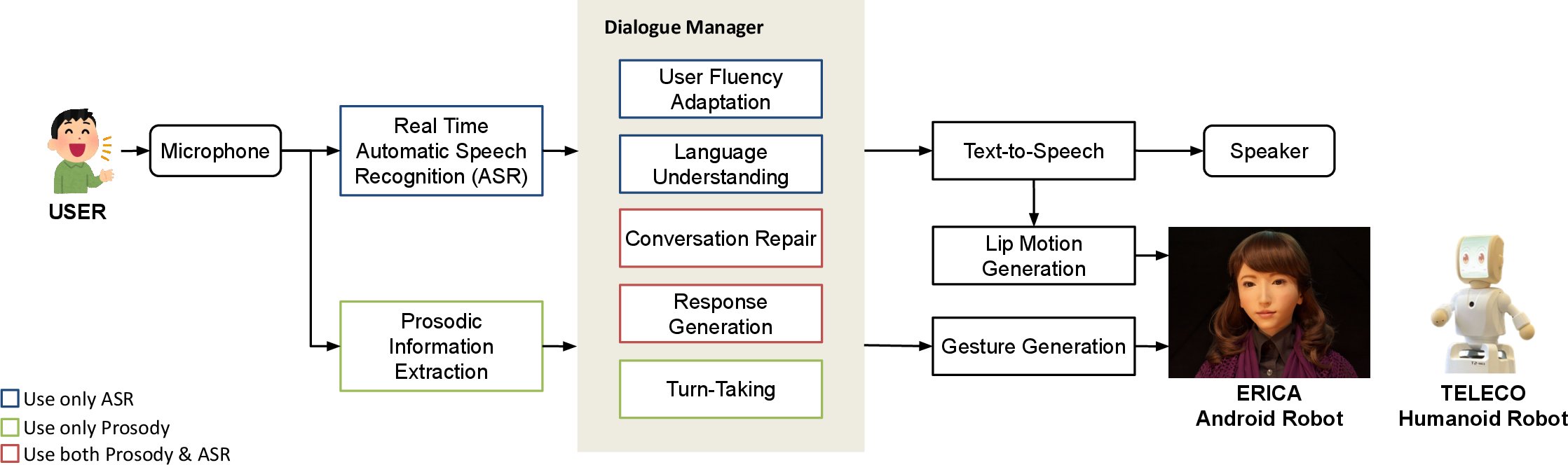

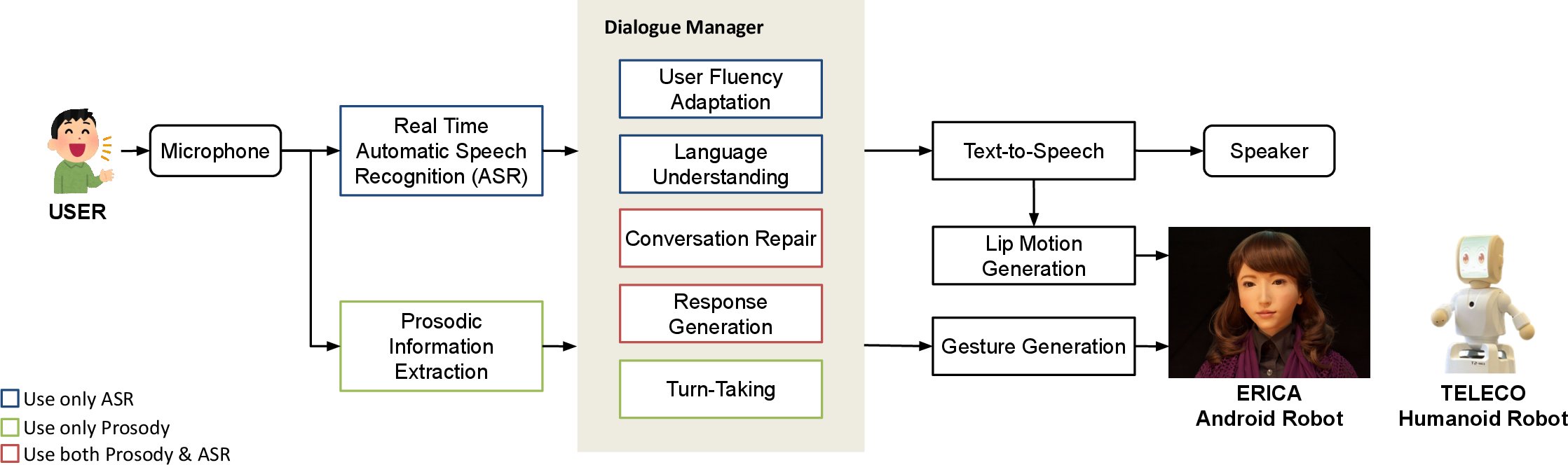

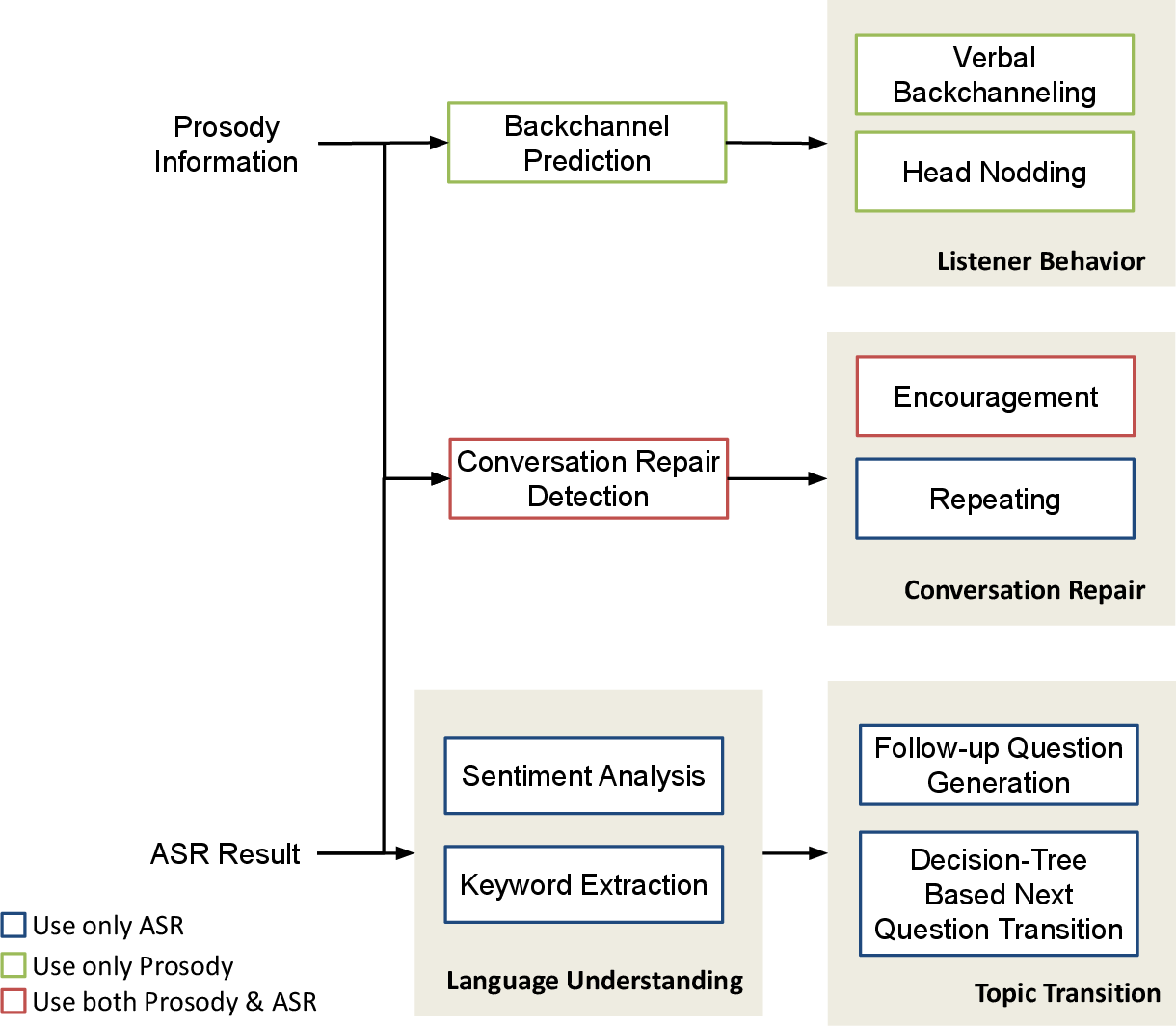

The human-like interview system consists of three main parts: a speech processing module, a dialogue manager, and post-interview processing components. Together they determine the quality of the interaction and how the interview data is handled.

Figure 1: Overall architecture of human-like interview system.

Key functionalities include multilingual ASR, prosody-based backchannel generation, and user fluency adaptation. The integration of LLMs enables context-sensitive dialogue management, ensuring the flow of conversation remains natural and contextually relevant.
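To make the data flow between the three stages concrete, here is a minimal sketch, not the paper's implementation. All names (`AsrResult`, `InterviewLog`, `adapt_to_fluency`, `record_turn`, `summarize`) are hypothetical, and using words per second as a fluency proxy with a fixed threshold is an assumption made only for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AsrResult:
    """Hypothetical output of the speech processing stage."""
    text: str
    language: str             # language tag from multilingual ASR, e.g. "en"
    words_per_second: float   # crude fluency proxy (an assumption of this sketch)


@dataclass
class InterviewLog:
    """Accumulates question/answer pairs for post-interview processing."""
    turns: List[Dict[str, str]] = field(default_factory=list)


def adapt_to_fluency(question: str, simple_question: str, asr: AsrResult,
                     threshold: float = 1.5) -> str:
    """Dialogue-manager stand-in: pick simpler wording for slower speakers."""
    return simple_question if asr.words_per_second < threshold else question


def record_turn(log: InterviewLog, question: str, asr: AsrResult) -> None:
    """Store one exchange so it can be analyzed after the interview."""
    log.turns.append(
        {"question": question, "answer": asr.text, "language": asr.language}
    )


def summarize(log: InterviewLog) -> Dict[str, int]:
    """Post-interview stand-in: count recorded answers per language."""
    counts: Dict[str, int] = {}
    for turn in log.turns:
        counts[turn["language"]] = counts.get(turn["language"], 0) + 1
    return counts
```

In a real system the post-interview stage would do far more than count answers; the sketch only illustrates that every turn passes through speech processing, then the dialogue manager, and is finally logged for later analysis.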

Methodology

Speech Processing

Automatic Speech Recognition (ASR) and the extraction of prosodic features, such as fundamental frequency and power, underpin the system's understanding of the user and its responses. A multilingual turn-taking module based on VAP (Voice Activity Projection) maintains conversational flow across languages, which makes the system well suited to an international conference setting.
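As an illustration of the two prosodic features named above, the following sketch computes frame power and a simple autocorrelation-based estimate of fundamental frequency. This is a textbook method chosen for brevity; the summary does not say which pitch tracker the system uses. The function names and the 75 to 400 Hz search range are assumptions.

```python
import math
from typing import List


def frame_power(frame: List[float]) -> float:
    """Mean squared amplitude of one audio frame."""
    return sum(x * x for x in frame) / len(frame)


def estimate_f0(frame: List[float], sample_rate: int,
                f0_min: float = 75.0, f0_max: float = 400.0) -> float:
    """Estimate F0 as the lag with the highest autocorrelation.

    Only lags corresponding to f0_min..f0_max are searched. The frame
    must be longer than sample_rate / f0_min samples.
    """
    lag_min = int(sample_rate / f0_max)
    lag_max = int(sample_rate / f0_min)
    best_lag, best_score = lag_min, float("-inf")
    for lag in range(lag_min, lag_max + 1):
        score = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag


def sine_frame(freq_hz: float, sample_rate: int, n_samples: int,
               amplitude: float = 0.5) -> List[float]:
    """Synthetic test signal: a pure tone standing in for voiced speech."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n_samples)]
```

For example, `estimate_f0(sine_frame(200.0, 8000, 400), 8000)` recovers a value close to 200 Hz. Real speech is noisier than a pure tone, so a production system would typically add voicing detection and smoothing across frames.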

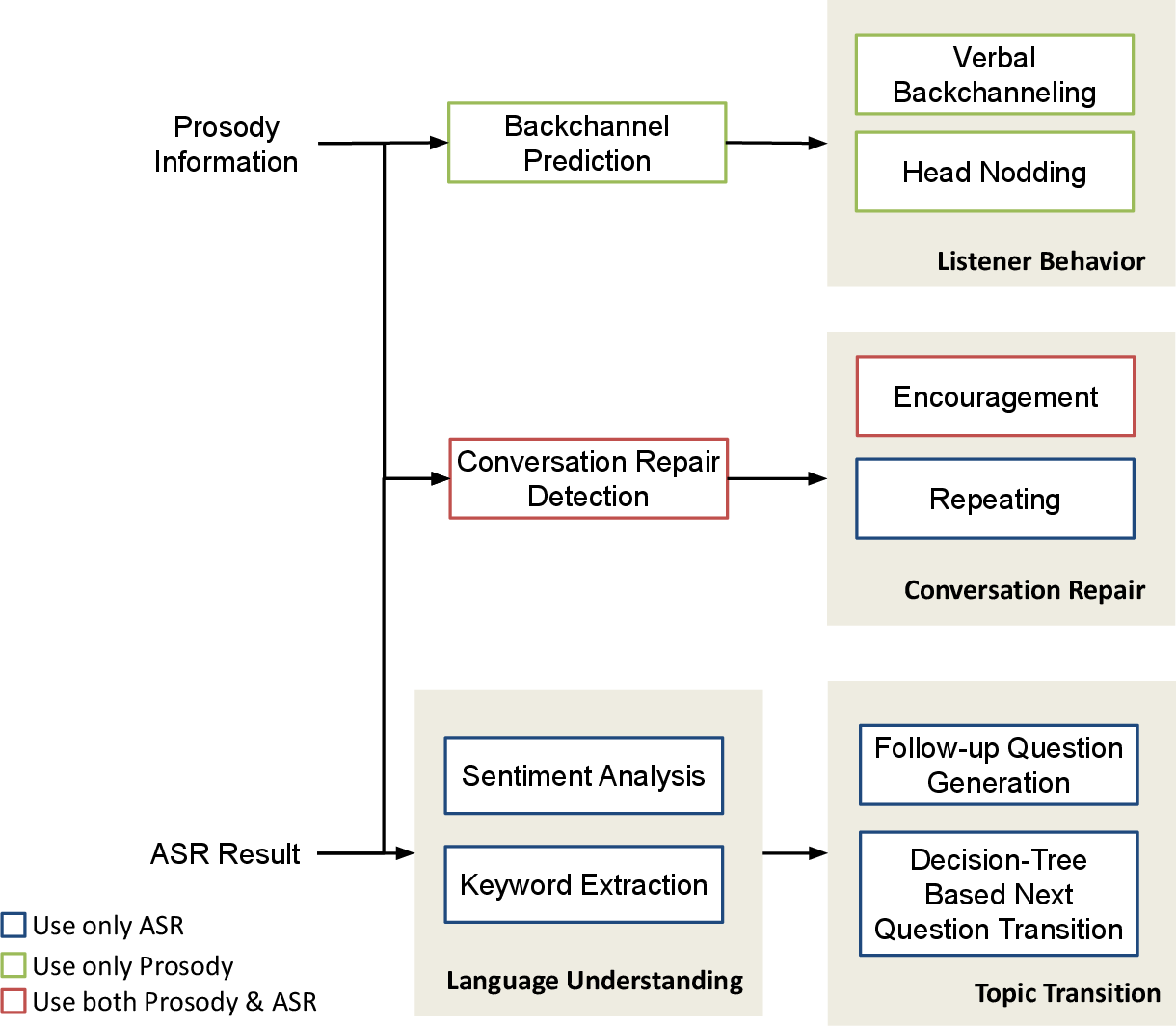

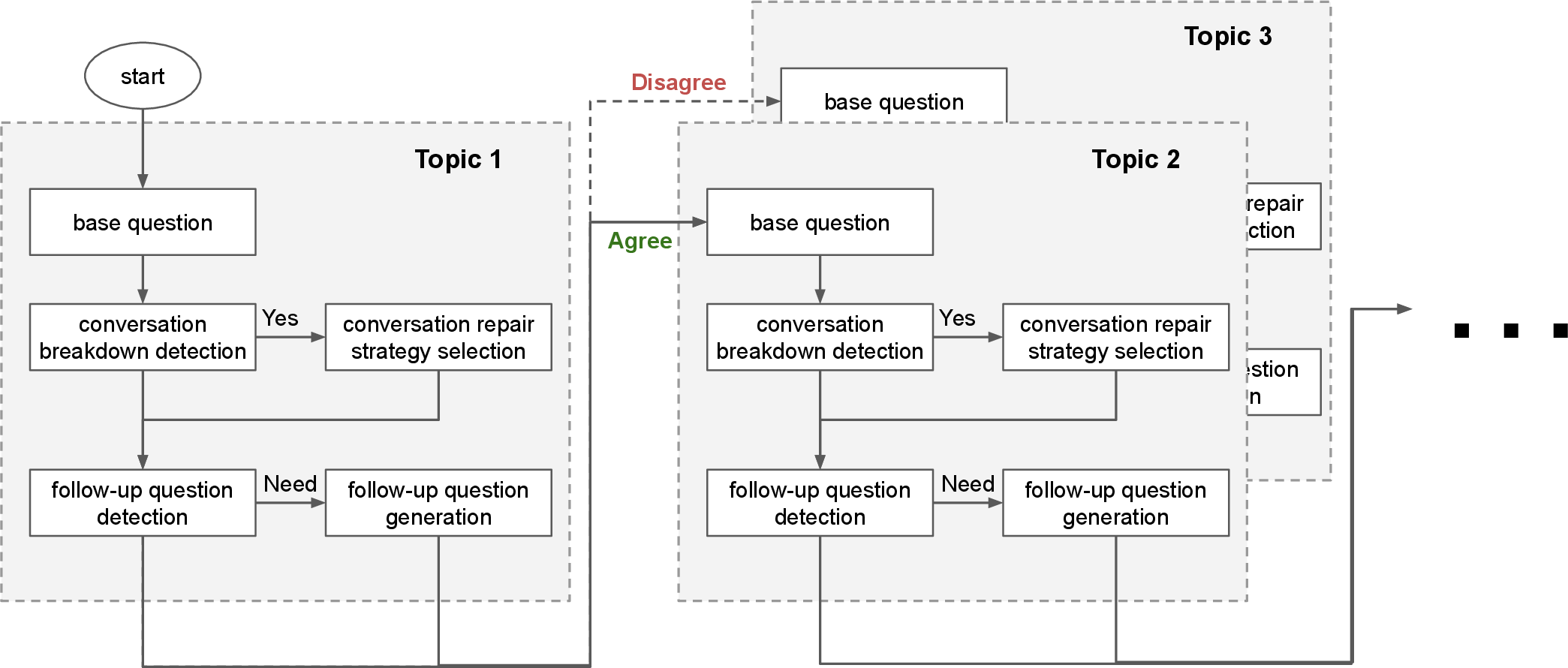

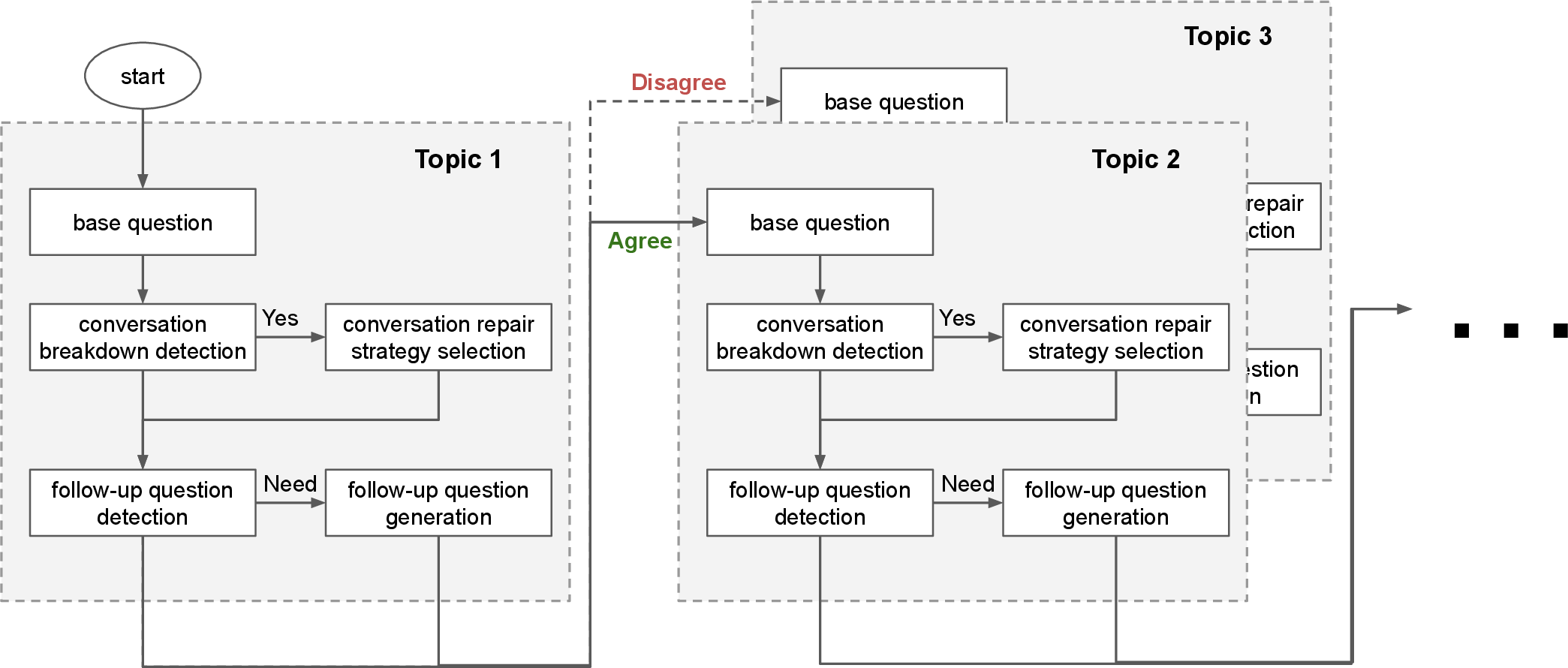

Dialogue Management

At the core of the dialogue system is the Dialogue Manager, which uses language understanding to interpret user input and to predict when backchannels should be produced. Conversation repair modules, combined with prosody-based turn-taking, let the system recover from conversational breakdowns and keep the interaction smooth.

Figure 2: Overall architecture of interview system response generation.
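The interplay of backchannels, turn-taking, and repair described in this section can be sketched as a small decision rule. This is a hand-written stand-in under stated assumptions, not the system's actual predictor, which the summary describes as prosody-based and model-driven. The `FrameFeatures` fields, the action names, and all thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FrameFeatures:
    """Hypothetical per-moment features available to the dialogue manager."""
    pause_ms: float        # silence since the user's last speech
    f0_slope: float        # pitch trend in Hz/s; negative means falling
    asr_confidence: float  # 0..1 score for the latest recognized utterance


def choose_action(feat: FrameFeatures,
                  backchannel_pause_ms: float = 200.0,
                  turn_end_pause_ms: float = 700.0,
                  min_confidence: float = 0.4) -> str:
    """Return one of "wait", "backchannel", "take_turn", or "repair"."""
    if feat.pause_ms >= turn_end_pause_ms:
        # The user appears to have finished. If the utterance was poorly
        # recognized, ask for clarification instead of moving on.
        if feat.asr_confidence < min_confidence:
            return "repair"
        return "take_turn"
    if feat.pause_ms >= backchannel_pause_ms and feat.f0_slope < 0:
        # Short pause with falling pitch: signal attentive listening.
        return "backchannel"
    return "wait"
```

A learned model would replace the fixed thresholds with probabilities estimated from the audio, but the four outcomes capture the roles the section assigns to the dialogue manager: keep listening, acknowledge, respond, or repair.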

Implementation in Real-World Settings

The deployment at SIGDIAL 2024 allowed the system to be evaluated in a real academic setting. Two robots, ERICA and TELECO, served as conversational agents. Their human-like features were designed to make interactions with conference participants more engaging and realistic, and 69% of participants gave positive feedback.

Results and Analysis

The case study indicated that the system gave participants an interactive and memorable experience, as reflected in their positive feedback. Two limitations stood out: the template-based questions became repetitive, and the synthesized backchannels lacked natural intonation. Both point to the need for further refinement toward human-like interaction.

Figure 3: Photo of interview dialogue with ERICA by SIGDIAL participant.

Conclusion

This implementation of a human-like embodied AI interviewer demonstrates a promising approach to automating qualitative interviews in academic settings. By integrating advanced conversational capabilities with comprehensive data processing and analysis, such systems can significantly reduce the resources needed to conduct qualitative interviews. Future work will focus on adaptive question generation and on refining backchannel synthesis to narrow the gap to human-level interaction.

Limitations and Future Work

Despite the successful deployment and positive initial feedback, the sample of 42 participants limits how far the results can be generalized. The reliance on fixed question templates also calls for further experimentation with dynamic LLM-driven question generation that maintains engagement without sacrificing analytic stability. Finally, the current design handles only verbal interaction, so incorporating multimodal inputs is needed to improve context awareness and user experience.

Pursuing these improvements should make the system easier to deploy at international conferences and could change how qualitative research is conducted and analyzed.