- The paper introduces a novel multimodal approach that combines chest X-rays, EHRs, and ECG data for noninvasive T2DM screening.

- The joint fusion ResNet-LSTM model achieved a 2.3% AUROC improvement over the CXR-only baseline.

- The study underscores the potential of integrated deep learning models to facilitate early T2DM detection in resource-limited clinical settings.

Deep Learning-Based Noninvasive Screening of Type 2 Diabetes with Chest X-ray Images and Electronic Health Records

This paper presents a novel approach for noninvasive screening of Type 2 Diabetes Mellitus (T2DM) that applies deep learning to Chest X-ray (CXR) images and Electronic Health Records (EHRs). It addresses the limitations of conventional machine learning (ML) approaches, which require extensive feature engineering and are confined to a single data modality. The research explores two multimodal deep learning architectures, a multimodal transformer and a joint fusion ResNet-LSTM, and shows that integrating clinical data can improve T2DM prediction.

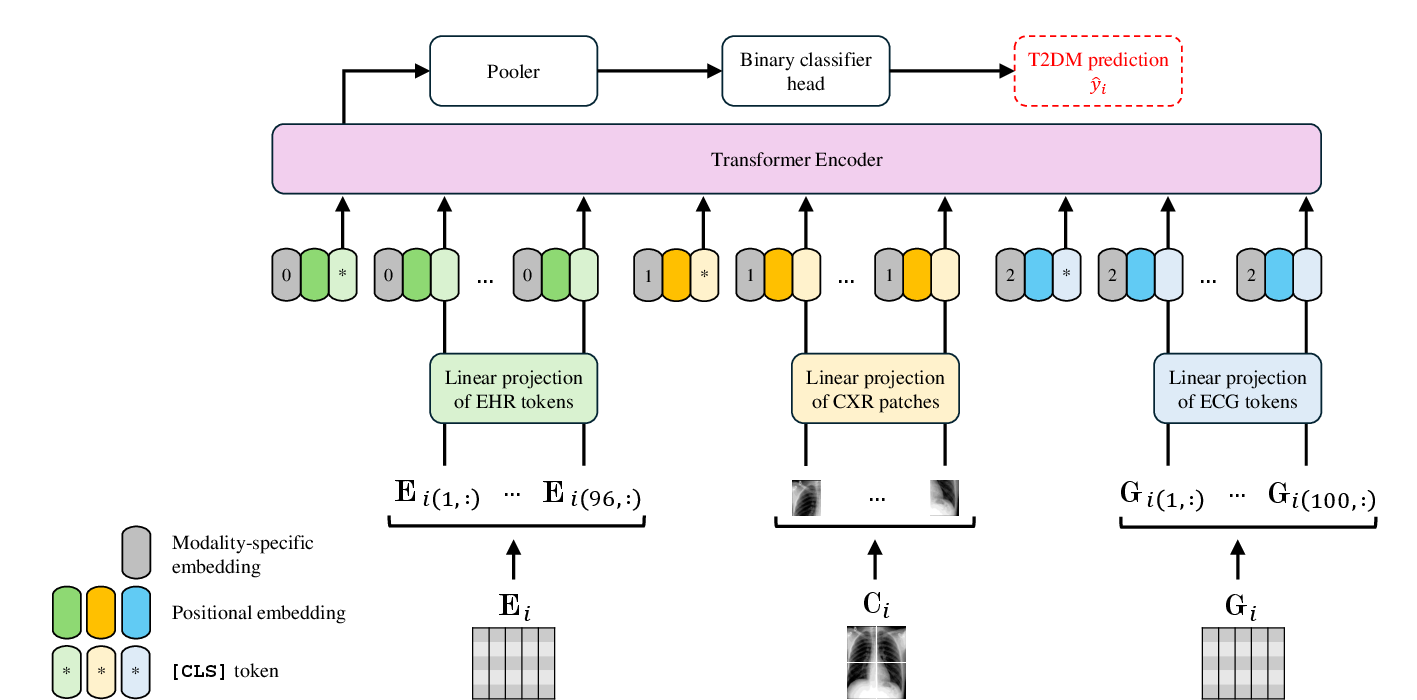

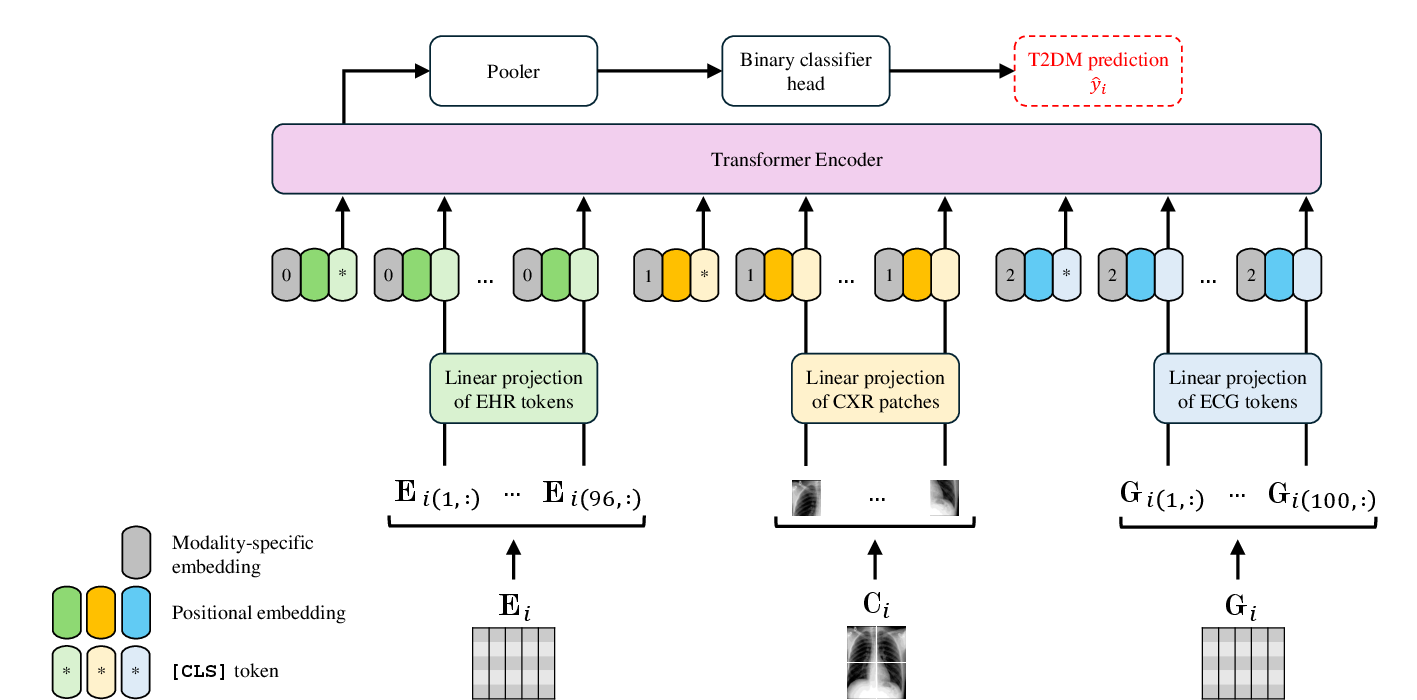

Figure 1: ViLT model architecture with the $\mathbf{D}_{\boldsymbol{E+C+G}}$ dataset.

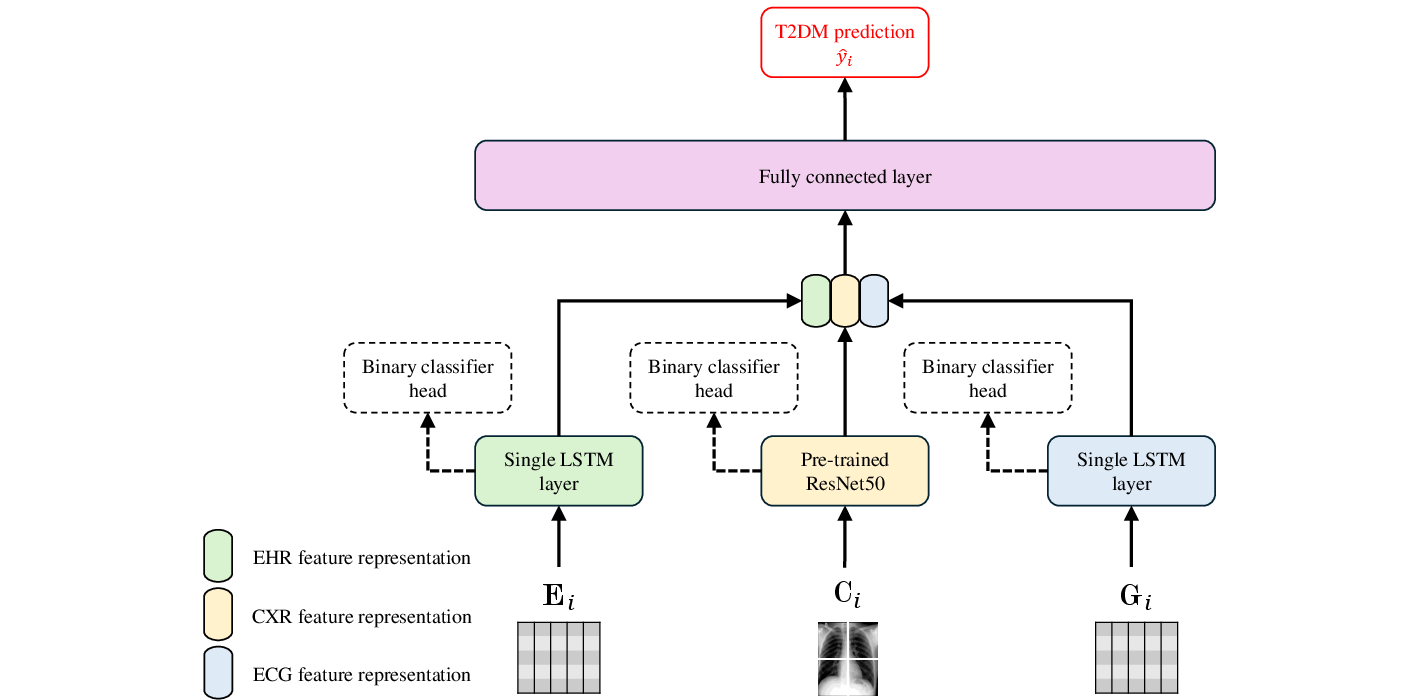

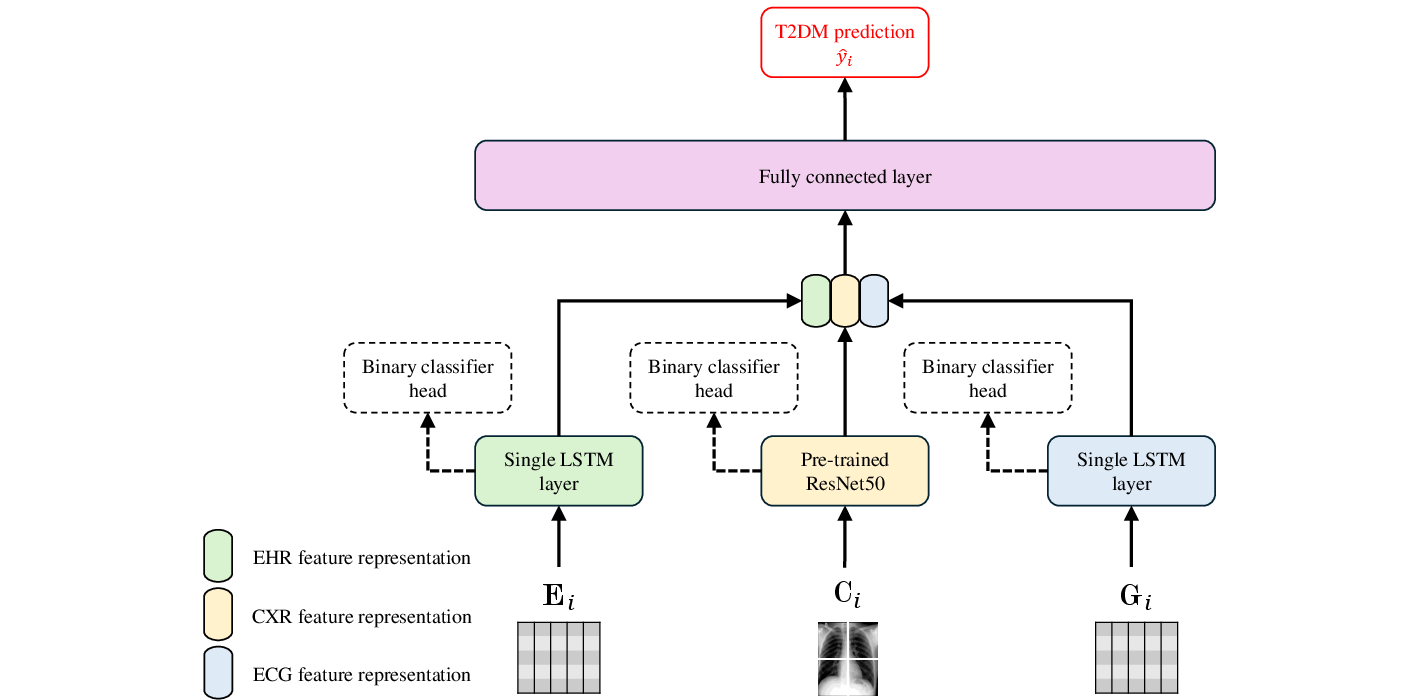

Figure 2: ResNet-LSTM model architecture with the $\mathbf{D}_{\boldsymbol{E+C+G}}$ dataset.

Introduction to Multimodal Approaches

The study begins with a discussion of T2DM's asymptomatic onset, which complicates early detection and often delays intervention. T2DM prevalence continues to rise, underscoring the need for reliable early screening tools. Conventional screening relies largely on blood tests, which are invasive; this imposes practical limitations and can delay detection. This paper proposes combining widely available CXR images with EHRs to build a more comprehensive, noninvasive diagnostic framework.

Methods and Dataset Preparation

The research uses data from the MIMIC-IV databases, linking CXR images with EHR data and electrocardiography (ECG) signals. Two architectures were evaluated:

- Multimodal Transformer (ViLT): Uses a vision transformer without convolution or region supervision, with an early fusion strategy that integrates EHR, CXR, and ECG data.

- Joint Fusion ResNet-LSTM: Uses a modular structure that combines a residual network (ResNet) to encode CXRs with LSTMs to encode EHR and ECG time-series data.
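The difference between the two fusion strategies can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: embeddings are toy lists of floats, the modality-type ids (`EHR`, `CXR`, `ECG`) are hypothetical stand-ins for the modality-type embeddings a ViLT-style model adds to its tokens, and `mean_pool` is a hypothetical placeholder for the real ResNet and LSTM encoders.

```python
from typing import List, Sequence, Tuple

Vector = List[float]

# Hypothetical modality-type ids, standing in for ViLT-style modality-type embeddings.
EHR, CXR, ECG = 0, 1, 2


def early_fusion_sequence(
    ehr_tokens: Sequence[Vector],
    cxr_patches: Sequence[Vector],
    ecg_tokens: Sequence[Vector],
) -> List[Tuple[int, Vector]]:
    """Early fusion: merge all modalities into ONE tagged token sequence.

    A single shared transformer would then attend across every token,
    so cross-modal interactions can be learned from the first layer on.
    """
    sequence: List[Tuple[int, Vector]] = []
    groups = ((EHR, ehr_tokens), (CXR, cxr_patches), (ECG, ecg_tokens))
    for modality, tokens in groups:
        sequence.extend((modality, list(tok)) for tok in tokens)
    return sequence


def mean_pool(tokens: Sequence[Vector]) -> Vector:
    """Hypothetical stand-in for a modality-specific encoder (ResNet or LSTM)."""
    if not tokens:
        raise ValueError("encoder input must contain at least one token")
    dim = len(tokens[0])
    return [sum(tok[i] for tok in tokens) / len(tokens) for i in range(dim)]


def joint_fusion_embedding(
    ehr_tokens: Sequence[Vector],
    cxr_patches: Sequence[Vector],
    ecg_tokens: Sequence[Vector],
) -> Vector:
    """Joint fusion: encode each modality separately, then concatenate.

    Each encoder is its own module; the fused vector would feed a
    classification head trained end to end together with the encoders.
    """
    return mean_pool(ehr_tokens) + mean_pool(cxr_patches) + mean_pool(ecg_tokens)


if __name__ == "__main__":
    ehr = [[1.0, 0.0], [0.0, 1.0]]
    cxr = [[2.0, 2.0]]
    ecg = [[0.5, 0.5], [1.5, 1.5]]
    print(len(early_fusion_sequence(ehr, cxr, ecg)))  # 5
    print(joint_fusion_embedding(ehr, cxr, ecg))  # [0.5, 0.5, 2.0, 2.0, 1.0, 1.0]
```

Early fusion hands the shared transformer every token at once, while joint fusion keeps one encoder per modality and merges only their outputs; that separation is what makes the joint fusion encoders modular.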

Both architectures were optimized for AUROC, and the ResNet-LSTM model achieved a 2.3% AUROC improvement over the CXR-only baseline.

Experimental Results

The ResNet-LSTM model performed best, with an AUROC of 0.8616 on the dataset combining EHR, CXR, and ECG data and 0.8592 on the dataset without ECG. These results highlight the benefit of integrating multiple modalities, although adding ECG yielded only a marginal gain (0.0024 AUROC). Overall, combining multimodal data improved the diagnostic performance of T2DM detection.
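AUROC, the metric behind these comparisons, is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. The sketch below computes it by pairwise comparison (the Mann-Whitney U formulation), which is exact but quadratic in the number of samples; in practice a library routine such as scikit-learn's `roc_auc_score` would be used instead. The toy labels and scores are illustrative and are not data from the study; the last line only reproduces the gap between the two reported ResNet-LSTM AUROCs.

```python
from typing import Sequence


def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUROC as P(score of a random positive > score of a random negative).

    Ties count as half a win (Mann-Whitney U). O(P * N) pairwise loop,
    fine for illustration but too slow for large evaluation sets.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    # Toy example, not data from the study.
    print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75

    # Gap between the reported ResNet-LSTM AUROCs with and without ECG.
    print(round(0.8616 - 0.8592, 4))  # 0.0024
```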

Ablation Studies

Ablation studies revealed several key insights:

- Pre-training Benefits: Pre-training the ViT substantially improved performance, demonstrating the efficacy of transfer learning.

- Robustness to Noise: The ViLT model was more robust to noisy inputs than the joint fusion models, likely because its attention-based architecture can draw on global information.

- Handling Missing Modalities: Joint fusion models handled missing CXR data better, owing to their modular encoders, which highlights the resilience of such architectures.
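The source does not say how the joint fusion models treat a missing CXR, so the sketch below assumes one common pattern and uses hypothetical helper names. When the image is absent, the CXR encoder is skipped, a zero vector of the expected width takes its place, and a presence flag is appended for the classification head. A Gaussian-noise helper is included for a robustness check of the kind described above; the noise model is likewise an assumption, since the source does not specify how inputs were corrupted.

```python
import random
from typing import List, Optional, Sequence

Vector = List[float]


def fuse_with_missing(
    ehr_emb: Vector,
    cxr_emb: Optional[Vector],
    cxr_dim: int,
) -> Vector:
    """Hypothetical joint fusion step that tolerates a missing CXR.

    Because each encoder is its own module, a missing image simply skips the
    CXR encoder: a zero vector of the expected width stands in for its output,
    and a trailing 0/1 flag tells the head whether the CXR was present.
    """
    if cxr_emb is None:
        return list(ehr_emb) + [0.0] * cxr_dim + [0.0]
    if len(cxr_emb) != cxr_dim:
        raise ValueError(f"expected a CXR embedding of width {cxr_dim}")
    return list(ehr_emb) + list(cxr_emb) + [1.0]


def add_gaussian_noise(x: Sequence[float], sigma: float, seed: int = 0) -> Vector:
    """Perturb an input with i.i.d. Gaussian noise (assumed noise model)."""
    rng = random.Random(seed)  # seeded so perturbations are reproducible
    return [v + rng.gauss(0.0, sigma) for v in x]


if __name__ == "__main__":
    print(fuse_with_missing([0.2, 0.7], None, 3))
    # [0.2, 0.7, 0.0, 0.0, 0.0, 0.0]
    print(fuse_with_missing([0.2, 0.7], [1.0, 2.0, 3.0], 3))
    # [0.2, 0.7, 1.0, 2.0, 3.0, 1.0]
    print(add_gaussian_noise([1.0, 2.0, 3.0], sigma=0.1, seed=42))
```

A robustness comparison would then score clean and perturbed inputs with each model and compare the resulting AUROC values.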

Conclusion

This study demonstrates that integrating CXRs and EHRs can improve the sensitivity and specificity of T2DM detection, even with a limited number of samples. The approach has potential implications for clinical practice, especially in resource-limited environments. Future work should validate these models on external datasets and explore alternative fusion architectures to fully leverage multimodal data.

This research marks a significant step toward practical, noninvasive screening tools for T2DM, paving the way for more personalized and early interventions in diabetes care.