- The paper introduces a two-stage training method using video diffusion models to automate the 2D animation colorization process.

- The approach overcomes challenges such as pose variations and binarization by using explicit color correspondence and background augmentation.

- Results show improved visual fidelity and temporal coherence, with quantitative gains in metrics like PSNR, SSIM, and LPIPS.

AniDoc: Animation Creation Made Easier

The paper "AniDoc: Animation Creation Made Easier" presents an approach to automating the colorization stage of 2D animation production. The authors propose a method based on video diffusion models that colorizes line art sketches from a reference character design and is intended to fit into existing animation workflows. By reducing the manual steps in the coloring process, the approach aims to lower labor costs in animation production.

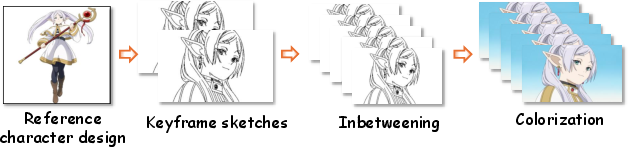

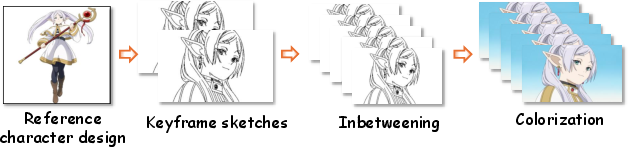

Animation Production Workflow Overview

The 2D animation production workflow typically involves several stages: character design, keyframe animation, in-betweening, and coloring. The proposed method focuses on automating the coloring stage while maintaining temporal coherence and fidelity to character designs.

Figure 1: Illustration of the workflow of 2D animation production.

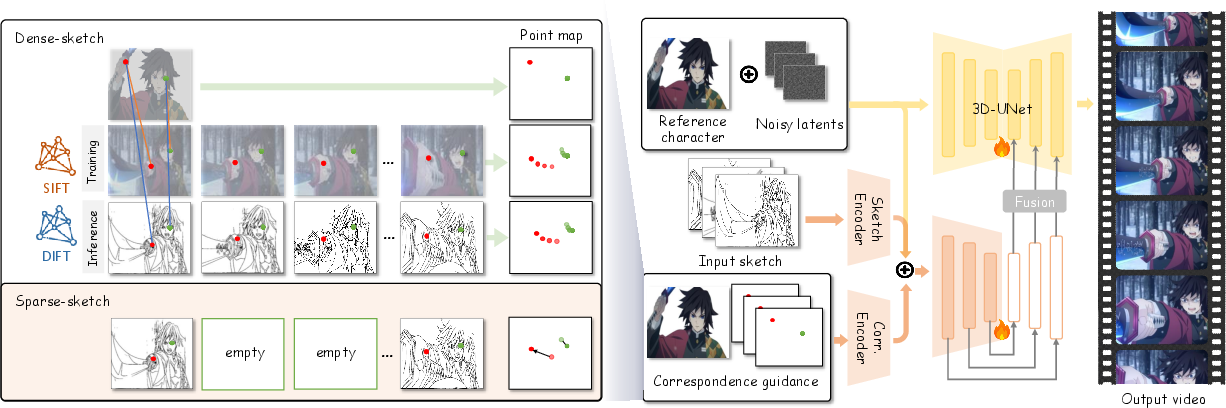

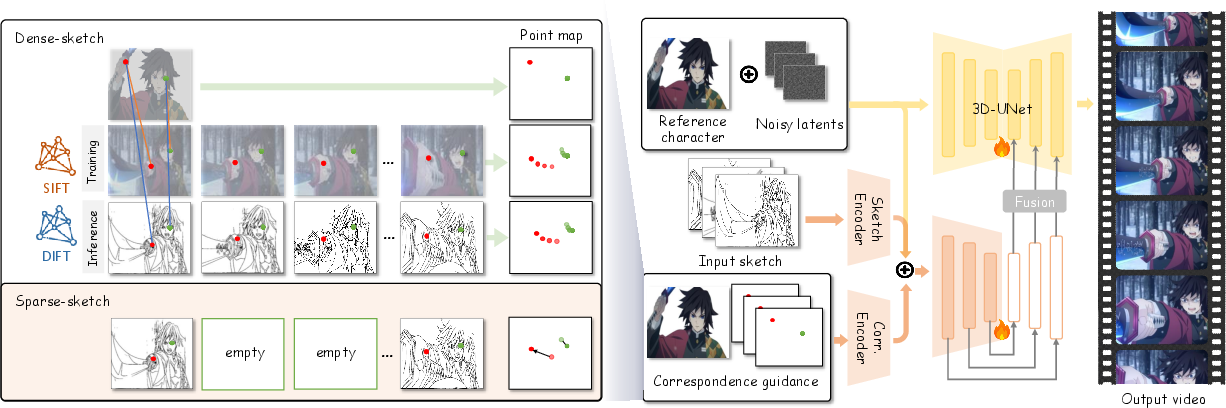

Method Overview

The authors introduce a two-stage training process that leverages video diffusion models to tackle colorization challenges in animation. The first stage is dense-sketch training, which establishes correspondences between the reference image and the sketch frames. Keypoints are extracted and matched, then rendered as point maps that guide the model to carry colors from the reference image consistently through the animation.
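To make the idea of a point map concrete, here is a minimal sketch in Python with NumPy. It assumes the keypoints have already been matched by some external matcher, and it uses an integer label per keypoint as the encoding; the function name `build_point_map`, the label encoding, and the example coordinates are all hypothetical and are not taken from the paper.

```python
import numpy as np

def build_point_map(keypoints, height, width):
    """Rasterize (x, y) keypoints into an integer map.

    Pixel value k marks the k-th keypoint (1-based); 0 means "no keypoint".
    This encoding is an assumption made for illustration only.
    """
    point_map = np.zeros((height, width), dtype=np.int32)
    for idx, (x, y) in enumerate(keypoints, start=1):
        col, row = int(round(x)), int(round(y))
        # Silently drop keypoints that fall outside the frame.
        if 0 <= row < height and 0 <= col < width:
            point_map[row, col] = idx
    return point_map

# Hypothetical matched keypoints: entry k in both lists refers to the same
# character part, so label k appears in both maps at different positions.
ref_points = [(2, 1), (5, 4)]
sketch_points = [(3, 2), (6, 6)]
ref_map = build_point_map(ref_points, height=8, width=8)
sketch_map = build_point_map(sketch_points, height=8, width=8)
```

Because the same label appears in both maps, a model conditioned on the pair can in principle learn which region of the reference corresponds to which region of the sketch, even when pose or scale differs.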

Figure 2: Overview of pipeline. We adopt a two-stage training strategy...

Key Challenges Addressed

- Mismatch Between Reference and Sketches: The method uses an explicit correspondence matching mechanism to align colors from reference images to sketch frames, overcoming differences in pose, angle, and scale.

- Binarization and Background Augmentation: To simulate production conditions, sketches are binarized, which removes any residual color information the sketches might otherwise carry. The model is also trained with background augmentation, which improves robustness and helps it distinguish the character from the background.

- Sparse Sketch Training: The second stage of training removes the intermediate sketches and relies instead on matching points and the start and end frames to interpolate smooth transitions, so only a small number of sketches is needed.
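The three data-preparation ideas in the list above can be sketched roughly as follows. This is an illustrative Python and NumPy sketch under stated assumptions, not the authors' implementation: the threshold value, the choice to implement background augmentation as random removal of background strokes, the use of blank white frames for dropped sketches, and all function names are hypothetical.

```python
import numpy as np

def binarize_sketch(sketch, threshold=0.5):
    """Map a grayscale sketch in [0, 1] to black lines (0.0) on white (1.0)."""
    return np.where(sketch < threshold, 0.0, 1.0).astype(np.float32)

def augment_background(sketch, foreground_mask, rng, remove_prob=0.5):
    """With probability remove_prob, erase strokes outside the character mask.

    Assumption: augmentation is modeled here as random background removal,
    so the model sees the same character with and without a background.
    """
    if rng.random() < remove_prob:
        return np.where(foreground_mask, sketch, 1.0).astype(np.float32)
    return sketch

def sparsify_sketches(sketches):
    """Keep the first and last sketch frames; blank out the intermediates.

    Assumption: a dropped sketch is represented by an all-white frame.
    """
    sparse = np.ones_like(sketches)
    sparse[0], sparse[-1] = sketches[0], sketches[-1]
    return sparse

# Toy usage on a 4-frame clip of 2x2 sketches.
rng = np.random.default_rng(0)
clip = rng.random((4, 2, 2))
binary_clip = binarize_sketch(clip)
sparse_clip = sparsify_sketches(binary_clip)
```

In a real pipeline the foreground mask would have to come from a segmentation step or from annotated data, which the summary above does not describe.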

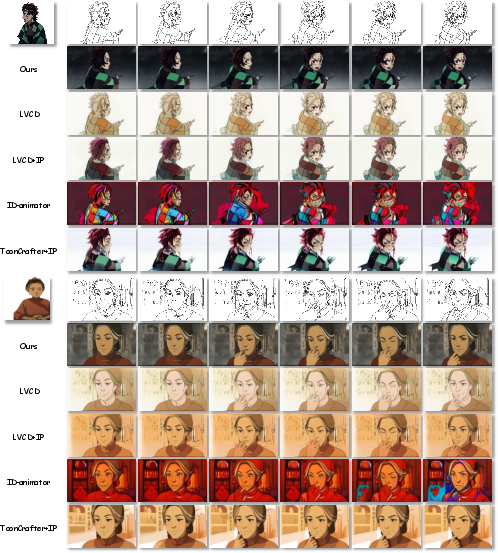

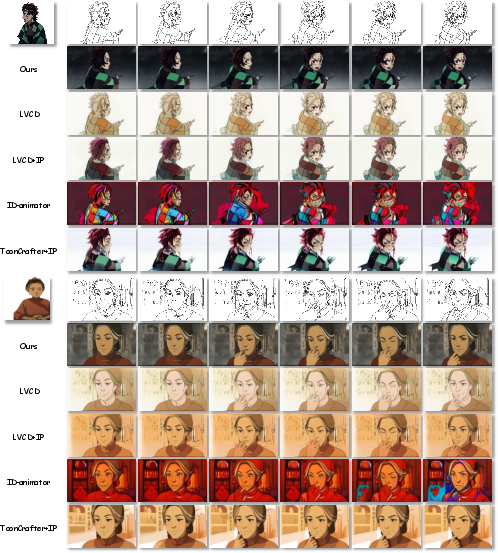

Results

Qualitative results show higher visual fidelity and temporal coherence, with fewer artifacts, than previous methods. Quantitatively, PSNR, SSIM, and LPIPS measure colorization accuracy against the ground truth (higher is better for PSNR and SSIM, lower for LPIPS), while FID and FVD measure generation quality (lower is better). The paper reports improvements on all of these metrics.
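As a reference point for how the simplest of these metrics is computed, PSNR is defined as 10 · log10(MAX² / MSE), where MSE is the mean squared error between the ground-truth and generated frames. The snippet below is a minimal NumPy sketch of that standard definition; the function name and the assumption that pixel values lie in [0, 1] are illustrative and do not reflect the paper's evaluation code.

```python
import numpy as np

def psnr(reference, generated, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two same-shaped images."""
    diff = reference.astype(np.float64) - generated.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        # Identical images: PSNR is unbounded.
        return float("inf")
    return float(10.0 * np.log10(max_value ** 2 / mse))

# Toy usage: a uniform error of 0.1 on a [0, 1] image gives MSE = 0.01.
ground_truth = np.zeros((4, 4))
prediction = np.full((4, 4), 0.1)
score = psnr(ground_truth, prediction)
```

SSIM, LPIPS, FID, and FVD require windowed statistics or pretrained networks, so they are usually taken from an existing library rather than written by hand.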

Figure 3: Visual comparison of reference-based colorization with four methods.

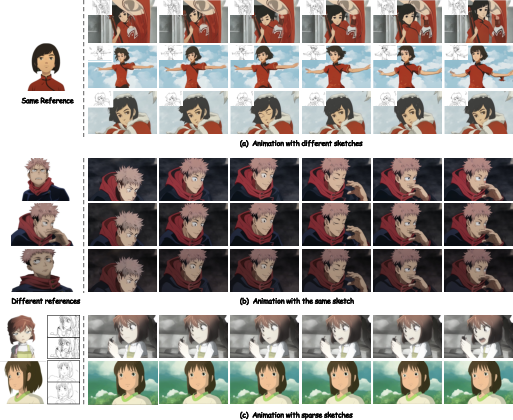

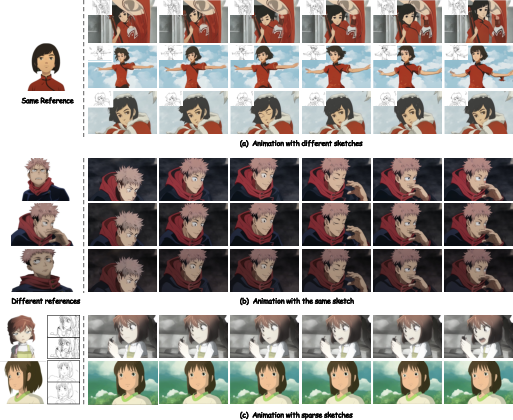

Flexibility and Robustness

The method is robust to varying animation styles and scales, which makes it applicable across diverse scenarios. Using a single reference image, it can colorize character sequences whose poses and scales differ significantly from the reference.

Figure 4: Illustration of the flexible usage of our model...

Conclusion

AniDoc represents a step forward in automating animation workflows, particularly in reference-based video colorization. Limitations remain, such as uncertain colors for objects that do not appear in the reference image and for variations in a character's attire. Future work may focus on enriching the model's contextual understanding and broadening its applicability to more complex animation scenarios.