An Empirical Study of Accuracy-Robustness Tradeoff and Training Efficiency in Self-Supervised Learning

Abstract: Self-supervised learning (SSL) has significantly advanced image representation learning, yet efficiency challenges persist, particularly with adversarial training. Many SSL methods require extensive epochs to achieve convergence, a demand further amplified in adversarial settings. To address this inefficiency, we revisit the robust EMP-SSL framework, emphasizing the importance of increasing the number of crops per image to accelerate learning. Unlike traditional contrastive learning, robust EMP-SSL leverages multi-crop sampling, integrates an invariance term and regularization, and reduces training epochs, enhancing time efficiency. Evaluated with both standard linear classifiers and multi-patch embedding aggregation, robust EMP-SSL provides new insights into SSL evaluation strategies. Our results show that robust crop-based EMP-SSL not only accelerates convergence but also achieves a superior balance between clean accuracy and adversarial robustness, outperforming multi-crop embedding aggregation. Additionally, we extend this approach with free adversarial training in Multi-Crop SSL, introducing the Cost-Free Adversarial Multi-Crop Self-Supervised Learning (CF-AMC-SSL) method. CF-AMC-SSL demonstrates the effectiveness of free adversarial training in reducing training time while simultaneously improving clean accuracy and adversarial robustness. These findings underscore the potential of CF-AMC-SSL for practical SSL applications. Our code is publicly available at https://github.com/softsys4ai/CF-AMC-SSL.

Explain it Like I'm 14

Overview

This paper looks at how to train computer vision models faster and make them more resistant to “trick” inputs, called adversarial attacks. It focuses on self-supervised learning (SSL), a way for models to learn from images without needing labels. The authors study different training strategies and introduce a new, faster method called CF-AMC-SSL that aims to keep good normal accuracy while being robust to attacks, all in much less training time.

Key Objectives

The paper asks simple, practical questions:

- Can we train models in fewer passes over the data (epochs) by showing them more views of each image (many “crops” or “patches”)?

- Does using many different crops of an image help the model be both accurate on regular images and strong against adversarial attacks?

- Is it better to use multi-scale crops (different sizes) or fixed-size patches when training for robustness?

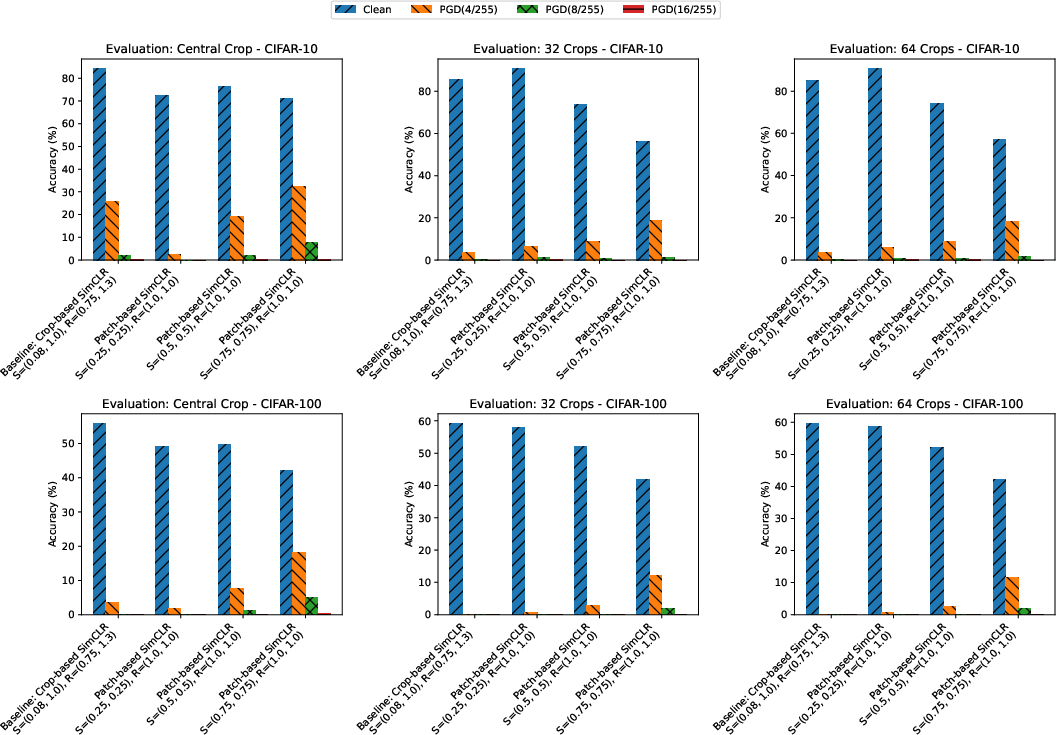

- How should we test these models: using one simple view (a central crop) or by averaging many crop embeddings?

- Can a faster kind of adversarial training (“free adversarial training”) work well in self-supervised learning and save time?

Methods and Ideas Explained

What is self-supervised learning (SSL)?

Think of SSL as a model learning by comparing different views of the same picture—like zooming into different parts or changing colors—and figuring out what stays the same. It doesn’t need labels. Two key styles are:

- Contrastive methods (like SimCLR): push apart different images and pull together different views of the same image.

- Non-contrastive methods (like EMP-SSL): avoid collapse (everything looking the same) using regularization and consistency rules, often with many crops/patches per image.
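To make the "consistency rule" idea concrete, here is a minimal sketch of one possible invariance score: the average cosine similarity between each crop embedding and the mean of all crop embeddings for the same image. The exact form used by EMP-SSL is not given in this summary, so treat the formula and the function names (`cosine`, `invariance_term`) as illustrative assumptions. The regularization that prevents collapse is not shown.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def invariance_term(crop_embeddings):
    """Assumed invariance score: mean cosine similarity between each
    crop embedding and the average embedding of all crops."""
    n = len(crop_embeddings)
    mean = [sum(col) / n for col in zip(*crop_embeddings)]
    return sum(cosine(z, mean) for z in crop_embeddings) / n

# Crops that agree score higher than crops that disagree.
agreeing = invariance_term([[1.0, 0.0], [1.0, 0.0]])
disagreeing = invariance_term([[1.0, 0.0], [0.0, 1.0]])
```

A training loop would push this score up so that all crops of one image land close together, while a separate regularizer keeps different images from collapsing to the same point.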

What are adversarial attacks and adversarial training?

- Adversarial attacks are tiny, carefully designed changes to an image that fool a model (like adding very small noise so a model mistakes a cat for a dog).

- Adversarial training prepares the model by training it on these “trick” images, so it learns to resist them. PGD (projected gradient descent) is a common way to craft these tricks: it nudges the image over several small steps while keeping the total change within a small limit.
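The multi-step idea behind PGD can be sketched on a toy model. The example below attacks a tiny linear classifier with a logistic loss, which stands in for a real neural network. The values of `eps`, `alpha`, and `steps` are illustrative assumptions, not settings from the paper.

```python
import math

def sign(v):
    return (v > 0) - (v < 0)

def loss_grad_wrt_input(w, b, x, y):
    """Gradient of the logistic loss log(1 + exp(-y * (w.x + b)))
    with respect to the input x, for a label y in {-1, +1}."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    coeff = -y / (1.0 + math.exp(y * score))
    return [coeff * wi for wi in w]

def pgd_attack(w, b, x, y, eps=0.1, alpha=0.03, steps=5):
    """L-infinity PGD: take small signed-gradient steps that increase the
    loss, projecting back into the eps-ball around x after each step."""
    x_adv = list(x)
    for _ in range(steps):
        g = loss_grad_wrt_input(w, b, x_adv, y)
        x_adv = [xa + alpha * sign(gi) for xa, gi in zip(x_adv, g)]
        # Project: stay within eps of the original pixel values...
        x_adv = [min(max(xa, xi - eps), xi + eps) for xa, xi in zip(x_adv, x)]
        # ...and within the valid pixel range [0, 1].
        x_adv = [min(max(xa, 0.0), 1.0) for xa in x_adv]
    return x_adv

w, b = [1.0, -2.0, 0.5], 0.0
x, y = [0.5, 0.2, 0.5], 1
x_adv = pgd_attack(w, b, x, y)  # each pixel moves by at most eps
```

Adversarial training would then feed `x_adv` instead of `x` to the model during training. The cost is visible here: every attack step needs its own gradient computation, which is why standard PGD training is slow.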

Crops vs. patches

- Crops: cut out different sizes and parts of an image (multi-scale, random regions).

- Patches: fixed-size cutouts of the image (same size each time).

Using many crops or patches gives the model more diverse views of the same image, like seeing a scene from different angles.
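The difference between the two sampling styles can be sketched as follows. This is a simplified illustration: it samples square boxes only, and the scale range `(0.25, 1.0)` and patch size `16` are assumed values rather than the paper's settings. Real multi-scale cropping pipelines usually also vary the aspect ratio and resize each crop to a common resolution.

```python
import random

def random_multiscale_crop(img_size, scale=(0.25, 1.0), rng=random):
    """Return (top, left, side) of a square crop that covers a random
    fraction of the image area, so the crop size varies per call."""
    area_frac = rng.uniform(*scale)
    side = max(1, round(img_size * area_frac ** 0.5))
    top = rng.randint(0, img_size - side)
    left = rng.randint(0, img_size - side)
    return top, left, side

def random_fixed_patch(img_size, patch_size=16, rng=random):
    """Return (top, left, side) of a patch whose size never changes;
    only its position is random."""
    top = rng.randint(0, img_size - patch_size)
    left = rng.randint(0, img_size - patch_size)
    return top, left, patch_size

rng = random.Random(0)
crops = [random_multiscale_crop(32, rng=rng) for _ in range(8)]
patches = [random_fixed_patch(32, rng=rng) for _ in range(8)]
```

With a 32x32 image (the CIFAR resolution), the crops range from 16 to 32 pixels per side, while every patch is exactly 16 pixels per side.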

Evaluation strategies

To check how good the model is, the authors use:

- A simple “central crop” test: feed a standard view into a small classifier on top of the learned features.

- Multi-crop aggregation: average the features from many crops before classification. This is richer but slower and not always better.
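The two evaluation strategies differ only in how the feature vector is produced before it reaches the classifier. The sketch below shows both paths. `toy_encoder` is a hypothetical stand-in for the frozen SSL backbone, and the crop positions are arbitrary choices for illustration.

```python
def crop(image, top, left, size):
    """Cut a size x size window out of a 2D list of pixel values."""
    return [row[left:left + size] for row in image[top:top + size]]

def center_crop(image, size):
    """Cut the centered size x size window."""
    h, w = len(image), len(image[0])
    return crop(image, (h - size) // 2, (w - size) // 2, size)

def toy_encoder(window):
    """Hypothetical stand-in for a trained backbone: [mean, max] of pixels."""
    flat = [p for row in window for p in row]
    return [sum(flat) / len(flat), max(flat)]

def aggregate(embeddings):
    """Multi-crop aggregation: element-wise average of the embeddings."""
    n = len(embeddings)
    return [sum(col) / n for col in zip(*embeddings)]

image = [[r * 6 + c for c in range(6)] for r in range(6)]  # toy 6x6 image

# Strategy 1: a single encoder pass on the central crop.
central_feature = toy_encoder(center_crop(image, 4))

# Strategy 2: one encoder pass per crop, then average.
windows = [crop(image, t, l, 4) for t in (0, 2) for l in (0, 2)]
averaged_feature = aggregate([toy_encoder(win) for win in windows])
```

Either feature would then be passed to the same linear classifier. The second strategy needs one encoder pass per crop, which is where its extra evaluation cost comes from.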

Datasets and models

They tested on CIFAR-10 and CIFAR-100 (small image datasets) and ran extra checks on ImageNet-100. They used popular neural networks (ResNet-18 and ResNet-50).

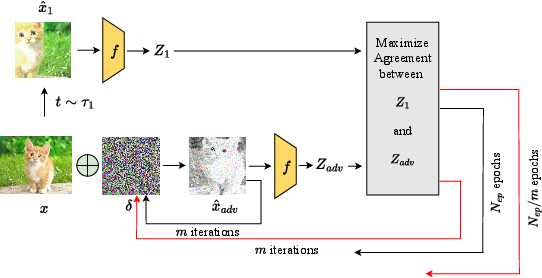

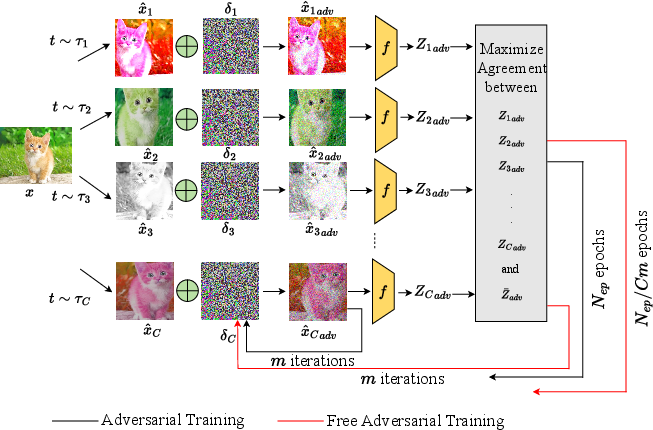

The new method: CF-AMC-SSL

CF-AMC-SSL applies “free adversarial training” to a multi-crop SSL setup. In everyday terms, it replays each mini-batch several times, and the gradient computed in each pass is used both to update the model and to refine the adversarial perturbation, so adversarial examples come at almost no extra cost. It trains in far fewer epochs and still gets strong results.
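The replay idea behind free adversarial training can be sketched on a toy problem. The example below is not the authors' implementation: a supervised logistic loss on a linear model stands in for the multi-crop SSL objective, each "mini-batch" is a single sample, and `eps`, `lr`, `replays`, and `epochs` are assumed values. The point it illustrates is that one gradient computation per replay serves both the model update and the perturbation update.

```python
import math

def sign(v):
    return (v > 0) - (v < 0)

def grads(w, x, y):
    """Logistic loss log(1 + exp(-y * w.x)); returns (dL/dw, dL/dx)."""
    score = sum(wi * xi for wi, xi in zip(w, x))
    coeff = -y / (1.0 + math.exp(y * score))
    return [coeff * xi for xi in x], [coeff * wi for wi in w]

def free_adv_train(data, dim, eps=0.1, lr=0.5, replays=4, epochs=5):
    w = [0.0] * dim
    delta = [0.0] * dim  # perturbation, carried over between batches
    for _ in range(epochs):
        for x, y in data:
            for _ in range(replays):  # replay the same batch
                x_adv = [xi + di for xi, di in zip(x, delta)]
                g_w, g_x = grads(w, x_adv, y)  # one pass, two gradients
                # Descend on the weights (train the model)...
                w = [wi - lr * gi for wi, gi in zip(w, g_w)]
                # ...and ascend on the perturbation, clipped to the eps-ball.
                delta = [min(max(di + eps * sign(gi), -eps), eps)
                         for di, gi in zip(delta, g_x)]
    return w

data = [([1.0, 0.0], 1), ([0.0, 1.0], -1)]
w = free_adv_train(data, dim=2)
```

Because each batch is replayed `replays` times, the number of epochs can be cut by roughly the same factor for a similar number of model updates, which is the source of the time savings.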

Main Findings

Here are the most important takeaways:

- More crops per image can replace many training epochs: Even though each epoch is a bit heavier, you need far fewer of them overall, so total training time goes down.

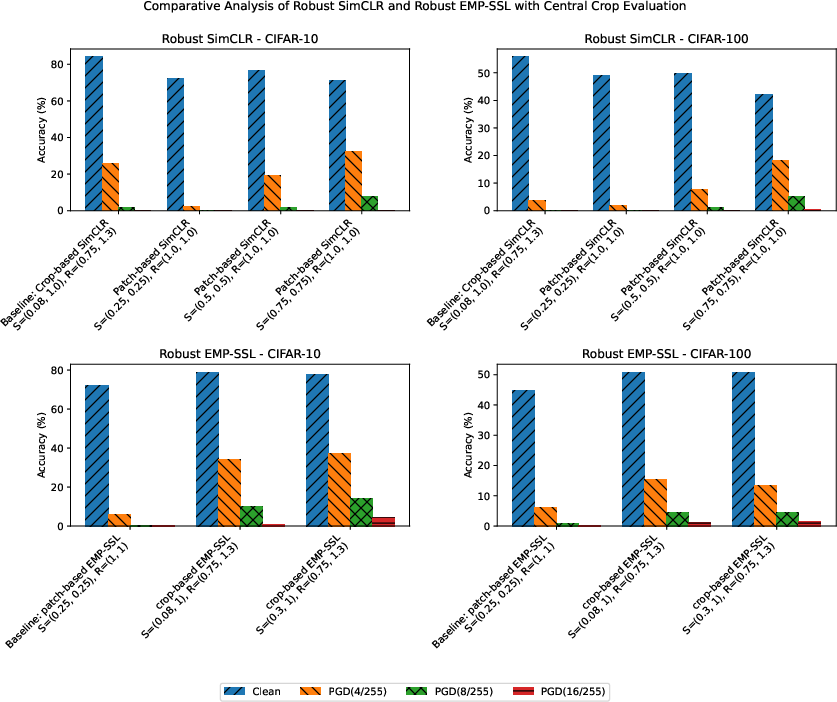

- Crop-based EMP-SSL strikes a better balance: Compared to SimCLR (which uses only two views), EMP-SSL with many crops achieves higher normal accuracy and better robustness under attack.

- Crops beat fixed-size patches for robustness: Multi-scale crops improve adversarial robustness more than fixed-size patches inside EMP-SSL.

- Simple testing wins: Using a central crop and a standard linear classifier is faster and often gives better accuracy and robustness than averaging many crop embeddings.

- CF-AMC-SSL is fast and strong: By combining multi-crop SSL with free adversarial training, CF-AMC-SSL cuts training time dramatically (down to around one-fifth in key cases) while matching or beating the baselines on both robustness and normal accuracy.

- Results generalize: The improvements hold across different networks (ResNet-18, ResNet-50), different datasets (including ImageNet-100), and stronger attacks (like AutoAttack), showing the approach is reliable.

Why This Matters

Practical impact

- Faster training: CF-AMC-SSL reaches good performance in far fewer epochs, saving compute time and energy.

- Better protection: Models trained this way are tougher against adversarial attacks, which matters for safety in real-world systems (like self-driving cars or medical imaging).

- Simple deployment: Evaluating with a single central crop is easier and faster than averaging many crop embeddings, and it often performs better too.

Big picture

This research shows a smart way to balance speed, normal accuracy, and robustness in self-supervised learning. Instead of training forever, you can train smarter: use more varied views (multi-crops) and efficient adversarial training. This makes SSL more practical for everyday applications where both reliability and efficiency are important.
