- The paper presents Flow, a framework that modularizes multi-agent workflows with dynamic real-time updates for enhanced efficiency.

- It introduces Activity-on-Vertex (AOV) graphs to decompose tasks, enabling parallel execution and streamlined dependency management.

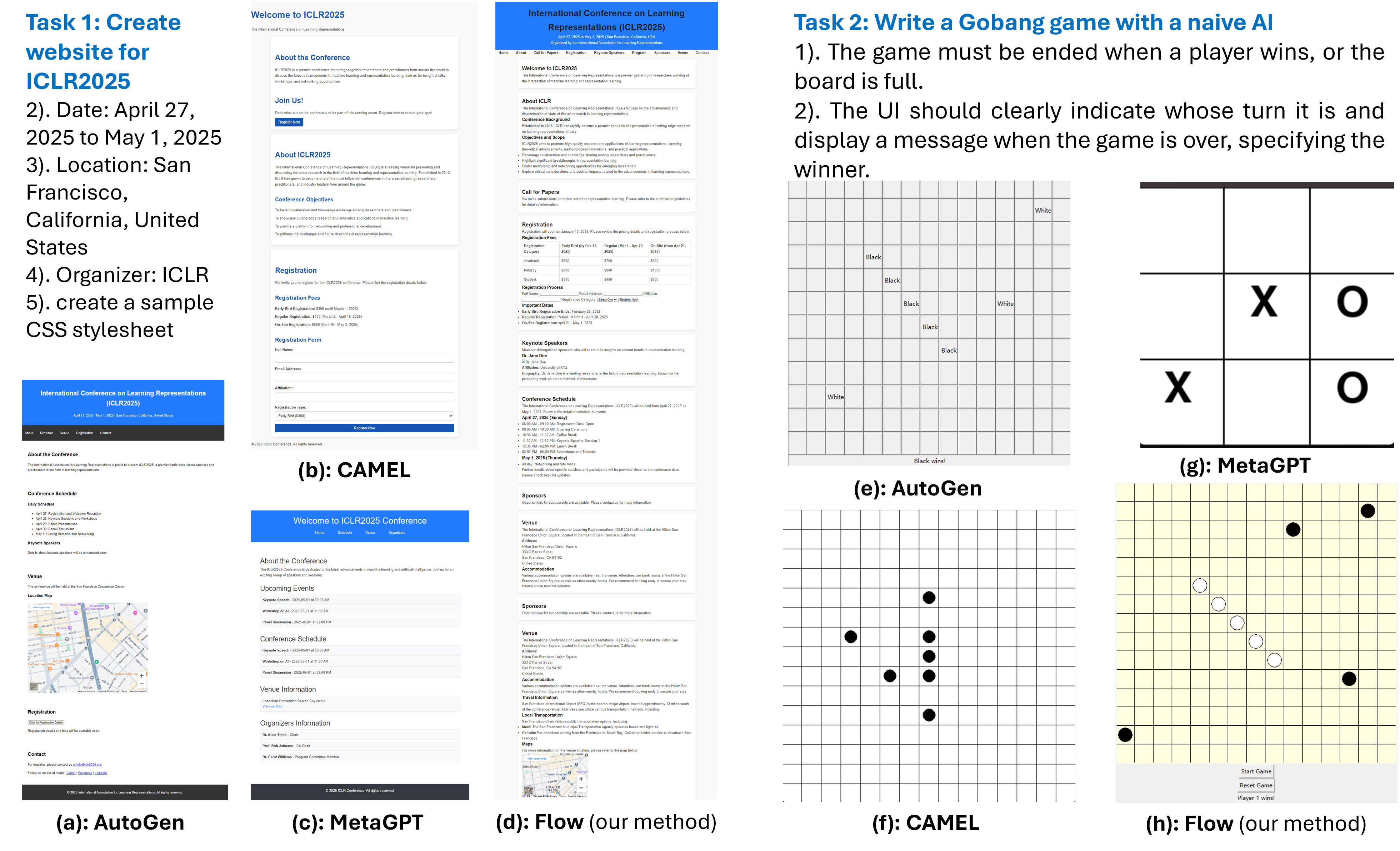

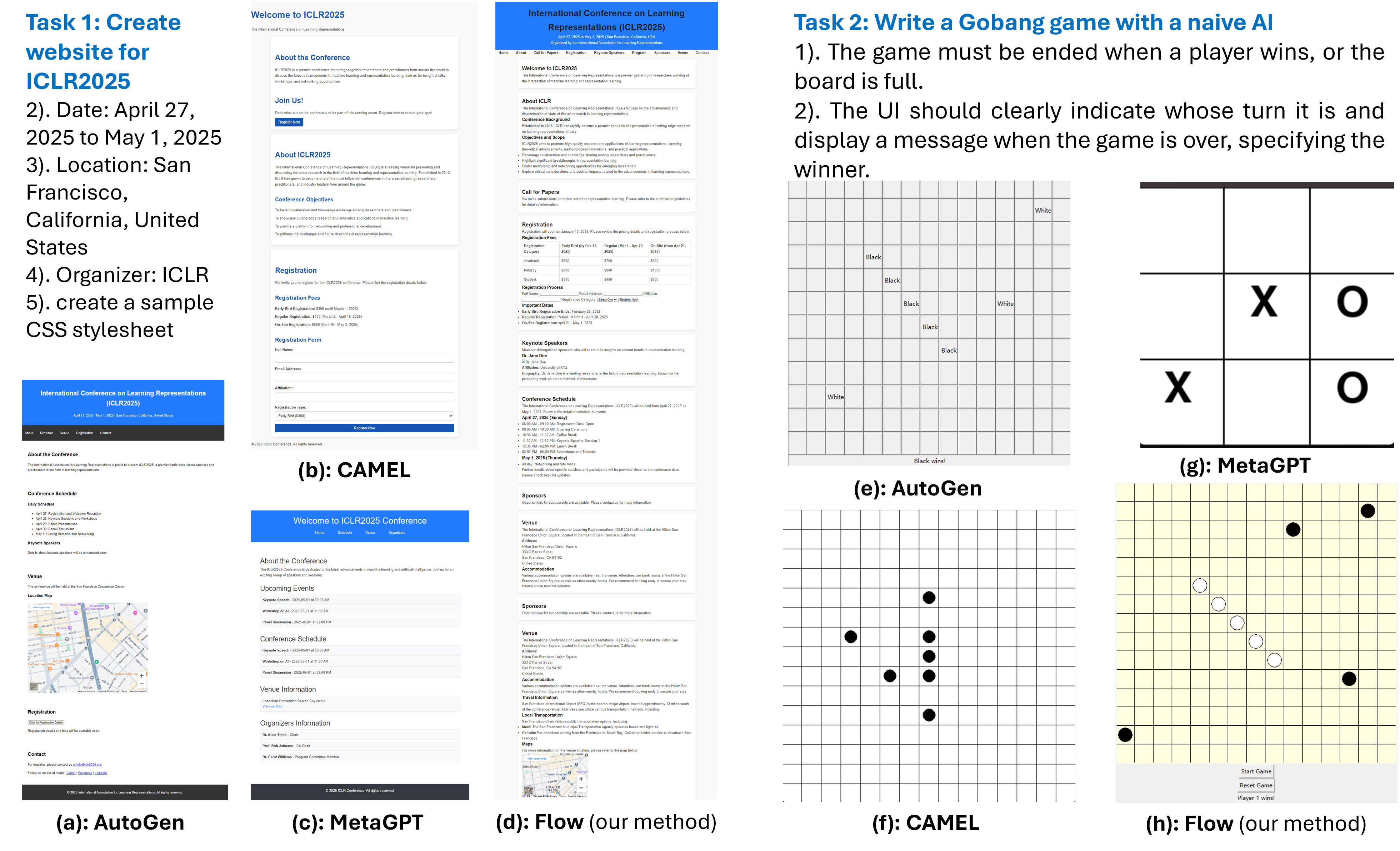

- Experimental results show Flow’s superior performance in applications like website development, LaTeX slide creation, and interactive game design.

Flow: Modularized Agentic Workflow Automation

Advancements in AI have led to the development of multi-agent systems powered by LLMs, which excel in automated planning and execution. The paper "Flow: Modularized Agentic Workflow Automation" introduces a framework that enhances these systems by emphasizing modularity and dynamic workflow updating. These innovations address the need for real-time adjustments in task execution, crucial for maintaining efficiency amidst unforeseen challenges.

Workflow Representation and Optimization

Activity-on-Vertex (AOV) Graph

Flow represents workflows using AOV graphs, where each sub-task corresponds to a vertex and dependencies are denoted by directed edges. This structure allows for task decomposition and parallel execution, optimizing both efficiency and adaptability.

In practice, such graphs support modular task execution and efficient dynamic updates. The graph itself can be managed with the Python package networkx, while an LLM API generates the task dependencies and agent allocations. The snippet below models a small workflow as an AOV graph:

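```python
import matplotlib.pyplot as plt
import networkx as nx

# Directed graph: vertices are sub-tasks, edges are dependencies.
G = nx.DiGraph()

tasks = ["Task_A", "Task_B", "Task_C", "Task_D"]
G.add_nodes_from(tasks)

# An edge (u, v) means u must finish before v can start.
dependencies = [("Task_A", "Task_B"), ("Task_B", "Task_C"), ("Task_A", "Task_D")]
G.add_edges_from(dependencies)

# Drawing requires matplotlib.
nx.draw(G, with_labels=True)
plt.show()
```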
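To illustrate how an AOV graph exposes parallel execution, the sketch below groups sub-tasks into batches whose members have no unmet dependencies and could therefore run concurrently. It is a minimal illustration, not the paper's scheduler: it uses the standard-library `graphlib` module (Python 3.9+) rather than networkx so that it has no third-party dependencies, and the function name `parallel_batches` is hypothetical.

```python
from graphlib import TopologicalSorter


def parallel_batches(tasks, dependencies):
    """Group tasks into batches whose members can run concurrently.

    Hypothetical helper: `dependencies` is a list of (before, after) pairs.
    """
    # TopologicalSorter expects a mapping: task -> set of prerequisites.
    prerequisites = {task: set() for task in tasks}
    for before, after in dependencies:
        prerequisites.setdefault(before, set())
        prerequisites.setdefault(after, set()).add(before)

    sorter = TopologicalSorter(prerequisites)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle

    batches = []
    while sorter.is_active():
        # All tasks whose prerequisites have been marked as done.
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


tasks = ["Task_A", "Task_B", "Task_C", "Task_D"]
dependencies = [("Task_A", "Task_B"), ("Task_B", "Task_C"), ("Task_A", "Task_D")]
batches = parallel_batches(tasks, dependencies)
print(batches)
```

For the four-task example above, this prints `[['Task_A'], ['Task_B', 'Task_D'], ['Task_C']]`: Task_B and Task_D depend only on Task_A, so they can be assigned to different agents and run in the same step.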

Modularity and Dynamic Updates

Modularity

Modularity means breaking a task into sub-tasks that are as independent as possible, so that they can be executed concurrently. Fewer cross-dependencies lower the dependency complexity, which is measured by the variability of the degree distribution across sub-task vertices. A highly modular workflow can be updated locally, since a change to one sub-task touches few others, which also makes the system more robust.

To encourage modularity, the following function selects, among candidate workflows, the one with the highest parallelism, breaking ties in favor of lower dependency complexity:

```python
def select_best_workflow(candidates):
    """Return the candidate with the highest parallelism.

    Ties are broken by lower dependency complexity. The helpers
    calculate_parallelism and calculate_dependency_complexity are
    assumed to be defined elsewhere.
    """
    best_workflow = None
    highest_parallelism = -float("inf")
    lowest_complexity = float("inf")

    for workflow in candidates:
        parallelism = calculate_parallelism(workflow)
        complexity = calculate_dependency_complexity(workflow)

        # Prefer more parallelism; on a tie, prefer lower complexity.
        if parallelism > highest_parallelism or (
            parallelism == highest_parallelism and complexity < lowest_complexity
        ):
            best_workflow = workflow
            highest_parallelism = parallelism
            lowest_complexity = complexity

    return best_workflow
```
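The selection routine relies on two scoring helpers that this summary does not define. The sketch below is one plausible reading, under stated assumptions: parallelism is taken to be the average number of sub-tasks per step (number of tasks divided by the length of the longest dependency chain), and dependency complexity is taken to be the population standard deviation of vertex degrees, in line with "variability in degree distribution". The workflow representation (a dict mapping each task to the set of its prerequisites) is also an assumption chosen to keep the example dependency-free.

```python
from graphlib import TopologicalSorter
from statistics import pstdev


def calculate_parallelism(workflow):
    """Average number of tasks per step (assumed definition)."""
    level = {}
    # static_order() yields every task after all of its prerequisites.
    for task in TopologicalSorter(workflow).static_order():
        prereqs = workflow.get(task, ())
        # Step 0 for tasks with no prerequisites, else one past the latest prerequisite.
        level[task] = 1 + max((level[p] for p in prereqs), default=-1)
    if not level:
        return 0.0
    steps = max(level.values()) + 1
    return len(level) / steps


def calculate_dependency_complexity(workflow):
    """Population standard deviation of vertex degrees (assumed definition)."""
    degree = {}
    for task, prereqs in workflow.items():
        degree.setdefault(task, 0)
        for p in prereqs:
            degree[task] += 1                  # incoming edge at `task`
            degree[p] = degree.get(p, 0) + 1   # outgoing edge at `p`
    return pstdev(list(degree.values())) if degree else 0.0


# Two hypothetical candidate workflows over the same four tasks.
chain = {"A": set(), "B": {"A"}, "C": {"B"}, "D": {"C"}}
fan_out = {"A": set(), "B": {"A"}, "C": {"A"}, "D": {"A"}}

# Same ordering rule as the selection loop: parallelism first, then lower complexity.
best = max(
    [chain, fan_out],
    key=lambda w: (calculate_parallelism(w), -calculate_dependency_complexity(w)),
)
```

Under these assumed definitions the chain scores a parallelism of 1.0 (four tasks over four steps) and the fan-out scores 2.0 (four tasks over two steps), so the fan-out workflow is selected even though its degree distribution is less uniform.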

Dynamic Workflow Updates

Dynamic updates adjust workflows in real-time based on sub-task performance and system feedback. An effective update strategy involves generating multiple candidate workflows using LLMs and selecting the one with the best modular characteristics.

In practice, the dynamic update function can be implemented as follows:

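```python
def update_workflow(G, performance_data, threshold):
    # Iterate over a snapshot: removing nodes while iterating G.nodes raises an error.
    for node in list(G.nodes):
        # Tasks without a recorded score are left untouched.
        score = performance_data.get(node)
        if score is not None and score < threshold:
            # Replace the underperforming task. add_corrective_task is an
            # assumed helper; remove_node also drops the node's edges, so the
            # helper must restore any dependencies that are still needed.
            G.remove_node(node)
            add_corrective_task(G, node)

    # Re-calculate parallelism and complexity for the revised workflow.
    return calculate_parallelism(G), calculate_dependency_complexity(G)
```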
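One detail the update step has to handle is what happens to a failed task's dependencies once it is replaced. The sketch below shows one possible approach, in which a corrective task inherits both the prerequisites and the dependents of the task it replaces. It is an illustration under assumptions rather than the paper's procedure: the function names, the `_retry` naming scheme, and the dict-of-prerequisites workflow representation are all hypothetical.

```python
def replace_with_corrective_task(workflow, failed_task, corrective_task):
    """Return a new workflow in which corrective_task takes over failed_task's edges."""
    updated = {}
    for task, prereqs in workflow.items():
        new_task = corrective_task if task == failed_task else task
        # Redirect any dependency on the failed task to the corrective task.
        updated[new_task] = {
            corrective_task if p == failed_task else p for p in prereqs
        }
    return updated


def apply_corrective_updates(workflow, performance_data, threshold):
    """Replace every task scoring below threshold; unscored tasks are kept."""
    for task in list(workflow):  # snapshot of the original task names
        score = performance_data.get(task)
        if score is not None and score < threshold:
            workflow = replace_with_corrective_task(workflow, task, f"{task}_retry")
    return workflow


workflow = {
    "Task_A": set(),
    "Task_B": {"Task_A"},
    "Task_C": {"Task_B"},
    "Task_D": {"Task_A"},
}
scores = {"Task_A": 0.9, "Task_B": 0.3, "Task_D": 0.8}  # Task_C has not run yet
updated = apply_corrective_updates(workflow, scores, threshold=0.5)
```

Here Task_B falls below the threshold, so `updated` contains `Task_B_retry` with Task_A as its prerequisite, and Task_C now depends on `Task_B_retry`. The original `workflow` dict is left unmodified, which makes it straightforward to compare the revised workflow against other candidates before committing to it.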

Experimental Results and Applications

Flow demonstrates significant improvements over existing frameworks in three experimental scenarios: website development, LaTeX Beamer slide creation, and interactive game development. The superior performance is attributed to effective modularization and dynamic updates that accommodate real-time task conditions.

Figure 1: Comparative evaluations among frameworks show Flow excelling with fully developed outputs, contrasting the incomplete designs from competitors.

Real-World Implications

Flow's ability to dynamically adjust workflows is particularly valuable in environments requiring rapid adaptation to changing requirements and unexpected challenges. Industries such as software development, machine learning research, and data science can benefit from adopting this framework.

Conclusion

Flow offers a robust multi-agent framework that emphasizes modularity and dynamic workflow updating, addressing critical limitations in existing systems by optimizing task decomposition and execution efficiency. These innovations facilitate the effective use of LLMs in complex tasks, demonstrating significant potential in advancing real-world AI applications.