- The paper introduces a zero-shot re-ranking method using LLM-generated answer scent to improve document retrieval performance.

- It combines standard retrieval techniques like DPR/BM25 with LLM-guided re-ranking to reduce computational overhead and enhance RAG model efficiency.

- Experimental results show large Top-1 accuracy improvements on datasets such as Natural Questions (from 19.2% to 46.5% with the MSS retriever), alongside reduced latency.

ASRank: Zero-Shot Re-Ranking with Answer Scent for Document Retrieval

Introduction

The paper "ASRank: Zero-Shot Re-Ranking with Answer Scent for Document Retrieval" presents a novel approach to improving document retrieval systems. Through a mechanism termed answer scent, the method uses large language models (LLMs) to improve the ranking of initially retrieved documents in open-domain question answering (ODQA) systems. Because it operates zero-shot, ASRank can re-rank documents without domain-specific data or task-specific fine-tuning.

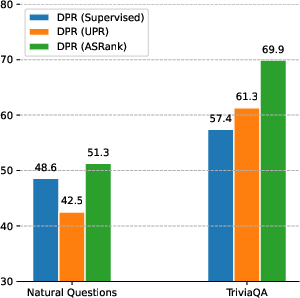

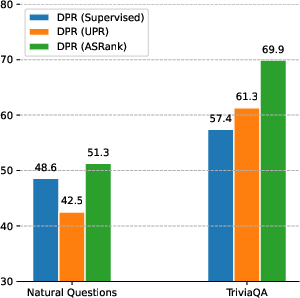

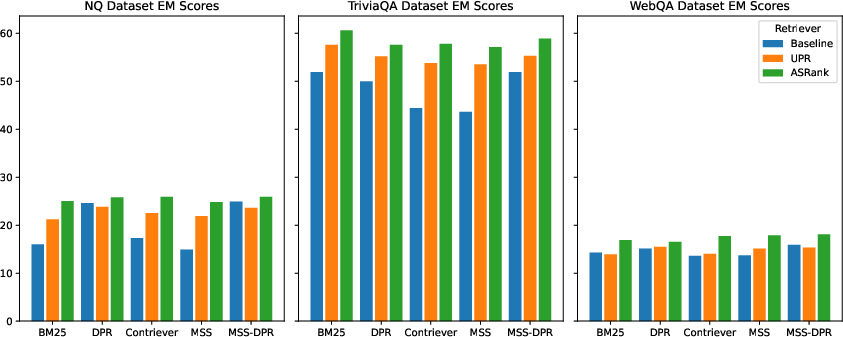

Figure 1: After re-ranking the top 1,000 passages retrieved by DPR, ASRank surpasses the performance of strong unsupervised models like UPR on the Natural Questions and TriviaQA datasets.

Methodology

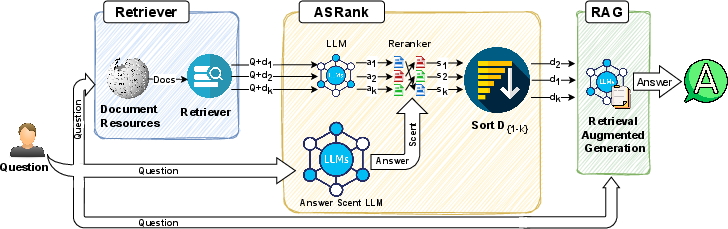

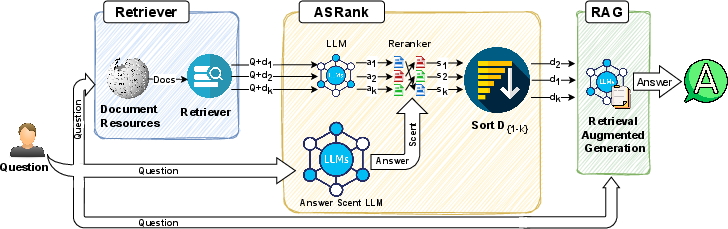

ASRank's methodology consists of three main components: document retrieval, answer scent generation, and re-ranking. Initially, conventional retrieval methods like Dense Passage Retrieval (DPR) or BM25 are employed to gather relevant documents. These documents often lack a refined ranking order, which limits the performance of Retrieval-Augmented Generation (RAG) models in ODQA.

Figure 2: Our ASRank framework begins with document retrieval, applies re-ranking using answer scent from LLMs, and finally feeds the top-k documents into the RAG system.
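The three stages can be sketched as a small composition of functions. This is a minimal illustration, not the authors' implementation: the function `asrank_pipeline`, the toy corpus, and the three stand-in callables (`toy_retrieve`, `toy_scent`, `toy_score`) are all hypothetical, and the token-overlap score merely stands in for a real re-ranking model.

```python
from typing import Callable, List


def asrank_pipeline(
    question: str,
    retrieve: Callable[[str], List[str]],
    generate_scent: Callable[[str], str],
    score: Callable[[str, str, str], float],
    top_k: int = 3,
) -> List[str]:
    """Retrieve candidates, generate one answer scent, re-rank, keep the top-k."""
    candidates = retrieve(question)      # stage 1: e.g. DPR or BM25
    scent = generate_scent(question)     # stage 2: one call to the large LLM
    ranked = sorted(                     # stage 3: re-rank with the scent
        candidates,
        key=lambda doc: score(question, scent, doc),
        reverse=True,
    )
    return ranked[:top_k]                # these documents go on to the RAG reader


# Toy stand-ins (assumptions) so the sketch runs without any model or index.
CORPUS = [
    "Paris is the capital of France.",
    "France borders Spain and Italy.",
    "The Eiffel Tower is in Paris.",
]


def toy_retrieve(question: str) -> List[str]:
    return list(CORPUS)


def toy_scent(question: str) -> str:
    return "The capital of France is Paris"


def toy_score(question: str, scent: str, doc: str) -> float:
    # Count distinct tokens shared by the scent and the document.
    scent_tokens = set(scent.lower().split())
    doc_tokens = set(doc.lower().rstrip(".").split())
    return float(len(scent_tokens & doc_tokens))


top_docs = asrank_pipeline(
    "What is the capital of France?", toy_retrieve, toy_scent, toy_score, top_k=2
)
print(top_docs)
```

Passing the stages in as callables keeps the sketch independent of any particular retriever or model; a real system would replace each stand-in with its own component.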

The answer scent concept draws inspiration from the notion of information scent in cognitive psychology, where individuals use subtle clues to guide their navigation through information. In ASRank, LLMs such as GPT-3.5 or Llama 3-70B generate an answer scent by predicting text segments likely to satisfy the query. This prediction requires no prior training on specific datasets; it operates in a zero-shot setting.
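As a rough illustration of zero-shot scent generation, the sketch below builds a prompt that contains only an instruction and the question, with no worked examples. The prompt wording and the names `build_scent_prompt`, `generate_answer_scent`, and `fake_llm` are assumptions made for illustration; the source does not give the actual prompt, and `fake_llm` replaces a real model call.

```python
from typing import Callable


def build_scent_prompt(question: str) -> str:
    """Build a zero-shot prompt: an instruction plus the question, no examples."""
    # Hypothetical wording; the source does not specify the prompt text.
    return (
        "Briefly describe what a correct answer to the following question "
        "would look like.\n"
        f"Question: {question.strip()}\n"
        "Answer scent:"
    )


def generate_answer_scent(question: str, llm: Callable[[str], str]) -> str:
    """Call the supplied LLM exactly once and return its trimmed output."""
    return llm(build_scent_prompt(question)).strip()


# Stand-in for a real LLM client (assumption): returns a canned string.
def fake_llm(prompt: str) -> str:
    return "  The name of an English playwright.\n"


scent = generate_answer_scent("Who wrote Hamlet?", fake_llm)
print(scent)
```

Any text-in, text-out client could be passed as `llm`; the only requirement in this sketch is that it maps a prompt string to a completion string.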

Once an answer scent is established, it guides a smaller model, such as T5, to perform document re-ranking. This two-tiered approach reduces computational demand by invoking the larger model only once per query, while capitalizing on its generative capabilities to make the smaller downstream model more effective.
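The re-ranking step can be illustrated with a deliberately simple scorer. The sketch below does not use T5; as a stand-in it scores each document by the log-likelihood of the scent tokens under a smoothed unigram model of that document. The functions `tokenize`, `scent_log_likelihood`, and `rerank`, the smoothing constant `alpha`, and the example documents are all assumptions. Note that the scent is passed in as a string, so the large model is never called inside the per-document loop.

```python
import math
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase, split on whitespace, and strip simple punctuation."""
    tokens = (t.strip(".,?!").lower() for t in text.split())
    return [t for t in tokens if t]


def scent_log_likelihood(scent: str, doc: str, vocab_size: int, alpha: float = 1.0) -> float:
    """Log-probability of the scent tokens under an add-alpha unigram model of doc."""
    counts = Counter(tokenize(doc))
    total = sum(counts.values())
    return sum(
        math.log((counts[t] + alpha) / (total + alpha * vocab_size))
        for t in tokenize(scent)
    )


def rerank(scent: str, docs: List[str]) -> List[str]:
    """Order documents by how well each one explains the answer scent."""
    vocab = {t for d in docs for t in tokenize(d)} | set(tokenize(scent))
    scored = [(scent_log_likelihood(scent, d, len(vocab)), d) for d in docs]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored]


docs = [
    "The Nile flows through Egypt.",
    "Mount Everest is the highest mountain on Earth.",
    "Everest lies in the Himalayas.",
]
ranked = rerank("Mount Everest is the highest mountain", docs)
print(ranked[0])
```

In a real system the unigram scorer would be replaced by the smaller neural model; the surrounding loop, which scores every candidate against one fixed scent, would stay the same.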

Experimental Results

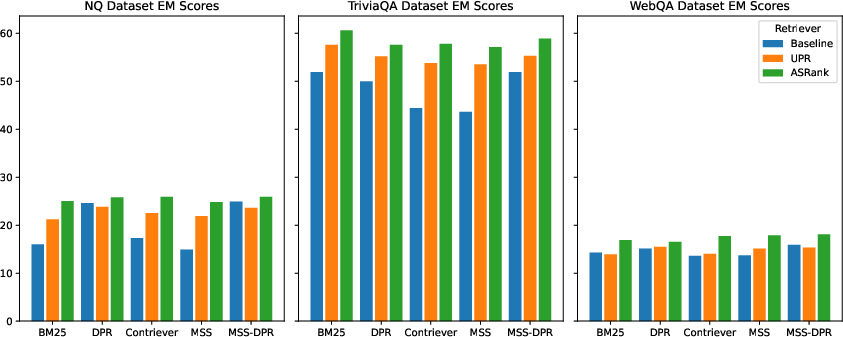

In evaluations across multiple datasets, including Natural Questions (NQ), TriviaQA, and WebQA, ASRank improved retrieval metrics substantially. For instance, on NQ, ASRank raised Top-1 retrieval accuracy from 19.2% to 46.5% with the MSS retriever, a gain of 27.3 percentage points, outperforming prior methods like UPR (Table 1).

Not only did ASRank enhance Top-1 accuracy, but it also showed consistent improvements in broader metrics, such as Top-5 and Top-10 retrieval scores, across various retrievers and datasets. These improvements are crucial for the effectiveness of RAG models, which rely heavily on the quality of retrieved documents.
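For readers unfamiliar with the metric, Top-k retrieval accuracy can be computed as sketched below. The sketch assumes a common ODQA convention, in which a question counts as a hit when any of its top-k passages contains one of the gold answer strings; the source does not spell out its exact definition, and the function name `top_k_accuracy` and the sample data are hypothetical.

```python
from typing import List


def top_k_accuracy(
    ranked_passages: List[List[str]],
    gold_answers: List[List[str]],
    k: int,
) -> float:
    """Fraction of questions whose top-k passages contain a gold answer string."""
    if not ranked_passages:
        raise ValueError("need at least one question")
    hits = 0
    for passages, answers in zip(ranked_passages, gold_answers):
        top = passages[:k]
        # Case-insensitive substring match (an assumed hit criterion).
        if any(ans.lower() in p.lower() for p in top for ans in answers):
            hits += 1
    return hits / len(ranked_passages)


# Two toy questions: the first has its answer at rank 2, the second at rank 1.
ranked = [
    ["France borders Spain.", "Paris is the capital of France."],
    ["Hamlet was written by William Shakespeare.", "Macbeth is a tragedy."],
]
gold = [["Paris"], ["Shakespeare"]]

top1 = top_k_accuracy(ranked, gold, k=1)
top2 = top_k_accuracy(ranked, gold, k=2)
print(top1, top2)
```

Because the metric only looks at the first k positions, a re-ranker that moves a relevant passage from rank 2 to rank 1 raises Top-1 accuracy without changing Top-2, which is why re-ranking gains tend to be largest at small k.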

Figure 3: Comparison of Exact Match (EM) scores across three datasets (NQ, TriviaQA, and WebQA) for various retrieval models.

The study also reports lower latency than earlier methods such as UPR. Combined with the method's cost-effectiveness, this highlights ASRank's suitability for large-scale implementations.

Impact and Future Work

The implications of ASRank extend beyond enhancing existing RAG systems. Because the answer scent is generated zero-shot, the approach can be adapted to a range of information retrieval and NLP tasks without additional labeled data. This adaptability, coupled with improved efficiency and performance, makes ASRank a robust framework for real-world applications.

Future work could explore the integration of newer LLMs and modalities to refine the answer scent generation process further. Additionally, adapting the mechanism for specific domains, such as technical document retrieval or legal texts, where nuanced context comprehension is critical, represents a promising direction.

Conclusion

ASRank effectively addresses key limitations in current document retrieval methodologies, offering a robust, efficient, and scalable solution for enhancing query responsiveness in ODQA systems. Its innovative use of LLM-generated answer scents within a zero-shot context broadens the potential for its applications, positioning ASRank as a significant advancement in the field of document retrieval.