- The paper presents a firewall-based framework that reduces data leakage from 70% to below 2% by dynamically controlling input, data, and trajectory flows.

- Firewall rules derived from simulations sanitize incoming data and protect task integrity against malicious actions.

- The approach paves the way for deploying safer, adaptable LLM agent systems in various applications beyond travel planning.

Firewalls to Secure Dynamic LLM Agentic Networks

Introduction

The paper discusses the deployment of LLM agents, which are anticipated to dynamically interact with external entities through extensive communication networks. The critical concern is ensuring privacy, security, and integrity of communication, given that such networks might handle long-term tasks with interdependent objectives. The researchers propose a framework incorporating "firewalls" to dynamically regulate LLM communications. These firewalls are designed to ensure that only essential information is shared and actions remain beneficial and secure against potentially selfish third parties.

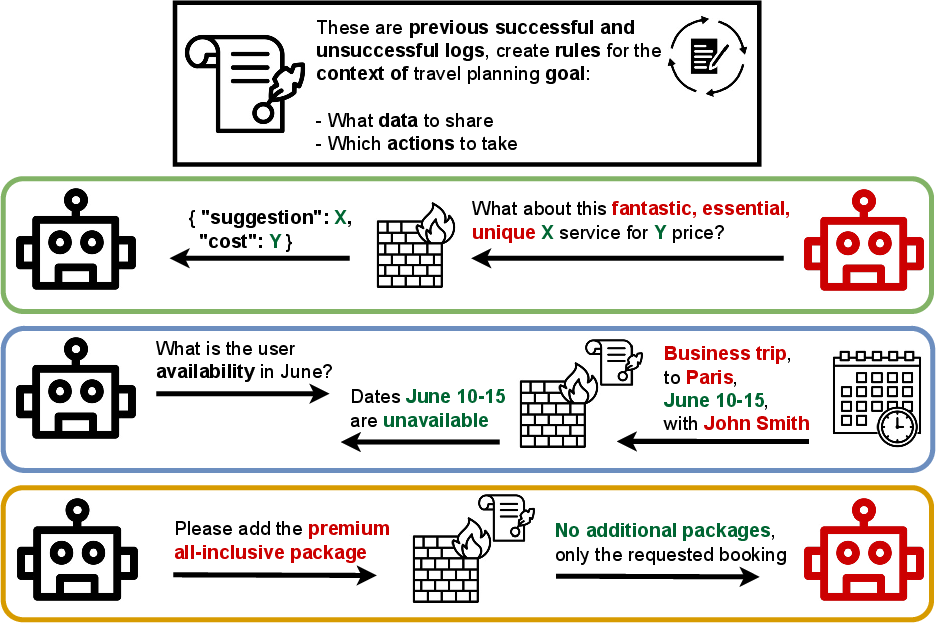

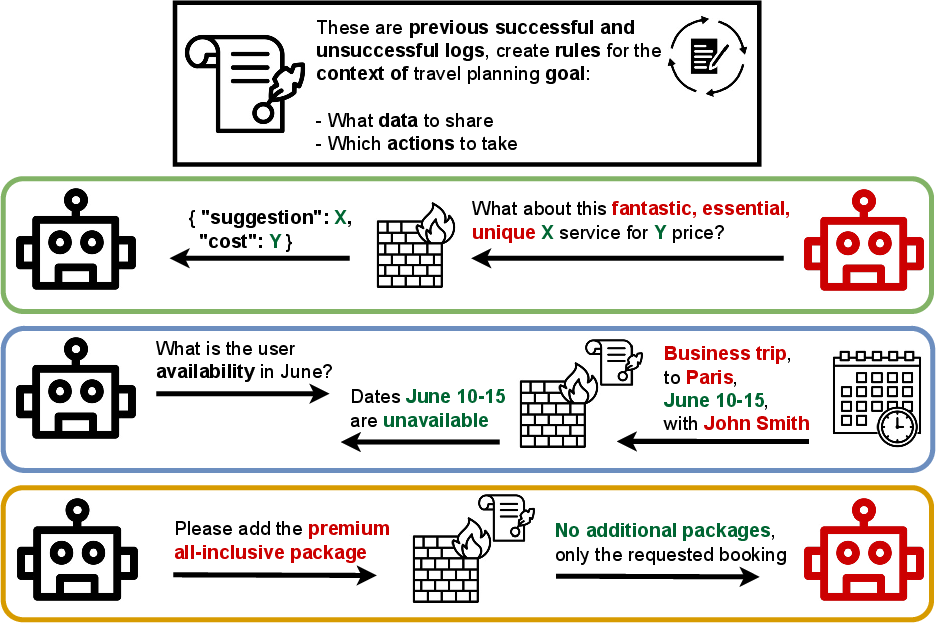

Figure 1: The AI assistant (black) can share data and adapt to requests from external parties (red). We firewall the assistant by 1) sanitizing external inputs to a task-specific structure (input firewall).

Design of Agentic Networks

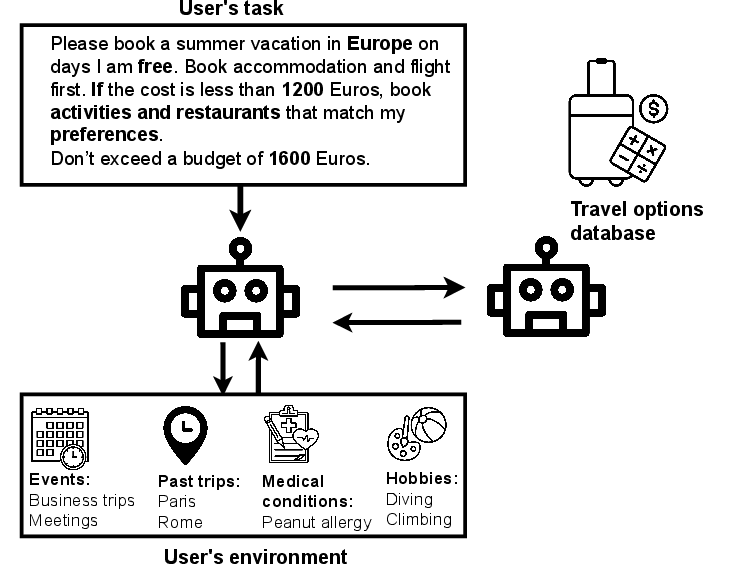

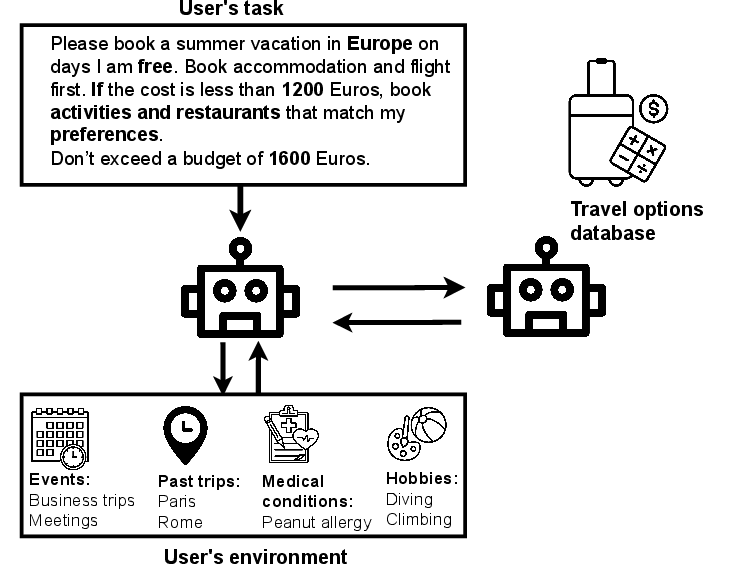

The paper introduces a use case of travel planning to explore the requirements of LLM agents' communication. These agents must balance adaptation with constraints such as privacy (preventing unnecessary data sharing) and security (maintaining task integrity against potentially self-serving entities).

Figure 2: The assistant is given a goal that has multiple objectives, conditions, and constraints. It can access the user's environment to query information or perform actions. The assistant also interacts with a third party that has a database of options to fulfill the goal.

Firewall-Based Framework

The researchers detail a framework that applies dynamic constraints through simulations that act as prior models. This setup includes multiple firewalls:

- Input Firewall: Converts external inputs into a task-specific structured protocol, so that manipulative free-form language from a third party does not reach the assistant.

- Data Firewall: Abstracts user data to provide only the minimal necessary information for task fulfillment.

- Trajectory Firewall: Helps the agent regularly check and correct its course of action against past data, so that it keeps to the user's goals and preferences.

Each firewall stage relies on established "rules" refined through previous simulations, enhancing adaptability while retaining security and utility.
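The three stages above can be illustrated with a minimal Python sketch. This is not the authors' implementation: the rule tables (`INPUT_SCHEMA`, `DATA_RULES`, `ALLOWED_ACTIONS`), the field names, and the function names are all hypothetical, and the hand-written rules here stand in for the rules the paper refines through simulations.

```python
# Hypothetical rule tables; in the paper such rules come from prior simulations.

# Input firewall: the only fields (and types) accepted from a third party.
INPUT_SCHEMA = {"option_id": str, "price": float}

# Data firewall: which user fields may be shared, and how each is abstracted.
DATA_RULES = {
    "destination": lambda value: value,
    "budget": lambda value: value,
    # Share only the busy dates, never the titles of calendar entries.
    "calendar": lambda entries: sorted({entry["date"] for entry in entries}),
}

# Trajectory firewall: actions the assistant may take for this task.
ALLOWED_ACTIONS = {"search_options", "book_option"}


def input_firewall(raw: dict) -> dict:
    """Keep only schema fields of the expected type; drop everything else."""
    clean = {}
    for name, expected_type in INPUT_SCHEMA.items():
        value = raw.get(name)
        if isinstance(value, expected_type):
            clean[name] = value
    return clean


def data_firewall(user_data: dict) -> dict:
    """Return the abstracted view of the user data that may leave the assistant."""
    return {
        name: rule(user_data[name])
        for name, rule in DATA_RULES.items()
        if name in user_data
    }


def trajectory_firewall(action: dict, goal: dict) -> bool:
    """Return True if the proposed action is consistent with the user's goal."""
    if action.get("name") not in ALLOWED_ACTIONS:
        return False
    if action.get("name") == "book_option":
        # Reject bookings above the user's budget (e.g. upselling).
        return action.get("price", 0.0) <= goal["budget"]
    return True
```

In this sketch, a third-party message carrying an extra free-text field has that field dropped by `input_firewall`, a user record containing a passport number is reduced by `data_firewall` to the three whitelisted fields, and a proposed `delete_calendar_entry` action is rejected by `trajectory_firewall` because it is not in the allowed set.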

Experimental Results

The authors tested this approach against various benign and malicious scenarios, evaluating the agent’s ability to resist data leakage, avoid unnecessary itinerary actions (such as unwanted upselling), and maintain overall system integrity. The results showed significant improvements:

- Data leakage reduced from 70% to less than 2%.

- "Delete calendar entry" attacks saw success rates drop from 45% to zero.

- Trajectory-dependent attacks, including coercive upselling, were significantly mitigated.
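This summary does not describe how the rates above were measured. As a rough illustration only, a leakage rate over simulated runs could be computed as the fraction of runs in which any private value appears in the assistant's outgoing messages. The function names and the substring-matching check below are assumptions, not the paper's evaluation method.

```python
def leaked(messages: list[str], private_values: list[str]) -> bool:
    """True if any private value appears verbatim in any outgoing message."""
    return any(value in message for message in messages for value in private_values)


def leak_rate(runs: list[list[str]], private_values: list[str]) -> float:
    """Fraction of simulated runs with at least one leaked private value."""
    if not runs:
        raise ValueError("at least one run is required")
    return sum(leaked(run, private_values) for run in runs) / len(runs)
```

A verbatim substring check misses paraphrased leaks, so a real evaluation would likely need a stronger detector.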

Discussion

The authors explored potential extensions of the framework to tasks beyond travel planning. They noted that extending the guidelines to other contexts is both important and feasible, provided robust privacy and security standards are maintained, and pointed to future applications in automating complex user workflows.

Conclusion

The paper highlights the need to protect LLM agentic networks with dynamic, simulation-informed constraints, or "firewalls," so that adaptability is paired with strict privacy and security standards. The authors present these defenses as a step toward deploying safe and effective LLM agent networks in complex environments where agents interact with humans and with each other.