- The paper defines projective composition, a way of composing diffusion models so that the result preserves chosen aspects of each distribution, as specified by projection functions.

- It identifies Factorized Conditionals and reparameterization-equivariance as crucial mechanisms linking theoretical analysis with empirical observations.

- The study introduces a heuristic, based on the orthogonality of mean vectors in a feature space, for predicting whether a composition will succeed, given that even well-defined compositions can be hard to sample.

Summary

This paper, titled "Mechanisms of Projective Composition of Diffusion Models" (2502.04549), focuses on advancing the theoretical understanding of composition in diffusion models. It addresses fundamental gaps in how linear score combinations of diffusion models can achieve projective composition and length-generalization. The authors introduce a novel concept of projective composition, define essential conditions for successful compositions, and connect theoretical analysis with empirical observations. The paper also proposes a practical heuristic for predicting compositional success in diffusion models.

Projective Composition Definition

The paper introduces the concept of projective composition: given distributions $\{p_i\}$ and associated projection functions $\{\Pi_i\}$, a distribution $\hat{p}$ is a projective composition if $\Pi_i \sharp \hat{p} = \Pi_i \sharp p_i$ for all $i$, where $\Pi_i \sharp p$ denotes the pushforward of $p$ under $\Pi_i$. This defines composition not purely as a function of the distributions, but in terms of which aspect of each distribution must be preserved. The authors emphasize that projective compositions can be truly out-of-distribution with respect to the distributions being composed.
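To make the definition concrete, here is a small discrete sketch of our own (not from the paper): two joint distributions over a 3×3 grid, with coordinate projections $\Pi_1(x) = x_0$ and $\Pi_2(x) = x_1$, so each pushforward is simply a marginal. The product of the two preserved marginals is one distribution that satisfies the definition. The helper names `pushforward` and `is_projective_composition` and all probability values are hypothetical.

```python
import numpy as np

def pushforward(joint: np.ndarray, axis: int) -> np.ndarray:
    """Pushforward of a 2-D joint table under the projection onto coordinate `axis`,
    i.e. the marginal over that coordinate."""
    other = 1 - axis
    return joint.sum(axis=other)

# Two toy joint distributions over a 3x3 grid (rows index x0, columns index x1).
p1 = np.array([[0.30, 0.05, 0.05],
               [0.05, 0.20, 0.05],
               [0.05, 0.05, 0.20]])
p2 = np.array([[0.10, 0.10, 0.10],
               [0.05, 0.05, 0.20],
               [0.10, 0.10, 0.20]])

# Pi_1 keeps coordinate 0 of p1; Pi_2 keeps coordinate 1 of p2.
m1 = pushforward(p1, axis=0)
m2 = pushforward(p2, axis=1)

# One candidate projective composition: the product of the two preserved marginals.
p_hat = np.outer(m1, m2)

def is_projective_composition(p_hat, dists, axes, tol=1e-12):
    """Check Pi_i # p_hat == Pi_i # p_i for every (p_i, Pi_i) pair."""
    return all(np.allclose(pushforward(p_hat, a), pushforward(p, a), atol=tol)
               for p, a in zip(dists, axes))

print(is_projective_composition(p_hat, [p1, p2], [0, 1]))
```

Here `p_hat` differs from both `p1` and `p2` (for example, it puts more mass on the cell (0, 2) than either does), echoing the point that a projective composition can lie outside the distributions being composed.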

Compositional Mechanisms

The paper delineates conditions under which projective composition works in diffusion models. It identifies Factorized Conditionals as a key sufficient condition: roughly, each conditional distribution differs from a shared background distribution only on its own disjoint subset of coordinates, and these coordinate subsets are independent of one another. When this property holds, a linear combination of scores (the paper's "composition operator") yields the correct projective composition.
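A minimal sketch of such a linear score combination follows. It assumes the composition operator takes the background-corrected form $s_b + \sum_i (s_i - s_b)$ and uses toy isotropic Gaussians in which each conditional shifts a different coordinate of a standard-normal background, a simple case of factorized conditionals. The means and helper names are hypothetical.

```python
import numpy as np

def gaussian_score(x: np.ndarray, mean: np.ndarray, var: float = 1.0) -> np.ndarray:
    """Score (gradient of the log-density) of an isotropic Gaussian N(mean, var * I)."""
    return -(x - mean) / var

def composed_score(x, background_mean, cond_means):
    """Assumed composition operator: background score plus each conditional's
    difference from the background score."""
    s_b = gaussian_score(x, background_mean)
    return s_b + sum(gaussian_score(x, m) - s_b for m in cond_means)

# Background: standard normal in 2-D.
mu_b = np.zeros(2)
# Conditional 1 changes only coordinate 0; conditional 2 changes only coordinate 1.
mu_1 = np.array([3.0, 0.0])
mu_2 = np.array([0.0, -2.0])

# The desired projective composition keeps coordinate 0 from p_1 and
# coordinate 1 from p_2, i.e. N([3, -2], I).
target_mean = np.array([3.0, -2.0])

rng = np.random.default_rng(0)
for x in rng.normal(size=(5, 2)):
    assert np.allclose(composed_score(x, mu_b, [mu_1, mu_2]),
                       gaussian_score(x, target_mean))
print("composed score matches the score of N([3, -2], I)")
```

For these Gaussians each difference $s_i - s_b$ is a constant vector, so the match is exact; in a real model every score would come from a trained network evaluated at the current noise level.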

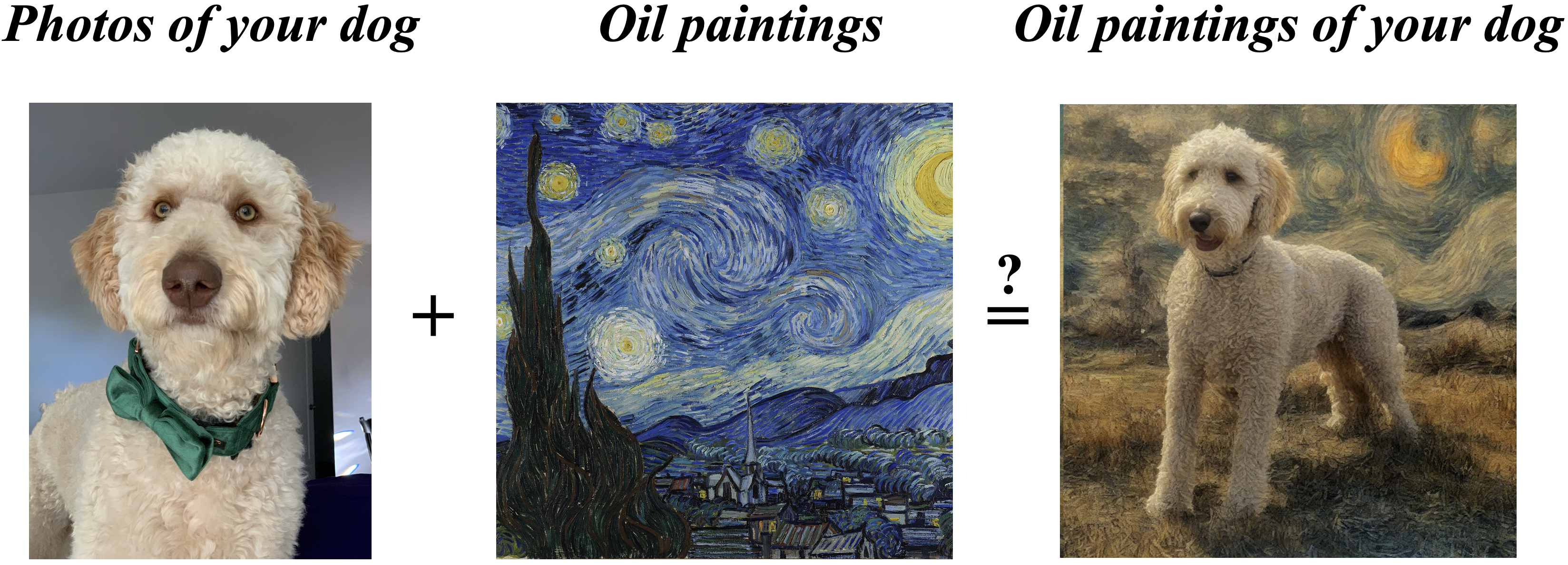

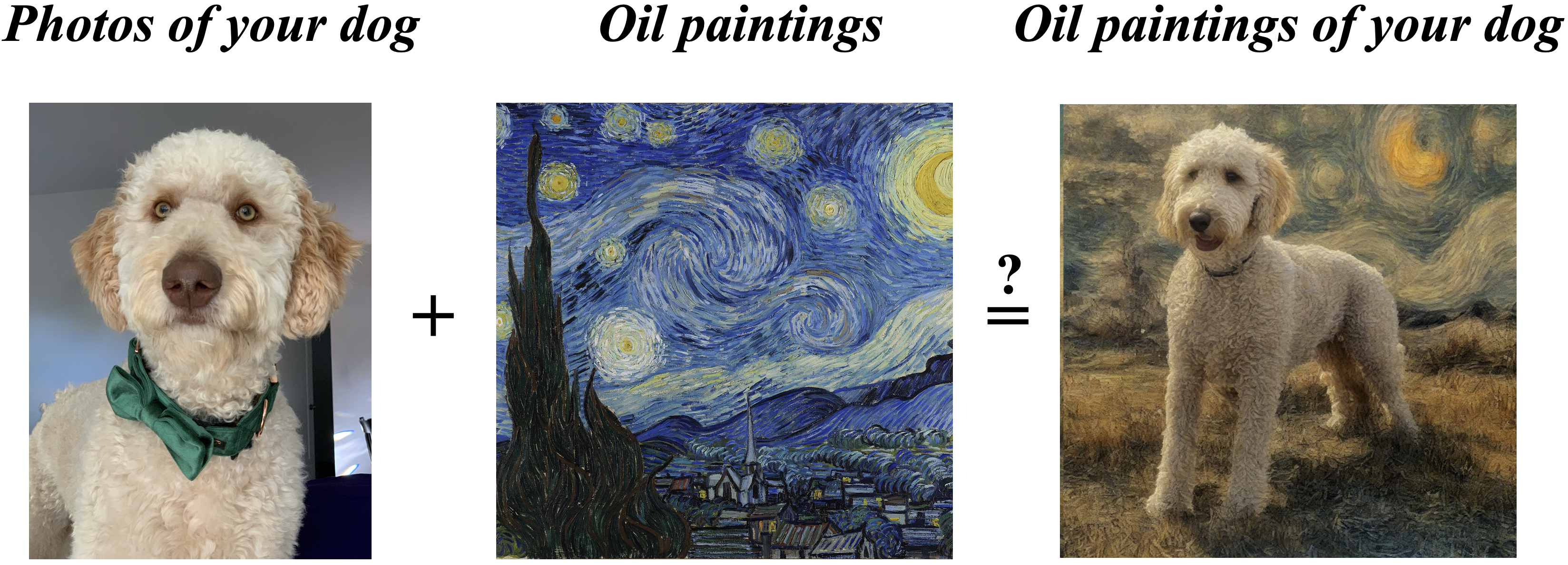

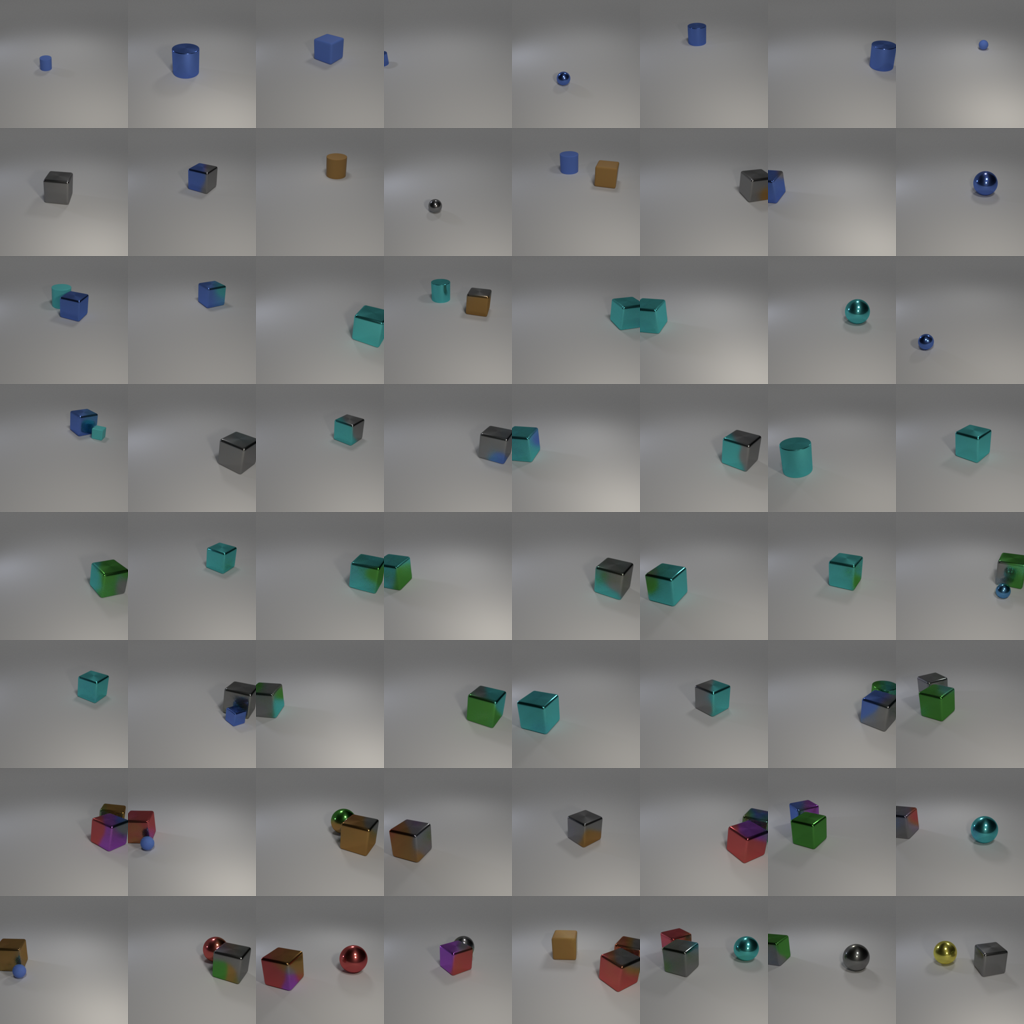

Figure 1: Composing diffusion models via score combination. Given two diffusion models, it is possible to sample in a way that composes content from one model with the style of another.

The authors then generalize this mechanism to feature spaces related to the data space by a diffeomorphism. They show that projective composition can be achieved without explicitly knowing the feature space, because the composition operator is reparameterization-equivariant.
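Reparameterization-equivariance can be checked numerically in a simplified setting of our choosing: a linear (hence diffeomorphic) change of variables $z = A x$. Scores then transform as $\nabla_z \log p_z(z) = A^{-\top} \nabla_x \log p_x(A^{-1} z)$, and because the composition operator is a linear combination of scores, composing in $z$-space matches composing in $x$-space and then transforming. The paper treats general diffeomorphisms; the Gaussians, the matrix `A`, and the helper names below are hypothetical.

```python
import numpy as np

def gaussian_score(x, mean, precision):
    """Score of N(mean, cov), given precision = inverse of cov."""
    return -precision @ (x - mean)

def compose(s_b, s_conds):
    """Assumed linear score combination: s_b + sum_i (s_i - s_b)."""
    return s_b + sum(s - s_b for s in s_conds)

# A fixed, invertible, non-orthogonal linear map (det = 2.5).
A = np.array([[2.0, 1.0],
              [0.5, 1.5]])
A_inv = np.linalg.inv(A)

# Toy Gaussians in x-space with identity covariance: background, cond 1, cond 2.
means_x = [np.zeros(2), np.array([3.0, 0.0]), np.array([0.0, -2.0])]
P_x = np.eye(2)

# The same distributions pushed through z = A x: mean A m, covariance A A^T.
means_z = [A @ m for m in means_x]
P_z = np.linalg.inv(A @ A.T)

rng = np.random.default_rng(1)
z = rng.normal(size=2)
x = A_inv @ z

# Compose directly in z-space ...
scores_z = [gaussian_score(z, m, P_z) for m in means_z]
composed_in_z = compose(scores_z[0], scores_z[1:])

# ... or compose in x-space, then map the score with A^{-T}.
scores_x = [gaussian_score(x, m, P_x) for m in means_x]
composed_via_x = A_inv.T @ compose(scores_x[0], scores_x[1:])

print(np.allclose(composed_in_z, composed_via_x))
```

For a nonlinear diffeomorphism the scores also pick up a log-determinant gradient term; this sketch avoids that by staying linear.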

Sampling Challenges

Projective composition in general feature spaces makes sampling harder: standard diffusion sampling may fail to generate the desired composition because of non-smoothness along the compositional path. The paper explains theoretically why such a composition, although correct at t=0, can be difficult to reach with conventional methods such as reverse diffusion, particularly in non-orthogonal feature spaces.
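The role of orthogonality can be illustrated with a standard fact (our illustration, not the paper's proof). If $z = A x$ and the forward process adds isotropic noise in data space, $x_t = x + \sigma \epsilon$, then $z_t = z + \sigma A \epsilon$ has noise covariance $\sigma^2 A A^\top$, which is isotropic only when $A$ is orthogonal (up to a global scale). For a non-orthogonal $A$, noising the data does not correspond to isotropic noising of the features, so intermediate-time scores in the two spaces describe different processes. The rotation angle, shear matrix, and helper names are arbitrary examples.

```python
import numpy as np

def induced_noise_cov(A: np.ndarray, sigma: float) -> np.ndarray:
    """Covariance of A @ (sigma * eps) for eps ~ N(0, I), i.e. sigma^2 * A A^T."""
    return sigma**2 * A @ A.T

def is_isotropic(cov: np.ndarray, tol: float = 1e-10) -> bool:
    """True if cov is a scalar multiple of the identity."""
    scale = cov[0, 0]
    return np.allclose(cov, scale * np.eye(cov.shape[0]), atol=tol)

theta = 0.7
rotation = np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta),  np.cos(theta)]])  # orthogonal
shear = np.array([[1.0, 0.8],
                  [0.0, 1.0]])                          # not orthogonal

for name, A in [("rotation", rotation), ("shear", shear)]:
    cov = induced_noise_cov(A, sigma=0.5)
    print(name, "gives isotropic feature-space noise:", is_isotropic(cov))
```

The rotation preserves isotropic noise while the shear correlates the noise across feature coordinates.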

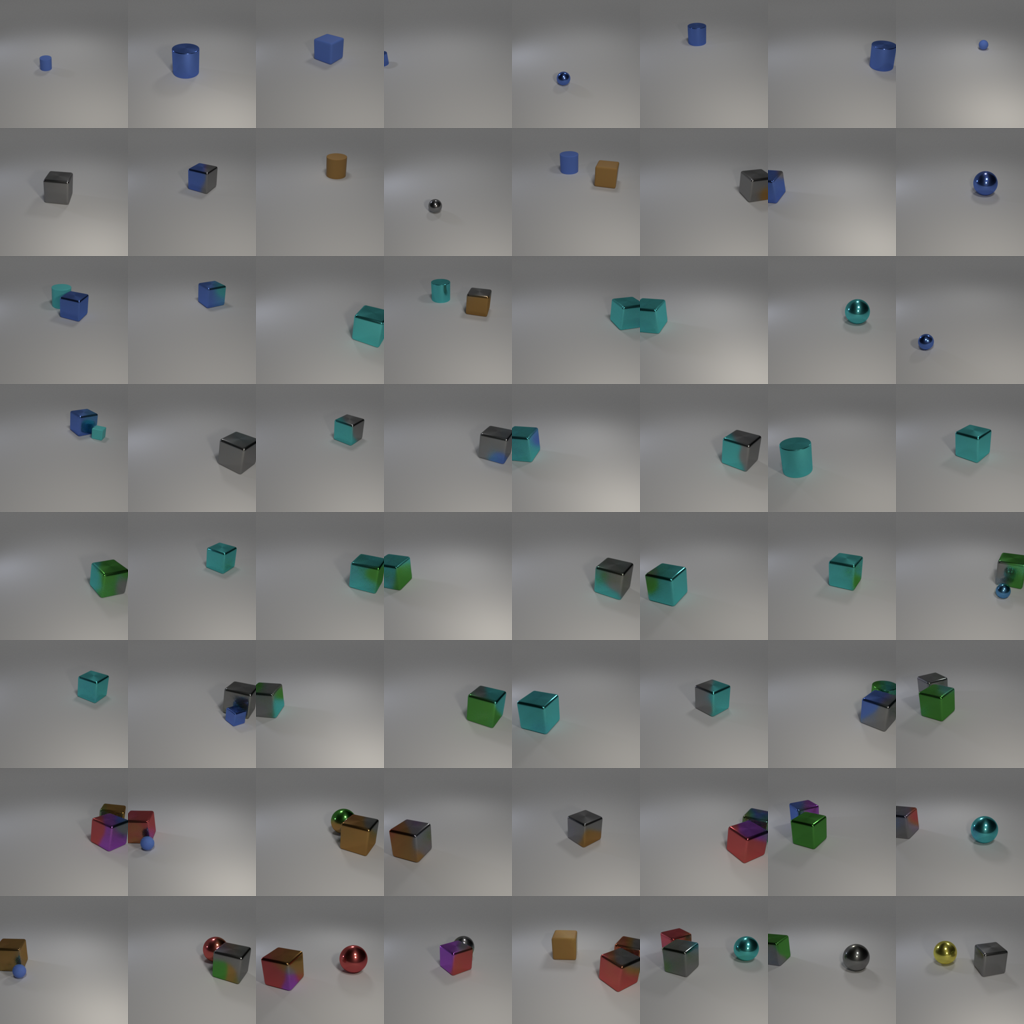

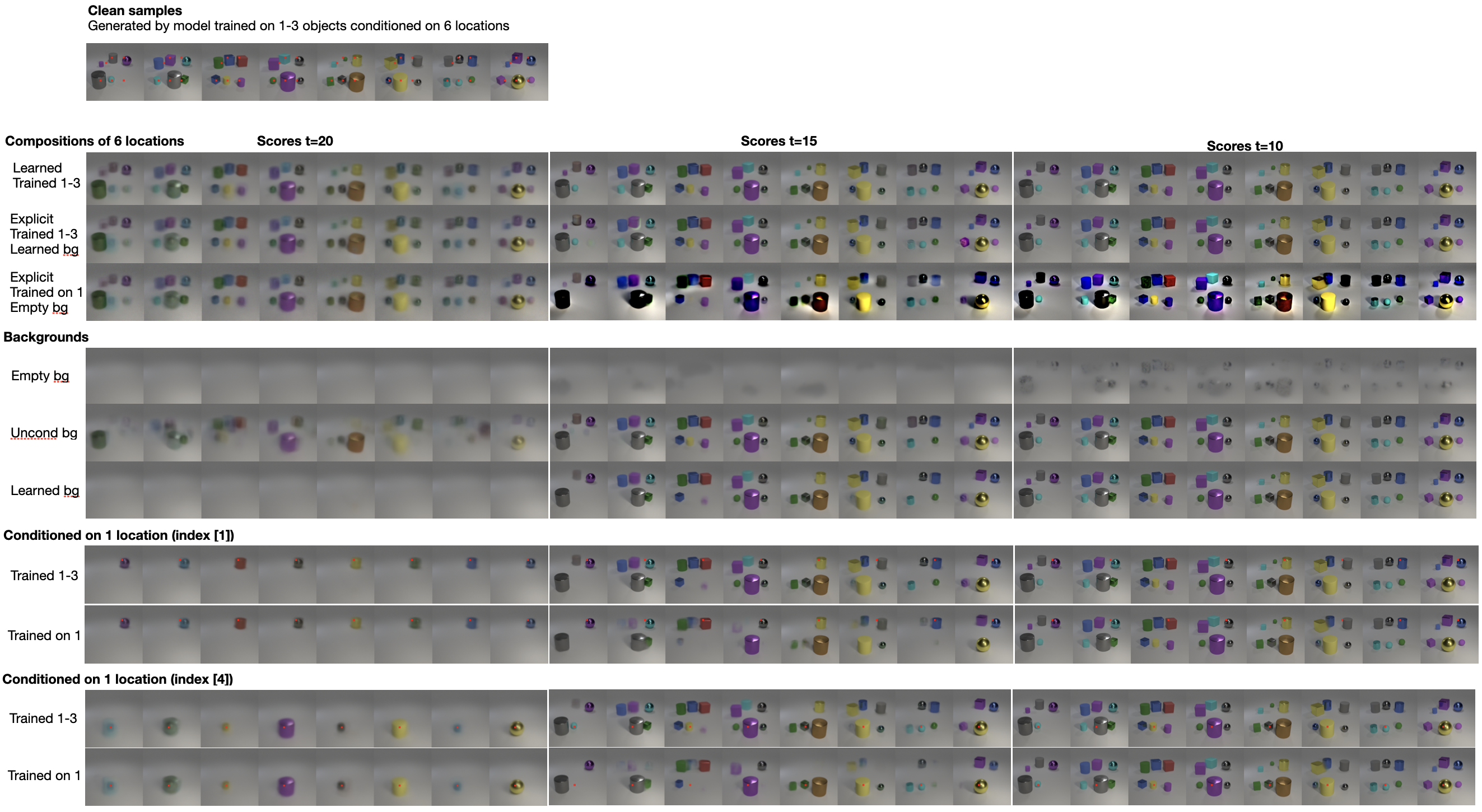

Figure 2: Compositions of models trained on multiple objects with a learned background. The model is tested for length-generalization from 1 to 10 objects.

Practical Implications

The paper advises the use of orthogonal transformations for feasible sampling and highlights the importance of choosing the correct background distribution for successful composition. It examines empirical cases where existing compositional methods either succeeded or failed and proposes a heuristic based on mean vector orthogonality in a disentangled feature space, such as CLIP. This heuristic provides a practical framework to predict the effectiveness of score-based compositions.
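A rough sketch of how such an orthogonality check might look, assuming access to feature embeddings (for example, CLIP image embeddings) of samples from each distribution. The exact recipe is our assumption, not necessarily the paper's: subtract the background mean from each conditional's mean feature vector and report pairwise cosine similarities, reading values near zero as "nearly orthogonal". The synthetic arrays stand in for real embeddings, and `mean_direction_cosines` is a hypothetical helper.

```python
import numpy as np

def mean_direction_cosines(background_feats, cond_feats):
    """Pairwise cosine similarities between (conditional mean - background mean)
    vectors; the diagonal is set to 1."""
    mu_b = background_feats.mean(axis=0)
    dirs = [f.mean(axis=0) - mu_b for f in cond_feats]
    k = len(dirs)
    cos = np.eye(k)
    for i in range(k):
        for j in range(i + 1, k):
            c = dirs[i] @ dirs[j] / (np.linalg.norm(dirs[i]) * np.linalg.norm(dirs[j]))
            cos[i, j] = cos[j, i] = c
    return cos

rng = np.random.default_rng(0)
dim, n = 16, 2000
background = rng.normal(size=(n, dim))

# Synthetic stand-ins: each conditional shifts the background along some direction.
e = np.eye(dim)
cond_a = rng.normal(size=(n, dim)) + 2.0 * e[0]   # along axis 0
cond_b = rng.normal(size=(n, dim)) + 2.0 * e[1]   # along axis 1, orthogonal to a
cond_c = rng.normal(size=(n, dim)) + 2.0 * (e[0] + e[1]) / np.sqrt(2)  # overlaps a and b

cos = mean_direction_cosines(background, [cond_a, cond_b, cond_c])
print(np.round(cos, 2))
```

Under this heuristic, a nearly orthogonal pair such as (a, b) would suggest a more favorable composition than an overlapping pair such as (a, c). Real use would replace the synthetic arrays with embeddings of actual samples.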

Conclusion

The authors contribute a deeper theoretical understanding of diffusion model composition, identifying structural properties that allow for successful and meaningful compositions. This foundation can guide future research on, and practical use of, composition with pretrained diffusion models.

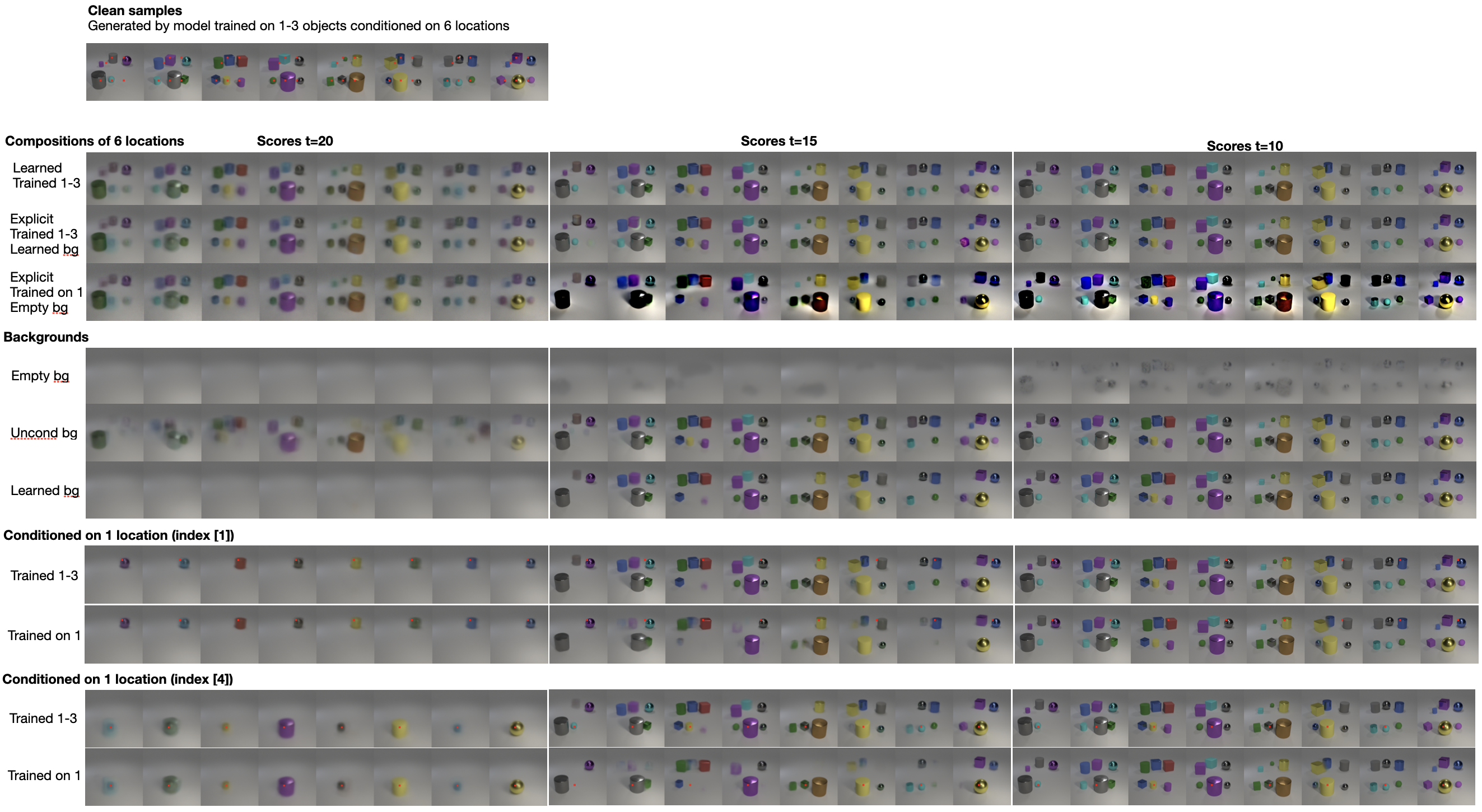

Figure 3: Score visualizations at various noise levels. Models are trained on different object settings with learned and explicit compositions.