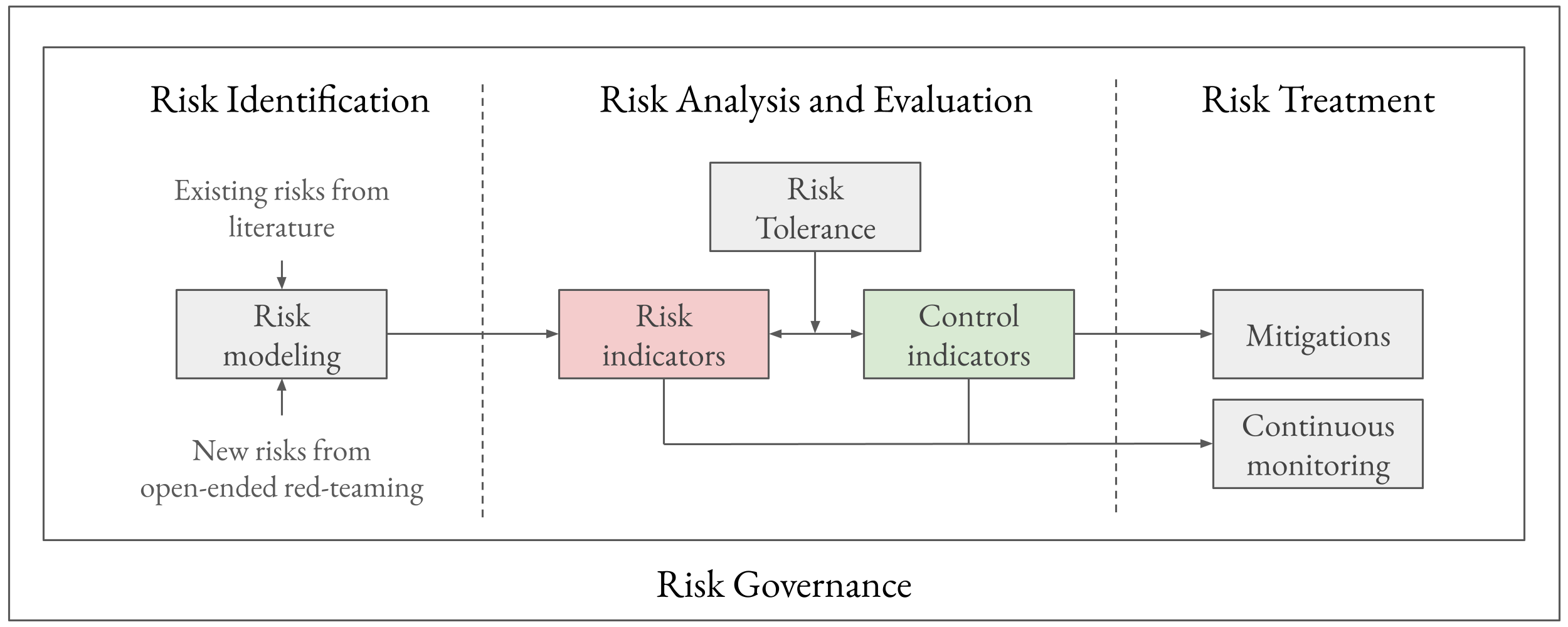

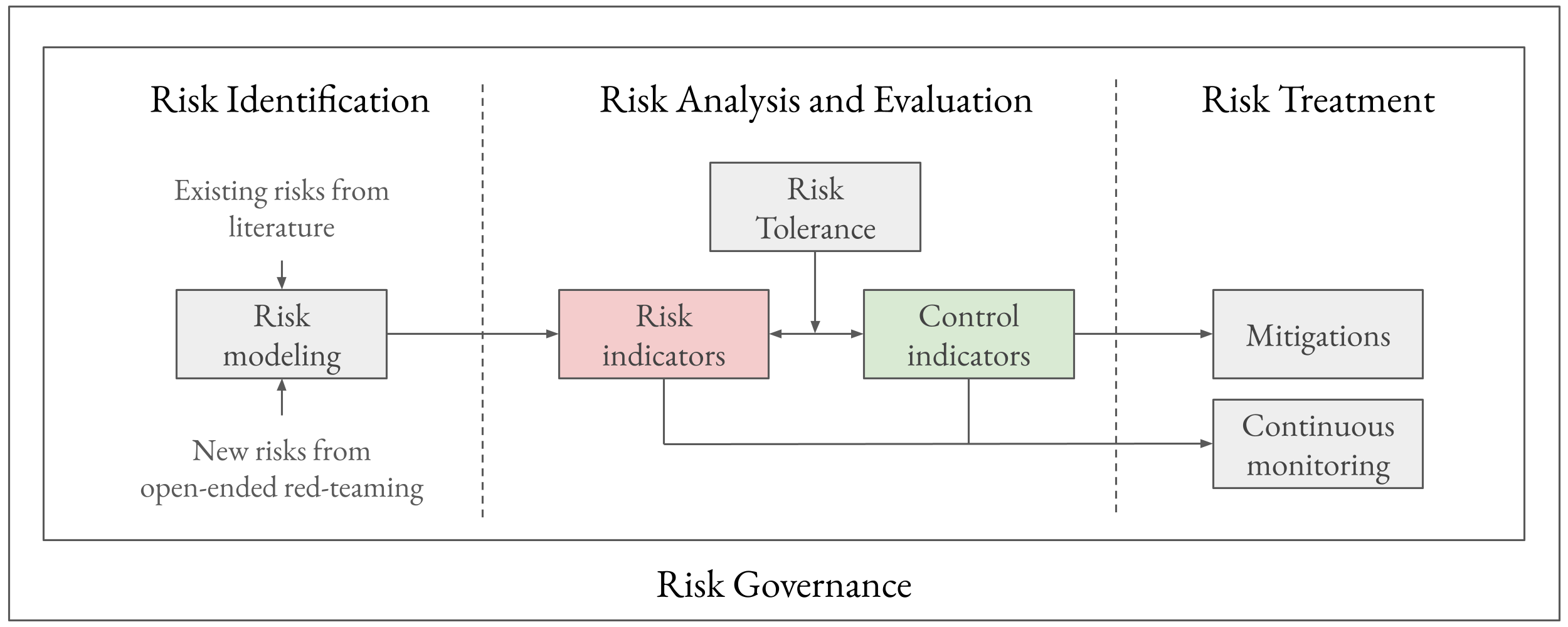

- The paper presents a comprehensive framework for managing AI risks by integrating risk identification, evaluation, treatment, and governance.

- It employs open-ended red teaming and quantifiable measures like KRIs and KCIs to define risk tolerance and monitor risk levels.

- The framework bridges current AI practices with established methods from high-risk industries such as aviation and nuclear power to ensure robust mitigation.

A Frontier AI Risk Management Framework: Bridging the Gap Between Current AI Practices and Established Risk Management

Introduction

The need for a robust risk management framework in the AI domain has become increasingly evident as AI systems advance. Despite recent initiatives by AI companies to implement safety frameworks, a significant gap persists between current AI practices and established risk management methods from other high-risk industries. The framework presented in this paper aims to close this gap by integrating traditional risk management principles with AI-specific considerations. Its four core components (risk identification, risk analysis and evaluation, risk treatment, and risk governance) draw on industries such as aviation and nuclear power, giving AI developers structured guidelines for managing emerging risks throughout an AI system's lifecycle.

Risk Identification

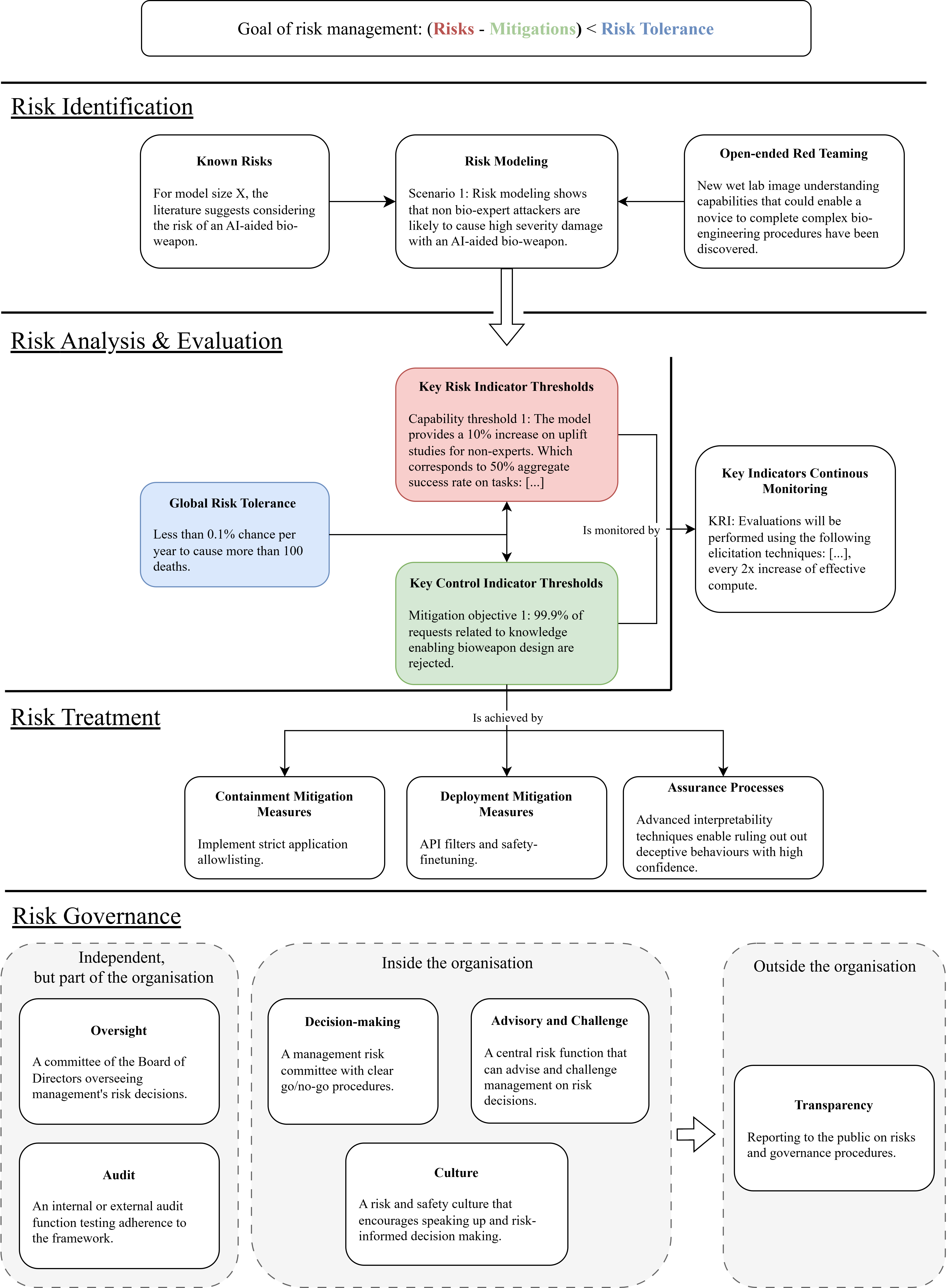

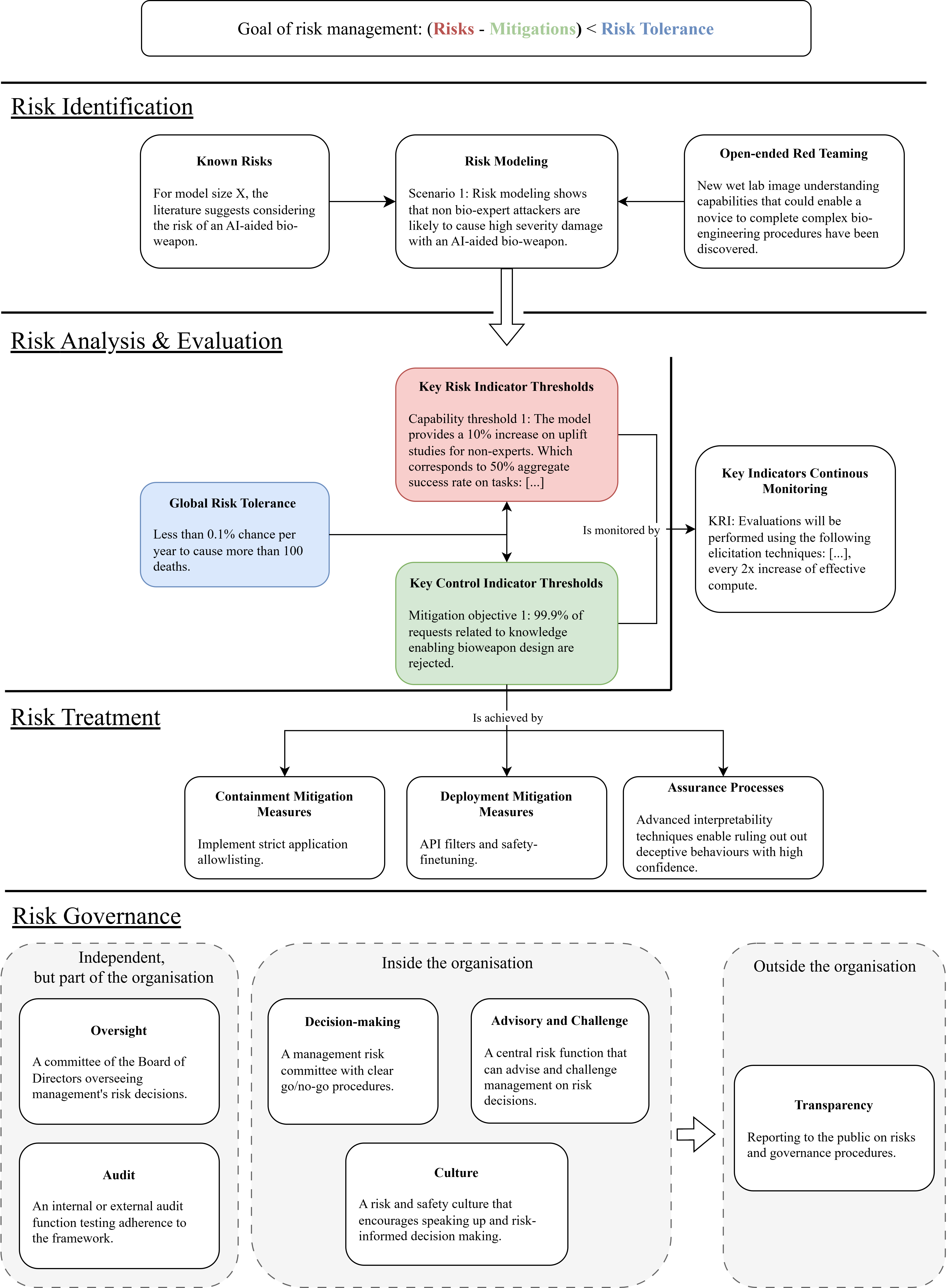

Risk identification in this framework involves classifying known risks, identifying unknown risks through extensive red teaming, and modeling scenarios that could lead to potential harms. Open-ended red teaming plays a crucial role, focusing on unforeseen risks that are not restricted to predefined categories. This approach allows developers to adapt risk assessments as new hazards or capabilities emerge, including those discovered during inference rather than training.

Figure 1: Key components of the frontier AI risk management framework.

Risk Analysis and Evaluation

A critical element of the framework is setting a risk tolerance, that is, an explicit statement of the level of risk considered acceptable. Risk tolerance is operationalized through Key Risk Indicators (KRIs) and Key Control Indicators (KCIs), which serve as measurable proxies for risk and for the effectiveness of mitigations, respectively. KRI and KCI thresholds must be defined so that risks remain below the tolerance level. Defining them requires detailed risk modeling that connects measurable indicator values to the estimated level of risk.
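One way to make the relationship between KRIs and KCIs concrete is the minimal sketch below. It assumes, purely for illustration, that each KRI threshold is paired with a KCI threshold that becomes mandatory once the KRI threshold is crossed. The names `ThresholdPair`, `required_kci`, and `within_tolerance`, and the numeric scales, are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdPair:
    """Hypothetical pairing of a KRI threshold with the KCI level it triggers."""
    kri_threshold: float  # risk-indicator level at which the requirement applies
    kci_threshold: float  # minimum mitigation effectiveness required from then on


def required_kci(kri_value: float, pairs: list[ThresholdPair]) -> float:
    """Return the strictest KCI threshold triggered by the measured KRI value."""
    triggered = [p.kci_threshold for p in pairs if kri_value >= p.kri_threshold]
    # If no KRI threshold has been crossed, no KCI requirement applies (0.0).
    return max(triggered, default=0.0)


def within_tolerance(kri_value: float, kci_value: float,
                     pairs: list[ThresholdPair]) -> bool:
    """True if measured mitigation effectiveness meets the triggered requirement."""
    return kci_value >= required_kci(kri_value, pairs)


# Illustrative values only (assumed scales in [0, 1]).
PAIRS = [ThresholdPair(0.2, 0.90), ThresholdPair(0.5, 0.99)]
```

In this sketch, a higher KRI reading activates a stricter KCI requirement, so the same mitigation can be sufficient at one capability level and insufficient at the next.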

Risk Treatment

Risk treatment involves implementing mitigation strategies to control risk levels, ensuring that mitigation meets pre-defined KCI thresholds. This includes containment measures to control model access, deployment measures to prevent misuse, and the development of assurance processes for high-capability models. Continuous monitoring is necessary to adjust mitigations in response to KRI measurements and to maintain compliance with the risk tolerance.
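The check that mitigations meet their KCI thresholds could be sketched as follows. This is an illustrative sketch, not the paper's procedure: the `Mitigation` record, the three category labels, and the `treatment_decision` function are assumptions introduced here, and the example measurements are invented.

```python
from dataclasses import dataclass


@dataclass
class Mitigation:
    """Hypothetical record of one mitigation and its KCI status."""
    name: str
    category: str        # assumed labels: "containment", "deployment", "assurance"
    kci_measured: float  # measured effectiveness of the mitigation
    kci_required: float  # pre-defined KCI threshold it must meet


def shortfalls(mitigations: list[Mitigation]) -> list[str]:
    """Names of mitigations whose measured KCI is below the required threshold."""
    return [m.name for m in mitigations if m.kci_measured < m.kci_required]


def treatment_decision(mitigations: list[Mitigation]) -> str:
    """Proceed only if every mitigation meets its KCI threshold."""
    gaps = shortfalls(mitigations)
    if not gaps:
        return "proceed"
    return "strengthen: " + ", ".join(gaps)


# Invented example measurements.
EXAMPLE = [
    Mitigation("weight access controls", "containment", 0.97, 0.95),
    Mitigation("misuse refusal filter", "deployment", 0.88, 0.90),
]
```

Re-running such a check whenever a new KRI measurement arrives is one simple way to express the continuous monitoring described above.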

Risk Governance

Effective risk governance is essential to maintain robust risk management practices. It establishes clear roles and responsibilities within organizations and incorporates internal and external oversight mechanisms. Governance structures include risk ownership, advisory functions, risk culture, board-level oversight, independent audits, and transparency in risk reporting. These components together create a structured decision-making environment to ensure that risks are managed consistently and rigorously.

Figure 2: Our complete risk management framework along with examples illustrating each component.

Practical Implementation and Lifecycle

Implementing this risk management framework across the AI lifecycle (planning, training, and post-deployment) allows risks to be anticipated and mitigated in advance. Key risk management activities should take place during the planning phase, which limits the influence of commercial pressures on those decisions and ensures that mitigations are ready when thresholds are crossed. During training and deployment, the framework calls for continuous risk assessment and for adjusting mitigations based on ongoing KRI monitoring.
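As a rough illustration of KRI monitoring during training, the sketch below scans a sequence of KRI readings, one per checkpoint, and reports the first checkpoint at which a threshold fixed during planning is crossed. The function name `first_crossing` and the idea of one reading per checkpoint are assumptions made for this example.

```python
from typing import Optional, Sequence


def first_crossing(kri_readings: Sequence[float],
                   kri_threshold: float) -> Optional[int]:
    """Index of the first reading at or above the threshold, or None if none is."""
    for index, value in enumerate(kri_readings):
        if value >= kri_threshold:
            return index
    return None
```

Because the threshold is set before training starts, the response to a crossing (for example, applying mitigations prepared in the planning phase) does not have to be decided under time pressure.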

Conclusion

This comprehensive risk management framework provides a structured approach for AI developers to enhance risk management practices. It emphasizes explicit risk tolerance setting and integrates lessons from other high-risk fields. While the framework lays a foundational structure, ongoing research is required to advance risk modeling, quantitative assessment, and the development of robust assurance processes for increasingly capable AI systems. This framework aims to align AI risk management with the pace of AI advancements, ensuring societal benefits while mitigating potential harms.