- The paper systematically surveys LLM-based techniques for detecting software vulnerabilities, screening over 500 papers and analyzing 58 of the most relevant in depth.

- It reviews techniques such as AST-based code representations, prompt engineering, and parameter-efficient fine-tuning that are used to improve detection accuracy.

- It highlights challenges such as dataset limitations and complex code semantics, and outlines future research directions, including repository-level analysis.

LLMs in Software Security: A Survey of Vulnerability Detection Techniques and Insights

Introduction to LLM-based Vulnerability Detection

The paper "LLMs in Software Security: A Survey of Vulnerability Detection Techniques and Insights" examines how LLMs are used to detect software vulnerabilities. Traditional static and dynamic analysis tools struggle with the complexity and scale of modern software systems, which makes them inefficient and prone to high false positive rates. LLMs such as GPT, BERT, and CodeBERT offer an alternative: they can recognize vulnerable code patterns, analyze code structure, and suggest repairs. Building on advances in natural language processing and the transformer architecture, these models can be applied to vulnerability detection at scale.

Key Contributions and Research Questions

The paper systematically reviews the application of LLMs in vulnerability detection, structured around several key research questions:

- LLM Applications: It examines which LLMs have been applied to vulnerability detection.

- Benchmarking: It identifies relevant benchmarks, datasets, and evaluation metrics used in LLM-based vulnerability detection.

- Techniques: It explores specific techniques used with LLMs to improve detection effectiveness.

- Challenges and Directions: It identifies open challenges and potential directions for future research.
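The benchmarking question covers evaluation metrics. The sketch below computes the metrics most often reported for binary classifiers. It assumes the common setting in which each code sample is labeled vulnerable (1) or not (0). `binary_metrics` is a hypothetical helper, and the survey may catalog additional metrics.

```python
def binary_metrics(y_true, y_pred):
    """Compute common binary-classification metrics (1 = vulnerable, 0 = not vulnerable)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # vulnerable, flagged
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # safe, flagged (false alarm)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # vulnerable, missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # safe, not flagged
    # Guard every ratio against division by zero when a class is absent.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    fpr = fp / (fp + tn) if fp + tn else 0.0
    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "fpr": fpr}

# Hypothetical ground-truth labels and model predictions for eight functions.
labels = [1, 0, 1, 1, 0, 0, 1, 0]
preds = [1, 1, 0, 1, 0, 1, 1, 0]
print(binary_metrics(labels, preds))
```

The toy labels produce 3 true positives, 2 false positives, 1 false negative, and 2 true negatives. That gives a precision of 0.6 and a recall of 0.75. The false positive rate is included because high false-alarm rates are a key weakness attributed to traditional analysis tools.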

Survey Methodology

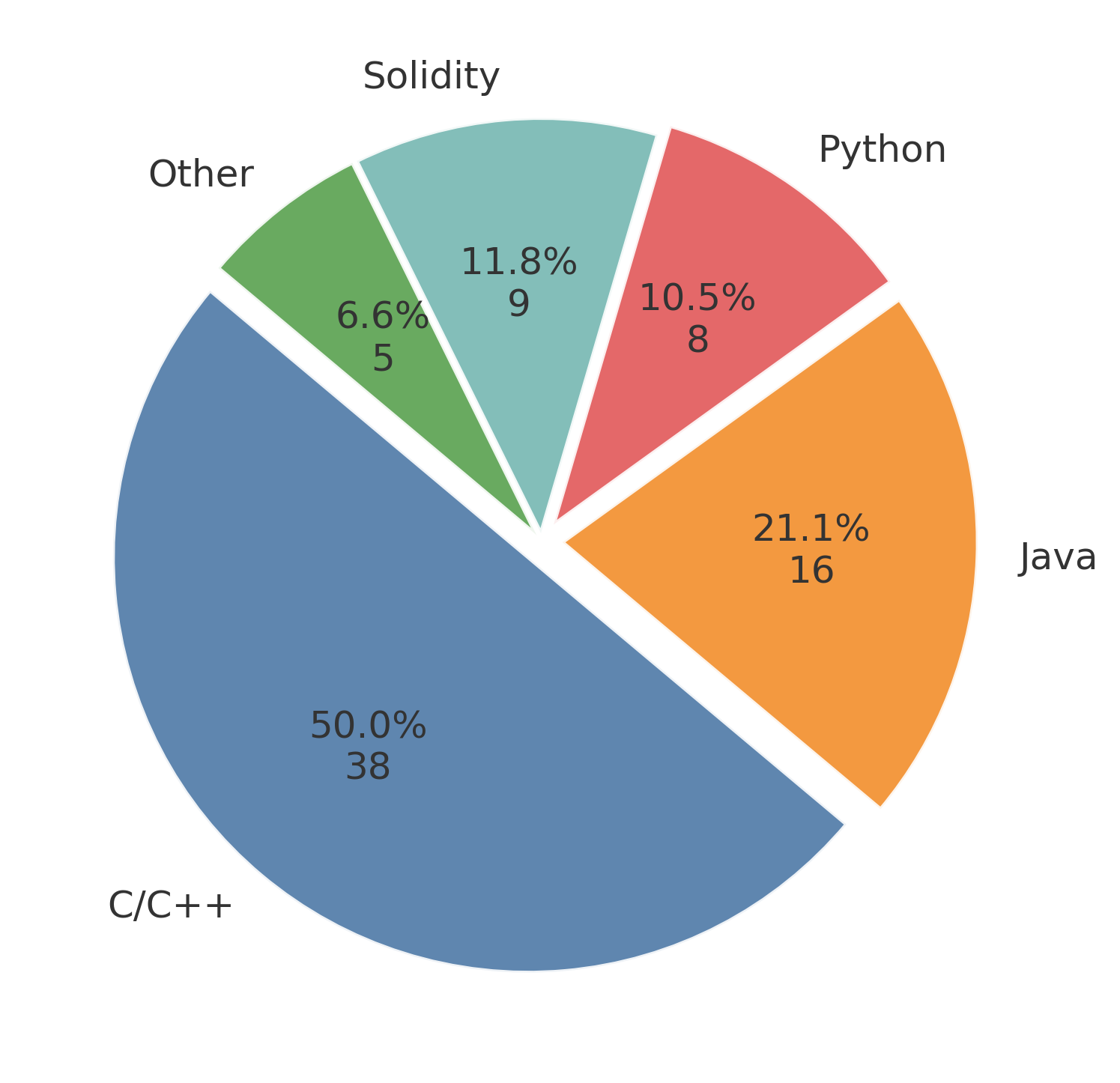

The survey draws on over 500 papers and focuses on 58 highly relevant studies published between 2019 and 2024. These studies predominantly target C/C++, Java, and Solidity, languages that are widely used and prone to security vulnerabilities. The survey excludes traditional machine learning approaches and focuses solely on approaches that use modern LLMs.

Dataset Analysis

The survey also reviews the current state of dataset availability for LLM-based vulnerability detection.

Techniques for LLM-based Vulnerability Detection

- Preprocessing Techniques:

  - AST and Graph Representations: Abstract Syntax Trees (ASTs) help models capture code structure and semantics. Data-flow and control-flow graphs add context by showing how values move and which execution paths are possible.

  - Program Slicing: Removes statements unrelated to a point of interest, such as a potentially dangerous call. This reduces redundant code so the LLM analyzes only the relevant sections.

- Prompt Engineering:

  - Chain-of-Thought Prompting: Improves reasoning accuracy by guiding the model through intermediate reasoning steps before it reaches a verdict.

  - Few-shot Learning: Adds a small number of labeled examples to the prompt to adapt the LLM to vulnerability-specific tasks.

- Fine-tuning Strategies:

  - Parameter-Efficient Fine-Tuning (PEFT): These methods adapt LLMs to new tasks by updating only a small subset of parameters (or a small number of added ones). This costs far less compute than full fine-tuning.
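The two preprocessing ideas can be illustrated with Python's standard-library `ast` module. This is a minimal sketch under strong assumptions:

- The analyzed code is Python. The surveyed studies mostly target C/C++, Java, and Solidity, which would need a parser for those languages.
- `ast_outline` simply linearizes AST node types as one possible model input.
- `backward_slice` is a hypothetical, naive data-flow slice over straight-line, top-level statements. It ignores control dependences, aliasing, and calls.
- `execute` stands in for a hypothetical SQL sink.

```python
import ast

# Hypothetical snippet: only some statements influence the sink call.
SOURCE = """
user = input()
n = len(user)
log = "request received"
query = "SELECT * FROM t WHERE name = '" + user + "'"
print(log)
execute(query)
"""

def ast_outline(source):
    """Linearize the AST into node-type names (breadth-first) as a compact model input."""
    return [type(node).__name__ for node in ast.walk(ast.parse(source))]

def names(node, ctx):
    """Collect variable names appearing in `node` with the given context (ast.Load or ast.Store)."""
    return {n.id for n in ast.walk(node)
            if isinstance(n, ast.Name) and isinstance(n.ctx, ctx)}

def backward_slice(source, sink):
    """Naive backward slice: keep statements the last call to `sink` depends on via data flow.

    Only straight-line, top-level statements are considered; control flow,
    aliasing, and interprocedural effects are deliberately ignored.
    Raises ValueError if `sink` is never called.
    """
    stmts = ast.parse(source).body
    sink_idx = max(
        i for i, s in enumerate(stmts)
        if any(isinstance(c, ast.Call) and isinstance(c.func, ast.Name) and c.func.id == sink
               for c in ast.walk(s))
    )
    needed = names(stmts[sink_idx], ast.Load)  # variables the sink reads
    kept = [stmts[sink_idx]]
    for stmt in reversed(stmts[:sink_idx]):
        defined = names(stmt, ast.Store)
        if defined & needed:
            # This statement defines something the sink needs; keep it and
            # replace the satisfied names with the ones it reads in turn.
            kept.append(stmt)
            needed = (needed - defined) | names(stmt, ast.Load)
    return [ast.unparse(s) for s in reversed(kept)]

print(ast_outline(SOURCE)[:7])
for line in backward_slice(SOURCE, "execute"):
    print(line)
```

Here the slice keeps `user = input()`, the `query = ...` concatenation, and `execute(query)`. It drops the unrelated `n`, `log`, and `print` statements, which is the kind of noise reduction program slicing aims for.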
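Few-shot and chain-of-thought prompting are often combined in one prompt. The sketch below assembles hypothetical labeled C demonstrations, each with worked reasoning. It then asks the model to reason step by step about the target before answering. `build_prompt`, `parse_verdict`, the examples, and the answer format are all illustrative assumptions. The code only builds and parses strings and does not call any LLM API.

```python
import re
from dataclasses import dataclass

@dataclass
class Example:
    code: str       # demonstration snippet
    reasoning: str  # worked reasoning shown to the model (the chain of thought)
    label: str      # "VULNERABLE" or "SAFE"

# Hypothetical demonstrations in C, since C/C++ dominate the surveyed studies.
EXAMPLES = [
    Example(
        code="char buf[8];\nstrcpy(buf, user_input);",
        reasoning="strcpy copies user_input into an 8-byte buffer without a length "
                  "check, so a longer input overflows buf.",
        label="VULNERABLE",
    ),
    Example(
        code="char buf[8];\nsnprintf(buf, sizeof buf, \"%s\", user_input);",
        reasoning="snprintf writes at most sizeof buf bytes including the terminator, "
                  "so the write stays within the buffer.",
        label="SAFE",
    ),
]

def build_prompt(target_code, examples=EXAMPLES):
    """Assemble a few-shot, chain-of-thought prompt for binary vulnerability detection."""
    parts = ["You are a security auditor. Decide whether each C snippet is vulnerable."]
    for i, ex in enumerate(examples, start=1):
        parts.append(f"### Example {i}\nCode:\n{ex.code}\n"
                     f"Reasoning: {ex.reasoning}\nAnswer: {ex.label}")
    # End with an open "Reasoning:" slot so the model reasons before answering.
    parts.append("### Target\nCode:\n" + target_code + "\n"
                 "Think step by step about data flow and bounds, then finish with "
                 "'Answer: VULNERABLE' or 'Answer: SAFE'.\nReasoning:")
    return "\n\n".join(parts)

def parse_verdict(response):
    """Return the last 'Answer:' label in a model response, or None if absent."""
    matches = re.findall(r"Answer:\s*(VULNERABLE|SAFE)", response)
    return matches[-1] if matches else None

print(build_prompt("int a[4];\nfor (int i = 0; i <= 4; i++) a[i] = 0;"))
```

The open `Reasoning:` slot at the end nudges the model to produce its chain of thought before the verdict. The fixed `Answer:` format makes the final label easy to extract.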
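LoRA (low-rank adaptation) is one widely used PEFT method, chosen here purely for illustration. It freezes a pretrained weight matrix W of shape d × k and trains only a low-rank update ΔW = (α/r)·B·A. Here B has shape d × r and A has shape r × k, so one layer trains r(d + k) parameters instead of dk. The pure-Python sketch below shows the forward pass and the parameter count. The layer sizes, rank r, and scaling alpha are arbitrary assumptions, and real systems would use a tensor library rather than lists.

```python
import random

def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

class LoRALinear:
    """Frozen linear map W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, weight, r=2, alpha=4.0, seed=0):
        self.weight = weight  # d x k, frozen (never updated)
        d, k = len(weight), len(weight[0])
        rng = random.Random(seed)
        # A (r x k) gets a small random init; B (d x r) starts at zero,
        # so the adapted layer initially equals the frozen one.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d)]
        self.scale = alpha / r

    def forward(self, x):
        base = matvec(self.weight, x)
        delta = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, delta)]

    def trainable_params(self):
        """Only A and B are trained."""
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])

def param_counts(d, k, r):
    """Parameters updated for one d x k weight: (full fine-tuning, LoRA)."""
    return d * k, r * (d + k)

layer = LoRALinear([[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]], r=2)
print(layer.forward([1.0, 2.0, 3.0]))  # equals W @ x because B starts at zero
full, lora = param_counts(4096, 4096, 8)
print(full, lora, f"{lora / full:.2%}")
```

With the assumed d = k = 4096 and r = 8, LoRA trains 65,536 parameters for that layer, compared with 16,777,216 for full fine-tuning. That is about 0.39%.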

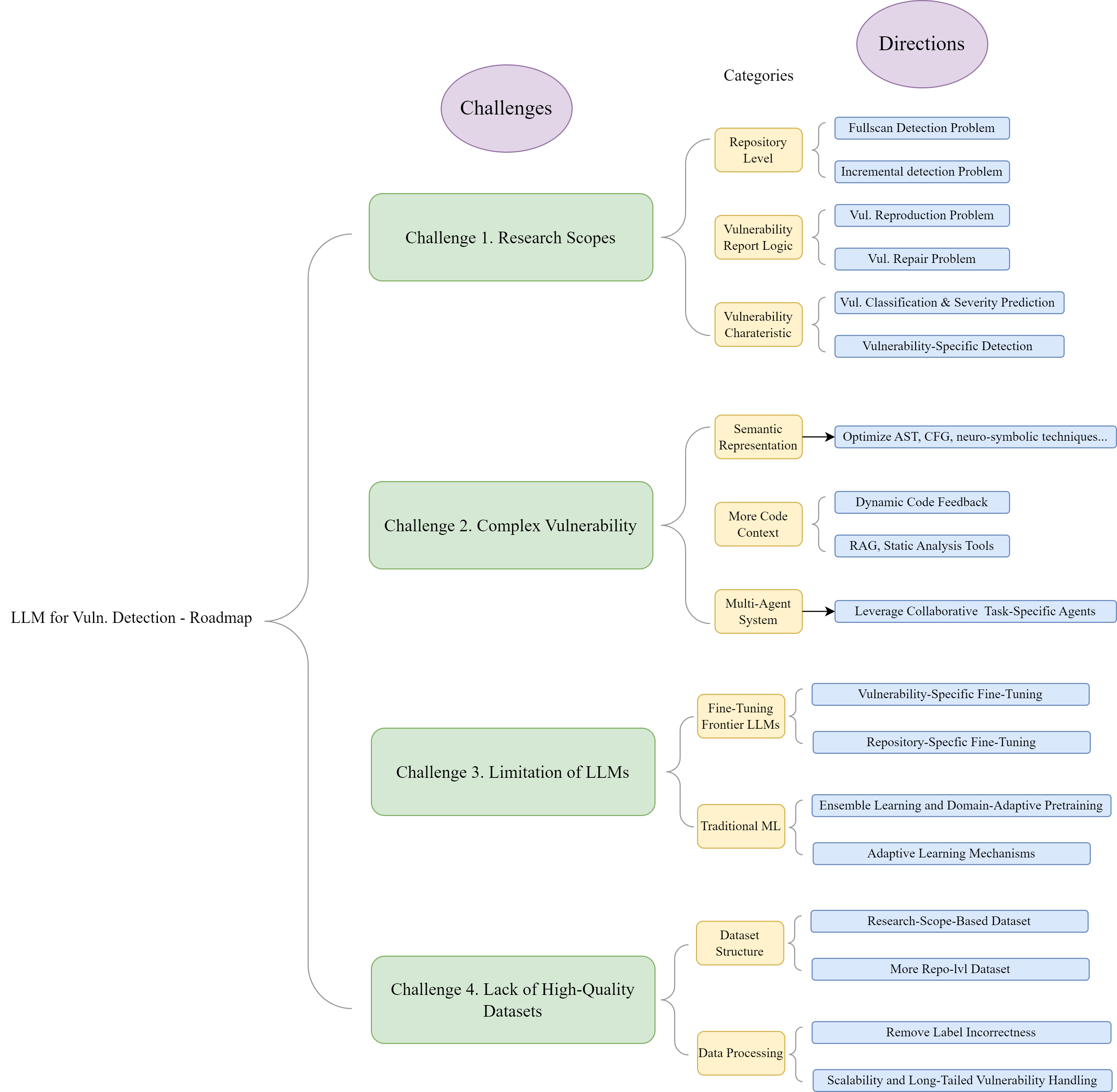

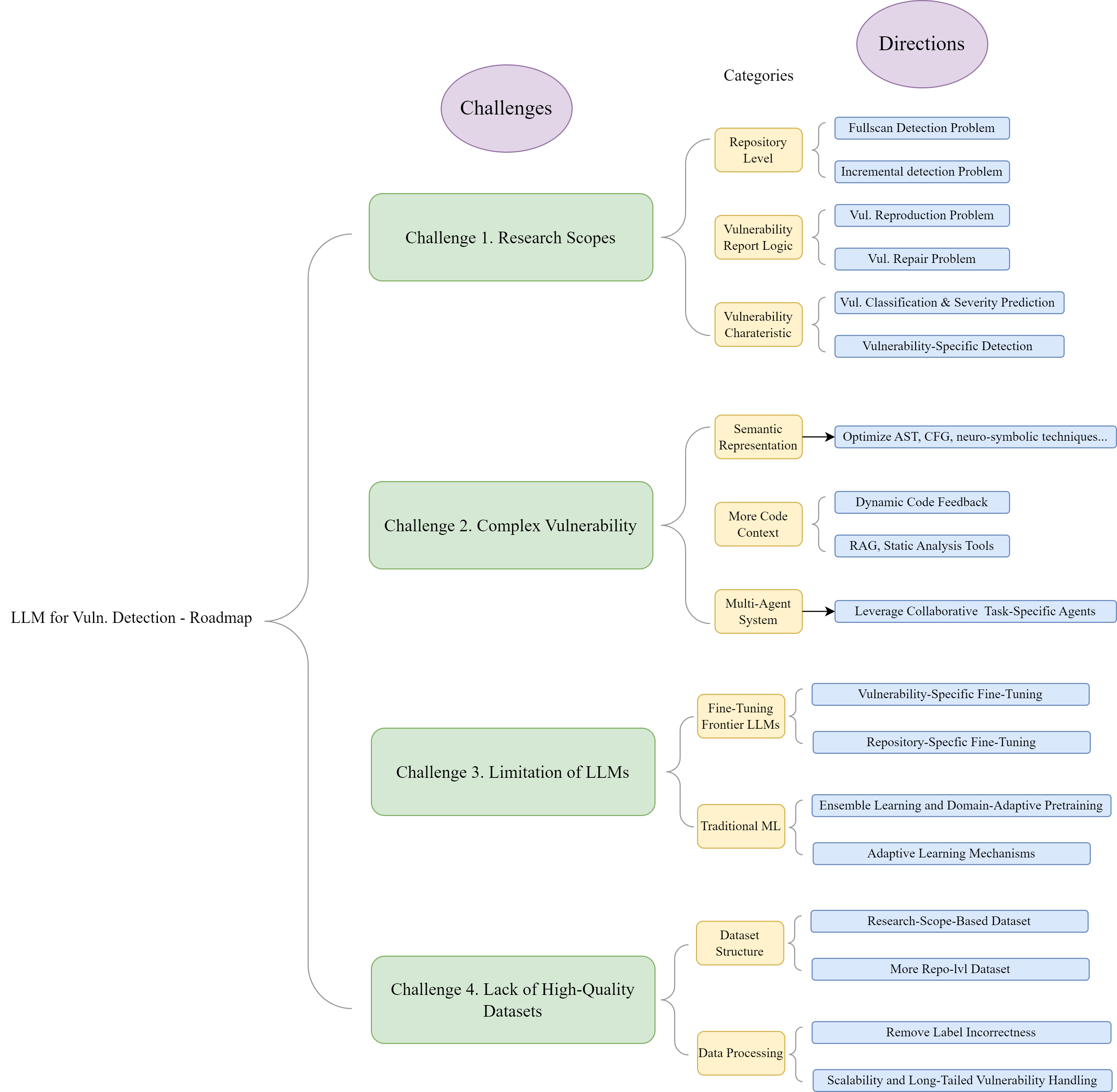

Challenges and Future Directions

- Scope of Research Problems: Most studies frame vulnerability detection as a binary classification task (vulnerable or not). Future research should address broader and more practical problems, such as repository-level analysis, vulnerability reproduction, and repair.

- Complexity in Code Semantics: Handling complex code semantics will require improved code representations and dynamic retrieval of relevant context.

- Intrinsic LLM Limitations: Limited explainability and robustness hinder real-world adoption. Possible remedies include fine-tuning more capable models and combining models through ensemble learning.

- Dataset Limitations: Comprehensive, high-quality datasets that reflect how real-world software evolves are urgently needed.

Figure 2: Challenges and Potential Directions.
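One simple, static reading of dynamic context retrieval is to fetch, on demand, the definitions of functions that the code under analysis calls. A repository-level prompt then includes the relevant context. The sketch below makes these assumptions:

- A tiny in-memory Python repository, where `REPO`, `handle`, `sanitize`, `run_query`, and `db_exec` are all hypothetical.
- Only direct calls by simple name are followed, up to a fixed depth.
- Methods, imports, and name collisions across files are ignored.

```python
import ast

# Hypothetical repository: file name -> source text.
REPO = {
    "handler.py": "def handle(req):\n    name = sanitize(req)\n    return run_query(name)\n",
    "util.py": ("def sanitize(s):\n    return s.strip()\n\n"
                "def run_query(q):\n    return db_exec('SELECT ' + q)\n"),
}

def index_functions(repo):
    """Map each top-level function name to its source text across all files."""
    index = {}
    for source in repo.values():
        for node in ast.parse(source).body:
            if isinstance(node, ast.FunctionDef):
                index[node.name] = ast.get_source_segment(source, node)
    return index

def called_names(func_source):
    """Names of functions called by simple name (not attribute calls) in a function."""
    return {n.func.id for n in ast.walk(ast.parse(func_source))
            if isinstance(n, ast.Call) and isinstance(n.func, ast.Name)}

def retrieve_context(target, repo, depth=2):
    """Collect definitions of repository functions reachable from `target` within `depth` call hops."""
    index = index_functions(repo)
    seen, frontier, context = {target}, [target], []
    for _ in range(depth):
        next_frontier = []
        for name in frontier:
            for callee in sorted(called_names(index[name])):
                # Skip callees defined outside the repository (e.g. db_exec) or already collected.
                if callee in index and callee not in seen:
                    seen.add(callee)
                    context.append(index[callee])
                    next_frontier.append(callee)
        frontier = next_frontier
    return context

for snippet in retrieve_context("handle", REPO):
    print(snippet, end="\n\n")
```

The retrieved definitions could be prepended to the target function in a prompt. Bounding `depth` keeps the context within the model's input limit.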
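Ensemble learning, listed among the remedies for intrinsic LLM limitations, can be as simple as majority voting over several models' verdicts. In this minimal sketch, `majority_vote` and its tie-breaking rule are assumptions. Ties go to "VULNERABLE" on the premise that a missed vulnerability usually costs more than a false alarm. That is a design choice, not something the survey prescribes.

```python
from collections import Counter

def majority_vote(verdicts, tie_break="VULNERABLE"):
    """Combine per-model verdicts ("VULNERABLE" or "SAFE") by majority vote.

    Returns (label, agreement), where agreement is the winning label's vote share.
    A tie between the top two labels is resolved to `tie_break`.
    """
    if not verdicts:
        raise ValueError("need at least one verdict")
    ranked = Counter(verdicts).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return tie_break, ranked[0][1] / len(verdicts)
    label, votes = ranked[0]
    return label, votes / len(verdicts)

# Hypothetical verdicts from three different models on the same function.
print(majority_vote(["VULNERABLE", "SAFE", "VULNERABLE"]))
```

The agreement share offers a crude confidence signal. Low agreement could route a function to human review instead of trusting any single model.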

Conclusion

This survey maps the landscape of LLM applications in vulnerability detection and outlines directions for future work. LLMs offer significant advances over traditional methods, but further research is needed to overcome the remaining technical and practical challenges. That work includes refining LLM capabilities, building high-quality datasets, and tackling complex real-world vulnerabilities. The survey thus lays a foundation for the growing field of LLM-based vulnerability detection and offers a roadmap for future innovation.