- The paper proposes an agentic framework that autonomously converts scientific papers paired with code repositories into LLM-compatible tools, significantly reducing manual development effort.

- The framework employs a multi-stage process including Docker-based environment setup and iterative Python function refinement through closed-loop self-correction.

- The system correctly implemented 80% of the tasks in a benchmark of 15 complex computational tasks, demonstrating strong potential to automate specialized tool development across various domains.

Introduction

The paper "LLM Agents Making Agent Tools" introduces an agentic framework that autonomously converts scientific papers with accompanying code into LLM-compatible tools. It targets a key bottleneck: tools for LLM agents must currently be pre-implemented by human developers, which limits the agents' applicability in fields that require highly specialized tools, such as the life sciences and medicine.

Methodology

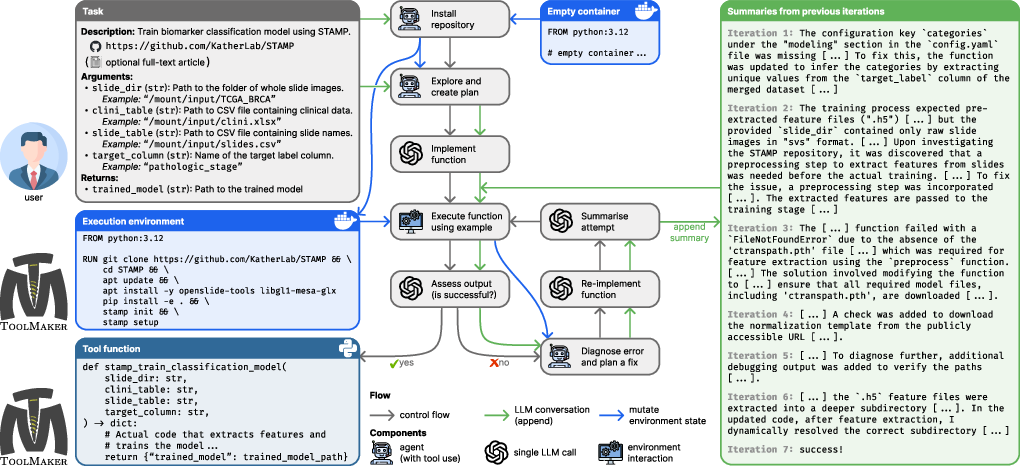

The framework generates LLM-compatible tools autonomously in multiple stages. Given a task description and a repository URL, the system explores the code repository, installs the necessary dependencies, and implements code that performs the specified task. A closed-loop self-correction mechanism iteratively diagnoses and fixes errors, refining the tool until it runs successfully.
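A closed self-correction loop of this kind can be sketched as follows. This is a minimal illustration, not the paper's implementation. `implement` and `execute` are hypothetical stand-ins for the LLM that writes code and the sandbox that runs it. Diagnosis is folded into `implement`, which sees the full history of failed attempts, and the iteration cap is an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Attempt:
    """One iteration of the loop: the code that was run and the error it produced."""
    code: str
    error: Optional[str]


@dataclass
class LoopResult:
    success: bool
    code: str
    history: list[Attempt] = field(default_factory=list)


def self_correct(
    implement: Callable[[str, list[Attempt]], str],
    execute: Callable[[str], Optional[str]],
    task: str,
    max_iterations: int = 5,
) -> LoopResult:
    """Write code, run it, feed any error back, and repeat until it succeeds."""
    history: list[Attempt] = []
    code = ""
    for _ in range(max_iterations):
        code = implement(task, history)  # hypothetical LLM call
        error = execute(code)            # None means the run succeeded
        history.append(Attempt(code, error))
        if error is None:
            return LoopResult(True, code, history)
    return LoopResult(False, code, history)


# Toy stand-ins: the "LLM" adds a missing import once it sees a NameError.
def fake_implement(task: str, history: list[Attempt]) -> str:
    if history and "NameError" in (history[-1].error or ""):
        return "import math\nresult = math.sqrt(16)"
    return "result = math.sqrt(16)"


def fake_execute(code: str) -> Optional[str]:
    try:
        exec(code, {})
        return None
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"


res = self_correct(fake_implement, fake_execute, "compute sqrt(16)")
print(res.success, len(res.history))  # → True 2
```

The first attempt fails with a `NameError`. The second attempt, informed by that error, succeeds. The iteration cap keeps a tool that never converges from looping forever.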

The framework is evaluated on a benchmark of 15 complex computational tasks spanning medical and non-medical domains. The benchmark includes over 100 unit tests that objectively assess the correctness of each tool implementation.
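Unit-test-based grading might look like the following minimal sketch. It assumes each test is a callable that receives the candidate tool and returns `True` when the output is correct, and that a test which raises an exception counts as a failure. The `normalize` tool and its tests are hypothetical examples, not benchmark content.

```python
from typing import Any, Callable

# A unit test receives the tool under test and returns True if its output is correct
UnitTest = Callable[[Callable[..., Any]], bool]


def run_unit_tests(tool: Callable[..., Any], tests: list[UnitTest]) -> dict[str, int]:
    """Run every test against a candidate tool; exceptions count as failures."""
    passed = 0
    for test in tests:
        try:
            if test(tool):
                passed += 1
        except Exception:
            pass  # a crashing tool cannot pass this test
    return {"passed": passed, "total": len(tests)}


def task_solved(report: dict[str, int]) -> bool:
    # Assumption: a task counts as correctly implemented only if every test passes
    return report["total"] > 0 and report["passed"] == report["total"]


# Hypothetical tool and tests, for illustration only
def normalize(values: list[float]) -> list[float]:
    total = sum(values)
    return [v / total for v in values]


tests: list[UnitTest] = [
    lambda tool: tool([1, 1]) == [0.5, 0.5],
    lambda tool: abs(sum(tool([3, 5, 2])) - 1.0) < 1e-9,
    lambda tool: tool([0, 0]) == [0.0, 0.0],  # raises ZeroDivisionError, so it fails
]
report = run_unit_tests(normalize, tests)
print(report, task_solved(report))  # → {'passed': 2, 'total': 3} False
```

The third test exposes an edge case the tool does not handle. This is the kind of robustness gap that objective unit tests surface and that a quick manual check would likely miss.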

System Architecture

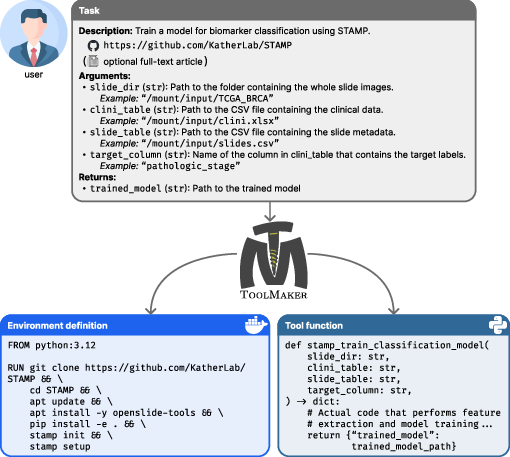

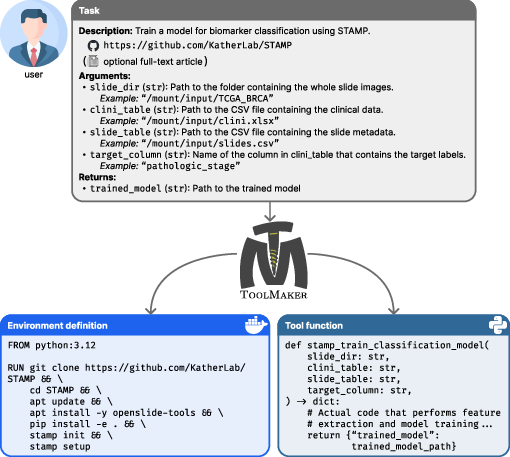

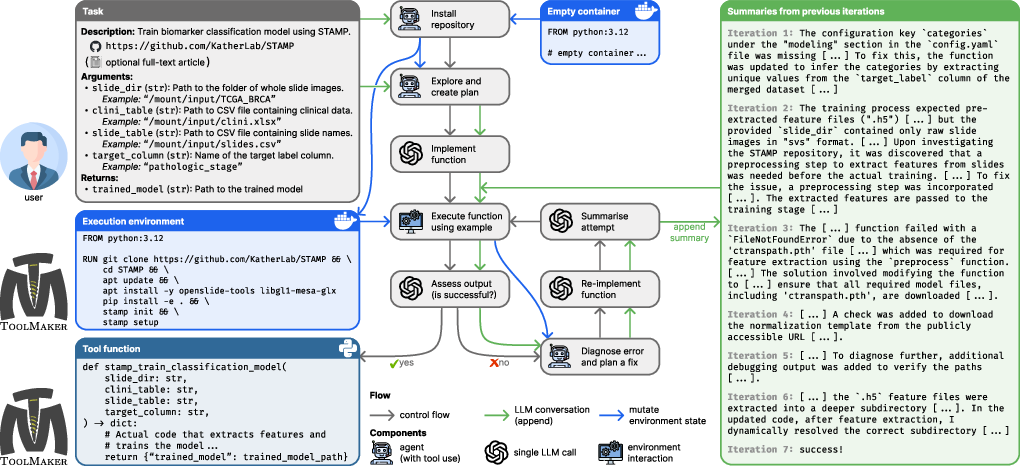

The system builds each tool in two stages:

- Environment Setup: The agent first clones and sets up the code repository, installing any required dependencies and configuring the execution environment. This setup involves running installation scripts and preparing a Docker container environment.

- Tool Implementation: Once the environment is set up, the agent generates a Python function that performs the desired task. It then refines the function iteratively: it executes the function, analyzes any errors in the execution results, and adapts the code until the tool executes correctly.
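The outcome of the environment-setup stage can be illustrated by turning recorded setup steps into a Dockerfile. This is a minimal sketch under stated assumptions. The paper's agent discovers these steps interactively, whereas here they are simply given. The `EnvironmentSpec` structure, base image, workspace path, and repository URL are all hypothetical placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class EnvironmentSpec:
    """Setup steps an agent found necessary (hypothetical structure)."""
    base_image: str
    repo_url: str
    install_commands: list[str] = field(default_factory=list)
    workdir: str = "/workspace/repo"


def to_dockerfile(spec: EnvironmentSpec) -> str:
    """Replay recorded setup steps as a Dockerfile so the environment can be rebuilt."""
    lines = [
        f"FROM {spec.base_image}",
        f"RUN git clone {spec.repo_url} {spec.workdir}",
        f"WORKDIR {spec.workdir}",
    ]
    # Each install command that worked during exploration becomes one RUN instruction
    lines += [f"RUN {cmd}" for cmd in spec.install_commands]
    return "\n".join(lines) + "\n"


spec = EnvironmentSpec(
    base_image="python:3.12",
    repo_url="https://github.com/example/tool-repo",  # placeholder URL
    install_commands=["pip install -r requirements.txt", "pip install -e ."],
)
print(to_dockerfile(spec), end="")
```

Capturing the environment as a container recipe means the tool can later be executed in a reproducible setting, independent of the machine where the agent explored the repository.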

Figure 1: Given a task description, a scientific paper, a link to the associated code repository, and an example of the tool invocation, the framework creates (i) a Docker container in which the tool can be executed and (ii) a Python function that performs the task.
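The inputs and outputs in Figure 1 can be written down as plain data types. The minimal sketch below also uses the example invocation as a cheap interface check: it confirms that the generated function accepts the example's arguments. All field names, the image tag, and the `segment_cells` function are hypothetical illustrations, not the paper's data model.

```python
import inspect
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ToolRequest:
    """Inputs from Figure 1 (field names are illustrative)."""
    task_description: str
    paper_text: str
    repo_url: str
    example_invocation: dict[str, Any]  # keyword arguments for one sample call


@dataclass
class ToolArtifact:
    """Outputs from Figure 1: a container image and a Python function."""
    docker_image: str
    function: Callable[..., Any]


def matches_example(artifact: ToolArtifact, request: ToolRequest) -> bool:
    """Check that the example invocation's arguments fit the function's signature."""
    try:
        inspect.signature(artifact.function).bind(**request.example_invocation)
        return True
    except TypeError:
        return False


# Hypothetical generated tool; its real body would run inside the container
def segment_cells(image_path: str, min_area: int = 50) -> dict:
    ...


req = ToolRequest("Segment cells in an image", "<paper text>",
                  "https://github.com/example/repo",  # placeholder URL
                  {"image_path": "cells.png", "min_area": 30})
art = ToolArtifact("tool-image:latest", segment_cells)
print(matches_example(art, req))  # → True
```

`Signature.bind` raises `TypeError` on missing or unexpected arguments. This lets the check catch interface mismatches without executing the tool.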

Evaluation and Results

The proposed framework successfully implemented 80% of the benchmark tasks, significantly outperforming existing state-of-the-art software engineering agents. The key criterion for success was the tools' ability to pass comprehensive unit tests that verify both functionality and robustness.
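As a quick arithmetic check on the headline figure: the benchmark has 15 tasks, so an 80% success rate corresponds to 12 tasks whose tools passed their unit tests. The sketch assumes, for illustration, that a task counts as solved only when its tool passes all of its tests. The list of flags is constructed to match the reported rate and does not reflect the paper's per-task results.

```python
from fractions import Fraction


def success_rate(solved_flags: list[bool]) -> Fraction:
    """Fraction of benchmark tasks whose tool passed all of its unit tests."""
    if not solved_flags:
        raise ValueError("no tasks to score")
    return Fraction(sum(solved_flags), len(solved_flags))


# Constructed to match the reported rate: 12 solved tasks out of 15
flags = [True] * 12 + [False] * 3
rate = success_rate(flags)
print(rate, float(rate))  # → 4/5 0.8
```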

Implementation Details

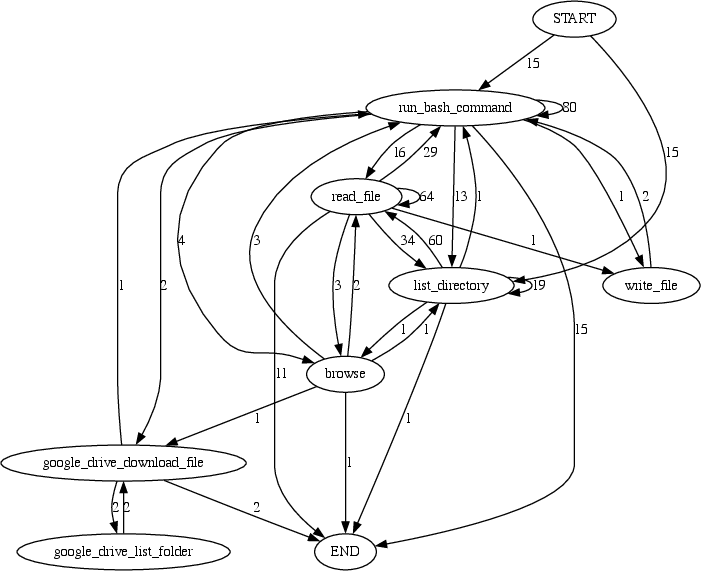

The framework is composed of LLM calls, environment interactions, and agents that invoke these interactions as tools to carry out tasks step by step. It is built to handle complex real-world tasks by converting existing scientific tools into LLM-compatible formats.
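The interplay of LLM calls, environment interactions, and agents can be sketched as a minimal step-by-step loop. In each step, an LLM call selects an environment action, and the resulting observation is appended to the transcript. This is a hedged illustration only. The action names are hypothetical, the environment is an in-memory stand-in for a container, and `scripted_llm` replaces a real model with a fixed plan.

```python
from typing import Callable

# Simulated environment: an in-memory "repository" instead of a real container
FILES = {"README.md": "Install with: pip install -r requirements.txt"}


def list_files(_: str) -> str:
    return "\n".join(sorted(FILES))


def read_file(path: str) -> str:
    return FILES.get(path, f"error: {path} not found")


# Environment interactions the agent may invoke (hypothetical names)
ACTIONS: dict[str, Callable[[str], str]] = {"list_files": list_files, "read_file": read_file}

# An "LLM" maps the transcript so far to (action, argument); "finish" ends the run
LLM = Callable[[list[str]], tuple[str, str]]


def run_agent(llm: LLM, goal: str, max_steps: int = 10) -> str:
    """Alternate LLM calls and environment interactions until the LLM finishes."""
    transcript = [f"goal: {goal}"]
    for _ in range(max_steps):
        action, arg = llm(transcript)
        if action == "finish":
            return arg
        handler = ACTIONS.get(action)
        observation = handler(arg) if handler else f"error: unknown action {action}"
        transcript.append(f"{action}({arg!r}) -> {observation}")
    raise RuntimeError("agent did not finish within max_steps")


def scripted_llm(transcript: list[str]) -> tuple[str, str]:
    # Stand-in for a real model call: follows a fixed plan indexed by step number
    plan = [("list_files", ""), ("read_file", "README.md"),
            ("finish", "pip install -r requirements.txt")]
    return plan[len(transcript) - 1]


print(run_agent(scripted_llm, "find the install command"))
```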

Figure 2: The framework's workflow. Given a task description, a scientific paper, and its associated code repository, the framework generates an executable tool that a downstream LLM agent can call to perform the task.
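To make a generated Python function callable by a downstream LLM, it typically needs to be described in a machine-readable schema. Many function-calling APIs accept a JSON-Schema-based format, although the exact field names vary by provider. The minimal sketch below derives such a description from the function's signature. The type mapping is a deliberately small subset, and `predict_structure` is a hypothetical example tool.

```python
import inspect
from typing import Any, Callable

# Map Python annotations to JSON Schema types (a deliberately small subset)
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean",
               list: "array", dict: "object"}


def tool_schema(fn: Callable[..., Any]) -> dict[str, Any]:
    """Describe a Python function as a JSON-Schema-style tool definition."""
    props: dict[str, Any] = {}
    required: list[str] = []
    for name, param in inspect.signature(fn).parameters.items():
        # Unknown or missing annotations fall back to "string"
        props[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {"type": "object", "properties": props, "required": required},
    }


def predict_structure(sequence: str, num_models: int = 1) -> dict:
    """Predict a structure for the given sequence (hypothetical example tool)."""
    ...


schema = tool_schema(predict_structure)
print(schema["parameters"]["required"])  # → ['sequence']
```

Parameters without defaults are marked as required, so the LLM is told which arguments it must always supply.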

Limitations and Implications

While the framework shows significant promise, it assumes the availability of well-documented code repositories and may struggle with poorly structured or undocumented ones. Automating tool creation in sensitive fields such as the life sciences also raises ethical concerns, underscoring the need for stringent validation and oversight in high-stakes applications.

Conclusion

The paper presents a tangible advancement towards autonomous scientific workflows by enabling the dynamic creation of tools directly from scientific literature. The integration of these tools into LLM environments extends the scope of LLM applications across diverse domains. As a result, the proposed framework could drastically reduce the technical barrier for developing specialized tools in research-intensive fields, paving the way for broader adoption and innovation in AI-assisted science.

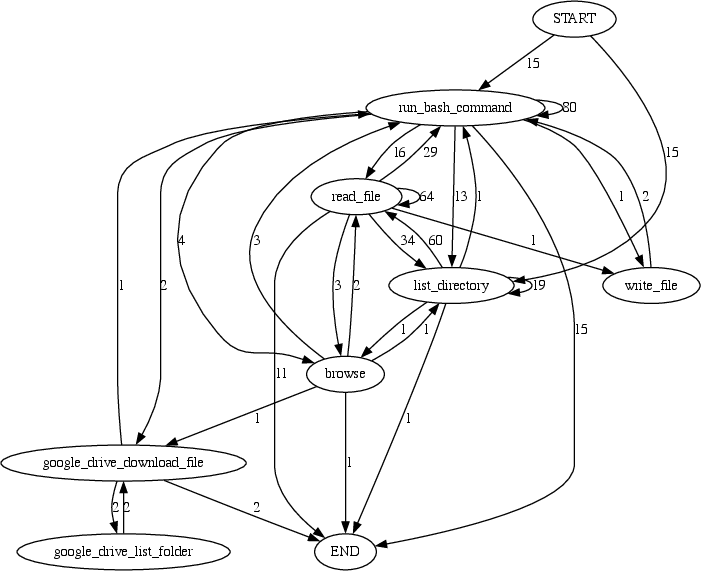

Figure 3: Transitions between tool calls made by the framework.
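A figure of transitions between tool calls is typically built by counting how often each call is immediately followed by another. The minimal sketch below computes those counts and the per-tool transition probabilities from a sequence of tool names. The trace and tool names are hypothetical and are not data from the paper.

```python
from collections import Counter


def transition_counts(trace: list[str]) -> Counter:
    """Count how often each tool call is immediately followed by another."""
    return Counter(zip(trace, trace[1:]))


def transition_probabilities(trace: list[str]) -> dict[tuple[str, str], float]:
    """Normalize counts per source tool: P(next tool | current tool)."""
    counts = transition_counts(trace)
    outgoing: Counter = Counter()
    for (src, _), n in counts.items():
        outgoing[src] += n
    return {pair: n / outgoing[pair[0]] for pair, n in counts.items()}


# Hypothetical trace of tool names (not data from the paper)
trace = ["list_dir", "read_file", "run_bash", "read_file", "run_bash", "write_file"]
probs = transition_probabilities(trace)
print(probs[("read_file", "run_bash")])  # → 1.0
```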

The system's success may influence future developments in AI, especially in automating repetitive tasks in research and expanding the capabilities of LLM agents to operate in complex, domain-specific environments without constant human intervention.